►

From YouTube: Antrea Community Meeting 04/10/2023

Description

Antrea Community Meeting, April 10th 2023

A

All

right,

good

morning,

good

afternoon,

good

day,

good

evening,

thanks

for

joining

the

resistance

of

the

Andrea

community

meeting

today

is

April

11th

and

fortunately

we

have

a

a

fairly

packed

agenda.

So

we

will

start

with

a

discussion

of

CI

improvements

led

by

shuyang

and

in

the

second

part

of

the

meeting,

is

that

we

will

review

some

entry

apis,

which

are

still

in

Alpha

status,

to

figure

out

which

ones

can

be

promoted

to

Beta

or

even

be

promoted

to

GA.

So

this

is

the

agenda

for

today.

A

C

B

B

Hello,

everyone,

I'm

shuyan

and

today

I

will

introduce

some

new

features

and

enhancements

for

the

entry

Jenkins

pipeline

for

the

agenda.

First

I

will

introduce

some

new

CF

features

and

the

related

trigger

phrases

that

developers

can

use

in

their

PR

and

also

I

will

talk

about

the

recent

enhancements

that

improves

the

stability

of

the

CFA

plan.

And

finally,

we

will

talk

about

some

future

improvements

like

the

new

features

or

enhancements.

We

want

to

bring

in

next

release

or

in

a

long

term.

B

So,

firstly,

I

want

to

introduce

some

new

features

for

the

Jenkins,

Pipeline

and

I

will

talk

about

new

features

for

Windows

test

pad

first

and

then

Andrew

will

introduce

the

implementation

of

the

canvas

AI

Support.

Also.

We

have

supported

Adventure

CI

since

entry

1.11,

which

is

also

a

new

feature

for

the

Jenkins

pipeline

and

okay,

let's

start

from

the

new

features

in

Windows

AI.

So

we

know

the

windows

has

a

very

different

test

bed

compared

to

Linux,

node

and

also

building

entry

windows.

B

Image

takes

much

more

time

than

building

Linux

image,

so

we

want

to

optimize

it

to

accelerate

the

whole

process,

not

only

for

can

pipeline,

but

also

for

other

windows

developers

who

want

to

quickly

build

a

Windows

image

for

verification

or

development.

So

that's

our

motivation

and

one

of

the

optimization

is

to

enable

building

the

windows

space

image

to

accelerate

building

agent

image

because

after

investigation

we

found

actually

we

we

waste

a

lot

of

time

on

downloading,

duplicates

libraries

and

fails

to

create

the

base

environments.

B

So,

just

like

what

we

did

in

Linux,

we

started

to

support

building

windows,

space

image

after

entry

1.8

and

on

our

our

testbed

building

latest

entry

windows.

Image

can

cost

more

than

six

six

minutes

with

without

space

image,

but

building

windows.

Image

with

basic

image

supports

will

only

takes

about

two

minutes,

so

this

new

features

can

reduce

image,

building

Time

by

more

than

60

percent,

and

another

good

thing

is

we

we

we

have

updated

the

make

fails.

So

the

developer

don't

need

to

change

their

building

comments.

B

As

we

know,

after

kubernetes

1.24,

the

docker

became

a

deprecated

feature,

so

we

need

a

container

ID

support

to

verify,

and

trade

agent

supports

the

most

different

points

for

the

container

D

test

pad

is

that

it

has

one

more

image

node

for

image

building,

because

we

don't

want

to

install

and

manage

both

continuity

and

Docker

on

the

same

Windows

host

and

it

could

bring

a

potential

conflicts

during

tests.

So

we

only

run

continuity

on

Windows

worker

nodes

and

only

run

Docker

for

building

image

on

another

windows.

B

Image

node,

so

other

workflows

for

continuity

cell

pipeline

is

as

same

as

the

docker

testbed,

and

you

can

see

as

the

lower

left.

We

have

three

new

trigger

phrases

for

e2e

conference

and

the

network

policy

on

Windows

testbed.

Now,

after

entry,

1.10

developers

can

trigger

Windows

continuity

tests

by

these

three

trigger

comments,

and

another

thing

I

want

to

highlight

is

that

the

test

Windows

all

commands

will

trigger

all

windows,

sell

drops,

including

Docker

and

continuity

test

drops

after

entry

1.10.

D

D

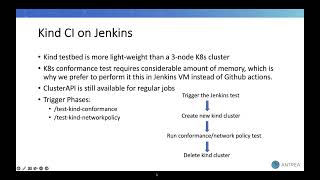

So

the

kind

is

one

of

them,

as

we

know

that,

like

a

kind

or

community

in

Docker

is

a

suit

of

a

tooling

for

the

local

cabinet

cluster,

where

each

spot

is

a

Docker

container

and

kind

targets,

local

cluster,

for

the

testing

purpose,

so

we

have

added

a

set

of

kind

tested

as

a

Jenkins

node

and

a

kind

tested

is

a

more

lightweight

than

the

three

kubernet

cluster

and

the

related

to

the

conformance

test.

Here

we

are

running

kind

test

in

Jenkins

VM,

instead

of

GitHub

actions.

Why?

D

Because

a

conformance

tests

requires

some

considerable

amount

of

memory,

that's

why

we

prefer

a

jks

VM

instead

of

GitHub

actions,

so

the

next

spot

related

to

the

cluster

API.

Actually,

the

cluster

API

is

a

framework

to

create

kubernetes

cluster,

with

a

different

cloud

provider

such

as

AWS,

vsphere

Azure

and

for

the

our

vsphere

environment,

the

regular

jobs

like

e2e,

conformance

and

network

policy.

D

These

are

using

this

framework

to

create

runtime

VMS

for

the

new

humanit

cluster,

so,

like

all

the

kind

tests

can

save

lots

of

resources

for

us

and

it

simulates

multiple

nodes

on

one

real

host,

but

we

can

say

that

that

like

cluster

created

by

cabbies

still

closer

to

the

real

scenario,

and

we

will

keep

using

it

for,

for

the

other,

our

regular

Jenkins

job

and

for

the

kind.

Currently

we

have

two

trigger

faces,

one

for

the

conformance

test

and

other

for

the

network

policy.

D

It

is

so

when

we

trigger

these

faces

on

GitHub

PR,

then

it

will

start

running

the

test

in

Jenkins,

so

we

can

see

in

the

workflow

first,

you

will

trigger

the

Jenkins

test.

Then

it

will

create

a

new

kind

cluster

and

then

it

will

start

running

conformance

or

network

policy

test

and

after

finishing

the

test,

it

will

delete

the

created

cluster.

D

But

suppose

you

have

triggered

the

face

and

then

it

will

create

the

and

then

it

will

create

the

new

current

cluster

and

it

will

start

to

the

test

and

during

that

time,

if

you

abort

the

process,

then

that

case

current

cluster

will

not

be

deleted

and

it

becomes

garbage

cluster.

So

we

need

to

handle

this

aborted

case

also

and

for

handling.

This

I

have

created

a

PR,

so

in

that

PR

I

have

added

one

35

minute

timeout

for

the

test.

So

after

merging

that

PR,

what

will

be

the

updated

flow

like?

D

If

you

trigger

the

face,

then

it

will

start

creating

a

new

kind

cluster

and

just

before

running

the

actual

test,

it

will

run

the

cleanup

function

and

there

we

will

check.

If

there

is

any

cluster

present

from

from

more

than

135

minute,

then

we

need

to

delete

that

particular

cluster

and

after

finishing

the

cleanup

function,

it

will

start

to

the

actual

test

on

new

kind

cluster.

D

Then

after

finishing

the

test,

it

will

delete

the

cluster.

So

these

all

about

the

workflow

and

one

more

thing

like

currently,

we

have

added

six

kind

tested

with

one

slot

for

handling

the

parallel

lens,

but

in

future

we

will.

We

will

handle

that

in

a

single

test

set.

We

will

create

multiple

cluster

in

the

single

test,

where

we

will

run

the

multiple

test

in

the

single

test

field

and

that

work

is

in

progress.

So

that's

all

from

my

side

now

showing

over

to

you.

B

Thanks

Andrew

and

the

next

new

feature

is

about

the

Rancher

said

pipeline,

but

we

won't

cover

more

details

in

today's

spread

presentation,

because

pocket

had

introduced

the

its

implementation

in

last

month's

company

meeting.

I

just

want

to

reaffirm.

If

you

want

to

test

the

entry

as

a

cni

plugin

on

render

testpad,

it

can

be

supported

after

entry

1.11.

B

Previously

we

used

the

public

service

to

create

server

channel

for

GitHub

requests,

but

recently

the

public

service

faced

a

series

of

service

abusing

issue

and

their

maintainer

have

to

shut

down

the

service

for

more

than

two

weeks

and

so

I

think

for

entry

set

pipeline.

We

can't

just

rely

on

the

public

service,

so

we

we

need

another

backup

service

for

the

Smee.

B

So

we

also

have

this

opportunity

to

improve

the

stability,

because

we

can

redeploy

the

SS

SME

server

and

the

clients

based

on

the

latest

environment

and

the

and

now

we

we

have

enabled

private

SME

service

for

entry

CI

since

last

month,

and

also

we

have

a

good

news

this

week,

the

public

SME

service

also

back

to

normal.

So

in

the

future

we

are

able

to

migrate

SME

between

public

and

the

previous

service

to

improve

the

stability

and

the

robustness

for

our

private

drinking

stuff

decline.

B

And

another

enhancement

is

that

we

support

multiple

Jenkins

description

Fields.

Previously

we

only

create

the

public

Jenkins

job

yamo

for

users

who

who

want

to

build

their

own

Jenkins

test

bat,

but

for

other

test

bats.

We

didn't

support

their

description

Fields,

but

now

we

created

another

lab

Jenkins

yaml

field

for

the

private

Jenkins

job,

so

developers

can

can

learn

how

these

the

test

drops

wrong

and

in

the

future.

We

also

hope

we

can

involve

more

developers

in

the

pipeline

contribution

and

next

I

will

introduce

some

future

improvements.

B

I

have

said

we,

we

are

using

vmc

for

the

public

drinking

experts.

Currently

we

are

working

on

migrated

to

the

AWS

and

in

the

future

we

will

have

more

their

jobs

running

with

can

or

cpv

support,

as

Andrew

said

for

the

kind

of

cancer

test

and

the

judging

is

working

on

support.

Multi-Clusters

CI

in

Kent

and

Andrew

will

continue

working

on

migrates,

our

fpv6

and

other

more

Jenkins

jobs

to

Kent

and,

moreover,

our

Windows,

their

jobs,

still

takes

more

time

than

the

Linux

drops.

B

B

Currently,

we

we

keep

both

Docker

and

continuity

test

bed

for

Windows,

but

in

the

future,

maybe

we

will

duplicate

the

docker

test

pad

because

we

see

the

kubernetes

communities

they

they

depreciate,

the

docker

after

1.24.

So

as

I

think

yes

in

the

future,

we

will

only

use

the

containerdy

test

bed,

but

currently

I

think

we

we

still

support

both

of

them.

B

A

Yeah

sounds

good

all

right,

so

that

was

my

only

curiosity.

It

seems

that

we

don't

have

any

other

question

on

the

CI,

so

perhaps

we

can

move

to

the

next

topic

and

which

is

a

part

of

this

guy.

Thank

you

thank

you,

which

is

about

discussing

the

apis

that

we

can

or

want

to

promote.

So

I,

don't

know.

What's

going

to

lead

this

conversation

antonan

Chan,

with

going

to

do

that.

F

C

C

F

Yeah

this

this

is

a

issue

about

the

preparation

we

we

might

need

to

do

for

Angel,

2.0

and

just

a

summarize

something

we

have

discussed

before

in

other

channels

and

I

have

added

something

I

have

investigated

for

for

this

release

and

yeah

it's

made

about

the

API

migration

and

the

API

removal,

and

also

there

are

some

items.

There

are

some

tasks

to

promote

some

official

case

that

are

not

some.

Some

of

them

are

not

API

based,

but

just

a

code

based.

F

We

have

we

added

some

configurations

in

one

new

answer:

one

and

Angela

one

two:

is

it

zero

to

one

daughter

11,

but,

and

we

we

remove,

we

duplicated

some

configurations,

but

never

rarely

remove

them

from

the

configuration

file.

So

I

think

it's

also

a

good

opportunity

to

remove

the

duplicated

configurations

in

the

new

major

release.

E

Yeah

sure

so,

I

think

what

what

we've

noticed

is

that

one

notice

we

knew

that,

but

most

of

the

entry

apis

or

still

in

a

V1

Alpha

stage

for

some

of

them.

We've

introduced

new

versions,

for

example

in

the

for

the

network

policy.

Things

like

cluster

groups,

where

we

may

have

had

like

more

than

one

version,

but

most

of

them

are

in

Alpha,

One

or

Alpha

2

stage,

and

we

think

that

Andrea

is

a

pretty

mature

project.

E

That's

that's

about

it.

I

know

that

Chan

had

some

comments

about

potential

improvements

to

some

API

because,

as

we

change

the

version

of

those

apis,

this

is

kind

of

like

a

good

opportunity

to

make

modifications

to

the

API

and

API

types

that

may

break

backwards.

Compatibility.

Of

course.

That

means

that

for

a

while,

we

need

to

be

able

to

support

both

versions

of

the

apis

in

accordance

to

or

upgrade

policies.

But

this

is

a

good

opportunity

to

do

some

cleanups

to

some

of

the

apis

trace

flow.

E

For

example,

I

know

that

over

the

last

couple

of

years,

we've

like

taken

note

of

some

possible

improvements

that

China's

detailed

here

and

if

there

is

an

API

that

you've

been

working

working

on

and

you

kind

of

like

have

something

on

the

back

burner

that

you

think

should

be

improved.

I

think

now

is

the

right

time

to

speak

up,

because

obviously

upgrading

API

is

changing

the

version,

that's

a

bit

painful,

and

so

we

don't

want

to

be

doing

this

all

the

time,

it's

painful

for

us

and

painful

for

users.

F

If

we

are

confident

in

enough

I

remember

changing

said

he

may

be

not

very

confident

of

about

graduating

to

way

one

directly

yeah,

so

I

I

really

I

left

this

as

an

open

question

about

this

API

and

for

the

other

one

Android

agent

team

funded

controller

info

I

found.

That

is,

since

this

is

the

first

crd

we

introduced

everything

I

think

we

might.

We

might

made

some

mistakes

when

adding

this

crd,

because

in

the

schema

is

unstructed-

and

it's

still

in

that

case

here,

no

so

I

think

we

shouldn't.

F

Maybe

this

is

not

really

related

to

graduating

to

National

release,

but

we

should

do

it

immediately

if

it

was

a

mistake,

because

typically,

any

kind

of

data

can

be

stored

in

the

CR

yeah

and

except

for

that,

I.

Don't

have

other

concerns

I

on

this

on

the

new

version

of

financial

reasoning

for

under

control

info,

because

they

are

basically

for

real

purpose.

F

Only

and

I

don't

see

a

big

risk

to

how

we

want

version

for

that

and

for

other

apis

I

think

we

discussed

in

other

channels

and

personally

I'm

fine

with

the

The

Proposal

Anthony

had

I'm

going

to

show

you

for

others.

I

will

comments

on

this

part

and

besides

that,

I

I

also

added

two

other

apis

for

or

two

other

group

of

apis

for

consideration.

The

first

one

is

the

multicast

loop.

F

F

My

concern

was

currently

we

don't

persistent,

persist,

the

stats

in

any

storage

and

and

every

time

the

controller

restarts

the

stats.

The

the

the

start

of

an

airport

says

will

be

reset

and

we

are

starting

from

zero,

so

I

until

we

had,

we

introduced

some

processing,

storage

or

use

crd

to

persist.

This

data

I

personally

I'm

I'm

not

confident

to

graduating

it

to

next

stage.

So

any

comments

on

this

proposal,

or

or

you

want

to

add

a

new

API

you

are

familiar

with-

is

welcome.

A

G

F

I

I

think

he

is

not

not

necessary

to

make

all

promotion

in

two

build

a

turtle.

Yeah

I

think

he's

trying

to

do

it

before

that

release.

I

I,

I

I

thought

we

just

want

to

make

sure

that

we

have

we

we

how

reasonable

beta

and

the

ga

features

in

the

next

major

release,

but

the

process

could

be

could

be

in

this.

It's

there

in

this

in

one

dot

well

in

in

one

in

the

major

release

of

1.0

yeah.

E

F

F

But

even

we

updated

the

the

crd

configuration

to

see

that

the

store

watch,

the

storage

washing

should

should

now

be.

We

want.

The

existing

data

doesn't

doesn't

automatically

update

to

the

new

version

and

they

will

be

kept

as

they

are

until

there

is

a

chance

to

rewrite

the

the

object

to

each

City.

So

there

could

be

two

cases

actually

three

cases.

F

F

The

first

is

the

API.

We

are

block

the

the

CRT

update.

Api

will

block

the

request,

because

the

status

stored

watching

field

will

have

two

versions

until

user.

Remove

the

old

one

manually-

and

this

is

by

Community

design

and

another

problem

is

even

use-

is

able

to

do

this

themselves.

The

as

it

comes

around

as

yeah

it's

at

least

one

still

object

is

stored

in

etcd.

Even

the

update

request

is

not

the

CRT

update.

Request

is

not

blocked

and

is

applied

successfully.

F

The

CR

that

API

will

no

longer

be

available

because

the

API

server

will

not

be

able

to

convert

the

stored

object

to

the

right

version,

because

the

missing

version

in

crd

schema

and

the

API

will

stop

work

will

stop

working

so

to

make

sure

that

user

could

continue.

Using

that

API

in

the

future,

there

are

two

preconditions

and

the

first

one

is

the

O

of

the

stored

objects

need

to

be

stored,

updated

to

a

new

version

and

the

status

field.

F

Stores

version

should

be

updated

to

contain

to

not

contain

the

the

remote

version

so

that

the

update

of

crd

could

success.

So

kubernetes

could

documented

two

ways

to

do

that.

The

first

one.

The

first

option

is

to

use

a

storage

washing

migrator

I

tried

this

report

I'm,

not

sure

whether

it's

mutual

enough,

but

at

least

it's

not

very

friendly

to

end

the

user,

because

you

have

to.

F

And

finally,

you

need

to

apply

the

Manifest

and

make

sure

the

image

is

available

in

your

cluster

and

then

wait

the

the

job

of

finishes

so

I

I

think

it

is

not

worry

to

end

users

yeah,

especially

who

is

not

familiar

with

this

stuff

and

after

that,

you'd

have

to

remove

the

old

version

from

the

sales

status

first

manually.

The

second

option

is,

it

sounds

simpler,

but

it's

a

pure

manual

operations,

because

the

the

key

or

for

upgrade

the

existing

objects

is

to

make

sure

that

every

object

is

updated

once

so.

F

Remove

the

field

in

in

stated

field

the

the

overwatching

in

the

student

failed,

and

if

you

remember

how

we

dealt

with

the

API,

Group

or

migration,

we

introduced

a

mirroring

controller

and

it

belongs.

It

is

a

long

running

controller

in

Android

controller

and

will

be

responsible

for

mirroring

the

objects

users

stored

in

Old

API

Loop

to

new

group.

But

I

think

that

scenario

is

much

more

complex

than

the

current

one,

because

in

that

one

they

are

not

same.

F

They

are

not

same

API,

so

so

we

will

have

where

we,

we

are

actually

copying

the

data

and

converting

them

and

we

we

are

actually

daring.

We

are

actually

we

were

actually

dealing

with

two

objects

because

there's

all

the

same

object

exists

in

uses

the

two

groups

at

the

same

time,

but

currently

our

our

purpose

is

only

making

sure

that

every

object

is

updated

once

with

exactly

same

content.

F

Is

that

with

it

has

no

impact

on

the

existing

lung

longing

process

like

Android

controller,

if

we

add

a

new

controller

to

to

do

this,

API

migration-

and

there

are

some

changes

needed

to.

There-

are

some

side

effects.

First,

we

need

to

Grant

unnecessary

and

permissions,

because

under

controller

now

will

need

to

create

or

update

all

study

resources

which

we

don't

have

to

before,

and

the

second

is

that

it

will

how

to

cash

or

objects,

even

it.

F

F

It

could

also

mean

that

is

the

start

of

the

support

of

new

versions.

I

think

different

projects

have

different

qualities.

I

saw

some

projects

like

it's

still

when

they

introduce

the

one

1.0,

they

just

add

new.

They

just

copied

their

old

apis

and

create

new

apis,

and

they

later

they

have

to

I'm,

not

sure

how

many

minorities

later

we

just

remove

the

support

of

the

other

versions.

F

But

in

our

case

I

think,

since

we

are

preparing

the

the

map,

the

new

major

release,

we

could

consider

that

the

do

some

preparation.

For

example.

If

we

are

we,

we

know

what

we

want

to

graduate

in

the

next

major

release.

We

could

add

the

new

versions

earlier

before

that,

for

example,

to

at

least

two

minor

release

earlier

than

that

in

the

next

minorities

of.

F

So

that

users

get

enough

time

window

to

migrate

to

new

versions,

and

then

we

could

just

remove

the

duplicated

versions

in

the

next

major

release

and

as

the

material

major

release

normally

also

means

some

non-compatible

changes.

So

it's

reasonable

to

remove

some

duplicated

API

API

versions,

but

we

we

are

not

ready

now

backwards

compatible

because

which

will

also

provide

some

tools

or

we

do

it

automatically

to

have

user

grids

for

upgrade

to

new

major

release.

F

And

if

we

follow

the

Easter

way

or

we

could,

we

could

just

add

new

versions

in

1.0

to

which

means

that

this

is

the

start

of

support

of

new

versions.

And

then

we

support

the

two

versions

for

some

minorities

and

eventually

remove

the

duplicate.

The

apis,

for

example,

2.2,

but

regardless

of

which

way

we

choose

I,

think

we

need

to

consider

how

to

do

the

clean

up

earlier

and

whether

it

is

it

is

necessary

to

introduce

to

us

or

control

us

to

have

user

grid

for

upgrade.

F

Basically,

I

summarize

that

to

three

options:

the

first

one

is:

we

just

leave

the

upload

to

users

that

under

guide

them

to

follow

kubernetes

options.

Pattern

I

feel

this

is

not

right.

Randomly

and

I.

Think

underneath

some

projects

tries

to

avoid

that,

for

example,

the

a

certain

manager

project

they

had

a

tour

they

introduced.

The

tour

like

I

will

described

in

the

third

option,

and

the

second

choice

is

that

we,

like

a

mirroring

controller,

we

add

a

new

controller

to

help

user

migrate,

the

stored

object,

but

how

yeah

the

this?

F

F

A

No,

no,

not

much

for

me.

I

just

want

to

say

that

the

third

option,

the

one

that's

apparently

seems

they

are

preferred

for

the

users

that

are

leveraging

the

entry

operator.

I,

think

that

we

can

also

use

the

the

operator

to

automate

this

execution

of

of

resource

upgrades.

There

will

be

also

consistent

to

the

third

option

we

can.

Probably

just

you

know

the

operator

calling

the

Sim

logic

implemented

by

the

CLI.

F

C

A

F

F

A

A

All

right,

so

we

are

pretty

much

at

time

for

today's

meeting,

but

if

there

is

any

other

topic

that

you

would

like

to

discuss

any

other

thing

that

you'd

like

to

mention

please

go

ahead.

We

have

a

one

minute

for

open

discussion.

Of

course,

if

needed,

we

can

stretch

the

meeting

for

a

few

few

more

minutes.