►

From YouTube: Antrea Community Meeting 06/20/2023

Description

Antrea Community Meeting, June 20th 2023

A

Perfect

good

morning,

good

afternoon,

good

evening,

thanks

for

joining

this

instance

of

the

entria

community

meeting

today,

it's

a

Wednesday,

the

21st

of

June

and

or

if

you

live

in

Europe

or

Asia,

it's

already

summer

for

you

for

folks

in

United

States,

you

have

to

wait

a

few

more

hours

for

your

summer

anyway,

jokes

aside,

for

today,

we

have

a

conversation

with

Chan

on

enabling

for

the

entria

controller.

This

will

be

he

with

the

active

standby

with

multiple

controller

instances.

That

is

the

only

topic

that

we

have

for

today,

so

Chan.

B

This

also

includes

that

even

a

nail

policy

has

been

applied

to

a

port,

but

a

New

Ports

has

been

created

during

the

controls.

Downtime

then

the

if

the

Newports

should

be

the

phone

or

two

of

the

mail

policy,

and

in

this

Imports

we

are

not

receive

that

update

and

I

think

the

first

two

issues

may

be

serious,

because

the

pause

will

not

be

secured

and

is

egress

traffic.

B

We

are

not

Behavior

like

user

is

expect

and

I

do

some

tests

and

investigate

investigated

why

it

took

so

long

to

create

a

new

replay

card

in

this

situation

by

the

way

in

other

situations

like

if

you,

if

the

country

control

Port

crash

or

you

delete

the

the

the

Pod,

it

is

very

fast

to

create

a

new

replica.

It's

just

this

case.

Kubernetes

doesn't

handle

it

very

very

well,

and

the

time

spent

on

the

floor

is

first.

He

when

a

node

becomes

unregible.

B

B

So

this

we

don't

have

control

on

this

configuration

a

way

how

to

support

that

the

center,

the

the

most

common

configuration

that

we,

so

we

should

assume

in

most

clusters

when

a

note

becomes

unregible

and-

and

it

will

take

40

seconds

before

the

node

is

marked

as

non-ready

and

has

this

tent

and

a

half

after

the

tent

is

added

to

the

node.

It

takes

another

five

minutes

to

effect

pause

running

on

this

note.

This

is

determined

by

another

parameter.

It

is

the

default

unreachable

contribution

seconds.

B

By

default,

we

don't

have

any

Toleration.

We

don't

have

added

any

noise

cute

Toleration

to

pause,

including

Android

controller

port.

However,

kubernetes

has

a

plugin

which

is

enabled

by

default

since

a

long

time

ago.

It

will

add

no

execute

Toleration

to

every

port

that

doesn't

have

such

Toleration

already,

and

the

default

storage

in

seconds

is

5

minutes.

So

after

the

Pod

is

marked

as

not

ready

and

has

the

tent,

it

takes

another

five

minutes

to

delete

the

port

and

only.

A

B

B

So

that's

why,

in

this

situation

it

takes

5

minutes

40

seconds

and

more

to

follow,

and-

and

this

is

a

when

I

observed

in

when

I

did

some

tests

in

kind,

but

it's

different

in

some

managed

kubernetes

cluster,

for

example

in

eks,

because

when

a

node

becomes

not

ready,

I

I

remember

I

saw

that

the

node

will

be

deleted

soon

by

I,

asking

it

by

the

uks

by

some

service

in

uks.

So

it

will

also

check

up

all

the

deletions.

B

So

in

the

failure

it's

faster

than

what

I

observed

observed

in

kind

cluster

and

in

this

situation

for

in

eks,

it's

typically

typically

takes

about

one

minute,

one

minute

to

failover.

I

guess

the

first

40

seconds

is

also

spent

on

detecting

this

node

as

not

ready.

Then

there

may

be

about

20

seconds

for

eks

service

to

delete

the

not

ready

node.

B

So

after

the

investigation,

I

I

found

that

the

file

mean

is

delayed

because

of

this

annotation

Toleration

added

by

kubernetes

by

default,

and

because

it

only

has

this

Toleration

when

there

is

no

such

tourism

on

this

port,

we

could

proactively

add

this

Toleration,

but

set

the

tent

to

zero

seconds

the

thorough

Toleration

seconds

so

that

once

a

node

is

marked

as

a

noise

skewed,

the

pot

and

the

controller

Port

will

be

deleted

immediately

and

a

new

replica

will

be

created.

So

with

this

change

we

could

keep.

B

B

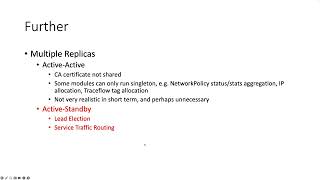

So

I

am

wondering

if

we

could

provide

them

higher

availability

while

running

multiple

replay

cards.

There

are

two

zero.

There

are

two

options:

one

is

active

active.

This

is

how

Cuba

API

server

Highway

B

mode

runs,

but

this

is

not

very

relative,

realistic

in

Azure

controller,

because

some

factors,

the

first,

is

that

we

need

to

publish

the

certificate

which

is

generated

by

the

controller

itself

in

most

cases.

B

This

is

because,

like

a

cube

controller

manager

and

the

cube

schedule,

we

have

some

modules

that

can

only

run

synchron

without

a

big

change,

for

example,

the

nail

policy

status

and

the

stats

aggregation.

We

need

a

single

place

to

aggregate

this

data,

so

it's

not

well

feasible

to

make

this

future

working

active,

active

mode,

and

there

are

some

other

functions

like

the

external

IP

poor

and

ip4

IP

allocation.

B

It

is

very

complicated

to

achieve

this

when

multiple

instance

could

do

the

same

thing

and

also

including

the

traffic

flow

tackle

location

and

the

set

really

is

that

today

we

didn't

see

a

any

problem

except

the

high

availability

by

learning

the

learning

a

single

replay

card.

It

is

the

the

performance

of

the

single

instance

is

good

enough

to

support

a

large

scale

cluster

according

to

the

test,

so,

except

for

the

I.

Think,

a

single

active

instance

is

Enough

from

our

architecture

perspective.

B

So

in

that

choice

we

how

it's

a

longing,

multiple

replicas

as

negative

standby

mode,

just

like

a

cube

controller

manager

and

the

cube

scheduler,

and

then

there

there

are

two

problems

we

need

to

solve.

The

first

one

is

the

hallway

to

leader

election.

The

second

is

how

we

loot

the

service

traffic

to

the

active

instance.

C

B

I

will

talk

about

the

Solutions

in

this

page

for

leader,

leader

election,

like

a

controller

manager

and

Q

scheduler.

We

could

leverage

the

kubernetes

mechanism,

and

that

is

at

least

API,

which

is

designed

to

for

to

to

be

leveraged

by

this

library

to

to

support

a

leader

election,

and

it

is

very

easy

to

integrate

this

collaborate

way

to

have

a

little

election

in

anterior

controller

and

the

parameters

can

be

tuned

so

that

the

failure

over

can

be

can

happen

in

15

seconds

or

less

by

default

recommended

parameters.

B

B

B

D

B

When

we

run

Cube

CTL

law

out

status,

this

deployment

will

in

the

command

will

never

finish

because

it

will

always

appear

as

the

progressing,

but

the

biggest

problem

is

that

it

relies

on

ready

condition

of

port

if

a

port

crashes

or

it

is

killed.

The

the

red

already

condition

will

be

changed

to

not

ready

immediately,

but

it's

not

the

case

when

the

node

becomes

unregible.

This

has

the

same

issue

as

the

deployment

controller,

because.

D

B

A

node

becomes

not

not

reachable,

the

port

will

only

be

marked

as

not

ready

after

this

period.

So

this,

if

we

adopt

this

approach,

it's

almost

equivalent

to

running

a

single

replica,

and

maybe

it

could

reduce

a

couple

of

seconds

to

create

a

new

port

and

I,

don't

think

is

where

it

was

to

get

that

little

benefit

by.

B

B

However,

my

only

concern

is

the

operation

cost,

because

in

by

default

or

before

we

make

this

future

officially

supported

the

we

will

have

to

switch

we

how

to

change

the

service

definition

when

running

different

modes.

For

example,

if

a

cluster

is

already

created

and

has

wrong

address

a

single

instance

mode,

it

already

used

service

definition

with

the

selector.

But

if

user

want

to

change

to

another

mode,

they

will

how

to

change

the

service

definition

to

remove

the

selector

and

I

tested

that

by

deleting

by

changing

the

service.

B

The

existing

endpoint

slides

previous

created

will

not

be

deleted

automatically.

So,

but

this

must

must

be

deleted,

because

when

the

active

instance

or

for

Android

controller,

create

and

update

yourself

at

the

endpoints

of

the

service,

it

will

be

mirrored

to

endpoint

slides,

but

the

it

will

not

removed

existing

endpoint

slides.

So

two

endpoint

slides

will

be

both

used

in

by

Q

velocity

or

anti-proxy

to

to

receive

traffic,

which

will

cause

a

problem.

B

B

They

add

an

actual

label

to

the

active

instance

and,

for

example,

they

add

an

this

label

to

the

active

one

and

make

the

selector

to

select

only

active

pot

by

matching

the

this

label,

but

I

think

that

it

is

essentially

not

very

different

from

the

second

one,

except

that

it

is

a

bit

more

complicated

because

it

because

the

active

instance

will

be

response.

We

are

needed

to

also

remove

the

this

actual

label

from

previously

perform

print

with

active

instance,

which

is

hard

to

guarantee

the

transaction

in

kubernetes

when

you

are

operating

several

objects.

B

So

I

think

this

has

no

it's

not

better

than

the

second

one

yeah,

and

so

my

plan

was

my

plan

is

first

we

we

should

consider

setting

a

node

skilled

tent

with

the

zero

Toleration

seconds

to

enter

a

controller.

This

may

be

a

a

big

Improvement

to

most

clusters

that

that

are

not

the

most,

not

a

self-managed

community

cluster

and

may

be

helpful

for

some

Cloud

manager

class

tests

for

cloud

management

class

tests.

B

Maybe

the

failure

over

time

could

be

reduced

from

one

minute

to

40

seconds,

and

another

plan

is

to

implement

the

second

approach

to

support

active

standby

mode,

but

because

of

the

operation

cost

in

the

first

stage.

I

I

want

to

to

to

to

to

make

this

feature

experimental,

and

we

just

provide

a

another

manifest

in

which,

until

service

written

will

be

an

empty

under

the

department.

B

B

C

B

A

C

B

B

They

didn't

mention

how

shortage

it

is

it

should

be,

but

but

because

of

the

the

second

delay

as

I

assume

that

it

may

has

some

impact

more

or

less

even

on

cloud,

even

though

the

cloud

could

delete

an

unregible

Port.

So

our

first

try

to

to

suggest

then

to

to

update

the

deployment,

many

manifest

to

add

a

a

zero

second

Toleration

seconds,

so

that

the

port

could

be

deleted

immediately

and

let's

see

whether

the

the

the

new

time

could

meet

their

requirement.

C

A

I

think

the

team

one,

the

only

comment

that

they

have

is

you

know,

is

probably

trying

to

find

whether

the

effort

for

supporting

Giza

might

be

justified

by

the

benefit

that

we

give

to

our

users

and

I

was

considering

one

aspect

and

when

we

do

things

like

reducing

the

default

or

reachable

Toleration

seconds

from

300

to

zero,

what

we

are

going

to

get

are

often

false

positives.

So

what

is

the

cost

of

a

false

positive?

A

In

my

opinion,

if

I

remember

correctly,

audio

and

Tria

controller

Works

since

there

is,

there

aren't

many

initialization

initialization

tasks

that

need

to

be

performed

at

startup.

The

cost

of

failover

is

pretty

much

just

the

cost

of

destroying

the

older

port

and

creating

in

the

new

pod.

So

let's

say,

even

if

we

have

some

false

positive,

it's

not

going

to

cause

a

big

deal.

Is

that

correct.

A

Yeah

I

mean

we

don't

know

how

frequently

it

will

happen,

but

you

know

I'm

just

wondering

if

we

have

some

Force

positive

like

we

fail

overly

strictly,

but

actually

it

just.

The

node

was

unavailable

just

for

a

couple

of

seconds

right.

So

in

that

case,

are

we

going

to

pay

a

penalty

for

initializing

your

entire

controller

or

does

anyone

Trio

controller

comes

up

comes

up

immediately

and

it's

able

to

operate.

B

Yeah

I

think

it

takes

only

a

few

seconds

to

come

up

a

new

instance.

There

is

no

many

cost

to

initialize

it

and

besides,

it's

not

like

the

the

Pod

becomes

unreadable

immediately,

who

we

will

delete

the

port

first.

There

is.

There

are

40

seconds

duration

for

kubernetes

to

tolerate

the

nodes

and

response

to

to

to

be

unregible

only

after

it

becomes

unregible

for

40

seconds

and

during

which

it

receives

no

any

status

update.

From

that

note,

it

will

mark

this

node

as

not

ready.

D

D

B

B

It

will

promote

itself

as

the

leader,

and

it

will

then

to

do

the

other

things

where

we

will

have

in

controller

we're

going

to

launch

as

a

standby.

It

doesn't

do

the

and

it

it

doesn't

allow

any

controllers

or

modules

and

because

the

storage

is

the

kubernetes

after

the

active

stand.

Active

instance

is

down

and

the

the

data

is

persistent

in

each

City

anyway,

so

the

standby

instance

could

retrieve

the

same

data.

D

Okay,

so

yeah,

another

thing

is

like

we

are

going

with

the

active

standby,

not

active

active,

so

active

active

have

Advantage

like

you,

can

distribute

traffic

over

active

effective

and

it

can

be,

can

act

as

a

load

balancer.

But

in

this

case

only

one

controller

can

be

active

so

that

Advantage

we

are

kind

of

will

not

be

having

an

activist

standby.

E

Thanks,

hey

Chen,

a

quick

question:

I

may

have

missed

something.

Can

you

go

back

to

the

different

options

for

the

service

traffic

routing?

Thank

you

so

for

the

option

you

have

in

red,

which

is

your

preferred

option?

Did

you

mean

that

you

wanted

to

have

like

different

service

definitions

for

the

case

without

and

for

the

case,

yeah

yeah?

Why

don't

we

use

the

same

mechanism

or

the

regular

case?

I

mean

the

the

knowledge

showcase.

B

Yes,

I

I

in

I

did

a

plan

that

in

the

future,

if

this

actually

mode

tends

to

be

working,

fine

and

we

could

unify

it.

But

currently,

if

we

make

the

change

immediately,

it

could

affect

the

existing

users

who

upgrade

from

a

prayer

with

release,

because

some

endpoint

slides

will

not

be

deleted

by

default

and.

B

I

I

was

one

the

my

main

concern

is

my

major

concern

is

to

come

backwards,

compatibility

yeah.

How

and

whether

this

this

is

a

really

good

solution

to

support

the

so

I

will

I

would

prefer

to

have

a

different

definition

and

using

a

different

yam

to

to

deploy

until

HOA

mode

and

to

see

whether

it

proves

to

be

working.

Fine.

A

D

B

A

A

Because

you

know,

I

I

was

mistaken,

because

I

was

thinking

that

the

leader

election

will

kick

off

immediately

and

the

leader

election

will

select

a

new

leader

and

then

the

Readiness

probe

will

return

ready

only

for

the

leader.

So

regardless

of

the

node

of

the

node

status

of

another

reachable

status,

I

was

thinking

that

all

the

parts

that

were

not

elected

as

leader

were

returning,

not

ready,

yeah,

so

I,

I,

misunderstood,

I,

believe,

okay,.

E

B

No

okay:

what

I

searched

is

the

first

and

the

third

one,

but

the

kubernetes

API

server

itself

use

the

second

one

and

but

for

a

different

reason.

A

kubernetes

cube,

API

server

will

report

itself

as

the

endpoints

of

the

kubernetes

service,

but

the

main

reason

is

a

before

itself

is

ready.

I

think

Cuba

controller

manager

is

not

even

able

to

update

the

API.