►

Description

Speaker: Albert P Tobey, Tech Lead, Compute and Data Services at Ooyala

Slides: http://www.slideshare.net/planetcassandra/c-summit-2013-practice-makes-perfect-extreme-cassandra-optimization-by-albert-tobey

Ooyala has been using Apache Cassandra since version 0.4. Our data ingest volume has exploded since 0.4 and Cassandra has scaled along with us. Al will cover many topics from an operational perspective on how to manage, tune, and scale Cassandra in a production environment.

A

All

right

well

welcome.

This

is

my

talk

about

practice.

Make

extreme

Cassandra,

optimization

I

did

not

pick

that

title,

but

it

is

better

than

the

one

that

I've

chose.

I

hope

you'll

get

a

lot,

I'm

going

to

cover

a

lot

of

technical

information,

I'm

going

to

move

fairly

fast,

cuz,

there's

a

lot

of

content

a

little

bit

about

me.

This

is

going

to

be

short,

I'm

sure

you

guys

are

tired

of

hearing

about

all

these

different

companies

and

their.

How

they're,

special

and

unique

I'll

just

say

y'all

is

really

awesome.

A

We've

learned

an

awful

lot

about

how

not

to

manage

Cassandra

clusters

and

how

to

do

it

correctly.

I'm

going

to

talk

a

lot

about

that.

It's

been

a

process

over

the

last

couple

of

years

of

just

gradually

making

incremental

changes

and

making

things

a

lot

better

or

more

colloquially,

make

it

suck

less

I'm.

Introducing

a

term

that,

I

think,

is

new.

A

That

kind,

I

think

describes

what

a

lot

of

us

do

in

terms

of

performance

and

how

you

make

Cassandra

or

any

distributed

system,

or

even

a

single

system

perform

better

and

it's

what

I

like

to

call

being

a

harass

titian,

which

is

using

heuristics

to

rather

than

being

more

scientific,

which

is

expensive.

It

takes

a

long

time.

A

I'll

talk

about

that

more

in

a

little

bit

I'll

talk

about

tools

that

I

use

that

maybe

you

haven't

seen

before

or

didn't

know

how

to

use

them

correctly

and

then

at

the

end,

if

I

have

time

there's

a

lot

of

settings

like

I'll

go

more

into

depth

about

specific

settings

that

we

use

at

a

yella.

And

then

I

got

a

couple

little

things

about

bowel

system

usage,

how

we're

using

btrfs

and

ZFS

to

make

our

clusters

on

store

a

lot

more

data

run

a

lot

faster,

getting

a

lot

more

flexible

about

me.

A

A

Software

operating

system

database

tuning

all

the

way

through

and

what

we're

building

is

a

platform

for

yella

to

use,

Cassandra

and

Hadoop,

and

all

of

these

different

large

big

data

systems,

so

that

all

the

engineers

don't

have

to

get

concerned

with.

How

do

I

setup

Hadoop?

How

do

I

interact

with

Hadoop

so

we're

building

services

in

front

of

these

systems

so

that

all

the

developers

can't

have

to

do

is

just

know

where

the

services

is

submit

their

jobs

and

then

forget

about

it?

A

We

have

about

100

Cassandra

nodes,

spread

of

costs

eight

to

ten

clusters.

This

is

probably

going

to

more

than

double

in

the

next

month

or

so,

and

then,

obviously,

the

obligatory

we're

hiring

make

a

char

happy.

We

always

found

in

2007.

Most

of

stuff

is

pretty

boring.

230

employees,

200

million

unique

users.

The

important

one

on

here

is

2

billion,

analytic

events

per

day.

A

We

really

appreciate

all

their

efforts

and

stabilization

and

operational

support,

use

cases

that

we

have

all

of

our

analytical

data.

I

have

a

slide

on

this

in

a

minute,

but

basically

gets

batch

processed

in

the

results

of

all

of

our

roll-ups

and

things

get

written

into

Cassandra

so

that

those

can

be

exported

to

our

web

interfaces

for

our

customers

to

use

cassandra

provides

us

that

very

low

latency

ability

to

fetch

values

and

chill

them

to

our

customers.

We

use

also

use

it

in

various

places,

is

just

a

highly

available.

A

Keystore

forget

all

of

the

columns

or

storage

and

all

of

that

it's

a

really

nice

distributed

key

value

store.

So

you

just

don't

have

to

think

about

cache

coherency

or

you

know.

Do

I

connect

the

memcache,

d1

or

memcache

d

to

do

I

deal

with

hashing.

You

just

forget

about

all

that

crap

connect

to

any

node

in

the

cassandra

cluster

and

your

data

is

there.

Well,

we

have

an

internal

monitoring

tool

called

Hastur

that

we

use

to

track

all

of

our

metrics,

so

there's

a

stat

CT

style

interface.

A

It's

basically

in

place

of

where

you

normally

use

graphite,

arm

that

that's

currently

receiving

about

50,000

in

metrics.

A

second

writing

that

and

do

a

cassandra

cluster

of

a

five

nodes.

We

use

it

for

play

head

tracking.

So

if

you've

used

netflix

and

you

watching

a

movie

on

your

ipad

and

you

pause

it

and

then

you

shut

it

down

and

you

go

to

your

TV

and

you

bring

up

the

same

movie,

it

will

resume

where

you,

where

you

left

off.

We

use

cassandra

for

the

same

I'm,

pretty

sure

Netflix

uses

for

that

too.

A

A

A

You

know

if

you're

having

buffering

issues,

what

bitrate

you're

at

a

bunch

of

different

little

things

like

that,

your

IP

address,

so

we

can

do

geo-tracking

things

like

that.

So

all

that

data

goes

and

it

gets

HTTP

push

to

our

edge

arm,

which

I

call

the

loggers

that

data

gets

crammed

into

s3,

which

is

a

big

black

box

on

purpose,

because

that's

how

we

treat

it

and

then

our

Hadoop

cluster

pulls

all

that

data

down

into

our

local

HDFS

for

crunching,

and

then

that

writes

into

Cassandra

and

then

so

on

and

so

forth.

A

Then

the

ABE

service

is

basically

just

a

front

end

to

Cassandra

that

our

web

tier

can

access

to

get

to

the

data.

So

here's

a

little

Oh

like

oh

right,

there's

something

I

was

going

to

talk

about

there

so

that

read-modify-write

there

I

don't

have

access

to

my

notes.

So

there's

a

weird

setup

here:

read-modify-write

is

what

I

want

to

talk

about

first

verse

for

performance.

A

Second,

we

don't

even

think

about

synchronization.

We

run

five

instances

of

the

service

and

we

just

don't

have

to

worry

about

any

kinds

of

performance

issues.

There.

There's

no

sharding,

there's

no

there's

no

a

consistent

hashing

at

the

service

layer.

It

just

writes

into

Cassandra

and

it

doesn't

matter

which

one

does

the

work,

so

it

kind

of

an

example.

What

happens

when

you

do

read,

modify

write

so

I

came

up

with

this

example.

So

I

was

thinking

about

trying

to

think

of

an

example

and

I

thought.

A

Well,

a

good

thing

to

model

would

be

how

much

my

team

is

going

to

drink

their

moments.

I

whole

team

is

here

so

I

said

that

you

know

with

Philip

he

he'll

probably

drink

a

lot.

He's

he's

a

beer

guy,

so

he's

got

12

drinks.

We

got

Kristoff

he's

in

London,

nobody

in

London

drinks,

right

Kelvin,

doesn't

really

drink

a

lot.

Frank's

Frank's,

a

nice

guy.

He

drinks

a

few

drinks

every

now

and

then

and

so

on.

So

getting

closer

to

the

conference.

A

A

couple

days

later,

the

mem

table

has

been

flushed

to

disk

into

an

SS

table

and

I

want

to

make

an

update,

so

I

go

and

I

read

the

value

out,

maybe

or

maybe

I

just

override

it

and

I

say

I

update

like

I'm

not

going

to

drink

that

much

because

I

have

to

talk

on

Wednesday

and

Wednesday

night.

I

got

to

go

home,

so

I'm

not

gonna,

drink.

Anything

and

Philip

tells

me

he's

quit

drinking.

I

berate

him

for

his

lack

of

dedication

and

then

update

the

column,

value

and

then

closer

to

the

conference.

A

A

So

now

that's

sitting

in

a

mem

table

in

Cassandra

there's

an

SS

table

on

disk

that

has

the

old

value

so

now

you're

back

into

conflict

resolution

territory

and

there

have

been

other

talks

about

conflict

resolution

not

going

to

go

into

that

right

now,

but

that

what

this

means

is

now

you

have

it.

You

have

to

compact

before

you

get

totally

consistent

right.

You

have

two

values

in

your

database

system

until

this

until

all

of

the

compaction

stuff

fires.

So

by

avoiding

read-modify-write

you

just

don't

even

have

that

problem.

A

You're,

your

Compassion's

are

going

to

be

a

lot

smoother

you're,

going

to

have

a

lot

less

conflict

resolution

stuff

to

deal

with

and

that's

kind

of

the

lesson

there

and

then,

finally,

after

a

while,

it

all

gets

compacted

down

and

that's

what

it

looks

like

when

it's

all

done.

So,

if

you

have

a

lot

of

hot

data,

one

of

the

one

of

the

patterns

I

really

push

our

developers

to

use

is

to

do

that.

Insert

workload

to

the

point

where

we

actually

do

stuff.

A

Where

we

do

we

waste

work,

so

we

like

to

insert

the

same

value

twice,

sometimes

and

insert

it

over

the

same

over

the

same

key

value,

same

set

of

keys,

the

same

value

and

let

kassandra's

conflict

resolution

take

care

of

all

the

overrides

and

it

works

really

well

for

us

so

moving

on,

so

that

that's

our

old

architecture.

So

this

here's,

a

bit

of

the

practice,

makes

perfect

stuff.

A

So

in

2011,

when

I

started

at

yellow,

we

were

running

on

Cassandra

06,

all

kinds

of

operational

issues

with

it,

but

the

most

important

one

was

is

that

if

you've

been

with

Cassandra

for

a

while

and

you

kind

of

how

it

works,

it

has

this

property

of

once.

You

start

up

a

cluster

and

get

it

kind

of

working

for

the

most

part.

It's

easy

to

forget

about.

So

we

had

this

18

node

Cassandra

cluster

sitting

there

for

years,

and

people

just

forgot

about

it.

A

People

forgot

to

do

backups,

they

forgot

to

do

compaction

because

we

were

still

on

sized

heerd.

They

forgot

to

do

repairs

and

we

have

that

rehab

II

read-modify-write

workload

and

we

had

some

deletes

in

there.

So

we

had

expired

tombstones.

We

had

all

kinds

of

issues

of

the

data,

so

what

ended

up

happening

is

to

get

to

zero

dot,

7

and

0

to

8

and

it

being

zero

that

eight.

A

We

we

looked

at

all

the

different

options

for

reading

data

out

of

Cassandra

with

their

tools,

and

there

wasn't

a

lot

of

good

tooling

there

and

one

of

our

guys

said

well,

I'll

just

rip

the

data

of

the

SS

tables

and

in

a

big

MapReduce

job

and

write

it

back

out

to

Cassandra

and

really

that's

you

can

do

that

and

yeah.

It

would

be

easy

right.

Are

you

sure

you

know

what

that

word

means,

and

you

know

he's

one

of

these.

A

Just

these

wicked

smart

guys

we

have

a

bunch

of

running

around,

and

so

he

went

and

used

one

to

play

with

Scala,

so

you

skali,

he

pulled

the

SS

table

classes

out

of

out

right

out

of

the

cassandra

source,

wrote

a

MapReduce

job

that

what

that

could

read

the

SS

tables

and

right

back

into

the

front

end

of

Cassandra.

That

way,

the

clusters

are

completely

separate.

This

is

all

happening

in

production

and

we

needed

to

do

the

whole

migration

with

zero

downtime.

A

So

what

we

did

is

it

was

like

well

if

we

could

pull

all

the

snapshots

in

the

HDFS

and

just

process

them

there.

That

would

be

really

nice,

but

we

didn't

have

enough

space

in

the

Hadoop

cluster

and

no

time

to

buy

more

city,

so

I

said

well.

We'll

just

do

this

trick.

Will

export

over

NFS

all

the

snapshots

to

the

Hadoop

cluster

and

do

this

big

crossover

everything

mounts

everything

craziness.

A

It

turns

out

the

colonel.

We

were

running

at

the

time

we've

been

on

there

for

years

and

didn't

have

an

NFS

support,

sort

of

all

crap,

and

so

we

looking

around

like

glossary

FS

point-to-point

actually

worked

out

great.

So

we

did

Gloucester

FS

point-to-point

export

the

same

mishmash

mess

and

ran

a

MapReduce

job

across

it

completely

saturated

the

network.

A

For

three

days

and

at

the

end

of

the

day

we

had

a

nice

new

cluster

with

completely

consistent

data,

we'd

scrubbed

out

all

the

expired

tombstones

fixed

up

all

the

inconsistencies

and

rebuilt

all

of

our

indexes

and

that

that's

our

first

migration.

If

we

do

this

again,

so

lessons

learns

there.

Oh

don't

forget

about

your

Cassandra

cluster.

A

A

A

So,

while

this

transition

is

happening,

I

had

the

opportunity

to

watch

this

receiving

cluster

be

under

some

extreme

duress.

You

know

doing

20,000

30,000

inserts

a

second

which

is

higher

than

we'd

seen

before

and

was

pretty

high

40

days,

so

we

ended

up

going

to

08.

We

end

up

going

to

a

24

gigabyte

heap,

which

is

not

recommended

anymore

I

used

to

recommend

it

about

a

year

ago.

But

what

happens

is

if

you

don't

have

it

a

big

enough

cluster?

You

spent

you're

going

to

have

a

lot

of

frustration

from

your

engineers.

A

What

dealing

with

just

really

slow

fetch

it

reads

and

writes,

because

the

JVM

is

too

sitting

there

doing

GCS

right,

you're

gonna

have

a

hundred

millisecond,

sometimes

500

baby,

sometimes

a

thousand

milliseconds

of

GC

time

when

you

have

that

big

of

a

heap

just

because

it's

got

to

scan

all

that

memory.

We

move

to

java

16

moved

to

the

newer

colonel,

because

we

were

on

a

bunt

too,

and

the

Colonel's

that

ship

with

a

bunt

to

just

aren't

as

fast

as

what

you

can

build.

A

So

we

chose

to

go

with

raid

5

at

the

time

and

then

the

biggest

thing.

The

most

important

thing

that

I

really

want

everybody

to

remember

is

turn

swap

off

on

your

cassandra

boxes.

If

you

don't

have

another

reason

like

you're

running

other

applications

that

require

it

turn

it

right

off,

swap

off

minus

a

is

your

friend

you'll

have

more

consistent

performance.

A

You

won't

have

as

many

WTF

moments

where

you're

just

looking

at

and

you

just

have

these

latency

spikes

and

what

the

hell

is

going

on

and

nothing's

wrong

with

the

system

and

when

I

log

into

the

system,

if

I

see

swap

going

at

all

it's

the

first

thing.

I

do

always,

if

you

can't

disable

swap

I've

talked

to

a

few

people

that

say

well,

we

have

applications

that

really

require

swap

they.

You

know

sometimes

they'll

just

run

a

little

over

and

we

just

don't

want

them

to

crash.

A

A

Vfs

cash

is

really

really

greedy

and

what

it'll

do

is,

if

you've

been

using

links

for

a

long

time.

You

remember

the

days

when

locate

would

run

and

you'd

come

to

your

box

a

couple

hours

later,

and

none

of

your

would

work

because

it

would

been

all

swapped

out

to

your

disk

and

you

got

to

wait

an

hour

for

everything

to

come

back.

Sometimes

you

just

turn

the

power

off

turn

it

back,

Isis,

faster

right

or

you

log

on

to

log

into

a

system.

A

You

think

maybe

it's

swapping,

but

you

can't

get

in,

because

SSH

has

been

swapped

out.

All

these

problems

just

go

away.

So

what

linux

does

it

that

causes

us

to

happens?

When

you

do

a

bunch

of

I/o

letters

will

actually

be

trying

to

optimize

that,

for

you

and

it'll

go

and

steal

as

much

memory

from

this

free

memory

from

the

system

as

it

can

to

make

space

for

VFS

cash,

which

is

great,

except

that

now

it

swapped

out

your

sshd.

It's

starting

to

try

to

swap

out

your

jvm

and

that's

just

bad

news.

A

Inconsistent

performance

really

slow

and

sometimes

the

box

will

just

become

unresponsive

and

never

come

back.

If

you

set

vm

SWA

penis

21,

it

tells

the

colonel

it

says

I

never

want

that.

Stupid

behavior

just

keep

just

you,

take

the

memory,

that's

free

use

that,

but

never

swap

out

my

applications

until

unless

you're

under

extreme

duress

more

on

that

a

little

bit

so

this

year

we

did

the

or

toward

the

end

of

last

year.

In

beginning

this

year

we

did

this

migration

again.

So

I

had

gone

off

to

another

team.

A

A

Hadoop

cluster

run

it

on

this

new

greenfield

cluster

and

avoid

all

the

issues

with

like

over

are

overloaded,

MapReduce

cluster.

This

process

works

really

well

and

you

end

up

with

a

nice

clean,

the

cleanly

distribute

II,

don't

have

to

worry

about

like

snitch

problems

or

replication

problems.

It's

all

just

works,

so

we

made

a

few

more

changes.

We

do

that

again.

This

cut

this

new

cluster

is

different

to

hardware,

a

different

different

layout,

and

we

it's

time

to

tune

again

so

we're

on

DSE.

A

We

love

to

heap

the

same

because

we

still

had

these

massive

bloom

filters

in

memory.

I'll

talk

more

about

tuning

your

bloom

filters

in

a

little

bit

and

we

did

we

stuck

with

the

same

distro

on

a

bunt

to

lucid,

which

I

definitely

don't

recommend

the

the

whole

job

ran

a

lot

faster

this

time.

So

instead

of

running

on

an

80

node

Hadoop

cluster,

which

is

one

of

our

production

clusters,

writing

into

this

36

note

cluster.

A

We

actually

ran

the

whole

thing

on

there,

so

it's

doubling

up

its

workload,

but

it

still

ran

faster

because

of

things

like

DSC.

Cfs

is

a

lot

lower.

Latency

hdfs

is

by

default.

So

it's

it's

really

nice

in

that

way,

when

you

go

and

you

upload

a

file

to

it,

just

goes

BAM

and

it's

in

there

because

it's

built

on

top

of

Cassandra

instead

of

this

HDFS

architecture,

that's

loaded

with

latency

and

connections

going

all

over

the

place.

A

So

the

mistakes

we

made,

we

moved

back

to

raid

0

because

we

had

problems

with

disks

filling

up

past.

The

fifty

percent

mark

against

I

steered

compaction

bidis

again.

I

would

never

do

again

because,

as

soon

as

we

did

a

couple

days

later,

disks

a

couple

discs

fail

on

the

cluster.

Fortunately

they

were

spaced

out

in

the

ring.

Those

nodes

went

down

and

we

had

to

go

and

deal

with

Dell

and

our

data

centers

remote.

A

We

had

to

go

and

do

all

that

stuff,

which

is

why

a

lot

of

people

love

ec2,

but

the

nice

thing

is

if

you're

on

raid

5

or

age

6

you

just

don't

have

to.

You

can

wait

a

couple

days

to

deal

with

it

and

that's

just

really

nice.

So

the

from

this

point

forward

we're

pretty

much

sticking

with

some

kind

of

rate

underneath

Cassandra

and

I

know,

that's

not

generally

recommended,

but

it

just

makes

my

life

a

lot

easier

when

you're

on

hardware

and

then

we're

still

being

on

a

bun

to

lucid.

A

We

also

took

this

opportunity

to

make

few

changes

to

our

schema

so

when

you're

doing

performance

tuning

one

of

the

most

important

things,

probably

the

most

important

factor

in

performance-

is

your

data

model

in

any

database.

I

don't

care

if

it's

mysql,

if

it's

berkeley,

DB

or

if

it's

cassandra.

If

you

get

your

data

model

right,

you

got

a

lot

of

overhead

to

get

to

come

up

with

really

good

performance.

A

These

aren't

necessarily

data

model,

but

I

just

want

to

make

sure

I

mentioned

that

compaction

strategy

move

move

to

leveled

compaction.

If

you

love

your

ops,

people

I

highly

recommend

sticking

with

leveled

compaction.

Even

if

sized

here

is

a

little

bit

faster.

Leveled

compaction

is

a

lot

friendlier

to

your

operations.

A

People

because

you

don't

have

that

fifty

percent

threshold

on

disk,

where

you

wear

your

size,

tiered

disk

usage

once

it

crosses

fifty

percent

or

two

times

your

largest

SS

table

or

your

largest

column

family,

then

basically

you're

stuck

you've

got

to

put

more

discs

in

the

node

or

do

these

kinds

of

crazy

migrations

that

we've

done

in

the

past.

So

if

you're

unleveled

compaction,

you

only

need

enough

free

for

two

compact,

two

S's

tables

together.

So

in

my

systems

you

know

I

need

about

a

gigabyte

free

so

arm.

That

was

a

big

change,

bloom

filters.

A

So

this

is

changing

a

lot,

especially

with

LCS

and

12,

in

on

words,

but

prior

to

that,

we

found

that

we

end

up

running

24,

gig

heaps

because

we

had

these.

These

calm

families

where

we'd

have

rose

is

what,

with

15

million

columns

in

them

and

those

would

just

use

up

a

ton

of

space

for

the

bloom

filters

and

I

was

talking

to

Ben

covers

in

one

days

like

yeah,

you

need

to

adjust

your

bloom

filter

and

we

did

and

we

actually

can

drop

down

back

down

to

eight

gig

heaps,

so

GC

gets

smoother.

A

The

whole

system

just

starts

running

a

lot

more

consistency

once

you

have

the

smaller

heap

size.

So

that's

something

to

look

at.

That's

what

we

use:

zero

dot,

one,

that's

not

a

great

value.

It's

just

kind

of

a

we

just

kind

of

just

said:

we

didn't

have

time

to

really

sit

and

play

around

with

different

values.

So

we

just

picked

one

that

was

basically

effectively

disabling

the

bloom

filters

see

what

happens.

We

started

cramming

all

that

data

in

and

our

false

positive

rates

still

really

low.

A

So

then,

when

you're

tuning

this,

you

really

want

to

look

at.

If

you

look

at

node

tool,

CF

stats

you're

going

to

see

in

there

the

bloom

filter,

false

positive

rate.

That's

what

you

want

to

look

at

to

tell

if

you've

got

the

right

value,

the

default

is

0007.

I

moved

up

to

0.1

and

I

still

have

just

a

few

add

a

false

positives,

which

is

fine

by

me

because

I'm

not

io

bone,

the

other

big

ones

SS

table

size

in

megabytes.

A

So

this

is

on

a

per

column,

family

value,

if

I

remember

correctly,

and

what

we

found

is

the

default

of

5

megabytes.

So

if

you

have

really

deep

Cassandra

nodes,

so

the

recommendation

and

tillery

I

don't

know

what

it

is

right

now,

but

you

used

to

always

be

on

IRC

s

like

don't

go

over

200

gigs

per

node

may

be

on

SSD,

it's

a

little

different,

but

we're

on

spinning

rust.

A

So

what

we

found

was

is,

with

the

five

megabyte:

we'd

have

500

gigs

on

a

node

and

that's

a

whole

hell

of

a

lot

of

SS

tables

on

disk

and

when

Cassandra

starts

up,

it

literally

opens

every

single

one

of

those

files

and

em

apps

it.

So

we

use

a

ton

of

file

descriptor

years

we

run

and

we

ran

into

limits

with

em

maps,

the

Linux

setting

for

max

em

maps

and

okay.

A

Right

so

the

jb

m

and

the

current

we're

spending

an

awful

lot

of

time,

just

managing

all

these

file

descriptors.

These

huge

lists

of

files

that

were

open.

We

saw

like

really

high

system

load.

We

saw

in

just

the

ton

of

CPU

usage

on

a

load

that

really

should

just

be

very

low,

CPU

in

very

high

I.

Oh,

we

turn

on

snappy

compression,

not

terribly

impressed

and

then

we've

set.

We

turn

compaction,

throttling

right

off,

I've,

been

told

by

the

day

stacks

guys.

This

isn't

really

necessary

anymore.

A

A

I

have

an

example

of

that

later

of

how

to

look

for

it,

but

what

happens

is

if

you're,

not

if

you're

compaction

is

get

behind,

then

you

kind

of

get

into

this

loop,

where

you're

inserting

data

most

of

us

they're

using

Cassandra,

have

a

pretty

constant

insertion

rate

right.

It's

not

it's

not

a

wave

like

this.

A

You

just

have

this

like

50,000,

a

second

24

7

wham

into

the

database

right,

so

compaction

would

get

behind

for

us

and

we

were

doing

these

really

high

insert

rates,

and

then

you

know

the

next

one

to

come

along

and

x1

come

along

and

they

just

stack

up

forever

until

you

just

it

was

impossible

to

get

out

of

that

mess.

We

turn

the

limit

all

the

way

up.

I

said

you

know,

this

disk

array

should

be

able

to

do

about

500

megabytes.

A

A

second

I

turned

it

all

the

way

up

to

you

know

a

thousand

megabytes

a

second

and

it

still

couldn't

keep

up

turn

it

off.

It

does

just

fine

right,

so

if

you

have

linux

tune,

just

the

right

way

doing

all

that

extra

I,

oh,

isn't

that

big

a

deal

most

of

it's

actually

happening

in

memory.

There

are

a

couple

of

settings

you

can

play

with

to

make

that

even

more

the

case

I'll

time

coming

to,

and

this

works

just

fine.

So

if

you

it

might

be

worth

something

worth

playing

with.

A

If

you're

dealing

with

that

issue,

where

you

have

really

high

insert

rates

your

compaction

czar

getting

behind

on

leveled

compaction,

it

might

be

worth

trying

this

especially

on

SSDs

and

then

now

we're

actually

working

on

yet

another

migration.

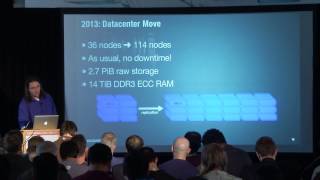

But

this

one's

not

it

because

we

screwed

up

time

it's

because

we

want

to

move

from

our

old

data

center

cage

into

a

new

bigger

one,

with

more

more

racks

available

and

all

that

stuff.

So

we're

going

to

a

much

bigger

cluster

nodes

of

time.

A

So

what

we're

moving

at

to

is

our

new

pipeline

is

going

to

be

all

those

events.

I

talked

about

earlier.

Those

analytical

events

are

going

to

be

flowing

into

Cassandra

in

real

time

as

they

come

in

and

then

we're

going

to

be

running

analytical

jobs

over

that

data

to

do

successive

aggregations

directly

out

of

Cassandra,

so

both

read

and

write

workloads

are

going

to

be

pretty

much

equal

in

a

few

months.

A

So

this

is

the

next

generation,

so

a

lot

of

stuff

that

should

be

familiar

to

everybody

in

the

Big

Data

community.

If

you're

new

to

this

stuff

I'll

quickly

cover

it.

Kafka

is

a

really

cool

tool.

It's

if

you're,

using

storm

or

Cassandra.

Basically

any

of

these

big

data

stacks

where

you

want

to

do

real

time

streaming.

Inserts

Kafka

is

a

really

nice

tool

from

LinkedIn

open

source.

That,

basically,

is

a

message:

queue

for

really

high

volume,

rep

messaging,

so

basically

all

those

logs.

A

But

get

written

real

time

as

a

request

coming

into

calc

into

a

casket

queue,

and

then

we

have

an

in

gesture

that

pulls

off

of

those

calf

get

queues

and

writes

into

Cassandra.

What

that

lets

us

do

is

if

we

want

to

bounce

that

in

gesture

or

have

it

down

for

ten

minutes,

Kafka

will

eat

up

all

the

rights,

and

that's

generally,

what

you

like

message:

keys

for.

A

A

A

That's

the

business.

A

lot

of

us

are

actually

in

right,

we're

not

building

you

know.

A

lot

of

us

aren't

going

out

and

discovering

new

algorithms

or

building

new

hot

hot

snot,

like

computer

systems,

we're

taking

a

bunch

of

components

like

Cassandra

and

Kafka

and

Scala,

and

bringing

them

together

to

build

an

aggregate

service,

that's

unique,

but

from

common

parts

off

the

shelf.

A

So

we

have

it's

a

more

practical

than

than

pure

computer

science,

and

so,

when

we're

talking

about

tuning,

there's

kind

of

a

philosophy

that

I

have

that

I've

really

worked

hard

to

try

to

be

able

to

share

with

people

and

the

main

point

being

is

there's

a

lot

more

to

tuning

than

just

raw

performance

right.

So

the

number

one

is

security.

It's

always

security.

Your

number

one,

that

any

kind

of

design

decisions

should

always

be

security,

even

if

you're

turning

it

off,

which

a

lot

of

us

do

with

Cassandra.

You

turn

off

authentication.

A

You

don't

bother

to

do

crypto,

because

it's

a

pain

in

the

ass.

You

still

need

to

think

about

it

and

it

needs

to

be

a

conscious

decision.

Then,

after

that,

you

know

your

cost

of

goods

sold

like

sure

it

would

be

great

if

I

could

have

a

thousand

node

Cassandra

cluster

and

ec2

on

SSD

instances.

I'd

never

have

to

touch

a

damn

thing

right,

but

my

business

would

would

be

I'd,

be

spending.

You

know

fifty

thousand

dollars

a

month

on

that

cluster

or

whatever

it

costs.

A

A

How

much

are

your

Ops

guys

going

to

hate

you

or

hate

themselves?

You

need

to

think

about

that.

So,

when

you're

picking

Cassandra

it's

a

great

database

for

your

ops

people

write

a

note

can

fail

in

the

middle

of

the

night.

They

look

at

their

phone.

They

go

back

to

sleep.

That's

what

I

do

that's,

what

a

good

ops

person

does

with

a

distributed

database,

but

for

for

the

engineers

in

the

room

when

you're

designing

your

schemas,

it

might

be

tempting

to

mess

around

with

your

replication

factor

and

say

you

know.

A

Maybe

three

is

too

many:

I

don't

want

to

store

the

data

that

many

times

they're

running

rate

under

it.

Why

it's

a

big

deal?

Well,

it's

a

big

deal,

because

if

it's,

if

your

application

factor

is

2,

that

means

your

system

in

has

to

get

up

in

the

middle

of

night

and

fix

that

node,

because

if

the

other

one

fail,

if

the

next

node

in

the

replica,

if

the

nodes

replica

fails

at

the

same

time,

they

got

it

now,

you

got

an

emergency

on

your

hands

right.

So

just

keep

that

in

mind

as

you're.

A

Designing

schemas,

as

you

make

messing

with

all

these

settings,

is

think

about

what

happens

in

the

middle

of

the

night

when

stuff

fails,

because

it

always

does

it

never

fails

during

the

day.

It

always

fails

during

your

kids

baseball

game

or

in

the

middle

of

the

night,

when

you

just

having

the

best

dream

of

your

life.

A

You've

all

woken

up

in

the

middle

of

night,

because

something

like

that

right

so

and

then

you

know

physical

capacity

do

I

have

Rackspace,

you

know.

Do

I

have,

can

I

get

the

hardware

so

on

reliability

and

resilience,

I

recommend

everybody

look

up.

John,

all

spas

has

blocked

nice

blog

posts

and

a

few

talks

about

resilience

and

just

in

general,

the

philosophy

of

it

I'm

not

going

to

go

into

that.

A

But

you

know

going

back

to

the

replication

and

stuff

think

about

reliability

and

resilience

and

then

there's

compromise,

which

is

probably

the

one

that

that

is

the

most

frustrating

to

deal

with.

So

you

have

different

business

use

cases.

You

have

a

whole

bunch

of

different

engineers

that

have

different

opinions

and

how

to

build

schemas

and

build

databases

and

which

one

they

should

choose

should

be

used.

React

should

be

used

Cassandra.

Should

we

write

our

own

or

have

this

like

massive

level

d

bees

all

over

a

hundred

boxes?

A

You

know

you

got

to

make

compromises

in

your

tuning.

It

actually

has

tuning.

Your

system

needs

to

take

all

those

compromises

into

account.

So

I

think

I

got

the

hope

we

got

the

point

across

that

you

know

it's

not

just

the

maximum

performance

that

you're

looking

for

you're

looking

for

the

the

best

of

the

best

that

you

can

do.

They

look

not

the

lowest

common

denominator,

but

something

a

lot

like

it,

hopefully

not

as

depressing.

A

So

I

mentioned

earlier

a

little

bit

about

the

this,

the

science

of

tuning.

I

won't

spend

a

lot

of

time

on

this

and

bore

you

guys

with

my

my

philosophy,

but

the

the

video

reason

why

I

brought

that

up

is

because

I'm

not

a

scientist

about

it.

It

would

be

really

great

if

I

could

spend

a

month

in

the

lab,

with

cassandra

playing

with

all

kinds

of

different

hardware

and

memory

and

and

get

it

just

running.

Just

super

awesome

before

I

show

it

buddy.

A

That

would

be

awesome

right,

I've

done

it

before

other

jobs

and

it's

fun,

but

the

reality

is

production

comes

first

and

you

got

to

get

the

code

shipped.

So

how

do

you

tune

a

system

to

the

best

of

your

ability

without

actually

going

and

often

having

a

lab?

So

what

I'm,

trying

to

bring

up

this

term

look

at

this

Harris

Titian,

like

you

got

to

use

heuristics

you

got

to

use

your

gut.

You

got

to

use

your

education

to

make

decisions.

A

You

know

when

I'm

choosing

hardware,

I

know

that

you

know

quad

channel

ddr3

is

going

to

do

a

lot

more

bandwidth

than

dual

channel

and

if

I

imbalance,

the

banks

and

I

don't

have

to

test

that

like

it's,

just

that's

how

the

hardware

is

so

you

can

make

that

decision

without

actually

testing.

It

is

what

I'm

kind

of

getting

at

and

your

your

brain

is

really

good

at

her.

This

kind

of

stuff

right.

This

is

what

we

do

better

than

computers

and

for

now

anyway.

So

you

should.

A

You

should

learn

to

use

that

ability

if

there's

a

really

good

book

by

Malcolm,

Gladwell

called

blink.

That's

all

about

this

kind

of

stuff

and

how

gut

reactions

will

often

beat

clinical

studies

and

scientific,

very

measured

control

group,

and

you

know,

being

very

careful,

he's

careful

studies

and

that

they

did

studies

with

doctors

and

things

where

they

found

that

you

know

you

could

go

through

this

procedure.

A

That

was

designed

in

a

lab

for

treating

heart

patients,

but

they

found

that

if

they

just

had

the

doctor

make

a

quick

snap

decision

out

of

his

gut

that

outcomes

were

better.

There

are

a

lot

of

factors

to

that,

but

I

recommend

the

book

highly

and

then

it

back

to

you

know.

The

practical

approach

is

just

concentrate

on

bottlenecks.

Look

at

your

cluster

in

production

and

look

at

what's

what's

slowing

you

down

is:

are

you

doing

a

lot

of

I/o

is?

Does

it

look

like

your

memory?

Bus

might

be

over

saturated.

A

If

you

see

one

process,

if

you

open

up

top

and

you

hit

H

and

you

can

see

all

the

threads

and

there's

one

thread

on

top

doing,

one

hundred

percent

CPU

and

all

the

rest

of

them

are

idle.

You

probably

have

GC

problems

and

I

just

wanted

to

mention

this

one

real,

quick,

this

is

kind

of

getting

popular

in

the

ops

side

of

the

world.

Is

the

OODA

loop.

This

comes

out

of

the

military,

there's

a

really

good,

Wikipedia

article

on

it.

If

you

want

to

google

it

billy

says

this

is

my

slight

variation.

A

Why

I'm

tuning?

Is

you

know,

II

observed

production

systems

under

load

under

your

load,

because

it's

the

only

thing

that

actually

matters.

Nobody

gives

a

crap.

If

you

can

make

Cassandra

superfast

in

the

lab

and

then

you

put

it

production

and

your

production

load

crushes

it

right

so

tune

with

your

load.

If

you

can

replicate

it

in

the

lab

great,

but

a

lot

of

us

can't

we

don't

have

the

resources,

we

know

I'm

the

money,

but

at

the

time,

so

you

have

to

observe

your

production

clusters

in

production.

A

It

make

changes

safe

changes

that

you're

confident

about.

Maybe

you

test

those

on

a

small

set

up

an

ec2

for

a

couple

days,

shut

it

all

down

and

then

try

it.

If

you're

not

confident,

you

are

confident

or

you're

crazy,

like

I.

Am

you

just

make

the

change

in

production

on

one

or

two

nodes

and

then

roll

through?

And

you

can

make

these

changes

with

confidence,

because

you

just

get

good

at

knowing

what

the

health

of

your

cluster

is.

I

won't

spend

any

more

time

on

that.

So

that's!

Basically

what

I'm

coming

to

is.

A

You

know

I

love,

testing,

shiny

things,

I

love

play

with

these

settings.

I

love

getting

an

extra

one

percent

out

of

my

cluster

and

I

know

I'm

kind

of

on

the

outside

with

that.

But

you

know,

linux

kernel

has

come

a

long

ways

and

performance

in

the

last

few

years,

if

you're

on

sent

os6,

your

kernel

is

missing.

Probably

twenty

percent

performance

overhead

on

a

lot

of

things.

The

memory

subsystem

has

gotten

a

lot

better.

The

I/o

subsystem

has

gotten

a

lot

faster,

a

lot

more

parallel.

A

Most

of

the

file

systems

have

gotten

better

I've

run

into

weird

bugs,

like

XFS

has

had

this

bug

a

while

back

with.

If

you

set

Alex

eyes

to

anything

it

would

all

so

you

could

set

XMS

up

so

that

when

you,

when

Cassandra,

goes

to

do

a

right

on

esos

table

right,

it

opens

up

a

file

and

it

goes

to

the

right.

A

The

first

bite

under

the

covers

XFS

would

actually

go

if

you

set

this

value

to

like

Alex

I

is

equals

64

Meg's,

it's

a

really

cool,

optimization

right

because

it

goes

and

allocates

the

whole

64

Meg

chunk

under

the

covers

right.

As

soon

as

it

gets

the

first

byte

written

to

the

file

system,

so

you

don't

follow

the

success

of

streaming

right

out

of

Cassandra.

A

Just

there's

you

don't

have

the

overhead

of

going

and

finding

an

open

block

on

the

disk,

so

it

can

just

stream

it

all

out

as

fast

as

possible,

except

there

is

a

bug

and

that

it

would,

if

you

were

only

Road

out

like

five

megabytes,

that

64

make

it

would

still

leave

the

64

Meg's

allocated

on

the

files

system

and

your

disc

could

fill

up

like

in

a

couple

days,

especially

in

LCS

I,

like

playing

with

different

Linux

distributions.

I,

don't

typically

do

that

in

production,

but

you

know

it's

something

that

comes

into

play.

A

A

lot

of

people

just

pick

sent

to

us

because

that's

what

the

organization

uses,

or

they

pick

a

bunt

too,

because

it's

cool

and

popular

with

developers,

but

there

are

things

you

can

do

with

those

distributions

by

changing

out

some

of

the

the

components

you

know.

Looking

at

what

jbm

my

shipping.

Obviously

most

of

us

are

shipping

our

own

JVMs.

A

For

that,

the

colonel

it

ships

with

it,

which

lib

see

is

it

and

how

is

it

compiled

and

how

much

performance

can

I

extract

out

of

that,

because

the

JDM

depends

on

it

very

tightly

playing

with

different

file

systems

wearing

ZFS

on

Linux

in

production.

Now

and

it's

it's

awesome,

you

get

most

of

the

advantages

of

ZFS

and

it's

in

kernel,

and

it's

fast

as

hell.

Btrfs

is

really

interesting

because

it's

in

the

linux

kernel

it

ships

with

most

of

the

distributions.

Now

it

has

some

of

the

features

of

ZFS.

A

It

doesn't

have

any

of

the

performance

features,

but

it

does

have

LGL

compression.

It

has

support

for

ssds

with

trim

commands.

It

does

its

own

version

of

raid

0,

that's

built

into

the

file

system,

so

that

the

file

system

is

aware

of

where

the

blocks

are

distributed

and

can

do

optimizations

that

you

can't

do

with

these

successive

layers

of

lvm

and

md

raid

and

the

disk

device

is

abstracted

so

far

away

that

the

fallston

has

no

freaking

idea

what's

going

on

and

then

you

know,

different

JVMs

is:

does

it

openjdk

viable?

A

Yet

it's

not,

but

it's

worth

trying

every

few

months

just

to

see

because

they

are

advancing

pretty

quickly

and

then

I

do

I

test

all

these

things

in

production.

None

of

the

things

I'm

running

production

today

started

out

in

the

lab.

All

of

them

started

on

a

production

cluster

and

here's.

How

I

do

it

so

Cassandra

actually

is

perfect

for

this

kind

of

crazy

stuff

right.

So

you

have

a

new

kernel.

You

want

to

try,

say:

33

11

comes

out

and

it's

got

some

really

cool

optimizations

for

memory

memory,

bandwidth.

A

What

you

do

is

you

just

take

advantage

of

the

ring,

so

you

have

you,

have

your

ZFS

filesystem

between