►

Description

Speaker: Patrick McFadin, Chief Evangelist for Apache Cassandra at DataStax

Cassandra is a highly performant database, but are you getting most bang for your buck? There are a handful of patterns and anti-patterns you should know when looking for top performance in your application. We’ll cover topics such as a proper data model, driver selection and access patterns. You should also know what can destroy performance just as quick, so a tour of common anti-patterns is on the agenda. Put these together if you feel the need, the need for Cassandra speed.

A

A

That's

yeah

and

it

was

actually

just

us.

No

last

year

we

were

at

the

barbicon,

which

is

a

cool

venue,

but

it

was

a

little

smaller

and

it

was

a

nice

intimate

feel,

but

man.

This

is

really

cool,

because

I

think

there's

a

lot

of

good

energy

here.

A

lot

of

cool

talks,

one

of

the

things

I

I

hate

about

doing

these

type

of

events-

is

that

I

don't

get

to

go,

see

all

the

talks

because

I'm

usually

busy

doing

other

stuff,

but

the

good

thing

is:

is

we

record

them?

A

A

A

So

applications

that'll

look

funny

on

2x,

so

I'm

gonna

do

a

little

different

talk.

Yesterday

I

did

eight

hours

of

data

modeling.

This

is

not

a

data

modeling

talk.

This

is

a

different

type

of

talk

and

when

I

say

hit

the

turbo

button,

it's

kind

of

a

I'm

being

cute,

but

what

I'm

trying

to

hopefully

relay

to

you

is

some

of

my

experience

as

being

a

consultant

in

in

cassandra

with

cassandra

and

working

with

teams

on

making

their

life

a

little

better.

A

So

I

don't

know

if

I'm

my

name

is

patrick

mcfadden.

If

you

didn't

know

that

and

I'm

the

chief

evangelist

for

apache

cassandra,

which

is

a

really

killer

job,

because

I

get

to

go

around

talk

to

people

like

yourself

find

out

what

you're

doing-

and

it's

really

I

mean

it's

like

my

dream

job,

because

I

I

got

to

talk

about

cassandra

all

the

time

I

got

to

do

applications.

A

So,

let's

start

out

with

here.

2014

was

a

awesome

year

for

drivers.

You

know

when

we

started

out

this

year,

because

the

data

stacks

had

three

formalized

drivers

for

cql.

There

was

java,

c-sharp

and

python,

and

those

were

those

are

great

drivers

and

well

c

sharp

had

a

lot

of

trouble,

but

we'll

fix

that

and

those

are

good

core

drivers.

But

since

then,

we've

added

you

know

node.js

and

we've

added

ruby

driver.

A

This

is

from

datastax

and

so

and

now

we

have

c

plus

plus,

which

is

currently

in

beta

and

from

what

I

understand

is

coming

around

pretty

quickly.

It

is

a

really

fast

driver

and

it's

good

because,

with

a

c

plus

plus

driver,

it's

going

to

open

up

a

lot

of

things

like

php,

an

accelerated

python

driver

experience.

A

A

I

work

with

our

driver

team

at

data

stacks

quite

often,

and

it's

just

a

really

good

team

of

people

and

they're

all

application

engineers.

They

get

it

passionate

and

they're

moving

fast

with

these

things,

and

this

isn't

just

a

datastax

effort.

You

know

this

is

one

of

the

things

that

datastax

as

a

company.

A

So,

for

instance,

the

the

ruby

driver

came

from

cqlrb,

which

was

in

community,

and

we

worked

with

theo

on

that

and

there

was

a

lot

of

collaboration

in

community

and

all

of

these

drivers

are

open

source

right,

so

you

can

go

out

and

download

them

and

use

them

and

contribute

to

them

and

do

pull

requests.

And

that's

I

see

that

all

the

time

there's

also

some

great

community

drivers

out

there

closure

there's

actually

two

really

good

ones.

A

It's

kind

of

hard,

because

when

you

have

two

drivers

that

are

really

good,

which

one

you're

going

to

use

and

there's

a

go

driver,

erlang

driver,

there's

some

there's

rust.

I've

seen

some

rust

drivers,

some

scala

stuff

going

on.

So

those

are

the

ones

that

are

more

formalized.

The

closure

go

in

erlang

using

erlang

with

cassandra

is

kind

of

a

funny

thing,

but

anyway,

so

why

drivers,

because

the

drivers,

the

driver,

support

for

cql,

opens

up

a

lot

of

possibilities

right

and

when

you're

using

cassandra.

A

It's

an

application

database

right

you're

supposed

to

write

your

your

application

is

an

endpoint

to

store

data.

So

most

times

developers

are

using

cassandra.

It's

not

just

a

dbas

type

database

and

it's

not

bi

people.

You

know

people

who

work

in

business

intelligence,

it's

application

database,

so

these

apis

and

these

drivers

are

really

important.

They

have

to

be

rock

solid

and

they

have

to

be

fast

and

so

and

they

have

to

be

feature

rich.

A

They

have

to

express

what

is

in

cassandra

really

well.

So

I'm

just

going

to

say

this.

You

probably

saw

this

slide

this

morning,

but

cassandra

performance,

that's

not

a

big

problem

right

now.

You

know

I

mean

it's

awesome

that

we're

getting

faster,

but

cassandra

performance

is

it's

fast.

It's

a

fast

database.

It's

meant

to

be

a

really

fast

database,

the

performance

we

saw

this

morning.

A

You

know

with

the

new

2.1

cassandra

it's

pretty

fast.

I

mean

you

should

not

have

a

lot

of

performance

issues.

If

you

do,

you

probably

created

them

yourself.

So

why

do

I

say

that?

Because

I

do

a

lot

of

consulting

so

if,

if

cassandra

is

fast

enough,

if

we

can

get

the

job

done,

you

know

just

as

a

database.

It

has

all

the

right

things.

A

Then

here's

the

problem,

I

see

all

the

time

right

is

you

have

the

ability

as

a

developer,

to

do

the

wrong

thing

or

the

right

thing

and

it's

not

like

you're

a

bad

programmer.

Sometimes

I'll

talk

to

teams

I'll,

be

like

hey.

Why

don't

you

do

this

they're,

like

I've,

never

heard

of

that?

Oh,

I

should

do

a

talk

on

that.

A

So

I'm

doing

a

talk

on

that,

but

you

know

you

don't

want

to

be

in

this

situation.

Where

you

got

this

killer

database,

it's

going

to

kick

ass

and

then

you,

you

know

spoon

feed

it

or

you

do

something:

that's

not

going

to

make

it

as

performant

as

possible.

You

know

you

want

to

just

do

this,

so

you

can

do

some

simple

stuff

use

the

apis

correctly.

Exp

and

those

apis

are

built

around.

What

cassandra

can

do

and

actually

help

exercise

some

of

the

better

features

of

cassandra.

A

So

it's

a

it's

really

a

complete

system.

If

you're

looking

at

a

system,

cassandra

is

not

only

just

a

server,

but

it's

also

the

clients.

The

clients

participate

pretty

heavily

in

what

the

cluster

is

doing.

So

it's

how

you

use

it.

So,

let's,

let's

start

out

with

a

couple

of

really

easy

ones,

and

I'm

going

to

start

with

this

prepared

statements,

simple

beauty

right

and

I've

always

amazed

how

many

people

never

use

prepared

statements

and

the

question

I

always

get

is:

what's

the

difference,

there's

a

huge

difference.

So

what

are

they?

A

Let

me

explain

how

a

prepared

statement

works.

So

a

prepared

statement

is

all

about

these.

This

progression

in

your

code,

now

I'm

going

to

use

some

general

generalizations

cql

drivers

should

all

have

a

prepare,

some

sort

of

a

prepare.

But

how

does

this

work

so

when

a

client

says

hey

prepare

this

statement,

it

does

a

session.prepare.

A

That

statement

will

have.

You

know

here's

a

select

statement

and

it

has

a

question

mark

for

the

variables

that

you're

going

to

bind

later.

So

the

prepare

is

all

about

here's,

a

statement

and

I'm

going

to

use

this

statement

over

and

over

again

and

bind

the

variables

at

runtime

when

I

need

to.

This

is

exactly

like

what

relational

databases

have

done

for

a

long

time

with

jdbc

and

those

type

of

drivers.

A

So

we

have

a

prepare

statement

so

when

it

does

a

prepare,

it

actually

goes

out

to

the

it

goes

and

takes

to

the

cluster,

and

it

says:

okay,

entire

cluster-

we're

now

doing

this

preparation.

So

what

does

that

mean?

So

it

parses

and

hashes

that

statement.

So

it's

pre-parsing,

the

parsing

action

is,

is

a

string,

manipulation

and,

as

we

know,

string

manipulation

is

the

fastest

thing

you

can

do

in

java.

A

Not

so

when

we

pre-parse

that

we

parse

that

that

that

statement

and

then

it's

hashed,

it's

just

an

md5

hash

and

stored,

so

that

pre-parse

statement

is

hashed

through

an

md5

and

then

once

it's

it

says.

Okay,

now

we

have

a

prepared

statement

and

the

hash

goes

into

back

to

the

client

says:

here's

the

hash

and

then

it's

cached

as

well

on

every

one

of

the

servers.

A

A

It

seems

like

a

pretty

simple

thing

that

you

is

it

too

much

optimization,

but

there's

a

lot

you're

missing

there,

so

that

you're

skipping

a

whole

bunch

of

code.

When

you

do

this,

it's

a

shortcut

and

especially

on

something

most

likely

you're

going

to

run

over

and

over.

So

they

combine

the

to

the

pre-parse

query.

A

So

the

non-prepared

statements

down

here

at

5,

000

or

500

inserts

per

second

and

then

up

here

the

throughput

went

to

2500

ish

or

more.

So

that's

a

5x

improvement

on

throughput

just

using

a

prepared

statement,

and

this

is

the

type

of

thing

that

you

would

prepare.

You

do

a

prepared

statement

early

on

so

I'll

show

you

some

code.

A

You

do

a

prepared

statement

early

on

like

this,

and

you

say:

here's

my

java

code,

for

instance,

or

python.

If

you

notice

they're

pretty

similar

or

you

create

a

prepared

statement,

a

bound

statement

takes

that

and

then,

when

you

execute

it,

you

bind

the

variable

to

it

and

it's

both

python

and

java

and

node.js

and

c-sharp.

I

mean

all

of

all

the

language

drivers

do

this

closure

and

when

you

do

this,

this

is

this

is

free

speed.

A

A

And

I've

seen

this

done

and

it's

it's

like

okay,

great

feature

use

bad

placement,

you

know

just:

can

we

just

copy

that

or

cut

that

one

piece

and

move

it

up

a

bit,

because

what

happens

here

is

you're,

just

gonna

say:

prepare,

prepare,

prepare,

prepare,

prepare

over

and

over

again

and

a

prepare.

What

a

preparer

does.

Is

it

spreads

out

that

all

of

that

to

the

cluster?

A

Now

that's

just

gonna

hammer

the

hell

out

of

your

servers

and

you

get

zero

benefit

from

it.

It's

just

gonna

be

like

you

might

as

well

just

use

session,

execute

and

leave

it

at

that.

So

it's

an

anti-pattern

for

sure-

and

this

is

like

one

of

those

you

know

when

you're

trying

to

solve

a

performance

problem-

and

you

see

this-

you

get

real

excited

because

it's

going

to

be

a

short

day.

A

A

A

Now,

if

we're

in

the

middle

of

a

skirmish,

I

want

that

one,

and

this

is

a

this-

is

my

cute

analogy

that

shows

the

difference.

When

you

do

an

execute,

it's

a

single

it's

just

boom,

I'm

going

to

send

one

thing

out

when

I

do

is

execute

async.

I

get

the

advantage

of

being

able

to

do

things

in

parallel

and

take

advantage

of

some

things

that

cassandra

already

gave

me

like.

My

data

is

distributed

across

multiple

servers,

that's

cool!

If

I

have

a

500

node

cluster,

I

would

love

to

spread

out

the

load.

A

I

want

to

run

things

in

massive

parallel

and

this

is

all

brought

to

us

by

the

magic

of

nettie,

and

this

is

what

I'm

a

good

representation

of.

What's

going

on.

So

when

you

do

request

pipelining,

you

have

this

one

connection

to

from

the

client

to

the

server

and

each

one

of

the

servers

each

the

client

connects

to

each

one

of

the

servers

in

the

cluster.

So

whenever

I

send

requests

over

when

I

use

async,

it

will

send

the

request

over

and

then

the

responses

get

received.

A

A

Now

that

sounds

legit,

but

in

a

case

where

I'm

gonna

be

doing

a

lot

of

inserts,

for

instance,

or

something

like

that,

then

I

wanna

do

something

where

I'm

gonna.

I'm

gonna

run

a

lot

of

statements,

hopefully

at

the

same

time

and

let

them

run

on

each

individual

node,

that's

gonna

be

collecting

that

and

one

of

the

important

things

about

this

is

that

you're

collecting

a

future.

A

So

here's

some

sample-ish

code.

It's

I

try

not

to

get

too

language

specific,

because

this

is

a

feature

that's

on

every

single

driver

and

actually,

if

you

use

node.js,

you're

already

pretty

smug,

because

there's

no

such

thing

as

a

non-asynchronous

call

right,

because

that's

the

way

node

people

work,

everything's

asynchronous.

A

So

those

fan

out

in

parallel.

So

as

I'm

inserting

things

they're

going

off

in

a

parallel

manner

and

that's

awesome

because

I'm

no,

my

code

is

not

blocking,

so

I'm

in

the

middle

of

doing

something

here.

So

I

I

send

those

out

whenever

I

run

the

future.get,

that's

when

it

blocks

and

not

until

then,

and

if

you're

really

good

at

concurrent

code,

which

I'm

sure

everybody

here

is,

then

you

can

figure

out

some

ways

to

use

like

mutexes

and

things

like

that

to

gather

that

gather

those

almost

in

a

parallel

fashion.

A

I

have

some

code

that

I

wrote

that

I

got

a

lot

of

help

with

because

I

suck

at

concurrent

and

it

when

it

sends

out

those

asynchronous

requests.

Basically,

my

slo,

the

slowest

all

of

it

will

be,

will

be

the

one

slowest

response.

So

everything

comes

back

pretty

much

at

the

same

time

and

I

collect

them

all

using

a

mutex

you

can.

A

A

A

Going

back

to

my

analogies

batch

is

like

a

battering

ram

right

and

versus

you're

gonna

get

now

a

battering

ram

sounds

awesome

if

you're

taking

down

a

castle,

it's

horrible

whenever

you're

writing

database

code,

because

why

am

I

calling

it

a

battering

ram?

Let's

get

into

that?

Well,

it's

potentially

a

pattern.

A

A

A

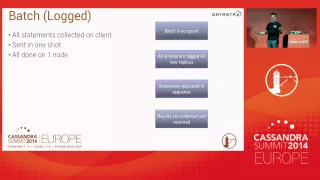

The

results

are

then

collected

by

the

one

server

on

your

in

your

whole

cluster.

You

got

a

huge

cluster.

One

server

is

now

collecting

all

those

responses,

and

then

it

has

to

go

back

to

the

client

now

why

you

know

that

that's

how

it

works.

So

the

good

news

is

batches.

Have

a

good

point.

I

mean

I'm

not

going

to

just

say:

you're

telling

me

batches

are

bad.

The

reason

batches

are

good

are

for

what

they're

used

for.

A

For

that

reason,

I

just

told

you

so

here's

a

use

case,

so

I

have

two

lookup

tables

that

basically

store

the

same

information,

but

in

a

different

way.

So

I

those

are

two

things

that

will

have

to

be

updated

at

the

same

time,

if

these

become

out

of

sync,

my

application

code

is

going

to

suck

because

I'm

going

to

have

to

figure

out

how

to

make

these

work

right.

So

I

have

a

comments

by

video

and

comments

by

user.

A

So

if

I

ever

add

a

comment

to

my

to

this

table

to

my

comments

table,

I

would

have

to

update

both

of

these.

At

the

same

time,

now,

what's

my

option

going

into

application

code

and

managing

that

situation

in

application

code,

so

that

kind

of

sucks?

So

I

would

rather

use

a

batch

to

do

this,

so

both

inserts

are

run.

A

A

A

I

have

with

someone-

and

I

have

this

about

once

a

week

so

hey

I

was

doing

a

load

test,

my

nose,

blinked

out-

and

I

say

well

we're

using

a

batch

by

any

chance,

because

I

hear

this

all

the

time

and

by

that

they're

like

one

node

just

dropped

out

and

then

it

picked

up

and

then

another

dome

dropped

out

and

it

picked

up

and

like

oh

cassandra,

sucks

and

then

so.

If

I,

if

I

just

I

use

my

magic

ball

and

I

say,

are

you

using

a

batch

and

they're

like

whoa.

A

A

And

it's

typical,

where

you

have

these

massive

batches

and

you're

fire

up

your

load

testing

and

you

just

start

pounding

the

hell

out

of

your

poor

cluster

with

the

wrong

tool,

a

battering

ram.

So

how

does

it

work

well?

So

if

the

client

decides

to

I've

seen

this

people

use

this

as

a

potential

optimization

where

it's

like:

hey,

I'm

getting

a

bunch

of

sensor

input

and

I'm

gonna,

I'm

gonna

put

them

all

into

a

single

batch

and

just

send

them

down.

That's

more

efficient

right.

Yes,

it's

absolutely

more

efficient.

A

If

you're

using

oracle,

not

cassandra,

I

was

I

used

batches

all

the

time

when

I

was

an

oracle

dba

because

it

was

on

a

single

server

and

it

actually

pipelined

a

lot

of

the

requests.

It

was

a

very

efficient

way

of

sending

a

lot

of

information

down.

It

did

one

use

one

connection

right

well,

because

let's

go

back

to

using

we're

using

nete.

A

That

means

there's

only

one

connection:

we're

not

creating

a

whole

bunch

of

connections

for

this,

but

people

will

put

developers

will

put

a

bunch

of

mutations

a

bunch

of

inserts

into

one

batch

they're

like

hey

one

server,

here's

a

thousand

things

to

do

that

thousand

things

to

do

have

to

get

done

here.

I'm

just

going

to

throw

more

at

you!

Good

luck!

A

Then

I

get

that

message

dude,

my

cluster

sucks

cassandra

sucks,

that's

the

problem

and

if

you

look

at

what

the

problem

is

usually

like

a

gc,

it

just

starts

garbage

collecting

like

crazy,

because

all

that

stuff

is

sitting

in

newgen

and

it

gets

promoted

into

old

gen

and

the

cms

starts

firing

up

and

cpu

gets

eaten

up

and

then

discs,

and

then

the

dogs

and

cats

are

living

together

and

it's

just

really

ugly

and

there's

a

mess

and

you

get

this.

So

what

do

you

do

so

follow

the

rules?

A

A

So

there

is

ac.

This

is

actually

a

jira

that

is

now

in

production

or

in

cassandra

mainline.

I

created

this

so

now,

there's

a

warning

on

large

batches

of

five

kilobytes

in

size,

not

how

many,

how

many

statements,

but

how

big

it

is,

because

that's

usually

the

problem.

So

there's

a

warning.

You

look

in

the

log,

it

says:

hey

dude,

that

batch

is

pretty

big.

What

are

you

doing

so

that

was

in

there

for

a

while,

and

I

kept

having

the

same

conversations

and

I'm

like

didn't

you

see

that

log

entry?

A

A

It

will

fail

and

why,

because

I

love

you

man,

I

don't

want

you

to

ruin

your

cluster,

so

this

is

gonna

prevent

this

is

this

is

like

the

safety

interlock,

and

so

this

should

be

a

guide.

If

this,

this

is

good

enough

to

be

put

into

code,

it

probably

needs

to

be

thought

of

well

before

it

gets

to

the

point

where

it

fails.

A

batch

you'll

just

throw

an

exception

and

say

too

large

of

a

batch

dude.

A

A

Do

this

use

a

execute,

async

and

just

send

those

out

in

parallel

and

that's

and

what

you're

doing

now

is

your.

Those

requests

will

then

go

to

each

one

of

those

servers

individually.

Instead

of

going

to

the

one

server

and

nuking

it

all

right

and

it's

more

distributed.

Actually

it

is

distributed

where

the

other

one

is

not.

So

this

kind

of

thing

is

what

you

want

to

do:

there's

actually

a

nice

blog

post,

one

of

our

solution,

architects,

that

datastax

wrote

it's

really

funny.

He

he's

a

consultant.

A

A

The

last

thing

I'm

going

to

talk

about

is

this

caching

mechanism

that

is

now

part

of

cassandra

2.1,

and

so

this

is

a

future

tense

thing,

so

old

cash,

if

you

would,

if

you

had

been

looked

at

row,

cash

at

any

point

before

2.1,

which

is

probably

everybody

that

had

used

it,

you

kind

of

got

that

warning

like

row:

cash,

isn't

quite

what

you

want

it

to

be:

it's

not.

It

doesn't

work

as

well

in

a

high

volume

load,

and

why

is

that?

A

A

So

the

new

row

cache

is

much

different

and

it's

more

thought

out

in

the

way

that

you

and

I

would

probably

want

to

do

it.

So

I

need

this

so

how

about

just

caching

this

and

that's

what

it

does

it

caches

the

cql

rows.

So

when

you

use

it

like

this,

so

I

have

a

table

here

I

can

say

and

caching

rows

per

partition

20..

So

it's

only

going

to

cash

20

rows

per

partition.

A

What

does

that

do

for

you

in

the

long

run?

Is

that

it

it

probably

like

if

you

have

hot

rows

and

you're

participation

in

a

particular

table,

it's

just

going

to

cache

those

and

that's

good.

You

can

tune

this.

You

can

say

five

rows

per

partition,

100

rows

per

partition.

It's

much

more

efficient!

Now,

keep

in

mind

it's

a

right

through

cache.

So

if

you

change

something

in

that

20

that

first

20

rows

of

partition,

it

will

just

invalidate

it

and

re-read

it

on

later

on.

But

what

do

you

get

out

of

this?

A

Cassandra

has

to

read

from

a

disc

and

if

you're

looking

for

low

latency

reads

and

you

put

a

7200

rpm

disc,

underneath

it

don't

come

after

me,

please

dude.

It

must

be

jvm

tuning.

No,

it's

rotational

physics,

18

milliseconds,

12

milliseconds,

that's

the

kind

of

seeks

you're

going

to

get.

We

did

a

bunch

of

load

tests

on

on

hard

drives.

This

is

the

kind

of

stuff

we

got.

We

know

that.

A

So

what

do

you

use

to

fix

that

you

use

low

latency

drives

like

this

top

one

is

a

samsung

840

and

the

bottom

one

here,

that's

flash

pci,

that's

a

fusion!

I

o

drive

that's

70

micro

seconds

now.

What

do

you

think

that

does

for

your

latency

on

your

reads?

Oh

it's

great

and

your

your

p99s

and

p95s

are

going

to

be

pretty

good.

A

So

this

is

my

annual

rant.

I

do

this

once

a

year

right

now.

I

do

it

every

time,

but

just

think

about

this.

This

is

I've.

Given

you

a

bunch

of

stuff

here

to

to

look

at

cheap,

easy.

These

are

things

you

could

probably

flip

up

in

your

laptop

right

now.

Go

look

at

the

code.

You

wrote

for

cassandra

and

find

some

stuff.

You

could

do

these

little

things

and

get

a

lot

more

performance

out

of

this.

A

A

The

session

object

stores

the

the

hash

for

the

prepared.

So,

yes,

you

would

have

to

do

inside.

If

you

had

separate

drivers

going

every

session

object

you

create.

You

have

to

do

a

prepare

all

right,

that

that

could

seem

a

little

inefficient.

But

if

you

only

had

four

drivers

say

you

did

it

four

times

then

you're

done

the

rest

of

your

code.

Running

will

be

fine,

but

it

is

tied

to

that

session

object.

So

if

you

close

the

session

object,

then

you

have

to

re-prepare

your

your

statement.

Yeah.

A

I

don't

know

if

there's

much

of

a

hit

on

performance

there,

because

it's

if

you

look

at

the

the

long

like

the

in

total

budget

of

time

that

it

takes

for

requests

to

respond,

I

mean

how

long

does

it

take

in

the

difference?

You

know

finding

something

in

an

array

microseconds,

so

yeah.

It

may

be

a

few

microseconds

different,

but

in

the

big

scheme

it

probably

isn't

a

big

difference

at

all

yeah.

A

A

So

the

first

question

was

why

the

size,

and

not

the

number

of

statements,

because

what

it

comes

down

to

is

the

size,

is

what's

going

to

cause

an

impact

on

the

server.

That's

going

to

that's

going

to

drive

the

resources

contentions

and

that

issues

it

was

the

easiest

thing

to

look

at,

so

you

could

have

a

thousand

mutations

of

very

small

amounts

or

five

of

huge

amounts

of

data

right.

A

It's

too

arbitrary

to

know

exactly

what

it

is.

But

then

I

what

I

looked

at

for

the

root

cause

of

the

problem

is

that

you're

putting

a

lot

of

large

or

you're

putting

a

lot

of

bytes

through

the

jvm

and

5k

after

I

did.

The

math

5k

was

where

I

started

seeing

some

problems

if

you're

hitting

it

really

hard,

it

doesn't

seem

like

a

lot,

but

it

adds

up

when

you're

doing

thousands

of

those

a

second,

and

so

it

was

strictly

based

on

how

it

manages

the

resources.

A

It's

just

not

a

good

idea.

The

second

question

I

think,

you're

asking

about

like

doing

a

batch

on

a

single

partition.

Okay,

that's

not

so

bad.

A

single

partition

means

that

you're

only

going

to

one

server

the

times

that

I've

seen

people

do

that

on

a

single

partition

is

pretty

small.

Now,

why

would

that

make

us?

Why

would

that

be

a

difference?

Well,

a

batch

on

a

single

partition.

A

A

Yeah

there's

I've.

I've

discussed

this

with

driver

drivers

and

they're

hesitant

to

try

to

guess

user

intent

in

api,

and

I

I

I've

been

argued

out

of

that

before,

because

I

even

in

statement

is

another

one

right

in

statements

should

be

run

in

parallel,

but

it

isn't

and

again

the

api

is

very

clear,

so

I'm

out

of

time

all

right.

So

that

was

the

last

question.

So

both

of

those

are

just

really.

You

don't

want

to

assume

user

intent

right

and

I

I

guess

from

the

purity

of

it.