►

From YouTube: Developing Applications with Apache Cassandra

Description

Christian Carollo is the director of cloud and alternative platform development at GameFly. He is focused on availability, reliability and scalabilty in cloud computing and how mobile, tablet and other non-traditional platforms can leverage cloud-based services.

Previously Christian has worked at Fandango as the Director of Data Systems and at several other internet companies over the last 15 years.

Follow him on twitter at @supernaut.

A

Start

off

with

a

quick

little

overview

as

to

you

know

what

game

fly

is

in

case.

Some

of

you

don't

know

a

little

bit

about

what

I

do

there

and

sort

of

how

that's

evolved

over

time

and

how

that's

led

to

us

actually

using

cassandra.

Gamefly,

specifically,

we

actually

built

something:

that's

social

in

nature,

using

cassandra

and

then

I'll

dig

deeper

into

cassandra

and

kind

of

give

a

broad

overview

of

the

features

that

were

appealing

to

us

and.

A

A

A

Specifically,

we

have

focused

in

the

past

on

the

console

systems

and

then

later

on,

the

portable

systems

and

we've

moved

out

into

most

recently

into

digital

downloads

for

pcs

and

now

we're

starting

to

look

at

mobile

platforms,

phones,

ios,

android

tablets,

that

sort

of

thing

trying

to

figure

out

where

we

can

either

build

out

a

rental

business

or

a

sell

game

business

in

that

space.

And

then

we

have

a

couple

other

properties.

A

You

may

have

heard

of

shaq

news

and

moby

games

and

they're

sort

of

our

content

arms

and

we

use

those

to

sort

of

fill

out

what

we

can

provide

around

the

gaming

experience.

You

know

cheat

codes,

other

bits

of

information

about

gaming

screenshots.

That

sort

of

thing

just

to

kind

of

give

you

a

greater

feel

for

what

a

product

the

game.

A

A

At

fandango

I

was

the

director

of

data

systems

there,

basically

working

on

making

sure

that

we

could

sell

movie

tickets

on

a

friday

when

spiderman

or

batman

or

whatever

big

comic

book

movie

came

out,

and

I

was

looking

for

something

new

and

I

actually

done

the

fandango

iphone

app

initially

right

when

it

came

out

in

2008,

and

I

was

really

looking

to

move

in

that

direction.

Unfortunately,

it

didn't

work

out

for

me

at

fandango

and

I

found

game

flying.

A

They

didn't

have

anything

having

to

do

with

mobile,

obviously

tablets

weren't

around

back

then,

so

we

sort

of

got

together.

One

thing

led

to

another,

and

so

that

was

the

start

of

what

what

we

do

today

initially,

then

there

was

nobody

in

that

group

and

now

there's

10

people

that

do

mobile

development,

whether

it's

phones

or

tablets

or

even

website

development,

specifically

tailored

to

mobile

browsers.

A

So

when

we

were

kind

of

looking

at

what

we

could

do,

we

were

looking

at

this

mobile

space

and

we

were

thinking

well,

you

know,

gamefly

is

whatever

it

is

today.

Well,

maybe

we

can

reimagine

it.

Maybe

we

can

interface

with

new

customers

using

phones

and

eventually

tablets,

and

you

know

we

have

looked

at

televisions

and

tried

to

figure

out

ways.

A

A

We

were

specifically

talking

about

working

with

just

the

gamer

community,

so

you

know

the

next

question

really

was:

okay,

we're

going

to

do

social.

We

know

what

it's

going

to

be.

We

want

it

to

be

the

social

stream

where

information

is

coming

in

pretty

much

all

the

time

that

gamers

can

communicate

with

each

other

about

anything

it

could

be.

A

You

know

whatever

they

would

normally

say

on

twitter,

or

it

could

be

about

a

news

article

that

we

had

on

chat

news

or

it

could

be

about

a

video

game

that

they're

excited

about

that's

coming

around

the

corner

or

be

about

a

trailer

that

they

hate

about

a

video.

It

could

be

a

contextual

or

it

could

be

just

generic.

We

kind

of

knew.

That

was

what

we

wanted

to

mold

our

social

stream

around.

A

So

you

know

we

looked

around

based

on

our

prior

experiences.

Doing

other

other

products

at

other

properties,

you

know

the

fandangos

of

the

world

as

an

example,

we

we

knew.

There

were

a

couple

things

that

might

run

that

we

might

run

into

that

might

be

problems,

and

that

was

how

do

you

scale

systems

like

that?

How

do

you

do

things

of

that

nature

when

we

hadn't

done

those

in

the

past,

because

gamefly

was

a

retail

business,

it

was

a

commerce

business.

It

didn't

have

the

same

constraints

that

a

social

platform

might

have.

A

Even

the

closest

thing

we

had

was

something

called

shaq

news

that

had

a

forum

type

system,

but

even

then

it

was

more

long

form,

not

a

lot

of

back

and

forth

sort

of

more

like

a

chat

system

for

lack

of

a

better

way

of

thinking

about

it.

So

we

looked

at

facebook

and

twitter

and

linkedin

read

it

and

some

others

myspace,

and

we

we

tried

to

understand

how

they

built

their

systems.

A

What

were

the

things

that

were

pain,

points

for

them

and

what

were

the

things

that

they

did

right

or

that

they

learned

the

hard

way

and

we

hopefully

wouldn't

have

to

learn

and

the

one

big

takeaway

was

massive

data.

Massive

usage

leads

to

sort

of

massive

scale

problems,

and

so

we

looked

at

how

they

scale.

A

Actually,

since

moved

on,

they

bought

a

couple

of

companies

that

were

hbase

experts

and

sort

of

pushed

the

sounder

out

over

time,

and

now

they

do

have

it

doesn't

mean

that

cassandra

isn't

necessarily

a

good

product.

It

just

means

that

they

have

expertise

in

a

different

area.

So

anyway,

how

do

they

scale?

A

E

A

A

We

knew

that

for

scaling

social.

There

were

a

couple

things

we

cared

about.

We

wanted

to

be

nimble,

fast,

flexible,

scalable

and

available,

and

so

what

did

those

things

mean

to

us?

Well,

since

we

didn't

really

know

what

to

expect

we,

what

nimble

meant

to

us

was

we

needed

to

be

able

to

get

into

the

code

potentially

in

real

time,

make

a

change

if

need

be,

deploy

that

change

not

have

to

bring

down

all

the

infrastructure

along

the

way

we

knew.

A

A

B

C

A

But

for

us

that

meant

that

if

we're

going

to

do

upgrades

to

the

software,

whether

it's

the

database

or

you

know

the

operating

system

or

what

have

you

we

could

do

something

so

that

we

could

always

have

like

rolling

upgrades

would

be

sort

of

the

preferred

strategy.

So

we're

never

down

we're,

never

losing

data.

Things

are

always

working.

A

Social

doesn't

necessarily

mean

how

the

reddits

and

the

twitters

and

the

facebooks

looked

at

it,

but

not

knowing

how

they

did

it.

That's

what

we

were

looking

to

do

so,

the

philosophy

from

a

software

perspective

for

us

and

a

hardware

perspective

was

we

needed

to

always

be

able

to

add

that

was

add

or

subtract.

We

never

really

thought

too

much

about

subtracting,

but

you

know

we

wanted

that

flexibility.

We

wanted

to

be

able

to

say,

if

need

be,

we

could

always

move

forward

and

without

a

lot

of

pain.

B

A

How

stable

that

piece

of

hardware

is

going

to

be,

and

then

you

take

it

down

your

data

center

and

oh

wait:

it's

2

am

and

you

need

to

call

the

it

guy

and

get

them

down

there,

and

so

that

was

a

challenge

thinking

about

the

hardware

piece.

That

was

a

challenge,

but

then,

if

let's

say

we

could

solve

the

hardware

challenge,

then

we

had

the

software

challenge,

which

is

all

the

pieces

I

just

mentioned.

How

do

we

do

that?

A

Where

do

you

get

hardware

on

demand

well

up

until

recently,

that

wasn't

really

feasible,

but

things

have

changed,

and

so

now

the

you

know

whether

or

not

you

were

going

the

route

of

procuring

hardware

from

a

dell

or

an

hp

and

waiting

for

five

six

weeks

and

then

hoping

and

praying

that

it

can

get

into

the

data

center

rack

and

all

that

in

a

short

period

of

time

or

you

could

use

this

thing

called

the

cloud

potentially,

and

so

you

know

that's

where

we

started

kind

of.

We

had

no

experience

in

the

cloud.

A

We

had

no

idea

who

to

use-

or

you

know

how

successful

it

was.

So

we

we

started

looking

into

that.

Another

architectural

challenge

for

us

was

okay.

If

we

want

to

be

nimble

and

flexible,

the

first

two

tenants

that

we

described

earlier.

How

do

we

do

that

in

the

software

here?

Is

it

easy

for

us

to

make

a

change

and

okay

we're

deploying

a

dll?

A

You've

got

to

stop

iis

or

you

know,

you're,

maybe

putting

up

a

jar,

and

you

got

to

do

some

other

stuff

where

you

stop

and

start

something

else,

and

you

got

to

compile

it

and

you

can't

do

it

right

on

the

server

potentially

or

if

you

do.

Maybe

it

seizes

something

while

the

server

is

doing

that,

so

we

were

looking

at

statically

typed

languages

versus

dynamically

typed

languages

and

trying

to

see

what

was

the

most

flexible

and

sort

of

fit

our

needs,

and

so

that

was

the

second

challenge

and

then

the

third

challenge

was

okay.

A

So

these

database

systems,

how

do

you

get

them

to

scale

whether

or

not

it's

a

relational

database

or

a

cassandra

database

or

a

database

or

oracle?

How

do

you

get

them

to

scale,

and

hopefully

it

doesn't

cost

a

lot

of

money

along

the

way

and

then,

lastly,

we

looking

at

scaling,

we

wanted

to

make

sure

that

we

weren't,

maybe

every

couple

of

months

as

things

started,

to

grow

exponentially,

hopefully

going

back

and

re-architecting.

A

Just

that

we're

able

to

add

more

hardware

and

expand

the

software

across

that

hardware.

So

if

you

start

with

traditionally

you

might

start

with

one

database

server

and

then

let's

say

you

wanted

to

add

another

one.

If

you're

in

a

relational

world,

you

may

not

have

configured

your

schema

in

such

a

way

that

it

can

be

replicated

easily

so

now,

you've

got

to

go

refactor

the

schema

so

that

you

could

add

another

machine

so

that

you

could

take

on

more

traffic

and

more

load

into

your

infrastructure.

A

A

A

So

you

know

when

we're

talking

about

the

hardware

decisions

again,

it

was

about

procuring

hardware

from

a

vendor,

and

that

takes

time

and

then

you

get

it

shipped,

and

then

you

get

it

into

your

into

your

office

and

then

some

it

guy

has

to

become

available.

He

has

to

put

down

the

operating

system,

drive

it

down

to

the

data

center.

All

that

stuff

has

to

happen.

That

was

the

traditional

way

we

worked

at

gamefly.

We

knew

that

that

was

going

to

be

probably

impossible

due

to

resource

constraints

within

our

company.

A

A

We

needed

infrastructure

that

could

scale,

which

means

we

needed

to

be

able

to

get

these

things

at

any

time.

We

needed

to

be

able

to

get

small

ones,

big

ones

super

big

ones,

configured

in

different

ways

with

x

number

of

drives.

All

of

that

became

available

to

us.

As

soon

as

we

went

with

the

cloud

solution,

you

could

kind

of

design

it

on

the

fly

if

you

wanted

to,

we

needed

it

to

be

in

more

than

one

location.

A

Eventually,

we

haven't

actually

gotten

to

this,

but

we

like

the

idea

that

you

could

actually

scale

your

infrastructure

from

just

being

a

one

data

center

to

three

or

four

data

centers

and

then.

Lastly,

this

point

about

horizontally

or

vertically.

It

just

meant

that,

like

if

our

infrastructure

needed

more

cpus

inside

three

machines,

instead

of

going

to

30

machines,

we

had

that

option

just

as

well

as

we

had

the

option

to

add

more

hardware,

just

additively

buying

more

boxes,

so

the

flexibility

that

the

cloud

offered

really

catered

to,

what

we

needed

at

the

time.

A

A

We

also

wanted

the

software

to

be

as

flexible

as

this

hardware

solution.

We

found

we

wanted

to

make

sure

that

we

could

change

it

very

easily.

This

was

that

nimble

piece

I

mentioned

earlier

and

we

wanted,

as

new

people

came

on

board,

because

again

we

were

only

two

people.

We

wanted

to

be

able

to

say

if

we

documented

this

fairly

well,

hopefully,

you

could

come

in

and

take

that

software

that

we've

hopefully

documented

very,

very

well,

and

there

would

be

a

low

learning

curve

to

making

you

actually

productive

in

in

our

infrastructure.

A

A

We

realized

that

we're

going

to

go

with

a

dynamic

language,

one

that

you

could

change

pretty

much

on

the

fly

anytime

anywhere

inside

a

little

piece

of

you

know,

software

that's

running

on

the

third

server

or

the

fifth

server

within

your

web

tier

sort

of

thing.

We

knew

that

that

meant

that

we

were

probably

going

to

sacrifice

a

little

bit

of

performance,

but

we

were

willing

to

make

that

trade-off.

If

we

had

a

slightly

lower

performance

over

here,

we

might

add

another

server

at

a

later

date.

A

A

So

you

know

we

did

some

experiments

with

java,

but

we

kind

of

quickly

moved

away

from

that,

and

that

was

predominantly

the

only

language

we

looked

at

that

wasn't

a

dynamically

typed

language.

We

looked

at

ruby

and

python.

We

like

those

more

than

php,

because

at

the

time

we

were

really

playing

around

with

the

interactive

shelves.

I

found

out

later

that

there

was

a

php

shell

that

came

out

of

facebook

know

that

at

the

time,

so

we

were

really

playing

with

ruby.

A

G

A

But

it

was

like

poorly

documented,

and

there

was

one

guy

who

seemed

to

know

everything.

It

was

really

hard

to

understand.

So

we

ended

up

going

towards

python

and

then

specifically,

we

ended

up

using

something

similar

to

event

machine

called

tornado,

which

is

a

an

event

loop

web

server.

It

allows

you

to

do

asynchronous

communications

sort

of

like

a

threading

model,

but

you

don't

have

to

like

get

into

the

weeds

about

doing

threading.

A

A

So

we

decided

again

to

use

open

source,

and

so

we

took

sold

it

and

then

again

we

spent

some

time

looking

at

databases,

but

we

had

a

lot

of

experience

on

the

team

of

two

people

working

on

relational

systems

and

we

knew

some

of

the

the

cons

that

we

didn't

really

want

to

face.

And

so

we

were

really

looking

for

alternatives

to

relational

systems.

Things

that

could

have

scalability

sort

of

as

a

core

tenant

of

their

systems

didn't

mean,

obviously

that

there

probably

were

going

to

be

some

sacrifices

along

the

way.

A

A

I

had

been

following

cassandra

for

a

while,

because

I

knew

about

the

google

bigtable

and

amazon

dynamo

model

that

it

sort

of

leveraged

to

grow

out

and

to

build

off

of

so

it

was

pretty

much

sort

of

ingrained

in

me.

I

think

at

some

point

that

I

really

just

wanted

to

try

this

and

see

if

I

can

make

it

work,

and

so

that's

what

led

me

to

cassandra

so,

like

I

said

you

know,

we,

the

big

table

and

dynamo

white

papers

were

very

interesting.

A

They

accomplished

a

flexible

data

model

and

sort

of

the

horizontal

scalability.

They

each

did

these

one

thing

very

well

and

facebook

when

they

built

cassandra.

Just

basically

said:

let's

see

if

we

can

put

these

two

things

together.

That

really

appealed

to

me.

I

liked

having

the

flexibility

at

the

data

layer

and

I

like

having

the

flexibility

of

being

able

to

add

servers

and

and

bring

another

machine

up,

have

it

replicate

sort

of

distribute

the

data

that

it

had

on

two

machines?

A

A

A

I'm

not

doing

it.

I'm

bringing

the

machines

up,

I'm

saying

you

know

about

you

and

you

know

about

you

and

you

know

about

you

and

the

rest

happens

within

the

infrastructure

itself

within

the

cassandra

infrastructure

that

was

really

appealing

and

then

later

on,

they

introduced

the

ability

to

do

that

in

a

wide

area

network.

So

now

you

could

have

a

data

center,

in

los

angeles,

with

three

machines,

and

you

have

a

data

center

in

new

york,

the

three

machines,

and

that

same

thing

I

described

when

you

bring

up

the

three

machines

locally.

A

Could

happen

across

a

land,

there

will

obviously

be

the

speed

of

light

and

preventing

you

from

getting

your

copy

over

there

and,

however

much

time

that

takes

to

get

from

la

to

new

york,

but

your

data

will

get

there

and

it'll

happen

all

without

you

really

having

to

do

much,

which

again,

like

I

said,

if

you

need

to

have

that.

That

was

really

appealing

at

the

time

that

we

were

first

looking

at

cassandra.

It

was

a

cassandra

zero.

Six

now

they're

up

to

one

twos

in

beta,

it

had

very,

very

fast

rights.

A

Its

rights

were

actually,

I

think,

four

times

faster

than

its

reads,

which

is

not

traditionally

how

a

database

works,

which

just

seemed

amazing.

I

was

that

sort

of

just

drew

me

just

for

that

alone,

but

the

second

question

was:

why

are

your

reads

so

slow

and

they

couldn't

really

give

a

legitimate

answer

to

that.

It

was

more

because

the

rights

were

just

amazingly

fast

and

but

eventually

in

around

1.0

of

cassandra

they

actually

had.

B

A

It's

they

don't

really

think

of

it

as

asynchronous

and

synchronous.

It

has

to

do

with

what's

called

a

consistency

level,

and

everything

in

cassandra

is

what's

called

eventual

consistency,

so

it

doesn't

follow

the

traditional

acid

model,

so

if

you

say

we're

getting

a

little

off

and

I'll

touch

on

later,

but

I'm

happy

to

bring

it

up

now,

when

you

say

I

have

a

row

of

data

and

I

want

that

row

of

data

to

be

in

three

places

within

my

architecture.

A

A

There

won't

be

loss

because

of

the

way

it

writes

to

a

it

writes

to

in

memory

and

to

a

commit

log

in

an

append

only

manner

on

the

one

system

that

got

it

before

it

gives

you

a

response,

so

you'll

get

that

data

somewhere

and

then

the

eventual

consistency

part

will

happen

at

a

later

date

later

date.

You

know

less

than

one.

B

A

You

can

have

an

insane

amount

of

data

quite

frankly,

because

you

just

got

to

put

some

disks

behind

it,

and

you

just

got

to

make

sure

that

you

can

have

enough

servers

to

hold

all

the

data

that

you

want,

and

you

know,

depending

on

what

you're

actually

doing

with

the

data.

You

probably

won't,

have

it

all

in

memory,

but

you'll

be

able

to

have

access

to

it

pretty

quickly.

B

A

A

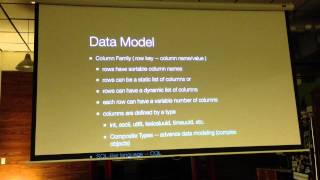

Columns

the

columns

are

defined

at

creation,

time

of

the

table

and

there's

they're

fixed.

Every

row

looks

the

same

if

it's

got,

10

columns

defined

every

row

has

10

columns

and

if

you

don't

populate

those

columns,

you

usually

put

double

data

or

empty

string

or

whatever

your

choice

is,

I

guess

so.

The

rough

equivalent

to

that

in

cassandra

is

a

column

found.

A

So

what

is

a

column?

Family?

Well,

a

column

family?

Is

it

has

a

primary

key?

It

has

a

row

lookup

id

just

like

a

traditional

database

does

and

then

it

has

basically

name

value

errors

that

make

up

these

rows

and

these

columns

these

you

know

the

the

ten

columns

that

we

talked

about

in

the

table.

You

can

have

those

same

ten

columns

in

the

cassandra

column,

family.

However,

let's

say

one

row

is

a

user

profile

row

and

it

has

a

username

and

a

password

and

a

couple

other

data

points.

A

Let's

say

the

second

row:

you

don't

have

a

username,

yet

it

doesn't

create

a

field

called

username

and

put

a

no

value

in

it.

Just

doesn't

exist,

so

you

end

up

with

one

row

that

might

have

ten

columns

and

one

row

that

might

have

two

columns.

So

you

get

you

know

variability

in

the

shape

of

the

data

that

you're

storing

inside

one

column,

data

type,

the

key,

the

rokin

it

can

be

variable.

You

can

define

it

in

advance.

So,

in

the

definition

of

a

column,

family,

there's

a

there's,

you

can

define

the

column

names.

A

This

is

jumping

ahead,

a

couple

of

steps,

but

you

can

also

define

the

row

key

as

being

a

certain

kind,

so

it

could

be

an

integer

type.

It

could

be

there's

something

called

the

time

uuid

which

is

sort

of

like

supposedly

globally

unique

value,

so

oftentimes

in

cassandra.

We

use

that

as

opposed

to

a

sequence,

or

what

does

it

involve?

There

is

no

default.

You

have

to

define.

C

A

Have

to

define

it

yeah,

you

can

also

do

composite

keys,

which

traditionally

might

look

like

something

like

an

integer,

a

colon,

another

injury

colon,

but

you

can

actually

define

that

in

something

called

a

you

can

create

a

type

that

actually

defines

the

structure

of

this

multi-part

key

one

of

the

more

interesting

things

about

column.

Family

is

because

you

have

these

dynamic

rows.

These

different

shaped

rows.

A

You

can

actually

think

of

it

as

being

both

a

data

structure

that

can

be

statically

defined,

meaning

you

can

always

enforce

to

have

a

row.

Look

the

same.

Every

row

look

the

same

as

you

want

exactly,

but

you

have

to

enforce

that

when

you're,

inserting

the

records,

the

other

side

of

that

coin,

is

you

can

have

it

be

dynamically?

A

Each

row

can

have

a

different

shape,

the

major

or

the

the

the

traditional

example

case,

for

that

is

timeline

or

time

series

data

you

know

instead

of

you

know

off

the

top

of

my

head.

How

you

do

this

in

a

traditional

database,

but

you

would

probably

have

some

some

field

called

date

and

you'd

be

ordering

off

of

that,

and

the

data

might

necessarily

get

stored

in

that

particular

order.

A

Unless

you

have

like

a

sorted

sorted

on

disk

index

on

that,

but

you

could

be

writing

data

into

that

in

every

column

and

you

could

have

hundreds

and

hundreds

and

of

thousands,

whatever

number

of

rows

that

you

want

in

cassandra.

The

way

you

would

do

that

is

you

make

one

row

which

has

much

better.

I

o

read,

and

you

would

just

strike,

write

out

that

data

as

different

columns

and

you'd

hang

off.

Of

that

each

column

name

would

actually

be

a

timestamp

and

then

off

of

that

you'd

actually

put

into

it

whatever

you

want.

A

So

let's

say

you

had

400

tweets,

just

as

an

example

over

three

years.

That

would

be

one

row

with

400

columns

and

then

the

time

stamp

for

each

tweet

would

be

a

column

name

and

then,

whatever

you

want

to

hang

off

of

that,

the

post

a

picture,

video

url,

whatever

you

want,

that

would

all

be

sort

of

bundled

into

the

column

values.

The

column

name

is

the

time

stamp

and

the

column

values

can

be

a

string,

an

integer

or

it

can

be

a

complex

object.

E

A

Problem

and

no,

we

haven't

had

that

problem,

but

I

actually

built

our

public

timeline

that

way,

so

each

one

is

based

is

the

the

index

for

the

row.

Key

is

actually

a

date

and

then

it

goes

out

this

way.

So

you

might

have

you

know

whatever

100

000

posts

one

day,

55

000

the

next

day,

30

000.

You

know

two

that

sort.

A

B

A

When

you

have

these

dynamic,

this

dynamic

data

structure,

you're

gonna,

have

the

opportunity

to

have

it

actually

sort

those

posts

that

we

were

talking

about.

You

can

actually

sort

them

by

the

column

names.

So

there's

this

thing

called

the

comparator

and,

as

you

define

a

column

family,

you

define

the

row

key.

The

data

type

of

the

row

key

also

define

the

data

type

for

the

column

names

so

that

you

can

actually

sort

it

in

a

particular

way.

A

If

you

want

to

read

it

from

the

earliest

the

latest

or

vice

versa,

you

can

do

it

either

way

and

you

can

use

a

time

stamp

data

type,

a

time,

uuid

data

type.

To

actually

do

that,

so

the

sortable

column

names

come

in

handy

quite

often,

so

you

know

rows

can

have

static

lists

of

columns.

That's

where

I

was

like

saying

again.

You

can

just

write

out

exactly

what

you

want

it

to

be,

every

time

it

can

look

consistent

or

the

dynamic

list

of

columns.

A

It

can

be

sort

of

like

a

timeline

and

again

the

rows

can

have

variable

lengths,

variable

number

of

columns

each

row

and

then

the

data

types.

So

the

row

key

comment:

you

know

you

can

be

in

ascii,

utf-8,

lexical,

uuid,

tinyuid,

byte

types,

there's

like

12

of

them

too

many

to

put

on

here

and

that

can

be

used

for

columns

and

for

roki's

and

then

lastly,

and

they'll

just

touch

on

it

actually

absolutely

down

here

and

see

composite

types.

A

A

B

A

You

can

do

things

like

get

range,

a

couple

other

features,

but

you

don't

have

a

lot

of

query

power

that

would

be

sort

of

like

the

one

thing

you

have

to

be

aware

of

when

you're

building

your

architecture

using

cassandra

is

that

you're

not

going

to

be

able

to

do

joins

order,

buys

you're

not

going

to

be

able

to

do

any

of

that

stuff

using

the

hardware

you

have

at

the

database

level.

All

that's

going

to

have

to

happen.

A

So

you

have

all

the

data

in

the

exact

format

you

want

it

in

or

whatever

your

language

is,

that

you're

going

to

be

taking

it

up

to

on

on

the

the

middle

tier

or

the

left

here,

you're

going

to

do

the

order

by

up

there

you're

going

to

do

a

loop

over

it

and

do

some

join,

none

of

which

are

good

ideas,

but

that

was

in

the

beginning

and

now

they've

introduced

something

called

cql

which

stands

for

cassandra:

query

language,

which

is

very

similar

in

nature

to

sql

minus

a

few

limitations.

No.

C

A

And

there's

some

limitations

on

the

where

predicate

those

are

sort

of

the

two

biggest

ones,

but

you

can

do

select

star

from

entry

to

column

family

just

like

a

table.

They

actually,

I

believe,

actually

just

recently

put

in

some

order

by

functionality

which

I

haven't

actually

used

yet,

but

it

for

those

who

know

sql,

understand

sql.

You

don't

have

to

kind

of

get

down

into

the

whole,

using

these

more

primitive

drivers

that

leverage

thrift

and

do

all

this

stuff.

A

So

on

the

data

designs

and

you

kind

of

understand

what

the

model

is

like,

how

you're

going

to

build

things

in

it.

So

now

it's

like

well,

okay!

Now,

how

do

I

build

an

architecture?

Well,

the

first

and

probably

the

most

important

thing

is:

don't

think

of

it

like

a

relational

database.

You

know

it

doesn't

have

joins.

A

A

Well,

you

have

a

couple

of

choices.

One

choice

is

that

you

can

write

multiple

versions.

Think

of

them

as

like

indices,

think

of

the

column,

families

like

building

a

materialized

view

of

your

data

at

right

time,

so

I'm

gonna,

I'm

gonna.

I

wanna

look

at

the

orders

in

my

system

by

date.

I

want

to

look

at

them

by

user.

I

want

to

look

at

them

by

product

you'd.

Actually,

those

would

be

three

queries

traditionally

now

there

are

three

writes

into

your

system.

A

C

A

Yeah,

you

don't

actually

define

when

you

create

the

equivalent

of

create

tables

called

create

column

family.

You

don't

actually

define

any

column

names

at

that

time.

All

you

do

is

define

the

comparator,

which

is

used

to

sort

of

sort

the

column

name,

so

you

have

to

stick

within

the

confines

of

a

data

type.

A

F

A

So

that's

one

way

you

could

do

it

the

other

solution.

To

what

I

mentioned.

There

were

two

ways

to

do

this.

One

is

at

right

time.

You

know

all

the

parties

you

want.

The

other

one

is

that-

and

this

is

a

little

bit

further

jumping

ahead

a

little

bit,

but

it's

a

good

question

to

bring

up

so

cassandra

has

this

sort

of

ring

topology,

which

allows

you

to

have

multiple

nodes

servers,

they're

all

part

of

this

replication

process.

A

The

ring

still

exists

as

one

entity,

but

they

sort

of

slice

it

up

and

they

sort

of

treat

what's

in

la

as

one

thing

so,

you'll

have

a

replication

of

three

in

here

and

you'll

have

a

replication

of

three

in

new

york,

but

your

replication

factor

is

still

three

and

that's

the

you

have

to

have

three

copies

when

you

divide

the

data

center

up

into

these

two

parts,

what

happens

if

the

la-1

disappears

right?

It

goes

into

the

ocean.

A

You

still

need

to

have

three

over

there,

so

the

reason

why

that

is

important

is

because

you

don't

have

to

do

you-

don't

have

to

have

physically

disparate

data

centers

to

take

advantage

of

virtual

data

centers.

What

you

can

do

is

you

can

create

a

virtual

data

of

several

virtual

data

centers.

If

you

want

inside

one

physical

data

center,

you

can

make

copies

to

those

that

second

virtual

data

center

and

there

you

can

do

all

sorts

of

ad-hoc

querying

analytics

whatever

you

want

to

call

it.

A

A

Features

taking

hadoop

and

bringing

it

in

so

now,

if

you

write

your

data

one

way

you

can

use

like

hive

or

pig

or

write

your

own

mapreduce

stuff.

If

you

want

whatever

your

language

of

choices,

and

you

can

manipulate

that

data

on

your

own.

However,

you

want

to

it

is

sort

of

to

what

you're

saying

you're

going

to

pull

it

out,

you're

going

to

do

some

etl

type

thing

and

then

shove

it

back

in

that

sort

of

has

to

happen

in

a

traditional

relational

system.

Oftentimes.

A

You

know

you

have

to

pull

it

out

into

a

data

warehouse

and

you

might

be

changing

it,

putting

in

an

analytics

cube

doing

whatever

you're

doing

so.

It's

not

sometimes

when

you're

doing

that,

you

don't

have

to

change

the

data

to

actually

do

the

query,

because

hive

and

pig

are

pretty

powerful

and

can

do

some

things

on

their

own,

where

you

don't

have

to

like,

take

it

out

and

shove

it

in

a

different

manner,

and

sometimes

you

will

have

to.

A

G

Up

there,

like

I,

I

might

shine

in

because

I've

been

like

dealing

with

both

like

in

the

way.

The

reason

like

why,

using

like

the

big

data

like

the

nosql

solutions,

is

mostly

like

for,

like

okay

you're,

throwing

like

an

enormous

amount

of

data

there,

just

running

like

the

regular

reports

like

you

would

run

from

again

like

against

the

mysql

database,

might

not

be

feasible

there

at

all,

because

you

might

have

like

terabytes

or

like

petabytes

of

data,

it's

think

of

it

as

like.

Okay,

you

have

this

apache

log

there

you

know.

G

If

you

want

to

run

some

kind

of

statistics,

so

then

you

need

to

run

my

analysis

script

on

that

a

normal

way

to

do

it

like

people,

run

some

kind

of

map

produce

using

hadoop

or

like

some

kind

of

other,

like

parallel

analysis

tools

on

it

just

to

collect

statistics.

Okay,

I

need

like

these

stats.

You

know,

okay,

how

many,

like

you

know

like

female

users

in

alaska?

I

have

you

know

like

it

is

you

not

normally

would

not

run

the

like

reporting

tool

directly

off

the

data

set.

A

And

then

the

really

cool

thing

from

client

to

answer

your

question

about

preference,

because

the

replication

happens

to

this

other

virtual

data

center.

I

now

have

let's

say

two

machines

that

I

can

run

some

really

nasty

hive

mapreduce

thing:

peg,

the

cpu,

let

it

run

for

whatever

an

hour

24

hours,

no

impact

on

my

customer

facing

data

servers,

none

still

getting

the

replicas,

the

the

copies

coming

out

in

real

time

to

the

analytics

server.

So

I

can

be

doing

analytics

in

basically

near

real

time

without

me

doing

any

etl

anything

else.

A

A

So

we

talked

a

little

bit

about

the

replication

already.

The

one

really

interesting

thing

here

is

replication

factor.

So

when

you

are

putting

a

column

family

into

cassandra,

you

get

to

define

for

that

one

particular

data

object,

the

replication

factor

or

the

number

of

copies

of

any

given

row

within

it

that

you

want,

so

you

can

have

table

a

or

column

family,

a

and

column

family

b,

and

they

can

have

different

replication

factors.

A

We

don't

practice

that

we

just

have

a

standard.

We

want

three

copies

of

everything,

but

you

have

choice

there.

You

can

do

that

what's

called

the

key

space

level

and

the

key

space

level

is

the

the

catalog

that

holds

all

of

your

traditional

databases

that

the

thing

that

holds

all

your

tables

is

a

catalog

and

in

in

cassandra

they

call

it

a

key

space

holds

all

your

column

families,

so

you

can

do

a

key

space

level

and

then

you

can

overwrite

it

at

the

column,

family

level.

A

A

When

that

server

comes

online

and

then

read

repair,

let's

say

you're

doing

a

query

and

you

need

a

consistency

level

of

one

think

of

it

like

a

dirty,

read

and

relational

database,

but

you

still

need

three

copies

of

it.

When

it

does

the

read-

and

it

recognizes

hey,

I'm

going

to

give

you

this

row

back,

but

oh

by

the

way

it

hasn't

made

it

to

all

three

of

the

servers.

A

The

other

really

interesting

thing

about

consistency

is

that

it's

tunable

at

query

time.

So

what

that

really

means

is

at

write

or

read

time,

both

being

queries,

you

can

define

the

level

of

consistency

you

want

so

the

best

way

to

think

of

that

is

at

right

time.

If

I

want

a

consistency

level

of

one,

I

just

need

to

make

sure

one

machine

gets

a

copy

of

that

data

and

I'll

get

a

response

back.

B

A

A

Yes,

but

the

so

back

to

the

the

consistency

level

when

you're

writing

a

record,

and

maybe

you

only

care

that

it

gets

to

one

location,

still

means

you're.

Gonna

have

a

replication

factor

of

three.

In

the

example

we're

talking

about

so

you're,

eventually

going

to

get

that

copy

to

three

other

machines

or

two

other

machines.

Sorry,

but

initially

it

only

has

to

be

successful

at

one.

So

you'll

have

a

faster

right

before

with

a

response

back

to

your

your

application,

tier

the

other

side

of

that

coin,

is

you

can

do

a

read?

Yes,.

D

A

Right,

but

what

is

the

case

if

one

of

those

targets

is

down

so

in?

If

you

have

say

three

machines,

and

only

three

machines-

and

you

have

three

copies

of

the

data

or

you've

got

your

your

requirements.

I

have

three

copies

of

data

and

you

have

one

machine

go

down

when

you

do

that

right,

you

will.

If

I

remember

correctly,

you

will

actually

have

a

problem

with

that.

A

We

don't

typically

run

with

just

three

machines

and

a

copy

of

three,

so

you

have

the

ability

to

hopefully

not

have

more

than

one

server

down

at

a

time

without

somebody

you

know

getting

on

it

and

taking

care

of

it.

So

you

can

actually

then

have

three

copies

say

on

five

machines.

So

they're

spread

out

it's

sort

of

striped

around

the

the

ring,

and

so

in

that

scenario

that

you're,

describing,

if

you

have

three

machines

and

three

copies

you'll,

have

you'll,

have

a

problem.

You'll

have

a

system

down

problem.

A

A

So

in

that

scenario,

if

you

have

to

have

three

copies-

and

you

only

have

three

machines-

you

don't

really

have

a

lot

of

flexibility

in

case

one

server

goes

down,

so

maybe

you

want

to

start

off

with

four

machines

and

maybe

a

replication

factor

of

two.

So

you

have

flexibility,

so

you

have

to

think

about

those

things

as

you're.

A

D

A

Maybe

I

can

explain

it

afterwards,

a

little

bit

better.

I

mean,

ultimately,

if

you

demand

three

and

you

don't

even

have

three

servers

you're,

just

not

going

anywhere.

You're

gonna

have

you're

going

to

get

errors

back

from

cassandra,

saying

it

wasn't

able

to

do

that

right

and

then

your

application

is

going

to

do

whatever

it

does

to

tell

the

user.

It

was

unsuccessful.

E

E

A

Not

the

way

they

architected

it,

so

I

I

mean

I

don't

know

the

details

at

that

low

level.

How

they're

doing

that

piece

that

you're

referring

to,

but

that's

not,

it's

not

an

issue.

They

have

a.

They

use

a

time

uuid

to

actually

do

a

timestamp

of

every

record

at

a

particular

point

in

time,

and

they

are

able

to

coordinate

that

in

such

a

fashion

that

that

doesn't

happen,

but

the

actual

inner

workings

of

how

that

works.

I'm

not

I'm

not

aware

of

the

inner

system.

C

E

E

A

Right

but

you're

going

to

have

two

different

time

stamps

for

the

two

records

that

were

written

in

so

I'm

assuming

I'll.

Take

that

the

example

a

little

bit

further.

Let's

say

I'm

updating

my

my

user

record,

let's

say:

you're

updating

in

new

york

and

I'm

updating

in

la

right,

and

so

it's

one

record.

You're.

E

E

F

A

So

the

so

it's

tied

into

replication.

So

in

an

example

where

you

would

have

five

machines

in

this

ring

and

but

your

replication

factor

which

for

argument

say,

is

three

up

on

every

data

model

throughout

the

system.

So

let's

say

you

have

five

column

families

they

all

have

to

have

every

row.

In

all

five

column,

families

has

to

have

three

copies,

a

replication

factor

of

three,

but

you

have

five

machines,

so

the

partitioning

meaning

I

have

five

machines.

There's

gonna

be

some

set

of

three

over

here.

There's

gonna

be

another

set

of

three

over

here.

A

F

A

A

Architecture

that

you

can

fuss

with

that's

probably

the

best

way

to

put

it

which

you

can

control

the

partitioning,

but

you

don't

really

need

to

do

that.

There's

some

values

that

you

can

set

up

at

the

beginning

and

once

you've

done

that

in

the

config

file

and

when

you

start

up

the

server

you're

done

you

don't

every

data

model

doesn't

or

every

data

object

or

table

whatever

you

want

to

think

of

it

as

doesn't

need

to

have

partitioning

defined

at

the

schema

level.

A

A

Don't

have

to

do

it

that

way.

That's

one

way

you

can

do

it.

You

can

also

like

inject

yourself

between

two

nodes.

So

if

the,

if

the

ring

topology

has

say

from

zero

to

a

thousand,

are

these

tokens

that

I'm

at

you

know,

point

100,

250,

450,

650

and

whatever

900

right?

I

can

bring

in

another

machine

between

the

250

and

the

450.

A

A

So

there's

mechanisms

now

that,

if

you

use

specifically,

if

you're

using

data

stacks

as

product,

they

have

something

called

op

center

and

op

center.

Basically

can

do

all

this

configuring

for

you,

you

just

check

the

box

and

it

does

the

rest,

so

you

can

manually

do

it

if

you

want

to-

or

you

can

have

it

auto

set

up,

and

it's

there's

no

reason

in

my

opinion,

to

go

to

the

level

of

doing

it

manually.

It

was

sort

of

how

it

was,

and

now

that

it

automatically

can

do

this.

If

you

tell

it

to

do

so,.

H

B

B

A

So

we

kind

of

touched

on

this