
From YouTube: GMT 2018-05-01 API WG

Description

No description was provided for this meeting.


A

C

A

D

C

A

C

C

C

E

A

E

A

C

C

A

A

Basically, what we're doing right now is adding per-framework metrics to the master, mainly. So far, what we had in Mesos is essentially metrics that are cluster-wide, like number of tasks launched, number of offers sent, and stuff like that, but we really did not have a way to partition them by framework. So it kind of becomes hard when you're trying to understand an individual framework's performance, or the master's.

A

How is the master responding to individual frameworks' calls, and stuff like that. So this mainly stemmed from a use case internally, here at Mesosphere, where we're trying to run a large number of frameworks in a cluster and trying to understand how the system behaves, and why, in a certain way. So as part of it, we started

A

an implementation around how to figure out the metrics that we want, that are useful on a per-framework basis, and how to essentially write them. So there is a design doc that was shared with the community. It's not really a design doc but more like an overview doc on the kind of metrics that we have been thinking about.

A

So we added those metrics. Right now we haven't committed any of those metrics to master yet; there are reviews out, and we've been testing some of those metrics on an internal branch which is based on an older version of Mesos, 1.4 specifically. And it looks like, from some of the tests that Gilbert did regarding benchmarks, the numbers don't look that bad. When he benchmarked up to two hundred thousand channels or so, it wasn't significantly, it wasn't any...

A

C

B

A

A

C

A

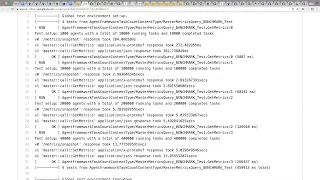

And that's one hundred a second. So this is before we added per-framework metrics, and if I scroll down, this stuff is after all the per-framework metrics are added. And as you can see, it was 280 milliseconds for v0 metrics, whereas now it's 300-something. So it's about 20 milliseconds extra. Well...

A

A

A

D

A

A

D

A

A

A

So the gauges: at least for the per-framework metrics, we're trying to use gauges that are push-based instead of pull-based. It's a new thing that Ben Mahler added as part of this effort. So if you're using push-based gauges and counters, there should not be any work that happens on the master during metrics collection time, I mean when someone hits the metrics endpoint.

A

It's not going to affect the master. So who is going to push? The master is going to push. The master is just going to update a variable, essentially, okay, inside the metrics actor, just like a counter. So at least the per-framework gauges and counters should not generate any dispatches or anything onto the master actor, or any work. The work only happens when the master is incrementing a metric or setting the value, at whatever rate those metrics change.
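To make the push-versus-pull distinction above concrete, here is a minimal sketch. It is not the libprocess metrics API: the `PullGauge` and `PushGauge` classes, the `snapshot` helper, and the metric names are all hypothetical, and a lock stands in for the atomic updates mentioned in the meeting. The point is only that a pull gauge runs its owner's computation at scrape time, while a push gauge is updated as state changes and merely read at scrape time.

```python
import threading
from typing import Callable, Dict


class PullGauge:
    """Pull-based: the value is computed each time the endpoint is scraped."""

    def __init__(self, compute: Callable[[], float]) -> None:
        self._compute = compute

    def value(self) -> float:
        # The owner's callback runs at collection time.
        return self._compute()


class PushGauge:
    """Push-based: the owner stores the value; scraping only reads it."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._value = 0.0

    def set(self, value: float) -> None:
        with self._lock:
            self._value = value

    def add(self, delta: float) -> None:
        with self._lock:
            self._value += delta

    def value(self) -> float:
        with self._lock:
            return self._value


def snapshot(gauges: Dict[str, object]) -> Dict[str, float]:
    """Stand-in for what a metrics endpoint would do on each request."""
    return {name: gauge.value() for name, gauge in gauges.items()}


# Hypothetical owner state: 1000 running tasks.
tasks = {f"task-{i}": "RUNNING" for i in range(1000)}
calls = {"count": 0}


def count_running() -> float:
    # Loops over every task whenever the pull gauge is scraped.
    calls["count"] += 1
    return float(sum(1 for state in tasks.values() if state == "RUNNING"))


pull = PullGauge(count_running)
push = PushGauge()
push.set(1000.0)

# A task finishes: the owner updates its state and pushes the new value.
tasks["task-0"] = "FINISHED"
push.add(-1.0)

result = snapshot({"pull/tasks_running": pull, "push/tasks_running": push})
```

Both gauges report the same number, but only the pull gauge did per-task work during the scrape; the push gauge paid a constant cost when the task changed state.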

A

A

A

C

C

A

C

And the metrics path, so there could be some issues there. Or it could also be that the existing gauges, the non-per-framework gauges, are doing a lot of looping and so on here. Like, they have to loop over 400,000 running tasks, I think a couple of times at least, right, because they're going to compute different task status counts, and that's probably pretty expensive as well. Yeah.

A

A

C

B

B

A

One question I had was: given that (we need to run more runs to confirm, but) it sounds like it does not have any material impact, if that holds true, should we be committing this upstream? One of the worries we had was that this would have a lot of impact for people with big clusters if we just started including per-framework metrics, and we wondered whether we had to do some kind of filtering query parameters so that they do not get per-framework...

A

C

I think we'll have to ask those who have the concerns, like James, to understand if he was concerned about master performance, or if he was concerned about his metrics integration starting to slurp down too many metrics. If it's the latter, then he might already have a constrained list of metrics that he uses, or he might want to set up a filter to reduce the response size. But from this, at least, it doesn't seem like the...

D

B

A

C

A

A

In terms of landing it on master, yes. I guess one concern I have is the code is going to change on master, and it's going to become harder to backport, or actually forward-port, to master. If it goes stale, like in a month or two, it will require a lot more work to actually get it properly compiled again. And I mean, at least on the server side, it doesn't look like there's that much performance change, so I feel like...

A

B

C

For the master, we just know that we haven't introduced any additional computation, because everything gets pushed. So the overhead is now in the runtime path, and it's just a bunch of atomic sets, and you can do a lot of those in a second. That should have pretty negligible overhead. The approach was: let's just use counters and push-based gauges to avoid introducing any work as part of this, just so that this doesn't take any additional master time.

C

A

C

A

C

E

C

A

A

A

E

B

A

C

C

C

doesn't support it because we're not using it. That's kind of what we've been doing. However, we have no enforcement of that. So when you do a downgrade, it might not work; it might do something very bad. We just don't know, and there's nothing in the system that tries to stop a bad downgrade.

C

So when Michael and I were working on the agent side of the refined reservation stuff, when we started thinking about downgrades, we realized that we needed to build something sooner rather than later to make the agent and master aware of the minimum capabilities that they need, based on the recovered state. So, for example, an agent would

C

read its state and see that there's a minimum capability for refined reservations in there, because someone started using refined reservations, and if that agent doesn't recognize that capability, it would refuse to start. It would say: this is not a safe downgrade, because there's some unknown feature being used, rather than just kind of proceeding and whatever happens, happens. Same for the master, same idea there: the master would look at the registry and say, oh, there's some minimum capability that I don't have.

C

This must be a downgrade, and I'm gonna refuse to start rather than try to interpret this stuff that I don't understand, right, or ignore it, basically. So that's the general idea of how to approach this problem: how to make downgrades something that users can try safely without needing to, you know, I don't know, look at all sorts of endpoints and try to find which features they're using. We don't give them a tool for that, and so it's just really hard to do downgrades today, and it's not safe. So...
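The refuse-to-start idea described above could be sketched roughly as follows. This is not Mesos code: the function name, the exception type, and the capability names (`BASIC`, `REFINED_RESERVATIONS`) are assumptions made for illustration. The sketch only shows the rule being discussed: compare the minimum capabilities recorded in recovered state against the set the running binary understands, and stop if any are unknown.

```python
from typing import Iterable, Set


class UnsafeDowngradeError(RuntimeError):
    """Raised when recovered state needs a capability this binary lacks."""


def check_minimum_capabilities(recovered: Iterable[str], known: Set[str]) -> None:
    # Any capability recorded in state but unknown to this binary means a
    # feature is in use that this (presumably older) version cannot handle.
    unknown = sorted(set(recovered) - set(known))
    if unknown:
        raise UnsafeDowngradeError(
            "Refusing to start: recovered state requires unknown "
            "minimum capabilities: " + ", ".join(unknown)
        )


# Hypothetical capability sets for a newer and an older binary.
new_binary = {"BASIC", "REFINED_RESERVATIONS"}
old_binary = {"BASIC"}

# State written after someone started using refined reservations.
recovered_state = ["BASIC", "REFINED_RESERVATIONS"]

# The newer binary recognizes everything, so this returns normally.
check_minimum_capabilities(recovered_state, new_binary)

# The older binary does not, so the downgrade attempt is stopped.
try:
    check_minimum_capabilities(recovered_state, old_binary)
    refused = False
    message = ""
except UnsafeDowngradeError as error:
    refused = True
    message = str(error)
```

The operator gets an explicit refusal naming the capability, instead of a binary that proceeds and misinterprets or ignores state it does not understand.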

C

C

Is this a new capability? If it is, I need to write that capability down as the minimum capability when the feature starts to get used, and that's all I have to think about. I don't have to worry about anything else, because the existing logic is there to prevent downgrades if a minimum capability is there that we don't know about. So I think that's an easier world to be in than the current world, which is like...

A

A

A

C

E

I think the refusing-to-start issue is kind of orthogonal to this minimum capability. I think if we check that the agent or the master does not meet this minimum capability, what to do next is another issue. I think by introducing this minimum capability list, we can provide a simple tool for the operator to understand what this downgrade entails. As to what to do if there's a mismatch between capabilities, I think that's another thing that we can discuss.

A

C

A

A

A

C

C

So I mean, we don't have a design at this point, but the general idea is to just try to make downgrades something that is safe to try. You don't have to worry about whether it'll totally screw up your cluster, because there's a fail-safe to stop if we see that there's a feature being used that we can't handle.

B

E

E

the possibility of reverting. For example, if you use quota limits, later it's possible that you can remove all the quota, and in the code we will detect that all the quota are removed, so that this registry no longer requires quota limits, and we will remove that minimum capability from the registry. And then from that point, even though you used it before, you can still safely downgrade.
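The lifecycle described above, where a minimum capability is recorded when a feature is first used and dropped again once nothing depends on it, might look like the following sketch. The `Registry` class, its fields, and the `QUOTA_LIMITS` name are hypothetical stand-ins rather than the actual registry schema.

```python
from dataclasses import dataclass, field
from typing import Dict, Set

QUOTA_LIMITS = "QUOTA_LIMITS"  # hypothetical capability name


@dataclass
class Registry:
    """Toy stand-in for persisted master state."""

    quota_limits: Dict[str, float] = field(default_factory=dict)
    minimum_capabilities: Set[str] = field(default_factory=set)

    def set_quota_limit(self, role: str, limit: float) -> None:
        self.quota_limits[role] = limit
        self._refresh_minimum_capabilities()

    def remove_quota_limit(self, role: str) -> None:
        self.quota_limits.pop(role, None)
        self._refresh_minimum_capabilities()

    def _refresh_minimum_capabilities(self) -> None:
        # The capability is required only while some limit is still stored.
        if self.quota_limits:
            self.minimum_capabilities.add(QUOTA_LIMITS)
        else:
            self.minimum_capabilities.discard(QUOTA_LIMITS)


registry = Registry()

registry.set_quota_limit("analytics", 8.0)
required_while_in_use = set(registry.minimum_capabilities)

registry.remove_quota_limit("analytics")
required_after_removal = set(registry.minimum_capabilities)
```

Once the last limit is removed, the capability disappears from the registry, so an older binary that checks the list would be able to start again.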

B

C

B

C

C

C

A

C

I [don't] think we want to be explaining this on a feature-by-feature basis. I think we want to tell people a general rule, which is: oh, if there's a minimum capability now that you've used a feature, you can't downgrade until you get rid of that minimum capability. So for quota, that means not using different limits than guarantees.

C

B

using a format, and then if you... I mean, what we can do is say, if we want to downgrade from, say, 1.7, which has quota limits, to 1.3, we could make the quota limit capability configurable and restart the cluster with 1.7 but with the quota limit capability turned off. And if the 1.7 master sees a registry that has the capability, but it is now turned off, it is responsible for converting it back, or... It's...

C

B

B

C

For this one, we recommend you also go and wipe all the limits that you've set. But I don't think Mesos would want to do that, because we would go and drastically change the behavior of the allocation without them really understanding that. I don't know, is it over-engineering? Like, do we really need to go that far with downgrades? I think people just want it to be safe as a first step, and it isn't even safe today, right?

C

A

A

C

E

C

Limit is an easy one. We could say that it goes back to the old behavior, which isn't good, right? That's going to drastically change all the configuration they set up. But it could downgrade it. I don't think we want to. I think we'd want to tell them: hey, your configuration is going to change a lot here, your allocation is going to dramatically change. Is that okay for you?

A

C

A

C

I don't think it should be... I don't think the system should be doing those kinds of things during a downgrade, right? I think we should only be downgrading if we can maintain the behavior. If we can't, we should tell them what to do. What to do in that case is a separate thing. Like, do we need a tool?

C

Do we need all sorts of tools that can rewrite things and so on, or do we just say: you're on your own here, here are the instructions. Wipe all the limits, understand what that does, and if that's okay for you, wipe all the limits and wipe the minimum capability. But I don't... how often are people doing downgrading?

A

C

I think when people do downgrades, it's like: they do an upgrade, there's some amount of time, they think it's not working, they think something's wrong, so they want to go back. I think that's probably the main 90% use case. I don't think people are gonna go, "Oh, I'm at 1.5, I want to go back to 1.3," unless they just upgraded to 1.5. So I was mostly concerned with: when they want to make this decision, let's just make sure it's safe.

C

Let's make sure it doesn't screw everything up and then they're in a worse situation, because their cluster is all screwed up, and then we have to come in and be like: okay, what did you do? You downgraded from 1.5 to 1.3, but oh, this error message means you probably used this feature. Okay, how do you get out of this? Well, you've got to go back to 1.5. That's just very, very hard to figure out, right? I just want a simpler story around: oh, there are minimum capabilities, you used a feature.

B

C

We were thinking of using strings for that, so, like, a string in addition to the enum, so that the old code could print it out clearly for you. I mean, that's one of the problems, and there are some ways to solve that, like how to print out a readable message instead of just saying, oh, there's some, you know, we don't know.
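One way to read the "string in addition to the enum" idea is sketched below. Everything here is an assumption for illustration: the `Capability` enum represents what an older binary knows, `PersistedCapability` is a made-up record shape, and the id values are arbitrary. The sketch shows why storing a name next to the numeric value helps: code that does not recognize the number can still print something readable.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List


class Capability(IntEnum):
    """Capabilities compiled into the (hypothetical) older binary."""

    BASIC = 1


@dataclass(frozen=True)
class PersistedCapability:
    """Record written by whichever version first used the feature."""

    id: int    # enum value as known to the writer
    name: str  # human-readable name stored alongside the value


def describe_unknown(persisted: List[PersistedCapability]) -> List[str]:
    # The older binary cannot map unknown ids to enum members, but it can
    # still echo the stored name in its error message.
    known_ids = {capability.value for capability in Capability}
    return [
        f"{record.name} (id {record.id})"
        for record in persisted
        if record.id not in known_ids
    ]


persisted = [
    PersistedCapability(id=1, name="BASIC"),
    PersistedCapability(id=7, name="QUOTA_LIMITS"),
]

unknown = describe_unknown(persisted)
```

With only the numeric value, the older code could report at most "unknown capability 7"; with the stored name, the message can point the operator at the feature that needs to be unwound.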