From YouTube: Argo CD and Rollouts Community Meeting 4th Aug 20201

A

B

Yes, hi everyone, thanks for having me. My name is Brett Weaver, and I'm an engineer at Intuit. I've worked here for about 18 years now, so a long history at Intuit across many different evolutions, this next one being moving to the cloud and how we're managing our resources there. Today I'm here to talk to you about an experiment called Argo CloudOps. Let me share my screen.

B

So I was coached. I came with a deck with 53 slides, and I was coached that might be too many, and so I reduced it. I'm just kidding, I didn't really have that many. I know what everyone thinks: somebody who's worked at Intuit for 18 years, he's probably just going to bury us in slides. We'll try not to do that. We'll get to a few quick slides to ground everyone, and then a demo to talk about what we're doing. Can everyone see my screen?

B

So a few quick slides, as I talked about, just to get us grounded on what we're going to be displaying. We've recently open sourced an experiment we're calling Argo CloudOps. The goal of Argo CloudOps is to allow you to manage your cloud resources via GitOps, using open source frameworks (Terraform, Cloud Development Kit, and others) across multiple cloud providers (AWS and GCP), with a GitOps-style interface.

B

So we have at Intuit, obviously, a very large Kubernetes deployment, but we also have other resources we need to manage. Those include things like AWS resources and other cloud resources that support those Kubernetes workloads. In many cases, those are managed outside of our Kubernetes infrastructure, everything from state to various other AWS resources.

B

We have all of our non-container-based workloads, so everything from AWS managed services to Lambda to other things that are somewhat orthogonal to our container workloads. And then we have everyone's favorite, our legacy workloads. Like most large organizations, we have a fairly extensive deployment of EC2 and other styles of workloads that aren't going to be moving onto containers anytime in the short term.

B

So, a few architectural principles. At Intuit we want to solve for AWS first: AWS is our primary cloud, but we are not precluding other clouds. We do have footprints in many of the other major clouds. Solve for CDK and Terraform first, but don't preclude other frameworks. Personally, having worked in this space for some time, my belief is that there will never be a complete consolidation of every framework into one that rules them all. They're way too intimate and tied into your application once you've selected one.

B

I don't know if I've ever seen anyone change anything significant from CDK to Terraform or CloudFormation, or in any other direction. I'd be interested to hear if someone has. So we want to create an environment where we could have a GitOps-style process that could manage those resources using any of those frameworks. We want to meet developers where they are and with what they're using.

B

In addition, we also want to not preclude other frameworks, because this is another space that's ripe for evolution, with new ones coming out constantly. GitOps and infrastructure as code: I think everyone here is very intimately familiar with that. We want to isolate our CI from our production environments. I think many people want to try to remove AWS access from the people managing those resources, removing laptop deployments and also getting credentials out of our CI/CD system. And then, where possible, align to the same high-level patterns as Argo CD.

B

B

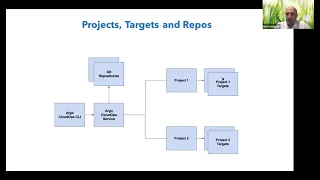

So again, if you look at this box, the green components are those which we're considering to be part of the Argo CloudOps deployment. Vault is kind of an internal component, which we believe might be considered somewhat separate, but its configuration is very specific to Argo CloudOps. One more quick slide to ground what I'm going to show: Argo CloudOps is definitely built to support enterprise scale. We want to be able to support multiple projects, and those projects are essentially logical

B

containers of access. Each one of those has targets, and those targets will be different combinations of regions and/or providers and environments. Your us-west-2 qual and your us-east-1 prod would each be a specific target. Access is at the project level, so project one and its managed resources would not have access to project two, and vice versa.
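
The project and target model described above can be sketched roughly as follows. This is only an illustration under stated assumptions, not Argo CloudOps code: the class names, the `can_access` helper, and the example project and target names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass(frozen=True)
class Target:
    """One deployment destination: a provider, a region, and an environment."""
    name: str
    provider: str
    region: str
    environment: str


@dataclass
class Project:
    """A logical container of access that owns a set of targets."""
    name: str
    targets: Dict[str, Target] = field(default_factory=dict)

    def add_target(self, target: Target) -> None:
        self.targets[target.name] = target


def can_access(caller_project: str, project: Project, target_name: str) -> bool:
    """Access is granted at the project level: a caller reaches a target
    only if the target belongs to the caller's own project."""
    return caller_project == project.name and target_name in project.targets


# Hypothetical example data mirroring the two targets named in the talk.
project_one = Project("project-one")
project_one.add_target(Target("us-west-2-qual", "aws", "us-west-2", "qual"))
project_one.add_target(Target("us-east-1-prod", "aws", "us-east-1", "prod"))
project_two = Project("project-two")
```

With this data, `can_access("project-one", project_one, "us-east-1-prod")` is true, while a caller from `project-two` is refused for the same target.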

B

B

B

The details aren't important, but you can see on the left-hand side there's a build process that goes on. Like probably most people here, we have a fairly extensive build process to eventually create the necessary components in an RPM to be deployed. Then, once this build is completed, we get to what are called the Argo CloudOps steps. We have diff and sync commands here, and I'm just going to go straight to this. Well, let's click on the diff for the fun of it.

B

If we look at the diff here, what you see is the actual command that gets run, which is this Argo CloudOps diff command. There are three key pieces to this. One is the s, which is the SHA; this is the SHA we are going to reference to provide a diff. Then there are the project name and the environment. So those are the three components that we talked about before.

B

If we go to the actual repository: this repository describes the infrastructure-as-code command to be run. I apologize, I'm bouncing around a lot here, and I'm happy to clarify once I get through it, because I know I'm throwing a lot at everyone. This actually creates the infrastructure-as-code command to be run. It says we are going to run CDK.

B

It sets some environment variables, it specifies the arguments, and then it gives a handful of parameters. These parameters are actually passed to an Argo workflow, which is used to orchestrate the actual execution of CDK via a Docker image. It has the project name, which in our case is just a number we associate with each project, the target name, the type, which in this case is a sync, and then the workflow templates.

B

B

B

Additional commands can actually be configured. We've written the start of a templating language which allows for different commands to be added to this. The assumption is that almost every command in this space will always have a sync and a diff. CDK will construct a command using bootstrap and diff, and Terraform obviously has a terraform plan and apply. Other infrastructure-as-code frameworks have other variables and commands that are run for those steps.
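
One way to picture the idea of mapping a generic diff and sync onto each framework's own commands is a small template table. The sketch below is an assumption-laden illustration and not the project's templating language: the table layout and the `render_commands` helper are hypothetical, and the exact steps chosen for each operation (for example running `terraform init` first, or `cdk deploy` for a sync) are my own guesses built from real CDK and Terraform subcommands.

```python
import shlex
from typing import Dict, List

# Hypothetical template table: each framework maps the two generic
# operations ("diff" and "sync") to the CLI steps it would run.
COMMAND_TEMPLATES: Dict[str, Dict[str, List[str]]] = {
    "cdk": {
        "diff": ["cdk diff {args}"],
        "sync": ["cdk bootstrap", "cdk deploy {args}"],
    },
    "terraform": {
        "diff": ["terraform init", "terraform plan {args}"],
        "sync": ["terraform init", "terraform apply -auto-approve {args}"],
    },
}


def render_commands(framework: str, operation: str, args: List[str]) -> List[str]:
    """Expand the templates for one framework/operation into shell command strings."""
    try:
        templates = COMMAND_TEMPLATES[framework][operation]
    except KeyError as exc:
        raise ValueError(f"unsupported combination: {framework!r}/{operation!r}") from exc
    # Quote each argument so values with spaces survive a shell invocation.
    quoted = " ".join(shlex.quote(arg) for arg in args)
    return [template.format(args=quoted).strip() for template in templates]
```

Adding another framework in this sketch means adding one more entry to the table, which is the property the speaker is after: the service only needs to know about diff and sync.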

B

B

You'll see: okay, we're going to run this diff command with that project name, and then what we're going to do is tail the logs on this. If you look here, you run Argo CloudOps logs and give it this ID, which is an ID for the diff that's run. Then you'll see that it goes through here and actually starts constructing the environment to run CDK in this case.

B

So it gets some tokens from our provider, which is Vault, it downloads the CDK tarball to be run, and then finally it comes down to here and actually runs this diff command. This CDK app is essentially just a dummy app I created called delete me. I just updated it from version one to version two of the app code, and that's going to result in a Lambda function being changed from one asset to another.

B

We take a look in here and we now see the sync command. This looks very similar to the other one: there's a sync command, and you give it the SHA to sync, the name, and the target. If I click into here, you'll see something that looks very similar. It sets up the same environment to run CDK, and instead of doing a diff in this case, it actually runs a CDK apply, or sync, and you'll see what that's actually doing now. This is the example.

B

B

All of the communication goes from the service to GitHub. If you look here, in this command all you provide is the SHA, the name, and the target. This allows all configuration to be externalized from the actual build system where this is running, and the source of truth goes back to the GitHub account, or rather the GitHub repository, allowing for history and control to be managed there, along with how those commands are run.
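
The point that the build system only hands over a SHA, a project name, and a target can be sketched as a small request type. This is a hypothetical illustration, not the Argo CloudOps API: the `OperationRequest` class, its field names, and the `manifest.yaml` default path are assumptions. It also insists on a full commit SHA, which is stricter than the talk requires (branch-name support is mentioned later as planned).

```python
import re
from dataclasses import dataclass

# A full Git commit SHA-1 is 40 lowercase hexadecimal characters.
_FULL_SHA = re.compile(r"[0-9a-f]{40}")


@dataclass(frozen=True)
class OperationRequest:
    """Everything the build system sends: no credentials and no framework
    configuration, only a pointer into Git plus a project and target."""
    operation: str  # "diff" or "sync"
    sha: str
    project: str
    target: str

    def __post_init__(self) -> None:
        if self.operation not in ("diff", "sync"):
            raise ValueError(f"unknown operation: {self.operation!r}")
        if not _FULL_SHA.fullmatch(self.sha):
            raise ValueError("sha must be a full 40-character Git commit SHA")
        if not self.project or not self.target:
            raise ValueError("project and target are required")

    def manifest_ref(self, path: str = "manifest.yaml") -> str:
        """Return a '<sha>:<path>' object name, the form `git show` accepts."""
        return f"{self.sha}:{path}"
```

Because the request pins a commit, the service can always resolve the same configuration for the same request, which is what makes the repository history the record of what was run.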

B

We are in the process. If you look right here, it's a little... We have an initial release out. We have it working and we're experimenting with it today, but I think what you'll see is there are a lot of internals that are being leaked, and a lot of cleanup that can be done. What we're going to be doing over the next quarter is moving a lot of this configuration into the service.

B

A

A

Thanks for that. Hey Brett, what is the workflow? Not the Argo workflow, but the process workflow, what the user experience would be. Do you expect them to go play around with their CDK, tweak it until it's the way they like, commit that, and then expect the automation to take over at that point? Like, where does the new workflow... yeah.

B

And so what it looks like is very much a Git-style experience. Let me actually just see if I can do a quick update and kick it off while we have a few minutes. If I go into our CDK directory and open, let's say, cdk.json, this is the various configuration for the CDK command. I'm just going to change the greeting on this Lambda function from "hi" to "how are you doing, hi".

B

B

What we don't want to do is try to recreate CDK or Terraform, because I don't think we'd be successful building our own language to do this. Instead we want to allow for a system that can be used to execute those different configuration languages in a GitOps-style fashion. So what this is doing is kicking off, and eventually this will get over to the Argo CloudOps diff and sync, which will now use that new, updated SHA to pull the configuration to be applied from GitHub.

B

C

A little less noisy at home, so I can ask a question. The one thing that I usually find is not that great of a story is the delete side of it, right? Once the thing is no longer needed. I don't think we've gone over that here yet. Is that something that's in target for this particular tool?

B

It is, but we've purposely left it out right now, because delete is, as you know, a very difficult thing (a) to do safely and (b) to do successfully with any of these tools. In most cases I've found that for any kind of complex application you have to do a delete, then clean up a bunch of stuff, and delete again. So absolutely it is part of the story but, as most people have probably done, as you've said, we've kicked that can down the road for the next release.

A

B

I was actually thinking of the use case where you're actually done with this environment and you just want to delete it entirely. Oh yeah, that's the one I was responding to, and maybe whoever asked it can clarify. But this absolutely will allow you to roll forwards and backwards using different Git SHAs, provided that whatever those changes are can be rolled forwards or backwards. I mean, depending on how you use Terraform or CDK...

B

You know, your ability to roll forwards and roll backwards is only as good as what you've planned for. What I mean by that is, if you, say, deleted a database or made some changes that are not reversible, you can't necessarily just roll back to that state if you've destroyed it as part of your roll forward. But it absolutely allows you to roll your SHA forwards and backwards, based on the configuration in GitHub.

A

B

What's difficult in many cases is if you have a complex application you've deployed in CDK or Terraform and then say, do a terraform destroy, I think, is the word. Sometimes it works, sometimes it doesn't, depending on how much change has gone on externally to that over the course of time that those resources have been managed. And then there's also the challenge of that being a one-way door that you walk through, that's very hard to walk back from.

B

B

There's also a plan to support just branch names as well. And this is now running; it's generating the policy. One thing we didn't talk about is that part of the reason we use Vault is to allow for assuming minimally privileged roles. We actually set the policy at the time of role assumption, so that CDK or Terraform, whatever's running, only has access to the resources that it needs to manage. And this is actually now running. Where are we at here?
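
Setting a policy at the time of role assumption can be illustrated with an AWS session policy: when a role is assumed with an inline policy attached, the session's effective permissions are the intersection of the role's own policy and that session policy. The sketch below only builds such a policy document. It is an assumption about the general technique, not a description of how Argo CloudOps or Vault is configured, and the `build_session_policy` helper is hypothetical.

```python
import json
from typing import List


def build_session_policy(allowed_actions: List[str], resource_arns: List[str]) -> str:
    """Return an IAM policy document (as JSON) that allows only the given
    actions on the given resources, for use as a scoped-down session policy."""
    if not allowed_actions or not resource_arns:
        raise ValueError("a scoped-down policy needs at least one action and one resource")
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": sorted(allowed_actions),
                "Resource": sorted(resource_arns),
            }
        ],
    }
    # sort_keys gives a stable string, which is convenient for logging and diffing.
    return json.dumps(policy, sort_keys=True)
```

The resulting string is the kind of value that could be supplied when requesting temporary credentials, so that a CDK or Terraform run can touch only the resources named for that target.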

B

B

A

B

Oh, I'm sorry! Yes, I'm in the CNCF as well, correct, and I'll confirm. I'll make sure I am, and I'll put my contact information... should I just put it in the community document? Yes. I'd encourage everyone to star the repo. This is, again, very much a first introduction of these concepts.

D

D

A

D

And so, in order to respond to this situation, we proposed a solution, which was to create the AppSource controller and custom resource. The way it works is that an admin team or a DevOps team with access to the Argo CD instance and namespace can create a ConfigMap that contains a set of Argo CD project specifications. These control and manage the sorts of resources developers can use and the things they can do with their Argo CD applications.

D

Now the dev team, from within their own restricted namespace, can create an AppSource instance. That triggers a reconciliation request for the AppSource controller to verify whether the developers have followed the naming convention specified by a regex pattern within the ConfigMap that the admin or DevOps team created. We can match the specific project that a developer team wants to create an application for by using the namespace where the AppSource instance was created.
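
The namespace-to-project matching described above can be sketched as a regex lookup. This is a minimal illustration and not the AppSource controller's code: the `PROJECT_PATTERNS` table, the project names, and the patterns are hypothetical stand-ins for whatever the admin-owned ConfigMap actually holds.

```python
import re
from typing import Dict, Optional

# Hypothetical admin-owned mapping: project name -> regex that a developer
# namespace must fully match to create applications in that project.
PROJECT_PATTERNS: Dict[str, str] = {
    "team-payments": r"payments-.+",
    "team-search": r"search-.+",
}


def project_for_namespace(
    namespace: str, patterns: Dict[str, str] = PROJECT_PATTERNS
) -> Optional[str]:
    """Return the first project whose pattern matches the namespace the
    AppSource instance was created in, or None if no project applies."""
    for project, pattern in patterns.items():
        # fullmatch avoids accepting namespaces that merely contain the prefix.
        if re.fullmatch(pattern, namespace):
            return project
    return None
```

In this sketch a namespace such as `payments-dev` resolves to `team-payments`, while a namespace that matches no pattern resolves to `None`, which a controller could treat as a rejected request.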

D

Let's go a little bit into what a typical AppSource ConfigMap looks like. Over here on the left, you can see that the controller uses the Argo CD API to make all of these requests, whether it's creating Argo CD applications or verifying whether the application already exists. So a typical ConfigMap will include the Argo CD API address and a series of client options.

D

We've also included finalizers that determine how we want to delete Argo CD applications if you ever decide to delete your AppSource instance. The AppSource instance will also contain the necessary information on where your Argo CD application is being hosted and the path to that application.

D

D

So a question that we thought about when developing this controller is: how will admins communicate which project profiles developers have access to? It's very convenient that Kubernetes already has its own role-based access control system. We can give developers read permissions to this ConfigMap, so they can see what projects exist and what can be done within their projects, but not write access, so as to maintain the security of how applications are automatically being created.

D

D

We've already done a little bit of the setup for the sake of demonstration. We've already created the ConfigMap that the AppSource controller uses, and I made sure to display that down here. We're going to be using the same ConfigMap and AppSource manifest that I showed in a couple of earlier slides. We've also created a secret that contains the Argo CD API token for the account that's going to be making all of these requests to create applications.

D

And lastly, I also made sure to display the AppSource manifest that we'll be using, but it's the same one that we showed earlier. Installing the controller is a fairly easy process. It's actually very similar to how we install Argo CD: we can use a single install manifest that will create the deployment and any necessary roles and service accounts for the controller.

D

D

We can see on the left side, through the Argo CD UI, that an Argo CD application was created. If I want more information about whether my application was successfully created, because I don't have access to the Argo CD namespace, I can just go ahead and check out the AppSource status sub-resource. So let me just pull that up.

D

So now, deleting the AppSource instance is fairly simple. In this manifest we included a finalizer that will handle deleting the Argo CD application instance and its resources from wherever that application is being hosted. So let's go ahead and delete the AppSource instance.

D

Okay, I'm going to hop back now to the slides just to go over some of the things that we talked about. Actually, I don't think I have to reshare, I can just go right back to my slides. Specifically, like I mentioned, we have the finalizers that handle how we want to take care of our Argo CD applications if we ever choose to delete the AppSource instance. We also support the cascade deletion that is available to Argo CD users.
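
The finalizer behaviour mentioned above follows the usual Kubernetes pattern: an object marked for deletion is only removed once its finalizer list is empty, so a controller does its cleanup first and then removes its own finalizer. The sketch below shows that pattern on plain dictionaries. It is not the AppSource controller's implementation: the finalizer name, the `reconcile_deletion` function, and the `delete_application` callback are all hypothetical.

```python
from typing import Any, Callable, Dict

FINALIZER = "example.appsource/cleanup"  # hypothetical finalizer name


def reconcile_deletion(
    appsource: Dict[str, Any],
    delete_application: Callable[[str, bool], None],
    cascade: bool = True,
) -> bool:
    """Handle one reconcile pass for an AppSource-like object.

    Returns True when no finalizers remain on an object that is being
    deleted, meaning the API server is free to remove it."""
    meta = appsource["metadata"]
    if "deletionTimestamp" not in meta:
        return False  # the object is not being deleted; nothing to clean up
    finalizers = meta.get("finalizers", [])
    if FINALIZER in finalizers:
        # Clean up the downstream application first, then release the object.
        delete_application(meta["name"], cascade)
        finalizers.remove(FINALIZER)
    return not finalizers
```

The `cascade` flag stands in for the choice between deleting only the application record and also deleting the resources it manages.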

D

We can now allow them to automatically create Argo CD applications from within their own namespace and even from within their own clusters. Because we're using the Argo CD API to communicate with whatever Argo CD instance you're running, you can have developers that operate from within their own clusters and can self-serve Argo CD applications.

D

A

Yeah, so this was in response to providing just a simpler way of self-servicing application creation. You can imagine that, without this controller, the expectation is that your admin creates projects for each namespace of their developer teams, and then they sandbox what can be done for them. But it assumes that you also want to give the developers Argo CD API server access and be able to...

A

CRDs, which ultimately create applications, and then the admins are able to control what they can do by pre-configuring these projects and using that ConfigMap to map their AppSources to the pre-configured projects. So that's the type of use case that this is trying to address.

D

And I think this is just for the future, because I know this is being uploaded: if you'd like to see more information about the project and where it's currently hosted, the AppSource project is already a part of the argoproj-labs organization, so it can be found just by looking up argoproj-labs/appsource. I'll make sure to drop a link to that for those who might be interested in checking it out today.

A

All right, thank you, Marco. Yep, all right, so we've reached the end of our regularly scheduled topics, and I forgot to check if there are any other topics submitted by the community. Let me check really quick. Does anyone have any topics they want to bring up right now?

A

Okay, it looks like that was the end of the agenda, so that will conclude this meeting for today. Again, we have the Workflows community meeting that happens the second, or sorry, the third Wednesday of every month, so in another two weeks. Aside from that, we'll see you either at our weekly contributors meeting that happens every Thursday or at the next community meeting, which is on a monthly basis.