►

From YouTube: Ceph Month 2021: CephFS update

Description

Presented by: Patrick Donnelly

Full schedule: https://pad.ceph.com/p/ceph-month-june-2021

A

I've

also

included

in

the

slides,

which

will

be

distributed

later.

The

blog

post

I

wrote

up,

which

also

includes

a

lot

of

these

discussion

topics

somewhat

in

more

detail.

Perhaps

so,

the

the

project

has

been

following

five

themes:

you

revolving

around

the

features

we've

been

developing

for

the

last

few

releases

and

that

and

we'll

begin

with

usability.

A

A

We

have

this

fs

volume

interface

that

allows

you

to

create

a

file

system

quite

easily,

including

all

of

its

pools.

Following

the

best

recommendations

of

this

of

first

half

and

automatically

just

deploy

mdss

using

the

distributed

or

the

deployment

backend

for

ceph,

either

cephadm

or

rook,

and

bring

up

as

many

file

systems

as

you

need

and

then

also

remove

them

as

you

need

another

usability

feature.

We've

developed

in

that

same

vein

is

the

mds,

auto

scaler,

which

will

start

and

stop

mdss

based

off

of

the

needs

of

the

file

systems

you

have.

A

This

is

a

module,

that's

not

enabled

by

default.

You

have

to

turn

it

on,

but

once

on

it

should

create

mdss

using

the

deployment

tool,

either

adm

or

rook

in

response

to

changes

of

the

configurations

of

your

of

your

ffs

file

system.

For

example,

if

you

increase

max

mds,

the

module

will

automatically

deploy

another

mds

in

response

to

that

change.

A

This

is

communicated

to

the

mds

and

the

mds

is

able

to

communicate

with

the

sef

the

cef

manager,

to

provide

a

summary

of

statistics

for

all

the

clients

in

the

cluster

and

display

a

simple

and

curses

ui

for

the

administrator,

showing

the

various

client

sessions

in

the

cef

file

system

and

what

they're

doing

and

who

the

top

consumers

are

again.

This

is

a

tech

preview,

but

we

are

spending

time

to

improve

it

always

and

we

are

eager

for

any

feedback

that

the

community

has.

A

In

that

same

vein,

we've

also

been

working

on

cfs

shell.

To

my

knowledge,

we

haven't

had

too

much

feedback

from

the

community.

This

has

been

around

for

about

two

releases.

Now,

two

or

three

releases,

it's

a

simple

python

utility

that

mounts

ffs

and

allows

you

to

execute

some

commands

on

the

file

system

without

having

to

mount

it

using

fuse

or

the

kernel.

A

We

also

have

a

new

snap

schedule

manager

module

that

also

must

be

enabled

that

allows

you

to

schedule

snapshots

on

a

step

file

system

on

a

given

period

and

also

retain

snapshots

according

to

a

retention

schedule.

That's

set

up

that

can

allow

you

to,

for

example,

set

up

snapshots

to

be

taken

every

day

for

a

week

and

then

delete

any

snapshots

that

are

that

are

older

than

that

time

frame.

A

A

The

the

you

can

set

up

the

nfs

clusters

in

active,

active

configurations

or

set

of

and

and

in

in

the

near

future,

you'll,

be

able

to

even

set

up

aha

using

ceph8m

with

aj

proxy.

That's

a

work

that

sage,

while

is

doing

right

now,

or

you

can

also

use

rook

for

for

the

aha

component,

although

the

rook

crd

is

under

active

development

right

now,

so

not

quite

yet

the

nfs

clusters

that

are

deployed

you

can

have

them

automatically

deployed

using

the

step

orchestrator.

A

A

All

right.

I

think

we

might

have

a

question

you

have

sharing

that

with

yes,

we're

doing

an

fs

crypt

instead

of

talk

tomorrow

at

9

30

a.m.

Eastern

time,

if

you

want

to

learn

more

about

that,

the

coming

back

to

the

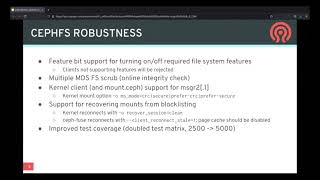

robustness,

we

have

feature

bit

support

for

turning

on

and

off

required

file

system

features.

A

So

a

more

robust

solution

is

to

selectively

enable

and

disable

the

features

that

you

want,

and

that

is

all

the

api

for

that

is

all

set

up

and

documented

this

ftox.

If

you

want

to

learn

more,

the

we've

also

stabilized

multiple

mds

file

system,

scrub

that

is

in

in

the

past

before

pacific.

You

would

have

to

bring

your

self

ffs

file

system

down

to

max

mds

equals

one

so

reduce

the

number

of

ranks

to

one

before

you

could

run

a

scrub.

A

A

If

of

a

applications,

writing

to

the

mount

tries

to

come

back,

but

the

applications

that

had

read

handles

on

files

will

continue

to

function

and

new

applications

running

on

the

mount

can

do

reads

or

writes,

set

fuse

has

a

similar

feature

or

behavior

with

the

client

reconnect,

scalable

one

configuration

and

then

I'll

see.

If

you

want

to

do

this,

you

need

you

should

disable

the

page

cache.

A

A

Moving

on

to

performance,

another

big

feature

that

we've

had

that

was

partially

available

in

octopus.

As

development

preview

was

ephemeral

pinning

this

is

now

stable

and

what

this

is

is

a

policy-based

sub-tree

pinning

pinning

subtrees

has

been

around

since

I

believe

luminous

allowing

you

to

assign

a

given

subdirectory

treat

to

a

particular

mds

rank

ephemeral

pinning

allows

you

to

set

a

policy

saying

that

you

want

a

how

you

want

a

directory

or

a

group

of

directories

to

be

pinned

but

you're

not

actually

assigning

the

pin,

pin

directory

to

a

particular

rank.

A

A

Next,

we've

also

spent

a

lot

of

time

improving

the

capability

in

cash

management

in

the

mds

for

larger

clusters.

There's

this

has

been

an

issue.

We've

been

dealing

with

for

quite

a

several

releases

now,

and

we

believe

that

the

the

current

status

ffs

is

much

better

now

and

a

lot

more

stable

than

it

used

to

be.

The

most

recent

changes

we've

made

are

improving.

The

cap

recall

defaults

for

larger

production

clusters,

and

that

is

in

response

to

some

feedback.

A

A

A

A

A

A

Moving

on

to

ecosystem,

we

also

have

spent

a

lot

of

time

improving

the

use

of

cfs

with

kubernetes

csi

environments,

where

syphifest

is

used

for

rwx

or

rox

volumes.

Pvc

pvs-

and

this

is

all

orchestrated

through

the

volumes

plugin

in

the

cef

manager,

which

provides

an

api

for

creating

and

deleting

tvs

through

the

what

we

call

this:

the

sub

volume

interface

for

pacific.

A

We

added

a

support.

We

stabilized

the

snapshots

interface

for

our

sub

volumes

and

stabilized

the

interface

within

csi,

and

we

have

been

adding

a

new

authorization.

Api

support

for

openstack

manila,

which

is

also

in

the

process

supporting

to

use

this

new

api

and

also

have

added

ephemeral,

pinning

support

for

volumes.

A

A

A

For

performance,

what

we

would

like

to

do

next

is

add:

support

for

asynchronous

rm,

dur

maker

and

potentially

also

link

and

rename.

This

will

get

us

to

a

point

where

our

scene

can

run

extremely

quickly.

Those

are

the

last

remaining

our

pcs

that

the

that

we

would

need

to

make

a

asynchronous

to

improve

that

kind

of

workload

tremendously.

A

We

also

have

asynchronous

metadata

operations,

support

and

lips

ffs.

It's

currently

under

development.

We

expect

that

should

be

merged

for

quincy,

there's,

also

some

opportunities

to

improve

the

performance

of

set

fuse.

There

have

been

some

recent

changes

to

lib

views

that

we

believe

we

could

integrate

into

our

staff

use

library

to

just

take

advantage

of

the

latest

kernel

modifications

that

help

us

achieve

better

performance

within

lips

ffs.

A

A

We

also

are

developing

the

fs

cache

support

within

the

kernel,

client,

jeff

jeff

layton's

been

working

on

this.

The

fs

cache

interface

is

currently

being

refactored

and

reworked

by

david

howells

and

the

theft

kernel

client

is

being

constantly

updated

to

to

his

latest

changes

and

we're

hopeful

that

we

can

get

something

like

that

complete

by

the

by

the

quincy

release.

A

That's

especially

true

within

the

new

volumes

plug-in,

which

needs

to

regularly

delete

sub-volumes,

and

it

would

be

much

faster

to

just

tell

the

mds.

An

entire

directory

tree

can

go

away

and

that'll.

That's

a

workload

that

we'd

like

to

support

through

a

new

recursive,

unlink,

rpc

and

probably

that'll,

be

exposed

to

the

user,

is

some

kind

of

dot

trash

directory

to

allow

applications

to

to

be

able

to

do

that

without

linking

to

lips

ffs.

A

A

A

That's

in

pacific

there's,

a

link

to

the

blog

post

for

libsev

sql

lite

in

the

slides,

libstep

ffs

sqlite

will

function

similarly

and

that

it

allows

you

to

put

a

sqlite

database

on

cephfs

without

actually

mounting

cfs

using

the

chronoclient

or

cephus.

So

can

speak

this

fs

protocol

and

and

avoid

doing

any

mounts.

A

Then

we've

also

got

the

mds

memory

target

and

this

is

another

configuration

companion

to

the

the

osd

memory

target

variable

and

this

is

to

improve

how

the

mds

utilizes

the

memory

by

monitoring

its

own

memory,

use

and

then

adjusting

the

its

own

cache

as

appropriate

in

order

to

to

meet

the

desired

memory

target.

We

have

some

code.

A

That's

already

been

worked

on

that

just

needs

picked

up

and

polish

for

frequency

to

get

this

in

and

then

first

ffs

multi-site

replication,

currently

we're

working

on

more

testing

for

the

h,

a

components

of

the

cepheus

mirror.

That's

already

present

and

adding

active

active

support.

Both

of

those

features

were

planning

to

back

port

to

pacific.

A

You

won't

need

to

wait

for

quincy

for

those

to

be

available

and

make

it

more

automatic

to

set

up

snapshots

and

sync

it

to

the

to

the

remote

cluster

to

make

that

interface

a

little

cleaner

and

some

of

the

other

things

we're

looking

at

is

some

other

sophisticated

models

for

for

actually

doing

multi-site

replication

without

any

firm

plans

of

doing

this.

For

for

quincy,

look

at

potentially

bi-directional

or

loosely

eventually

consistent

type,

synchronization

mechanisms

and

some

kind

of

simple

conflict

resolution

behavior

in

tandem

with

bi-directional.

A

B

B

Okay,

the

other.

The

other

thing

I

was

wondering

is:

whenever

there's

like

a

it

happens

very

rarely,

but

whenever

users

hit

like

a

a

crash

of

an

mds

and

then

the

journal

can't

be

replayed

and

then

they

have

to

enter

into

the

disaster

recovery

procedure.

This

is

like

extremely

scary.

I'm

wondering

if

there's

any

ideas

to

make

that

somehow

less

scary

and

maybe

even

more

automatic,

something

like.

A

A

There

are

tools

to

do

that,

of

course,

but

which

tools

to

use

is

not

always

clear.

I

think,

as

far

as

improving

the

usability

of

of

cefs,

we

could

go

a

long

way

to

be

more

clear

about

what

the

kind

of

metadata

damage

we've

discovered

and

in

places

where

we

do

said

that

there

should

be

some

suggestions

on

on

where

to

look.