►

From YouTube: 2019-JUN-27 :: Ceph Tech Talk - Intro to Ceph

Description

No description was provided for this meeting.

If this is YOUR meeting, an easy way to fix this is to add a description to your video, wherever mtngs.io found it (probably YouTube).

A

A

Why

it's

built

the

way

it

is

how

it

works,

but

are

the

core

concepts?

What

makes

it

different

from

other

systems,

focusing

mostly

on

raitis

the

underlying

storage

later,

but

also

talking

about

the

object,

block

and

file

components

that

are

built

on

top

of

it,

then

we'll

shift

gears

a

little

bit

and

talk

about

how

stuff

is

managed

and

some

of

the

user-facing

features

that

make

it

easy

to

consume.

A

And

finally,

we'll

talk

a

bit

about

the

open

source

community

and

for

ecosystem.

So

what

is

SEF?

It's

been

described

as

software-defined

storage.

As

a

unified

storage

system,

scalable

distributed

storage,

we've

branded

stuff

I

was

the

future

of

storage.

It's

on

a

lot

of

our

t-shirts

and

people

have

also

described

stuff

as

the

Linux

of

storage,

but

all

these

frames

braces

mean

slightly

different

things:

different

people

so

try

to

get

to

the

crux

of

it.

A

I

think

the

first

thing

to

recognize

is

that

stuff

is

open

source

software

emphasis

on

a

software

that

can

run

on

any

commodity

hardware,

so

commodity

servers

from

any

vendor

use

this

typical

standard,

IP

based

networks,

and

we

can

use

all

the

usual

standard

types

of

storage

devices,

hard

disks,

SSDs

in

muse,

Envy,

gems

and

so

on.

And

finally,

it's

it's

important

to

recognize

that

def

is

a

unified

system

in

that

you

can

serve

object,

block

and

file

workloads

from

the

same

cluster

from

the

same

hardware,

using

the

same

software

stack.

A

So

Steph

is

free

and

open

source

software.

That

means

you

have

the

freedom

to

download

and

use

f3.

In

that

sense,

you

also

have

access

to

the

source

code.

It's

open

source,

so

you

can

introspect

look

at

how

the

system

works.

You

can

modify

it.

You

can

share

changes

as

long

as

you

conform

to

the

open

source

software

license.

This

gives

you

freedom

from

vendor

lock-in.

A

You

can

choose

from

many

different

companies

and

organizations

that

are

building

products

and

services

based

on

stuff,

and,

if

you

don't

like

them,

you

can

switch

somebody

else

without

having

to

you

know.

Throughout

your

software

stack

and

by

virtue

of

the

community,

you

also

have

the

freedom

to

innovate

in

the

space

by

integrating

stuff

with

other

software

systems

and

adapting

it

to

your

particular

use

cases

and

workloads.

A

Sep

is

designed

and

built

to

be

reliable.

Our

goal

is

to

create

a

reliable

storage

service

out

of

inherently

unreliable

components,

though

the

architecture

is

designed

with

no

single

points

of

failure.

It

provides

data

durability

via

either

replication

or

erasure

coding

of

your

data,

and

it's

designed

to

be

continuously

available

so

that

you

have

no

interruption

of

service

from

rolling

upgrades

expansion

of

your

plaster

of

your

cluster

contraction

failures

and

so

on,

and

it's

also

reliable

in

the

sense

that

we

as

a

rule,

favor

consistency

of

the

system

over

correct

and

correctness

over

performance.

A

Finally,

stuff

is

scalable.

We

describe

it

as

an

elastic

storage

infrastructure.

That

means

that

your

storage

cluster

may

grow

or

shrink

over

time

as

the

size

of

your

data

sets

or

your

workloads

or

your

overall

requirements.

The

organization

change

over

time

and

you

can

add

and

remove

Hardware

from

the

system

while

it's

online

and

then

load

both

to

deal

with

failure

and

hardware

refresh

in

order

to

also

to

expand

capacity

or

deploy

a

new

different

performance

classes

or

whatever

it

is,

and

we

can

scale

a

number

of

different

ways.

A

So

you

can

scale

up

by

simply

using

faster,

bigger

servers

and

storage

devices.

You

can

scale

out

by

adding

additional

nodes

or

racks

of

nodes

and

more

storage

devices

to

get

more

capacity

and

performance

in

the

system,

and

you

can

also

federate

multiple

clusters

across

multiple

sites,

using

a

set

of

asynchronous

replication

features

for

disaster

recovery

type,

these

cases

and

to

provide

availability

in

the

event

of

a

tie,

an

entire

data

center

site

image.

A

So

our

GW,

the

rate

of

Skateway,

provides

an

s3

compatible

with

compatible

object,

storage,

API

buckets

using

a

restful,

get

input,

type

interface,

RBD.

The

rate

of

block

device

provides

a

virtual

block

device

interface.

This

is

used

very

widely

in

public

and

private

cloud

deployments

and

platforms,

so

virtual

disks

usually

backing

virtual

machines

and

stuff

if

s

is

a

distributed

network

POSIX

file

system

that

allows

lots

of

clients

to

have

a

shared

access,

shared

access

to

a

single

file

system

in

space,

with

your

usual

POSIX

like

semantics.

A

So

in

this

talk,

I'm

going

to

do

sort

of

a

deep

dive

into

how

this

architecture

is

put

together

and

how

it

works,

starting

with

raitis

this

underlying

layer

and

then

moving

on

to

Rios,

Gateway,

brightest

block

device

and

stuff

file

system.

But

let's

start

with

Freitas

raitis

stands

for

reliable

autonomic

distributed,

object

store,

and

this

is

the

common

storage

later

that

underpins

all

the

other

services

and

stuff,

and

it

provides

this

low-level

data

object,

storage

service,

that's

reliable

and

highly

available.

A

That's

scalable

both

when

you're

in

cluster

is

initially

deployed

on

day,

one

these

sort

of

arbitrarily

large,

and

also

on

one

two

three

years

down

the

line

when

you

are

refreshing

hardware

and

expanding

and

deploying

more

storage

and

so

on.

It's

also

scalable

sort

of

after

the

fact

I

mean

ray.

Does

this

job

is

to

manage

all

the

replication

and

erasure

coding

of

the

data

in

the

system

figure

out

where

that

data

should

be

stored

on

what

nodes

and

what

storage

devices

rebalancing

scrubbing

for

integrity

checks?

A

Repair

all

that

stuff

is

handled

by

this

underlying

rate

of

storage

layer.

It's

designed

to

provide

a

strong

level

of

consistency

so

for

those

familiar

with

the

cap,

theorem

Rados

is

a

CP

system.

Not

an

AP

system

and

its

purpose

within

a

larger

stuff

architecture

is

to

simplify

the

design

and

implementation

of

the

higher

layers,

so

that

the

file

block

and

object

components

can

focus

on

the

complexities

and

intricacies

around,

providing

that

particular

type

of

API

we're

all

Rados

can

handle

the

safety

and

availability

of

the

data,

so

Seth

Andrade

us

as

a

software

system.

A

So

it's

comprised

of

a

number

of

different

storage

demons.

The

first

one

is

the

monitor

step.

Monitor

these

monitors

are

a

central

authority

for

authentication

using

for

authentication

data

placement

and

policy

in

the

system,

they're

sort

of

the

central

coordination

point

that

manages

all

the

other

demons

that

participate

in

the

system.

A

They

protect

critical

cluster

state

with

an

algorithm

called

Paxos

and

they're,

typically

somewhere

between

three

and

seven

of

these

per

cluster,

usually

spread

across

different

hosts

or

different

racks,

so

that

you

have

reliability

and

availability,

there's

also

a

set

manager

demon

that

has

two

roles.

The

first

is

to

aggregate

real-time

metrics

about

all

the

demons

that

are

participating

the

system,

things

like

what's

the

current

level

of

throughput.

A

What's

the

current

disk

utilization,

what

are

the

various

internal

metrics

that

all

these

other

suffer

components

are

reporting

aggregate

all

that

so

yup

a

real-time

view

of

what's

happening

and

stuff,

and

the

second

job

is

to

provide

a

host

for

pluggable

management

functions.

Things

like

the

the

dashboard

for

user

management

or

automated

background

tasks

that

are

doing

optimization

and

other

automated

functions.

These

can

all

be

implemented

as

Python

modules

and

are

hosted

inside

the

set

manager.

A

Daemon,

there's

typically

only

one

or

always

actually

only

one

active

manager,

demon

or

cluster,

but

you

usually

will

have

a

number

of

standby

so

that

if

the

first

one

fails

or

the

host

that

it's

running

on

fails,

another

one

can

take

over.

And

finally,

we

have

the

stuff

OSD,

the

object,

storage,

demons.

These

are

the

workhorses

of

a

SEF

cluster

and

their

job

is

to

store

data

on

a

directly

attached,

hard

disk

or

SSD,

and

a

service

IO

requests

to

that

data.

But

these

OS.

A

A

But

all

of

these

design

methods

are

limiting

and

overall

sort

of

limit

the

design

of

the

system

and

limit

the

overall

performance

and

consistency

and

behavior.

So

instead,

I'm

step

is

designed

around

what

we

call

a

client

cluster

architecture,

which

basically

means

that

there

is

an

intelligent,

client

library,

that's

sitting

at

the

application

side.

That

understands

that.

It's

not

talking

to

a

single

server,

but

is

in

fact

talking

to

a

cluster

of

cooperating

servers

and

could

do

intelligent

things

like

smart

requests

for

adding

making

sure

that

IO

requests

are

routed

to

the

correct

node.

A

You

know

manage

the

fact

that

data

might

be

moving

around

in

the

background

and

provide

sort

of

a

seamless

experiment,

experience

for

the

application

and,

at

the

end

of

the

day,

we're

providing

the

same

application

API

as

far

as

the

application

is

concerned.

It's

writing

a

data

object

into

some

logical

construct

and

it's

this

library,

that's

handling

sort

of

the

internal

details

of

where

exactly

that

request

should

be

routed.

A

So

one

of

the

first

questions

when

building

a

system

like

this

is

where,

should

you

store

your

data?

And

how

do

you

know

where

you

put

it?

So

if

you

imagine

an

application

that

wants

to

read

or

write

a

data

object,

it

needs

to

know

where.

To

put

it,

though,

that's

sort

of

a

naive

approach

would

be

to

have

a

metadata

server

that

has

a

big

table

of

all

the

data

objects

and

what

servers?

What

notes

are

stored

on

em?

A

The

problem

with

this

is

that

it

involves

a

separate

lookup

step

if

you're

trying

to

read

an

object,

you

have

to

find

out

where

the

object

is

first

and

then

go

and

contact

that

particular

node.

That's

slow

and

it's

also

hard

to

scale

that

metadata

service

to

trillions

of

objects

when

you're,

storing

many

many

petabytes

of

data,

so

at

many

other

distributed

systems

and

stuff

as

well

to

do

is

something

called

calculated

placement.

A

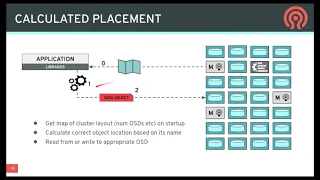

So

the

idea

here

is

that

when

the

library

starts

up,

you

get

this

initial

map

I'm

a

concise

description

of

the

structure

of

the

cluster.

What

servers

exist

and

how

data

is

supposed

to

be

laid

out

across

them

and

then,

whenever

you

want

to

read

or

write

a

particular

data

object,

you

do

some

calculation,

that's

a

function

of

the

state

of

the

cluster

and

the

name

of

the

object

and

that

spits

out

the

location

in

the

system

where

that

data

should

be

stored.

A

And

then

you

can

contact

the

appropriate

node

or

demon

in

the

system

and

then,

if

some

time

goes

by,

you

know,

maybe

the

cluster

gets

expanded

and

it

fails

and

data

gets

moved

around.

The

application

can

get

an

updated

version

of

the

topology

of

the

cluster

and

so

later

on,

when

it

needs

to

go,

read

back

that

data

that

a

previously

wrote

it

can

repeat

that

calculation,

it

might

get

a

different

answer,

this

time

and

it'll

go

and

contact

the

appropriate

node

where

that

data

should

be

stored.

A

This

avoids

the

complexity

of

having

that

overall

lookup

table

and

tends

to

scale

very

well

when

your

clusters

are

very

very

large.

But

this

brings

up

the

question

of

what

these

data

objects

actually

are.

So

this

is

the

fundamental

underlying

unit

of

storage

in

Rados

is

an

object,

so

each

object

has

a

name:

/

unique

set

of

characters,

usually

tense

characters

as

some

semantic

meaning.

Presumably

each

object

can

have

some

attributes

associated

with

them.

These

are

sort

of

analogous

to

extended

attributes

in

a

file

system.

A

I

mean

a

or

may

not

need

to

use

them,

but

you

can

have

some

sort

of

lightweight

metadata

associated

with

that

object,

but

the

bulk

of

the

data

that

the

object

is

really

in

the

byte

data

or

key

value

data.

So

the

first

type

of

object

looks

kind

of

like

a

file.

You

can

store

a

bunch

of

bytes

in

it.

Typically,

objects

can

be.

A

All

these

objects

exist

within

sort

of

a

logical

grouping

called

a

pool

so

pools

usually

map

to

some

sort

of

use

case

or

deployment.

So

you

might

have

a

pool

that

contains

all

the

virtual

machine

images

for

your

cloud

hosting

of

the

structure.

You

might

have

another

pool

that

contains

all

the

data

for

a

file

system

that

sort

of

thing,

so

it's

sort

of

a

high

level

large

grouping

of

objects

in

the

system.

A

So

the

question

is:

how

do

we

map?

How

do

we

decide

where

these

objects

should

be

stored

across

the

you

know,

hundreds

thousands

or

tens

of

thousands

of

OSDs

in

the

cluster?

So

if

you

imagine

you're,

storing

all

kinds

of

different

data

and

stuff

right,

it

might

be

disk

images

files,

video

files,

pictures,

let's

assume

for

for

as

an

example

that

we're

storing

a

big

impact

video.

So

the

first

thing

we

would

do

is

break

that

large

video.

A

Maybe

it's

several

terabytes

into

lots

and

lots

of

greatest

objects,

so

a

long

sequence

for

mega

objects

say

and

they're

all

going

to

add

names

that

are,

you

know,

probably

end

with

a

number

or

something

sort

of

this

sequence

of

objects.

And

then,

of

course,

all

of

these

objects

exist

within

a

pool,

so

we're

dumping

all

this

video

data

into

a

single

rate

of

spool,

and

so

when

you

do

this

with

lots

and

lots

of

videos,

you

end

up

with

a

pool

that

has

bazillions

of

objects,

millions,

billions

trillions.

A

A

4096

in

this

particular

example,

different

placement

groups

that

are

comprising

this

pool

so

that

each

placement

group

has

you

know

some

fraction

of

the

total

objects

of

the

pool

and

and

those

objects

map

into

placing

groups

in

a

deterministic

way

and

finally,

for

each

of

those

placement

groups

used

to

these

sort

of

fragments

of

your

overall

data

set.

We

have

to

store

it

on

multiple

devices

for

redundancy.

So

in

a

3,

X

replication

type

scenario,

then

each

of

these

plates

and

groups

would

be

but

a

Steudle

randomly

assigned

to

three

different

toasties

in

the

system.

A

But

these

are

small.

We

have

a

lot

more

placement

groups

than

we

have

OSD.

So

if

you

sort

of

look

at

this

from

the

other

perspective,

if

you

consider

one

single

storage

device,

it's

actually

going

to

store,

you

know

tens

or

maybe

around

a

hundred

different

place

in

groups

that

might

be

all

from

the

same

pool

or

might

be

different

pools

or

whatever.

So

each

OS

D

growing

lots

of

different

chucks

chunks

of

the

overall

data

set

over

all

pools,

placing

groups

that

are

stored

within

the

sub

cluster.

A

So

you

might

be

asking

why

do

we

have

this

sort

of

intermediate

stage?

Why

don't

we

just

sort

of

assign

objects

to

storage

devices

and

there

a

couple

of

reasons

for

this,

but

it's

helpful

to

look

at

what

the

alternative

design

options

might

be

in

this

case,

so

in

sort

of

the

simplest

approach

you

could

simply

choose

to

replicate

disks

in

your

system

right.

A

You

could

take

all

of

your

disks

there

doing

three

rare

replications,

so

you

have

a

bunch

of

sets

of

three

disks

and

these

disks

are

simply

all

replicating

the

same:

identical

identical

content,

so

sort

of

raid

zero

or

raid

one

type

configuration

the

first

limitation.

You

notice

is

that,

in

order

to

do

this,

all

these

disks

have

to

be

exactly

the

same

size,

or

at

least

you

can

only

use

sort

of

the

smallest

size

of

three

disks.

A

That's

a

bit

of

a

limitation,

but

maybe

you

can

get

past

that

if

you

instead

replicate

placing

groups,

for

example,

though,

things

are

a

bit

better

because

you

have

each

individual

placement

group,

you

can

sort

of

randomly

choose

which

devices

it's

assigned

to

and

they're

sort

of

spread

around.

You

can

have

different

size

devices,

because

the

smaller

devices

might

just

have

fewer

placement

groups

and

the

larger

devices,

but

it's

a

bit

more

flexible

in

that

sense.

A

And

finally,

you

can

imagine

taking

this

to

an

extreme

where

you

take

every

single

object

in

the

system

and

you

sort

of

randomly

map

that

to

different

devices,

and

you

end

up

with

a

situation

where

sort

of

every

set

of

posties

or

disks

in

the

system

is

sort

of

sharing

replicas

data

with

every

other

disk.

So

you

have

a

sort

of

a

tightly

fully

connected

mesh

of

storage

devices.

A

So

let's

look

at

what

happens

when

a

disk

fails.

So

in

the

disk

replication

scenario,

if

the

disk

fails.

The

first

thing

you

notice

is

that

you

have

to

have

a

spare

device,

that's

empty

and

totally

unused

in

order

to

do

a

repair

and

then

also

that

spare

has

to

be

sort

of

the

appropriate

size

so

that

you

can

make

a

new

copy

of

the

failed

data

onto

new

disks

to

compensate

for

the

fact

that

you

lost

one.

So

this

is

a

couple

of

problems.

First,

you

have

to

have

these

spares

around.

A

They

have

to

give

you

the

right

sizes

and

before

the

failure,

the

other

idle

disk-

that's

not

being

used,

you're

essentially

wasting

that

resource,

and

the

second

thing

is

that

the

recovery

process

is

bottlenecked

by

the

throughput

of

a

single

disk.

So

you

can

only

recover

as

quickly

as

this

thing

as

this

replacement

disk

can

write.

A

Its

data

or

the

source

can

read

its

data

and,

as

we

know,

hard

disks

are

getting

bigger

faster

than

they're

getting

faster,

which

means

that

the

recovery

time

for

a

single

disk

is

getting

longer

and

longer,

which

means

that

you

have

a

wider

Wender

window

of

vulnerability

during

which

sort

of

the

durability

and

replication

count.

That

data

is

somewhat

compromised.

So

that

can

be

problematic

in

the

case

of

placement

groups.

A

It's

a

little

bit

better

because

you

notice

that

when

we

lose

a

disk,

we

have

copies

of

the

loss,

placement

groups

on

lots

of

different

devices,

and

we

can

choose

new

location

for

those

placement

groups

that

are

independent

and

also

pseudo-random,

so

that

the

you

know

this

cream-colored

one

can

replicate

to

one

node

and

the

blue.

One

can

replicate

your

different

node,

and

so

you

suddenly

have

a

parallel

recovery

process,

so

both

of

these

pieces

are

recovering

in

parallel.

It

will

happen

twice

as

fast

in

the

extreme

you

can

imagine.

A

If

there

were

a

hundred

placement

groups

on

that

failed

disks,

they

could

go

a

hundred

different

disks

recovering

in

parallel,

going

taking

one

one-hundredth

at

the

time

and

also

you'll

notice

that

we

didn't

need

a

spare,

as

we

can

simply

move

these

placement

groups

to

do

the

remaining

sort

of

empty

space

on

the

surviving

nodes

in

the

cluster.

So

that

means

that

all

of

our

hardware

is

being

utilized

at

all

times.

A

It

should

work

the

larger

problem

with

that

scenario

or

what

that

strategy

comes

when

you

think

about

what

happens

when

you

have

a

concurrent

failure.

So

if

you

imagine

that

you're

very

unlucky

and

not

just

one

device

filled

the

three

device

devices

failed

at

the

same

time,

what

happens

so

in

the

original

scenario?

A

Where

you

have

these

sort

of

replica

sets

of

three

it's

most

likely

that

if

three

devices

failed

they're,

not

all

gonna,

be

from

the

same

replica

set,

they're

gonna

be

spread

across

different

replica

sets,

and

so

you're

never

gonna

lose

or

very

rarely

are

you

going

to

lose

all

three

replicas

of

the

same

data

and

actually

I'm

data

loss?

Usually

it's

going

to

be

spread

across

different

wrote,

the

cassettes

and

you'll

be

able

to

recover

so

very

few.

Triple

failures

caused

a

loss.

A

On

the

other

hand,

though,

if

you

think

about

the

scenario

where

we

were

replicating

individual

objects,

because

we

have

a

gazillion

different

objects

and

they're

all

randomly

placed

pretty

much.

Every

set

of

three

devices

within

this

cluster

has

some

data

that

is

replicated

on

those

just

on

those

three

nodes,

which

means

that

there's

pretty

much

always

going

to

be

some

data

loss.

A

It

might

not

be

very

much,

but

there

you're

always

going

to

lose

some

data

and

that

can

be

particularly

problematic

when

sort

of

the

integrity

of

an

overall

data

set

depends

on

having

all

the

data

I'm

not

sort

of

having

some

random

subset

of

it

disappearing

and

hoping

that

the

rest

of

it

will

still

hang

together.

So

that's

very

concerning

and

then,

if

you

look

at

placement

groups

and

then

it's

sort

of

somewhere

in

between

right,

so

some

triple

failures.

A

But

it

turns

out

that

the

sort

of

placement

group

strategy

is

a

balance

of

these

competing

extremes.

So

in

the

academic

literature,

this

was

described

as

D

clustered

replica

placement

and

it's

this

basic

trade-off.

So

if

you

have

more

clusters

more

placement

groups,

then

you

have

faster

recovery

and

a

more

even

data

distribution.

And

if

you

have

fewer

clusters,

if

you

have

fewer

placement

groups,

then

you

have

a

lower

risk

of

concurrent

failures,

leading

to

data

loss

event

and

having

using

the

strategy

with

placement.

A

Groups

is

a

happy

medium

because

you

can

avoid

the

spare

devices

and

you

can

adjust

by

adjusting

the

number

of

placement

groups.

You

can

sort

of

choose

what,

where

you

want

to

be

on

that

on

that

spectrum

in

either

extreme

as

a

the

perfect

world.

But

you

can

sort

of

have

this

balance

and

durability

in

the

case

of

conquering

failures

and

the

recovery

time

that

you

want

to

tolerate,

and

when

you

do

that,

then

sort

of

having

a

complete

strategy

to

keep

your

data

safe

is

really

then

around.

A

Avoiding

those

concurrent

failures

in

the

first

place

or

ensuring

that

when

concurrent

failures

do

happen,

they

don't

lead

to

data

loss

and

the

way

to

do

this

is

to

separate

your

replicas

of

your

data

across

its

failure

domains.

And

so,

for

example,

you

might

have

a

rack

cluster

that

comprised

of

hosts

organized

into

racks,

racks

and

rows

rows,

no

data,

centers

and

so

forth.

A

By

having

that

infrastructure

aligns

to

sort

of

the

physical

placement

of

those

devices

in

space,

then

you

try

to

correlate

those

failures

with

the

failure

domain

and

minimize

the

risk

that

you'll

have

just

simultaneously

failing

that

are

in

different

racks.

That

might

be

sharing

the

same

and

so

the

challenge.

Then

the

real

question

is

how

to

question

you

this.

How

do

we

have

this

like

magic

policy

that

places

all

these

bazillions

and

placed

in

groups

across

devices

that

respects

this

buyer,

to

have

replicas,

separated

across

failure,

domains

and

so

on?

A

Do

all

the

things

you'd

want

to

do

in

a

real

store

system,

and

then

the

answer

is

with

an

algorithm

that

we

call

crush

so

crush.

Is

a

pseudo-random

placement

algorithm,

it's

repeatable,

deterministic

calculation,

a

function

of

the

state

of

the

cluster

and

the

name

of

the

object

that

spits

out

where

the

data

should

be

stored.

So

the

inputs

are

the

topology

of

the

system

so

that

hierarchy,

that

I

was

talking

about

how

osts

are

organized

under

hosts

and

racks

and

rows,

and

so

on.

A

The

pool

parameters

like

the

replication

Factory

and

are

my

placement

policy

and

then

the

identifier

for

the

placement

group

that

I'm

measuring

in

a

store-

and

you

put

all

that

into

crash.

That's

some

calculation

and

it's

it's

out

a

number

as

essentially

not

a

number,

but

an

order

list

of

which

OS

DS

that

they

sent

group

should

be

stored

on

and

then

that's

where.

That's,

where

you

going

to

put

your

data,

and

so

as

part

of

this,

these

pool

parameters

crush,

allows

you

to

write,

rule-based

policies

that

describe

how

those

replicas

should

be

placed.

A

So

you

can

do

things

like

say

you

know:

I

want

three

relic

dozen

different

wrecks.

Maybe

I

only

want

to

use

SSD

devices,

that's

one

of

the

inputs

to

the

function

and

spits

out

which

devices

to

use

at

the

end.

You

can

have

something

more

complicated

like

if

you're,

using

erasure

coded

scheme,

that's

six

plus

two,

so

you

have

eight

sort

of

shards

of

your

data.

Maybe

I

want

to

have

two

of

those

shards

per

rack

spread

across

four

racks,

but

of

those

two

shards

that

are

within

a

particular

rack.

A

I

want

those

to

be

separated

across

different

hosts

and

I

only

want

to

use

hard

disks.

Something

like

that.

It's

also

possible

one

of

the

key

properties

of

crush

is

that

it

generates

what

we

call

a

stable

mapping.

So

that

means

that

if

you

have

a

particular

state

of

the

cluster

with

some

set

of

devices

and

there's

some

topology

change

like

a

node

is

added

or

a

device

fails

or

something

like

that.

A

Then

we

want

the

amount

of

data

that

has

to

move

in

order

to

rebalance

the

distribution

to

be

proportional

to

the

size

of

the

change

made.

So,

for

example,

if

I

have

a

hundred

nodes

and

one

node

fails,

then

roughly

one

percent

of

the

data

is

going

to

move.

So

when

I

repeat

my

crush

calculation

with

all

the

existing

placement

groups

and

find

out

where

they

should

be

stored.

A

Now,

given

the

new

state

of

the

system

about

1%

of

those

placement

groups

will

be

mapped

to

different

OSTs

and

will

requires

some

sort

of

data

movement,

data

movement,

and

so

that's

a

very

important

property

with

storage

in

particular,

because

moving

data

around

is

very

expensive

and

finally

crush

supports

bearing

device

sizes.

So

every

device

in

this

hierarchy

has

a

weight

and

that

weight

determines

the

sort

of

proportional

amount

of

data

that

will

be

stored

there.

A

So

that's

Crush,

that's

sort

of

the

magic

that

that

figures

out

where

all

the

data

in

systems

could

go,

and

everybody

can

repeat

this

calculation

and

figure

out

out

where

to

read

her

to

write

data.

The

challenge,

then,

is

what

should

raid

us

then

do

once

it

knows

where

it's

where

the

data

should

go,

how

does

it

actually

store

it

and

two

strategies?

So

all

the

objects

that

are

stored

in

a

pool

have

to

be

durable.

We

have

to

make

sure

they're

safe.

A

Those

pools

are

broken

up

in

the

placement

groups,

so

each

individual

placement

group-

you

know

some

subset-

that

the

overall

data

has

to

be

durable

in

some

way

and

we

have

two

strategies

for

doing

that.

I'm.

In

the

case

of

replication,

we

simply

stamp

out

copies

of

placing

group.

So

if

we

imagine

this

PG

as

two

different

objects

in

it,

we

just

have

three

different:

no

SDS.

We

store

a

copy

of

this

placing

group

on

each

of

those

those

these

and

each

OSD.

A

A

A

So

if

I

want

to

go

from

three

role

because

to

five

replicas

I

can

just

you

know,

flip

a

switch

in

the

pool

and

rate

us

will

go

off

and

start

creating

new

copies

of

these

keys

and

finding

new

places

to

store

them,

and

that's

all

fine,

it's

really

quite

straightforward.

Our

researcher

coding

is

a

different

reliability

strategy

and

it

works

very

differently

in

that,

instead

of

having

identical

copies

of

placement

groups.

Instead,

we

have

different

shards

different

slices

if

you

will

of

the

same

placement

group.

A

So

if

the

placement

group

logically

contains

a

number

of

objects

in

this

example,

which

is

a

four

plus

two

scheme,

we

would

have

four

shards

and

half

of

the

data.

That's

striped

over

those

four

shards

in

a

way,

and

then

we

would

have

two

additional

shards

that

have

some

sort

of

parity

and

redundancy

information.

So

this

is

really

what

raid

is

doing.

A

A

richer

coding

is

sort

of

a

generalization,

a

more

flexible

version

of

what

raid

does,

and

so

we

have

these

additional

I'm

components

that

provide

some

redundancy

so

that,

if

I

lose

either

one

or

two

of

these

different

shards

I

can

always

read

the

read

the

surviving

pieces.

Do

some

calculation

and

rebuild

the

data

and

you'll

notice

that

eraser

coding

is

much

more

storage

efficient.

So

these

first

four

shards

have

a

complete

copy

of

the

original

data

and

then

I

have

two

additional

shards.

A

So

I

have

sort

of

a

50

percent

storage

overhead

to

provide

a

level

of

redundancy

that

allows

me

to

lose

two

different

devices

and

still

have

a

full

copy

of

my

data

or

be

able

to

rebuild

my

data.

You'll

notice

that

in

the

3x

replication

case,

I

can

also

only

survive.

Two

node

failures,

there's

two

copies

and

still

others

arriving

a

copy,

but

the

overall

storage

overhead

is

3x.

So

this

is

one

copy

of

the

data

and

I

have

sort

of

a

200%

overhead

versus

a

50%

overhead,

so

wrist

recording

is

much

more

space

efficient.

A

Unfortunately,

it's

less

efficient

when

you're

doing

recovery,

because,

as

I

mentioned

with

replication,

you

can

just

read

any

surviving

copy

and

then

write

it

again

and

that's

pretty

straightforward

in

a

racial

coding

case,

if

I

lose

one

of

these

shards

I

have

to

read

all

of

the

surviving

shards

and

do

some

calculation

in

order

to

generate

regenerate

that

one

additional

shards.

So

it's

significantly

more

expensive

in

terms

of

that

work.

Bandwidth,

then

storage

IO,

but

it

works

well,

particularly

for

datasets,

where

you

have

large

objects

that

aren't

changing

very

often

and

then

radius.

A

Of

course,

support

allows

you

to

store

lots

of

different

pools

in

your

cluster,

and

so

you

can

have

multiple

specialized

pools

living

within

the

safe

cluster

that

have

different

storage

policy,

so

you

might

have

a

replicated

pool.

You

might

have

an

eraser

coated,

pools,

some

of

them

using

hard

disks

and

be

messy

using

SSDs

and

so

on,

based

on

those

those

quests

crushed

policies.

A

Now

by

default

and

in

most

cases

all

of

these

pools

in

the

system

X

by

will

normally

just

share

devices,

so

each

pool

is

being

broken

up

into

placement

groups

and

then

they're.

All

things

that

are

randomly

spread

across

the

u.s.

DS

in

the

system,

unless

you

are

specifically

specifying

a

policy

that

specifies

SSDs

or

her

gist's

or

something

like

that.

But

this

sort

of

mapping

between

the

logical

pools

and

the

physical

storage

devices

means

that

you

have

elastic

and

scalable

provisioning.

A

So

you

can

a

pool,

can

sort

of

contain

either

a

little

bit

of

data

or

effectively

infinite

amount

of

data.

As

long

as

you

can

provision

of

those

DS.

In

the

background

to

keep

up

with

your

storage

demand

as

you

store

data,

then

you

can

keep

expanding

the

system

and

you

won't

run

out

of

space.

You

want

to

determine

specify

what

the

size

of

a

pool

is

up

front

or

anything

like

that.

I'm,

it's

totally

virtualized

and

flexible.

A

This

approach

also

gives

you

sort

of

the

ability

to

have

uniform

management

of

devices,

though

I

just

sort

of

deal

with

the

deploying

the

Ceph

software

on

new

hardware

nodes

and

making

sure

that

they're

consuming

storage,

I,

throw

them

into

the

pool

and

then

stuff

and

crush

will

handle

remapping

data

onto

them

and

consuming

them.

So

I

have

sort

of

a

common

workflow

for

managing

the

hardware

resources.

Regardless

of

what

is

consuming

that

storage,

it

might

be

file,

storage,

the

FS

or

objects,

or

something

else.

A

Those

are

all

users

of

Rados

and

ratos

is

just

providing

that

storage

via

these,

these

logical

pools.

So

another

way

to

think

about

this

is

consider

that

Redis

is

really

virtualizing

storage

right.

We

have

these

virtualized

pool

storage,

abstractions

that

are

sort

of

variably

sized

and

have

some

have

some

of

policy

around

like

what

performance

you

want

out

of

it,

and

but

the

internal

redundancy

scheme

is

but

from

users

perspective,

they're,

just

a

bucket

full

of

objects

right

and

then

ratos.

A

Some

crush

do

some

magic

to

make

sure

that

these

things

get

replicated

in

a

richer

coated

and

distributed,

and

on

the

back

end,

you

have

all

these

different

underlying

storage

devices

and

software

demons

that

are

actually

making

this

all

work.

But

as

far

as

somebody

who's

consuming

the

storage,

they

don't

really

know

or

care,

though,

and

that

that's

an

turns

out

to

be

a

very,

very

powerful

thing,

I'm

in

a

tick

particular

because

it

means

that

radius

can

be

used

as

a

platform

or

the

higher

level

services

that

are

built

on

top

of

it.

A

So

radius

provides

this

highly

available,

highly

durable

storage

service,

and

then,

on

top

of

that

we

can

build

object,

service,

block

service

and

file

service,

so

we're

gonna,

move

on

and

talk

a

bit

about,

radius

gateway,

the

component

that

provides

object,

storage

services

in

in

the

stuff,

so

rgw

stands

for

the

rios

gateway

and,

as

you

might

imagine,

it's

a

gateway

that

provides

s3

and

Swift

api

compatible

object.

Storage,

though

this

is

an

API,

that's

based

on

rest,

it's

usually

tunneled

over

HTTP

and

provides

sort

of

a

high-level

optic

storage

service.

A

It's

the

same

type

of

thing:

that's

often

combined

with

a

load

balancer

and

actually

exposed

to

the

public

Internet.

So

much

like

Amazon

s3

service.

You

can

have

an

encrypted

connection

to

these

gateways

and

you

can

store

and

retrieve

objects

so

that

the

data

model,

our

job,

provides

a

little

bit

different

than

what

ratos

does,

though

I'm

the

s3

API

is

built

around

the

idea

of

having

users

and

buckets

collections

of

objects,

and

then

objects

are

usually

large.

A

--Is--

blobs

of

data,

so

there's

a

whole

model

around

how

that

what

the

structure

the

data

is

and

how

the

permissions

work

and

how

users

are

allowed

to

access

what

objects

and

so

on

based

around

Ackles.

All

of

that

is

implemented

and

enforced

by

raitis

gateway

and

in

fact,

what

it's

doing

on

the

backend

is

mapping

that

into

some

internal

storage.

That's

dumping

into

ratos.

So

one

important

thing

to

recognize

is

that

the

objects

that

we're

talking

about

with

our

GW

object,

storage

or

s3

objects.

That's

not

the

same

thing

as

the

radio

subjects.

A

I

was

talking

about

a

few

moments

ago

that

are

stored

in

pools

and

ratos.

Burritos

objects

are

small,

they're,

usually

you

know

less

than

10

megabytes

and

they

can

store

key

value,

data

and

byte

data,

and

so

on.

They're

sort

of

this

low-level

object,

rgw

objects,

s3

objects

can

are

usually

pretty

big,

they

can

be

gigabytes

terabytes

and

they

have

apples

associated

with

them

and

they

live

in

buckets,

which

is

a

totally

different

abstraction.

A

You

could

have

you

know

millions

of

buckets,

whereas

you

only

have

usually

a

small

number

of

pools

and

Rados

and

so

on.

So

it's

a

very

different

use

case

in

gredos

gateways,

both

component

in

the

system.

That's

sort

of

making

that

breath

remapping

and,

in

fact,

mostly

what's

happening,

is

that

rgw

is

taking

these

big

s.

Freestyle

objects

directing

them

across

a

lot

of

smaller

ratos

objects

and

then

doing

the

authentication

and

enforcement.

A

So

if

we

look

at

a

little

bit

of

detail

about

how

that

might

work,

you

can

imagine

that

we're

storing

a

large

video

file,

vn

s3

plate

or

post

operation

into

our

GW

and

that's

getting

stored

into

the

backend

stuff

clusters.

So

the

first

thing

that's

going

to

happen

is

we're

gonna

go

look

at

our

metadata.

Our

GW

can

look

at

our

metadata

about

users.

A

What

s3,

users

and

buckets

are

defined

in

in

the

system

and

make

sure

that

this

is

a

valid

request,

that

it's

authenticated

and

that's

a

bucket

we're

putting

into

actually

exists

and

what

the

policies

are

around

and

so

on.

It's

going

to

go.

Make

an

update

to

this

bucket

index

object,

so

s3

API

is

defined

around

the

idea

of

being

able

to

do

a

sorted,

lexicographic

enumeration

of

all

the

objects

in

a

bucket,

and

so

we

have

to

take

their

sort

of

s3

names

and

sort

them

and

put

them

in

an

index.

A

So

we

can

perform

perform

that

enumeration.

So

it's

going

to

make

an

update,

they're

saying

we're

in

the

process

of

updating

the

subject

and

then

it's

gonna

take

the

data

and

stripe

it

across

lots

and

lots

of

reduce

objects,

dump

them

all

in

reduce

and

then,

when

it's

done,

it'll

update

the

index

and

say

I'm

done

I'm

taking.

A

So

you

can

think

about

this

whole

picture

as

being

grouped

into

something

called

zone

right.

So

you

have

you,

have

these

radio

spools

that

have

the

actual

data

that

you're

storing

and

the

metadata

about

it,

and

then

you

have

some

number

of

ratos

gateways.

You

can

scale

these

out

horizontally.

You

know

less

than

ten

tens

of

them.

A

The

idea

here

is

that

you

can

add

multiple

zones

that

are

deployed

that

are

federated

together,

so

each

of

these

zones

might

live

in

a

completely

different

stuff

cluster,

maybe

in

different

sites

and

different

geographies

and

different

continents,

but

they're

associated

in

that

there's

a

replication

relationship

where

all

of

the

user

and

bucket

info

you

know

which,

as

freezers,

exist

in

which

s3

buckets

exist,

is

replicated

between

these

two

zones.

So

they

have

a

shared

view

of

what

buckets

they're

serving,

but

they

have

different

data.

A

So

when

you

have

a

request

to

read

a

bucket

from

one

Redis

gateway,

if

it's

stored

locally,

it

can

service

that

request

and

read

it

here.

If

you

request

a

bucket

foo,

that's

actually

stored

in

a

different

zone

bar

then

this

gateway

knows

that,

because

the

house,

with

the

metadata

about

that

bucket,

it

can

send

you

a

redirect

that

bounces

the

client

over

to

the

appropriate

gateway,

and

so

you

can

read

the

data

from

that

location

instead.

So

this

is

really

very

similar

to

what

Amazon's

globalists

service

provides

right.

A

So

you

have

a

global

namespace

of

buckets

and

users.

When

you

create

a

bucket,

you

create

that

bucket

in

a

particular

region

which

is

similar

to

a

zone,

and

you

can

request,

do

reads

and

writes

from

that

bucket

anywhere

in

the

world

and

as

soon

as

sort