►

From YouTube: Ceph and Cloudstack: Integrating the Ceph Block Device into your Cloudstack Deployment - Ian Colle

Description

CloudStack Collaboration Conference North America 2014

A

Sorry,

did

you

get

a

picture

of

me

behind

you?

I

know,

I'm

being

a

little

frazzled

I

walk

around

a

lot

and

I

talk.

What

I'm

gonna

try

to

cover

quickly

because

I

had

some

technical

difficulties

with

the

integration

between

Microsoft

and

Apple

is

hiding

a

great

SEF

and

cloud

stack.

There's

a

little

bit

about

me.

The

slides

will

be

available.

If

you

want

to

contact

me,

feel

free

to

tweet

me,

a

tire

Koli

I

also

sit

on

IRC

either.

One

of

those

I

are

Kohli.

A

All

right

can

I

just

get

a

hand

how

many

people

currently

have

running

implementations

of

Ceph?

Okay,

how

many

people

have

heard

of

Ceph

how

many

people

have

no

idea?

What

you're

in

for

right

now

be

honest?

It's

okay,

all

right!

So

Seth

is

unified,

object,

block

and

file

storage

I'll

go

into

a

little

bit

about

the

architecture,

with

the

the

time

that

we

have,

but

what

it

does

is

provide

you

block,

which

you're

extremely

interested

in

in

a

cloud

environment,

but

also

object.

A

Little

bit

about

Seth

was

started

back

in

2004,

open

sourced

in

2006.

Then

it

really

started

to

pick

up

when

it

was

picked

up

in

the

accelerate.

When

was

picked

up

in

the

Linux

kernel

in

2010

and

then

in

2012.

It

was

integrated

in

both

cloud

stack

and

OpenStack,

so

we've

been

integrated

since

for

Oh.

A

And

the

the

reason

this

is

so

good

for

an

exascale

size

project

is

when

you

think

about

having

thousands

of

terabytes

of

data

or

even

thousands

of

petabytes

of

storage.

Just

do

the

MTBF

numbers.

You're

gonna

get

failures

all

the

time

so

with

the

underlying

architecture

of

Ceph.

That

failure

is

hidden

from

you

again

community

stuff,

it's

totally

exploded

since

2012.

But

let's

talk

more

about

the

architecture,

so

we

have

underlying

object,

storage

demons

that

are

one-to-one

with

each

of

your

physical

devices.

A

Then

we

have

these

monitor

nodes

that

sit

out

there,

monitoring

the

cluster,

getting

updates

from

the

clients

getting

updates

from

the

the

OSD

saying:

ham,

live

ham

dead

and

they

help

give

you

a

map

of

your

cluster

of

what's

alive,

what's

dead,

what

state

things

are

in

and

all

of

all

of

that

then

talks

to

the

various

levels.

It's

based

all

on

the

object,

storage

and

then

everything

else

goes

on

top

of

that

and

we'll

see

a

better

picture

of

that

later.

But

first

I

want

to

talk

about

again.

A

What

makes

SEF

special

and

that's

the

crush

algorithm

crush,

stands

for

controlled

replication

using

scalable

hashing,

and

what

that

does

is

so

when

you've

got

your

object,

then

it

hashes

it

in

a

pseudo-random

manner,

but

it's

deterministic,

so

you

know

that

every

time

you

run

it

through

the

crush

algorithm,

whether

you're

on

the

client

or

the

daemon

you're

gonna

get

this.

So

it's

gonna

take

this

chunk

right

here.

Put

it

on

this

OSD!

A

That's

what

each

of

these

squares

stand

for,

and

given

your

replication

rules

and

here

I've,

given

it

a

replication

rule

of

1,

so

I've

got

one

original

and

one

copy.

It's

gonna

put

one

of

them

there

and

one

of

them

here

now

what's

powerful

about

that

is

whether

I'm

a

client.

That's

looking

for

the

data

looking

where

to

write

the

data.

Looking

where

to

read

the

data

or,

if

I'm

the

storage

device

itself

I,

don't

have

to

go

to

a

lookup

table,

I'm

calculating

that

locally.

Ok,

so

there's

no

I!

Don't

have

to

go.

Hey.

A

A

So

this

is

just

showing

you

what

that

looks

like

when

I've

taken

this

object

and

put

it

across

to

all

my

OSDs

more

more

detail

about

what

crush

does

now?

What

happens

when

we're

doing

a

read

again?

It

doesn't

do

any

sort

of

lookup.

It

calculates

that

locally

on

the

client.

But

what

happens

when

there's

an

outage

so

before

my

rule

said

I

had

to

have

one

original

one

copy

and

that

they

couldn't

be,

for

example,

in

the

same

host.

Let's

say

this

is

one

rack.

This

is

another

rack.

A

So

what's

gonna

happen,

is

these

OS

T's

talk

to

each

other,

so

they

talk

to

the

guy.

Next

to

him

say:

hey

you

still

there

hey

you

still

there

and

then,

additionally,

the

pair's

talk

to

each

other.

So

these

two

guys

say:

hey

I,

know:

you've

got

my

copy,

you

still

there

I

know

you

got

my

copy

you're

still

there

and

then

also

every

once

in

a

while.

The

monitors

will

go

out

and

say:

hey

how's

everybody

doing

I

haven't

heard

from

you

in

a

while.

So

through

one

of

those

mechanisms

the

system

finds

out.

A

This

guy's

gone

whether

that's

a

network

issue,

whether

that's

the

drive,

just

went

bad,

so

this

guy's

gone

so

right.

Now,

I

only

have

one

copy

of

my

yellow

and

my

red

chunks,

but

immediately

without

you

doing

anything,

the

system

says:

okay,

you

guys

start

moving

stuff

around

again.

This

is

calculated

locally

I.

Don't

have

to

go

to

any

sort

of

look

up

and

say:

hey

I

lost

my

copy.

Where

do

I

put

it

now?

It

knows

my

rules

say

that

I've

got

to

have

another

copy

that

doesn't

exist

in

this

row.

A

Here

are

the

available

devices

go

put

it

there

and

without

any

intervention

of

the

user?

The

data

just

moves.

So

let's

look

at

us

now

now

the

data.

If

it's

going

to

go,

do

a

read.

It

knows

that

it

can

fight

at

this

other

place.

Because

again

the

the

monitor

gets

that

updated

map

saying

hey

the

copies

are

here:

I

can

go

there.

So

what

happens?

When

you

add?

More

storage,

so

now

we've

got

the

opposite

issue.

So,

instead

of

having

a

failed

device,

now

I've

got

I,

add

additional

rack

of

storage.

A

A

So

why

is

that

important

for

cloud

storage?

So

you've

got

one

VM

the

block

device

hypervisor

we'll

go

again

more

into

the

architecture

here,

because,

with

the

with

the

block

device

that

Saif

gives

you,

you

can

spin

up

hundreds

of

them

with

cloning.

So

this

takes

a

little

bit

to

draw.

So

here

we've

got

your

original

block.

Okay,

now,

with

our

instant

copy,

go

back

to

that

so

now,

I've

got

my

original

four

copies

same

amount

of

storage.

I've

only

used

that

original

144.

A

A

Now,

when

the

client

goes

to

read,

it's

smart

enough

to

know

well

if

this

is

a

part

that

hasn't

changed,

I

just

passed

that

through

it

goes

right

to

the

original.

But

if

it's

a

chunk,

that's

changed.

Well,

yeah

I've

got

to

read

from

here,

but

you

see

your

total

storage,

for

this

is

only

the

Delta

plus

the

original.

A

A

If

you

haven't

heard

of

Vito

den

Hollander,

you

probably

should

shame

on

you.

He

is

active

in

the

cloud

stack

community

he's

a

cork

emitter

and

I

mean

I.

Gherkin

Lee

calls

the

house

that

Vito

built,

but

this

would

not

be

here

but

for

him

so

he

developed

the

the

Rados

Java

bindings.

He

did

the

plugins

in

libvirt.

He

put

the

logic

into

KVM

you.

So

all

this

is

credit

to

him.

So

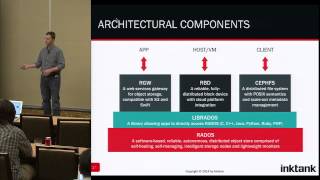

let's

go

back

to

the

overall

SEF

architecture.

We've

got

this

underlying

object

store

again

everything

sits

on

this

object

store.

A

A

Now

one

thing

I

I

need

to

be

clear

about

is

that

this

I'm

not

saying

that

you

can

have

unified

storage

in

that

I

can

talk

to

the

exact

same

object

using

each

of

these.

So

it's

not

that

I

can

I

can

access

an

object

using

the

Gateway

using

the

block

device

you're

using

SEF

of

s.

Those

are

different

pools

of

data

that

you're

going

to

be

accessing,

but

what

it

allows

you

to

do

is

using

the

same

underlying

infrastructure.

The

object

storage

allows

you

to

have

access

to

each

of

these

features,

so.

A

A

You

then

talk

to

lib

RBD,

and

so

it's

the

it's.

The

cloud

stack

manager

talking

to

sending

JSON

commands

out

to

the

cloud

stack

agent

that

then

converts

those

into

libvirt

calls

and

those

liberabit

calls

then

talk

to

our

lib

RBD,

which

again,

as

I,

said

on

the

previous

one,

then

talks

to

that

object.

Storage

underneath

passes

that

back

up.

So

what

do

I

need

to

do

this?

A

A

A

What

do

you

do

first

step?

Is

you

have

to

set

up

your

self

cluster

and

there's

a

Quick

Start

Guide?

There

that'll

walk

you

through

that

downloading

the

software

setting

up

your

monitors,

setting

up

your

OS

DS,

then

once

you've

got

that

up

and

running,

install

and

setup

your

QE

moon

same

with

libvirt,

then

on

SEF

create

your

your

pool

that

you're

going

to

use

for

your

cloud

stack

storage.

So

this

is

just

a

set

command,

so

a

Sappho

SD

pool

create

cloud

stack.

A

It's

that

simple

then,

just

like

you

would,

with

any

other

primary

storage,

just

go

to

your

cloud,

stack

administrator,

GUI,

add

primary

storage

and

then

for

your

protocols.

Select

our

BD

and

r

BD

stands

force,

ray

dose

block

device,

so

we've

got

acronyms

of

acronyms,

so

ray

dose.

Is

your

reliable

autonomous

distributed

object

store?

You

can

say

that

mouthful.

So

that's

ray

dose

is

the

object

storage.

So

then

you

take

ray

dose

block

device

and

that's

where

the

RPD

comes

from.

A

A

A

The

first

two

I

think

are

really

exciting

for

use

cases

so

cache

tearing

if

you've

got

a

write

back

cache.

So

for

your

object,

storage,

you

can

have

clients

that,

with

the

front-end

of

let's

say,

you

want

to

have

spinning

disks

for

your

entire

five

petabyte

cluster,

but

you

can't

afford

to

have

five

five

petabyte

of

flash

in

your

system.

A

Although,

if

you

do

I'd

like

to

talk

to

you

afterwards,

but

five

hundred

terabytes

could

be

a

reasonable

front-end

and

you

want

to

make

sure

that

you're

getting

good

throughput

on

your

rights,

so

you're

gonna

write

to

the

cache

and

then

based

upon

the

cache

rules

you

set

up.

The

cache

is

going

to

push

that

stuff

off

so

think

of

this

as

a

hot

to

warm,

so

you're

pushing

it

from

hot

to

warm.

A

And

then

this

is

a

feature

that

is

not

fully

ready

and

or

fully

tested

in

Firefly,

but

will

probably

be

the

capabilities

there,

but

we're

not

sure

if

we've

totally

satisfied

every

corner

case.

So

we're

not

gonna,

say

it's

ready

until

probably

giant,

but

this

is

a

read-only.

So

you've

got

the

opposite

problem

here.

I

don't

want

a

buffer

reads

from

a

customer

say:

let's

say:

I've

got

some

sort

of

a

content,

distribution

network

and

so

I

want

to

make

sure

that

they're

getting

as

much

read

throughput

as

possible.

A

So

I

put

my

flash

cache

on

the

front

end

and

I

can

either

pre

stage

stuff

or

I

can

say

hey

once

someone

has

accessed

that

pull

it

up

there

and

leave

it

there

until

I

start

to

fill

up,

and

then

you

can

push

it

back

down

and

as

far

as

size,

wise.

The

the

feature

that

is

biggest

for

us

in

Firefly

is

erase

your

coding

erase.

Your

coding

is

the

same

math

magic

that

makes

raid

5

raid

6

work,

one

of

the

biggest

kind

of

digs

on

Seth

and

the

past

has

been

okay.

A

Fine,

you

see,

I

can

do

it

with

commodity

storage,

but

if

I'm

not

relying

upon

the

smarts

of

the

raid

and

the

hardware

to

save

my

data,

how

am

I

saving

it?

It's

with

replication

so,

instead

of

instead

of

having

one

copy

of

my

data,

that's

rated

now

I've

got

I've,

got

to

basically

buy

three

times

the

usable

store

time

storage,

to

ensure

that

my

data

is

safe,

so

where's

that

cost

benefit.

Maybe

it's

worth

me

worth

it

to

me

to

pay

more

for

a

big

EMC

chassis

as

opposed

to

three

HP's.

A

So

in

this

scenario,

you've

got

it.

I've

got

a

ten

ten

mega

object

to

ensure

my

my

data

retention

and

quality

I've

got

one

original

and

then

two

copies.

So

we

could.

We

call

that

three

times

replication,

so

you've

got

three

times

the

original

size,

so

I've

had

to

pay

for

30

to

really

get

ten

usable.

A

Now,

with

the

racer

coding,

what

you

do

using

reed-solomon

or

other

erasure

codes,

you

just

split

it

up

using

the

chunks

using

math

and

basically

there's

various

K

plus

M

values

that

you

can

set

up

that

allow

you

to

say

hey

as

long

as

I've

got

this

many,

let's

say:

I

chop

it

up

into

14

chunks.

As

long

as

I've

got

ten

of

those,

then

I

can

I

can

rebuild

my

data

if

anything

goes

wrong.

So

I've

got

my

original

10

plus

4

parity,

just

like

your

raid

raid

5

raid

6.

A

So

what's

what's

next,

if

you're,

if

you're

interested

in

finding

out

more

about

Seth

the

the

docks

are

pretty

pretty

detailed

and

pretty

good

there,

you

can

go

to

prove

those

we've

got

some

juju.

You

can

go

play

with

it.

On

AWS,

the

ansible

Play

Books

are

a

new

thing

that

we

we

just

started

with.

In

the

last

month.

We've

got

a

lot

of

traction

on

using

ansible

to

set

it

up,

and

then

we've

got

a

manual

QuickStart

guide.

A

A

A

Okay,

the

questions

were

okay,

since

we

have

s3

do

we

have

ACLs

access

control

lists

on

it

and

then

the

second

question:

what

question

was

can

I

front

Saif

with

I

scuzzy

right?

Okay,

we

do

have

ACLs,

we

have

bucket

level

ACLs

for

the

the

greatest

gateway

and

as

far

as

front

with

I

scuzzy,

we

have

a

TGT

plug-in.

So

you

can

use

TGT

to

then

plug

in

access,

I

scuzzy

to

TGT

to

RVD.

A

Okay

speaking,

is

my

community

member

hat

I'd,

say

yeah

go

on

play

with

it.

It's

great,

wearing

my

director

of

engineering

at

ink

tank

at

we're

not

ready

to

support

it

again

there

when

we

say

not

production

ready.

That

just

means

that

we're

not

sure

the

stability

of

it

and

we

don't

want

anyone

to

lose

data.

There

are

people

in

various

places

that

are

running

it

in

production.

There's

a

pretty

active

group

in

China,

that's

running

it

with

multi

MDS

servers

and

are

having

no

problems.

So,

basically,

right

now

it's

bulletproofing

corner

cases.

A

Okay,

the

question

was:

have

we

implemented

any

performance,

gating

yeah

qos

on

volumes?

We

have

not

implemented

any

QoS,

that's

on

our

roadmap,

but

we

have

not

done.

Okay.

I

want

to

be

fair

to

time,

even

though

I

stole

a

little

bit

with

my

Wi-Fi

issues.

So

thanks

for

your

time,

if

you

have

questions

I'll

be

over

here

and

if

you

want

I've

got

just

a

handful

of

schwag

that

they

sent

me

so

feel

free

to

come

over

and

grab

a

shirt

or

a

sticker,

or

something

thanks

for

your

time.