
From YouTube: Demystifying Cloud Native Buildpacks ~ Developer's Life Without Dockerfile !! - Suman Chakraborty

Description

The Dockerfile has been the developer's best guide to building cloud-native applications in a polyglot environment. While it offers many features for packaging an application, it has side effects on build speed and maintenance. What developers want is a simplified approach that produces a quality container when they prefer not to author and maintain their own Dockerfile. In this context, I will introduce Cloud Native Buildpacks (CNB), a CNCF Sandbox project. The talk aims to ease developers' pain in building quality software using CNB and will cover some use cases around it. The talk will be supported by a small demo.

A

So, hi everyone. Today I'm going to speak about one of the coolest projects in the CNCF, which is Cloud Native Buildpacks, and the agenda here is to understand how we can use buildpacks as an alternative to a Dockerfile, especially to make life easy for the developer.

A bit about myself. I am working as a senior DevOps engineer with SAP Labs, Bangalore, India, and I'm a community member and speaker with the Docker Bangalore and CNCF Bangalore groups. I'm also a tech blogger; I post articles on platform as a service, cloud native, and microservices on different social platforms.

OK, so coming to the agenda. Today we are going to quickly discuss the Dockerfile, what the shortcomings of this current approach are, and how buildpacks solve them. Then we'll cover what Cloud Native Buildpacks is all about, see a quick demo around it, and finally look at why we should consider Cloud Native Buildpacks over the traditional Dockerfile approach.

Okay, so a Dockerfile, as we know, is basically an instruction set which helps a developer build a runnable image. You write a set of instructions in that file, and then you pass it to the Docker daemon, which finally creates the built image. It's considered a developer's best friend for dockerizing any polyglot application, and the Dockerfile definitely has certain advantages: faster application development, speeding up of incremental builds, as well as easier management and scaling of containers.

If you look closely, a Dockerfile looks something like this. We mention the base operating system on which we are going to build the application, and then the dependencies we are going to put in. Along with that, we mention the configuration for the particular application: what the working directory would be, how we're going to run the application, and finally which port would be exposed. The result is something like this, where each instruction produces a layer, and that set of layered images finally makes up the Docker image. So it's basically a set of read-only image layers from which you get the full-fledged application image. Building a particular app in Docker using a Dockerfile is the simplest thing, but then again it has certain shortcomings.

The application image built from a Dockerfile is often bloated with extraneous cache directories, and performance really suffers when you want speedy builds. Another thing we have seen is about composability. When multiple Docker images are required, for example when you are using some of the dependencies or a part of the Docker image from a previous build, you have to express that through a multi-stage build. But again, if you are using that, then you cannot use environment variables directly, you cannot follow symlinks, and file system layers have to be copied manually, so it is a leaky abstraction. So the Dockerfile, I would say, is a very poor tool for application developers who actually want to write quality code.

Basically, it's not application-aware. When the developer is building the application, he must also know what logic should go into that Dockerfile to build a quality image. So it mixes operational concerns with application developer concerns, because the developer knows how to build the application, but when it goes to an ops person to write the Dockerfile, he may miss some of the configuration that was actually needed for building that particular application.

Another important factor here is maintenance, which is normally very tedious and time-consuming with Dockerfiles. When you are creating Dockerfiles for multiple applications, often we go about copying the information from one Dockerfile to another Dockerfile and then changing certain configurations. This can introduce low-level concerns, and it impacts the final quality of the image which is produced. So then, what is the alternative to Dockerfiles?

Well, there is one, which is called buildpacks. Buildpacks are pluggable, modular tools which translate your source code into an OCI-compliant image. They provide a much higher-level abstraction for building your applications compared to Dockerfiles. They use a particular build concept which bundles all the bits and pieces of information against your source code and creates the final artifact, which used to be called the droplet. Buildpacks were originally conceived by Heroku in 2011, and since then they have been used across multiple platforms, in Cloud Foundry and in other platform-as-a-service technologies such as GitLab and Knative, to modernize the current application development process.

Primarily, a buildpack comprises three steps: bin/detect, bin/compile, and bin/release. What happens here is that, first of all, the platform determines whether there is an application development environment to which the buildpack can be applied. If it finds one, it applies all the dependencies required alongside that particular source code, and then finally it releases your image, which is again a layered OCI image, and that becomes the final artifact.
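The three classic steps can be sketched as follows. This is a minimal Python model under stated assumptions, not Heroku's or Cloud Foundry's implementation: the `GoBuildpack` class, its method names, and the `go.mod` check are hypothetical stand-ins for the `bin/detect`, `bin/compile`, and `bin/release` scripts.

```python
from dataclasses import dataclass


@dataclass
class GoBuildpack:
    """Hypothetical stand-in for a classic buildpack's three scripts."""
    name: str = "go"

    def detect(self, source_files):
        # bin/detect: decide whether this buildpack applies to the source tree.
        return "go.mod" in source_files

    def compile(self, source_files):
        # bin/compile: add the runtime and dependencies next to the source.
        return sorted(source_files) + ["go-runtime", "vendored-deps"]

    def release(self):
        # bin/release: describe how the built artifact should be started.
        return {"web": "./app"}


def run_classic_buildpack(buildpack, source_files):
    """Run detect -> compile -> release; return None if detect fails."""
    if not buildpack.detect(source_files):
        return None
    return {
        "buildpack": buildpack.name,
        "contents": buildpack.compile(source_files),
        "process_types": buildpack.release(),
    }


artifact = run_classic_buildpack(GoBuildpack(), {"go.mod", "main.go"})
print(artifact["contents"])
print(run_classic_buildpack(GoBuildpack(), {"package.json"}))
```

The second call returns `None` because detection fails, which is the point of the first step: a buildpack only contributes when it recognizes the source.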

So, building on the same concept, what it evolved into is something called Cloud Native Buildpacks. It is a project which serves as a vendor-neutral body to unify the whole buildpack ecosystem. Buildpacks were initially conceived by Heroku, but Cloud Foundry was following a different version of buildpacks, so there needed to be a body which could bring these two versions onto a common platform. With this purpose, Cloud Native Buildpacks was introduced by Pivotal and Heroku in January 2018, and it was inducted as a CNCF sandbox project in October 2018.

So, basically, buildpacks take your source code and create a final Docker image without the pain of writing the instructions for building that particular application. Coming to the component side, buildpacks have a component called the builder. A builder is an image which bundles all the bits and pieces of information required to carry out the build, and it contains the build image as well. It is executed against your application source code.

It has something called a TOML file, where you mention which buildpacks should be applied against your app code, in an ordered manner. Along with that, it contains something called a stack. A stack comprises a build image and a run image, and from these you finally create a builder image. So the builder image comprises the ordered set of buildpacks and a lifecycle, which governs how the application artifact is produced. The build goes through multiple stages, and that is what comes next.
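As a rough illustration of what a builder bundles, here is a small Python model. It is a sketch under stated assumptions rather than the real builder format: the class names, image names, and buildpack IDs are hypothetical, and group selection is simplified (for instance, it ignores optional buildpacks).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stack:
    build_image: str  # image the build runs in
    run_image: str    # image the finished app runs on


@dataclass(frozen=True)
class Builder:
    stack: Stack
    order: tuple  # ordered groups of buildpack IDs, tried first to last
    lifecycle_version: str


def select_group(builder, passing):
    """Return the first group whose buildpacks all pass detection."""
    for group in builder.order:
        if all(bp in passing for bp in group):
            return group
    return None


builder = Builder(
    stack=Stack(build_image="example/build:bionic", run_image="example/run:bionic"),
    order=(("example/node", "example/npm"), ("example/go",)),
    lifecycle_version="0.0.0-example",
)
print(select_group(builder, passing={"example/go"}))
```

Here only the Go buildpack passes detection, so the second group is the one selected for the build.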


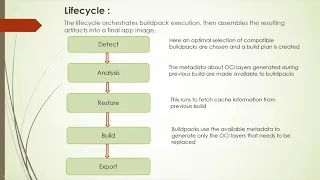

We have to understand this lifecycle process. It goes through five different phases. First is detect. The detect phase is where the platform decides whether a buildpack should be applied against the application source code or not. If it has to be applied, it creates a build plan around it. Second is analyze, where the metadata about the existing layers generated by the previous build is taken into account, so that it can be used in the current build.

The restore phase doesn't normally take part in the first build run, because it uses the cached layers from the previous build, so that it can reuse the existing layers and speed up the process of creating the final image.

The build phase is where the buildpacks use the available metadata to generate the actual OCI layers which need to be replaced. Let's say you have created an application with version v1, and then you deploy the application again with a slight modification, maybe on the code side, as version v2. What the build process will do is keep intact all the layers where no change has happened and replace only the layer which has changed. Next comes the export phase. Export is where the remote layers are replaced by the generated layers. So this is the place where your final artifact, or we can say the OCI image, gets created.
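The five phases and the v1-to-v2 layer reuse described above can be sketched as follows. This is a simplified Python model, not the real lifecycle: `run_lifecycle`, the two layer names, and the `go.mod` check are hypothetical, and layers are reduced to SHA-256 digests of their content.

```python
import hashlib


def digest(content):
    """Content-address a layer, the way image layers are identified by digest."""
    return hashlib.sha256(content.encode()).hexdigest()


def run_lifecycle(source, dependencies, previous_image=None):
    """Walk detect -> analyze -> restore -> build -> export for one build."""
    log = []

    # detect: decide whether the (hypothetical) buildpack applies.
    if "go.mod" not in source:
        raise ValueError("no buildpack matched the source")
    log.append("detect")

    # analyze: read layer metadata recorded by the previous build, if any.
    previous_layers = dict(previous_image or {})
    log.append("analyze")

    # restore: only meaningful when an earlier build left layers to reuse.
    if previous_layers:
        log.append("restore")

    # build: produce candidate layers from the dependencies and the app code.
    candidate = {
        "dependencies": digest(",".join(sorted(dependencies))),
        "app": digest(source["main.go"]),
    }
    log.append("build")

    # export: upload only layers whose digest differs from the previous image.
    uploaded = [n for n, d in candidate.items() if previous_layers.get(n) != d]
    log.append("export")
    return candidate, uploaded, log


v1, uploaded_v1, log_v1 = run_lifecycle({"go.mod": "", "main.go": "v1"}, ["lib-a"])
v2, uploaded_v2, log_v2 = run_lifecycle({"go.mod": "", "main.go": "v2"}, ["lib-a"], v1)
print(uploaded_v1, log_v1)
print(uploaded_v2, log_v2)
```

On the first run both layers are uploaded and there is no restore step. On the second run only the `app` layer changes, so the `dependencies` layer is left in place.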

Then comes the stack. That is something which forms the core of this whole buildpack lifecycle. It comprises a build image and a run image. The stack provides the build image when the builder image is created. When the source is built with that build image and the final image gets created, the execution environment which was running on the build image gets replaced with the run image, which is again provided by the stack.

So, in turn, unlike a Dockerfile, where we have to mention Ubuntu or whatever the base OS image is on top of which we'll be creating the application, here the platform side already specifies the build image and the run image which go together to create the final artifact.

One of the most important properties of buildpacks, or you can say one of the coolest features here, is image rebasing. Rebasing is for when you need to replace the run image underneath an application you have already deployed. Let's say, for example, that a CVE vulnerability has been detected and you just need to replace your base image with the patched OS image. How do you do it? You do it using the rebase command. Rebase takes your existing OS image and replaces it with the new patched image without rebuilding the whole application. That means your intermediate layers remain unchanged and you just get the new patched image version.
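Conceptually, rebasing swaps the run-image layers underneath the app and leaves everything else alone. The sketch below models that in Python under stated assumptions: the dictionary layout and layer names are hypothetical, and the real operation is carried out by the `pack rebase` command against image metadata rather than by code like this.

```python
def rebase(image, new_run_layers):
    """Swap the run-image layers of an app image, keeping app layers as-is."""
    return {
        "run_layers": list(new_run_layers),
        "app_layers": image["app_layers"],  # reused untouched, not rebuilt
    }


app_image = {
    "run_layers": ["os-base@1.0"],
    "app_layers": ["go-runtime", "dependencies", "app"],
}
patched = rebase(app_image, ["os-base@1.0.1-patched"])
print(patched["run_layers"])
print(patched["app_layers"] is app_image["app_layers"])
```

The app layers in the patched image are the very same objects as before, which mirrors why a rebase is so much cheaper than a full rebuild.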

So then, how do we go about building a cloud-native application using buildpacks? First of all, we have to select the builder that we are going to use. Again, the builder depends on the kind of application environment we are going to build for.

You can use the resulting image as a Docker image and run it in a Docker environment, or you can deploy it to a Kubernetes environment. For Kubernetes we use a separate utility called kpack, which works with Kubernetes-native resources. I'm not going to discuss it today, but it definitely helps there, so do have a look at it. OK, so we have had enough talking. I'll just quickly run through a demo to help you all understand how we use pack.

So, I have pack installed; I just installed the latest version, which is 0.11.2. First of all, what we get to see here is the available stacks. A stack, as I told you, comprises both the build image and the run image. Here the stacks are currently provided by Heroku and by Cloud Foundry, so we have four different stacks available, and these are in turn used in the builder definition. To list the available builders which you get from the platform side, I just run the command called suggest-builders, and here you get to see the list of builders which you can use.

As I mentioned, based on the type of application you are deploying, you can choose which builder to use. Let's say, for example, I am going to deploy a Golang application. Then I can use this Paketo Buildpacks builder, which supports an Ubuntu Bionic base image, for deploying this Golang application. Or, if I just want to create a small, slimmed-down image, I can use the tiny builder to deploy the application. Okay.

So it's just a simple Golang application, nothing fancy about it. What I will do is use the pack command, and before that I need to choose which builder I'm going to use. I'll just use the smallest builder in this case, to save some time here, and then I use the pack build command and give my image name. One more important thing about this tool is that it helps you publish the image directly to the registry.

So, unlike a Docker-based approach, where you create an image locally and then do a push, here you create the image and push it to the registry in one go, and it's quite a cool feature. Let's say I name the application with "cloud native", then I give the builder option here, and then I just have to pass the flag called --publish. Once I give this, it will try to download the base image for the builder image that has been specified. Again, it's a Docker base image, so it will be pulled from the registry. Once it has been fully pulled, it will start the lifecycle phases.

As per the lifecycle, it will first go to the detection phase, where it detects the buildpacks based on the grouping which has been specified. Then it will analyze whether this particular image already exists in the registry or not. If it does not, it will not do anything. Restore will not take place in this first case, because it's the first run, so it has nothing to restore from previous builds. Then it will go about creating the OCI layers. In this case it will download all the dependencies from the internet, basically the runtime dependencies, based on the type of application we are deploying. So here it will be downloading the dependencies and then installing everything, and finally, in the export phase, it will create the built image.

So now, if I look here, I can simply use Docker. OK, before that I just want to show you one more thing. Once you have built this application, you can also inspect the image remotely. That means I don't have this image in my local environment, but I can use the inspect-image command with the BOM option (BOM stands for bill of materials), and then I can mention the remote option here. What it does is fetch the dependencies that have gone inside this particular image.

It's created. Just to check that the container is up and running, I'll hit my port to make sure. Yeah, you can see that the application is now running. Now, let's say, for example, I need to run this pack command once again for this image. What happens? If I run this pack build command once more, it will go through the same lifecycle first.

But in this case, because the run image is already there, it will not pull it. What it will try to do is restore the dependencies from the existing image layers; whatever is there, it will take from there. It will not touch the existing metadata layer. It will just check whether there has been any change in the application code which it needs to bring into the OCI image. If it doesn't find anything, it simply runs through and ensures that the artifact is created. It creates the final image for me, and it doesn't have to fetch anything in this case. So this is how we go about using buildpacks.

Then the next question is why we should consider using buildpacks when the Dockerfile is already in place. The main reason is this separation of concerns. First, in the Dockerfile approach, the developer has to take full responsibility for defining the whole application stack. In the buildpack case, the developer just concentrates on the logic in the application source code, while the dependencies are taken care of by the platform itself. The second thing is about ensuring that applications are patched with the correct OS images.

If some vulnerabilities have been found in the existing OS image, then in a Dockerfile-based approach you have to redo the complete Dockerfile build. You have to replace the base image and then go through the whole set of instructions again to rebuild. But in this case you just point the reference in the existing metadata layer to the new, updated image, and both of these images are ABI-compatible, which is guaranteed from the platform side itself.

So here is the Dockerfile approach: as I told you, the developer rebuilds all the affected containers for the applications running in the environment. In the buildpack approach, the developer is just building his own application logic, while the platform team takes care of rebasing onto a base layer with the correct patched OS version.

So, on the advantage side: yes, it meets security and compliance requirements without much developer intervention. We don't disturb the developer as such; he just concentrates on writing the code behind the application. So it provides automated delivery of both OS-level and application-level dependency upgrades.

It can efficiently handle day-2 operations and maintenance, which are difficult to manage with a Dockerfile. It can reliably use ABI compatibility to ensure that the base layer and the runnable image on which your application is built are compatible on the platform side. It rebuilds and uploads layers only when necessary; it will do so only if there is a change. And it supports cross-repository blob mounting on Docker Registry v2.