
From YouTube: CNB Office Hours - 7 April 2022


A

B

B

C

And just to remind you that there is a document attached to this meeting. Please go ahead and sign in. Today's office hours session is a bit special. We have Eric, who is a BuildKit maintainer, and who has happily agreed to answer our questions and help figure out how and if we can integrate BuildKit with the Buildpacks project, including some of the newer capabilities, like the MergeOp support and access to OCI manifest descriptors.

B

B

Instead of supporting, you know, just the registry and the daemon, actually adding OCI support, right, so file system support. And so that kind of took us down this path. But yeah, I think that's kind of where we've left off: now we're thinking, hey, do we even need the Docker daemon, or do we want to just give that sort of responsibility to the platforms?

B

D

A

Yeah, no, I've been looking into it a little bit, but not enough time to absorb all of it. I saw that the Docker daemon was primarily used for local debugging. Is that true, or are there other use cases for it? I guess, what is the use for the Docker daemon right now?

A

E

Like a Kubernetes-based platform, it's going to use Kubernetes. But then the second place where we use the daemon is sort of what I would call an export target. So we can either write an image directly into a registry, or we can load the image into the daemon. A lot of pack users want that latter use case, because what they're doing is building something, and then they want to run it and test something out.

E

A

E

I would say the number one issue is that when you're doing a load or a pull into the daemon, it's figuring out whether it's seen a layer before based on the chain ID. So as soon as you insert one layer it's never seen before, you have to re-provide all the layers above it, and that's a big problem for us when we're using the daemon as an export target, to the extent that we've sort of recreated a cache of layers in a volume in...

A

E

E

A

Yeah, okay, that is exactly what I would guess. I think that's most people's issue eventually: you could have the exact same layer, which by itself is identical, but if the layers below it are different than in an image before, then it doesn't care; it'll pull it again.
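Editor's sketch of the chain ID behavior described above. It follows the ChainID definition from the OCI image specification (the chain ID of the first layer is its diff ID; each later chain ID is the digest of the previous chain ID, a space, and the next diff ID). The layer contents and names here are hypothetical placeholders, not anything shown in the meeting.

```python
import hashlib


def sha256_digest(data: bytes) -> str:
    """Return an OCI-style digest string for the given bytes."""
    return "sha256:" + hashlib.sha256(data).hexdigest()


def chain_ids(diff_ids: list[str]) -> list[str]:
    """Compute the chain ID of every layer in a stack, bottom to top.

    ChainID(L0) = DiffID(L0)
    ChainID(L0..Ln) = digest(ChainID(L0..Ln-1) + " " + DiffID(Ln))
    """
    chain: list[str] = []
    for diff_id in diff_ids:
        if not chain:
            chain.append(diff_id)
        else:
            chain.append(sha256_digest(f"{chain[-1]} {diff_id}".encode()))
    return chain


# Hypothetical diff IDs, derived from placeholder bytes for illustration.
base_a = sha256_digest(b"base image A")
base_b = sha256_digest(b"base image B")
app = sha256_digest(b"app layer")

image_1 = chain_ids([base_a, app])
image_2 = chain_ids([base_b, app])

# The app layer is byte-for-byte identical, yet its chain ID differs
# because the layer underneath it differs.
print(image_1[-1] != image_2[-1])
```

This is why an identical layer placed on top of a different base looks new to a store keyed by chain ID.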

A

A

A

E

Then our second big problem, I guess, is just dealing with OCI artifacts other than images. There are lots of places where we want, for example, an image manifest, but sort of in the way the registry does it, so we can have annotations on a manifest as an output of our build. Doing that when the export target is the daemon is awkward to impossible.
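Editor's sketch of what "annotations on a manifest" refers to. The field names and media types come from the OCI image manifest specification; the blobs and the annotation key `io.example.build.note` are hypothetical placeholders.

```python
import hashlib
import json


def descriptor(media_type: str, blob: bytes) -> dict:
    """Build an OCI content descriptor for a blob."""
    return {
        "mediaType": media_type,
        "digest": "sha256:" + hashlib.sha256(blob).hexdigest(),
        "size": len(blob),
    }


# Placeholder blobs; a real config and layer would be valid JSON and tar data.
config_blob = b"{}"
layer_blob = b"placeholder layer bytes"

manifest = {
    "schemaVersion": 2,
    "mediaType": "application/vnd.oci.image.manifest.v1+json",
    "config": descriptor("application/vnd.oci.image.config.v1+json", config_blob),
    "layers": [
        descriptor("application/vnd.oci.image.layer.v1.tar+gzip", layer_blob)
    ],
    # Annotations live on the manifest itself, not inside the image config.
    "annotations": {"io.example.build.note": "hypothetical build output"},
}

manifest_json = json.dumps(manifest, indent=2)
print(manifest_json)
```

A registry stores this manifest document as-is, which is what makes the annotations retrievable later.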

A

Right, okay. I've never even tried to do that with the Docker daemon, but that makes sense too. Cool. I guess in general, I've looked a little bit at the overall architecture of the Buildpacks stuff, but I'll probably need a lot of help along the way. We can start getting into questions if we're ready and done waiting for people.

A

Yeah, totally. Well, I guess maybe just a very brief background on BuildKit. BuildKit's whole idea is to be kind of the base layer for higher-level build tools. It takes care of a lot of the problems around build execution, caching, exporting results and all that stuff, and the interface to it is LLB. I'm sorry if anyone's already familiar with this; I'll just try to go as quickly as possible.

A

It's something called LLB, which is basically just a DAG of file system operations. The idea is that higher-level build tools or formats can compile to LLB, which BuildKit will then actually run. LLB has different types of operations: there are ones for importing images, and there are ones for running a command on top of another file system created by a different LLB op. Those are probably the most common ones. But yeah, merge and diff: that's what I just got done adding to BuildKit.

A

Those are two new types of LLB operations. Merge is the main one. Diff is a little bit more obscure, but can be helpful sometimes. The idea behind merge is that you very often need to take a bunch of different file systems and combine them together into one. If you have a bunch of dependencies you need to combine together in order to run a build on top, you need to do that. Or, you know, you want to export an image that consists of three independent components in the final image.

A

You need to merge all of those together. Before MergeOp, the only way to do that was something called copy op. In Dockerfile land, that would literally just be a COPY line, so, you know, like a multi-stage Dockerfile. Actually, I can share my screen. It might make this a little bit easier to follow, if I can remember.

A

Can you see my screen now? Cool. This is a blog post. I was hoping it would actually be released by now, but it's still waiting for some approval, so you can get a sneak preview, I guess. So in a Dockerfile you have something like this, where you want to build a bunch of stuff independently and then at the end combine that all together and use copies. So that's the Dockerfile.

A

This is what it looks like in terms of LLB, and you can see here that you are chaining together copy operations. You're probably familiar with this issue, so I'll skip a lot of the details here, but that can be bad because it creates dependencies between things that don't have dependencies between each other. So here, this is the final image, where you're trying to assemble all the different parts together, and so like...

A

It gets invalidated in the final image and that's fine, but then, because of this chain of operations, there are other parts which should not be invalidated at all. So this foo part, it should be totally cached; that gets invalidated just because there's this chain of copy operations. I'm sure all of you have run into this. So merge is just a new type of operation that lets you combine things together, but without actually creating dependencies between them.

A

So here I'm merging together some base image with two copy op results. When you do it this way (skipping a lot of details here), you basically end up in a situation where you are invalidating only exactly what needs to be invalidated. So that's kind of the base idea here.
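Editor's sketch of the invalidation difference between chained copies and a merge. This is a toy model, not BuildKit's actual cache-key computation: it assumes a vertex's cache key is simply a hash of its operation and its inputs' keys. The function names and the `v1`/`v2` base tags are hypothetical.

```python
import hashlib


def key(op: str, *inputs: str) -> str:
    """Toy cache key: hash of the operation plus the keys of its inputs."""
    return hashlib.sha256("|".join((op, *inputs)).encode()).hexdigest()[:12]


def chained(base_ref: str) -> dict[str, str]:
    """Copies stacked on top of each other, as in a multi-stage Dockerfile."""
    base = key("image:" + base_ref)
    with_foo = key("copy foo", base)      # depends on the base image
    with_bar = key("copy bar", with_foo)  # depends on foo and the base image
    return {"foo": with_foo, "final": with_bar}


def merged(base_ref: str) -> dict[str, str]:
    """Independent copies onto scratch, combined by a merge at the end."""
    base = key("image:" + base_ref)
    scratch = key("scratch")
    foo = key("copy foo", scratch)        # independent of the base image
    bar = key("copy bar", scratch)
    return {"foo": foo, "final": key("merge", base, foo, bar)}


# Changing the base invalidates the foo step only in the chained form.
print(chained("v1")["foo"] == chained("v2")["foo"])  # chained: foo key changes
print(merged("v1")["foo"] == merged("v2")["foo"])    # merged: foo key is stable
```

In both forms the final result changes when the base changes; the difference is that the merged form leaves the unrelated `foo` step cached.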

A

A

Those layers combined together, it never had to do any work to actually combine everything on disk locally, so that's important. And that's really powerful in combination with the fact that BuildKit also implements image references lazily. I guess there's sort of an example down here. So this is a source op, where it's basically pulling down an image. These are also lazy, so it only pulls the image if it actually needs to be in existence locally.

For this LLB here, all of this is lazy and the MergeOp is lazy. So these could all just exist in a remote registry, and you could export the result as an image and nothing will ever need to be pulled down locally. Or if one part needs to be pulled down locally, to be pushed to another registry or something like that, it'll only pull down what it actually needs.
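Editor's sketch of the lazy behavior described above, as a toy model only. It assumes a layer needs to be downloaded only when it must be re-uploaded to a registry that does not already hold it; the class, function, and registry names are hypothetical and do not reflect BuildKit's internals.

```python
from dataclasses import dataclass


@dataclass
class LazyLayer:
    digest: str
    registry: str         # where the blob currently lives
    pulled: bool = False  # True once the blob has been downloaded locally

    def pull(self) -> None:
        # Stand-in for an actual download.
        self.pulled = True


def export(layers: list[LazyLayer], target: str) -> list[str]:
    """Return the exported layer list, pulling only blobs missing from target."""
    for layer in layers:
        if layer.registry != target:
            layer.pull()             # must fetch it in order to push it elsewhere
            layer.registry = target
    return [layer.digest for layer in layers]


layers = [
    LazyLayer("sha256:base", "registry.example/a"),
    LazyLayer("sha256:foo", "registry.example/a"),
    LazyLayer("sha256:bar", "registry.example/b"),
]
exported = export(layers, "registry.example/a")
print([layer.pulled for layer in layers])
```

Only the one layer that lives elsewhere is ever materialized; the other two are referenced by digest alone.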

A

So that's how the new `COPY --link` flag works, the rebasing; basically this is the base underneath all of that. I think that's the gist of MergeOp. There's also DiffOp, which I can go into too, but I don't know. Does this make sense? Are there any questions about this?

F

Yeah, I think the cache invalidation parts are not especially useful for us, because we have more logic around invalidating caches, but that lazy merge operation is exactly what we're missing from the daemon right now. That's what makes the daemon less suitable for part of the caching mechanism we have, where if we know that something in the destination image (or really, our destination registry) is already there and is good, then we can just say: no, that continues to be part of the image.

F

I worry a little bit that we're kind of forced to maintain two implementations, right? We have this kaniko-like implementation that relies on not needing nested containers. We have a build image and a run image; they present the same API, so we can build layers on the build image and they can end up on the run image, and the layers are kind of contractually separated as directories.

F

I don't necessarily think this is a terrible outcome, but I worry a little bit that we're going to maintain this lifecycle implementation that works like kaniko. That means absolutely no privileges, it's very efficient, right, and you don't have to move bits anywhere they don't need to go. And BuildKit, I think, is maybe a little more designed to replace a local Docker daemon, where you're not running in a container already, right? I think the destination here is probably that we just do both and it's great, so I'm not very worried about it. I'm just, you know, before we go down that path, I thought I'd ask, are there...

F

A

Yeah, no, I know what you mean, and right now that is absolutely the focus of BuildKit: to be a server component, basically, that clients talk to. It sounds like you would almost be more interested in BuildKit as a library, so you can get a lot of this functionality. It's technically possible to do that, but it's not fleshed out or officially supported.

A

I mean, all the BuildKit code is publicly available and you can import it, but there are no guarantees about stability or anything like that. And right now, I guess, you do have to run everything in a container. There are options there: you can use runc or containerd or any OCI runtime.

A

F

D

Yeah, kaniko actually uses all of BuildKit's Dockerfile parsing code and everything, and then, instead of invoking containers to do stuff, does its own crazy thing. So it's interesting. You were mentioning having a BuildKit that, instead of calling runc or containerd, did something else that didn't happen to require privilege. Is anybody even looking into that? Because I feel like that would allow me to delete all of kaniko, which I would be more than happy to do.

A

It depends on what you mean. I'm very much thinking out loud here, so take this with plenty of salt. Right now, even in the user-facing API, it's assumed that you can run a command, like one of these exec ops, such that the rootfs is the file system it has as its input, and at that point you do need at least chroot.

So when you say without privileges: my original interest in BuildKit was actually this hobby project I was working on called bincastle, which was a lot of things, but among other things it was a static binary that embedded BuildKit and also embedded libcontainer from runc. So it was a completely standalone binary that could do everything with no external dependencies, and I ran that completely rootless. You didn't even need any setuid binaries to set up the UID mapping or anything like that.

A

I mean, that works. It was a total hack, you know, kind of a fun thing. So I would say, depending on what you mean by no privileges, it's possible, but it does assume that you're going to be able to isolate a process into some rootfs, I suppose. I don't know if that answers your question.

D

A

Right, and they also only go into the container when they have to, like for the exec ops. There's also a vertex type called FileOp, where you can create a file here, delete a file there, make whatever. For those you never need to go into a container at all, because they're just syscalls that get made directly by the daemon. Same with merge.

E

A

Yeah, so MergeOp right now, I guess it was designed with the registry case in mind, the case where you're pulling from and exporting to a registry; that was the main use case. So I guess I would be more interested in the details of how you think you would use it, because I just want to make sure that I...

E

A

E

E

A

A

Yeah. Well, one other thing is I don't work for Docker, and I really only work on standalone BuildKit. I know a little bit about this just because I've interacted with the places where BuildKit touches Docker, so I'm going to be careful not to start talking about things I don't actually know.

C

E

A

Yeah, right now, no, I don't think that's going to quite work. If you wanted to just ignore Docker entirely, then you can do all of this in BuildKit and it will work within the context of BuildKit itself, but BuildKit will just be a container that's running under the Docker daemon, and it has its own storage volume. But if you wanted to then just do `docker run`...

A

A

F

I think the primary use case for what we're interested in BuildKit for is, you know, we kind of have two use cases for buildpacks, right? One is cloud platforms that are going to build using the unprivileged lifecycle. BuildKit just may be less suitable for that right now, based on what we talked about earlier. And then there are local builds, where you're going to `pack build` on your local machine.

F

F

I think the user's expectation is that if they're running Docker Desktop or whatever it is locally, they can do `pack build`, and then they see the result in `docker images` and they `docker push`. Even though we have a `--publish` flag that runs the cloud-like version of that, which is more efficient and doesn't have to deal with loading and saving into the Docker daemon, most people don't really want to...

F

A

E

B

E

A

F

Container orchestration engine, we don't need to [unclear]. It doesn't matter if it's Docker or containerd; that's just going to run the containers. So we could run our cloud thing and export to a local registry and build a whole interface around managing things in that local registry, and we wouldn't even need [unclear] in that case. It would work great, but before we do that, we wanted to explore and see if BuildKit would solve the problem for us.

A

Yeah, unfortunately, right now it doesn't sound like it would totally. And like I said, I don't have a ton of insight into Docker's roadmap or anything like that. I do know that they are aware of these issues you're talking about, so it's definitely worth seeing if they're putting any priority on them. In theory, if Docker, the actual daemon, switched to the containerd storage backend, then that would probably make this integration a lot easier.

C

So right now, Docker Desktop actually uses BuildKit, so it has to go through the same cycle. When people run `docker build` on newer versions of Docker Desktop, they're calling out to BuildKit, which is doing the whole thing, and then it's calling `docker load` at the end to load the tarball.

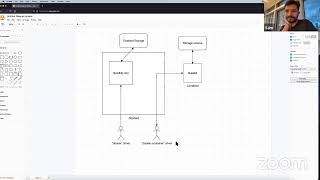

A

Yeah, so actually I saw questions about this, so I took five minutes and made the ugliest diagram I've ever made to try to explain this, because it's a little complicated. Okay, so there's the default docker build, which I think is being deprecated in the next version; the next version of Docker is switching over to always using BuildKit, at least by default. There's a concept of drivers for that, and the one you get if you do nothing is the docker driver. Right now, if you do `docker buildx build` or whatever, this is the default, and it talks to something that is embedded in dockerd. So it's using BuildKit as a library, and I have "BuildKit-ish" here because it's taking little components of BuildKit and combining them together in a way that adapts it to Docker's storage model, the one with all the problems we were just talking about. With that one, MergeOp technically works, but it doesn't have all the same benefits; it doesn't have the lazy behavior, for example.

So that's what you get by default. But if you switch to the docker-container driver as the backend (it's just one command to do that), then what happens is dockerd starts up BuildKit in a container, which is great, because you can update BuildKit completely independently of Docker, and then you get all the benefits of BuildKit.

A

E

A

Yeah, BuildKit has its own volume, just a Docker volume, and it's basically treating that the same way it does when it's standalone. BuildKit itself is importing containerd libraries and using the containerd storage mechanism, so if you look in there, you would end up seeing something pretty similar to what you'd see for a containerd image store.

A

F

A

So that flag is only needed for this docker-container driver, which is not the default; you have to run a command to specifically enable this one. This other driver will be the default (again, take this with a grain of salt, because I am not actually working on it), and in that case, because dockerd is the actual storage mechanism behind all of it, you don't need the `--load` flag. It's basically implied at that point.
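Editor's sketch of the driver distinction just described, expressed as a helper that only assembles command lines and does not run them. The `docker buildx create --driver ... --use` and `docker buildx build --load` flags are standard buildx CLI flags; the helper name, the image tag, and the rule "add `--load` for any driver other than `docker`" are this sketch's assumptions.

```python
def buildx_commands(driver: str, tag: str, context: str = ".") -> list[list[str]]:
    """Return the argv lists one might run to build and see the image locally."""
    commands: list[list[str]] = []
    if driver != "docker":
        # Non-default drivers need a builder instance created and selected first.
        commands.append(["docker", "buildx", "create", "--driver", driver, "--use"])
    build = ["docker", "buildx", "build", "--tag", tag]
    if driver != "docker":
        # The result lives in BuildKit's own storage, so ask for it to be
        # loaded into the local Docker image store explicitly.
        build.append("--load")
    build.append(context)
    commands.append(build)
    return commands


for argv in buildx_commands("docker-container", "example/app:dev"):
    print(" ".join(argv))
```

With the default `docker` driver the same helper returns a single build command with no `--load`, matching the "basically implied" behavior mentioned above.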

A

Right, yeah, exactly. Right now, it's not even doing any of this by default. It's doing the old docker build mechanism without BuildKit. The switch is to deprecate that one, and then the order of preference becomes what you said: the docker driver, and then you can enable this driver. There's also a Kubernetes driver too, I think.

A

I think that generally the performance is at least as good or better. I know there are a couple of small changes, really obscure things, like printing to standard error instead of standard output. There's not expected to be any sort of performance regression or anything like that; it's either no difference or an improvement.

C

Okay, and so when we actually run a BuildKit frontend with Docker right now, it can run in either of these modes. So the frontend can run with that BuildKit-ish model and store directly to the Docker daemon, or it can also run with the other model, where it spins BuildKit up as a container.

A

Yeah, when you're using a frontend, that should work for either driver mode. The fact that this is using dockerd under the hood is completely hidden from the frontend that you would be running. The frontend will have the exact same interface; it's just that when it stores its images, it's going to be somewhat less efficient.

A

E

A

A

A

C

C

That's different, or that's lossy, yeah. So some of the updates on that tweet said that now it had access to the OCI descriptors. I was still not clear on how embedded BuildKit is in the Docker daemon, so whether you could access this information, or whether it was stored somewhere in the daemon, so that when you did `docker push`, it would put that information back on the registry or not.

A

Yeah, none of the changes with MergeOp would have impacted that. I guess the connection with MergeOp is really that you can kind of think of it as a way of constructing the layers list within there, because when you merge, you're just basically joining the layers of each of the merge inputs together. But in general, if Docker is dropping those annotations when you load it in, I'm not aware of that having changed. I obviously could have missed something.

Otherwise, it's kind of the same story as before: if you are using this driver and talking directly to BuildKit and telling BuildKit to go and export it, then those should be retained. But if it's dropped on the import into Docker storage, then I'm not aware of that being any different.

C

C

C

So a couple of questions purely in terms of BuildKit: does the source op allow you to take an OCI layout on some volume as an input? I see it takes in registries or other things as inputs, but is there some operation that lets you load it from an OCI layout on disk?

C

A

D

Go ahead, Jason. Oh yeah, sorry! I had to step away for a second, so I don't know if this question has been asked while I was absent, but I don't know how closely related the Dockerfile format and BuildKit are. Are merge ops going to be a thing in Dockerfiles? Are you going to be able to say, merge these three RUN directives? That's the thing people always want to do.

A

Right now it's not directly exposed. That `COPY --link` flag is essentially MergeOp; there's a little bit of a caveat there, but that's as close as it gets right now to a direct MergeOp. In theory, though, you could add that, but that would be another release of Dockerfile, and in general there's no rule that every LLB operation has to have a one-to-one correspondence with something in a Dockerfile or anything like that.

The other operation I added, diff, is probably kind of useful for what you're describing there, because it lets you run a command and then get just the files that changed as a result of the command, without the base underneath it. So you could run three of those and then just take the changes created by those and merge them together. There's been some person who wanted that in Dockerfile, so in theory you could have that, but it's a case-by-case basis.
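Editor's sketch of the "run three commands, diff each against the base, then merge the diffs" idea. It is a toy model that treats a file system as a path-to-content dictionary and ignores deletions; it is not the BuildKit API, and every name in it is hypothetical.

```python
Filesystem = dict[str, str]  # path -> content (toy model)


def run(base: Filesystem, writes: Filesystem) -> Filesystem:
    """Stand-in for an exec op: the base with a command's writes applied."""
    return {**base, **writes}


def diff(lower: Filesystem, upper: Filesystem) -> Filesystem:
    """Only the entries that upper adds or changes relative to lower."""
    return {path: data for path, data in upper.items() if lower.get(path) != data}


def merge(*inputs: Filesystem) -> Filesystem:
    """Combine inputs in order; later inputs win on conflicting paths."""
    out: Filesystem = {}
    for fs in inputs:
        out.update(fs)
    return out


base = {"/bin/sh": "shell"}
# Three independent commands, each run on top of the same base.
a = diff(base, run(base, {"/opt/a": "A"}))
b = diff(base, run(base, {"/opt/b": "B"}))
c = diff(base, run(base, {"/opt/c": "C"}))

final = merge(base, a, b, c)
print(a)
print(sorted(final))
```

Each diff contains only what its command produced, so the three results can be combined without any of them depending on the others.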

A

D

Yeah, I mean, there are so many blog posts about how you can get a smaller Docker image by cramming things together: instead of `apt-get install foo` and then a separate RUN to clean up the apt cache or whatever, put them in one RUN directive and you save a bunch, you know, "this one weird trick." I feel like that is a best practice that's been cargo-culted across 10 million Dockerfiles, and it'd be nice to just have it in the file format, but I don't know.

D

A

Right, yeah, in theory it should. Actually, once this blog post is out, you'll probably be interested in it, because I talked about basically exactly that, about finding a better way of doing exactly that using MergeOp. I guess my main focus has been not so much Dockerfiles, just other formats that are being built on top of BuildKit, but in theory it would be really nice, and obviously Dockerfile is still probably the most popular. That's my guess anyway.

A

C

A

C

G

Yeah, and this is really in response to being able to load the OCI layout as one thing, so that we can continue to keep the exporter the exact same, but then export an OCI layout and then use BuildKit to load that as the image, so that we're just doing the container orchestration here and not having to re-implement all the OCI image creation itself; we'll leave that in cmd.
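Editor's sketch of what an "OCI layout on disk" looks like. The directory structure (`oci-layout`, `index.json`, `blobs/sha256/<digest>`) and media types follow the OCI image layout specification; the config and layer bytes are placeholders rather than a valid image, and the helper names are hypothetical.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def write_blob(root: Path, media_type: str, data: bytes) -> dict:
    """Store data under blobs/sha256/<hex> and return its descriptor."""
    hex_digest = hashlib.sha256(data).hexdigest()
    blob_dir = root / "blobs" / "sha256"
    blob_dir.mkdir(parents=True, exist_ok=True)
    (blob_dir / hex_digest).write_bytes(data)
    return {"mediaType": media_type, "digest": f"sha256:{hex_digest}", "size": len(data)}


def write_layout(root: Path, config: bytes, layers: list[bytes]) -> dict:
    """Write a minimal OCI image layout and return the index document."""
    manifest = {
        "schemaVersion": 2,
        "mediaType": "application/vnd.oci.image.manifest.v1+json",
        "config": write_blob(root, "application/vnd.oci.image.config.v1+json", config),
        "layers": [
            write_blob(root, "application/vnd.oci.image.layer.v1.tar+gzip", layer)
            for layer in layers
        ],
    }
    manifest_desc = write_blob(
        root, "application/vnd.oci.image.manifest.v1+json", json.dumps(manifest).encode()
    )
    (root / "oci-layout").write_text(json.dumps({"imageLayoutVersion": "1.0.0"}))
    index = {"schemaVersion": 2, "manifests": [manifest_desc]}
    (root / "index.json").write_text(json.dumps(index))
    return index


layout_root = Path(tempfile.mkdtemp())
# Placeholder bytes: a real layout needs a valid config JSON and tar layers.
index = write_layout(layout_root, b"{}", [b"placeholder layer bytes"])
print(sorted(p.name for p in layout_root.iterdir()))
```

Because everything is addressed by digest, a tool that understands this layout can read the manifest, config, and layers without talking to a registry or a daemon.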

A

B

A

Yeah, I guess one of the main benefits there is just that BuildKit has a lot of optimizations. If you have two clients and they're doing similar builds, or there's overlap, then it can deduplicate those live, or you can take the cache of one and use that with the other builds. So if you're a really huge company with hundreds of users, then those types of performance optimizations end up making a pretty huge difference.

C

G

So I didn't really like carving that path as far as pack having to load images with a manual command, because users aren't used to it. But if, you know, the mind share of using Docker and turning on this more efficient path to use a containerd backend, for various reasons, whatever, I could see this.

B

Yeah, and it's also the data loss, right? If we do go down this other path, this different workflow, where pack itself is kind of managing the images and is then able to load them into the daemon, the daemon becomes sort of secondary, right? The Docker CLI becomes sort of secondary to a lot of that, and hopefully users are able to use other things, like pushing images directly to a registry through pack and that sort of stuff, and the daemon becomes more of a, just, not the primary, right?

C

So currently, the only thing that our base implementation, lifecycle, is aware of is that it can communicate with the daemon over a socket using the daemon API. Is there any information exposed that lets us know what kind of storage backend it's using? If someone is running containerd, for example, as the storage backend, would we know that by any chance through the API, or is that just not exposed whatsoever?

A

Yeah, so it depends on which part you're talking about. The Docker daemon only has, as far as I know, one storage backend; the containerd backend doesn't exist, that's hypothetical for Docker specifically. So I'm not sure about that. But with BuildKit, I know you can go and ask it: it has multiple backends and you can ask it which one it's using. And with Docker, if you're using `docker buildx`, you can choose which driver backend you're using from these various options, and I know with the CLI you can ask which driver is being used and change that and all that. So I would assume it must be possible with an API, hopefully, but I'm not 100% sure.

E

It seems like, at the very least, there's interesting prior art here. If we didn't want to export to the daemon, could we do something similar to what's being done by BuildKit now to get better performance? And then to me the big question mark comes down to how important other OCI artifacts are going to be to some of our core workflows, because I don't think, you know, containerd storage...

A

Yeah, I don't know very much about that at all. I know for BuildKit, there's no way to just say, you know, attach this arbitrary artifact. In theory, I can't imagine why it would be technically impossible to implement that someday, but obviously that's very hypothetical, and you'd want to figure that out. Another option is to use BuildKit to push images, and then you can do some registry operations after that, too.

G

G

Because that's what we struggled with. We kind of designed something similar for pack, having its own storage volume and storing all this stuff as an OCI layout on disk. But then it gets a little weird when you're asking pack to build something that might come from a local image: if you didn't have it loaded, and that happens to be a public registry image, it's going to pull it. Is that okay? Is that expected? That user behavior is, yeah.

A

C

A

C

A

G

A

E

It's great that other people are going that direction, because I think one of our big sticking points has been trying to swim upstream against expectations on the user's behalf. We're not a big enough project to universally reset expectations ourselves. So if we're the first project that's like, "Oh, your base image, you can't just get that from the daemon storage," people are like, what is Buildpacks even doing over there, right? But if multiple projects are going that direction, that is helpful.

A

A

D

Yeah, I think I'd be super interested in another one of these, very often, with more folks doing this kind of stuff. I feel like we're all approaching the same problem from eight different angles, and some of that is necessary complexity, and some of that is just completely avoidable extra work, so.

C

D

Yeah, ko just does the dumb thing and docker loads a tarball. I think we don't care if it's slow, because we prefer you to push to the registry. I completely understand why pack would want to optimize that, but I think ko's answer has just been YOLO: it's slow because you want to use Docker. It's your fault.