
Description

There are so many things you can track and observe in Kubernetes. The big question is - which one is essential that I should pay attention to immediately? In this talk, we will discuss and share our opinions, observations, and how to get started.

A

Hello, everyone, welcome to Kubernetes Community Days. My name is Steve. I'm the developer relations lead here at New Relic. What I do is manage the relationship with developers and engineers when they use the New Relic platform. Today, with my topic, I'm going to share my point of view on the top 10 metrics to observe in the world of Kubernetes.

A

What you see right here is a very popular comic strip from dilbert.com. Many engineers are aware of it, because sometimes when we take a break or just browse the internet, we want to find something funny, and sometimes we come across this particular strip. What I like about this particular comic strip is what Dilbert is actually talking about: hey, you know, it looks like solving problems...

A

It's not as easy, because moving to things like containers, microservices, cloud, and even Kubernetes doesn't mean the problems will magically go away. In fact, sometimes they get amplified, because of the layers of abstraction involved. Kubernetes is probably one of the greatest technologies released within the decade. Kubernetes is awesome, but at the same time it's quite scary, because it is so abstracted compared to what we were used to that, when something goes wrong, it's very hard to pinpoint what you need to look for.

A

So this sounds very familiar to us. What you see right here is the Evergreen container ship which, if you remember, blocked the Suez Canal and caused logistics issues around the world. It's the same in Kubernetes, because within one node you have a lot of containers and pods running, and sometimes when something goes wrong with a particular container or pod, it might crash the whole environment, or other pods get affected too. In fact, what I really like about this particular image...

A

So what I want to do right here is make things even harder, especially within a Kubernetes environment. Did you know there are five areas that you can actually get telemetry from? Again, this is not Kubernetes' fault. It's just that Kubernetes is an open source project, so everyone adds their little modules. Everyone has a different point of view, and every module is designed to solve a specific problem. So, for example: cAdvisor, Metrics Server, the API server, node exporter, and kube-state-metrics.

A

Yes, Kubernetes has hundreds of thousands, but if I can only pay attention to maybe the first 10, what would they be? This is my point of view, and from there we can continue moving forward. So, first and foremost, probably the most common problem that you always see when things go wrong in Kubernetes is misconfiguration. Misconfiguration happens whether we like it or not, and there's really no magical solution that solves it. Sometimes it's just fat fingers.

A

So what we can do in the world of Kubernetes is use a combination of source control and a really good deployment pipeline, which you may already have in a CI/CD tool, maybe something like Jenkins. But the key concept is actually the smoke test. You want to run regular smoke tests in different environments so that, when you reach production, everything is okay. There are several techniques for doing it. One enterprise vendor, which many people love, is LaunchDarkly.

A

However, there's one thing you need to be mindful of: running tests in your staging, development, or any other environment that's not production will increase costs. However, I'd rather pay that and capture those issues before we go into production, because one thing I think we can all agree on as engineers is that during the pandemic, many engineers are burnt out. There's mental load, there's a lot going on, not to mention Kubernetes: Kubernetes is so complex and distributed.

A

If you haven't really explored that, there's one thing you need to be mindful of: Argo CD is best paired with GitOps. If you're very new to GitOps, GitOps is using Git as source control, as what we call the single source of truth. We deploy all configurations and applications to the environment from there.

A

So let's move forward and look at this from a configuration point of view. Where can you find them? I'm going to use GitLab as an example, but again, a lot of tools do this. I use GitLab because they probably have one of the best UIs that I've seen. From there, you can visualize your deployment pipeline. Within each pipeline, you have different tasks or jobs that are going to execute, and you can see the status of each task.

A

One thing that I like about GitLab, and this is probably the first metric that I want to nominate, is pipeline status. Is it running, failed, passed, or successful? For all the deployment pipelines I'm running, regardless of which environment I'm running them in, I want to know how many failed, passed, or are pending. That's actually quite good, and that's the metric I want to nominate, to capture those issues as early as I can. So, let's mention, in 2022...
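As a rough illustration of the pipeline status metric, here is a minimal Python sketch that tallies pipelines by status. The records, field names, and status strings are hypothetical stand-ins; in practice they would come from whichever CI/CD tool you use.

```python
from collections import Counter

# Hypothetical pipeline records; real ones would come from your CI/CD tool's API.
SAMPLE_PIPELINES = [
    {"id": 101, "environment": "staging", "status": "success"},
    {"id": 102, "environment": "staging", "status": "failed"},
    {"id": 103, "environment": "production", "status": "running"},
    {"id": 104, "environment": "production", "status": "success"},
    {"id": 105, "environment": "dev", "status": "pending"},
]


def count_by_status(pipelines):
    """Return a Counter mapping each status to the number of pipelines in it."""
    return Counter(p["status"] for p in pipelines)


def failure_ratio(pipelines):
    """Fraction of finished pipelines (success or failed) that failed."""
    counts = count_by_status(pipelines)
    finished = counts["success"] + counts["failed"]
    return counts["failed"] / finished if finished else 0.0


if __name__ == "__main__":
    print(dict(count_by_status(SAMPLE_PIPELINES)))
    print(f"failure ratio: {failure_ratio(SAMPLE_PIPELINES):.2f}")
```

Tracking the counts per environment as well as overall would show whether failures are being caught before production.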

A

So, if you're very new to GitOps, you want to explore it. What I've done here is include all the links and references, including the metrics that GitOps, sorry, that Argo CD can expose; it's all available to you right here. Now, let's talk about compute resources. A top 10 metrics list wouldn't be complete if I didn't talk about Kubernetes compute resources. If you've ever worked with Kubernetes, you know Kubernetes takes a lot of compute resources. This is actually quite common. The reason is that each node is relatively huge.

A

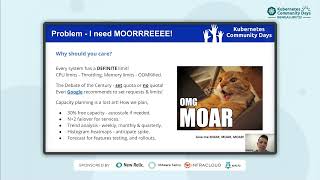

You have a high amount of virtual CPU and virtual RAM, and you stack a lot of containers and pods in it. Therefore, especially for practitioners and administrators of Kubernetes, you pay a lot of attention to the limits that you have. The reason is pretty simple: every system has a definite limit, and there are two common problems that you always see with compute resources. First is CPU limits: once you hit the limit, you start to see applications throttling. Number two: when you start hitting memory limits, you start to see...

A

But arguably, what I call the debate of the century, which a lot of Kubernetes practitioners will actually debate, is: should you set quotas or limits for your requests within the environment? Should you set a quota, or let it run free with no quota? In fact, Google even recommends this. There's really no right or wrong answer, and I will share an article that really talks about the pros and cons of setting quotas. But what I have noticed is that a lot of engineers, when they set the quota,

A

They don't really have a good reason why they set it in the first place. This is usually because of some advice or some article they've seen online, without a true understanding of why you'd want to set a quota in the first place. But when you really think about it, the reason you need quotas is capacity planning. Now, especially for engineers: if you can do capacity planning well, you deserve a raise. The reason is that capacity planning in the world of Kubernetes is really, really hard. Here at New Relic,

A

Our SRE engineers spend a lot of time on capacity planning. Let me show you some of the tips that we use internally. First and foremost, our number one rule is that we never go above 70% and we make sure we always have 30% free capacity in the environment, and we also leverage things like autoscaling. For us, being a full-stack observability platform, when our platform goes down, customers don't have visibility into their environments. We need to make sure that we have sufficient capacity in our Kubernetes clusters.

A

Second, most people will do N+1 failover. We're very aggressive, because we need to plan for the worst-case scenario: we do N+2. This increases cost, but it's a necessary step to make sure our stuff is up and running. From there, we do trend analysis: weekly, monthly, even quarterly. We want to understand the usage pattern so we can forecast effectively.
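The two rules of thumb above (stay at or below 70% utilization, and keep N+2 spare nodes) can be turned into a back-of-the-envelope calculation. The following Python sketch is only an illustration under simple assumptions: it sizes on CPU alone, assumes identical nodes, and the function and parameter names are made up for this example.

```python
import math


def nodes_needed(workload_cpu_cores, node_cpu_cores,
                 max_utilization_pct=70, spare_nodes=2):
    """Estimate node count so usage stays under the cap, plus failover spares.

    Integer percentages are used to avoid floating-point rounding surprises.
    """
    # Nodes required so that the workload fits within the utilization cap.
    base = math.ceil(workload_cpu_cores * 100 / (node_cpu_cores * max_utilization_pct))
    # N+2: add spare nodes on top of the base count.
    return base + spare_nodes


def utilization_pct(used_cores, total_cores):
    """Current utilization as a percentage of total capacity."""
    return 100 * used_cores / total_cores


if __name__ == "__main__":
    # Hypothetical workload: 100 cores of demand on 16-core nodes.
    print(nodes_needed(100, 16))
```

With these hypothetical numbers, 9 nodes keep utilization under the cap and 2 more cover failover. Memory would need the same treatment, and the larger of the two results would apply.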

A

So where do you find them? What I recommend is that you go to the Metrics Server. That's the kubectl command. You can get CPU metrics and memory metrics, which are very important, especially for horizontal and vertical scaling.
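The transcript does not name the command on the slide; `kubectl top pods` is one command that reads CPU and memory usage from Metrics Server. As a hedged sketch, the Python below parses text in the shape of that command's output. The pod names and numbers are invented, and the parser only handles the `m`, `Ki`, `Mi`, and `Gi` suffixes.

```python
# Illustrative text in the shape of `kubectl top pods` output (values invented).
SAMPLE_TOP_OUTPUT = """\
NAME          CPU(cores)   MEMORY(bytes)
checkout-7d9  250m         512Mi
search-5c4    1200m        2Gi
cart-6b8      15m          64Mi
"""

_MEMORY_FACTORS = {"Ki": 1024, "Mi": 1024 ** 2, "Gi": 1024 ** 3}


def parse_cpu(quantity):
    """Convert a CPU quantity such as '250m' (millicores) or '2' to cores."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000
    return float(quantity)


def parse_memory(quantity):
    """Convert a memory quantity such as '512Mi' to bytes."""
    for suffix, factor in _MEMORY_FACTORS.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor
    return int(quantity)


def parse_top(text):
    """Parse the table into a list of dicts, skipping the header row."""
    rows = []
    for line in text.strip().splitlines()[1:]:
        name, cpu, memory = line.split()
        rows.append({
            "name": name,
            "cpu_cores": parse_cpu(cpu),
            "memory_bytes": parse_memory(memory),
        })
    return rows


if __name__ == "__main__":
    busiest = max(parse_top(SAMPLE_TOP_OUTPUT), key=lambda row: row["cpu_cores"])
    print(busiest["name"], busiest["cpu_cores"])
```

Comparing these usage figures against each pod's requests and limits is what feeds the scaling decisions mentioned above.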

Now I want to draw your attention to this particular slide right here, on running with no CPU limits. That's a fantastic article! I recommend that you spend time going through it and reading it to understand: how do you do tests and run your hypothesis?

A

Where should you set a quota, and when shouldn't you set a quota? It's a brilliant article that goes through the process and says: hey, here's what we found. There are some areas where we shouldn't set a quota, because we want to burst. What you see right here is actually a histogram heat map, where we can see here in New Relic roughly which time of the day which pod or service is going to have a spike. This is what we do here at New Relic. I did mention in my statement earlier:

A

If you know how to do capacity planning, you deserve a raise. However, I also found a fantastic resource, especially for people who look after Kubernetes, where capacity planning for Kubernetes is made easy: a fantastic guide by learnk8s.io. It's a visual guide where you just need to punch in a few values, and from there you can adjust your values with a slider. It gives you a really nice visual representation of the capacity forecast that you're going to need for your Kubernetes cluster.

A

As mentioned, that's the link, which I have included for you. This is a fantastic resource that I recommend you spend time playing around with. Now, let's talk about networks. If you've worked with Kubernetes long enough, you probably know the network is a very big headache. In fact, when I try to triage a network-related issue, quite often I need to drink 10 cans of Red Bull and really go into the logs to find out what's going on. Is it you? Is it that person? Is it the infrastructure? Is it the app?

A

Is it the external network? What's going on? Troubleshooting the network is not fun at all. There's a good amount of resources available online in the world of Kubernetes that talk about what you need to observe in the network. The one that really stands out the most is CoreDNS. Starting from version 1.12, CoreDNS is the recommended DNS server, which does DNS resolution, and the number one most common problem that we always see is DNS resolution errors. Sometimes it could be a timeout, or it could be due to intermittent network connectivity issues.

A

So that's what I would nominate, and I suggest that you look after CoreDNS too. Now, what about Kubernetes Ingress? Ingress, yes, it's a big problem too. I've seen and spoken to many people for whom Kubernetes Ingress caused a big problem. However, as an industry we're getting better, and the solutions are getting better. There are a lot of guides and community suggestions out there, and I'll talk a bit more about where you want to look from an Ingress point of view.

A

So, from a network point of view, starting with DNS: you want to go to CoreDNS, which provides fantastic documentation. These are the two metrics that I would nominate as part of my top 10: errors and health within CoreDNS itself. You can see all the different metrics that it exposes to you, and that's the kubectl command that you can run to check the logs for CoreDNS.
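To make the "errors" half of that nomination concrete, here is a hedged Python sketch that reads Prometheus-format text and computes the share of DNS responses that were SERVFAIL. It assumes the metric is named `coredns_dns_responses_total` and carries an `rcode` label, which is my understanding of recent CoreDNS releases; older versions used different names, so check your own `/metrics` output. The sample values are invented.

```python
import re

# Invented sample in Prometheus text exposition format.
SAMPLE_METRICS = """\
# TYPE coredns_dns_responses_total counter
coredns_dns_responses_total{rcode="NOERROR",server="dns://:53",zone="."} 9500
coredns_dns_responses_total{rcode="NXDOMAIN",server="dns://:53",zone="."} 400
coredns_dns_responses_total{rcode="SERVFAIL",server="dns://:53",zone="."} 100
"""

_SAMPLE_RE = re.compile(r'^(\w+)\{(.*)\}\s+([0-9.eE+-]+)$')
_LABEL_RE = re.compile(r'(\w+)="([^"]*)"')


def responses_by_rcode(text, metric="coredns_dns_responses_total"):
    """Sum the counter per rcode label, ignoring comments and other metrics."""
    totals = {}
    for line in text.splitlines():
        match = _SAMPLE_RE.match(line.strip())
        if not match or match.group(1) != metric:
            continue
        labels = dict(_LABEL_RE.findall(match.group(2)))
        rcode = labels.get("rcode", "UNKNOWN")
        totals[rcode] = totals.get(rcode, 0.0) + float(match.group(3))
    return totals


def servfail_ratio(text):
    """Fraction of all DNS responses that were SERVFAIL."""
    totals = responses_by_rcode(text)
    all_responses = sum(totals.values())
    return totals.get("SERVFAIL", 0.0) / all_responses if all_responses else 0.0


if __name__ == "__main__":
    print(f"SERVFAIL ratio: {servfail_ratio(SAMPLE_METRICS):.2%}")
```

Because these are counters, a monitoring system would normally look at the rate of change over a time window rather than the raw totals used here.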

A

What I want to do is look for early symptoms, so that when there's a problem with CoreDNS, I can quickly jump on it. The next one I want to talk about is Ingress. Ingress, yes: the good thing is that as an industry, the providers that you see right here, NGINX, Traefik, and Kong, are all getting better and better.

A

So just pick one of them. If you ask me personally, the most common one I've seen is NGINX, because people are very used to it. If I can only pick one, again, I'll pick the error rate for Ingress. Ingress is probably the most common one, and that's what I'm going to nominate as part of my top 10.

A

Let's talk about pods. If you've ever worked with Kubernetes, you know that your pods get stuck quite often. But here's the problem: pods get stuck for many reasons. Sometimes it could be insufficient resources. Sometimes you reach a limit. Sometimes it's not getting the right image. Misconfiguration; there are so many reasons. So the problem with pods is...

A

Yes, error messages. For us as an industry, let's be honest, engineers: we know we don't do a good job, but Kubernetes is actually getting better. Now, one thing I will also mention: in the world of Kubernetes, it's very common for engineers to go into the logs, because that's how we figure out what's going on. But you have to be mindful, because if you're not careful and you try to collect every log, it's going to get really expensive. Containers, nodes, pods, the application, the infrastructure: they all generate logs.

A

In fact, I always joke that anyone who manages logs sometimes gets a heart attack just looking at the number of logs they get, because it's just a lot. So my suggestion, especially for pods: if possible, look for error logs and push those into the logging platform of your choice.

A

So where do you find them? Where do you find these specific details? You find them in kube-state-metrics, under the pod metrics. So here again are two more nominations for my top ten: status phase and status reason. These are relatively easy to get, because kube-state-metrics is quite friendly to use, and from there I can push them into a specific monitoring tool, open source like Prometheus, or even New Relic.
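As an illustration of the status phase metric, the Python sketch below scans text in the shape of the `kube_pod_status_phase` series from kube-state-metrics, where a value of 1 marks the pod's current phase, and lists pods that are not Running or Succeeded. The namespace, pod names, and values are invented, and real output carries additional labels.

```python
import re

# Invented sample shaped like kube-state-metrics output.
SAMPLE_KSM_OUTPUT = """\
kube_pod_status_phase{namespace="shop",pod="cart-6b8",phase="Running"} 1
kube_pod_status_phase{namespace="shop",pod="cart-6b8",phase="Pending"} 0
kube_pod_status_phase{namespace="shop",pod="search-5c4",phase="Pending"} 1
kube_pod_status_phase{namespace="shop",pod="search-5c4",phase="Running"} 0
kube_pod_status_phase{namespace="shop",pod="batch-9x1",phase="Failed"} 1
"""

_SAMPLE_RE = re.compile(r'^kube_pod_status_phase\{(.*)\}\s+(\S+)$')
_LABEL_RE = re.compile(r'(\w+)="([^"]*)"')
HEALTHY_PHASES = {"Running", "Succeeded"}


def pods_needing_attention(text):
    """Return (namespace, pod, phase) for pods whose current phase is unhealthy."""
    flagged = []
    for line in text.splitlines():
        match = _SAMPLE_RE.match(line.strip())
        if not match:
            continue
        labels = dict(_LABEL_RE.findall(match.group(1)))
        is_current_phase = float(match.group(2)) == 1.0
        if is_current_phase and labels.get("phase") not in HEALTHY_PHASES:
            flagged.append((labels.get("namespace"), labels.get("pod"), labels.get("phase")))
    return flagged


if __name__ == "__main__":
    for namespace, pod, phase in pods_needing_attention(SAMPLE_KSM_OUTPUT):
        print(f"{namespace}/{pod} is {phase}")
```

The status reason series could be handled the same way to show why a pod is stuck, not just that it is.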

A

It's totally up to you. From there, once you start seeing a specific issue, you can use kubectl describe to describe the pod. And the good thing, again, is that there are a lot of good resources available out there. It saves you some time. This is my favorite resource that I like to use online. I've included this link so that you can go and read a bit deeper.

A

Now, let's move on to the control plane. I want to warn you: there are a lot of modules in the control plane. The question is which ones you focus on first, and that's my focus right here. Within the control plane, out of everything that I'm going to look after, I'm going to focus predominantly on two areas: the API server and etcd.

A

I'm not saying the others are not important. It's just that I'm going to place much more importance on these two. Why? The API server is the API gateway for everything that's related to Kubernetes. If the API server is down, components can't communicate, and probably the number one thing that causes problems for the API server is network issues that occur within the cluster.

A

It could be a firewall. It could be an intermittent network issue. There could be a lot of things going on underneath, so I will make sure that the API server is up and running. The other one is etcd. etcd is a key-value database. It's really important in the world of Kubernetes because it stores the configuration, the actual state, and the desired state of the cluster. Now, it being a database, and especially if you've ever worked with databases, you know to be mindful and not forget about storage.

A

Anything that's database related can grow really, really quickly. Also, one suggestion that always comes up in all the other sessions I've been to, and even when speaking to customers: remember to back up your etcd, especially early. The control plane is arguably the most important part of Kubernetes, and there's a good amount of guides, even from the official docs site. That's the link for you, and these are the two that I focus on compared to the others. So the next question is: where do you find them? You need to be mindful that since Kubernetes 1.16, some names have changed.

A

So these are my top two again, the two metrics that I nominate as part of my top ten. One is readyz, with the z, for the API server, and the other is for etcd, where I'm looking at health. What I'm looking for here is just a simple ping check or health check, which is good enough to tell whether etcd and the API server are good. I usually funnel this into a logging platform and put it up on the big-screen TV.
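A minimal Python sketch of interpreting those two health checks follows. It assumes the response bodies have already been fetched: the verbose form of the API server's `/readyz` endpoint, which lists one check per line with `[+]` or `[-]`, and etcd's `/health` endpoint, which returns a small JSON document. The sample bodies are invented, and fetching them (TLS, authentication) is left out.

```python
import json

# Invented sample shaped like the body of GET /readyz?verbose on the API server.
SAMPLE_READYZ = """\
[+]ping ok
[+]log ok
[-]etcd failed: reason withheld
[+]informer-sync ok
readyz check failed
"""


def failed_readyz_checks(verbose_body):
    """Return the names of checks marked as failed ('[-]') in a verbose body."""
    failed = []
    for line in verbose_body.splitlines():
        if line.startswith("[-]"):
            # "[-]etcd failed: reason withheld" -> "etcd"
            failed.append(line[3:].split(" ", 1)[0])
    return failed


def etcd_is_healthy(health_body):
    """Interpret an etcd /health JSON body; accepts "true" or a JSON boolean."""
    value = json.loads(health_body).get("health")
    return value is True or value == "true"


if __name__ == "__main__":
    print("failed checks:", failed_readyz_checks(SAMPLE_READYZ))
    print("etcd healthy:", etcd_is_healthy('{"health": "true"}'))
```

Turning each result into a simple up or down value makes it easy to put on a dashboard, which is all the simple health check described above needs.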

A

I want to make sure these two components are working very well. Now, what I've done so far is tell you 10 different metrics from an infrastructure point of view. However, especially for people who look after Kubernetes, you may not want to admit this, but let's be very honest: the application is the heart and mind of the Kubernetes cluster. Without applications, there's really no need for Kubernetes, because Kubernetes is there to make sure that applications are up and running.

A

Therefore, I want to take a moment to talk more about the application side, because as a Kubernetes administrator or practitioner, you need to have some knowledge of what's going on in the application. So let me talk about apps, and let me add two more metrics to my top ten. First and foremost, in the world of Kubernetes, the ideal architecture is a stateless architecture.

A

Stateless is very, very hard to achieve. The reason is that maybe you can build your application or your API layer using a stateless architecture, but when it comes to databases or identity and access management, it's usually a stateful application. It's very hard to get there, so try to monitor the complex services. Also, each programming language, each framework, each library might change every month. It makes things really, really hard.

A

A lot of people pay a lot of attention to pets and cattle, or sometimes people use the word cow, where usually it's a stateless application. If the container is down or the application is down, people just destroy it and redeploy, and everything is ready to go again. Nothing is irreplaceable. That's the pets and cattle description, and it's still valid here in 2022. I still suggest it, and we should still strive for it: if you want to fully leverage Kubernetes, you want to

A

Go to a stateless architecture. There's a fantastic article available to you, which I included in the links, that you can go through. Within an application, if you're very new to this or just need somewhere to start, I would recommend the Google SRE (Site Reliability Engineering) four golden signals: latency, traffic, errors, and saturation. Now, of the four of them, which one do you pick? For me,

A

The 95th percentile latency. The 95th percentile latency is the maximum latency, in seconds, for the fastest 95% of requests. If you're very new to this because you're coming from averages, p95 might give you a heart attack when you've just started, because your latency might be very long and you've got to take some time to do performance optimization. However, don't give up. Don't give up, because it's better that you prepare for the worst that your customers are going through. The next one I want to go through is error rate.

A

Error rate is pretty straightforward. Either you use an HTTP status code like 404 or 500, or maybe you build your own error status. That's a really good one, and I want to start monitoring it as a symptom.
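To show why p95 can look alarming next to an average, and how an error rate falls out of status codes, here is a small Python sketch. The latency and status-code samples are invented, the percentile uses the nearest-rank method (one of several common definitions), and counting every code from 400 upward as an error is an assumption you may want to change.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def error_rate(status_codes, is_error=lambda code: code >= 400):
    """Fraction of requests whose status code counts as an error."""
    errors = sum(1 for code in status_codes if is_error(code))
    return errors / len(status_codes)


# Invented samples: most requests are fast, two are slow.
LATENCIES_SECONDS = [0.1] * 18 + [0.2, 3.0]
STATUS_CODES = [200] * 17 + [404, 500, 503]

if __name__ == "__main__":
    mean = sum(LATENCIES_SECONDS) / len(LATENCIES_SECONDS)
    print(f"mean latency: {mean:.3f}s")
    print(f"p95 latency:  {percentile(LATENCIES_SECONDS, 95)}s")
    print(f"error rate:   {error_rate(STATUS_CODES):.0%}")
```

In this sample the mean is pulled up by a single 3-second request, while p95 reports the slowest latency among the fastest 95% of requests, which is the definition given above.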

One thing to note: metrics are a summarization, an aggregation. Things like latency and error rate don't tell you the full picture. You still need to use what we call tracing, where you see the traces that occur across your microservices.

A

Let's say service A connects to service B, and B connects to C. What you're using there is tracing, and also logs. One thing that's really apparent in the world of Kubernetes is that without logs, engineers can't figure out what's going on. Again, just be mindful that metrics, traces, and logs all have their purpose; they have their own pros and cons. Okay, now let's move forward with a quick recap of what we've covered so far. I told you my top ten, so let's recap. Number one: pipeline status.

A

Number three, number four, and number five, which are DNS errors and health, and the Ingress error rate. One, two, three, four, five, six, all right. Then, after that, I have my pods, which get stuck, so what I want to look at is the status phase and status reason, because the earlier I know what's going on, the better. Lastly, to wrap it up, number nine and number 10, which are the API server readyz and the etcd health.

A

Lastly, I added two more for my application layer, which are p95 latency and error rate. So this is my top ten plus two, just to give you some ideas. Of course, you're going to have an opinion and your own point of view; no problem. We can chat in the Q&A, or just feel free to reach out to me and we can talk a bit more. Now, let's do a recap. One thing that I'm also very grateful for:

A

The reason I know all this, and that we can talk about it, is that a lot of companies wrote about it. They share it at conferences and events like this. One thing that I really want to suggest, especially for engineers, is to make a habit of having a post-mortem, even just five minutes. Just before you close the meeting, understand what went on, learn from your mistakes, and start planning the remediation. That's how you get better.

A

I know it's hard; it's not an easy conversation, but it's better to do it. Now, in the world of Kubernetes, I think for us engineers, we have to start accepting that it's okay for things to fail. That's why Kubernetes was designed in the first place: once something goes wrong, we can quickly redeploy it through the orchestration layer. What you want is to have a plan B, or what I call redirection.

A

Also, the good thing about a Kubernetes community like this is that we're getting better with Kubernetes adoption, but we're also good at sharing what didn't work well and sharing operational flows. I'll talk about what we call a workflow diagram that I found, which I'm going to share with you later on. Now, in saying that, Kubernetes can be very complex. It's very tempting, but just pushing everything into what I call a managed Kubernetes service is not perfect because, as a Kubernetes administrator, you still need to understand the fundamentals.

A

The problem is that the more abstracted it is, the more orchestration is involved. We have to understand all the smaller things underneath it. Here at New Relic, what we're trying to do is make our service more aware of the context, the relationships, and the dependencies between the application, Kubernetes, and the nodes. I'll show you a little screenshot, and I'll talk a bit more about the resources that you can view later on.

A

So, going back to the Kubernetes troubleshooting guide: there are some fantastic guides that I saw online, not only available through the official channels but also, again, from learnk8s.io. They have a fantastic flow chart that you can go through, which talks step by step about where they suggest you start. Of course, you can change it according to your preference.

A

Lastly, just to wrap it up: what we have here in New Relic is a Kubernetes cluster explorer. We give the practitioner a high-level overview of what's going on in the cluster, and give you the ability to drill down into the micro level, down to the application and the pod level. Also, here at New Relic, we embrace open source. In fact, a lot of customers push open source data to us.

A

So what we do is host the Prometheus data on your behalf, and you can continue to use what you really love, which is Grafana and PromQL. What we do is offer customers a choice: open source data in New Relic, or the curated agent and dashboards that we provide. Again, the links are all available to you if you want to look a bit more. Just to wrap it up: I hope you enjoyed this session. I know there are just so many things that we can cover.

A

I also included a special page for Kubernetes Community Days, where you can sign up, get New Relic swag such as a t-shirt, and also get your free-forever account. It's all available to you right there. As mentioned earlier, one thing I always like about Kubernetes: it's always good to go to the source. So, with that,

A

What I've included right here is all the sources that I mentioned earlier, like Metrics Server, the API server, and kube-state-metrics. I also want to make your life even easier: if you're interested in trying New Relic, all the links are available to you so that you can try it out, read up, and try pushing Kubernetes data into the platform. With that, thank you so much for your time. If you have any questions, feel free to put them in the chat window, or just feel free to reach out to me.