From YouTube: Geo-distributed Metadata Management System

Description

Don’t miss out! Join us at our upcoming event: KubeCon + CloudNativeCon Europe in Amsterdam, The Netherlands from April 17-21, 2023. Learn more at https://kubecon.io The conference features presentations from developers and end users of Kubernetes, Prometheus, Envoy, and all of the other CNCF-hosted projects.

A

So, let's first take a look at the current cloud native metadata management system, etcd, which is the most popular solution. First, we will discuss the impact of Sky Computing on distributed systems. Then we will show how we deploy the etcd service in the multi-cluster scenario and analyze the disadvantages of these deployments.

A

In most cases, message passing between servers is not a big deal, as it usually takes less than one millisecond to send a message unless the throughput is very high. As a result, the network is usually not the bottleneck of the whole system. But the situation changes when Sky Computing comes: more and more cross-cloud communication appears, and it is usually unavoidable. Sending a message in that case usually takes up to hundreds of milliseconds, and the bandwidth is limited.

A

However, the performance of the etcd service itself drops quite a lot, to an almost unacceptable level. So what's wrong? The answer is the etcd service's internal message passing. Usually these messages are triggered by the underlying consensus protocol, Raft, which is used for data consistency. Now is a good chance to take a look at the Raft protocol.

A

Both channels are cross-cluster, so it suffers the high latency twice. Now we know etcd cannot work well in multi-cluster or geo-distributed scenarios. Can we find a way to work around this? Xline is our answer to this question.

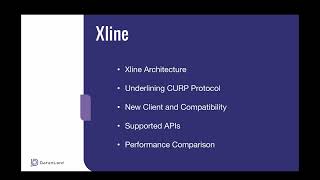

I will first show the architecture of Xline and then analyze the underlying CURP protocol. To work with the new protocol, we also need to consider the client-side SDK and its compatibility.

A

If we want to achieve better performance, we need to move part of the consensus protocol to the client side. We will show that later. The green part in the graph is the main innovation of Xline: the consensus protocol, which is called CURP. CURP is a geo-distributed-friendly protocol. But how? Let's discuss it in a top-down way.

A

Let's take KV as an example. If two requests change the values of different keys, the order doesn't matter: we will always get the same final result. So exchanging the order should be allowed in this case. But if the two requests conflict with each other by changing the same key, the order does matter. For the previous scenario, the ordering property can be skipped. The next question is: how do we know whether a request can be reordered or not?
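The KV example above can be checked with a few lines of Python. This is only an illustrative sketch: the store is a plain dictionary, and `apply_puts` and `order_matters` are hypothetical helper names, not part of etcd or Xline.

```python
from itertools import permutations


def apply_puts(puts):
    """Apply (key, value) writes in order to an empty store and return it."""
    store = {}
    for key, value in puts:
        store[key] = value
    return store


def order_matters(puts):
    """Return True if some ordering of the writes gives a different final store."""
    results = [apply_puts(order) for order in permutations(puts)]
    return any(result != results[0] for result in results)


different_keys = [("a", 1), ("b", 2)]  # two writes to different keys
same_key = [("a", 1), ("a", 2)]        # two writes to the same key

print(order_matters(different_keys))  # False
print(order_matters(same_key))        # True
```

Writes to different keys give the same final store in either order, while writes to the same key do not, which is the property the talk relies on.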

A

The answer is a speculative execution pool. Every server checks the incoming request: if it conflicts with requests in the pool, the server replies to the client, telling it there is a conflict. Otherwise, the request is inserted into the pool. When we hit a conflict, the backend protocol helps. The request will also be sent to the backend protocol. That protocol will broadcast the request to all servers and decide the global ordering. The backend protocol can be any consensus protocol, such as Raft or Multi-Paxos.
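The conflict check described above might look roughly like the following. It is a minimal sketch, not Xline's implementation: `SpeculativePool`, `try_insert`, and `remove` are hypothetical names, a request is reduced to the single key it touches, and removing an entry once the backend protocol has ordered it is an assumption that the talk does not spell out.

```python
class SpeculativePool:
    """Hypothetical per-server pool of in-flight requests, indexed by key."""

    def __init__(self):
        self._in_flight = {}  # key -> id of the request currently holding it

    def try_insert(self, request_id, key):
        """Record the request and return True if no in-flight request
        touches `key`; return False to report a conflict otherwise."""
        if key in self._in_flight:
            return False
        self._in_flight[key] = request_id
        return True

    def remove(self, key):
        """Assumption: drop the entry once the backend protocol has ordered it."""
        self._in_flight.pop(key, None)


pool = SpeculativePool()
first = pool.try_insert("req-1", "a")   # accepted, pool was empty
second = pool.try_insert("req-2", "b")  # accepted, different key
third = pool.try_insert("req-3", "a")   # conflict with req-1
pool.remove("a")
fourth = pool.try_insert("req-4", "a")  # accepted again after removal
print(first, second, third, fourth)  # True True False True
```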

A

Here, for simplicity, we choose Raft. We can see the backend protocol is a second barrier, making sure every request eventually has its own position in the global ordering. To summarize the CURP protocol: it tries the request optimistically first, and if that fails, it lets another consensus protocol handle the rest.

A

In our case, the consensus protocol is Raft. From the above description, we can tell that the CURP protocol can complete the consensus in one round trip if there are no conflicting requests. Even in the worst case, two round trips are enough. As a CURP protocol user, Xline should provide additional information to tell whether two requests conflict with each other or not. If users are using the etcd service, they can switch to Xline easily, because Xline provides etcd-compatible APIs. They are listed in the table. We have implemented the KV, Lease, and Watch APIs, and the others are in progress.

A

The completed parts are the most useful ones, so if you want to have a taste, don't hesitate. We also provide two versions of the client SDK. If users don't want to change their code, the original etcd SDK works well with Xline. The only drawback of this option is that the performance is also the same as etcd, because two round trips of message passing happen. If you are looking for the best performance of Xline, you should use the Xline SDK, whose programming interface is very similar to etcd's.

A

With limited code changes, your application should work with Xline. In the Xline SDK, the client side is also a part of the CURP protocol. It sends a request to all the known servers, so one round trip is enough for lucky requests. Though we know Xline should theoretically outperform etcd in the multi-cluster scenario, we have to demonstrate it with tests and benchmarks.
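The client-side decision described above can be sketched as follows. This is a simplified model under stated assumptions, not the Xline SDK: `propose` and `fast_path_succeeds` are hypothetical names, the server replies are passed in as a dictionary instead of arriving over the network, and `required_accepts` is a placeholder because the talk does not say how many servers must accept.

```python
def fast_path_succeeds(replies, required_accepts):
    """Return True if enough servers accepted the request speculatively.

    `replies` maps a server name to True (accepted) or False (conflict).
    `required_accepts` is a placeholder threshold, not taken from the talk.
    """
    accepts = sum(1 for accepted in replies.values() if accepted)
    return accepts >= required_accepts


def propose(replies, required_accepts):
    """Return the path a request takes and the round trips it costs."""
    if fast_path_succeeds(replies, required_accepts):
        return ("fast", 1)  # a lucky request: done after one round trip
    return ("slow", 2)      # wait for the backend protocol: two round trips


lucky = {"s1": True, "s2": True, "s3": True}
unlucky = {"s1": True, "s2": False, "s3": False}
print(propose(lucky, required_accepts=3))    # ('fast', 1)
print(propose(unlucky, required_accepts=3))  # ('slow', 2)
```

The one and two round-trip costs are the figures given in the talk; everything else in the sketch is illustrative.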

A

Here's how we built the benchmark environment. We simulated the multi-cluster network latency, making the inter-server communication latency 50 milliseconds. At the same time, the client should not be in the same cluster as the servers: the communication latency between the client and the servers is 50 milliseconds or 75 milliseconds.

A

In the regular case, clients randomly pick a key from a key space of 100,000 keys. As a result, there's almost no key collision in this scenario. Xline doubles the throughput, as expected, because the latency is halved. In the real world, it's almost impossible to hit the worst case. Now we have demonstrated that Xline is geo-distributed friendly.
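To see why a key space of 100,000 keys gives almost no collisions, here is a rough back-of-the-envelope calculation. It assumes keys are drawn uniformly and independently, and the concurrency levels in the loop are made-up values for illustration, since the talk does not state how many requests were in flight.

```python
def conflict_probability(key_space, in_flight):
    """Chance that one uniformly random key matches at least one of
    `in_flight` other keys drawn independently from the same key space."""
    if key_space <= 0 or in_flight < 0:
        raise ValueError("key_space must be positive, in_flight non-negative")
    return 1.0 - (1.0 - 1.0 / key_space) ** in_flight


for in_flight in (1, 10, 100):  # hypothetical concurrency levels
    p = conflict_probability(100_000, in_flight)
    print(f"{in_flight:>3} in flight: {p:.4%}")
```

Even with 100 other requests in flight, the chance of a conflict in this model stays below 0.1%.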

A

Here is our roadmap. Last year we implemented the major APIs of etcd for Xline. We plan to add more features this year, such as persistent storage support, snapshot support, and cluster membership change support. Many small features are not listed here, but one thing is clear: in 2023, our main task is to make Xline feature complete. Next year, we want to make the system more robust, so we plan to bring in chaos engineering to help validate the system.