
From YouTube: Secure supply chains - Tekton chains


A

Hey everyone. Thank you for joining today. Good afternoon, good morning, wherever you're joining from. Today we're going to talk about Tekton Chains. Let me start off by introducing myself. My name is Park Patel, and I'm a DevOps engineer at BoxBoat, an IBM company. Initially it was just BoxBoat, but we recently got acquired by IBM. BoxBoat specializes in DevSecOps. We do a lot of containerization of applications, managing cloud infrastructure, and utilizing various Kubernetes platforms in various cloud environments, as well as OpenShift, and of course various tools: Helm, OPA, any kind of tool you can think of that's related to DevOps. The main focus for us at BoxBoat recently has been security. As you know, software security, and especially software supply chain security, has become very important recently with the SolarWinds attacks and everything.

So this presentation is going to focus on how you can mitigate some of those risks. Let's get started with Tekton. Starting off, let's just talk about what Tekton is. Tekton consists of a lot of different parts, but at its core it's an open source tool used to create CI/CD systems across any cloud provider or on-prem system. Developers can use it to build pipelines that run tests and deploy different images and applications into a Kubernetes cluster, or whatever else you need. Tekton consists of multiple projects, so we'll walk through each of them, and I'll go more in depth as I go through the presentation, as well as in the demo coming up later on. The first one is Tekton Pipelines.

This is basically the CI/CD system that you build your pipelines with. It consists of Tasks, TaskRuns, Pipelines, PipelineRuns, and a lot of other things that come along with them, and I'll give you an example of exactly what a Task and a Pipeline look like in the coming slides.

The Tekton CLI: let's say you want to use a terminal to interact with a Tekton pipeline you created in a specific cluster. You can use the Tekton CLI for that: you can view your different tasks and pipelines, as well as initiate actual pipeline runs.

The Dashboard: let's say you're more inclined to use a GUI. The Dashboard lets you use a web browser to interact with the pipelines you have deployed. You can visualize everything: you can see all the different tasks and pipelines you've created, and you can initiate runs, delete resources, and do everything else right from the web browser. I'll show it up and running in the demo, and we'll use it to interact with our pipeline and actually initiate the runs.

The Catalog, or the Hub, is basically just a collection of different tasks, and I'll speak about it more coming up. It's a good starting point for anyone who's starting out with Tekton and doesn't yet know how to write tasks.

Tekton Chains is the one that handles supply chain security, and of course the whole presentation is based on it, so we'll talk a lot more about it coming up.

Starting off, let's talk about what a Task and a TaskRun are. Like I said, Tekton creates Tasks. These are CRD objects that exist in Kubernetes, and you can interact with them using kubectl, using tkn, or using the GUI that comes with Tekton.

Let me walk you through what a Task is. A Task is the building block of a pipeline, and you can see here that each task can have multiple steps. In this case, the task has one step, a builder, which uses a specific image, takes some arguments, and accomplishes some work within that image. That's what a step does, and a task can have multiple steps in it. Parameters: let's say you want to pass different values into this task. Then you can specify parameters, and they will be passed into the steps that reference them. This makes the task very modular: you can reuse the same task multiple times based on whatever parameters you pass it.

In order for a task to run in a Tekton pipeline, or in Tekton in general, you need a TaskRun. That's the object you see on the right side. You can see here that the taskRef references the task itself, task-with-parameters: the name matches the one on the left side. And then, like I said before, you can pass in the specific parameters.
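To make the Task/TaskRun relationship concrete, here is a minimal Python sketch that builds both objects as plain dictionaries (Kubernetes accepts JSON manifests, so the printed output can be passed to `kubectl apply -f`). The `tekton.dev/v1beta1` API version, the `alpine` image, the `someURL` parameter, and the helper names are assumptions for illustration, not the exact manifests from the slides.

```python
import json


def make_task(name, param_names, image, script):
    """Build a minimal Task manifest (hypothetical helper) with string params and one step."""
    return {
        "apiVersion": "tekton.dev/v1beta1",  # assumed API version
        "kind": "Task",
        "metadata": {"name": name},
        "spec": {
            "params": [{"name": p, "type": "string"} for p in param_names],
            # The step reads a parameter through Tekton's $(params.<name>) substitution.
            "steps": [{"name": "build", "image": image, "script": script}],
        },
    }


def make_task_run(task, values):
    """Build a TaskRun whose taskRef names `task` and which supplies every declared param."""
    declared = {p["name"] for p in task["spec"]["params"]}
    missing = declared - set(values)
    if missing:
        raise ValueError(f"missing params: {sorted(missing)}")
    return {
        "apiVersion": "tekton.dev/v1beta1",
        "kind": "TaskRun",
        "metadata": {"name": task["metadata"]["name"] + "-run"},
        "spec": {
            "taskRef": {"name": task["metadata"]["name"]},  # must match the Task's name
            "params": [{"name": k, "value": v} for k, v in values.items()],
        },
    }


if __name__ == "__main__":
    task = make_task("task-with-parameters", ["someURL"], "alpine",
                     "echo building from $(params.someURL)")
    run = make_task_run(task, {"someURL": "https://example.com/repo.git"})
    print(json.dumps([task, run], indent=2))
```

Validating the parameters on the client side is a design choice of this sketch; Tekton itself rejects a TaskRun that omits a required parameter.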

The next piece, going up the chain, is the Pipeline. You can think of a pipeline as basically a collection of tasks. You can see here that it gives you a task list. In this case, there's one task being defined, and it references that task. If you think about it, each entry in the list works much like the TaskRun we saw before: it references a task and passes parameters to it. This entry references a build-push task and passes in these different parameters, and you can have multiple tasks in a pipeline.

Just like a task, a pipeline can have its own parameters, which again makes it very modular: you can pass in different parameters as you see fit. For example, let's say you have a specific Git URL or Git repo that you want to pass in. You can set that as a parameter, so that you can reuse the same pipeline but pass in a different Git repo each time, if you want to build different images or something like that.

In order for a pipeline to run, similar to a task, it needs a PipelineRun. Again, you can see that the pipelineRef down here points to pipeline-with-parameters, so it matches up with this Pipeline object. The PipelineRun CRD calls the Pipeline object and passes in the parameters it needs. For example, this pipeline needs context and flags, so the PipelineRun passes in values for them and then initiates and runs the actual pipeline. I'll show you all this when we get to the demo, which will make it visual: you'll be able to see exactly what's happening.
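The way a PipelineRun's values flow into a Pipeline's tasks can be sketched in a few lines of Python. This is a simplified model of Tekton's `$(params.<name>)` substitution, not the controller's actual code; the `build-skaffold-web` task entry name, the `pathToContext` parameter, and treating `flags` as a plain string are assumptions for illustration.

```python
import re

# Matches Tekton-style $(params.<name>) references inside parameter values.
PARAM_REF = re.compile(r"\$\(params\.([A-Za-z0-9_-]+)\)")

# Simplified Pipeline modeled on the slide: two pipeline-level params forwarded to one task.
PIPELINE = {
    "apiVersion": "tekton.dev/v1beta1",
    "kind": "Pipeline",
    "metadata": {"name": "pipeline-with-parameters"},
    "spec": {
        "params": [{"name": "context", "type": "string"},
                   {"name": "flags", "type": "string"}],
        "tasks": [{
            "name": "build-skaffold-web",  # hypothetical task entry name
            "taskRef": {"name": "build-push"},
            "params": [{"name": "pathToContext", "value": "$(params.context)"},
                       {"name": "flags", "value": "$(params.flags)"}],
        }],
    },
}


def resolve_task_params(pipeline, pipeline_run):
    """Substitute the PipelineRun's values into each pipeline task's params."""
    if pipeline_run["spec"]["pipelineRef"]["name"] != pipeline["metadata"]["name"]:
        raise ValueError("pipelineRef does not name this Pipeline")
    values = {p["name"]: p["value"] for p in pipeline_run["spec"]["params"]}
    resolved = {}
    for task in pipeline["spec"]["tasks"]:
        resolved[task["name"]] = {
            p["name"]: PARAM_REF.sub(lambda m: values[m.group(1)], p["value"])
            for p in task["params"]
        }
    return resolved


if __name__ == "__main__":
    run = {
        "apiVersion": "tekton.dev/v1beta1",
        "kind": "PipelineRun",
        "metadata": {"name": "pipeline-with-parameters-run"},
        "spec": {
            "pipelineRef": {"name": "pipeline-with-parameters"},
            "params": [{"name": "context", "value": "/workspace/src"},
                       {"name": "flags", "value": "-v"}],
        },
    }
    print(resolve_task_params(PIPELINE, run))
```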

Like I was saying before, the Catalog is a good place to start. If you're starting out with Tekton, it's kind of difficult to write your tasks or pipelines from scratch. The catalog is a good way for users to go in and look at the different tasks the community has already created, and you can combine those tasks into a pipeline, or use one of the predefined pipelines in there. For example, the one we're going to use today is called buildpacks.

The Tekton Dashboard, like I said, is a user interface for Tekton. For example, the Pipelines, PipelineRuns, Tasks, and TaskRuns we talked about before are all shown in here. Once you have them established in your cluster, you can visualize and view them here, kick off specific TaskRuns or PipelineRuns whenever you want, and delete, modify, and watch them from here.

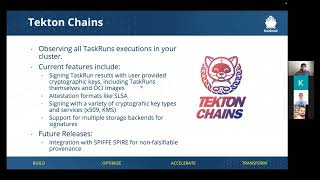

Of course, the main piece we're talking about today is Tekton Chains. Tekton Chains has a lot of features, but the main one we want to look at is its ability to sign TaskRuns. Whatever results come out of your pipeline, Tekton Chains watches for them: it waits for the TaskRun to complete and then does the signing.

These are the different storage options that are available, and I'll show you that you can actually store the results in multiple locations at the same time if you want. In the future, we're looking to start configuring SPIRE so that we can establish non-falsifiable provenance. That's a little bit out of scope for this presentation, but I want to make the community aware that Tekton Chains is moving in that direction, so that it can meet some of the higher levels of SLSA.

SLSA stands for Supply-chain Levels for Software Artifacts. There are four levels that you want to achieve, and what Tekton Chains helps an organization do is achieve levels one and two. For example, level one requires that the build process be fully scripted and that it generate provenance. Tekton Pipelines gives us a build process that's fully scripted and automated, and Tekton Chains generates the provenance for us. Whether that provenance is signed or unsigned, level one doesn't care. Level two does require signed provenance, but Chains already accomplishes that, as we talked about on the previous slide: you can use private keys and the like to do the signing, and it signs both the TaskRun results and the OCI image that's created. We can also host the source and the build. The third piece that Chains wants to move towards is non-falsifiable provenance. That's what I spoke about before: if you introduce SPIRE, you get non-falsifiable provenance, where you do attestation. That's a little bit out of scope for this presentation, but they are moving in that direction.

So what is this SLSA provenance, and how does it get created? Provenance is basically an attestation that some entity, the builder, produced one or more artifacts. In our case, the builder is going to be Tekton Pipelines, and it's going to produce an image. It does so by executing some invocation, and that's where our pipeline or task comes into play. It takes in the invocation (different parameters, materials, environment variables, whatever it is) and uses all that information to create some kind of software artifact. In doing so, it also creates a provenance file, or provenance document, that gives you specific information: how was this software artifact created? What went into it? What parameters went into it, what commands were called, what environment variables were used, all that kind of stuff. It captures all that information, and that's what we want. That's what Chains is going to provide us: a provenance document with all that information, which can be verified later on.
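Concretely, the in-toto format wraps provenance in a Statement: a `subject` that names the artifact by digest, plus a `predicate` describing the builder, the invocation, and the materials. The Python sketch below assembles such a document by hand to show its shape. The field layout assumes the in-toto Statement v0.1 and SLSA provenance v0.2 formats, and every value (image name, builder id, repository, `buildType`) is a hypothetical placeholder, not what Chains actually emits.

```python
import hashlib
import json


def make_provenance(image_name, image_digest, builder_id, parameters, materials):
    """Assemble an illustrative in-toto Statement carrying a SLSA v0.2 provenance predicate."""
    return {
        "_type": "https://in-toto.io/Statement/v0.1",
        "predicateType": "https://slsa.dev/provenance/v0.2",
        # subject: the artifact(s) the builder produced, identified by digest.
        "subject": [{"name": image_name, "digest": {"sha256": image_digest}}],
        "predicate": {
            "builder": {"id": builder_id},               # who ran the build
            "buildType": "tekton.dev/v1beta1/TaskRun",   # illustrative value
            "invocation": {"parameters": parameters},    # how it was invoked
            "materials": materials,                      # what went into it
        },
    }


if __name__ == "__main__":
    digest = hashlib.sha256(b"stand-in for image bytes").hexdigest()
    statement = make_provenance(
        "ttl.sh/example/app",                 # hypothetical image name
        digest,
        "https://example.com/builder",        # hypothetical builder id
        {"APP_IMAGE": "ttl.sh/example/app"},
        [{"uri": "git+https://github.com/example/repo.git",  # hypothetical source
          "digest": {"sha1": "0" * 40}}],
    )
    print(json.dumps(statement, indent=2))
```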

A few things before we start the actual demo. Tekton Chains has a lot of different settings that you can change. The main ones I want to focus on today, especially for this demo, start with `artifacts.taskrun.format`. This is the format the provenance we create at the end will be stored in. By default it's `tekton`, but we want it to be `in-toto`. The in-toto format is the SLSA-compliant one we talked about before, so that's the format we want to produce, because it's the one supported by the SLSA community.

Next is `artifacts.taskrun.storage`. Like I was saying before, you can store the provenance in multiple different backends: `tekton` (an annotation on the actual TaskRun), `oci` (an OCI registry), `gcs` (Google Cloud Storage), and `docdb`. By default it goes to `tekton`, the annotation, but you can change that and specify multiple backends, so that it's stored both in the annotation and in OCI. For example, you might want redundancy, or just want to keep a backup in case your cluster gets destroyed; then you still have a copy of your provenance file.
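To see how these two settings combine, here is a small Python sketch that reads them from the `data` section of the Chains ConfigMap and treats the storage value as a comma-separated list. The `tekton` defaults are the ones described in this talk and may differ in other Chains versions, and the validation set only contains the backends mentioned here; this is an illustration, not Chains' own parsing code.

```python
# Storage backends named in this talk; Chains may support others.
KNOWN_STORAGE = {"tekton", "oci", "gcs", "docdb"}


def parse_taskrun_settings(data):
    """Read artifacts.taskrun.format/storage from a ConfigMap's data dict.

    Missing keys fall back to "tekton", the default described in the talk.
    """
    fmt = data.get("artifacts.taskrun.format", "tekton")
    raw = data.get("artifacts.taskrun.storage", "tekton")
    # Multiple backends are given as a comma-separated list, e.g. "tekton,oci".
    backends = [b.strip() for b in raw.split(",") if b.strip()]
    unknown = [b for b in backends if b not in KNOWN_STORAGE]
    if unknown:
        raise ValueError(f"unknown storage backend(s): {unknown}")
    return fmt, backends


if __name__ == "__main__":
    print(parse_taskrun_settings({
        "artifacts.taskrun.format": "in-toto",
        "artifacts.taskrun.storage": "tekton, oci",
    }))
    # ('in-toto', ['tekton', 'oci'])
```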

We'll actually be running this in the coming demo. The one thing you do want to remember, especially if you're creating an image and want Chains to sign it, is that your task has to emit two results: `IMAGE_URL` and `IMAGE_DIGEST` (optionally with a prefix in front, as in `*_IMAGE_URL` and `*_IMAGE_DIGEST`). As you can see here, these two results have to be specified in the task itself: for example, in your buildpacks task or your Kaniko task. You have to make sure that, at the end, the task writes out the image URL and digest results, and then Chains will know: okay, this task created some kind of artifact, an image that the user wants me to sign. That's how it works currently. This might change in the future, but right now both results must be present for signing to work. Once we get to the buildpacks example, I'll point this out so that you can see it in the actual task.
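The rule described above (Chains signs an image only when it finds both a URL result and a digest result) can be sketched in Python as follows. It mirrors the naming convention from the talk; it is not Chains' actual detection code, and the example image reference and digest are made up.

```python
def signable_images(results):
    """Pair *IMAGE_URL results with their matching *IMAGE_DIGEST results.

    `results` is a list of {"name": ..., "value": ...} entries, as found in a
    TaskRun's status. Returns "url@digest" references for every complete pair.
    """
    values = {r["name"]: r["value"].strip() for r in results}
    images = []
    for name, url in values.items():
        if name == "IMAGE_URL" or name.endswith("_IMAGE_URL"):
            prefix = name[: -len("IMAGE_URL")]  # "" or e.g. "APP_"
            digest = values.get(prefix + "IMAGE_DIGEST")
            if digest:  # both results must be present
                images.append(f"{url}@{digest}")
    return images


if __name__ == "__main__":
    print(signable_images([
        {"name": "IMAGE_URL", "value": "ttl.sh/example/app"},
        {"name": "IMAGE_DIGEST", "value": "sha256:1234abcd"},
    ]))
    # ['ttl.sh/example/app@sha256:1234abcd']
```

A task that only emits `IMAGE_DIGEST` yields an empty list here, which is exactly the situation with the unmodified buildpacks task later in the demo.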

Like I said, we're going to use cosign to do our signing. Cosign is a very useful tool from Sigstore: you can use it for container signing and verification, and it can store signatures in an OCI registry right alongside the images. Chains expects a signing secret to be stored in the Chains namespace. You can see the command down here: `cosign generate-key-pair k8s://tekton-chains/signing-secrets` creates a secret named signing-secrets in the tekton-chains namespace.

Tekton Chains is very simple to install and get running on your cluster, and configuration is also very easy, using a ConfigMap; I'll show you that coming up. And of course, if you want to use cosign yourself, you can use go install, or, say, on macOS you can use Homebrew, or you can always install the binary directly onto your system from the GitHub repo itself.
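For reference, here are the cosign invocations this walkthrough relies on, wrapped in a small Python helper so they can be scripted. The `generate-key-pair` command with the `k8s://tekton-chains/signing-secrets` reference is the one described above; the `verify` and `verify-attestation` invocations (with a local `cosign.pub` public key and `--type slsaprovenance`) are assumptions about how you might check the results, and flags can vary between cosign versions. Note that `generate-key-pair` prompts for a password unless `COSIGN_PASSWORD` is set.

```python
import shutil
import subprocess

# Key reference used in the talk: a Secret named signing-secrets in the tekton-chains namespace.
CHAINS_KEY_REF = "k8s://tekton-chains/signing-secrets"


def generate_key_pair_cmd(key_ref=CHAINS_KEY_REF):
    """Command that creates the Chains signing secret (and writes cosign.pub locally)."""
    return ["cosign", "generate-key-pair", key_ref]


def verify_image_cmd(image, public_key="cosign.pub"):
    """Command that checks an image signature against the public key."""
    return ["cosign", "verify", "--key", public_key, image]


def verify_attestation_cmd(image, public_key="cosign.pub"):
    """Command that checks the signed SLSA provenance attestation attached to an image."""
    return ["cosign", "verify-attestation", "--key", public_key,
            "--type", "slsaprovenance", image]


def run(cmd):
    """Run a command, failing loudly if the binary is missing or the command fails."""
    if shutil.which(cmd[0]) is None:
        raise FileNotFoundError(f"{cmd[0]} is not on PATH")
    return subprocess.run(cmd, check=True, capture_output=True, text=True).stdout


if __name__ == "__main__":
    for cmd in (generate_key_pair_cmd(), verify_image_cmd("ttl.sh/example/app")):
        print(" ".join(cmd))
```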

So let's start working on the demo. In this demo, we'll start off by using cosign to create that signing secret. I'll explain exactly what the buildpacks example is doing: we'll walk through what the pipeline looks like, what the tasks look like, and so on. Once the pipeline finishes, we'll review the provenance document I was talking about before: what it looks like and what format it's in. I'll show you that at the end. Then, like I said, we can also use cosign to verify the signatures. Chains is going to sign the image and store the signature in an OCI registry, so we want to check that both the image and the attestation (the provenance document) are actually signed, so that we know they haven't been tampered with by a third party.

We'll be using a modified version of the buildpacks task, but there has been work going on to upstream these changes: the catalog is being updated so that it's more Chains-compliant, and in the future you won't have to worry about this. For now, if you are creating some kind of image or artifact and you want it to be signed, make sure you double-check whatever task is doing the actual image creation.

All right, you can see this is the Tekton Dashboard running. Let me go off the screen for a second. All I have is, oops, a port forward, so it's being forwarded to port 9097, and that's how it appears. I'm just running it locally, and you can see that right now I don't have anything defined: there are no Pipelines and no PipelineRuns. PipelineResources are actually being deprecated, so they'll be removed, and there's no reason to talk about them; the same goes for Conditions. Both are going to be removed, which is why I didn't mention them in this presentation.

So we've talked about Pipelines, PipelineRuns, Tasks, and TaskRuns. A ClusterTask is basically the same thing as a Task; the only difference is that a Task lives in a specific namespace in Kubernetes, while a ClusterTask doesn't: you define it once and it can be used from any namespace. With a namespaced Task, if, for example, your pipeline calls a specific task but that task only exists in a different namespace, the pipeline won't be able to find it. That's the only difference.
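The difference boils down to where Tekton looks when it resolves a `taskRef`. The Python below is a simplified, hypothetical model of that lookup (the dictionaries stand in for objects in the cluster); in a real manifest, the cluster-scoped case is selected with `kind: ClusterTask` on the `taskRef`.

```python
def resolve_task_ref(task_ref, run_namespace, namespaced_tasks, cluster_tasks):
    """Simplified model of how a taskRef is looked up.

    namespaced_tasks: {(namespace, name): task}; cluster_tasks: {name: task}.
    """
    name = task_ref["name"]
    if task_ref.get("kind") == "ClusterTask":
        # Cluster-scoped: visible from every namespace.
        if name in cluster_tasks:
            return cluster_tasks[name]
        raise LookupError(f"ClusterTask {name!r} not found")
    # Namespaced (the default kind): only tasks in the run's own namespace are visible.
    if (run_namespace, name) in namespaced_tasks:
        return namespaced_tasks[(run_namespace, name)]
    raise LookupError(f"Task {name!r} not found in namespace {run_namespace!r}")


if __name__ == "__main__":
    tasks = {("team-a", "git-clone"): "Task git-clone (team-a)"}
    cluster = {"git-clone": "ClusterTask git-clone"}
    print(resolve_task_ref({"name": "git-clone", "kind": "ClusterTask"}, "team-b", tasks, cluster))
    try:
        resolve_task_ref({"name": "git-clone"}, "team-b", tasks, cluster)
    except LookupError as err:
        print(err)
```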

Here is the catalog I was talking about before, the Tekton Catalog, and it's a very good place to get started. You can see there are a bunch of tasks you can find in here: for example, the buildpacks task we're using, curl, and git-clone, if you want to clone a specific repo that has your Dockerfile in it and create an image from all that. For Go there's a lot of stuff here too, so whatever you need, it's a good place to start. And then, of course, there are the pipelines. Specifically, we're going to use this buildpacks pipeline. You can see in here that it needs three different tasks in order to run: right here are the dependencies, and they define the three tasks.

We'll apply all of these so that they appear in our Kubernetes cluster and we can actually run the pipeline. After that, we'll install the pipeline itself and then do the actual pipeline run. Let me walk you through it. Here is the actual pipeline. You can see it's much longer and more complicated than the small example I showed you before, but basically it has the same kinds of things.

The one thing I did want to mention is workspaces. Let's say your pipeline has multiple tasks, and maybe they produce some kind of artifact that gets passed between them. In that case, you need a workspace. A workspace is basically an empty directory or a PVC in Kubernetes that stores that artifact so it can be shared between the different tasks. This specific pipeline has two of them. And then, of course, we talked about parameters: you can specify different parameters in here.

Some of them have defaults. For example, `SOURCE_REFERENCE` has a default of an empty string, so if you don't want to change anything there, you don't have to specify it. For any parameter that already has a default, you don't have to supply a value. `SOURCE_URL` and `APP_IMAGE`, though, you do have to specify, because they have no default. The main point of this pipeline is to create a specific image from a specific source URL, so those aren't defaulted, and you have to specify them.
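The defaulting rule works like this sketch: a supplied value wins, otherwise the declared default is used, and a parameter with neither is an error. It is a hypothetical Python model of the behavior described, not Tekton's validation code; the declared list is a three-parameter subset based on the talk, and the example URL and image are placeholders.

```python
def resolve_params(declared, supplied):
    """Merge supplied values with declared defaults; fail on any required param left unset."""
    resolved, missing = {}, []
    for param in declared:
        name = param["name"]
        if name in supplied:
            resolved[name] = supplied[name]
        elif "default" in param:  # an empty-string default still counts as a default
            resolved[name] = param["default"]
        else:
            missing.append(name)
    if missing:
        raise ValueError("missing required params: " + ", ".join(missing))
    return resolved


# Subset of the buildpacks pipeline's params as described in the talk.
DECLARED = [
    {"name": "SOURCE_URL"},                       # no default: required
    {"name": "SOURCE_REFERENCE", "default": ""},  # defaults to an empty string
    {"name": "APP_IMAGE"},                        # no default: required
]

if __name__ == "__main__":
    print(resolve_params(DECLARED, {"SOURCE_URL": "https://github.com/example/app.git",
                                    "APP_IMAGE": "ttl.sh/example/app"}))
```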

You can see the task has the `IMAGE_DIGEST` result, but it's missing `IMAGE_URL`. So if I ran this pipeline by itself with Chains, Chains would not pick up the image that's being created and would not do the signing. That's the reason we're modifying this task specifically. Here is the updated one; it's linked in the PowerPoint, so you can use this specific version. It's the 0.4 version, as you can see down here.

The first thing I'm going to do is use cosign to generate the signing secret. I'm just going to run this. All it's doing is calling cosign, which, as we talked about before, generates the signing secret in the tekton-chains namespace. That's done. It also gives us the public key at the end: it's right there, and you can see that it got written out.

The next thing we're going to do is edit the ConfigMap, because, remember, we talked about this on the previous slide: we want the format to be in-toto, and we want the storage to be both OCI and the Tekton annotation. This command takes care of that for us. I'm just going to run it. Nothing changed, because in my case it's already been set, but we can take a look. You can see here we're looking at the ConfigMap for Tekton Chains. The format is already in-toto, and you can see the storage is oci and tekton, so the controller will automatically pick that up.
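The ConfigMap edit described above amounts to a single `kubectl patch`. Here is a Python sketch that builds that command; it assumes the Chains ConfigMap is named `chains-config` in the `tekton-chains` namespace (the names used by a standard Chains install, but check your cluster) and only sets the two keys discussed in this talk. You could pass the resulting list to `subprocess.run`.

```python
import json


def chains_config_patch(fmt="in-toto", storage=("tekton", "oci")):
    """Merge patch that sets the TaskRun provenance format and storage backends."""
    return {"data": {
        "artifacts.taskrun.format": fmt,
        "artifacts.taskrun.storage": ",".join(storage),  # comma-separated list
    }}


def kubectl_patch_cmd(patch, namespace="tekton-chains", name="chains-config"):
    """kubectl argv that applies the patch to the Chains ConfigMap."""
    return ["kubectl", "patch", "configmap", name, "-n", namespace,
            "-p", json.dumps(patch)]


if __name__ == "__main__":
    print(kubectl_patch_cmd(chains_config_patch()))
```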

Let me just show you this real quick. Here is the Chains controller that's running; it got reconfigured based on the ConfigMap I just changed. Here is the Dashboard that's running, which is what's serving this page right here, so you can visualize things. And here are the two Tekton Pipelines components: the pipelines controller, and then a webhook. Webhooks are also how you'd automate runs: for example, let's say your tasks and pipelines are stored in a repository somewhere; you can use a webhook (typically set up with Tekton Triggers) to instantiate a pipeline run and run through that pipeline whenever there are new changes. So those are the different pieces being installed.

Next, like I said, we have to install the different tasks. The catalog says that in order for this pipeline, the buildpacks pipeline, to run, we need these dependencies: the git-clone task, the buildpacks task, and the buildpacks-phases task. That's what I'm going to install. I did replace the buildpacks task with version 0.4, this one right here that has the `IMAGE_URL` result. That's the task I'm using so that the image gets signed once this pipeline actually runs. So I'll run the install. We can go back in here and take a look now: you can see there are three different tasks up here: git-clone, buildpacks-phases, and the actual buildpacks task. If we click on one, you can see the actual YAML, because it's a Kubernetes object, a CRD, so you can go in and view exactly what it does.
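Installing the dependencies boils down to one `kubectl apply` per catalog task. This Python sketch builds those commands; it assumes the catalog's `task/<name>/<version>/<name>.yaml` layout on the `main` branch of the tektoncd/catalog repository, and apart from buildpacks 0.4 (the version mentioned in the talk) the versions shown are illustrative placeholders rather than the ones used in the demo.

```python
CATALOG_RAW = "https://raw.githubusercontent.com/tektoncd/catalog/main"


def catalog_task_url(name, version):
    """Raw URL of a task in the Tekton Catalog's task/<name>/<version>/<name>.yaml layout."""
    return f"{CATALOG_RAW}/task/{name}/{version}/{name}.yaml"


def install_cmds(tasks):
    """One `kubectl apply -f <url>` argv per (name, version) pair."""
    return [["kubectl", "apply", "-f", catalog_task_url(n, v)] for n, v in tasks]


if __name__ == "__main__":
    # buildpacks 0.4 is the version mentioned in the talk; the others are placeholders.
    for cmd in install_cmds([("git-clone", "0.4"),
                             ("buildpacks-phases", "0.2"),
                             ("buildpacks", "0.4")]):
        print(" ".join(cmd))
```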

Next, we're going to install the pipeline. All this does is install the next piece, the pipeline itself. That's what I showed you here: buildpacks.yaml is basically this Pipeline object right here, and it's going to create it for us in our cluster. If I click on Pipelines now, you can see buildpacks is there, and again you can view the YAML that's associated with it.

Finally, we're going to do the actual pipeline run. If you remember from the beginning, a pipeline is just an object that can be reused with different parameters. In order for it to run, you have to specify a PipelineRun; or, let's say you just want to run a specific task by itself, then you specify a TaskRun. In our case, I want to run the actual pipeline. Down here you can see the PipelineRun, and up here is the persistent volume claim I was talking about before. The pipeline takes in a specific workspace because the clone and buildpacks tasks work together: one clones the repository and the other does the actual image creation, so they need a shared workspace. That's what this PVC is for: you can see that the claim matches this one. All that's doing is making sure the volume exists so that the pipeline can use it when the time comes. Right here, the pipelineRef is buildpacks. That's the name of our build pipeline in our cluster.

It's going to take in the different parameters. The builder image it's going to use is the buildpacks builder located on Docker Hub, right here. The app image is what I want the image to be named and where I want it to be stored, and that's specified here. Basically, I want it stored in ttl.sh. ttl.sh is a short-lived, anonymous registry that's very good for development use cases: anyone can use it without logging in or anything, so it's very useful for demo purposes, or for making sure that pushing and pulling are working properly. Of course, you don't want to use it for production or anything like that; for testing, demos, and the like, it's great. All I'm doing is putting it into my repo and giving it the name webinar demo 8. Then, where is the source code, where is that Git repo coming from? It's going to pull from the buildpacks samples repository, and specifically it's going to build the Ruby app from it.
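Putting the pieces together, the objects applied in this step look roughly like the following Python sketch, which emits a Kubernetes `List` containing the PVC and the PipelineRun as JSON. The PVC size, the `source-ws`/`cache-ws` workspace names, the run and claim names, and the angle-bracket values are assumptions or placeholders, not the exact manifest from the demo; the point is how `pipelineRef`, the parameters, and the PVC-backed workspaces fit together.

```python
import json


def pvc(name, size="500Mi"):
    """A small PersistentVolumeClaim to back the shared workspace (size is an assumption)."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {"accessModes": ["ReadWriteOnce"],
                 "resources": {"requests": {"storage": size}}},
    }


def buildpacks_run(claim, builder_image, app_image, source_url):
    """PipelineRun for the `buildpacks` Pipeline; both workspaces share one PVC via subPath."""
    return {
        "apiVersion": "tekton.dev/v1beta1",
        "kind": "PipelineRun",
        "metadata": {"name": "buildpacks-run"},
        "spec": {
            "pipelineRef": {"name": "buildpacks"},
            "params": [
                {"name": "BUILDER_IMAGE", "value": builder_image},
                {"name": "APP_IMAGE", "value": app_image},
                {"name": "SOURCE_URL", "value": source_url},
            ],
            # Workspace names are assumptions; match them to the Pipeline's spec.workspaces.
            "workspaces": [
                {"name": "source-ws", "subPath": "source",
                 "persistentVolumeClaim": {"claimName": claim}},
                {"name": "cache-ws", "subPath": "cache",
                 "persistentVolumeClaim": {"claimName": claim}},
            ],
        },
    }


if __name__ == "__main__":
    claim = "buildpacks-source-pvc"  # hypothetical claim name
    manifest = {"apiVersion": "v1", "kind": "List", "items": [
        pvc(claim),
        buildpacks_run(claim, "<builder image>", "ttl.sh/<repo>/<name>",
                       "<buildpacks samples repo URL>"),
    ]}
    print(json.dumps(manifest, indent=2))  # e.g. save as run.json, then kubectl apply -f run.json
```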

A

So what I'll do is use kubectl apply in this case, because these are Kubernetes objects, right? I can just use that instead. Now if you come in here, you can see the buildpacks run: it created that object, it found the pipeline, and it's actually running now. So we can view exactly what's going on.

A

That's where this user interface comes in handy, because you can see exactly what logs are being created within each of the different tasks. You can see each of the tasks that are running down here, and each one creates its own TaskRun. Each of these runs as a different pod in your cluster, so the dashboard helps you visualize what's going on with each pod. It gives you a nice log and a status of what's actually happening.

A

Did it finish? Were there any errors? It also gives you a bit more detail, such as the YAML configuration: what is happening, or is going to happen, in the specific task that's being run. It looks like the Git fetch finished. It pulled from the buildpacks samples and, as you see here, the next piece after that is going to start. The buildpacks task is going to build the image and give it the name we specified.

A

It will push the image to that ttl.sh registry, using the source path we passed in. It looks like it completed properly, so we can take a look and verify that the image is actually there. Going back to the top of the screen, next we're going to look at verification. We want to verify: Is the image signed? Has the attestation been created, and has it been stored in the OCI registry?

A

The first thing we're going to do is run this tkn command, which I want to show here real quick. tkn is the Tekton CLI I was talking about before. What this command does is basically tell the Tekton CLI: describe the last pipeline run, then use a JSONPath expression to get me what the image name was. To do that, it went to the list of runs here on the side.

A

It looked at the last pipeline run, which was this cache-image one, went in here, found the parameter labeled APP_IMAGE, and gave me its value. So it's very easy and useful for getting information back. Let's say you want to describe your last pipeline run, but you're not a graphical-user-interface person and would rather view everything in your terminal. You can get a lot of the same information there.
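The tkn lookup described above, describing the last PipelineRun and pulling out the APP_IMAGE parameter with a JSONPath expression, can be approximated in Python. This sketch assumes you already have the PipelineRun as JSON (for example from kubectl get pipelinerun NAME -o json) and that parameters live under spec.params as name/value pairs; the sample document is hypothetical.

```python
import json
from typing import Optional


def get_param(pipelinerun: dict, name: str) -> Optional[str]:
    """Return the value of the named parameter in spec.params, or None if absent.

    Mirrors a JSONPath filter such as {.spec.params[?(@.name=="APP_IMAGE")].value}.
    """
    for param in pipelinerun.get("spec", {}).get("params", []):
        if param.get("name") == name:
            return param.get("value")
    return None


# Hypothetical, trimmed PipelineRun document.
SAMPLE = json.loads("""
{
  "kind": "PipelineRun",
  "metadata": {"name": "buildpacks-run"},
  "spec": {
    "pipelineRef": {"name": "buildpacks"},
    "params": [
      {"name": "APP_IMAGE", "value": "ttl.sh/example-demo/webinar-demo"},
      {"name": "SOURCE_SUBPATH", "value": "apps/ruby-example"}
    ]
  }
}
""")

print(get_param(SAMPLE, "APP_IMAGE"))  # ttl.sh/example-demo/webinar-demo
```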

A

Whatever you've got in your dashboard, you can get over here: whether it has finished, and finished successfully; what parameters you passed in; whether there were any specific results from that pipeline; what workspaces were used; and what tasks were actually run. You can describe this pipeline run, and you can also describe the task run, the task, and so on. That makes it easy to verify what's going on or to interact with it.

A

So I'm just going to run this command here. It stores the Docker image name as an environment variable in my terminal so that I can use crane. Crane is another tool, useful for verifying and viewing what's actually in the OCI registry. So I run crane ls. If you remember, this is our app image, so crane is looking at our ttl.sh repository ending in webinar-demo and listing what's there.

A

If you remember, I didn't specify a tag in here. When no tag is specified, the image is tagged latest by default, so you can see in here that it was tagged latest. That's what this is.
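The default-tag behaviour can be illustrated with a simplified reference parser. This is a sketch of the common Docker-style convention (latest is implied when no tag is given), not a complete implementation: digest references (name@sha256:...) are rejected, and Docker Hub's implicit library/ prefix is not added. The reference strings are hypothetical.

```python
from typing import Tuple


def parse_reference(ref: str) -> Tuple[str, str, str]:
    """Split an image reference into (registry, repository, tag).

    Simplified rules: the first path component is treated as a registry host
    if it contains '.' or ':' or is 'localhost'; otherwise Docker Hub is
    assumed. A missing tag defaults to 'latest'.
    """
    if "@" in ref:
        raise ValueError("digest references are not handled in this sketch")
    first, sep, rest = ref.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        registry, remainder = first, rest
    else:
        registry, remainder = "docker.io", ref
    # With the registry (and any port) removed, a colon can only introduce a tag.
    repo, colon, tag = remainder.partition(":")
    return registry, repo, tag if colon else "latest"


print(parse_reference("ttl.sh/example-demo/webinar-demo"))
# ('ttl.sh', 'example-demo/webinar-demo', 'latest')
```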

It also stored the .att tag: that's the attestation, the provenance document. And it also stored the actual signature (the .sig tag) in there.
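The extra tags that crane ls shows follow cosign's convention for storing signatures and attestations in the same repository as the image: the image digest with ':' replaced by '-' (colons are not allowed in tags), plus a .sig or .att suffix. The sketch below derives those tag names from a digest; the digest value is a made-up example.

```python
from typing import Tuple

HEX_DIGITS = set("0123456789abcdef")


def cosign_tags(digest: str) -> Tuple[str, str]:
    """Return the (signature, attestation) tags cosign uses for an image digest."""
    algo, sep, hex_part = digest.partition(":")
    if not sep or algo != "sha256" or len(hex_part) != 64 or not set(hex_part) <= HEX_DIGITS:
        raise ValueError(f"expected sha256:<64 lowercase hex chars>, got {digest!r}")
    base = f"{algo}-{hex_part}"  # ':' is not a legal tag character, so it becomes '-'
    return f"{base}.sig", f"{base}.att"


sig_tag, att_tag = cosign_tags("sha256:" + "ab" * 32)
print(sig_tag)
print(att_tag)
```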

A

What cosign allows us to do is verify that whatever image you're pulling down from the internet is actually trusted. For example, let's say I'm building some kind of application image internally, I push it up to the OCI registry, and I'm going to pull it down into my production environment.

A

Before I pull it down, I want to make sure I trust whatever image I'm pulling, and that's where this whole signing, checking, and verifying comes into play. cosign verify takes the public key, in this case pulling it from the signing secret we created earlier in the tekton-chains namespace, and checks whether this image was signed with the corresponding private key. You can see the verification result right here.

A

You can see that the cosign claims were validated, the signatures were verified against the key, and any certificates were verified against the Fulcio roots. All of that was verified, and it gives us a bit more information as well.
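The "cosign claims were validated" line refers to the JSON payload that was signed, which names the image and its manifest digest. A rough sketch of that claims check is below, assuming cosign's simple-signing payload layout (critical.identity.docker-reference, critical.image.docker-manifest-digest, critical.type). It deliberately leaves out the cryptographic part, checking the signature over these bytes with the public key, which is what cosign itself does; the sample payload and digest are hypothetical.

```python
import json


def claims_match(payload: bytes, expected_digest: str) -> bool:
    """Check the claims in a cosign simple-signing payload.

    Only the claims are checked here; verifying the signature over `payload`
    with the public key is a separate step not shown in this sketch.
    """
    doc = json.loads(payload)
    critical = doc.get("critical", {})
    return (
        critical.get("type") == "cosign container image signature"
        and critical.get("image", {}).get("docker-manifest-digest") == expected_digest
    )


DIGEST = "sha256:" + "ab" * 32  # hypothetical digest of the pulled image
payload = json.dumps({
    "critical": {
        "identity": {"docker-reference": "ttl.sh/example-demo/webinar-demo"},
        "image": {"docker-manifest-digest": DIGEST},
        "type": "cosign container image signature",
    },
    "optional": None,
}).encode()

print(claims_match(payload, DIGEST))                # True
print(claims_match(payload, "sha256:" + "0" * 64))  # False
```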

The other piece you want to check is the attestation: has the attestation document that Chains created and pushed up to the OCI registry been tampered with? We can check that because it's also signed.

A

You can verify that no one has tampered with that specific provenance document. It does the same kind of check: the checks were validated, and the keys and everything matched up. Here is the actual payload it gives back. I'm going to copy it over here so you can see it: the payload starts here and ends there, and the next piece after that is the signature. The payload is base64 encoded.
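The payload being decoded here sits inside a DSSE envelope, the wrapper cosign uses for in-toto attestations (returned, for example, by cosign verify-attestation). The sketch below shows the equivalent of base64 -d piped to jq in Python, plus the DSSE pre-authentication encoding (PAE), which is the byte string the signature actually covers. The envelope field names (payloadType, payload, signatures) follow the DSSE specification; the sample envelope is made up and its signature is a placeholder.

```python
import base64
import json


def decode_envelope(envelope: dict) -> dict:
    """Base64-decode a DSSE envelope's payload and parse it as JSON (like `base64 -d | jq`)."""
    return json.loads(base64.b64decode(envelope["payload"]))


def pae(payload_type: str, body: bytes) -> bytes:
    """DSSE pre-authentication encoding: the exact bytes that get signed."""
    t = payload_type.encode()
    return b"DSSEv1 %d %s %d %s" % (len(t), t, len(body), body)


# Hypothetical envelope; the signature value is a placeholder, not a real signature.
statement = {"_type": "https://in-toto.io/Statement/v0.1", "subject": []}
body = json.dumps(statement).encode()
envelope = {
    "payloadType": "application/vnd.in-toto+json",
    "payload": base64.b64encode(body).decode(),
    "signatures": [{"keyid": "", "sig": "PLACEHOLDER"}],
}

print(json.dumps(decode_envelope(envelope), indent=2))
print(pae(envelope["payloadType"], body))
```

Signing the PAE bytes rather than the raw payload binds the payload type into the signature, so a signed body cannot be reinterpreted as a different document type.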

A

I'll run base64 -d and pipe it to jq, just so it's more visually pleasing. Here is the provenance document that got stored. Like I said, it's SLSA provenance, stored in the in-toto format: the type is in-toto. The name is the specific image we created, the app image, right there, along with its specific digest. What did the creating? The builder in this case was Tekton Chains.
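Checking that a decoded statement actually describes the image you built can be sketched as follows. The _type and predicateType strings are the in-toto Statement v0.1 and SLSA Provenance v0.2 identifiers; the subject values are hypothetical, and comparing the subject name exactly (rather than normalizing tags) is a simplifying assumption.

```python
IN_TOTO_STATEMENT_V01 = "https://in-toto.io/Statement/v0.1"
SLSA_PROVENANCE_V02 = "https://slsa.dev/provenance/v0.2"


def describes_image(statement: dict, image: str, sha256_hex: str) -> bool:
    """True if this is a SLSA v0.2 in-toto statement whose subject matches the image and digest."""
    if statement.get("_type") != IN_TOTO_STATEMENT_V01:
        return False
    if statement.get("predicateType") != SLSA_PROVENANCE_V02:
        return False
    return any(
        subject.get("name") == image
        and subject.get("digest", {}).get("sha256") == sha256_hex
        for subject in statement.get("subject", [])
    )


HEX = "ab" * 32  # hypothetical digest (hex part only)
IMAGE = "ttl.sh/example-demo/webinar-demo"
stmt = {
    "_type": IN_TOTO_STATEMENT_V01,
    "predicateType": SLSA_PROVENANCE_V02,
    "subject": [{"name": IMAGE, "digest": {"sha256": HEX}}],
}
print(describes_image(stmt, IMAGE, HEX))  # True
```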

A

The build type was specifically Tekton Chains version 2, and the document gives us more information on what happened in this specific build and how this image was created. For example, what parameters were passed in: you can see APP_IMAGE got passed in, SOURCE_SUBPATH got passed in here, and USER_ID too. All the different things that got passed in are listed here.

A

So let's say that down the line this pipeline no longer exists, and you want to verify how this image was created and deep dive into it. You can look at this provenance document and say: these are all the parameters we used, and these are the steps this buildpacks image build took to actually make it. The buildpacks task ran multiple different steps, and they're all listed out here.

A

You can see the actual entry point and the bunch of different commands it ran, what arguments it took in, whether there were any environment variables, and any annotations. Then comes the next piece: this is the first step, this is the second step, then the third step, and so on.
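Reading the parameters and steps out of the predicate, as done above by eye, can be scripted. This sketch assumes the SLSA v0.2 field layout as Chains emitted it at the time (invocation.parameters, and buildConfig.steps entries carrying entryPoint and arguments); field names may differ in other Chains versions or provenance formats, and the sample predicate is entirely hypothetical.

```python
from typing import List


def summarize_predicate(predicate: dict) -> List[str]:
    """Return human-readable lines for a SLSA v0.2 predicate's params and steps."""
    builder_id = predicate.get("builder", {}).get("id")
    lines = [f"builder: {builder_id}", f"buildType: {predicate.get('buildType')}"]
    params = predicate.get("invocation", {}).get("parameters", {})
    for name in sorted(params):
        lines.append(f"param {name} = {params[name]}")
    steps = predicate.get("buildConfig", {}).get("steps", [])
    for i, step in enumerate(steps, start=1):
        args = " ".join(step.get("arguments") or [])
        entry = step.get("entryPoint", "")
        lines.append(f"step {i}: {entry} {args}".rstrip())
    return lines


# Hypothetical, heavily trimmed predicate.
predicate = {
    "builder": {"id": "https://example.com/builder"},
    "buildType": "example-build-type",
    "invocation": {"parameters": {"APP_IMAGE": "ttl.sh/example-demo/webinar-demo", "USER_ID": "1000"}},
    "buildConfig": {"steps": [
        {"entryPoint": "example-entrypoint", "arguments": ["--flag"], "environment": {}, "annotations": None},
        {"entryPoint": "", "arguments": None},
    ]},
}

for line in summarize_predicate(predicate):
    print(line)
```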

A

It also tells us when the build started, when it finished, and all that kind of information. I think this format is still evolving: you can see here it's at version 0.2, and it was very different when it first started out at 0.1. A lot of this information was