From YouTube: Building Resilience with Chaos Engineering

Description

Don't miss out! Join us at our upcoming event: KubeCon + CloudNativeCon Europe in Amsterdam, The Netherlands from 18 - 21 April, 2023. Learn more at https://kubecon.io The conference features presentations from developers and end users of Kubernetes, Prometheus, Envoy, and all of the other CNCF-hosted projects.

A

Hey everyone, good morning, good afternoon, and good evening from wherever you are. Today I'm here from Harness, and I'm here to talk to you about building continuous resilience in the software delivery life cycle with chaos engineering. Ultimately, cloud native development has enabled teams to move quickly, but it also introduces new ways for software to fail quickly. SREs, QA engineers, and developers need to work together to optimize reliability and resilience to improve developer productivity.

A

Why am I here? I'm part of the LitmusChaos open source community, which is an incubating CNCF project. Harness, as a sponsor, is also part of the CNCF as a silver sponsor. You may have seen me at KubeCon + CloudNativeCon Detroit, where we had our first-ever Chaos Day in October 2022. Please feel free to contact me via email, Twitter, or LinkedIn.

A

Ultimately, we are here because we are building and making things better. As engineers and leaders, we are always seeking to understand and learn how the world works. I talk about how building for resilience is, in fact, chaos engineering. Ultimately, this discipline simply allows us to understand how the system works and operates. This is one of my favorite quotes from Twitter, from Andy Stanley, who has a podcast, where he just says:

A

Not everything has to be perfect, but an error message can tell the user why something failed, or help the IT person solve the problem. Chaos engineering can be used to validate and tune these mechanisms to make sure they work. Now, at the end of the day, you may ask: why does chaos engineering exist? Another way to phrase this is: what failure modes does my system have, what mechanisms am I using to prevent them, and how do I test them?

A

Do I wait for an incident to happen to prove it, or can I test it proactively?

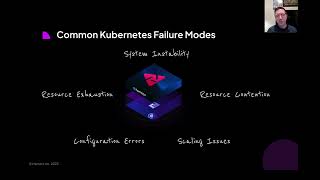

Here are some common Kubernetes failure modes. This image is great to think through. I got these failure modes off the Kubernetes website: system instability, resource contention, scaling issues, configuration errors, and resource exhaustion. Now, Kubernetes is self-healing to an extent, but the application that you put into the container isn't necessarily always self-healing, and you have to know how to handle these failures when they happen.

A

Now, the chaos engineering experience today is basically like a shopping cart on an e-commerce website. You can click around. This is an example from the LitmusChaos open source project from the CNCF. You can click and say, hey, I need to run this failure against Kubernetes or Cassandra or Kafka, and there's a button you can click, and there are experiments that you can pull from. That works well, and when you click in here, you can see experiments that are easy to understand and self-service.

But ultimately, developers, QA engineers, and SREs can't manually click buttons all the time. So today a chaos experiment might just look like this, which is a declarative YAML file. Here, all we're trying to do is delete a pod from a Kubernetes deployment to see how my application behaves when it restarts or when that pod gets disrupted.
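
The YAML shown on the slide is not captured in this transcript. As a rough sketch only, a declarative pod-delete experiment can be pictured as plain data. The layout below loosely mirrors a LitmusChaos `ChaosEngine` resource, which is an assumption to be checked against the Litmus documentation; the app name, namespace, label, and durations are hypothetical.

```python
import json

# Hypothetical pod-delete experiment, expressed as plain data so it can be
# stored in version control and handed to a pipeline step.
# Assumption: the layout loosely mirrors a LitmusChaos ChaosEngine resource;
# names, labels, and durations are illustrative only.
pod_delete_experiment = {
    "apiVersion": "litmuschaos.io/v1alpha1",
    "kind": "ChaosEngine",
    "metadata": {"name": "checkout-pod-delete", "namespace": "default"},
    "spec": {
        "appinfo": {
            "appns": "default",
            "applabel": "app=checkout",
            "appkind": "deployment",
        },
        "experiments": [
            {
                "name": "pod-delete",
                "spec": {
                    "components": {
                        "env": [
                            {"name": "TOTAL_CHAOS_DURATION", "value": "30"},
                            {"name": "CHAOS_INTERVAL", "value": "10"},
                        ]
                    }
                },
            }
        ],
    },
}


def target_of(experiment: dict) -> str:
    """Return a short human-readable description of what the experiment hits."""
    app = experiment["spec"]["appinfo"]
    fault = experiment["spec"]["experiments"][0]["name"]
    return f"{fault} on {app['appkind']} {app['applabel']} in {app['appns']}"


print(target_of(pod_delete_experiment))
print(json.dumps(pod_delete_experiment, indent=2))
```

Keeping the experiment as data rather than as a sequence of button clicks is what makes it repeatable in a pipeline.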

A

So that's basically it. Now, let me dive into what we're trying to do with continuous resilience. Let's break down how reliability and resilience can help development teams: improving resilience across the software delivery life cycle ultimately gives customers an improved experience. Generally speaking, SREs, QA engineers, and developers are a team, but they do work siloed. They hand off work to each other, whether it's through a PR, through a test, or through an incident.

What we're trying to do here is this: SREs can leverage chaos engineering, maybe after an incident, to recreate that incident and see how they can fix it, if they can fix it, or perhaps increase the blast radius of that incident in a simulated environment. Then they can validate that the fix they put in for the experiment resolves it, and they can shift that learning, that test if you will, left to the QA environment.

A

So now the QA team can run that same experiment to see if there's any failure in that environment, or to see if the system's configuration drifted. Ultimately, that QA person can shift it left to the developer, so the developer can run that chaos experiment in the CI pipeline or the QA test environment. So now you have protection across the pipeline, and you're not waiting for a random incident to happen. Now you can actually avoid that incident.

A

So let's talk about innovation and achieving reliability and resilience. It's challenging to solve everything at the same time, but this year, in 2023, we need to not only move fast with high velocity, but we also need to do it efficiently, at a low cost, and with the highest reliability and resilience needed for the best customer experience. It's a mouthful. But how do we solve this? Automation is key in a pipeline. So let's talk a little bit about the cost of software development.

A

So right now there are approximately 27 million software developers globally, with an average salary of a hundred thousand, which makes the annual payroll equivalent to 2.7 trillion. That's a lot of money. And look at how much time developers spend coding. In a recent survey poll on LinkedIn, 54 percent said they spend less than three hours a day coding. That's equivalent to wrench time: three hours of wrench time per day in which they're making something, creating something, innovating.

A

The rest of the time, what are they doing? There are meetings, there's other toil, there's watching the deployment, like babysitting it, there's security testing. There are all these things, the toil that's preventing development teams from being productive. It's not that you have to code for eight hours a day, but if you can't be creative, you can't innovate, and if you're bogged down by all this toil in the deployment process, then you're not being as productive.

A

So let's look at the math behind this opportunity. Again, the annual payroll is 2.7 trillion, so what does that look like? If we can cut developer toil in half, which is doable, then you can effectively look at your developer budget increasing as well, and you can redirect that to development, whether that's being more productive with the same size team or hiring more people to build more capabilities. You don't always have to do more with less, but you can do more by cutting down this toil.
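
The arithmetic behind these figures can be sketched as follows. The developer count and average salary are the ones quoted in the talk; the 8-hour working day and the decision to count all non-coding time as toil are simplifying assumptions made here for illustration, not claims from the talk.

```python
from fractions import Fraction

# Figures quoted in the talk.
DEVELOPERS = 27_000_000
AVERAGE_SALARY = 100_000

# Assumptions for illustration only (not from the talk): an 8-hour working
# day, 3 of those hours spent coding, and all remaining time counted as toil.
HOURS_PER_DAY = 8
CODING_HOURS = 3


def annual_payroll(developers: int, salary: int) -> int:
    """Total yearly payroll for the given headcount and average salary."""
    return developers * salary


def payroll_spent_on_toil(payroll: int) -> Fraction:
    """Share of payroll that goes to non-coding time under the assumptions."""
    toil_share = Fraction(HOURS_PER_DAY - CODING_HOURS, HOURS_PER_DAY)
    return payroll * toil_share


payroll = annual_payroll(DEVELOPERS, AVERAGE_SALARY)
toil_cost = payroll_spent_on_toil(payroll)
recovered = toil_cost / 2  # cutting toil in half

print(f"annual payroll:        {payroll:,}")
print(f"spent on toil:         {int(toil_cost):,}")
print(f"freed by halving toil: {int(recovered):,}")
```

Under these assumptions the recovered amount is large mainly because the base is large; the real share of non-coding time that counts as avoidable toil will be smaller and varies by team.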

A

So again, if you're able to quickly write code to solve the problem, test it, and prototype it, and if you can deliver that to non-production or production quickly and test it with a few customers, that gives a development team the ability to test, get feedback, and iterate. Ultimately, you need to innovate to increase developer productivity and save costs. So where can you increase developer productivity? Let's break that down for reliability and resiliency: you can reduce software build time, you can reduce software deployment time, and you can reduce software debug time.

Let's dig into that last one a little bit more. Why do developers spend more time debugging right now? One thing is oversight. There are just a million things going on. You test as much as you can and you automate, but you overlook something. That's just human nature. Another is that dependencies have not been tested. It's very normal to not understand all that goes in and out of your system, especially in these managed service environments.

A

So you can't test everything. Sometimes you wait for an incident to uncover that dependency retroactively, but you would rather be proactive. Then there's a lack of understanding of the product architecture. In today's world, with thousands of microservices, can a human understand the map of everything? It's very hard to memorize that. In the old-school days of monolithic applications, sure, but microservices today are challenging.

And then sometimes the developer's code is running in a new environment. Your code should be written in a way that lets you move the workload around to different clouds, but sometimes, again, there are dependencies that are intertwined that you just don't know about. So software developers are spending a lot more time debugging, and if you think about it, debugging in production is the worst possible experience. If you think about responding to an incident, it's very stressful, and it's painful for the customers.

A

People are hunting and digging through the problem, and ultimately that is expensive, because now you have production code that's broken. You have to go back, fix it, and test it, and that's time that could have been spent on new feature development. There's also the lost opportunity cost of that customer, because maybe you lost that customer along with the transaction they weren't able to complete.

A

So that's where, going back to that other slide, we're shifting left to QA and shifting left to non-production, into the code, for the developer. If you can find these infrastructure failures and application failures earlier on, it's cheaper. This graph shows that if you fix it in a QA environment, it's a 10x reduction.

A

So if you look at this, a bug may cost ten dollars instead of a hundred dollars, and if you fix it in the code right away, before you even push it to QA, that can be up to a hundred times cheaper than fixing it in production. So these are real values that you can apply to show why it's important to test more upfront.
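
As a small worked example of these multipliers, the sketch below compares the same number of bugs caught late versus caught early. The 1x / 10x / 100x ratios come from the talk; the bug counts and the stage names are hypothetical.

```python
# Relative cost of fixing one bug at each stage, using the multipliers from
# the talk: production costs 10x a QA fix and 100x a fix made in code
# before it reaches QA.
RELATIVE_COST = {"code": 1, "qa": 10, "production": 100}


def fix_cost(stage: str, production_cost: float) -> float:
    """Cost of fixing a bug at `stage`, given what it would cost in production."""
    return production_cost * RELATIVE_COST[stage] / RELATIVE_COST["production"]


def total_cost(bugs_by_stage: dict, production_cost: float) -> float:
    """Total fix cost for a mapping of stage name to number of bugs found there."""
    return sum(
        count * fix_cost(stage, production_cost)
        for stage, count in bugs_by_stage.items()
    )


# Hypothetical bug counts: the same 20 bugs, caught late versus caught early.
caught_late = {"code": 2, "qa": 3, "production": 15}
caught_early = {"code": 15, "qa": 3, "production": 2}

print(fix_cost("qa", 100))           # the talk's example: ten instead of a hundred
print(total_cost(caught_late, 100))
print(total_cost(caught_early, 100))
```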

A

Now, if you look at cloud native developers, they're focusing on the container itself and the consumable APIs that it's using. Cloud native developers experience this at a rapidly increased pace because we are making it easier to deliver software. They're experiencing more failures because it's easier to deliver software. Containers are helping developers focus on their application and API and not worry so much about the stack underneath. But a lack of understanding or a lack of testing can cause issues across the whole infrastructure stack.

Again, look back at those common Kubernetes failures, the system instability and the resource contention. These occur when a Kubernetes cluster runs out of resources such as CPU or memory. Configuration errors occur when a Kubernetes cluster is not properly configured. Resource contention occurs when multiple components compete for the same resources. System instability occurs when the Kubernetes cluster is not stable and is regularly crashing or restarting.

Chaos experiments that should be automated in the CD pipeline, for example, include testing for resource exhaustion, configuration errors, and resource contention. Additionally, you can automate testing for the ability to recover from these unexpected events and errors, as well as the ability to scale up and down as needed. Ultimately, this can help you automate testing for the ability to detect, diagnose, and mitigate security vulnerabilities.

A

So, as developers dig into these problems and debug, they shouldn't have to dig too far to find the issue. You're testing code as fast as possible and shipping code as fast as you can, but you're not looking at the overall system. And since that container sits in an application that consumes APIs and resources on the infrastructure, the impact of an outage can extend well beyond just that container. So you have to ask yourself: are containers...?

A

So if you look at faults in these deep dependencies, what happens is that the customer-facing application is impacted, the developer jumps in to resolve the issue, and they find out that there are multiple dependencies causing it. This ends up increasing the cost of development. So now service resilience is impacted, developers are debugging it, a dependency fault is discovered, and then new resilience issues are discovered as well. This is the case of the 10x to 100x cost of bug fixes.

A

This means that cloud native developers need fault injection and chaos experimentation. Let's revisit the original use case of chaos engineering: we introduced controlled faults to reduce expensive outages. That seems important, and ultimately it is still why we introduce controlled faults. Back then, chaos testing in production was recommended, and that was a very high barrier to entry, and it was more of a game day model. Traditional chaos engineering has also been more of a reactive approach, driven by regulations, like a requirement.

But the new patterns driving chaos engineering are the need to increase developer productivity, removing that toil so developers don't have to dig for answers; the need to increase quality in cloud native environments; and the need to guarantee reliability in the move to cloud native. This need leads to the emergence of the new continuous resilience, which is basically verifying resilience through automated chaos testing, continuously.

All that means is: if you have a known failure mode that you need to protect against, and you're using a resilience mechanism, you can have a chaos test to validate that the resilience mechanism still works as expected, whether that's alerting you, triggering a failover, or just showing an error message. If you can do this continuously, you can know your system is protected across the pipeline.
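
To make the idea of a chaos test for a resilience mechanism concrete, here is a minimal, self-contained sketch. Everything in it is hypothetical: the `Service` class is a toy stand-in for a real system, the fault is "primary backend down", and the mechanism being validated is failover plus an alert. A real test would inject the fault with a chaos tool and check real health signals.

```python
class Service:
    """Toy service with a primary and a replica backend; entirely hypothetical."""

    def __init__(self) -> None:
        self.healthy = {"primary": True, "replica": True}
        self.alerts = []

    def handle_request(self) -> str:
        # Resilience mechanism under test: when the primary is unavailable,
        # raise an alert and fail over to the replica.
        if self.healthy["primary"]:
            return "primary"
        self.alerts.append("primary down, failing over")
        if self.healthy["replica"]:
            return "replica"
        raise RuntimeError("no healthy backend")


def steady_state_ok(service: Service, requests: int = 20) -> bool:
    """Steady-state hypothesis: every request is served by some backend."""
    try:
        for _ in range(requests):
            service.handle_request()
    except RuntimeError:
        return False
    return True


def chaos_test_primary_failure(service: Service) -> bool:
    """Inject a controlled fault, check the hypothesis, then roll back."""
    assert steady_state_ok(service), "system must be healthy before injecting"
    service.healthy["primary"] = False  # inject the fault
    try:
        # Pass only if traffic was still served AND the alert fired.
        return steady_state_ok(service) and bool(service.alerts)
    finally:
        service.healthy["primary"] = True  # always restore the system


def pipeline_exit_code(passed: bool) -> int:
    """A pipeline step would exit non-zero to block the release on failure."""
    return 0 if passed else 1


result = chaos_test_primary_failure(Service())
print("resilience mechanism verified" if result else "resilience gap found")
```

Running a check like this on every pipeline run, rather than once on a game day, is what turns it into a regression test for the resilience mechanism.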

So again, continuous resilience is chaos engineering across development, QA, pre-prod, and production. One way we look at this is to measure it with resilience metrics, because if you can't measure something, you don't know if you're improving it. The resilience score is the average success percentage of the steady state for a given experiment, component, or service. What that means is that your system is expected to behave a certain way during a disruption, so you can have a score associated with that: did it change or not, is it good or bad, is it up or down? If you map that to resilience coverage, that's basically the number of chaos tests executed divided by the total number of possible chaos tests, times 100.
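
The two metrics as described can be sketched directly. This is a plain reading of the definitions given in the talk, not the formula of any particular tool; the experiment names, percentages, and test counts are hypothetical.

```python
from statistics import mean


def resilience_score(steady_state_success_pcts):
    """Average steady-state success percentage (0-100) across experiment runs."""
    if not steady_state_success_pcts:
        raise ValueError("need at least one experiment result")
    return mean(steady_state_success_pcts)


def resilience_coverage(tests_executed: int, tests_possible: int) -> float:
    """Chaos tests executed divided by total possible chaos tests, times 100."""
    if tests_possible <= 0:
        raise ValueError("tests_possible must be positive")
    return tests_executed / tests_possible * 100


# Hypothetical results: percentage of steady-state checks that held
# during each experiment against one service.
results = {"pod-delete": 100, "cpu-hog": 50, "network-latency": 75}

score = resilience_score(list(results.values()))
coverage = resilience_coverage(tests_executed=len(results), tests_possible=12)

print(f"resilience score:    {score}")
print(f"resilience coverage: {coverage}%")
```

Tracking both numbers over time gives the trend mentioned later in the talk: the score says how well the system held up in the tests that ran, and the coverage says how much of the known failure space those tests touch.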

A

So again, if we look at the game day approach versus the pipeline approach: with game days, chaos experiments are executed on demand, with a lot of preparation, whereas in pipelines, chaos experiments are executed continuously and without much preparation. With the game day model, we primarily target SREs as the persona, whereas with chaos in pipelines, all personas are executing the chaos experiments. And then again, there's chaos with game days.

A

So again, traditionally, developing chaos experiments has been a challenge. Code is always changing, bandwidth is not budgeted for creating them, and the responsibility is typically not identified. SREs are usually pulled into the incident and the corresponding action tracking, and then they pull in QA or a developer. Ultimately, from identification to fix, it's not tracked to completion. So there's no idea how many more experiments to develop or what failure modes are protected against.

With continuous resilience, developing chaos experiments is a team sport across the delivery life cycle, and it's typically treated as an extension of regular tests. Chaos hubs or experiment repositories are maintained as code in Git, so you can have version control and historical information on how systems were configured. Then you know exactly how many tests need to be completed, because you have the resilience coverage metric. So it's never an unknown when you're talking to leadership about what tests you're running or how it's performing. You can actually just say: here's the test I'm running, and here's the trend.

So, in summary: resilience is a real challenge in modern, cloud native systems because of the nature of the development. Use fault injection and chaos experimentation to get ahead of the resilience challenge, and push chaos experimentation into the organization as a development culture rather than a game day culture.

Thanks for listening today. I appreciate your time. I just wanted to let you know of a community event, Chaos Carnival. It's happening March 15th and 16th. It's a two-day virtual event that's entirely free.