From YouTube: Managing add-ons across clusters

A

Hey everyone, welcome to the webinar. Today we're going to talk about how to manage add-ons across clusters. Before we get started, though, let me quickly introduce the presenters. I'm Ritesh Patel, co-founder and VP of Products at Nirmata, and with me I have Damien Toledo, who's also a co-founder and leads the engineering team at Nirmata. Hi.

A

Just to quickly introduce Nirmata, our company: we are the creators of Kyverno, an open source policy engine that is now part of CNCF. Nirmata is a platform that enables day-2 management of Kubernetes and workloads, and we've been part of CNCF for quite some time. We are an active member of CNCF and participate in various community events and SIGs, and we have customers who are using the Nirmata platform to operationalize Kubernetes for their developers.

A

So that's a quick introduction to Nirmata and what we do. In terms of the agenda today, we'll start with what's typically running inside a Kubernetes cluster, just to level set and describe what we mean by add-ons, and then look at how we are seeing enterprises enable and manage add-ons across multiple clusters.

A

So, let's get started. Typically, when you look at a Kubernetes cluster, beyond the control plane, obviously, there are a few different types of applications or components that are part of your cluster. At a minimum, initially, you need some of the required core services. These tend to be your CNI plug-in, your CSI for storage, DNS (if you're running CoreDNS), and ingress, whether it's HAProxy, NGINX, or any other ingress out there.

A

In some cases they may not be, if these add-ons need to be application specific. But some of the examples here, like the Vault agent, the Datadog agent, Prisma Cloud, and Sumo Logic: there are several of these that are typically part of any Kubernetes cluster. And, finally, the applications. These are either custom applications that the development team is building or third-party applications that need to be installed and run inside a Kubernetes cluster.

A

So these are the different types of applications running on your cluster, and today we are going to focus on add-ons. So what are these add-ons? What do they provide? How are they used? These add-ons are standardized services that need to be available in every cluster.

A

Just like any other application. So let's talk about how applications are typically deployed on Kubernetes. Obviously there are lots of ways to deploy applications, but one common way to deploy your applications, and to automate that deployment, is by using GitOps. GitOps essentially allows you to deploy your application in a declarative manner, using Git as the source of truth.

A

Now, the benefit here is that you can define your application manifests and commit them into Git, and Git now becomes the source of truth. Then, anytime you need to deploy your application to a single cluster or to multiple clusters, you can use a GitOps controller to apply the manifests to those clusters.

A

So the benefit of this is, obviously, that Git becomes a single source of truth. It also enables developer self-service, because most developers by now are familiar with Git. They've used it before, and they understand Git commands like commit, push, etc.

A

Also, it provides observability in terms of exactly what's running in your cluster, and you can validate that by looking at your Git repo. In case you end up losing your cluster, or losing the application that's running in the cluster, you can easily recover from your Git repo. So these are some of the benefits, and GitOps is becoming increasingly popular for delivering or deploying applications into Kubernetes clusters.
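
To make the "Git as the source of truth" idea concrete, here is a minimal Python sketch of what a GitOps controller's reconcile step might look like. It is not taken from the webinar or from any particular controller: the `Cluster` class, the `reconcile` function, the manifest contents, and the pruning of resources that are no longer in Git are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Hypothetical stand-in for a cluster: a name plus the manifests applied to it."""
    name: str
    applied: dict = field(default_factory=dict)  # manifest name -> manifest content


def reconcile(desired: dict, cluster: Cluster) -> list:
    """Make the cluster match `desired` (the manifests committed to Git).

    Returns the list of actions taken, so drift is visible to the caller.
    """
    actions = []
    # Apply anything that is missing or differs from what Git declares.
    for name, manifest in sorted(desired.items()):
        if cluster.applied.get(name) != manifest:
            cluster.applied[name] = manifest
            actions.append(f"apply {name}")
    # Assumption: resources that are no longer in Git are pruned.
    for name in sorted(set(cluster.applied) - set(desired)):
        del cluster.applied[name]
        actions.append(f"prune {name}")
    return actions


# The "Git repository": one declared state shared by every cluster (made-up content).
git_repo = {
    "datadog-agent": {"kind": "DaemonSet", "image": "agent:7"},
    "kyverno": {"kind": "Deployment", "image": "kyverno:1"},
}

clusters = [
    Cluster("dev-test-1"),
    Cluster("dev-test-2", {"old-addon": {"kind": "Deployment"}}),
]
report = {c.name: reconcile(git_repo, c) for c in clusters}
```

Running `reconcile` a second time against the same cluster returns an empty list, which is the property that makes recovery from Git straightforward: re-applying the declared state is always safe.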

A

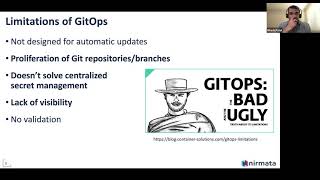

So the example we typically see is for add-ons, which need to run on every cluster that's deployed. When you do that, and you need any variations in your manifests, you end up having to create either multiple repositories or multiple branches to change your YAML manifests for those clusters. That becomes challenging, because it adds the overhead of managing all of these Git repos and branches, and so on.

A

The other aspect that GitOps doesn't solve is centralized secrets management. For example, it's typically not a good idea to store your secrets in Git, so you need a way to ensure that your secrets are stored outside, maybe in Vault. And when you do that, you need to account for it again every time you deploy something from Git.

A

So before we get more into the automation use case, let's quickly look at what a GitOps controller does. The GitOps controller is really responsible for applying changes to the cluster. Now, the controller could be something that's running inside the cluster, or it could be something that's outside the cluster.

A

There's no real requirement on where the controller is running, but it's important that the controller can address multi-cluster deployments, especially if customizations are required per cluster. The GitOps controller also provides visibility and state, essentially giving you clear visibility into which change was deployed on which cluster and whether that change was deployed successfully or not. And then the controller should also enable advanced, progressive delivery.

A

So now, let's think about add-on management and how we can automate that, especially when you're deploying across several clusters. One of the limitations of GitOps we talked about was having to create multiple repos and branches if you want to deploy to multiple clusters. In order to automate add-on management, what you can do is use Kustomize to provide a specific customization for each of your target clusters.

A

You then select a target-based customization to apply, so that each cluster has exactly the right configuration, the right YAML. The YAML is specific to that cluster, instead of just being a generic YAML, a generic configuration. That way you can address several use cases. For example, if you have specific licenses or IDs or tokens that need to be used per cluster, that can be done using customization.

A

The other advantage of using Kustomize for add-on management is that it becomes very easy to reproduce the final YAML, even offline, before applying it to the cluster, because you can just use the Kustomize CLI to reproduce or recreate the final YAML files. That helps you recreate the state that's running inside the cluster, so it's completely reproducible.
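
The base-plus-customization idea can be sketched in a few lines of Python. This is only an illustration of the concept, not how Kustomize itself is implemented: the `merge_patch` helper, the manifest fields, the secret names, and the cluster names are assumptions, and real Kustomize patches handle lists, deletions, and much more. With Kustomize itself, the final YAML is typically rendered with `kustomize build`.

```python
import copy


def merge_patch(base: dict, patch: dict) -> dict:
    """Return a copy of `base` with `patch` merged in recursively.

    Simplified on purpose: nested dicts are merged key by key,
    every other value in the patch replaces the base value.
    """
    result = copy.deepcopy(base)
    for key, value in patch.items():
        if isinstance(value, dict) and isinstance(result.get(key), dict):
            result[key] = merge_patch(result[key], value)
        else:
            result[key] = copy.deepcopy(value)
    return result


# Generic add-on manifest shared by every cluster (hypothetical fields).
base = {
    "kind": "DaemonSet",
    "metadata": {"name": "datadog-agent"},
    "spec": {"image": "agent:7", "apiKeySecret": "datadog-default"},
}

# Per-cluster customizations: only the values that differ from the base.
overlays = {
    "dev-test-1": {"spec": {"apiKeySecret": "datadog-dev-test-1"}},
    "dev-test-2": {"spec": {"apiKeySecret": "datadog-dev-test-2"}},
}

# Rendering is a pure function of base + overlay, so it can be repeated offline.
rendered = {name: merge_patch(base, patch) for name, patch in overlays.items()}
```

Because rendering depends only on files that live in Git, running it again yields the same final manifest for each cluster, which is what makes the deployed state reproducible.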

A

On the left is a specific customization for a particular target cluster. In this case, the customization is in the secret here, which is targeting, or listing, a specific cluster. On the right-hand side is a YAML that selects a specific customization for each cluster, each target, if you will. The target in this case is a Kubernetes cluster identified by a name and a label selector.
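
As a rough illustration of selecting a customization per target, the Python sketch below matches clusters by name and by label selector. The `Target` and `Cluster` classes, the overlay paths, and the rule that the first matching target wins are assumptions for this example. They do not reflect the actual file format shown on the slide.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Cluster:
    name: str
    labels: dict = field(default_factory=dict)


@dataclass
class Target:
    """Hypothetical target entry: which clusters get which customization."""
    customization: str
    cluster_name: Optional[str] = None
    label_selector: dict = field(default_factory=dict)

    def matches(self, cluster: Cluster) -> bool:
        # A name, when given, must match exactly.
        if self.cluster_name is not None and self.cluster_name != cluster.name:
            return False
        # Every selector label must be present on the cluster with the same value.
        return all(cluster.labels.get(k) == v for k, v in self.label_selector.items())


def select_customization(targets: list, cluster: Cluster) -> Optional[str]:
    """Return the customization of the first matching target, or None."""
    for target in targets:
        if target.matches(cluster):
            return target.customization
    return None


targets = [
    Target("overlays/dev-test-1", cluster_name="dev-test-1"),
    Target("overlays/production", label_selector={"env": "prod"}),
]

choice = select_customization(targets, Cluster("dev-test-1", {"env": "dev"}))
```

Selecting by label rather than by name means a new production cluster picks up the production customization as soon as it carries the right label, without any change to the repository.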

B

Thanks, Ritesh. All right, so let's first take a look at the repository that Ritesh just presented. In our case here, we actually have multiple add-ons in the same repository. You could have one repository per add-on; that works as well. So if we take the example of Datadog here (and you can also use multiple branches if you want, but here I'm using this branch), we have these clusters that we want to customize: dev-test-1, dev-test-2, etc., and we could also have production clusters.

B

Then you can select a branch in this repository, and here you can indicate that you want to apply per-target customization, and you'll be able to select the file defining the targets that we've just seen. In addition, you can define the namespace you want to use to deploy this add-on. In our case, we want to take all the YAML files from this Datadog agent directory.

B

So that's how you can declare this application, this add-on, to Nirmata. We already did that for Datadog and the Vault injector, so the next step after that is to create a cluster type. The cluster type is going to be used when you discover your cluster, when you onboard the cluster into Nirmata. Here I have an example. In this cluster type we have added our two add-ons, the Vault injector and Datadog. We also included Kyverno, which is a policy engine, and you can define in which order you want these add-ons to be deployed in your cluster.

B

So when you onboard the cluster, you just say that you want to use this cluster type. We have already registered a few clusters (we have dev-test-1, dev-test-2, and dev-test-3 here), and now what we're going to do is make a change to our repository and verify that this change is rolled out across my clusters.
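
The cluster type described above can be pictured as a named, ordered list of add-ons that is applied whenever a cluster is onboarded. The Python sketch below is a hypothetical model of that idea, not Nirmata's data model or API: the `ClusterType` class, the `onboard` function, the type name, and the specific order chosen (Kyverno first) are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class ClusterType:
    """Hypothetical cluster type: a named list of (order, add-on name) pairs."""
    name: str
    addons: list = field(default_factory=list)

    def deployment_order(self) -> list:
        # sorted() is stable, so add-ons with equal order keep their listed position.
        return [addon for _, addon in sorted(self.addons, key=lambda pair: pair[0])]


def onboard(cluster_name: str, cluster_type: ClusterType) -> list:
    """Return the deployment steps for a newly onboarded cluster."""
    return [f"{cluster_name}: deploy {addon}" for addon in cluster_type.deployment_order()]


standard = ClusterType(
    name="standard",
    addons=[(2, "vault-injector"), (3, "datadog-agent"), (1, "kyverno")],
)
steps = onboard("dev-test-1", standard)
```

Every cluster onboarded with the same type gets the same add-ons in the same order, which is what keeps a fleet of clusters consistent.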

B

So we see that, since it's a DaemonSet, we don't have any pods here. At this point the pod is being recreated, we're pulling the image, and you'll see that it's going to go to the running state. What's interesting here is that we should now be able to verify that the change has been rolled out to my cluster. Here we are looking at dev-test-1.

B

Look at the DaemonSet here. I see that the same change has been applied to all my clusters, but the key, the secret name, is different for this one. We want to have a different secret name for each cluster, so that's how you can solve what Ritesh has explained: you can have a per-target customization across multiple clusters from a central point. All right, Ritesh, back to you.