
From YouTube: Kalix: Tackling the cloud to edge continuum


A

Okay, hello, everyone. This is the first time I'm actually doing a webinar on Twitch, so I hope you can all hear me. I was thinking of taking a few minutes to talk about something that we've been working on a lot in the last years at Lightbend, and also something that I've been thinking about a lot: a little bit about where we are today, but also some thoughts on where we would like to go in the future. It revolves a lot around cloud and edge and what we're doing with Kalix, but most of these things apply to Akka as well.

This session is titled "Tackling the cloud to edge continuum", and hopefully you will get some more clarity on what I mean by that throughout this talk. My name is Jonas Bonér. I'm the CEO and founder of Lightbend and the creator of Akka, based in Sweden.

A

It's almost hard to remember how it was 10, 12, or 15 years back, when we had just started tinkering with the cloud: how hard it actually was, and how much work we had to do, reinventing the wheel over and over again. I'm talking about the world before containers, virtualization, Kubernetes, and the whole ecosystem around Kubernetes. I remember when I started Akka in 2008 or 2009, and in those first years, 2009 and 2010, all the work we had to do to make it run efficiently on-prem and also in AWS for clients, work that every client had to redo over and over again.

The state of the art is definitely amazing when it comes to cloud infrastructure, but I don't think it's all good. It has also become very, very complex. There is almost too much of the good stuff: too many good products, and too many decisions that we're all drowning in to make sure that we do what's best for the specific app, the specific use case, or the specific customer.

A

The options that we're all facing can sometimes be completely overwhelming, and almost become a blocking factor when it comes to making decisions and moving forward. This image is taken directly from the CNCF website, and it shows the vast ecosystem that we now have under the CNCF, which is completely staggering. But at the same time, how can anyone navigate this efficiently?

A

Do I need to learn about all of these products in order to choose which ones to use? And once I have chosen the five, ten, three to seven, or however many are needed, how do I make sure that they all work together? How can I compose them? Most people, including myself, have learned the hard way that when you compose distinct subsystems or products into a larger, cohesive whole, one single system, it's usually at the edges that things break. How can we ensure that our SLAs are kept when we move from one product to another and compose them? How can we ensure that data integrity, consistency, and all these hard things are maintained for us?

A

As a matter of fact, many of us are stuck building and maintaining a non-trivial application stack ourselves. Even in the simplest case it often includes a load balancer or ingress router, some sort of API gateway, and caches. We usually build our system in some sort of app framework for microservices, and often we need some eventing as well, which always means that we need an event broker, some sort of message broker.

A

We need to put it all in a database, and different types of use cases often mean different types of databases, to make sure that data storage and queries are optimized for the use case. All of this is, of course, very, very hard. Most applications then need fully staffed operations, 24x7, making sure that all of this continues to function and keeps moving forward.

A

I was really excited when I first learned about serverless. Amazon Lambda, I think, was really revolutionary when it came out; it really pointed towards the future, towards a new, better world in a way. But for me, serverless has always been a developer experience. I think it's too good, too revolutionary, too forward-thinking to be bundled together with, or used in, only one type of product.

A

The promise of serverless is that you can focus on building the code and committing it to a repository, or throwing it up into the cloud, into whatever platform you are running. As I said, FaaS really showed the way here, but in a way it got stuck halfway. It addressed communication, workflow, and data-pipelining types of use cases very, very well, but in a more stateless manner.

A

That is definitely a big piece of the puzzle in how we build applications today, but it's just a piece. It sort of ignores the hardest problem in distributed systems, which in my opinion is state. How do you manage state in a distributed system, and not just put it over in a database somewhere?

A

How can you actually manage state efficiently inside your application, inside your services? How do you communicate, coordinate, and so on? FaaS also didn't do such a good job of fully abstracting over all infrastructure. It abstracted over the message broker, to really make messaging a part of the programming model, but not necessarily over databases, caches, API gateways, and all these other things that you have. Of course it varies depending on the product, but function as a service lent itself mostly to stateless types of workloads.

A

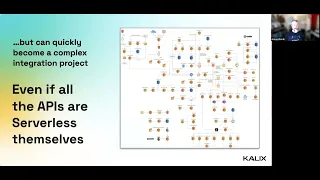

Also, when it comes to serverless, many products do call themselves serverless, which is great. I think they can provide a serverless experience, but they all do so individually: serverless databases, serverless message brokers, serverless event-based systems, serverless caches, and so on.

A

So the question that I've been pondering for the last couple of years or so is: can we do better? What lies beyond serverless, or rather, what lies beyond the current incarnation, the current state of the art, when it comes to serverless? I really believe that we can do better. We can take yet another step on the ladder of abstractions and make life easier for developers, and what I think we really need is vertical integration.

Stephen O'Grady at RedMonk wrote a great article where he talks about vertical integration, the collision between app platforms and the database. One of the quotes in this article is: "There are already too many primitives for engineers to deeply understand and manage them all, and more arrive by the day. And even if that were not the case, there is too little upside for the overwhelming majority of organizations to select, implement, integrate, operate, and secure every last component or service. Time spent managing enterprise infrastructure is time not spent building its own business."

Going back to Alfred North Whitehead's timeless wisdom: "Civilization advances by extending the number of important operations which we can perform without thinking about them." I think this quote very much applies to the software industry. We need to continue climbing the ladder of abstractions and automate, making as much as possible a commodity that we don't have to think about.

A

Of course, when you run self-managed on-prem, you own everything: the business logic, the framework, the database, the transport, the security, Kubernetes if you happen to run that, operating systems, all the way down to the hardware. The cloud got us basically halfway. The cloud providers now provide great Kubernetes services that you can just throw Docker containers onto.

A

What we need, I think, is a new category of platforms in which you can outsource everything but the business logic. There's really no reason why we can't. Future serverless platforms should be able to manage everything but the business logic for you, and still allow you to build not just a subset of the applications that you want, but general-purpose, full-blown business applications or enterprise applications.

A

At least for me, this is the vision that I have, and what I think we need to move towards. It is a great example of vertical integration, where the platform takes care of everything except the one thing that it can't, your business logic, and by doing so removes all the complexity of setting it up, running it, managing it, upgrading it, and so on, for the whole lifetime of the application.

A

So that is where we are with cloud today, and where I think we need to go. This is, of course, something that we've been working on a lot at Lightbend, and I think we have a solution to this vertical integration problem in Kalix, but I'll get back to that later. I just want to say some things about edge computing as well.

A

Edge, I think, is really the natural extension of the cloud. It's not really a separate thing; I see it as a continuum, as I'll talk more about later. Gartner said in 2021 that edge computing "is actually being implemented today in many of our clients' environments, enabling entirely new applications and data models. Simply put, edge has moved from concept and hype into successful vertical industry implementations, with general-purpose platform status approaching rapidly." I can really echo this.

A

When talking to our clients, many of them in the Global 2000 category, we see that they are really starting to embrace edge. But many do so in an ad hoc way, and many of them, I won't say all, are struggling with how to combine cloud and edge. I think that's unfortunate, as I'll get to in just a second.

A

There has of course been a lot of hype around edge over the last five years or so, but it's definitely not just talk. The edge is already here, at least in my experience from talking to a lot of these companies. We already have a really well built-out edge infrastructure. Most of the cloud providers offer small data centers out at the edge. They're scaled down, but they still offer a programming environment and ops environment very similar to the one in the regular cloud; you can often run Kubernetes and the like in these edge clusters. CDN networks now allow you to attach compute to the static data that they have always served.

A

We've seen companies like Cloudflare, Fastly, and so on coming up with programming models that allow you to run computations out there. Furthest out are probably the devices themselves, but before we make the jump to the devices, we have the telcos, which allow running applications inside the actual cell towers for the lowest latency possible. 5G and this new trend of local-first software are really changing the game here. How 5G will change the game is too big a topic to go into, but I really think that it will enable a completely new category of use cases and new ways for companies to serve their customers.

A

To give you some numbers, there is really a huge market on the rise here. According to Gartner, it was already around 4.6 billion in 2020, and it is predicted to go up to 61.1 billion, which is quite a rapid rise. And what kind of edge use cases do we see out there? This is just a short list.

A

There are of course many more, but some of the ones that I have personally been involved in are autonomous vehicles, retail, trading systems, healthcare, emergency services, factories, and stadium events, serving sports, concerts, and these types of things very efficiently locally. Gaming, of course, often falls into that category as well, along with farming, financial services, smart homes, and so on. So there's a growing list of edge use cases here that can really change the game. There's also a tsunami warning here.

A

Gartner predicts that in 2025 about 75 percent of all enterprise-generated data will be created and processed at the edge itself. That is an increase from about 10 percent today; I think "today" was actually last year, if I remember correctly. This is a game changer, and it of course means that we have to keep as much data at the edge as possible.

A

We have to move processing to the edge, so that we can tackle as much data as absolutely possible out there, rather than having to channel it all the way back to the backend cloud, process it there, and then go back out with the intelligence, the answers, the insights that you get from processing that data. We need to be able to process it out at the edge itself, and I've already touched on that.

A

The benefits are obvious: real-time processing, meaning faster answers to the users. Things can be way more resilient, because we don't rely on a stable connection to the backend cloud. And it can also be a lot more resource-efficient and environmentally friendly, helping to tackle climate change by consuming less energy, which is something we all want to do these days.

A

So all of this is quite exciting. That said, it's of course all trade-offs, and I'll go into that in a second. First I just want to say that edge means different things to different people. The way I see it, it is a hierarchy of layers. It's not black and white; it's not that you're either in the cloud or at the edge with nothing in between. There is a lot of gray area here.

A

There are definitely more than two layers, I think, but if we have to be reasonably coarse-grained here, we have at least cloud, near edge, far edge, and the devices. Some call the far edge "edge clusters", some call the near edge "regional"; we haven't really settled on a good common vocabulary here, I think. But the further out you go, the more nodes, the more points of presence there are, all the way out to the devices, where there can be literally hundreds of millions, depending on what kind of use case you have. The interesting thing is that each one of these layers has its own opportunities for us as developers, but also its limitations, and sometimes we need to lean into the constraints and the limitations to really get those opportunities.

A

Let me walk you through a little bit of the way I see it. On the left-hand side you have further out, towards the devices, and on the right-hand side you have further in, towards the cloud. Further out towards the devices you have, as I said, more and more things: tens of thousands or hundreds of thousands of PoPs to coordinate, while further in, in the cloud, there are usually tens or up to thousands of nodes to coordinate. Further out you usually have more unreliable networks and hardware, while further in you can usually trust the networks. The hyperscalers' public clouds today are usually very, very reliable, comparatively at least, and the same holds for hardware.

Further out you have more limited resources and computational power, which of course influences the way you design and think about these things, while further in towards the cloud you have vast resources and compute power. Further out lends itself more to real-time, low-latency processing; as we talked about, you can compute directly out there and return with the answer immediately. Further in you have more batch, high-latency processing.

A

Further out, we can take local decisions: faster decisions, but also less accurate decisions, because there's less computational power and we usually want to return answers in real time. Further in towards the cloud, we can of course look more globally and make decisions on a more global data set. It's definitely slower, but better in the sense that we can take more intelligent decisions.

So I often see a hybrid approach, where we can get reasonably good decisions immediately back to the user, while we also channel all the data back to the cloud for more batch-style processing. It's probably not batch processing these days, but something more aligned with batch processing, which takes more time and does a more thorough analysis. Once we've reached some sort of intelligence, we can funnel that back out to the edge, where it can influence the edge services out there.
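
As a rough illustration of the hybrid approach described here (this sketch is not from the talk, and every name in it is hypothetical), an edge node can answer immediately from local state, buffer the raw data, and later hand it to a cloud-side component whose slower analysis produces a refined parameter that is pushed back out. The "thorough analysis" is assumed to be nothing more than a mean with a 20% margin, purely to keep the example small.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List


@dataclass
class CloudAnalyzer:
    """Hypothetical stand-in for the slower, more global analysis in the cloud."""
    readings: List[float] = field(default_factory=list)

    def ingest(self, batch: List[float]) -> None:
        self.readings.extend(batch)

    def refined_threshold(self) -> float:
        # Assumed "global insight": mean of everything seen so far plus a 20% margin.
        return mean(self.readings) * 1.2


@dataclass
class EdgeNode:
    """Hypothetical edge service: decides locally, syncs with the cloud later."""
    threshold: float
    buffer: List[float] = field(default_factory=list)

    def decide(self, reading: float) -> bool:
        # Fast, local, possibly less accurate decision returned right away.
        self.buffer.append(reading)
        return reading > self.threshold

    def sync(self, cloud: CloudAnalyzer) -> None:
        # Channel buffered data back to the cloud, then pull the refined
        # threshold back out to the edge so it influences later decisions.
        if not self.buffer:
            return
        cloud.ingest(self.buffer)
        self.buffer = []
        self.threshold = cloud.refined_threshold()


cloud = CloudAnalyzer()
edge = EdgeNode(threshold=50.0)
alerts = [edge.decide(r) for r in (10.0, 20.0, 30.0)]  # answered locally
edge.sync(cloud)  # cloud insight now shapes the edge's next decisions
```

The point of the split is that `decide` never waits on the cloud; only `sync` crosses the network, and it can run whenever a connection happens to be available.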

Further out is definitely more resilient and available, from the perspective of the system and from the perspective of the user. That said, the hardware is less reliable, which definitely affects the way you design. But having data, compute, and the user in the same place lends itself towards more resilient and more available systems, and, conversely, things are less resilient and available from the perspective of the user out there if you put them in the cloud. Further out also requires more fine-grained data replication and mobility. Compute and state need to move with the user as the user moves. Think, for example, of autonomous vehicles, actually driving physically across the country.

A

So what do we need to tackle all of these very different requirements? One of the cornerstones of the way I've always thought about software comes from the actor model, in which you have autonomous, self-organizing components. We call them actors. I think that's a model that works very well out at the edge.
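
For readers who have not met the actor model, the following is a minimal sketch of the idea using only the Python standard library. It is not Akka's or Kalix's API, and the `CounterActor` name and its string messages are made up for illustration. The actor owns its state privately, receives messages through a mailbox, and processes them one at a time.

```python
import queue
import threading
from typing import Optional


class CounterActor:
    """A tiny illustrative actor: private state, a mailbox, one message at a time."""

    def __init__(self) -> None:
        self._mailbox: queue.Queue = queue.Queue()
        self._count = 0  # only ever touched by the actor's own thread
        self._thread = threading.Thread(target=self._run, daemon=True)
        self._thread.start()

    def tell(self, message: str, reply_to: Optional[queue.Queue] = None) -> None:
        # Asynchronous send; callers never touch the actor's state directly.
        self._mailbox.put((message, reply_to))

    def _run(self) -> None:
        # Messages are handled sequentially, in the order they were enqueued.
        while True:
            message, reply_to = self._mailbox.get()
            if message == "increment":
                self._count += 1
            elif message == "get" and reply_to is not None:
                reply_to.put(self._count)
            elif message == "stop":
                break


actor = CounterActor()
for _ in range(3):
    actor.tell("increment")
replies: queue.Queue = queue.Queue()
actor.tell("get", replies)
result = replies.get(timeout=1)
actor.tell("stop")
```

Because all interaction goes through messages, the caller does not need to know whether the actor lives in the same process or somewhere else, which is what makes the model a plausible fit for components spread across cloud and edge.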

A

As I said, we need physical co-location of data, compute, and end user, usually abstracted by these autonomous, self-organizing, actor-ish types of components. We also need intelligent and adaptive placement of data, compute, and user, so we need some intelligence in knowing where data should be. Ideally, if possible, we predict where the user will be, so that we are able to put state right there in advance.

A

That's not always possible, and it can sometimes be very hard to do, but we should at least do it with very low latency, on demand. There's a lot of very interesting research that Martin Kleppmann and many others are doing around local-first cooperation, or local-first software, in which you invert the way you look at it.

A

The system should work completely fine entirely locally. If we happen to have a cloud that we can use, sometimes or all the time, then we use it, but we are not reliant on always using a database over there or some cloud services over here. We can function fine inside factories, for example, or inside stores, and so on. There's a lot of interesting stuff going on.

A

The way we're looking at this, and the way we have solved it in Kalix, is that we have options for what we call state models. They're like shapes of your data that can be tied to a specific consistency model, a specific replication model, and so on. It's a slightly higher-level way of reasoning about replication and consistency, just by looking at what my state model means and what kind of semantics it has.
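
To make the idea of a data shape that carries its own replication semantics concrete, here is a small sketch of a grow-only counter, a well-known conflict-free replicated data type (CRDT). It is offered only as one example of the general idea; it is not Kalix's state model API, and the class and replica names are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class GCounter:
    """Grow-only counter: one slot per replica, merged by element-wise max."""
    replica_id: str
    slots: Dict[str, int] = field(default_factory=dict)

    def increment(self, amount: int = 1) -> None:
        # A replica only ever writes to its own slot.
        self.slots[self.replica_id] = self.slots.get(self.replica_id, 0) + amount

    def merge(self, other: "GCounter") -> None:
        # Merge is commutative, associative, and idempotent, so replicas can
        # exchange state in any order, any number of times, and still converge.
        for rid, n in other.slots.items():
            self.slots[rid] = max(self.slots.get(rid, 0), n)

    @property
    def value(self) -> int:
        return sum(self.slots.values())


edge_a = GCounter("edge-a")
edge_b = GCounter("edge-b")
edge_a.increment(2)   # both replicas accept writes locally, without coordination
edge_b.increment(3)
edge_a.merge(edge_b)
edge_b.merge(edge_a)
edge_a.merge(edge_b)  # repeating a merge changes nothing
```

Choosing this shape already answers the consistency question: the counter is eventually consistent and never needs a lock or a leader, which is the kind of reasoning from the data shape that the passage describes.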

And then, of course, end-to-end guarantees. We need the system to take full responsibility, all the way. Naturally, that's very hard if you as a developer have to stitch together multiple different pieces that you don't own, that you perhaps just downloaded or bought. We need platforms that can really take responsibility for end-to-end guarantees of the SLAs. So my vision is that what we need is what I call the cloud-to-edge data plane.

A

This is an abstraction or platform that allows us to build applications in this cloud-to-edge continuum, because the way I view it, this really is a continuum. Whether you run in the cloud or at the edge should not be a design decision. You definitely don't want to hard-code that, so it shouldn't be a development decision either. It should ideally not even be a deployment decision; it should be a runtime decision. But it should at least be a deployment decision, where you can choose to say, "I want to put this thing over here and that thing over there."

As always, though, it's really hard to predict how the application will be used, for example if you get what we used to call "slashdotted" when I started, or on something like Black Friday. The system needs to adaptively scale and work with how it's actually being used right now, which means scaling up, scaling down, and moving things around. So adaptiveness is extremely important here. It's of course also really important that these services are truly location transparent, so that they can run anywhere, from the public cloud out to thousands of PoPs at the edge.

A

So how can we then find the right abstractions? Timothy Keller said that "freedom is not so much the absence of restrictions as finding the right ones, the liberating restrictions." I've learned, often the hard way, to really appreciate constraints and the fact that constraints can actually be liberating. That's not just true for software, of course; it's true for everything. But it has been something that has guided the way I've been thinking about software for many years, and the way I've been designing products and APIs: the right type of constraints are often useful, and they should be first class. That has always been a hallmark in building Akka, that the constraints of the network, the constraints that we see in distributed systems, should be first class, and that this can be liberating in how we design software.

A

If we then try to distill the "ultimate" programming model for this cloud-to-edge continuum (ultimate in quotes, since there is of course no such thing), or at least take one step towards a better one, I really think that there are three main things, and these are the three things that we as developers, in my opinion, can never delegate.

A

The logic, so to speak: how to mine intelligence, how to act and operate on data, how to transform, downsample, relay, or trigger side effects, and what is side-effecting and what is not. How to use workflow and communication patterns like point-to-point, pub-sub, streaming, and broadcasting. And how to pull it all together, the data model, the API, and all these things around the business logic, into one single programming model.

A

We

sort

of

tried

to

look

beyond

the

current

state

of

the

art

of

serverless

today

and

not

jobs

abstracting.

You

know

what

what

functions

service

does,

but

but

well,

actually

abstract

the

way

all

infrastructure

into

single

declarity

Pro

programming

model,

which

means

that

you

don't

have

to

think

about

the

database

anymore.

You

don't

see

the

database,

you

never

see

the

event

Brokers,

you

never

see

the

caches,

no

API

gateways,

no

service

meshes,

you

can

just

declaratively

configure

security

and

so

and

and

so

on?

And

it's

and

it's

fully

polyglot

you

know.

A

Lightbend

has

a

history

of

building

tools

for

the

JVM

with

Java

and

Scala,

you

know

Play

Framework

and

Akka

and

so

on,

but

Kalix

is

fully

polyglot.

You

can

use

it

from

from

many

many

different

languages,

the

ones

we

we

are

officially

supporting

right

now,

only

like

four

months

after

the

launch,

are

Java,

JavaScript,

TypeScript

and

Scala.

A

But

you

know

there

are

SDKs

in

many

other

languages

and

and

as

there's

a

need

and

demand,

we

will

definitely

add

more.

It

really

tries

to

unify

this,

You

know

the

idea

of

cloud

native

and

Edge

native

development

into

one

single

programming

model.

You

know

abstracting

enough

for

the

runtime

to

take

the

decisions

of

how

to

most

efficiently

move

data

around

and

and

and

and-

and

you

know,

allowing

you

through

the

state

models

I

talked

about,

to

at

a

high

level

define

A

You

know

the

the

constraints

that

you

want

to

have

on

that

data

in

terms

of

of

consistency

and

the

guarantees

and

data

Integrity

to

get

guarantees

is,

is

reactive

at

its

core

low

latency

have

to,

but

it's

all

running

on,

Akka,

gRPC

and

Kubernetes,

and

it's

really

sort

of

embodies

this

vertical

integration

as

a

service.

You

know

you

have

essentially

all

of

that

in

Kalix

we

abstract

everything

but

the

business

logic

and,

as

I

said

you

know

the

data

modeling

and

the

API

definitions.

A

But

that's

all

you

need

to

do

the

rest

is

on

us,

and

we

operate

it;

it's

fully

managed.

We

operate

everything

for

you.

So

the

question,

then,

how

do

you

build

a

service

in

this?

It's

it's

three

steps:

API

description,

defining

your

state

model

and

then,

finally,

writing

your

business

logic.

So

I'll

show

you

a

little

example

here

in

this

sample:

I'm

going

to

show

you

our

our

declarative

sort

of

API,

first

contract,

first

SDK

and

we're

we

are

working

on

a

code

first

version.

A

The

first

one

will

come

out

is

for

for

spring

developers.

We

use

spring

annotations

to

to

Define.

You

know

things

declaratively,

but

right

in

the

code,

but

here

in

this,

since

that

hasn't

been

released.

Yet

I'm

going

to

show

you

here,

one

that

is

based

on

protobuf

for

defining

the

schema,

but

the

same

ideas

hold:

that

you

first

define

your

API

and

your

data.

A

So

in

the

in

this

example,

let's

create

a

simple

shopping

cart

and

I'm

just

going

to

show

you

not

not

all

of

the

code,

but

some

of

the

more

important

you

know

pieces

of

it.

So

you

so

you

get

the

idea

of

how

to

work

with

it.

So

so,

let's

now

Define

the

events

and

the

and

the

data

model.

We

start

by

defining

this

AddLineItem

event

and

there's

one

you

know

key

answer

of

annotation

as

as

it's

called

in

protobuf

language,

which

is

this

entity

key.

A

So

we

we

set

a

user

ID

and

we

tag

that

with

the

entity

key

option,

(kalix.field).entity_key,

and

this

then

becomes

your

your

primary

key

and

and

which

is

used

for

routing,

for

querying,

for

sharding,

and

many

different

things

yeah.

But

apart

from

that,

then

we

just

have

the

product

ID.
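
A minimal sketch of why one key can serve for both routing and sharding, assuming a simple hash-based placement. This is not Kalix's actual routing code: the function names `fnv1a` and `shardFor`, the shard count, and the field names `userId` and `productId` are all hypothetical and only illustrate the idea that the same entity key always lands on the same shard.

```javascript
// Hypothetical illustration only; not taken from the Kalix runtime.

// FNV-1a style 32-bit hash over the UTF-16 code units of the key.
function fnv1a(key) {
  let hash = 0x811c9dc5;
  for (let i = 0; i < key.length; i++) {
    hash ^= key.charCodeAt(i);
    // Multiply by the FNV prime and keep the result as an unsigned 32-bit value.
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash;
}

// Map an entity key (here, the user ID) to one of `shardCount` shards.
function shardFor(entityKey, shardCount) {
  return fnv1a(entityKey) % shardCount;
}

// A message shaped like the one described in the talk: a key plus a product ID.
const addLineItem = { userId: "user-42", productId: "sku-1001" };

// Every message carrying the same userId is routed to the same shard.
const shard = shardFor(addLineItem.userId, 8);
console.log(`entity ${addLineItem.userId} -> shard ${shard}`);
```

Because the placement depends only on the key, the runtime can decide where an entity lives without the business logic knowing anything about it.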

So

so,

let's

now

Define

an

API

that

sort

of

that

that

makes

makes

use

of

this.

A

A

So,

and-

and

as

you

can

see

here,

we

have

the

the

ability

here

to

add

options.

You

know

optional

annotations

here.

We

here

we

add

a

google.api.http

annotation

that

allows

us

to

add

sort

of

a

POST

URI

to

this,

which

means

that

you

know

it

will

automatically

generate

an

HTTP

endpoint

it

will.

It

will

like

by

default,

also

generate

a

gRPC

endpoint

since,

since

you

know

this,

this

is

protobuf.

A

You

know

it

goes

hand

in

hand

with

with

gRPC,

but

it

will

additionally

sort

of

generate

also

an

HTTP

endpoint

here

and

and

then

we

also

add

one

option

for

Eventing

here

in,

in

which

we

can

Define

the

in

and

out

and

in

reads

the

event

from

the

event

log

and

we're

here

we

can

Define

that,

as

as

you

know,

the

event

log

shopping

cart,

and

then

for

out

we

define

a

topic,

shopping

cart

events.

So

that's

that's,

essentially

everything

we

need

to

do

when

it

comes

to

to

the

API.

A

Of

course,

there

might

be

more.

You

know

HTTP

endpoints

or

gRPC

endpoints

that

you

want

to

create.

But

but

you

know

the

same

logic

holds

here.

The

second

thing

we

do

is

we

Define

a

state

model,

sort

of

the

shape

of

our

domain

data.

Of

course,

it's

extremely

important

that

you

take

a

lot

of

time

on

defining

your

domain

data.

A

A

We

have

said

that

it

should

be

of

the

type

value

entity

which,

which

is

our

way

of

saying

that

it

should

be

a

key

value

type,

but

we

can

just

change

that

to

event

sourced

entity

by

changing

one

single

line

of

code

here

and

the

third

state

model

that

I

haven't

talked

about

is

sort

of

data

structure,

type

backed

by

CRDTs.

That

are,

you

know,

a

very

efficient

and

very

very

in

you

know,

reliable

and

an

available

way

of

of

replicating

data.
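
To make the difference between the first two state models concrete, here is a small sketch under stated assumptions. It does not use the Kalix SDK; the function names and the `ItemAdded` / `ItemRemoved` event shapes are invented for illustration. A value entity keeps only the latest state, while an event sourced entity keeps the events and rebuilds the state by replaying them.

```javascript
// Hypothetical illustration only; not the Kalix SDK.

// Value-entity style: the stored thing is the latest state itself.
function addItemToCart(cart, productId) {
  return { ...cart, items: [...cart.items, productId] };
}

// Event-sourced style: the stored thing is the list of events.
// This function applies one event to the current state.
function applyEvent(cart, event) {
  switch (event.type) {
    case "ItemAdded":
      return { ...cart, items: [...cart.items, event.productId] };
    case "ItemRemoved":
      return { ...cart, items: cart.items.filter((id) => id !== event.productId) };
    default:
      return cart; // unknown events leave the state unchanged
  }
}

// Rebuild the current state by folding over the event log from an empty cart.
function replay(events) {
  return events.reduce(applyEvent, { items: [] });
}

const log = [
  { type: "ItemAdded", productId: "sku-1" },
  { type: "ItemAdded", productId: "sku-2" },
  { type: "ItemRemoved", productId: "sku-1" },
];
console.log(replay(log));
```

Both styles end up with the same kind of cart object; what differs is whether the state or the history is the source of truth.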

A

Yeah

then

we

also

have

the

option

here

of

defining.

You

know

how

we

should

query

our

data.

We

we

have

the

notion

of

or

or

the

concept

of

views

here.

So

we

can.

We

can

add

a

a

gRPC

method,

let's

say

GetCarts,

that

returns

a

stream

of

carts,

and

here

we

can

say:

okay

now

I

want

to

query

my

my

data

model

by

creating

you

know

some

sort

of

materialized,

streamable,

adaptive,

View

and-

and

you

do

that

by

simply,

you

know,

defining

a

select

statement

that

are

it's

not.

A

A

Then

we

also

have

the

option

of

adding

an

HTTP

annotation

for

this,

and

in

this

case

we

we

define

it

as

a

GET

at

/carts,

which

means

that

you

can

then

get

this

stream

from

that

URL.

we're

also

currently

working

on.

We

haven't

released

that

yet,

but

working

on

supporting

joins,

which

is

one

of

the

highest

demanded

features

here.

A

So

so

now

we've

defined

the

API

and

our

data

model,

and

the

third

thing

is

that

we

need

to

write

our

business

logic,

and

here

we

can

choose

whatever

like

favorite

language

we

might

have

here.

I

I

chose

to

to

show

you

know

some

JavaScript

code,

so

so

the

the

only

thing

we

need

to

do

here

is

is

to

Simply

Define

a

business

logic

function.

A

It's

essentially,

you

know

one

line

or

a

couple

of

lines

of

code

and

and

what's

what's

really

interesting

here,

if

you,

if

you

look

at

this

function,

add

item

that

it

has

three

different

arguments

here

and

those

are

things

that

are

injected

into

the

function

as

needed.

So

when

an

event

arrives

it's

injected

into

this

function

and

the

function

is

invoked

for

example,

and

in

this

case

an

AddItem

event,

but

we

also

inject

the

state.

A

You

know

the

state

is

outsourced.

It's

managed,

I'll

talk

just

more

about

that

just

in

a

second,

but

is

outsourced

or

managed

on

behalf

of

the

function.

Just

as

communication

Eventing

is

managed

on

behalf

of

the

function,

we

also

manage

state

on

behalf

of

the

function

and

inject

that

accordingly,

and

only

inject

that

when

there's

new

state

available,

you

always

have

the

latest

State

without

having

to

go

and

pull

it.

You

know

all

the

time

and

and

and

by

doing

so

we

can

ensure

that

it's

always

as

efficient

as

absolutely

possible.

A

There's

no,

you

know

we

don't

have

there's

no

responsibility

from

the

function

to

do

to

to

to

to

think

anything

about

communication

or

State

Management.

It's

just

it's

just

injected

into

the

function

on

an

as

needed

basis,

and

then

we

also

inject

the

context

for

you

to

to

do.

You

know

additional

things

you

know

within

inside

the

Kalix

environment.

That's

it

essentially

it's

extremely

simple!
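
A rough sketch of the shape described above: a business logic function that receives the event, the current state, and a context, all injected by the runtime. Everything here is an assumption made for illustration. The handler signature, the `ctx.emit` and `ctx.fail` helpers, and the `invoke` stand-in for the runtime are hypothetical and are not the real Kalix JavaScript SDK.

```javascript
// Hypothetical illustration only; names and signatures are not the Kalix SDK.

// Business logic: three injected arguments (event, state, context).
// The function never fetches state or talks to a broker itself.
function addItem(addItemEvent, cart, ctx) {
  if (!addItemEvent.productId) {
    return ctx.fail("productId is required");
  }
  // Tell the (stand-in) runtime what happened; delivery is not our concern.
  ctx.emit({ type: "ItemAdded", productId: addItemEvent.productId });
  // Return the new state; persisting it is also left to the runtime.
  return { ...cart, items: [...cart.items, addItemEvent.productId] };
}

// Stand-in for the runtime: builds a context, injects the latest state,
// invokes the handler, and collects whatever the handler emitted.
function invoke(handler, event, state) {
  const emitted = [];
  const ctx = {
    emit: (e) => emitted.push(e),
    fail: (message) => {
      throw new Error(message);
    },
  };
  const newState = handler(event, state, ctx);
  return { newState, emitted };
}

const result = invoke(addItem, { userId: "user-42", productId: "sku-1001" }, { items: [] });
console.log(result.newState, result.emitted);
```

The handler stays a plain function of its inputs, which is what lets the platform own communication and state management on its behalf.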

A

You

know

one

of

the

key

Innovations

I

just

want

to

say

talk

a

little

bit

more

about.

That

is

that

you

know

we

you

know

function

as

a

service

allows

you

to

fully

abstract

over

communication.

You

don't

need

to.

You

know

care

about

how

the

event

arrived

into

your

function.

It's

just

injected

into

your

function.

You

know

and

you're

invoked

whenever

an

event

comes

in

sort

of

triggers

the

function

and

once

you're

done

with

your

business

logic,

doing

some

sort

of

action,

then

you

just

emit

an

event.

Then

you're

done.

A

That's

all

that

there

is

to

it.

You

know

it's

not

your

responsibility

to

care

at

all

about

how

events

you

know

are