From YouTube: Cloud Native Live: Automate & orchestrate databases & other stateful workloads w/ Kubernetes

A

This is an official live stream of the CNCF and, as such, is subject to the CNCF code of conduct. Please do not add anything to the chat or questions that would be in violation of that code of conduct. Basically, please be respectful of all of your fellow participants and presenters. With all that, I'll hand over to Alex to tell us about this amazing idea: how to automate Kubernetes-based state.

B

So, a little bit about myself.

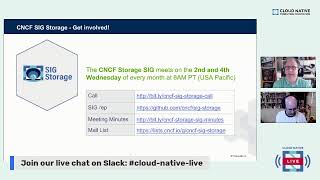

My name is Alex Chircop. I'm one of the founders and the CEO of StorageOS, where we've been building a software-defined platform for cloud native storage. I do have two hats: I'm also the co-chair of the CNCF Storage SIG, so feel free to ask any type of questions around cloud native storage during this talk, and I'll happily try to answer to the best of my capability.

B

My background before starting up StorageOS was actually working in infrastructure engineering of large platforms with some large financial services. I mentioned the CNCF Storage SIG. I want to make a little plug here: we're always looking to expand the community and have people join the SIG and the SIG meetings. We cover a lot of things, including project presentations that...

B

...as well as projects that are just considering joining the CNCF. We also do a lot of work on different documents and information that we think would be really useful for the end user communities and for organizations, developers and teams that are deploying cloud native technologies. So I wanted to cover that off. We often have this discussion around: what is a stateful application? Why is cloud native storage so important? So I'll start off with something maybe a little contentious.

A

B

...applies to the storage too, as much as possible. We want cloud native storage to be composable and declarative. In much the same way that you can specify which containers your application uses, the amount of CPU and RAM, and perhaps the network topology or the service mesh that your applications need, developers should also be able to compose their storage environment. And, of course, for that to be possible, the storage environment needs to be API driven and needs to integrate...

B

The other thing is platform agnostic. So what do we mean by this? Here I'm talking about using software options, or perhaps services, which allow you to use the same kind of storage or the same declarative interface wherever you are, whether you're developing on a laptop, on premises, on big bare metal or cloud environments, or whatever the mix is. You really want the cloud native environments to be the same in all those environments, so that your development environment and your production environment can relate to each other and can work together.

And finally, agile. I do admit that this is incredibly cliché, but what do I mean by agile? Well, like I said, in cloud native environments the rate of change is high. They are designed to scale on demand. They are designed to have...

A

B

So when we looked at all of this in the CNCF SIG, we realized that there was this huge, broad landscape where storage is defined, and it covers lots of different things: everything from distributed storage systems to simple local systems, to databases, key-value stores, object stores and other things like that. So storage in the cloud native world is a particularly broad topic. In order to help people understand this...

B

We cover some of the things around the attributes of the storage system, because it's more important than ever that developers can understand what their application needs and, therefore, what attributes of a storage system they're looking for, and how the different layers in a storage solution affect the different attributes. What we often see is that storage systems in cloud native environments have any number of layers, and each of those can affect different aspects of, say, availability or scale.

A

B

A

B

Some of the key things are, obviously, things like availability and scalability, but very often performance is a key consideration too, and we mustn't forget things like consistency and durability. What we found was that these different attributes could be measured across a number of different vectors. So, for example, there's the ability to fail over and have applications access storage in different locations; being able to move data between nodes and protecting the data against, say, failures was another factor.

B

Similarly, scalability could be in terms of, say, the number of clients that can access the storage system, or the number of operations, which might be important for a database, where it's all about the transactions per second. But scalability could also be measured in throughput, which could be really important if you're running workloads like analytics or something like Elasticsearch, for example.
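To make the difference between operations per second and throughput concrete, here is a small worked calculation in Python. The workload figures are made-up illustrations, not measurements from the talk, and the function name is an assumption.

```python
# Throughput = operations per second x size of each operation.
# All workload numbers below are hypothetical, chosen only for illustration.

def throughput_mib_per_s(ops_per_second: int, block_size_kib: int) -> float:
    """Return sustained throughput in MiB/s for a given op rate and block size."""
    return ops_per_second * block_size_kib / 1024


# A transactional database: many small operations.
database = throughput_mib_per_s(ops_per_second=10_000, block_size_kib=8)

# An analytics scan: far fewer, much larger operations.
analytics = throughput_mib_per_s(ops_per_second=500, block_size_kib=1024)

print(f"database:  {database} MiB/s")
print(f"analytics: {analytics} MiB/s")
```

In this sketch the database issues twenty times more operations per second yet moves far less data, which is why the two workloads tend to stress a storage system in different ways.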

And it's very rare that one storage system is going to be able to do everything.

B

And of course, the storage topology plays a huge part in this. So, for example, distributed topologies might affect latency. Sharding is often a tool that's used to allow workloads to scale better and to support a large number of clients. And hyperconverged is very popular today, because it allows compute and storage workloads to share the same environment.

And of course, the orchestration, the host and the operating system can have any number of different abstractions there. So, for example, if you're accessing a database or a service, you might have ingress controllers or load balancers in the path, and if you're accessing volumes there might be volume managers and things like that.

B

Starting off from local, which tends to offer really great low-latency performance and fast throughput, but obviously poor availability and durability. Remote allows you to disaggregate the architectures and, more commonly nowadays, distributed systems make it easier to scale and to provide storage capabilities across a large number of components or nodes and capacity.

B

One thing: although a lot of the development thus far has been on putting in container orchestration around volumes, we are now seeing developments with the new KEP called COSI, which is talking about creating abstraction layers for object stores as well. But ultimately, the data access interface also impacts a number of attributes within the system.

What's important is that we don't necessarily consider the different attributes purely on a data access interface. So, for example, object stores typically have slightly higher latency than, say, a block volume or a file system, because object stores have a more distributed nature and the API itself obviously adds overhead.

B

But it's not always safe to assume that, say, a block or a file system volume will always have lower latency than an object store. In fact, there are any number of distributed storage volumes that could actually be built on top of object store back ends, in which case some of the attributes that volume would inherit are based on those back-end object stores, in terms of availability.

B

So in the white paper we tried to put together a few comparisons of how the different access interfaces compare to each other. These are generally accepted attributes but, like I said, they don't always apply, because very often the layering means that different systems can be built on different underlying components.

B

Workloads will be using the data interface to talk to the data plane component of the storage system, but the orchestrator will be interfacing with the control plane of that storage system. This would be in order to dynamically provision resources, perhaps, and also to do things like, for example, attach resources or move resources to different nodes, depending on where the workload moves. At this time, the standard control plane interface for volumes within Kubernetes is CSI, the Container Storage Interface.
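As a rough sketch of that split, the toy Python class below models only the control-plane side: the orchestrator asks the storage system to create a volume and then to attach it to whichever node the workload lands on. The method names echo the CSI RPCs `CreateVolume` and `ControllerPublishVolume`, but this is an in-memory illustration rather than the real gRPC interface, and the class, field and volume names are all assumptions.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Volume:
    name: str
    size_gib: int
    attached_to: Optional[str] = None  # node currently using the volume


class ToyControlPlane:
    """In-memory stand-in for a storage system's control plane (hypothetical)."""

    def __init__(self) -> None:
        self.volumes: Dict[str, Volume] = {}

    def create_volume(self, name: str, size_gib: int) -> Volume:
        # Idempotent: asking twice for the same name returns the
        # existing volume instead of creating a second one.
        if name not in self.volumes:
            self.volumes[name] = Volume(name, size_gib)
        return self.volumes[name]

    def publish_volume(self, name: str, node: str) -> Volume:
        # Attach the volume to a node; publishing to another node models
        # the volume following the workload when it is rescheduled.
        volume = self.volumes[name]
        volume.attached_to = node
        return volume


control_plane = ToyControlPlane()
control_plane.create_volume("db-data", size_gib=5)
control_plane.publish_volume("db-data", node="node-a")
# The workload is rescheduled, so the orchestrator attaches the volume elsewhere.
control_plane.publish_volume("db-data", node="node-b")
print(control_plane.volumes["db-data"])
```

The data path is deliberately absent here: reads and writes from the workload would go to the data plane and never pass through these calls.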

B

...perform snapshots, etc. from the underlying storage system. Some storage systems also have a variety of frameworks and tools that can also interface with the orchestrator. It could be an operator, for example, that allows the deployment and the upgradability of a storage system within Kubernetes.

B

So we talked a lot about the different options. Actually, before we go into the next stage, I just wanted to say: if there are any questions, please feel free to ask in the chat. I'm happy to stop and answer questions on the fly as I'm going along too. So don't feel like you...

A

B

So a storage class allows you to define a set of parameters that will interface with a driver that is used within the control plane of that storage system. So effectively, the storage class is the standard interface that defines a name for the storage system and how to access that storage system.

B

...will dynamically provision a PV, or persistent volume, out of the storage class, and then that persistent volume can be accessed through any pod or any stateful workload, and it becomes a piece of portable storage that can move around with the pod. So the idea is that by dynamically provisioning the claim and giving the claim a name, which is then referenced within the pod, you have a standard building block for how to link storage into the applications.

B

All we're saying here is that we create a storage class called StorageOS. We give it a name, we define what the provisioner for that storage class is (in this case it's the CSI interface for StorageOS), and there may be some parameters that are also defined within that storage class.
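The slide itself is not captured in the transcript, so the following is only a sketch of what such a storage class can look like. Manifests like this are usually written in YAML; here the same object is built as a Python dict and printed as JSON, which `kubectl apply -f` also accepts. The class name and provisioner string are placeholders, not the values used in the talk.

```python
import json

# A StorageClass expressed as a Python dict and serialised to JSON.
# The name and provisioner are placeholders (assumptions), not the
# values shown on the speaker's slide.
storage_class = {
    "apiVersion": "storage.k8s.io/v1",
    "kind": "StorageClass",
    "metadata": {"name": "example-storage"},
    "provisioner": "csi.example.com",  # hypothetical CSI driver name
    "parameters": {
        # Driver-specific settings go here; this key is a standard CSI
        # parameter for choosing the file system on the volume.
        "csi.storage.k8s.io/fstype": "ext4",
    },
}

print(json.dumps(storage_class, indent=2))
```

The three parts the speaker lists map directly onto the object: `metadata.name` is the name, `provisioner` selects the driver, and `parameters` carries whatever settings that driver understands.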

B

It references the storage class, so Kubernetes knows which driver and which interface to use to provision the volume, and we specify the access mode. So, if we're doing something like a database volume, for example, we would choose ReadWriteOnce, which means that the storage volume is accessible to one pod at a time.

B

But it's also possible to create volumes with ReadWriteMany, which typically uses some sort of shared file system, like NFS for example, under the covers to enable the volume to be accessed by multiple containers at the same time. The other mandatory piece of data is typically the size of the volume, so in this case we're doing a five gig volume. Now, it's possible to define different attributes for different systems in the storage class or in the persistent volume claim.
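The three pieces of a claim described above (storage class reference, access mode, size) can be sketched as a small Python helper that builds the manifest as a dict. The claim and class names are hypothetical, and the helper `make_pvc` is an illustration rather than part of any Kubernetes client library.

```python
import json


def make_pvc(name: str, storage_class: str, size: str,
             access_mode: str = "ReadWriteOnce") -> dict:
    """Build a PersistentVolumeClaim manifest as a plain dict."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "storageClassName": storage_class,
            "accessModes": [access_mode],
            "resources": {"requests": {"storage": size}},
        },
    }


# A 5 GiB database volume; the claim and class names are hypothetical.
database_claim = make_pvc("database-vol1", "example-storage", "5Gi")

# A shared volume that several pods can mount at the same time.
shared_claim = make_pvc("shared-files", "example-storage", "5Gi",
                        access_mode="ReadWriteMany")

print(json.dumps(database_claim, indent=2))
```

One detail worth knowing when choosing a mode: in the Kubernetes API, `ReadWriteOnce` is defined in terms of a single node mounting the volume read-write.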

B

So very often it will be possible to define parameters like replication, compression, encryption or other data services, for example, which can be applied either at a storage class level or at the individual volume, depending on the underlying storage system. In the case of StorageOS, you can create different classes with different classes of data services, and you can also create granular individual settings for specific volumes. And then we can launch a stateful application.

B

So in this case we're launching a Postgres database, and what you'll see in that list is where we're mounting PGDATA, and we're using database volume one as the claim for that volume.
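A minimal sketch of such a pod, again as a Python dict printed as JSON, might look like the following. The image tag, password value, mount path and claim name are assumptions for illustration; the transcript does not capture the exact manifest on the slide.

```python
import json

# A single Postgres pod that mounts a claim at the data directory.
# The image tag, claim name and mount path are assumptions for illustration.
postgres_pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "postgres"},
    "spec": {
        "containers": [
            {
                "name": "postgres",
                "image": "postgres:13",
                "env": [
                    {"name": "POSTGRES_PASSWORD", "value": "change-me"},
                    # PGDATA tells Postgres where to keep its data files.
                    {"name": "PGDATA",
                     "value": "/var/lib/postgresql/data/pgdata"},
                ],
                "volumeMounts": [
                    {"name": "data", "mountPath": "/var/lib/postgresql/data"}
                ],
            }
        ],
        "volumes": [
            {
                "name": "data",
                # Links the pod to the claim by name.
                "persistentVolumeClaim": {"claimName": "database-vol1"},
            }
        ],
    },
}

print(json.dumps(postgres_pod, indent=2))
```

The only coupling between the pod and the storage system is the claim name, which is what lets the same pod definition run against any storage class that can satisfy the claim.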

So what's actually happening behind the covers, from a Kubernetes point of view?

B

So, with a little bit of YAML, it's extremely easy to have a complete end-to-end declarative interface to start stateful workloads with storage, and also to template them and build your services as you go along. Again, if there are any questions that come up, feel free to ask them in the chat window.

A

B

It's based on a scale-up approach, where the server might have a number of database instances, or a number of databases within a single instance, running on that node, and ultimately it is problematic. Every time a new database is required, whether it's for development or testing, or if databases are required as the application expands, it can be problematic and often risky to make changes to your database server instance.

B

We have the developer edition of StorageOS, which actually virtualizes the storage and provides that scale-out distributed storage for the applications, and provides those plugins for CSI to allow Kubernetes to dynamically provision volumes on demand across a storage pool that's aggregated across all of the nodes in the cluster. What we see is that we can create a database and, rather than have one big database instance, we can move individual instances as separate pods within the cluster, which can now be spread out.

So, rather than have one big database instance where your failure domain and everything else is based on a scale-up architecture, you now have a distributed architecture where it's easier to make changes, or to tune or scale individual databases or database instances within your environment. And of course it can be extremely...

B

And here's the other thing. We talked about the different attributes that cloud native storage can provide. So if your underlying storage system, like StorageOS, is providing that high availability for your database, you now also have an automatic failover. Here is typically the way this works, because we talked about not just availability but consistency.

B

In order to be consistent, the storage system will be taking the transactions that are being committed to the primary volume of storage and replicating them to one or more replicas. We typically recommend two replicas to provide additional availability. Once the replicas acknowledge that the replication has been done, that data is then acknowledged to the database, and we call this...
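The write path described above, where the database only receives its acknowledgement after every replica has confirmed the write, can be sketched with a toy in-memory model. This is a simplified illustration in Python, not how StorageOS implements it; the class names are assumptions and real systems also handle timeouts, ordering and failed replicas.

```python
from typing import List


class Replica:
    """A copy of the volume that stores blocks and confirms each write."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.blocks: List[bytes] = []

    def write(self, block: bytes) -> bool:
        self.blocks.append(block)
        return True  # acknowledgement back to the primary


class PrimaryVolume:
    def __init__(self, replicas: List[Replica]) -> None:
        self.blocks: List[bytes] = []
        self.replicas = replicas

    def write(self, block: bytes) -> bool:
        """Acknowledge the write only after every replica has confirmed it."""
        self.blocks.append(block)
        acks = [replica.write(block) for replica in self.replicas]
        return all(acks)


# Two replicas, as recommended in the talk.
volume = PrimaryVolume([Replica("replica-1"), Replica("replica-2")])
acknowledged = volume.write(b"COMMIT txn 1")
print(acknowledged, [len(r.blocks) for r in volume.replicas])
```

Because the acknowledgement waits for the replicas, any write the database believes is committed already exists on every copy, which is what makes promoting a replica safe after a failure.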

B

But what we see here with this underlying system is that if one of the nodes fails and, effectively, a copy of the data is lost, the underlying storage system, like StorageOS, can then automatically create an additional replica to get the actual state back to match the desired state of having two replicas, and the database continues to work on the new primary volume, which is promoted from a previous replica.
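That recovery behaviour is a reconciliation of actual state against desired state, and a minimal Python sketch of the idea follows. The function, node names and data layout are hypothetical, and the sketch assumes at least one replica survives the failure.

```python
from typing import Dict, List

DESIRED_REPLICAS = 2  # desired state from the talk: two replicas


def reconcile(volume: Dict, healthy_nodes: List[str]) -> Dict:
    """Return the volume layout after reacting to node failures."""
    primary = volume["primary"]
    replicas = [n for n in volume["replicas"] if n in healthy_nodes]

    # Promote a surviving replica if the primary's node has failed.
    # Assumes at least one replica is still healthy.
    if primary not in healthy_nodes:
        primary = replicas.pop(0)

    # Create new replicas on unused healthy nodes until the desired
    # count is met again.
    spare = [n for n in healthy_nodes if n != primary and n not in replicas]
    while len(replicas) < DESIRED_REPLICAS and spare:
        replicas.append(spare.pop(0))

    return {"primary": primary, "replicas": replicas}


before = {"primary": "node-a", "replicas": ["node-b", "node-c"]}
# node-a fails; node-d is healthy and currently holds no copy.
after = reconcile(before, healthy_nodes=["node-b", "node-c", "node-d"])
print(after)
```

Running the same function again with an unchanged set of healthy nodes returns the same layout, which is the property a control loop relies on.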

B

But in my view, the biggest superpower of Kubernetes is the fact that everything becomes composable and declarative. So a developer can specify environments where they define the containers, the CPU, the networking, etc., and Kubernetes will just make it happen, abstract away the infrastructure, and also deal with the scaling, automated failovers, etc.

B

But, like we said, all applications need to store state somewhere, and this is where a cloud native storage solution that integrates with Kubernetes can now automate not just compute and networking but also all of the storage. What this gives you is an incredibly powerful, application-centric storage paradigm, where effectively we now have the ability to move any stateful application into Kubernetes, whether it's a database, a message queue, infrastructure components, or analytics like Elasticsearch, Prometheus or Kafka, for example.

B

This is incredibly exciting. Now, if you want to get into the nitty-gritty of how we do it with StorageOS, you can try the developer edition. This is the only bit of plug I'm going to do on StorageOS: you can try the developer edition for free. We have a number of different use cases, including Postgres and different databases and how to run them on Kubernetes, and this is a really easy way to experiment and learn in your own Kubernetes environment.

B

A

It's so important to focus on how you will store your data. It's an amazing theme, and we could discuss that, and what the best practice is for this, for a day long. I love this presentation. We have a few questions; one specific one is about, for example, storage class. The question is: how can it be specific to a local DC?

A

We lost everything. Oh my god, sorry. I said that it is an amazing theme, because one important point for applications, from the perspective of data, not only data in transit but also at rest, is how you maintain the resilience of the application: how you store your data and how you maintain your data across every execution. So not only during the current execution, but also if you fail or if you have a long-running process, you can keep your data in some place. So it's an amazing theme.

A

You could have a day-long conversation about this. Thank you so much, that was amazing. It's a pleasure to have you here with us today. We have a question about whether it's possible to configure a kind of feature, like a storage class, for a specific implementation in a specific DC, like VMware or an NFVI company. Is it possible to drive a specific implementation like this?

B

Absolutely, it's possible. CSI is a general driver that allows Kubernetes to abstract out to any number of different storage systems. They could be traditional storage systems, like a hardware array in an on-prem data center, but it could also be software solutions like StorageOS, so effectively you can use that in VMware.

B

If you're using these sort of storage solutions which are based on software, you can use them in VMware, you can use them on bare metal, you can use them in cloud instances, because typically they'll apply everywhere. And it's not just StorageOS: there are CNCF projects like Rook, for example, that provide similar sort of things too.

A

Really, so we have many opportunities to use anything, anywhere, in the cloud or not, public cloud or private cloud, because you have this capability to adapt with drivers. Amazing. We have another question. This is about what you would recommend on Kubernetes: a DB replica cluster, for example Galera, or replicating a single DB at the storage layer?

B

Right, it's not a straightforward answer. It does depend. There are different pros and cons. Doing replication at the database layer, or even sharding at the database layer, is certainly a very common architectural pattern, and it works to a certain extent. The downside to doing, say, replication with something like Galera is that, of course, you have to use three times the amount of compute, for example, if you have a primary and a couple of replicas or a couple of secondaries.

B

Of course, having multiple compute resources for the primary and the slave can also mean that you gain additional scalability, because you can use the slaves for read-only workloads or to run batch reports or things like that as well. So it's not always clear-cut.

B

The other thing is, it doesn't have to be an either-or solution. You can use storage-level replication as well as database-level replication, because database-level replication can give you the scale that you might need. And that doesn't just apply to things like Galera, but Cassandra...

A

B

...and Vitess and CockroachDB, etc., are all defined on that same type of architecture. But then the underlying storage can also become a challenge when you have a recovery scenario. So imagine you have a distributed database and you lose one of the nodes. Well, it's obviously possible to start up another node and let the database replicate the data into the new node.

A

B

What we can find is that the control plane in the storage system can automatically place replicas across availability zones in a cloud provider, for example, and thereby provide additional protection against an individual availability zone or failure domain taking out all of the replicas. So replica placement is one of the functions.
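A very small sketch of zone-aware placement, under the assumption that each copy should sit in a different availability zone from the primary and from every other copy, could look like this in Python. The function, zone names and node names are hypothetical, and a real scheduler would also weigh capacity, load and latency.

```python
from typing import Dict, List


def place_replicas(nodes_by_zone: Dict[str, List[str]], primary_zone: str,
                   count: int) -> List[str]:
    """Pick one node per zone for each replica, avoiding the primary's zone."""
    other_zones = [zone for zone in sorted(nodes_by_zone) if zone != primary_zone]
    if count > len(other_zones):
        raise ValueError("not enough zones to keep every copy in its own zone")
    # Take the first node of each chosen zone; a real scheduler would also
    # weigh capacity and load.
    return [nodes_by_zone[zone][0] for zone in other_zones[:count]]


# Hypothetical cluster layout: zone name -> nodes in that zone.
cluster = {
    "zone-a": ["node-1", "node-2"],
    "zone-b": ["node-3"],
    "zone-c": ["node-4", "node-5"],
}
replicas = place_replicas(cluster, primary_zone="zone-a", count=2)
print(replicas)
```

With this layout, losing any single zone removes at most one of the three copies, which is the protection described in the answer above.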

The other function, though, that we need to consider is the challenges around latency.

B

You can also potentially scale it down to zero and stop running the application altogether, but as long as the storage system still has some sort of scale, you're fine. Of course, if you want the ability to scale the entire cluster and the nodes in general, you probably want to have a set of nodes dedicated to the storage which you don't scale down. So you might do that rather than go for a completely hyperconverged topology.

A

Great, Alex. We have a great question from Yogi, but we don't have more time here; we just have five minutes to finish. So, Yogi, we will try to answer your question and then discuss it in our chat with Alex, if Alex can help us. Alex is a co-chair of a special interest group, so, as we have a little time: could you please talk a little bit about what is your...

A

B

The SIG itself is constituted by the TOC. We don't make any decisions ourselves; we act in an advisory capacity to the TOC. The TOC nominates and votes for co-chairs and tech leads within the SIG, and typically it's people who are active in the community and trying to help out. So the easiest thing to do, if you want to have an active role in the SIG, is to join.

A

Oh great, Alex. Just to finish, my last point about the storage special interest group: we have many people there who specialize in storage and in how to deal with workloads that need to store data, etc. So if someone has a question or a doubt about this, it's a good place: reach the SIG Storage channel to chat about it and maybe get some help, because we know that the group has a lot of work to do.