
From YouTube: CNCF Serverless 9/28/17


A

A

B

A

D

B

A

E

F

F

A

A

C

C

Okay, do we want to start or wait for someone? No? Go ahead. Okay, so what we wanted to do is explain the architecture, and especially some of the more unique attributes in nuclio. Our intention is not necessarily to look at it as a holistic solution; some of the components we will discuss could actually be reused by others, or we can try to absorb components from other projects. So it's important to understand some of the modeling that we've done.

C

G

C

We started doing that. We had a previous version, which was proprietary, and then about a year ago we decided to open-source it. We sort of rewrote the entire code in order to make it more open to other data sources and event sources, and more modular for future use cases. What are some essential things in the architecture? It's a real-time system. It has notions of zero copy, zero context switches, no garbage collection, all those aspects that come from real-time systems. This is why its performance is so high.

C

We did want to address all the aspects of the life cycle, so versioning: how do we test in one place and deploy in another place? We have a lot of IoT use cases. And we wanted the data and event sources to be pluggable without impacting the function, because we use functions, for example, to do backup jobs in our systems. I want to just pass the data set that I want to back up as a parameter; I don't want to rewrite my application. And the same for events.

C

We want to trigger the same function sometimes, for testing, from an HTTP event and sometimes from a stream or a message queue. The general idea is that it needs to be portable, pretty much like with Amazon Lambda: you can do Greengrass, you could do Snowball Edge, you could do it in the cloud. Here it's even a bit more modular, because you can run it on a laptop, on a Raspberry Pi, on many other platforms. So those were our requirements for the system. It's divided into several parts.

C

The main component is the processor, which is the thing that wraps the function; I'll dive a bit more into it. This processor is wrapped on one side by something called the event listener, which intercepts the events from the different types of data sources. And then you have a notion of data binding, which is the thing that binds with the different data types, like databases, streams, et cetera. On the controller side we have several components. The current implementation of the controller is over Kubernetes, but it could be otherwise.

C

This is, I think, the only component that is tied to the underlying platform. There's another component, which is the builder, which also runs in a container and builds the artifacts. There's something called the dealer, which I'll explain; it's the thing that allows the dynamic association between various things like jobs and shards and tasks and functions. And there are also aspects of user interface: one is the CLI, the other one is pretty much like the Go playground, and we'll see how it works.

C

So that's the general architecture. If we dive further into the processing engine: for the event listener, you essentially implement a class that implements the event listener. There are four types of event listeners, because they define the behavior of the workflow, and then under each class you have different implementations. So if you go through the code, you'll see that there are synchronous, asynchronous, stream and polling implementations; we're probably going to generalize that to batch jobs.

C

That's the polling work. Under those are different transport implementations, because synchronous, which is like request and reply, has one behavior; asynchronous is for independent, asynchronous messages; and, as we discussed, streaming is sort of a continuous stream of messages with ordering, potentially sharded. So those are the plugins. They're implemented in a way that you can actually have zero copy and zero context switches all the way from the event source to the function. And on the other side you have the data bindings.
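As a rough illustration of the listener classes just described, here is a minimal Python sketch. It is not nuclio's code (nuclio is written in Go); the class names, the `deliver` method and the `seq` field are all assumptions made up for this example, and the polling kind is left out for brevity. The point it tries to show is that one handler can sit behind listeners with different delivery behavior.

```python
from abc import ABC, abstractmethod
from typing import Callable, List

# Hypothetical handler type: takes an event dict, returns anything.
Handler = Callable[[dict], object]


class EventListener(ABC):
    """Hypothetical base class: a listener feeds events to one handler."""

    def __init__(self, handler: Handler) -> None:
        self.handler = handler

    @abstractmethod
    def deliver(self, events: List[dict]) -> List[object]:
        ...


class SyncListener(EventListener):
    """Request/reply: every event gets a response back."""

    def deliver(self, events):
        return [self.handler(e) for e in events]


class AsyncListener(EventListener):
    """Independent messages: the handler runs, responses are dropped."""

    def deliver(self, events):
        for e in events:
            self.handler(e)
        return []


class StreamListener(EventListener):
    """Ordered stream: events are handled in sequence-number order."""

    def deliver(self, events):
        ordered = sorted(events, key=lambda e: e["seq"])
        return [self.handler(e) for e in ordered]


def handler(event: dict) -> str:
    return f"handled {event['body']}"


events = [{"seq": 2, "body": "b"}, {"seq": 1, "body": "a"}]
```

The same `handler` is passed unchanged to each listener class; only the delivery semantics differ.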

C

We still need to do some more work on that. So currently, today, the Amazon API is in our release and [unclear] is supported, but in a few weeks we'll add the rest of the things in the list here. The processing runtime has an abstraction of the interface to the platform, which is why it can run over different platforms.

C

So all the things around logging and the runtime have abstract interfaces which, if the group wants, we can start to standardize, and I'll touch on some of them, especially logging and others. The function itself runs in the runtime engine. The whole infrastructure is designed around Golang, so if it's Golang, it's sort of inline goroutines. If it's other languages, then there are a few invocation mechanisms: one is like bash, the other one is like Unix sockets, and there is going to be a shared-memory implementation.

C

So it's not like some of the platforms where it's like CGI, where you invoke a process per request. The process or the workers are kept live, and then you sort of just inject the message and get events and other responses from the function. Another unique thing is that within a function we have multiple workers in a single process, and this allows us to get very high concurrency and also enables this very high performance.
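The idea of keeping workers alive and injecting messages into them, rather than starting a process per request, can be sketched in Python as below. This is only an illustration using threads and queues; the function names and the `None` shutdown sentinel are assumptions for the example, not how nuclio implements its workers.

```python
import queue
import threading


def start_workers(handler, num_workers, inbox, outbox):
    """Start long-lived workers that each pull events from a shared queue."""

    def loop():
        while True:
            event = inbox.get()
            if event is None:  # sentinel: shut this worker down
                break
            outbox.put(handler(event))

    workers = [threading.Thread(target=loop) for _ in range(num_workers)]
    for w in workers:
        w.start()
    return workers


def run_batch(handler, events, num_workers=4):
    """Inject events into already-running workers and collect the responses."""
    inbox, outbox = queue.Queue(), queue.Queue()
    workers = start_workers(handler, num_workers, inbox, outbox)
    for e in events:
        inbox.put(e)
    for _ in workers:  # one sentinel per worker, queued after all events
        inbox.put(None)
    for w in workers:
        w.join()
    # Completion order is not deterministic, so sort for a stable result.
    return sorted(outbox.get() for _ in events)
```

For example, `run_batch(lambda x: x * 2, [1, 2, 3])` hands the three events to four waiting workers and returns the doubled values in sorted order.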

C

H

C

What you're seeing here, the function processor, is a process. Okay, it is live. The orchestration layer needs to decide if it needs to be live. For example, in the doc we refer to something called a starter: if the function is down, someone else intercepts the request, loads the process and then ships the message into the process. In the case of message queues it's slightly different, but the idea is that the process already has the runtime warm. You don't necessarily have to have all the workers up.

C

I

C

H

H

H

C

Because what we see is that sometimes we deal with low-latency use cases where people would prefer to pay some more for the resources and have sort of zero latency. We've seen latencies of around 0.1 millisecond on the system and invocation, so we don't want to add another hundred or a few hundred milliseconds, in some cases, to the invocation.

C

C

Getting

because

we

don't

have

a

lot

of

time,

let's

table

sound

the

discussion

if

some

of

the

questions

to

the

end,

okay,

so

one

unique

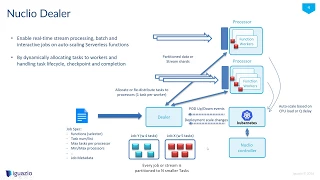

element

that

we've

initially

we

started

working

with

like

stateful

set

in

order

to

deal

with

the

sharding

challenge

of

how

do

you

distribute

multiple

charts

to

multiple

functions

and

eventually

we

decide

to

have

something

which

is

more

dynamic.

It's

called

the

dealer,

essentially

what

you

do

you

submit

the

job

and

you

tell

the

job.

How

many

tasks

and

task

could

be

very

different.

C

Tasks could be a number of actual shards. Sometimes serverless doesn't fit batch jobs. Let's assume you want to do ETL: ETL is too long for a serverless function, so you may want to break the table into a thousand smaller slices. Each one of those will run independently and checkpoint, so if you fail, you sort of start from the same point.
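To make the slicing and checkpointing idea concrete, here is a small Python sketch. The function names and the in-memory `checkpoints` dict are hypothetical stand-ins chosen for this example; a real job would persist its checkpoints somewhere durable.

```python
def slices(num_rows, num_slices):
    """Split the row range [0, num_rows) into contiguous (start, end) slices."""
    base, extra = divmod(num_rows, num_slices)
    start = 0
    for i in range(num_slices):
        # The first `extra` slices take one additional row each.
        end = start + base + (1 if i < extra else 0)
        yield (start, end)
        start = end


def run_slice(rows, bounds, checkpoints, slice_id, process):
    """Process one slice, resuming from its last checkpoint if there is one."""
    start, end = bounds
    pos = checkpoints.get(slice_id, start)
    while pos < end:
        process(rows[pos])
        pos += 1
        checkpoints[slice_id] = pos  # record progress after every row
```

If a task fails partway through, calling `run_slice` again with the same `checkpoints` continues from the recorded position instead of from the start of the slice.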

C

So the general idea is that you break a job into a bunch of tasks, as many as you want. There are all sorts of metadata, like how many tasks a process can do concurrently, et cetera, and the dealer deals with dynamically distributing the shards or the job tasks among the actual functions. Every time something is restarting, it will reallocate. It's actually not really working from the definition of what's up and down; it's looking at the deployment's expected number, because what happens if you have a pod that just restarted?

C

You don't want to redistribute all the shards and then, a second after that, the pod comes up and you redistribute again. So we watch both the up/down events and the deployment scale-change events. Okay, so that's a very generic mechanism. It's actually not tied to nuclio, so we can use it even in other microservices.

C

They could run in parallel, depending on the metadata you see, maximum tasks per process, or min/max processes and all those parameters, or they could run cascading: say I have a thousand tasks and I'm limited to only four processes. So essentially I can give every process, like, five tasks; they finish two, they get another two, et cetera. Okay.
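A minimal Python sketch of the dealing logic described above, using the numbers from the example (a thousand tasks, four processes, five tasks each). The function names and data structures are assumptions for illustration and are not taken from the dealer's actual code.

```python
from collections import deque


def deal(num_tasks, processes, max_tasks_per_process):
    """Hand out task ids to processes, at most max_tasks_per_process each.

    `processes` is meant to be the expected process list (the deployment's
    desired replicas), not only the ones that are up right now, so a quick
    pod restart does not reshuffle every shard.
    Returns (assignment, pending); pending tasks wait for a free slot.
    """
    pending = deque(range(num_tasks))
    assignment = {p: [] for p in processes}
    for p in processes:
        while pending and len(assignment[p]) < max_tasks_per_process:
            assignment[p].append(pending.popleft())
    return assignment, pending


def on_task_done(assignment, pending, process, task):
    """Cascade: a process that finishes a task gets the next pending one."""
    assignment[process].remove(task)
    if pending:
        assignment[process].append(pending.popleft())
```

With `deal(1000, ["p0", "p1", "p2", "p3"], 5)`, twenty tasks are handed out immediately and 980 stay pending until `on_task_done` frees a slot.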

C

C

C

Also, what we demonstrated this week at Strata is that by having serverless functions that, first, are so fast and real-time, and have those native data bindings to data, and have this ability to go beyond just simple event-driven to also sharding and stream processing and batch jobs, then essentially it can replace almost anything in the setup. So this is a traditional pipeline that we work on with customers. You get feeds, whether it's from operational databases, like ETL or CDC, or streams.

C

You do all the pre-processing on those streams, like normalization, aggregation, prediction from machine learning against a data source. You can do all the complex event processing with those. You could do all the data movement from one repository to the cloud and back. You could even do the APIs that serve the dashboards. And you also have other services in our platform that deal with things like machine learning or Spark; those will be continuous services, with their own APIs and TCP ports, et cetera.

C

Okay, and this is one example that I'll show in a minute: how we essentially take an event, do parallel enrichment across multiple tables, and do complex event processing work against, like, four different streams and tables and geo maps, all in 60 lines of code. I won't dive into the next few slides; they will be something that you could look at afterwards. This is the definition of the job spec, and there are different pointers to the unique aspects of things like data binding.

C

You can notice it's a Kubernetes CRD, so essentially you can do kubectl create of a bunch of things and functions in the same command. There is this definition of events that are shipped into the function. This is also something worth discussing, because this is part of how we see generalizing the event structure across multiple forms of events.

C

This is the logging interface. We also suggest sort of standardizing the logging interface. Today we have different implementations of the logging interface, like print to the screen, pass it through HTTP, write to a file, push it to an event stream. This is another thing where I wouldn't like to have us maintaining everything; if we can form a generalized model here and everyone agrees to it, then we can have people creating plugins for logging, for stream logging, with one uniform interface.
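One way to picture a uniform logging interface with pluggable implementations is the Python sketch below. The `Logger` class, the level names and the "sink" terminology are assumptions invented for this example; they are not the interface shown on the slide.

```python
import json
from typing import Callable, List

LEVELS = {"debug": 10, "info": 20, "warn": 30, "error": 40}


class Logger:
    """Hypothetical uniform logger: structured records, pluggable sinks."""

    def __init__(self, level: str = "info") -> None:
        self.level = level
        self.sinks: List[Callable[[str], None]] = []

    def add_sink(self, sink: Callable[[str], None]) -> None:
        # A sink is any callable taking one line: print for the screen,
        # a file's write method, an HTTP or stream publisher, and so on.
        self.sinks.append(sink)

    def log(self, level: str, message: str, **fields) -> None:
        if LEVELS[level] < LEVELS[self.level]:
            return  # below the current threshold: drop the record
        record = json.dumps(
            {"level": level, "message": message, **fields}, sort_keys=True
        )
        for sink in self.sinks:
            sink(record)
```

Because `level` is a plain attribute, it can be changed while the function is running, which is one way to turn on debug output without redeploying.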

C

C

Okay, so good. So now Eran will show us the demo. One of the things that I mentioned: nuclio comes with a built-in sort of playground, pretty powerful. It's essentially a Kubernetes deployment; you just launch it and it works standalone. You could launch as many as you want in parallel. So Eran, let's start with showing a simple Python function. As you can see, you write the function, a simple Python one, and it's getting invoked with the event.

C

Then there's the context: the context also gets you the logging. The logging is structured and has different levels. Then you just compile the function and run it from the same playground. You can actually also invoke it, and notice that the returned response could be either structured or unstructured. If, instead of putting a structured response, I just put a string, it will also respond.
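The shape of such a function, one that takes a context and an event and returns either a structured response or a plain string, might look like the Python sketch below. The `Response` and `Context` classes and the `normalize` helper are hypothetical stand-ins written for this example, not the SDK types used in the demo.

```python
from dataclasses import dataclass, field


@dataclass
class Response:
    """Hypothetical structured response with a controllable status."""
    body: str
    status_code: int = 200
    headers: dict = field(default_factory=dict)


@dataclass
class Context:
    """Hypothetical context: here it only carries a structured logger."""
    logs: list = field(default_factory=list)

    def log_info(self, message, **fields):
        self.logs.append(("info", message, fields))


def handler(context, event):
    context.log_info("got event", size=len(event["body"]))
    if event["body"] == "":
        # Structured response: the function controls the return status.
        return Response(body="empty body", status_code=400)
    # Unstructured response: a bare string is also accepted.
    return "hello " + event["body"]


def normalize(result):
    """What a runtime might do: wrap bare strings in a default response."""
    return result if isinstance(result, Response) else Response(body=str(result))
```

Returning a bare string is the shorter path, but only the structured form lets the function set things like the status code.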

C

You just won't have the flexibility to control all those things like return status, et cetera. And with the logging level, you can actually control in real time what kind of log messages are going to be shown to the user or written to the log stream. So if I have the same function with different levels of logging and I happen to run into a challenge, I'll just turn on debugging and run. Okay, so that's around that. Maybe let's show a different function now, in Go. Okay, and this you can see again.

C

Also all the notions of the logging, structures, et cetera. And maybe you can show us the performance in [unclear]. So nuclio is also integrated with Prometheus, and we have our own tool for HTTP benchmarking. It's a pretty fast tool that generates about 800,000 HTTP requests per second, and it's open source. While we're running the function, we can run [unclear], also look at the HTTP blaster, and you see the results, hopefully. Yeah, we're running an empty function that just returns nothing. Okay, yeah.

C

C

Okay, so that's around that. And maybe, yeah, can you show us also how you invoke the same function from different sources? So, as we mentioned, the function signature is exactly the same across multiple types of sources. You can subclass it and extend stuff, but it's pretty comprehensive. So the nice thing is I can take the same function that I just invoked with HTTP and now invoke it through RabbitMQ, because I asked the function to listen on both synchronous and asynchronous data.

C

C

So until now we sort of just played with simple stuff, but I mentioned to you this real-time IoT demo that intercepts car messages and does real-time enrichment with other datasets. This is the actual code. You can see the first line is the data binding. We're actually having it in the definition language; we're saying DB0 equals a certain data type, certain security credentials, et cetera, and then that is already bound to my context.

C

What I do here: we also have sort of a pandas-like or Spark-DataFrame-like data frame implementation, similar to that in Python, but in Go, sorry, for real time. It's also going to be open source. And you can see what I'm doing here: I'm doing a parallel read from four different tables, cars, drivers, route information, doing all sorts of decision-making underneath, and updating the geo maps and the car and driver violation streams, all that stuff. The last line:

C

By the way, you can see that the last line waits for completion, because everything here happens asynchronously; all those five different updates to five different streams and tables happen concurrently. That's why the last row is essentially waiting for all those things to complete. You can see that in very few lines of code I managed to do something that in traditional stream processing would take multiple components and wouldn't be as elastic, and this is an elastic function.
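The pattern of issuing several reads concurrently and waiting for all of them on the last line can be sketched in Python as follows. The table names, the in-memory `TABLES` dict and the `car_id` key are hypothetical placeholders; the demo itself uses bound data sources and a Go data frame library that are not reproduced here.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical in-memory stand-ins for the bound tables.
TABLES = {
    "cars": {"c1": {"model": "sedan"}},
    "drivers": {"c1": {"name": "Dana"}},
    "routes": {"c1": {"route": "north"}},
    "violations": {"c1": {"count": 2}},
}


def lookup(table, key):
    """Read one row from one table; returns (table name, row)."""
    return table, TABLES[table].get(key, {})


def enrich(event):
    """Read all tables concurrently, then wait for every read to finish."""
    with ThreadPoolExecutor(max_workers=len(TABLES)) as pool:
        futures = [pool.submit(lookup, name, event["car_id"]) for name in TABLES]
        # Counterpart of the demo's last line: block until all reads complete.
        results = [f.result() for f in futures]
    enriched = dict(event)
    for name, row in results:
        enriched[name] = row
    return enriched
```

Calling `enrich({"car_id": "c1"})` returns the original event with one extra key per table.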

C

C

Hope we have two more minutes for that. So just show us. So there is a full-blown CLI; you can see there are so many options when you want to invoke a function, and you can decide where you want to have the repository. So for things like IoT use cases, you deploy and run a function, create an artifact, and then you want to spread the same artifact to multiple edge devices. You could do that.

C

C

Maybe show us the publishing feature for the versioning. Okay, so the nice idea is that, let's assume I tested the function and everything is cool. I now want to label it and tag it; that's the publishing feature. And with the event source, I will define which sort of label selector, or which version or alias, I want the event to drive. So now I could have an event source,

C

say, a stream that is the production stream and matches with a production function, and in parallel I'll have a different function just for testing, and I'll invoke it with HTTP or another stream. Okay, so that's the general idea here. You can update a bunch of things for each one of the versions, like environment variables. You could label functions, and all those things. It's very comprehensive; we probably won't have time to get into all those things. Good. So thanks, Eran. Okay.
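A toy Python model of publishing versions and pointing event sources at an alias, under the assumption that published versions are immutable and aliases are movable pointers. The `FunctionRegistry` class and its methods are hypothetical and only illustrate the idea.

```python
class FunctionRegistry:
    """Hypothetical registry: published versions plus movable aliases."""

    def __init__(self):
        self.versions = []  # list index + 1 is the version number
        self.aliases = {}   # alias name -> version number

    def publish(self, code, alias=None):
        """Freeze the tested code as a new version; optionally tag it."""
        self.versions.append(code)
        version = len(self.versions)
        if alias:
            self.aliases[alias] = version
        return version

    def resolve(self, selector):
        """An event source selects a function by alias or by version number."""
        version = self.aliases.get(selector, selector)
        return self.versions[version - 1]
```

A production stream could resolve `"production"` while an HTTP test trigger resolves `"test"`; promoting a tested version is then just moving the alias.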

C

A

C

A

All right, cool, thank you. All right. So, first on the agenda is a gentle reminder: people have been trying their best to address comments in the doc itself. Ignoring the section on "what is serverless", in the rest of the doc I think there's less than about a handful of comments that are outstanding. So if there are any comments or suggested edits or something in your section, please try to address them. Don't just leave the comments there.

A

If you think you've addressed it, whether that means you actually did what they asked, or ignored it, or rejected it, go ahead and resolve the comment, so we get it to disappear from the doc itself. My goal is to try to get to zero comments in the doc really, really soon. So, just a reminder of that. So let's now jump to the conclusion section of the doc, and actually, let me go ahead and try to share my screen here. Hold on a sec.

A

Cool, all right. So, the conclusion section. I wrote this, I guess, maybe two weeks ago, and I find it very hard to believe, given there are zero comments, that it was actually perfect, so I'm going to assume people just haven't had a chance to review it yet. So rather than taking the time to review it right here, right now, what I would like to do again is just encourage people to please read it as soon as possible, because this is probably the most critical part of the document itself.

A

We need to tackle this as soon as possible because, aside from the "what is serverless" section, this section should be the one we spend most of our time on, and it's worrying me that no one's commented on it, because I know I didn't do that good a job. So, are there any high-level things about this that people would like to discuss now? If not, I'll leave it, and we can just go back and discuss it next week. But please take a look at some point. So let me pause here: any high-level comments or questions?

G

A

So the basic goal of the recommendations section is to provide a set of proposals for the CNCF TOC to consider for next steps, whether it's do nothing at all, or look for some sort of standardization effort, or something in between? That's what we're trying to lay down here as a set of recommendations?

A

Any of the things you just mentioned are valid possibilities. The key point here is that people need to write down and identify what they'd like to see as an option, and then we as a group can decide whether that's worthy of us saying to the TOC: yes, we like this idea strongly enough that we want you guys to seriously consider it. Great.

G

H

A

H

A

Cool, yep, definitely, and those are the kinds of things that I'd like to see you guys add to the doc itself. If I haven't already covered it, or wherever you think I've done a poor job of covering it, please edit the text directly there. And, like with all other suggestions, please don't just leave a comment saying "hey, it'd be nice if you talked about this"; actually add the actual text in there. It makes it so much easier to review and to understand exactly what you're looking for. Yeah.

C

I think another point beyond the user is also the ecosystem. Yes, we work with guys developing editors and debuggers and whatever. If we maintain some ability to create uniform YAML files, or a uniform event structure, or a uniform debugging mechanism, or at least something close in terms of the model, that makes life easier for the ecosystem. And if the ecosystem prospers, everyone wins. I would agree with that part.

E

A

G

A

I don't think we need to hash them out on this call right now, because I think everything you guys are mentioning are great ideas. Let's get them in the doc as actual textual edits. That way we can look at them in context, review them and, in essence, vote or approve or reject them. Yeah.

H

That's right, I'll add something to the doc. Another suggestion is on the first bullet. We say we encourage more serverless technologies to join CNCF, and more open-source participation. So we should keep this window open, right? Because there could be more providers and open-source solutions coming in the future. So how should we... I mean, when is this going to be reported to the CNCF TOC, or what's the timeline? Could we make the timeline longer?

A

So I don't want to push it out unnecessarily. We don't have a formal timeline for the white paper other than try to get it done as soon as possible. But you are correct: we don't want to necessarily lock down everything so that if new projects come along, they can't be added to something, and that "something" is what we need to figure out, right? So do we take this document and put it into GitHub, so we can have people issue PRs to get their product listed in there?

A

Or do we talk about the next subject, which is to create a table someplace where we actually list these things, and this is a living, breathing document of the various projects and tools and services and stuff like that? That might be the place to list it, in this landscape document rather than the white paper itself. So that's something else we need to discuss. Yeah.

K

Yeah, we wanted this to be kind of a living document, the Google Doc, that's kind of updated, and we're basing this on what the serverless, I'm sorry, the storage working group has done and had success with, according to Chris. Yeah, I think the key shift that we have right now is getting the white paper into a good enough state to get out the door, so we can actually start working on some implementations, some code-first type of stuff, rather than too much in terms of generic wording and stuff. Right.

A

A

So when we talked about creating this spreadsheet, did we agree to remove the appendix from the document that listed some of these things and only have the spreadsheet? Or did we say the document was going to be sort of like a snapshot of right now, but the living document, the living, breathing side of things, would be the spreadsheet?

A

A

K

A

E

Sure. Instead of having everything on the same sheet, it made sense to us to break out the projects that are delivering more serverless types of orchestration platforms, function schedulers and executors, and split out development tools. And then there's a services tab as well, to list the recommended or preferred applications that you would tie into for event sources that are known to be interoperable with some of the function executors, just in terms of eventing, et cetera.

A

A

Actually, it's in the doc itself, but you're right. Here's the link in Slack, and it's in the meeting minutes as well, right here. I know you guys haven't had a chance to really look at this, but as a starting point this seemed like it's at least headed in the right general direction, and we just need to do some tweaks, I think.

K

A

A

Okay, I didn't think I saw them. Okay, so Sara, thank you for your edits there. I personally like the direction you took it. The question, I think, is going to be more for Chris than anybody else, because he was on the other side of the fence in terms of what the preference here is: whether this is still going to be along the lines of what he can live with in terms of Amazon's view of things.

A

G

There's this perspective of making something that can run anywhere, or making something that I can run myself, where, if I'm running it myself, I'm kind of acting as both the provider and the developer, but those are still two separate concerns. And I was kind of recalling also something I didn't know quite how to put in the doc, because I couldn't find a good reference to it, which was "unhosted", and that seemed very relevant in some way.

G

So this was just a way of attempting to clarify what was already there, and I refrained from actually, I believe, changing anything; rather, I separated the things that are clearly not controversial, which is like zero ops: everything is managed for you as the developer deploying the function, right? And then there's this other concern, which is, as the developer using this platform:

G

What are my costs? And so I'm really interested in the working group's thinking. Like, what does the business model have to do with anything? At first I wanted to strike it, because most of the doc I was considering under a technical lens, and then I realized that, anecdotally, for a lot of the developers that I've talked to and seen use our products, it's the business model of serverless that drives their usage of it as much as the, like, developer cost and dollar cost. Right.

G

J

K

I think we had that originally, somewhat, in the target reader, right? We wanted to call out who the target audience was for this paper, and I think we did. Yeah, we agreed that it was, Sara, like you said, two different audiences, right? There's the developer with the point of view of serverless, and there's also, especially given the open-source projects, right,

K

those people that are providing and may want to host, and learn about that. And I think that, yeah, that could be crisper in the paper. I think we've gone back and forth on it a couple of times, but I definitely think it should be there, right? So clearly: this is who we're targeting, and this is who we're talking about when we mention certain things. Yeah.

G

C

I was a little vocal on the Slack on that, but, you know, again, whatever we decide, I'm open to that. But let's assume that now a cloud provider goes to a large customer and says: you know what, you're going to get a million invocations for free every month. Okay? Or you're going to get a serverless site license for invocations if you put a million dollars in front of me. So now you're not going to call it serverless?

G

E

A

Does it have to... so do you think we can even... When I see the word "cost", you know, I can understand why someone would look at that and see dollar signs. But to me, when I see the word "cost", I actually tend to think more about resource usage, and I'm wondering whether it'd be less controversial to talk about resource usage instead of using the word "cost", which implies monetary things, but...

C

But

it's

very

indicated

there

is

actually

always

resources,

whether

it's

a

entropy

gateway,

listening

on

an

event,

whether

it's

some

stuff

that

is

waiting

on

cold

start

or

whatever.

There's

always

some

resources

and

I

think

that

what

here

is

more

about

the

business

model

am

I

going

to

charge

just

for

invocation

or

even

today,

that's

not

correct,

because

in

Amazon

provides

X

and

X

amount

of

free

invocations,

you

know

per

month,

okay.

So

how

does

that

fall

into

there's

no

cost

when,

when

idle

right.

A

H

I

think

I

agree

with

that.

You

know

because

I

think

different

I'm,

just

wondering

whether

we

need

to

be

so

restrictive,

I

think

different

vendors

probably

will

have

different

the

cost

model,

but

the

resource

will

be

on

usage

will

be,

has

I

mean

be

common

right.

You

know

no

matter

what

what

kind

of

you

know

on

cost

model.

Even

the

function

is

not

running.

H

There

will

be

some

resource

being

your

system,

especially

if

it's

a

complicated

workflow

right

there

will

be

the

platform

the

provider

will

have

resources

to

manage

that

workflow

and

I,

and

you

know

at

one

side

is

you

know

the

provider

might

not

charge

for

some

resource

usage.

when

no

function

is

running

and

on

the

other

side,

some

some

provider

might

charge.

You

know

for

the

platform

usage

of

managing

the

whole

workflow.

I

Not

I

think

it's

problematic.

If

you

say

it's

it's

for

developer

resources,

then

warm

start

cannot

be

counted

as

serverless

because

he

already

has

the

resources

paid,

no

matter

what

so

yes

said

that

even

someone

does

warm

start

that

it's

not

serverless

anymore,

because

it

costs

the

resources

for

the

developer.

I

agree.

H

A

That's

really

an

interesting

question,

because

it

seems

to

me

that

a

lot

of

the

a

lot

of

the

back

and

forth

we've

had

recently

is

around.

Is

it

absolutely

zero

costs

slash

resource

usage

as

seen

by

developer

or

is

a

minimal

amount

as

seen

by

a

developer?

Just

because

the

things

warm

is

that

okay

yeah?

So

we

called

serverless

my

correct

and

that's

basically

the

gist

of

the

disagreement

here.

I.

H

Yeah

I

think

that's

key

I.

Think

I'll

agree.

You

know

we

do

not

say

absolutely

no

cost

when

idle,

because

there

could

be.

You

know

when

the

function

is

not

running,

there

might

be

some

cost

minor

cost.

It

depends

on

the

you

know:

the

law

even

Amazon.

Currently

it

charges.

You

know,

as

I

said

on

one

side

it

charges.

It

will

not

charge

you

when

you

your

function

is

really

running,

not

idle.

They

give

you

some

free

free

runs,

but

on

the

other

side

for

the

Step

Functions,

it

charges

a

platform

cost.

H

A

So

all

right

so

I

know

that

there

definitely

some

people

in

this

working

group

who

believe

serverless

equals

absolute,

zero

right

and

I

believe

there

are

other

people

on

the

call

who

who

don't

want

to

be

quite

as

strict

and

want

to

allow

for

something

greater

than

zero,

and

what

that

number

is

it

greater

than

zero

I,

don't

know,

I

think

it

might

vary,

but

they

want

to

allow

for

at

least

something

a

little

bit

greater

than

zero.

Is

that

fair?

No.

C

I

think

I

wear

again,

whatever

we'll

decide,

I'm

okay

with

that,

but

I

think

we're

missing

the

point.

The

experience

the

operational

experience

is

the

thing

that's

important

is

not

it's

not

what

you

pay

is

as

an

operations

guy,

I

don't

need

to

manage

servers,

that's

the

important

point.

I

want

to

run

a

function,

I

click,

something

I,

I

deploy

a

function

without

thinking

about

the

OS

that

I

need

to

deploy

without

thinking

about

the

Docker

containers.

I

think

that's

the

core

thing,

not

not

how

you're

gonna

charge

me

and

business

models

are

flexible.

C

E

So

straight,

but

also

we're

looking

at

this

purely

on

a

technical

point

of

view

in

nuancing

it,

whereas

serverless

is

also

being

used

as

a

generic

term

throughout

the

industry

to

encompass

both

and

I.

Think

that

that's

getting

that

that's

playing

into

this

as

well,

but

I

know

I've

talked

to

some

of

the

open

source

projects

and

Nathe.

They

feel

that

you

know

they

provide

serverless

from

a

developer

point

of

view,

regardless

as

to

whether

they

can

you

know

who's

operating

it.

Yeah.

A

You

at

least

speaking

from

the

IBM

side

of

things,

I

think

if

we

were

to

take

the

approach

of

dropping

the

I

guess

it's

out

the

second

bullet

there,

the

absolute

no

cost

when

idle

or

dropping

the

discussion

of

that

from

this

from

this

definition,

as

you're

I,

think

you're,

proposing

I

think

we

would

probably

have

a

problem

with

that

because

to

us

part

of

serverless

Is

this

getting

down

to

zero

resource

usage,

zero

cost?

Whatever

you

want

to

call

it?

A

L

This

is

a

I

I

know.

Chris

is

not

on

the

call,

but

for

Amazon

perspective

you

know,

Chris

made

those

three

points

very

clear.

You

know

zero

servers

to

manage

absolutely

no

cost

when

idle,

except

stateful

storage

and

instant

scalability

I'm

trying

to

ping

Chris

is

a

customer

meeting

right

now.

So

I

think

those

are

three

tenets

that

we

would

like

to

stick

to

as

well

right.

A

So

let

me

ask

you

this,

because

I

I'm,

not

what

I'm

trying

to

figure

out

is

I

mean.

Let

me

pick

on

Yaron

for

a

sec,

it's

good!

It's

fun

to

come

up

with

a

good

person.

Do

you

think

it's

possible

to

find

wording

that

you

could

live

with?

That

still

keeps

a

discussion

of

that

second

bullet

point:

yeah.

C

I

wrote

you

first

time:

it's

okay,

I,

don't

get

offended,

usually

I,

think

I

think

the

compromise

could

be

to

strike

out

the

absolutely

on

one

end

and

also

add

the

usually

as

I

wrote

you

and

I

again.

It's

not

my

definition.

I

think

the

definition

should

focus

should

not

focus

on

whether

to

price

and

I

am

willing

to

bet

with

most

of

you

that

the

pricing

models

will

change

over

time,

but

I

think

an

acceptable

thing

is

to

strike

out

the

absolutely

and

write

usually

on

the

no

costs.

So

then

no

one

is

violating.

C

K

A

L

C

A

L

G

This

is

Sarah

from

Google

and

I

noticed

from

that

Antonio

who's

been

more

active

in

this

working

group

was

strongly

advocating

absolutely

no

cost

when

idle.

So

I

I'd

like

to

check

in

with

him

and

get

his

thoughts

on

you

know,

sort

of

the

backstory

there

I

could

see

it

argued

different

ways,

but

I

think

I'm

still

I

still

don't

understand

what

we're

talking

about

like

are.

G

We,

we've

talked

about

resources

and

we've

talked

about

business

model,

and

so

it's

not

clear

to

me

whether

this

paragraph

is

actually

about

the

cost

model

for

the

developer

like

what

they

you

know,

whether

it's

in

dollars

or

in

deals

or

in

like

whatever

like.

Is

it

actually

about

the

business

model

which

can

apply

to

like

internal?

G

You

know

things

right

or

I

could

say

like

when

the

developer

is,

is

not

the

same

as

the

provider,

then

blah

blah

blah

right,

because

I'm

just

curious

about

that,

because

because

of

this

persistent

like

we're

seeing

this

huge

adoption

based

on

actual

dollar

cost

interest

right

and

having

things

scale

to

zero

is

an

important

characteristic

for

a

lot

of

Firebase

developers

right

that

have

their

either.

They

have

Axton

development

or

they

have

mobile,

apps

or

spiky

traffic.

They

have

only

weekend

traffic

or

only

weekday

traffic,

and

so

the

dollar

cost.

A

Okay,

we're

almost

out

of

time

and

I

wanna

be

respectful

of

the

clock.

So

let

me

do

a

couple

of

things

here:

first,

because

Chris

spent

a

lot

of

time

and

effort

trying

to

word

this

section

very,

very

carefully.

That's

why

I'm

very

nervous

about

accepting

Sarah's

edits,

even

though

I

really

like

them

so

Aaron.

A

Can

you

check

with

Chris

to

see

if

he's

okay,

with

the

edits

that

Sarah

made

because

I

don't

think

she

actually

changed

any

of

the

controversial

bits

either

all

she

did

was

move

things

around,

I

think

I,

really,

okay,

so

because

we

can

at

least

get

the

the

syntactical

changes

that

she

made

in

there

agreed

to

or

not

I

think

I

do

really

useful.

That

way.

People

can

can

make

their

own

minor

tweaks

if

they

see

a

Sarah

to

you

to

your

question

about

what

this

ex,

what

the

paragraph

is

actually

means.

G

A

G

Yes,

I'm

Sarah

Allen.

I

joined

Google

about

a

year-and-a-half

ago,

about

nine

months

ago,

took

responsibility

for

the

parts

of

Firebase

that

overlap

with

Google

Cloud,

so

Firebase

is

Google's

mobile

platform

and

gives

direct

from

mobile

access

to

Google

Cloud

Storage,

as

well

as

the

Firebase

Realtime

Database

and

a

number

and

like

twelve

different

products

that

are

not

pop

rocks.

So

so

my

excitement

about

this

working

group

is

really

around

our

functions

effort.

We're

originally

from

the

mobile

perspective.

We

came

to

like

well.

G

Mobile

developers

want

just

to

have

a

little

bit

of

function

in

the

cloud

and

then

what

I'm

seeing

is

that

mobile

developers

have

exact

same

needs

as

everybody

else,

because

the

backends

of

mobile

apps

are