►

From YouTube: CDF SIG MLOps Meeting 2020-05-21b

Description

No description was provided for this meeting.

If this is YOUR meeting, an easy way to fix this is to add a description to your video, wherever mtngs.io found it (probably YouTube).

A

A

B

A

A

B

D

B

B

B

B

The

other

piece

of

work-

that's

going

on

at

the

moment,

is

adding

a

basic

glossary

of

terms

so

that

we've

got

some

standard

definitions

for

the

various

pieces

that

we're

discussing

the

the

the

session.

This

week

was

primarily

talking

about

the

the

differences

between

the

standard,

C,

ICD

and

DevOps

behaviors,

and

the

more

specific

and

unique

aspects

that

we

we

need

to

think

about

from

a

machine

learning

perspective.

So

specifically,

you

looking

at

the

scaling

challenge

of

how

do

you?

B

How

do

you

work

with

very

large

numbers

of

either

GPU

or

TPU

accelerators

in

a

machine

learning

perspective?

How

do

you,

how

do

you

go

from

a

pure

kubernetes

view

of

the

world

which

is

cpu-based

to

to

a

model

in

which

you

may

need

to

be

targeting

dedicated

hardware

and

then

also

discussing

some

of

the

challenges

around

how

we

manage

the

data

flow

component

within

machine

learning

world?

B

A

A

Implementations

for

these

narator,

you

are,

you

have

certain.

You

have

certain

technologies

in

mind

which

you

are

looking

at.

Was

this

something

you

are

asking

the

coming

to

develop

around

and

and

in

general

the

other

part

of

it?

Is

you

know

some

something

like

you

know,

machine

learning,

models

consuming

GPUs

etc?

Is

this

I

think

this

is

definitely

you

know

something

which

is

from

a

larger

develops

perspective?

It

makes

a

lot

of

sense

right.

B

B

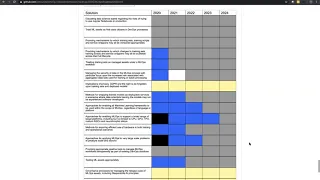

The

blue

areas

are

areas

where

we

we

know

we

need

stuff

and

stuff

is

being

built

and

the

yellow

areas.

Are

they

the

areas

where

we

we

actually

have

big

unknowns,

where

this

potentially

no

proper

activity

going

on?

So

the

the

view

of

the

roadmap

is

that

what

we

need

to

do

is

is

take,

though,

both

a

medium

term

and

a

long

term

view

about

what

what

capabilities

we

actually

need

within

ml

ops

and

then

to

paint

the

picture

of

you

know

what

what's

already

there.

What

can

we

do

today?

B

You

know

what

can

we

do

with

cube,

though?

What

can

we

do

with?

You

know

the

components

that

already

exist

and

then

I

like

what

gaps

there

are

and

where

we

need

to

evolve

solutions

in

the

future

to

meet

those

requirements.

So

so

this

is

about

giving

everyone

a

picture

of

where,

where

there

are

opportunities

in

the

space,

where

existing

solutions

or

new

solutions

can

can

be

developed

to

to

fill

those

gaps

and

and

fit

a

need.

A

A

There

is

a

quite

a

bit

of

work

happening

there

and

has

already

happened

there

around.

You

know

trying

your

models,

which

are

being

deployed

to

GPUs

or

CPUs,

are

choosing

what

hardware

you

want

right.

What

sort

of

capacity

from

that

hardware

you

want

and

most

of

these

projects

under

the

KF

long

rail

are

now

publishing

roadmap

dot,

MD

right,

which

is

essentially

more

tactical,

I,

would

say

a

year.

Look

at

what

we

think

is.

A

You

know

the

direction

for

the

project

for

the

year,

so

it

might

be

worthwhile

looking

and

checking

as

well

from

that

perspective

and

I

think

you

know

there,

this

can

help.

Is

you

know

there

are

like

projects

under

the

Q

flow

around?

You

know,

distributed

training,

hyper,

parameter,

optimization

models

serving

metadata

pipelines

right,

but

beyond

that,

if

there

are

other

emerging

areas

right

where

the

community

should

be

looking

at

right.

So

obviously

you

know

things

like

federated

learning.

A

Right

or

when

you

start

being

going,

you

know

bit

of

layers

on

top

right,

auto

ml,

Auto,

a

right

and

then

they're

still

not

something

right

which

which

on

which

you

know

there

are

projects

which

we

have

spawned.

Another

queue

flow

umbrella,

but

I

think

you

know

there

they

are

getting

quite

a

bit

of

traction.

We

have

seen

a

lot

of

requests

now

coming

around

federated

learning

or

so

to

say

you

know,

multi-cloud

learning

different

people

are,

you

are

giving

it

different

names

where

or

ng

learning

we

are.

A

B

So

I

I'm

seeing

a

lot

of

interest

in

the

wearable

space,

for

example,

where

we

expect

scenes

to

see

a

lot

of

target

device

is

needing

inference

on

on

edge

devices

in

wearable,

which

will

have

to

be

very

small,

dedicated

hardware,

so

so

that

drives

the

discussion

about

cross-compilation

and

and

delivery

into

into

a

six.

If

you

like,

and

then

I'm,

also

seeing

a

lot

of

customer

demand

in

the

industrial

space.

So

there's

there's

a

lot

of

work

going

on

with

sort

of

industrial

Internet

of

Things

and

factory

automation.

B

Stuff,

like

that,

where

there

are

some

some

very

interesting

challenges,

especially

around

the

latency

of

systems

and

the

need

to

be

able

to

train

against

very

large

data

sets

but

inference

in

real

time

very

close

to

machines.

That

are,

you

know,

either

safety

related

or

are

dealing

with

very

high-value

processes

where

it's

critical,

that

they

can.

They

can

make

complex

decisions

at

high

speed.

B

No,

so

you

know

a

big

reason

for

having

the

roadmap

really

is

to

start

to

paint

the

picture

of

all

of

these

quite

complex

and

re

O's

in,

in

which

a

basic

cloud-based

solution

is

it's

not

gonna,

be

fit

for

purpose

and

that

we

need

to.

You

need

to

actually

be

thinking

ahead

and

designing

much

more

subtle

approaches

to

how

we

manage

some

of

these

problems,

but

still

bring

this

overarching.

A

A

C

Vias

Bracy

are

currently

looking

into

case

serving

and

the

design

for

like

models

like

current

score

or

discount

customer

lifetime

value

and

putting

our

models

into

production

through

care

serving

and

trying

out

to

build

API

so

automatically.

So

this

is

really

particularly

interesting

for

us,

and

also

the

scalability

of

cube

flow

is

very,

very

handy,

and

it

helps

us

keep

costs

on

like

decent

amounts.

A

A

This

one

right,

so

this

was

a

talk

at

cube,

cone

right

at

which

myself

in

play,

if

he

is

the

CTO

of

Selden

right,

we

came

last

year.

This

was

in

one

of

the

details

and

also,

you

know,

has

links

to

the

slack

channel,

etc.

But

you

know

how

old

escapes

are

doing

work

that

was

essentially,

you

know

the

focus

I

mean.

Essentially,

if

you

see

the

the

companies

who

came

together

to

build

this

right,

I

mean

Selden

is

started

from

London

right

and

and

they've

created.

Selden

models

are

being

engine

in

open

source

right.

A

The

rest

of

the

names

you're

probably

more

familiar

with

right,

but

the

idea

was

to

do

this.

You

know

on

on,

you

know,

scale

to

zero

model,

because

the

point

of

you

know

model

serving

engines

taking

all

the

models

deployed.

Taking

a

lot

of

the

GPU

resources

are

still

sitting

and

not

being

able

to.

You

know,

scale

down

to

zero.

If

there

is

no

traffic

right,

it

has

become

concerning.

So

that

was

one

of

the

primary

ideas.

A

A

A

You

need

to

respond

to

this,

but

I'm

having

more

there

right

so

and

so

is

you

know

the

other

folks

Dan

see

this

last

comment

which

spanned

over

to

47.

That

is,

you,

know,

Dan

Sony's

from

Bloomberg

right,

so

Bloomberg

is

totally

running

here,

serving

right

now

in

production

spread

across

no

multiple

node,

so

I

think,

if

is

probably

the

best

person

right

now

in

the

community.

Given

the

you

know,

the

amount

of

he's

he's.

A

A

A

You

know

and

that

you

know

sort

of

explains

the

flow

right.

How

how

the

flow

is

flowing

from

is

tio2

is

TM,

open

gateway

that

your

Fox,

you

know

it

it

has

depending

on

you

know

the

scenarios

you

are

getting.

Hopefully

you

know

and

one

of

the

resolutions

they

are

right,

but

the

goal

is

to

continue

growing

this

people.

Do

it

right.

The

decks

in

channel

general

has

been

challenging,

listen

escapes

because

Dex

Levin

will

allow

you

to

do

programmatic

authentication

and

you

need

small.

You

know

something

where

you

can

have

you

know

a

uie.

A

Actually,

you

know

generating

the

DWG

tokens

and

you

authenticating

and

authorizing

based

on

that,

but

the

latest

release

of

K

observing

right,

which

is

K,

observing

0.3

I,

don't

know

which

release

you

are

using

if

you're

using

0.3

that's

bought

along

with

Kennedy

of

0.11

dot.

2

is

what

we

are

recommending

to

get

around.

You

know,

so

he

attacks

probe

issues.

Yeah.

A

C

Ok,

yeah

I

mean,

if

you

read

more

about

these,

these

issues

not

sure

but

evolved

version

of

Kennedy.

If

we

do

have

it

first,

I

will

start

with

that

diagram.

I

think

it's

particularly

useful

that

we

showed

in

debugging

well

yeah

and

where,

for

example,

I

I

find

where

in

abuse

you

can

take

initiative

is

for

this

explained

ability.

Maybe

you

know

we

have

a

framework

for

called

responsible,

AI

or

explaining

our

neural

network

models

like

for

stability,

testing

and

bias,

and

other

kind

of

things

that

can

be

tested

on

neural

network

models.

A

C

A

Yeah,

because

we

have

like

so

just

to

give

you

an

idea

right

around

this

topic

in

general

trusted

here,

we

call

it

trusted

AI

right.

We

have

projects

around

a

fairness

which

is

the

AI

fairness

360.

There

is

adversarial

robustness

toolbox,

which

is

around

you,

know,

detecting

adversarial

attacks

and

on

defending

against

them

right.

This

is

being

used

right

now

by

DARPA,

for

you

know,

adversarial

attack

and

defense

mechanisms,

it's

funded

by

DARPA.

A

Yes,

let

me

share

right,

so

this

is

what

you

know.

This

is

if

you

go

to

just

get

a

Broadcom,

slash,

IBM,

you

will

see

right

so

the

AI

finest

360

is,

you

know

trained

here

all

right.

This

is

for

bias,

detection

and

mitigation

right

and

there's

a

pretty

cool.

You

know

website

also,

will

you

can

try?

It

out

run

some

demos

at

your

head,

etc

to

detect

and

mitigate

bias.

The

second

thing

is:

I

mentioned

the

versatile

robustness

toolbox

that

this

is

a

project

you

know

which

is

right

now

initiated

by

IBM.

A

Darpa

has

given

a

ground,

they

are

actually

using

it

in

defense,

research

right

so

pretty

popular

in

adopted

right.

So

a

lot

of

the

defense

mechanisms,

evasion,

attacks,

extraction,

attacks,

poisoning

attacks,

and

then

you

know

beyond

the

attacks.

How

do

you

actually

implement

defense

mechanisms

right?

So

if

you

are

interested

in

this

space,

there's

another

one

right

on

the

explain

ability

side

we

have

our

project

or.

A

Explain

ability

360

right

so

so

this

one

is

essentially,

you

know

quite

a

bit

of

algorithms

from

IBM

research

right,

and

that

also

has

its

website,

but

also,

you

know,

provides

an

interface

on

top

of

Liman

tap

right.

So

if

you

go

there

to

the

website

as

well,

wait

it's

the

state

in

the

github.

It

talks

about

you

all

the

different

methods

it

is

using.

You

know

you

can

run

your

own.

You

know

scenario,

demos

and

and

try

it

out

right.

What

does

it

mean

and

the

generating

explanations

for

different

kinds

of

consumers

so

yep?

A

A

A

C

A

A

He

explained

ability,

for

example,

right

when

you're

looking

at

we

just

you

know,

go

to

the

project

yourself.

This

has,

you

know,

explained

ability

at

different

levels.

So

if

you

can

expand

alright,

so

the

idea

is

that

you

know

what

we

call

you

know

a

there

is

explained

ability,

algorithms

for

the

data

itself

right,

but

when

you

actually

go

to

more

models,

you

know

we

have

two

areas.

One

is

you

know

local

explanation.

The

second

is

global

explanation

and

local

explanation

is

essentially

you

know.

The

model

results

right.

A

Global

explanation

is

very,

very

essentially

treating

model

as

a

black

box

right.

You,

you

don't

have

access

to

the

internals

of

the

model

or

the

model

is,

you

know,

fairly

complex,

deep

learning

model

right.

So

how

do

you

generate?

You

know,

explanations

for

this.

So

right

you

know

the

algorithms

one

of

the

algorithms

we

use

is

you

know

having

a

several

gate

model

right,

which

is

learning

over

the

explanations,

and

you

know

creating

its

own

modified

explanation

around

that

right.

A

A

F

F

Maki

Jackson

has

written

an

article

as

well

there's

a

bit

more

sort

of

generic

on

ml

ops

and

then

the

other

thing

I

was

going

to

ask

Terry

if,

like

I,

think

it

would

be

pretty

cool

to

stick

in

the

vision

from

the

roadmap

as

a

post

that

just

links

then

to

the

roadmap

again

just

another

way

to

get

some

eyes

on

it

and

increase

the

visibility

for

folks

to

see

so

Terry.

Would

you

be

okay

with

me

pushing

there?

I'd

put

it

under

your

name

as.

B

F

F

No

problem

and

in

general

I

think

we'd

like

to

start

using

things

like

the

newsletter

more

regularly.

So

we

have

a

recurring

theme

of

ml

ops,

so

maybe

every

I

don't

know

what

it

will

be

six

or

nine

months,

but

then

the

other

thing

we

want

to

tie

in

with

the

other

CDF

events.

So

if

folks

are

interested

in

either

participating

in

the

podcast

or

webinars,

please

ping

me.

Let

me

know

and

I'll

help,

sync

that

up

with

Jackie

who's

running

the

show

and

all

all

those

things.

Okay,.

A

Jc

one

question

I

have

is

like:

is

there

a

larger

for

the

overall

CD

foundation?

Right

I?

Think

one

of

the

things

we

do

want

to

now

do

is,

like

you

know,

get

the

folks

who

are

actually

behind

you

know

some

of

these

projects

like

Jenkins

or

you

know

things

like

Tecton,

etc,

be

a

little

bit

more

and

depending

right,

I

mean

even

with

that

audience

right.

A

F

So

what

I

was

thinking

there

and

that

the

general

body

where

we

have

a

lot

of

the

project

representatives

and

folks

from

the

communities

around

the

projects

is

the

the

technical

Oversight

Committee

I

know

towards

the

end

of

last

year.

It

was

kind

of

lost

a

bit

of

momentum,

but

Dan

Lawrence

has

come

in

as

the

new

chair,

so

he's

reviving

things

quite

well

and

what

he's

looking

to

do

is

get

updates

from

the

SIG's

on

a

regular

basis

of

the

interoperability.

Sig

did

an

update

at

the

last

talk.

F

A

Think

it's

probably

you

know,

I

mean

one

of

the

things

we

have

been

doing

as

I've

dedicated.

You

know,

activity

as

part

of

the

sig

was

you

know

the

project

around

this

right,

which

is

more

hands-on,

exercise

on

ground

exercise

will

be,

and

essentially

you

know

trying

to

build

something

and

deliver

something

right

which

can

be

used

right.

A

You

know

the

cue

flow

pipeline,

Python

interface

on

top

of

Techtron

right,

so

you

can

use

Python

programmatic

ways

of

defining

pipelines

on

pipelines,

so

that's

achieved.

The

second

phase

is

where

we

essentially,

instead

of

going

to

Tecton

directly,

we

want

to

orchestrate

from

pure

flow

pipeline

engine

and

the

reason

for

doing

that

would

be.

You

know

to

get

the

cue

flow

pipeline

UI

and

the

lineage

tracking

and

the

artifact

tracking,

all

all

integrated

around

tracfone

right,

so

I

think

you

know

I

would

love

to

figure

out.

You

know

how

to

now.

F

A

Both

its

primary,

you

know,

I

would

like

to

see.

You

know

there

are

folks

who

are

interested

in

evolving

right,

something

like

this

for

their

own

needs,

right

and

and

by

virtue

of

getting

users.

Also,

you

get

more

feedback

right,

like

you

know,

things

are

so

I

think,

even

if

they

don't

want

to

contribute,

but

if

they

have

a

need

right

where

they

are.

Essentially,

you

know

someone

who

are

running

Tecton

and-

and

they

want

to-

you-

know,

evolve

it

towards

machine

learning

needs

and

they

want

to

bring.

A

F

Okay,

this

sounds

like

something

we

can

connect

you

with

Jackie

to

do

a

webinar.

We've

now

got

CDF

running

regular,

webinars

and

I

know

she's

looking

for

topics

and

I

think

this

would

work

quite

well.

So

how

about

I'll

connect

the

two

of

you

and

I'll

point

you

at

the

the

forum

where

you

just

need

to

sort

of

book

your

slot

in

that

sound

good.

F

A

No

I

think

these

are

the

two

primary

I

mean

the

roadmap

activity

plus

you

know

all

hands

on

ground

technical

project

right

now

is

you

know

the

cue

flow

pipeline

and

check

on

integration,

which

is

happening

at

much

faster

speed

right

and

an

active

in

development.

The

end

today's

discussion,

as

you

saw

right,

I,

mean

depending

on

the

community,

needs

right.

So

today's

discussion

was

more

focused,

like

you

know,

around

model

serving,

and

you

know,

model

explaining

ability,

motive,

fairness

right,

so

that's

the

second

part

of

that

how's

that

you

know

the

pipelines.

A

Hopefully

you

are

helping

you

to

get

to

the

point

where

your

models

are

deployed

right,

but

how

you

deploy

that

in

them

in

production,

and

how

do

you

enable

things

like

explain,

ability?

First,

detection,

detection

right?

So

hopefully

you

know

we

start

getting.

You

know

so

more

focus

around

that

area,

and

then

we

can,

you

know,

think

of

carving

or

something

specific

in

that

space.

A

Thanks

thanks

a

lot

so

yet

Thomas,

it

will

be

great

to

you

know.

You

know,

get

some

more

details

around

your

responsibilities.

She

ate

it

and

see.

You

know

how

we

can.

There

is

a

trust

area

committee,

as

well

as

part

of

the

Linux

Foundation

AI

right,

which

essentially

is

more

focused

on

all

these

areas.

Bias,

explain

ability,

adversarial,

detection

and

generation

right.

So

if

you

are

interested

right,

you

can

reach

out

to

me

and

I

can

get

you

connected

enjoy

that

as

well.

If

that's

your

area

of

interest,

yeah.

D

A

This

right

so

essentially

the

integration.

Can

you

see

my

screen

yeah?

Yes,

the

integration

with

TF

exes

is

at

a

different

level

than

what

you

were

thinking

in

terms

of

the

Czech

term

right.

So

this

is,

for

example,

you

know

queue

flow

pipeline

and

it

works

with

our

goal

as

an

engineering

to

the

covers

right,

what

we

are

doing

is

replacing

our

goal.

Bringing

in

Tecton

all

right.

Rest

of

the

entry

point

remains

the

same

right.

So

essentially,

there

is

a

merge

happening

at

an

SDK

level,

so

the

first

version

of

that

SDK

is

ready.

B

A

A

You

know

either

the

same

SDK

to

draft

T,

FX

pipeline

or

a

kfb

pipeline

right.

So

that's

the

SDK

story.

We're

essentially

you

know

one

single

SDK.

If

you

define

a

pipeline

using

Q

flow

pipeline,

DSL,

semantics

or

using

you

know,

T

effects,

you

can

use

that

single

SDK

and

launch

it.

The

second

work,

which

is

a

bit

longer

term,

as

you

know,

aligning

around

the

same

DSL

as

well

right,

so

which

is

essentially

right.

Now.

A

Yes,

you

can

use

the

same

as

DK,

but

you

know:

there's

still

the

the

Python

DSL

for

both

are

quite

different

right,

so

how

you

will

define

a

T

FX

pipeline

versus

how

you

will

define

now

Q

flow

pipeline?

You

know

the

syntax

and

the

semantics

are

different

right.

So

that's

where

there

is

that

discussion

going

on

right,

heavily

and

and

part

of

it

is.

You

know

that's

something

which

the

internal

Google

teams

need

to

come

themselves

together

as

well

right,

which

is

the

tf-x

team

in

the

KP

team.

A

Presentation

right

so

assuming

you

have

a

line

around

SDK,

which

has

happened

right

so

hopefully

it

will

be.

You

know

more

widely

distributed.

Then

the

second

thing

was

DSM.

The

third

thing

is:

when

you

compile

you

want

to

compile

to

something

intermediate

right

and

not

specifically

to

Ann

Arbor

yeah

Melora

Techtron

y'know

by

virtue

of

compiling

to

something

intermediate

right.

A

There

is

now

an

opportunity

to

create

a

stern

industry

standard

right

around

how

pipeline

should

be

defined

in

a

more

neutral

language,

which

then

you

know

the

Chevy

engine

can

interpret

based

on

the

target

given

to

it

hey.

This

is

the

IR.

Your

target

is

checked

for

normal

or

tomorrow

in

future.

Maybe

it's

Jenkins

right

and

thou

shalt

be

able

to

convert

that

IR

back

to

that

corresponding

mean

yet

right.

So.

A

Phase

of

that

right,

which

is

you

know,

a

little

bit

more

more

in

the

future

right

so

long

answer

to

your

question

but

short

answer,

the

combined

SDK

is

ready.

The

next

will

be.

You

know,

looking

at

that,

we

have

the

same

DSL,

which

is

essentially,

you

define

using

the

same

way

whether

it's

at

the

FX

pipeline

or

KP,

and

the

third

angle

to

it

would

be.

You

know

you

also

compile

to

something

which

is

common

across

everything

and

the

engine

takes

that

common

standard.

A

D

Now

T

FX

also

kind

of

not

compiles,

but

like

you

can

also

use

it

as

a

structure

for

an

airflow

pipeline.

Is

it?

Is

there

a

possibility

that

we

can

use

that

intermediate

representation

structure

to

create

a

an

airflow

pipeline

that

you

can

around

perhaps

locally

on

your

machine

rather

than

and

grenades.

A

Ya

know,

I

think

the

tf-x

right

now

has

this

concept

of

runners

right.

So

airflow

is

one

of

the

runners

behind

he

effects

right.

So

by

virtue

of

it,

you

know,

assuming

you

the

there

is

a

single

SDK.

You

will

combine

compiling

to

a

single

IR

and

that's

that's

what

you

know.

Gfx

is

taking

right

and

then

you

know

what

is

the

runner

tf-x

has

chosen

right.

A

So

when

you

are

running

GFX,

you

have

chosen

airflow

as

a

runner

right

logically,

that

should

work

on

airflow

right,

but,

as

I

said

this

part

of

it,

where

there

is

this

common

IR,

which

we

all

are

using,

it's

still

I

would

say

at

this

point.

You

know

there

are

like

lot

of

e-mails

back

and

forth.

We

are

trying

to

establish

that

communication

and-

and

maybe

you

know

David-

this

is

also

an

opportunity,

an

area

where

you

can

help

where

I

think

you

know

we

are

heavily

interested

in

this

lift

has

come.

E

A

Know

so

I'm,

so

there

is

an

email

chain

right

between

how

I'm

going

on

with

Pavel

on

that

side

right,

it

would

be

I

mean

if,

like

with

what

wall

you

were

doing

with

ml

spec

right

or

the

pipeline

metadata,

or

you

know

finding

pipeline

inna,

so

I

think

I

would

see

that

you

have

a

inherent

interest

in

it

right

if

you

can

make

it

more

like

you

know,

also

that

yes,

Microsoft

is

heavily

interested

in

standardizing

this.

That

will

sort

of

speed

up

some

activity

on

this

side.

Right,

I

think.

E

A

A

Thanks

I

think

this

was

a

great

discussion

today

and

you

know

I

will

compile

and

send

an

email

with

the

links

etc.

Right

for

both

around

you

know

the

a

of

serving

trusted

AI

as

well

as

around

you

know,

the

tf-x

K,

if

we

work

right

and

Terry's

roadmap

is

in

the

seg

ml

of

3po

itself

right.

So

there

is

one

single

thing

to

go

to

that

right.

A

If

there

is

any

other

thing

right

which

you

need

in

terms

of

you

know

how

to

reach

out

on

different

areas,

whether

it's

the

trusted,

iPod,

biased,

explained

ability

or

the

checked

on

you

for

pipeline

integration

or

kfb

GFX,

you

know

just

should

open

email

and

I'll

be

well

yep

thanks.

Everyone

thanks

again

thank.