
From YouTube: CDF SIG MLOps Meeting 2020-02-27


A

A

A

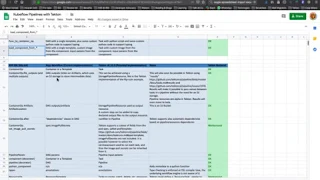

[...] the revised comparison between Kubeflow Pipelines on Argo and Tekton, and then, Christian, let's go through the work in progress, the design specifications doc, and the further work you have done in terms of updating the compiler, the Kubeflow Pipelines compiler, to generate Tekton pipelines. Andrea, do you want to go first and get started? By the way, Jeremy's here; he's from the Kubeflow team. He leads the Kubeflow project.

A

C

Cool, yeah, so I'll just give the comparison again, this time starting more from the KFP SDK side. The previous comparison was more centered on comparing Argo features against Tekton. This time we started from what is actually used in the compiler and mapped it into what is on the Argo side and what it could be on the Tekton side. Of course, the compiler package is the first package.

C

We know that there is a compiler for Argo and there is a prototype compiler for Tekton, which is work in progress. So let's just go through the list here. There is some functionality to create a container operation in the SDK from a Python function or loaded from a file, and that is easily implemented in Tekton. That would be a task on the Tekton side.

C

So in terms of container operation, I think there is good support on the Tekton side. One thing that we don't have, but there is a workaround, is set image pull secrets. Actually, we have discussed adding it to the task directly. The way Tekton handles this is by specifying a service account which has the required image pull secret attached. So that would be the way to do it in Tekton today.
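A minimal sketch of the mapping described above, not the KFP-Tekton compiler itself: one container operation becomes a Tekton `Task` with a single step, and the image pull secret is attached to a Kubernetes `ServiceAccount` that the `TaskRun` names. The helper names, the `tekton.dev/v1beta1` API version, and the example values are assumptions made for illustration.

```python
def container_op_to_task(name, image, command, args=None):
    """Build a Tekton Task manifest (as a dict) with one step for one container operation."""
    return {
        "apiVersion": "tekton.dev/v1beta1",  # assumed API version
        "kind": "Task",
        "metadata": {"name": name},
        "spec": {
            "steps": [
                {
                    "name": "main",
                    "image": image,
                    "command": list(command),
                    "args": list(args or []),
                }
            ]
        },
    }


def service_account(name, pull_secret_names):
    """Build a ServiceAccount manifest that carries the image pull secrets."""
    return {
        "apiVersion": "v1",
        "kind": "ServiceAccount",
        "metadata": {"name": name},
        "imagePullSecrets": [{"name": s} for s in pull_secret_names],
    }


def task_run(task_name, service_account_name):
    """Build a TaskRun that runs the Task under the given ServiceAccount."""
    return {
        "apiVersion": "tekton.dev/v1beta1",  # assumed API version
        "kind": "TaskRun",
        "metadata": {"generateName": task_name + "-run-"},
        "spec": {
            "taskRef": {"name": task_name},
            "serviceAccountName": service_account_name,
        },
    }
```

The point of the sketch is that the pull secret never appears in the Task itself; it travels with the service account that the run references.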

C

C

In fact, Tekton has a catalog of tasks, like reusable tasks, and one way forward would be to define those tasks in the catalog. We are also working on extra features that will allow us to point to a task in a catalog by reference, for instance, so that it doesn't need to be imported first into your cluster. So we could just reference it, and that would make the compiler work even easier.

A

We will also need to address the components which we are creating for Kubeflow Pipelines, which are essentially Docker containers, but there is a component.yaml file which defines the input, the output, and the backing Docker image for this component. If we can start doing that in a generic way as well, so that they can be reusable across both Tekton as well as Kubeflow Pipelines, right? I don't know whether that's something, you know...

C

Yes, absolutely. I think in terms of the SDK this is implemented as load component from YAML, for instance, which is on line 8. So that can be mapped into Tekton tasks. From the SDK point of view, the component is loaded from a file, and that would be the same format, I believe, regardless of whether it's Argo or Tekton.
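As a rough illustration of that mapping, the sketch below takes a component description that is already parsed into a Python dict (name, inputs, and a container implementation) and produces a Tekton `Task` dict. It is a sketch under stated assumptions: the function names are hypothetical, only the `inputValue` placeholder is handled, and the `$(params.<name>)` substitution syntax and `tekton.dev/v1beta1` API version are assumed.

```python
import re


def sanitize(name):
    """Turn a human-readable name into a lowercase, hyphen-separated identifier."""
    return re.sub(r"[^a-z0-9]+", "-", name.lower()).strip("-")


def component_to_task(component):
    """Map a parsed component description to a Tekton Task manifest (as a dict)."""
    container = component["implementation"]["container"]

    def render(arg):
        # An {"inputValue": "<input name>"} placeholder becomes a Tekton
        # parameter reference; plain values are passed through as strings.
        if isinstance(arg, dict) and "inputValue" in arg:
            return "$(params.%s)" % sanitize(arg["inputValue"])
        return str(arg)

    return {
        "apiVersion": "tekton.dev/v1beta1",  # assumed API version
        "kind": "Task",
        "metadata": {"name": sanitize(component["name"])},
        "spec": {
            "params": [
                {"name": sanitize(i["name"])} for i in component.get("inputs", [])
            ],
            "steps": [
                {
                    "name": "main",
                    "image": container["image"],
                    "command": [render(c) for c in container.get("command", [])],
                    "args": [render(a) for a in container.get("args", [])],
                }
            ],
        },
    }
```

Because the input is the component description rather than an Argo template, the same file could in principle feed either backend, which is the reuse being discussed.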

C

So we could have the same component format, and then it would compile into an Argo workflow or into Tekton tasks. Something that we are experimenting with right now is the ability to store tasks in the registry, so in the container image registry, as artifacts, so in OCI format. That would allow us to pull things like [inaudible] components using a specific version, like a SHA, a specific SHA, like it is done for container images.

D

C

E

A

[inaudible] from Argo, it's more like providing Tekton also as a target backend to run these pipelines. Specifically, and again I speak on behalf of IBM and Red Hat, we have standardized on Tekton as the native CI/CD pipeline for the cloud, and that's the motivation on our side.

A

You know, if that's something that is already going to be embedded in OpenShift as a supported pipeline, or in IBM Cloud as a supported pipeline engine, the internal teams are requesting that for something like Kubeflow it would make sense to sit on top of Tekton rather than something like Argo, for which they will need to figure out getting people trained in a particular skill set and supporting that. So I think that's the primary motivation on our side. Jeremy, do you have any comments on the motivation from your side? Yeah.

E

I think there's two... I'm Jeremy; I work a lot on Kubeflow. I think there's two, and this is just my two cents. So the first is: if you look at Pipelines right now, Argo is not a first-class citizen, and so I think one thing that Pipelines is missing is a well-defined resource model that provides a little bit more abstraction between the Python SDK and the actual underlying resources. And so I think the one question is: should it be Argo?

E

Should it be Tekton? Should it be some new, well-defined concept, a new resource model? As Animesh just mentioned, Pipelines defined its own sort of YAML for a component, which is basically the notion of a reusable task. Now that's something that I don't think Argo really had originally, but Tekton did have that in the form of the Task resource.

E

I think, to me, one of the questions is: does Pipelines really need to introduce a new notion of a reusable task, or should it just use a Tekton Task? And then Argo: I haven't paid attention recently to Argo, though, but I think in the past year or so they might have introduced something more akin to a task.

E

I don't know, so maybe that gap has been closed, and so maybe that could be used in Argo. And then I think also, as Animesh says, certain companies are getting behind various solutions like Tekton. Google's heavily invested in Tekton as well, so coming at it from that perspective, we're aligning with a huge team at Google that's working on Tekton.

C

If I can add something (it doesn't add too much): from a Tekton community point of view, Tekton really aims to be like a common denominator in continuous delivery scenarios, which is CI/CD but also delivery of machine learning artifacts. So I think there is a general willingness from the Tekton folks to integrate as much as possible with solutions on top of Tekton and to be a common integration layer. I think this fits with that aim, that direction, as well.

A

I mean, even Jenkins X, they're leveraging Tekton under the covers. So if you see the trend, the cloud-native CI/CD community is aligning around Tekton, so I think it makes sense. I'm just looking at the time. All right. So are there a couple of things you would want to call out which you think are, or can be, long-term blockers in terms of getting parity at a functionality level between Argo and...

C

Long-term, not really. The two things that are marked yellow are the exit handler and the other one, the recursion. I think the exit handler is something that is actually really close: it's been actively worked on and we are very close to having that functionality. In parallel, the recursion is under design; we are discussing whether to do it at the task level, at the step level, or both. So this is a big design, but I don't think it will take a long time to implement.

C

A

At this point, I would say, I mean, in 90 to 95% of Kubeflow pipelines recursion is not used, so I would say that is one of those cases where we probably don't bother too much at this point. Things like volumes and volume snapshots, exposing the native Kubernetes capabilities, running sequential and parallel jobs, right.

E

A

C

A

Andrea, so please keep on updating this, and as we move through some of this work I will keep on prioritizing some of these requirements as we find out which should be in Tekton. Along with that, Christian, do you want to share your screen, and let's do a quick walkthrough of what you have been up to? And Jeremy, about Christian and Andrea: Andrea is our Tekton committer from IBM in the Tekton community. Christian works with us on the [inaudible].

B

B

F

Yep, I'm trying to click through the Zoom share-screen buttons, and it wants me to go to my system preferences, one second. I don't know if I can share it on my... And if you're having trouble... You know, it wants me to quit Zoom and restart; I'll do that. Anyway, maybe you can start with the Google Docs image.

A

So, Jeremy, this is something we discussed. Part of it was essentially putting up a plan and figuring out first the gaps in Tekton which need to be closed for full-featured Kubeflow Pipelines and Tekton compatibility, so that's an exercise which has been going on.

A

The other thing is that some of the requests which we are getting, for example from internal teams, are more like: they just need the compiler, right, because the UI is a big piece of it. If you see the Kubeflow Pipelines UI, it's heavily dependent on the Argo YAML, the way it is constructed, the way it displays the DAG, et cetera.

A

All right, so I think that's a sizable piece of work which will require pretty extensive investigation in general. But even beyond that, I think the minimum thing which some of the teams we talked to wanted was, okay, I mean, in any case, a lot of the products [inaudible]: take the DSL and generate the Tekton equivalent, right. So that has been the main driver.

A

A

A

Our initial set of [inaudible] have been around... I'll just jump to the items he has been looking at. So essentially we were copying part of the compiler code into the KFP-Tekton repository, generating a sequential pipeline which was mapped to steps. So quite a few things were wrong there, and he's been fixing it and adding...

A

A

E

Oh, thanks, I think this is really great. I'm certainly excited about Tekton. To me, I think the big question is: is there a way to start incrementally introducing it into Kubeflow, so that people start seeing it and people start using it? Because just replacing Pipelines with Tekton is a pretty big chasm. So one thought that you might want to look into is, I think Fairing is low-hanging fruit in terms of starting to replace some of the functionality in Fairing with Tekton.

E

A

Okay, makes sense. I was conversing with Janie yesterday; we were actually discussing Fairing a bit. Part of the reason is, in terms of, I think, as you have also tried, the roadmap, but in general I wanted to see the applicability of, or involvement in, it with [inaudible], the business drivers, so to say. So I think the Tekton work probably will, or can, act as a business driver on that side.

E

So you can have one Tekton task that, as a preprocessor, converts a notebook; you can have another Tekton task that uses Kaniko versus BuildKit to actually build it; and then Fairing just becomes like a Python SDK on top of that, some sugar for the folks who would rather write the pipeline in Python as opposed to just doing it in raw YAML. And then that sort of merges those two pretty nicely, I think.

A

No, I hear you, and I think one of the things which you mentioned regarding notebooks, that's something which we are doing internally. We have a Kubeflow Pipelines component which can take a notebook and run it, and that component essentially has Papermill under the covers, so that will also align. One of the things which I need to think about is, you know, we have this catalog of Kubeflow Pipelines components, right.

A

So now, when you talk about the catalog of Tekton tasks, we will need to figure out how to do them in a generic way so that they are reusable across both. You can take KFP as is and run it as [inaudible], because in the end these are Docker containers. So if we are a little bit more intelligent about constructing the metadata around it, hopefully we can reuse it in a consistent manner across both of them. Yeah.

C

A

F

Is my screen sharing the Google Doc right now? My entire screen, here. Okay, fantastic. So yeah, thanks. You walk through what you do; share your entire screen. So, you know, yes, I've been careful not to share pictures of my wife and daughter here, but yes, that was the easiest; I had too many screens open. Thank you. Right. So I've been focused more on the very low-level tasks of getting the Kubeflow Pipelines, the KFP-

F

Tekton project that you may have seen into a shape that developers can collaborate on, right. So if you go to KFP-Tekton, it's a very small project still; it has a few samples and has the SDK, and the SDK part is the part that I've been working on. I have been doing so. I thought that was a step too far. I've been doing Python development

F

for a couple of years now. I'm coming from a Java background, and I'm really keen on being able to go to GitHub, clone a project, try it out and chip away, and that wasn't possible with this project before, mainly because you had to do things like get the Kubeflow Pipelines project, clone it somewhere locally, build it, copy the code files from this particular KFP-Tekton compiler over to the Kubeflow Pipelines project,

F

and then build the KFP Python project, and then use it as you would use kfp, with the caveat that you basically eliminate the KFP functionality in terms of the compiler and now only produce Tekton. So I did make an effort to change a few things in this project and make it so that people can basically go and collaborate and not have to copy code around and worry about...

F

F

So, typically, you create your virtual environment, you have a pip install to get your project installed, and then you can run the tests from the command line or you can run them in your IDE. And separate from the command-line executable dsl-compile, I created dsl-compile-tekton, so you can run it in parallel to your standard Kubeflow SDK compiler.

F

[...] steps in the pipeline to Tekton steps inside one task, and instead mapping the individual pipeline operations into a task, so they can run in parallel, and we can then also make some progress on using workspaces and sharing data for input and output parameters. So this is where I am right now. The next bigger concern I have is how to stage that work in a way that we can feed it back into the Kubeflow Pipelines SDK and compiler, and maybe in the future have it integrated into the Kubeflow Pipelines SDK and compiler.
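To make the task-per-operation mapping concrete, here is a small sketch, assuming each pipeline operation is known only by its name and the names of the operations it depends on. Each operation becomes one entry in a Tekton `Pipeline`, and dependencies are expressed with `runAfter`, so operations without a dependency between them are free to run in parallel. The function name, the one-to-one `taskRef` naming, and the `tekton.dev/v1beta1` API version are assumptions for illustration, not the KFP-Tekton compiler's actual code.

```python
def ops_to_pipeline(pipeline_name, ops):
    """Build a Tekton Pipeline manifest (as a dict).

    `ops` maps each operation name to the list of operation names it depends on.
    """
    for op_name, upstream in ops.items():
        for dep in upstream:
            if dep not in ops:
                raise ValueError("%s depends on unknown operation %s" % (op_name, dep))

    tasks = []
    for op_name in sorted(ops):
        entry = {"name": op_name, "taskRef": {"name": op_name}}
        upstream = sorted(ops[op_name])
        if upstream:
            # Only operations with dependencies get an explicit ordering.
            entry["runAfter"] = upstream
        tasks.append(entry)

    return {
        "apiVersion": "tekton.dev/v1beta1",  # assumed API version
        "kind": "Pipeline",
        "metadata": {"name": pipeline_name},
        "spec": {"tasks": tasks},
    }
```

With the earlier approach of putting every operation into one task as sequential steps, this kind of parallelism is not available, which is the motivation for the change described here.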

F

They have a command-line flag and say: I want to generate Tekton instead of the Argo YAML, and the code would all live in one repository. To get to that point, I've made an effort to make sure that the methods that we had to override to generate Tekton YAML follow the same project structure as in the Kubeflow Pipelines SDK, the same class names, the same method names, and for now I'm using...

A

That's probably the time you want to get Jesse and team involved from Kubeflow Pipelines and, as Christian mentioned, align that so that we start working toward a common goal, that at some point we want to merge into the mainstream Kubeflow Pipelines repo and give that option through a flag, right. So I think there is going to be a milestone fairly soon where we will need to have a conversation with the Kubeflow Pipelines team, right.
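The single-compiler-with-a-flag idea could look roughly like the sketch below. The `--backend` flag, its values, and the two stub compile functions are hypothetical; they only illustrate selecting the output format in one code base and are not an existing Kubeflow Pipelines option.

```python
import argparse


def compile_for_argo(pipeline_name):
    """Stub: return the skeleton of an Argo Workflow manifest."""
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Workflow",
        "metadata": {"generateName": pipeline_name + "-"},
    }


def compile_for_tekton(pipeline_name):
    """Stub: return the skeleton of a Tekton Pipeline manifest."""
    return {
        "apiVersion": "tekton.dev/v1beta1",  # assumed API version
        "kind": "Pipeline",
        "metadata": {"name": pipeline_name},
    }


# Hypothetical registry of backends selectable from the command line.
BACKENDS = {"argo": compile_for_argo, "tekton": compile_for_tekton}


def build_parser():
    parser = argparse.ArgumentParser(description="Compile a pipeline for a chosen backend.")
    parser.add_argument("--pipeline-name", required=True)
    parser.add_argument("--backend", choices=sorted(BACKENDS), default="argo")
    return parser


def main(argv=None):
    args = build_parser().parse_args(argv)
    return BACKENDS[args.backend](args.pipeline_name)
```

Keeping Argo as the default in this sketch means existing users would see no change unless they opt in to the other backend.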

A

So hopefully, maybe in a couple of weeks or so; I think that's something we are hoping you can facilitate on that side. I also intend to get... That probably is also the right time to get Red Hat more involved as well, as this is something which can now start turning into something real. Thanks. Jeremy, I do need a minute of your time for discussing [inaudible], the [inaudible] announcement, right.

A

A

Yeah, Jeremy, so [inaudible]. The mail I sent was more around the coordination. I know that the technical part is done, right; she was talking about the blog. But in terms of having to coordinate across some of the blogs, plus if she is doing any press release, right. So that's what my main ask was.

A

I had sent her a draft of the blog, which now, given the time frame, we are for the most part going to publish. I wanted to get her eyes on it, but I didn't hear anything back. So that's what I was asking you; I can ping you.

E

A

A

But in general, what we wanted was, (a), we want to hyperlink to the main blog, which is coming from your side. So one thing I wanted from her is: is there a standard URL which we can put in right now so that, when this goes live,

A

we shall be able to point to it. The other thing was: do we need any other coordination? Because from her mail it appeared she wanted to coordinate quite a bit of activities across orgs, but given that it is Thursday and Monday is when this is happening, I'm a little bit...