From YouTube: DataHub at Viasat: DataHub Community Talk - Jan 15 2021

Description

Anna Kepler at Viasat describes why they chose DataHub over other open source and commercial technologies and their plans with it.

B

Great. Well, first of all, thank you so much for having me. It's been a real pleasure to pick this tool and work with the community to get it into production. We deployed DataHub in production just a couple of months ago, and it's been working really well, so we're excited to share how we are using it, what our future plans are, and how we arrived at the selection of this tool.

B

My role at Viasat is technical product manager for the analytics platform. I've been working with data for quite a while, so it's always been a passion of mine, and metadata is definitely part of that as well. Viasat as a whole is a satellite communications company, so we are an ISP.

B

The state of our users and data is definitely very complex. Our core data platform, which I'm a part of, has a lot of different data sources, microservices, data flows, and data analytics tools. But as the core data platform was evolving, in parallel we ended up with a lot of mini data lakes, databases, and data sources all over the place, so it's definitely been a complicated landscape to work with.

B

In addition to that, we have a variety of users with different skills, from data suppliers and preparers to data consumers. Even within the data consumers themselves, users have different capabilities and skills for interacting with data, from data analysts who work more with reporting tools to data scientists who are ready to really dig in and explore the data sets.

So there are definitely quite complex data personas there as well. The company has set the goal to really be very data-driven, and part of that, of course, is just getting access to data. How do we find all these small mini data silos and expose them to the users in a very approachable way? Some of the challenges that we are trying to resolve are, I think, not really new to many of you, since you are here and part of this community. The siloed knowledge about data is definitely one of the challenges we're trying to address.

B

Another is the slow analytics process. Many of our users, for example, complain about even finding what data is available to them. Can they even access that data? What is where, and which teams do they work with to get to the data? Even in the last couple of months, as we introduced the data catalog, the community has already been very excited, and we see a lot of users coming to the data.

B

Our compliance team is a very small team, and the company is growing and working with global customers around the world, including Europe, Brazil, and Africa. A lot of countries, as you all know, have introduced different compliance laws and policies, so the company has definitely been looking for solutions, and we have started on that.

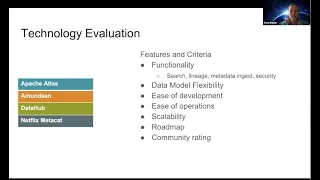

B

Our technology evaluation started sometime in June of last year, I think, and we were very excited when DataHub became open source. We had been following the journey of that product within LinkedIn. We've always been fans of LinkedIn products. We've operated Kafka and a few other systems, so it's always a pleasure to see LinkedIn open-source a product. DataHub definitely made the list for evaluation, along with some other systems we looked at, such as Apache Atlas.

B

What do I mean by this? We are a service. We like open source products, and we like to contribute to open source products as well, so we evaluated the tech stack behind each of these products to ensure that we are capable of submitting PRs and really understanding the code as needed, maybe even helping the community with some bug fixes. That was one of our evaluation criteria. Then there is ease of operations.

B

Our team is very small, and we operate a variety of different tools and systems, so the easier the process is, the better. The stability of a product is really important for us, as are upgrades, deployment, moving from development to production, and the ability to integrate and test the tools before promoting them to production. All of these components were evaluated as well. Then there is scalability: we have a lot of data, and we have a lot of different microservices.

B

Additionally, the roadmap. We knew that we wouldn't be able to take advantage of all the features immediately for our customers, so we wanted to do a slow rollout and gradual addition of the various features within Viasat. We looked at the DataHub open source roadmap, and multiple features aligned really well with what we were trying to do: introducing lineage, adding ML models, and introducing some of the metrics functionality and data quality ratings.

B

The timeline looked great, and just the fact that we were seeing everything we needed on that roadmap was really exciting. Then there is the community rating for the product itself: the GitHub rating and how well the community supports the product. We took a look at that as well. I guess it's no surprise, since I'm here today, that DataHub was the product we selected, and so far so good. It's been a really, really good journey.

B

We introduced some product metrics, so we have product analytics being gathered from this UI to really understand how users are interacting with data and what type of features they want as we introduce new features, to make the experience as easy as possible. We also wanted some flexibility of integrations with some of the tools that we have.

B

So we went with that approach, where the UI is ours, but the back end we try not to fork. We would rather contribute to the open source community, and we have been doing that a little bit already, which leads me to the experience with operations. I chatted with the team this week to understand whether there were any issues with deployments as we were doing them. There were minor things in the beginning when we were integrating with our Kafka, the secure Kafka. I believe Javier Sotelo on my team contributed fixes for some of the other issues as well, and those have been accepted. I think he even helped with some of the code reviews.

It's been really good to see how responsive the community has been, and how accepting and welcoming. It's really been good for us.

B

We realized that we are not the only ones who operate data infrastructure and needed this functionality. Our team has been delivering very good products and good service within the company, so we have the trust of our teams. We introduced a good tool, and we worked with them to explain: these are the DataHub features, this is the evaluation process.

B

We shared the DataHub information with them, and all the teams have been really supportive of our selection of the product, which is making adoption much easier for us. We're working with the rest of the teams to ingest information about their data, and working with the compliance team to see how we could introduce the features they need.

B

Lineage has been really anticipated within the company, and people are waiting on that. Probably around the summer we will be integrating with all our tools to introduce lineage visualizations, and then dashboards and reports. We're really excited to see the RFC and the eventual implementation for dashboards.