►

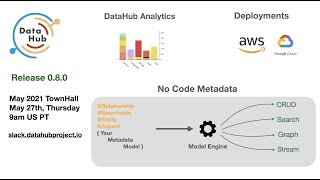

From YouTube: May 27 2021: DataHub Community Meeting (Full)

Description

Full version of the DataHub Community Meeting on May 27th 2021

Welcome - 00:00

Project Updates by Shirshanka - 00:01

◦ 0.8.0 Release

◦ AWS Deployment Recipe by Dexter Lee (Acryl Data) - 09:48

• Demo: Product Analytics design sprint [Maggie Hays (SpotHero), Dexter Lee (Acryl Data)] - 12:32

• Use-Case: DataHub on GCP by Sharath Chandra (Confluent) - 30:16

• Deep Dive: No Code Metadata Engine by John Joyce (Acryl Data) - 48:35

• General Q&A and closing remarks - 01:12:13

A

A

Cool

so

let's

see

what

we

did

this

month.

First

off

quick

project

updates

on

the

release.

We

have

aws

deployment

recipe

that

dextre

will

walk

us

through.

Then

we

have

a

demo

of

the

product

analytics

design

sprint

that

maggie

led

and

we

have,

for

the

first

time

a

pre-recorded

video

from

sharat

that

confluent

we'll

talk,

walk

us

through

how

he's

done?

A

How

he's

deployed

data

hub

on

gcp

he's

actually

here,

but

he's

just

on

a

low

internet

connection,

so

he's

gonna

be

there

for

questions

and

then

finally,

john

is

going

to

walk

us

through

kind

of

our

big

highlight

no

code

metadata,

and

if

we

still

have

time

we

will

go

through

questions

all

right.

So

first,

a

big

update

on

kind

of

the

metadata

space

itself.

A

Last

week

we

ran

metadata

day

2021

in

collaboration

with

linkedin,

and

you

probably

saw

a

lot

of

folks

who

joined

the

slack

channel

as

a

result,

because

we

used

our

slack

community

as

a

way

to

kind

of

have

conversations

about

the

event,

a

lot

of

great

content.

I

highly

suggest

you

watch

the

video.

We

got

a

great

group

of

experts

and

addressed

a

lot

of

these

burning

questions

around

how

to

do

data

mesh

right.

A

A

few

controversial

statements

like

you

know

we

should

be

contra

confronting

the

mess

and

not

running

away

from

it.

But

a

lot

of

good

stuff

go.

Take

a

look

at

it.

What

was

really

nice

to

see

is

that

everyone

was

aligned

with

essentially

getting

all

of

the

domains

to

publish

metadata

out

and

get

it

all

connected

up

into

a

single

metadata

graph,

which

is

kind

of

very

aligned

with

how

we

think

about

the

world

at

datahub.

A

So

this

is

great,

so

go

to

youtube

check

it

out

lots

of

good

nuggets

in

there

all

right,

so

project

updates

0.8

is

coming.

We

opted

not

to

cut

the

release

before

the

long

weekend,

just

because

we

didn't

want

people

to

upgrade

and

then

run

into

issues,

and

you

know

not

get

support

over

the

weekend.

So

we'll

cut

the

release

right

after

the

long

weekend

so

enjoy

your

holiday.

If

you

are

taking

it,

if

not

wait

and

we

will

get

it

done

right

after

stats.

A

Look

very

similar

to

the

previous

one

about

five

weeks,

trending

about

the

same

number

of

commits

interesting

update.

We've

got

13

new

committers

into

the

project

and

this

particular

release

will

have

26

committers

from

18

different

companies.

So

that's

great

a

lot

of

diversity.

This

is

exactly

what

we

want

in

terms

of

the

biggest

highlights.

A

Of

course,

it

will

include

the

product

analytics

feature

as

well

as

no

code

metadata,

and

there

are

a

bunch

of

other

highlights

as

well

that

I'll

quickly

walk

through

before

that

there's

an

official

kind

of

sense

to

our

airflow

lineage

integration.

Now

the

astronomer

team

has

kind

of

published

our

provider

on

the

registry,

so

it's

now

kind

of

official

airflow

supports

data

hub

as

a

lineage

back

end,

we're

actually

listed

as

a

featured

partner.

A

So

this

is

great,

I

think,

we'll

see

a

lot

of

people

using

airflow

connecting

us

up

with

airflow

for

lineage,

and

this

is

going

to

be

great.

So

really,

thanks

to

all

of

you

for

kind

of

getting

us

over

the

hump

and

all

of

the

support,

we'll

probably

do

a

longer

write-up

about

the

integration

in

a

future

blog

post.

A

Okay,

so

product

improvements,

a

couple

of

big

improvements

to

search.

We

now

support

autocomplete

across

types.

So,

as

you

start

typing,

you

not

only

get

recommendations

for

data

sets,

but

also

charts,

as

well

as

other

kinds

of

entities,

depending

on

what

the

hits

are.

So

it's

it's

pretty

cool.

It's

table

stakes

for

any

nice

search

product,

so

we

kind

of

built

it.

Try

it

out.

It's

already

live

on

demo.

Dot

data

hub

so

go

take

a

look

and

play

around

with

it.

A

Second

one:

it's

almost

like

something

we

filled

in

that

we

didn't

get

to

the

first

time.

Pipelines

now

include

also

visualization

of

the

tasks

that

are

part

of

the

pipeline

themselves,

so

we

actually

organize

the

tasks

within

the

pipeline

based

on

kind

of

a

sorting

of

the

dependencies

between

the

tasks

and

that's

that's

kind

of

one

of

the

things

we

added

a

few

improvements,

and

this

is

thanks

to

the

new

york

times

team

that

have

been

playing

around

with

the

themes

that

are

available

in

data

hub.

A

A

And

next

up

coming

is

business

glossary.

It

was

one

of

the

big

kind

of

requested

items.

The

saxo

bank

and

thoughtworks

team

have

been

working

really

closely

to

kind

of

build

this

with

us.

So

it's

great.

This

is

kind

of

screenshots

from

their

production

deployment

internally

and

next

month,

they'll

be

talking

in

more

detail

at

the

town

hall

about

this,

so

it's

actually

in

the

code

right

now,

but

we're

calling

it

incubating

because

we

haven't

yet

published

full-on

documentation

for

how

to

use

it.

But

this

is

sort

of

how

it

looks.

A

So

I'm

really

excited

about

this

because

it

finally

gives

kind

of

the

maturity

that

we've

been

looking

for.

For

people

to

actually

have

curated

glossaries

that

they

can

attach

to

schemas

as

well

as

data

sets

all

right

as

usual,

a

lot

of

improvements

and

integrations

on

the

systems.

Side.

Herschel

has

been

obviously

doing

a

great

job

managing

the

community

here,

but

a

lot

of

people

have

made

a

lot

of

contributions.

So,

thanks

to

all

of

you,

I've

listed

out

your

github

ids

if

you're

here.

A

Thank

you

very

much

for

all

of

the

help.

Big

changes

that

we've

added

transformers,

so

you

can

connect

to

a

source

and,

as

you're

extracting

metadata

out,

also

transform

it

before

it

goes

into

data

hub

people

are

using

it

to

add

owners.

People

are

using

it

to

add

tags

to

metadata

as

it's

flowing

through,

and

I

think

the

sky

is

the

limit.

So

we'll

probably

see

a

lot

of

new

integrations

there

in

terms

of

systems,

we've

had

deeper

integrations,

so

improvements

in

integrations

with

dbt

looker

migrated

out

of

incubating

into

supported

production.

A

So

looker

is

now

fully

supported.

We

actually

had

support

for

added

support

for

views

and

a

few

other

things.

So

thanks

for

that,

hive

also

got

better.

We

now

support

kind

of

the

data

bricks

hive,

as

well

as

the

hd

and

site

hive

they're

kind

of

odd,

in

the

sense

that

they

don't

use

the

thrift

binary

protocol,

but

they

use

the

http

and

the

https

protocols

for

transporting

for

doing

jdbc

over

http,

and

so

it

was

a

little

bit

of

work,

but

we

got

it

done

so.

A

Hive

should

now

be

fully

supported

in

all

shapes

and

forms,

and

a

few

other

improvements

that

I've

listed

out,

the

one

I

was

very

happy

to

see-

was

schema

inference

added

for

mongodb,

so

mongodb,

as

you

know,

is

a

kind

of

document

store

and

there

are

no

real

schemas

in

it.

You

can

put

whatever

you

want,

so

we

added

kevin

added

the

schema

inference

capability,

so

you

can

connect

data

hub

up

to

a

mongodb

instance

and

it

will

not

only

get

the

collection

names

but

also

infer

schemas.

A

A

All

right,

a

few

upcoming

roadmap

items

that

I

haven't

published

on

the

public

roadmap,

but

since

the

community

asks

about

this

a

lot

I

thought

I

would

drop

it

in

this

talk.

One

is

neptune.

We

get

asked

about

it

a

lot,

so

it's

gonna

happen

in

june

for

sure.

So

this

is

a

graph

database

that

is

supported

as

a

managed

database

managed

graphdb

on

aws

column

level,

lineage

we're

going

to

get

to

it

in

either

june

or

latest

by

july.

A

A

B

B

So

the

aim

has

been

to

make

deploying

on

kubernetes

easier

and

during

my

talk

with

everybody

here,

I

figured

out

some

of

the

pain

points

that

we

had.

One

of

the

pain

points

was

that

setting

up

the

storage

layer

on

kubernetes

was

difficult.

So

what

we

did

was

we

added

a

quick

start

configuration

for

setting

up

the

storage

layer,

including

my

sql,

neo4j

kafka

and

elasticsearch,

so

folks

can

just

easily

create

using

the

helm,

charts

that

we

created

by

the

end

of

next

week.

B

We

are

planning

to

publish

our

helm

charts

to

helm.datahub

project.io,

so

please

wait

for

the

announcements

there

and

we're

planning

on

adding

guides

on

exposing

data

hub

front-end,

which

was

the

hard

part

so

setting

up

ingress,

to

expose

the

data

up

front

and

to

external

parties,

and

this

is

very

specific

to

different

platforms.

So

gcp

has

their

own

way.

Aws

has

their

own

way,

so

we're

planning

on

adding

guys

one

by

one

for

the

widely

used

platforms.

B

B

So

it

starts

by

talking

about

how

to

easily

create

a

kubernetes

cluster

on

eks,

deploying

data

hub

and

depth,

using

our

hum

charts

on

the

kubernetes

cluster.

Third

is

the

big

part,

exposing

data

up

front

end

using

the

application

load,

balancer

controller,

of

course

there's

other

ways

of

exposing

data

up

front

in,

but

I

wanted

to

focus

the

the

aws

specific

way

in

the

guide.

A

C

C

I

think

it

was

april.

Time

is

weird

right

now.

Who

knows

it

was

within

the

last

month

or

so

I

teamed

up

with

the

guys

over

at

actual

data

to

run,

what's

called

a

design

sprint.

So

I'll

walk

you

guys

through.

What

is

that?

What

does

that

mean?

What

did

we

do?

What

was

the

point

of

it

and

then

we'll

move

into

a

live

demo?

C

So

can

y'all

see

my

screen?

Look

good

all

right.

So

if

you've

never

heard

of

a

design

sprint,

it's

something

that

was

created.

It

came

out

of

gv

or

google

ventures

and

it's

basically

a

framework

to

rapidly

move

through

discovery,

ideation

solution,

prototyping

and

testing

solving

hard

problems

with

technology

in

five

days

granted

we

did

it

in

three

days.

There

are

a

bunch

of

truncated

ways

that

you

can

do

it,

but

the

the

original

one

was

in

a

five-day,

a

five-day

sprint

and

I'll

walk

you

through

this.

C

If

y'all

are

interested

in

learning

more

about

this,

there's

a

ton

of

information

online,

this

is

the

book.

This

is

kind

of

like

the

main

source

of

record.

I

guess

of

what

a

sprint

framework

looks

like

you

can

find

out

amazon

all

that

also

on

youtube.

There's

a

channel

called

aj

and

smart,

where

they

have.

They

have

videos

that

break

down

every

single

session.

They

call

it

design

sprint

2.0,

so

it

kind

of

gives

you

like

a

refresh

of

it

there

so

ample

ample

context

or

resources

for

you

online.

C

So

on

the

first

day,

we

tackled

understanding

our

problem

like

identifying

and

understanding

our

problem

at

hand,

so

that

we

could

ultimately

build

a

strong

prototype

around

it.

So

we

asserted

that

our

problem

was

that

the

owners

and

admins

of

data

hub

do

not

understand

how

users

are

interacting

with

the

tool.

So

that's

a

big

problem

right.

There

are

a

lot

of

technical

approaches.

C

You

could

take

to

solving

that

and

so

what

what

we

started

doing

was

taking

a

step

back

and

understanding

how

to

contextualize

that

problem

into

the

bigger

picture

of

the

data

hub

strategy.

So

we

talked

about

how

does

this

fit

into

the

long

term

vision

of

data

hub,

and

we

rallied

around

this.

This

vision

that

in

12

to

18

months

data

platform

owners

will

want

to

deploy

data

hub

at

the

organization

because

it

gives

them

superpowers

so

right

away.

C

C

And

then

we

talked

about

what

we

identified?

What

question

or

questions

we

would

be

asking

at

the

end

of

this

process

to

understand

if

it

was

a

success

and

we

rallied

around,

are

we

providing

data

platform

owners

with

actionable

insight,

so

user

usage

analytics

is

not

all

like

it

doesn't.

Just

because

you

have

usage

analytics

doesn't

mean

it's

meaningful,

so

we

wanted

to

make

sure

that

we

would

be

able

to

ask

concretely.

Do

you

now

have

actionable

insights

so

that

you

can

move

towards

this,

like

future

value

of

data

hub

in

the

long

run?

C

The

next

thing

we

did

is

we.

We

started

to

break

down

all

of

the

potential

pain

points

within

developing

or

solving

this

problem

within

the

current

stack,

and

we

reframe

this

into.

What's

called

a,

how

might

we

and

really

it's

just

a

way

to

kind

of

like

flip,

a

problem

on

its

head

and

turn

it

into

an

opportunity?

So

we

talked

about

how

might

we

make

the

analytics

infrastructure

easy

to

manage?

So

it's

not

another

service

for

operators

to

manage.

C

How

might

we

give

clear

insights

where

there's

poor

data

qual,

sorry,

there's

poorer

data

quality

coverage

but

heavily

used

assets

so

that

way

we're

we're

trying

to

solve

this

solution

without

adding

too

much

burden

on

the

the

owners

or

operators

of

the

platform

and

then

also

giving

insight

into?

Where

are

you

seeing

a

lot

of

activity

and

there's

actually

opportunity

to

enrich

that

metadata?

C

To

give

folks

more

more

power,

there

then

another

thing

we

did

was

we

talked

to

our

experts

within

the

data

hub

community

and

wanted

to

make

sure

that

we

had

a

well-rounded

understanding

of

this

problem,

set.

How

folks

even

thought

about

how

products

analytics

would

fit

into

their

management

of

data

hub,

and

so

you

know

sample

questions,

and

these

user

interviews

were

what

are

some

like.

The

top

questions,

you'd

like

to

be

able

to

answer

around

user

activity

and

what

decisions

would

that

inform.

C

C

We

then

mapped

out

our

kind

of

user

experience

within

datahub

so

that

we

had

a

very

concrete

understanding

of

where

this

solution

fit

into

that

workflow.

So

we

talked

about

how

you

would

install

datahub

as

a

poc

have

some

step

of

ingesting

metadata

share

it

with

your

users

gather

feedback.

Maybe

do

some

iteration

cycles

here

from

there.

You

then

move

into

feature

development

and

improving

metadata

to

then

move

it

back

into

this

this

flow

and

we

really

targeted

this

idea

of.

We

are

assuming

that

the

poc

exists.

There

is

metadata.

C

There

are

active

users,

we

are

gathering

feedback

and

making

decisions

about

user

activity

to

inform

future

development

areas

to

improve

metadata

and

ways

to

drive

adoption.

So

again,

this

really

just

helps

us

have

a

very

laser

like

a

laser

focus

of

where

this

problem

fits

into

the

vision

of

data

hub,

the

user,

life

cycle,

etc.

C

Then

we

move

into

sketching

solutions.

You

can

see

that

these

came

in

a

variety

of

different

ways.

Some

folks

are

writing

a

pencil

paper.

Some

folks

are

whiteboarding

mocking

things

up

with

the

ui.

The

idea

is

that

we

just

start

visualizing.

What

does

this

solution?

Look

like

then,

day,

two

decide

on

a

solution,

we're

again

we're

we're

deciding

on

a

solution

to

tackle

this

one

big

problem.

C

B

B

So

the

first

thing

is

to

standardize

the

way

usage

events

are

produced

on

the

react

app,

so

please

check

out

the

event

schemas

there,

so

we

standardize

the

page

view,

events

search

events,

browse

events

and

so

on

and

so

forth,

where

we

put

enough

information

for

us

to

understand

where

these

usage

events

are

coming

from

and

what

these

users

events

like

it

actually

mean.

Second,

was

to

utilize

existing

components

of

datahub,

as

maggie

mentioned

before,

we

don't

want

to

make

operators

lives

even

harder

by

adding

even

more

components

to

deploy.

B

So

we

wanted

to

use

whatever

components:

we've

already

deployed

to

actually

support

a

initial

prototype

of

the

analytics

class

analytics

product.

The

third

was:

while

we

wanted

to

have

this

default

way

of

using

existing

components.

We

wanted

everyone

to

be

able

to

plug

their

own

architecture

for

consuming

these

usage

events.

B

So

usage

events

are

actually

posted

to

a

kafka

stream,

so

anybody

can

just

plug

in

any

consumer

of

choice

for

data

collection

and

analytics

operators

can

also

wire

third-party

analytics

tools,

like

google

analytics

and

fixed

panel

to

the

react

app.

So

please

check

out

this

doc

for

more

details

on

how

to

do

that.

B

Unfortunately,

for

now

you

have

to

fork

the

repo,

but

we

are

going

to

work

on

making

that

through

config

all

right,

so

moving

on,

let's

go

on

to

the

the

end-to-end

flow,

so

you

can

see

each

component

here

are

existing

components

in

our

data

hub

graph,

so

our

reactive,

so

that

we

have

the

user

mark

here,

as

the

user

interacts

with

the

react

app,

it

calls

it

sends

over

the

events

through

the

track

endpoint

in

the

front

end.

So

the

front

end

collects

these

events

and

posted

to

a

kafka

topic.

B

B

It

will

listen

to

data

hub

usage

event,

v1

and

process

these

events

that

come

through

so

note

that

these

events

are

not

hydrated.

So

what

we

do

like,

for

example,

a

user

earn

comes

in.

We

want

to

know

the

details

about

this

user

to

do

that,

we

go

back

to

gms,

so

we

call

the

remote

dow

local

dao

to

get

the

details

about

the

entities,

so

we

hydrate

the

entity

features

and

we

package

it

into

a

single

document

which

we

send

over

to

the

data

hub

usage

event.

B

Data

stream

on

elasticsearch,

so

elasticsearch

connects

all

these

usage

events

and

front

end.

So

we

created

a

new

analytics

controller

which

sends

over

filter

and

aggregate

queries

to

the

last

search

data

hub

usage

event,

data

stream,

where

it

kind

of

says

it,

tries

to

count

and

do

a

bunch

of

time

series

analytics

and

things

like

that

to

build

some

bare

bone,

charts

and

tables

that

power

our

analytics

service

and

that

is

fed,

backed

into

our

react

app

at

the

end.

A

B

B

You

can

see

the

search

event

that

came

in

so

it

says

it

queried

with

a

query

sample

as

well

as

search

view,

events

that

talk

about

how

many

results

was

in

the

search

page

and

as

we

click

on

it.

Each

of

these

actions

that

you

take

inside

the

entity

page

inside

the

search

page

inside

the

browse

page

will

translate

to

a

certain

usage

events

that

comes

in

so

now.

These

usage

events

are

all

sent

over.

You

can

see

that

the

elastic

search

connector

is

sending

the

bulk

request

to

our

data

stream

there.

B

So

once

we

go

to

the

analytics

beta,

what

we

do

is

each

of

these

components

are

configurable

inside

the

code.

In

the

data

hub

front

edge,

we

have

highlight

cards,

we

have

time

series

charts

and

then

we

have

tables

as

well

as

stacked

bar

charts.

So

we

created

these

main

four

different

visual

cards

that

we

want

to

support,

and

then

we

implemented

all

of

them.

So

you

can

see

here.

This

is

searches

last

week

and

then

top

search

queries

that

come

in

you

can

see

sample.

B

There

was

top

search,

five

searches

as

well

as

section

views

across

different

entity

pages,

so

we

have

lineage,

we

have

ownership,

we

have

schema

and

so

on

also

actions

by

entity

type.

You

can

see.

We

have

update

tags

here.

I

updated

a

few

yesterday

to

just

show

you

guys,

and

then

we

have

top

view

data

sets.

Of

course

we

will

be

continuing

to

add

more

charts

here.

So

it'd

be

great

if

we

could

get

feedback

here,

so

I

wanted

to

go

over

the

charts

that

we

see

for

our

own

demo.datahub

page.

B

So

you

can

see

we

have

amazing.

We

have

421

weekly,

active

users,

crazy

thanks

for

using

the

demo.

You

can

see

the

searches

that

are

happening

as

well

as

the

various

search

queries.

So

we

can.

We

can

gather

a

lot

of

signals,

but

what

users

are

doing

on

this

platform

by

just

looking

at

these

few

charts?

C

Yeah

one

thing

I'll

add

here:

dexter:

could

you

actually

just

display

open

your

or

hide

the

terminal

in

there,

so

you

can

see

like

a

full

s,

a

full

view

of

the

dashboard

perfect.

Thank

you.

So

what

we're

trying

to

do

is

like

find

find

ways

to

contextualize

not

only

activity,

but

also

where

is

their

opportunity

to

really

like

leverage

the

power

of

of

data

hub

right.

C

So,

if

we're

thinking

about

the

the

number

of

data

sets,

so

we

have

92

data

sets

and

half

of

them

have

owners

assigned

that's

great.

So

what

that

means

is

that

we're

halfway

towards

having

fully

documented

data

sets

within

data

hub

right?

So

it's

it's

not

even

just

the

what

are

people

looking

at,

but

what

are

people

looking

at

that's

specific

to

the

value

that

data

hub

is

driving

the

other

part,

and

so

speaking,

from

my

perspective

as

a

product

manager

managing

this

type

of

tool?

C

I

want

to

understand

how

do

I

decide

where

to

invest?

My

team's

energy

are

people

only

looking

at

data

sets.

Are

they

looking

at

pipelines

now,

and

maybe

our

pipelines

aren't

well

documented

or

in

the

actions

that

they're

taking?

Are

they?

Are

they

adding

tags?

Are

they

manage

changing

owners?

Are

they

looking

at

ownership,

detail,

lineage,

etc?

C

That

way,

I

can

start

to

narrow

down

where

to

have

my

team

and

my

stakeholders

start

to

and

invest

in

having

more

robust

and

and

more

meaningful

metadata

within

there.

The

other

thing

that

we're

thinking

about

is

you

know.

Looking

at

the

terp

terp

is

not

a

word

excusing.

The

top

search

queries

to

understand

like

what

are

people

even

looking

for

in

here?

A

Cool

thanks

a

lot

maggie

and

dexter

yeah.

It

was

a

great

experience

and

I

was

talking

to

young-

and

you

know,

nick

and

ben

over

on

the

linkedin

side

as

well,

and

they've

actually

built

a

very

complex

and

very

expensive

analytics

capability

on

the

product

stream

as

well,

so

at

a

future

date.

We

can

get

into

that

as

well.

That

includes

sessions

and

a

lot

of

deeper

analytics.

So

it's

pretty

cool

what

people

are

doing

with

it

all

right.

So

coming

back,

we

are

running

pretty

late.

A

So

what

we

have

today

is

a

very

interesting

talk

from

sharath

he's

actually

in

idaho,

backpacking

or

something

like

that.

He

drove

a

thousand

miles,

but

he

was

dedicated

enough

to

pre-record

a

talk

for

all

of

you,

so

I'm

gonna

play

that

right

away

and

he's

on

the

meeting.

So

he'll

be

around

to

answer

questions.

D

Sounds

hi

folks,

my

name

is

sharath

and

I'm

here

to

talk

to

you

about

data

hub

deployment

on

gcp

and

before

we

dive

into

it.

Maybe

a

brief

introduction

about

myself.

My

name

is

shirat.

I

am

a

data

engineer

at

confluent,

I've

been

one

of

the

first

teas

on

in

confluent,

and

that

means

I

helped

set

up

the

tech

stack.

The

data

stack

help

build

out

some

of

the

tools

that

we

use

within

the

data

science

and

engineering

teams

yeah.

So

let's

talk

about

today's

agenda.

D

So,

let's

get

into

it

so

at

confluent

our

data

warehouse

stack

is

basically

if

we

are

a

google

workshop

right,

so

we

use

bitquery.

As

our

data

warehouse,

we

use

cloud

composer,

which

is

airflow

as

an

orchestrator

for

high

volume

jobs.

We

use

pi

spark

on

data

procs,

which

is

again

a

managed

cluster

on

gcp

and

essentially

within

bigquery.

We

have

multiple

layers.

Think

of

these.

As

schemas

landing

is

like

a

layer.

D

D

When

we

talk

about

onboarding

right

now,

we

have

the

data

warehouse

set

up

in

a

way

that

all

of

the

lineage

that

exists

within

the

data

warehouse,

which

is

big,

query,

will

be

existing

or

will

be

seen

in

data

hub.

But

eventually

we

want

to

onboard

various

engineering

teams

who

have

kafka

streams

as

their

input,

who

could

have

their

own

silo

databases

and-

and

another

example,

is

right.

Now

we

have

data

sources

where

an

engineering

team

produces

data

into

a

kafka

topic.

D

We

use

a

connector

to

push

the

data

into

bigquery

and

then

there

are

multiple

layers

like

you

see

that

transformations

happen

and

you

see

a

final

table

that

could

be

real

time

real

plus

a

batch,

or

it

could

be

a

batch

processing

over

real

time,

but

this

lineage

would

help

us

essentially

identify

okay.

This

reporting

table

has

a

source

of

this

kafka

stream

and

not

just

the

internal

data

warehouse

tables,

but

also

the

source

of

the

stream

that

we

have

that

you

are

from,

and

the

third

part

is

visibility.

D

So

why

did

I

have

so?

We

did

look

at

a

few

options.

I

looked

at

lifts

amundsen.

There

was.

We

also

looked

at

one

of

the

proprietary

metabases,

so

we

used

metabase

as

a

bi

tool

and

we

wanted

to

derive

a

lineage

using

database,

but

that

had

very

high

limitations,

and

you

know

data

hubs,

architecture

being

having

having

kafka

in

their

architecture.

We

really

want

to

leverage

this

and

make

sure

that

we

use

confluence

kafka

and

data

hubs,

intel

architecture

to

really

power.

D

This

tool

that

can

help

drive

not

only

the

metadata

but

also

real-time

changes

and

alerts.

That

could

happen

over

this

real

time.

So

one

of

the

other

projects

that

we're

doing

is

to

build

streaming

applications,

and

I

think

this

is

a

good

example

of

how

streaming

applications

can

blend

in

with

one

of

these

metadata

tools,

to

give

us

good

info

just

to

not

maybe

not

to

dive

too

deep.

But

something

else

that

I

think

is

important

is

a

lot

of

data.

Warehouses

always

fall

back

on

things

like

okay.

D

D

So

before

we

dive

into

the

deployment

itself,

just

a

high

level

overview,

so

the

first

step

is

using

helm,

charts

with

deployed

data

hub,

and

it

really

took

us

less

than

30

minutes

to

do

that.

The

second

is

using

gcp.

We

deployed

ingress

services.

A

third,

a

simple

cron

job

to

load

the

metadata

into

bigquery

into

data,

big

queries

metadata

into

data

hub,

and

this

cron

job

is,

as

is

because

we

know

that

the

tables

and

columns

don't

change.

D

Very

often,

we

have

like

a

weekly

crown

job

that

pushes

this

data

and

then

the

custom

tag

that

helps

us

emit

all

of

the

lineage

data

into

github.

So

let's

maybe

get

into

the

data

hub

deployment,

so

just

quickly

going

over

the

environment

right.

So,

as

we

said,

we

are

a

gcp

workshop

at

confluence

data

warehouse

internal

data

team

is

the

gcp

uses

all

of

gcp

services,

so

it

only

made

sense

for

us

to

deploy,

manage

kubernetes

gcps

manage

kubernetes

service

use,

use

that

to

deploy

data

hub.

D

So

before,

and-

and

so

one

of

the

things

that

we

we

discussed

is

the

first

step

of

this

is

basically

when

you

go

to

data

hub

kubernetes

and

you

have

the

quick

start

guide

there,

we

had

to

do

very

little,

nothing

to

very

little

changes

to

that

guide.

Just

some

infrastructure

changes

where

we

had

to

increase

and

decrease

some

of

the

preloaded

values

for

the

cpu

and

the

memory

space.

But

apart

from

that,

I

think

everything

else

that

we

used

from

the

charts

helped

us

really

deploy

data

hub

on

gcp

kubernetes

seamlessly.

D

Once

it

was

deployed,

there

were

two

things

that

we

wanted

to

do

that

we

required

to

do.

One

is

to

run

the

ui

and

the

second

is

to

run

a

gms

service

ingress.

That

would

help

us

connect

to

this

data

hub

service

to

emit

any

data,

so

google

provides

or

gcp

provides

a

good

way

to

create

english

services.

D

The

ip

address

would

be

used

to

interact

with

the

ui,

and

the

second

would

be

used

to

interact

with

the

airflow,

which

is

cloud

composer.

So

going

back,

we

deployed

our

our

kubernetes

using

helm,

charts

into

gcp.

We

created

two

ingress

services,

which

is

again

just

two

clicks

away.

The

next

step

is

to

run

a

simple,

tiny,

cron

job

that

will

push

all

of

the

data

metadata

into

bigquery,

and

this

cron

job

could

be

scheduled.

However,

you

feel

your

database.

D

How

often,

if

you

feel

your

database

is

refreshed

and

the

final

step

is,

I

think,

how

do

you

operationalize

your

lineage

data

into

data

hub?

How

do

you

make

sure

that

your

lineage

data

is

captured

in

data

hub?

So

I

think

I'm

going

to

take

some

spa

time

and

and

maybe

walking

through

some

of

these

things

that

we

set

up,

but

before

that

just

a

few

components

that

are

required

for

this.

So

we

figured

that

gcp's.

Audit

query

log

is

a

very

good

source

of

understanding

the

lineage

of

data.

D

That

means

every

query

that

is

run

against.

Bigquery

is

captured

in

the

audit

log.

So

any

query

where

a

source

table

is

a

table

within

the

information

schema

and

the

destination

table

is

also

a

table

within

the

information

schema.

Any

queries

with

this

that

match

this

condition

is

safe

to

say

that

it's

a

transformation

or

record

in

the

audit

log

for.

D

Then

the

audit

log

would

capture

one

row

where

you

would

have

the

destination

table

as

t1

and

the

source

tables

as

s1

s2

s3,

and

when

you

think

about

data

hub,

that's

exactly

what

it

up

is

doing.

It's

trying

to

take

your

source

tables

and

map

it

to

your

destination

tables

and

bigquery

audit

logs

gives

this

out

of

the

box.

D

You

don't

have

to

worry

about

which

sql

script

runs,

which

of

the

tables

are

which

sql

script

is

responsible

for

loading

which

of

these

tables

and

the

steps

you

need

to

get

this

audit

log

into

bigquery

is

also

pretty

simple.

You

have

the

gcp

lobs

logs

in

the

logging

again

as

a

service

in

gcp,

a

logs

router

can

be

set

up

to

push

these

logs

into

bigquery.

There

again

has

basically

nested

rows

in

bigquery

and

we

use

cloud

composer

again

airflow

to

transform

this

data

and

push

it

to

data

hub

as

emitters

right.

D

D

This

query

essentially

breaks

down

the

audit

log

into

source

and

destination,

just

two

cables

that

do

two

columns

just

source

and

destination

so

for

every

destination.

What

are

the

different

source

tables

once

you

have

this

information

you're?

Basically,

what

you're

trying

to

do

is

you

use

the

emitter

task

to

use

this

information

embedded

embed

each

of

these

source

or

destination

tables

into

an

emitted

task

and

create

a

tag

out

of

it

and

use

this

stack

to

push

the

data

into

a

data

hub?

D

So

so

the

next

step

was

basically

to

take

all

of

this

log

data

create

a

sort

of

a

source

and

destination

hierarchy

and

then

using

the

template

that

is

given

by

data

hub,

create

an

emitter

task

and

using

that

emitter

task

we

create

a

dag

that

is

then

executed

or

scheduled.

However,

frequently

you

want

so,

let's

maybe

we

take

a

quick

look

at

the

data

hub

emitters

itself

right.

D

So

when

you

think

about

the

query,

this

is

the

query

that

we

were

talking

about

how

what

is

the

best

way

to

extract

this

query

in

a

way

extract

the

log

in

a

way

that

we

get

source

and

destinations

once

we

have

that

data.

All

we

are

doing

is

creating

upstream

and

downstream

funds

or

urns,

basically

trying

to

say

that

for

every

destination

table,

let's

build

these

upstream

and

downstream

dependencies

and

push

this

entire

task

as

a

data

hub

emitter

operator,

so

going

back

so

that

that's

exactly

what

we're

doing

here.

D

We

first

deploy

data

hub

on

gcp

using

helm,

charts

that

are

using

charts

that

are

already

provided

in

the

startup

quick

start

guide.

The

next

would

be

to

create

these

two

english

services,

which

is

a

two-step

process,

just

to

click,

select

this

service

and

create

custom

services,

and

the

next

is

to

use

the

template.

That's

already,

given

as

an

example

to

load

bigquery

metadata.

The

next

is

to

consume

the

logs

the

audit

logs

and

create

emitter

tasks

that

are

then

pushed

into

data

hub

for

the

lineage.

D

So

our

current

we're

taking

all

of

this

into

consideration

with

our

current.

Our

current

status

is

this.

We

have

data

deployed

in

our

sandbox.

We

don't

have

any

of

the

fully

managed

services.

For

example,

we

don't

have

cloud

sql

or

kafka

confluent

using

all

of

the

internal

services,

that

data

hub

is

packaged

with

what

did

do.

What

we

did

do

is

enable

oidc

connection,

authentication

using

octa

and

and

any

user

who

wants

to

access

data

hub

now

goes

through

octa.

D

So

there

is

that

step

of

security

as

well,

and

the

next

part

is

merited

and

linear.

So

this

is

pretty

much

automated,

so

we

have

metadata

and

lineage

almost

automated

into

our

sandbox

environment,

and

maybe

this

is

a

good

opportunity

for

us

to

take

a

look

at

how

this

data

looks

in

the

current

format

in

our

in

our

sandbox

environment.

Right.

So

when

we

look

at

the

data

here,

we

have

a

lot

of

different

tables

and

for

this

purpose

of

this

demo,

I've

just

selected

one

table

and

this

particular

table

has

a

hierarchy.

D

So

all

of

this

rich

data

set

lineage

can

help

when,

when

a

new

user

is

trying

to

understand

where

the

data

into

a

particular

table

flows

and

need

not

worry

about,

the

code

need

not

worry

about

what

the

sql

does

at

a

high

level

can

start

looking

at

where

these

tables

populate

and

support,

and

and

and

and

essentially

push

the

data

to

and

from

yeah

and

the

next

steps.

I

think

for

us.

The

next

steps

is

to

productionalize

our

current

setup,

make

sure

that

we

have

the

right

versioning

in

our

deployment.

D

We

also

want

to

make

sure

that

we

have

our

metadata

and

lineage

productionized

in

a

way

that

any

time

there's

a

new

change.

It's

automatically

pushed

to

data

hub.

We

want

to

start

using

managers

like

cloud

cloud

sql

for

mysql

or

even

confluent

kafka

to

use

instead

of

the

data

service

kafka,

that's

already

installed

as

a

part

of

the

charts.

Also,

we

want

to

make

sure

that

the

metadata

that

we

emit

is

rich.

So,

for

example,

we

want

to

add

more

column,

metadata

column

level

data.

D

We

want

to

add

owners

to

this.

We

also

want

to

add

processing

information

right

now.

We

don't

use

inlets

and

outlets,

so

we're

trying

to

see

what's

the

best

way,

to

make

sure

that

we

include

the

airflow

processing

information

into

the

edges.

So

then

you

have

the

complete

view

of

which

is

the

table

source

table

which

is

the

destination

table

and

which

processing

setup

really

helped

push

this

data

into

a

bigquery

yeah.

A

Thanks

a

lot,

I

wanted

to

make

sure

that

we

had

time

to

get

into

the

no

no

code

metadata

piece

from

john

as

well.

Sharath

is

on

the

call.

So

if

you

have

any

questions

about

kind

of

his

deployment

on

gcp

and

how

he's

setting

it

up

at

confluent,

definitely

being

him

either

here

or

on

slack,

but

thanks

a

lot

charles

for

sending

this

over

ahead

of

time

and

being

able

to

attend,

even

though

you

were

remote

all

right

with

that,

I

will

move

things

over

to

john.

E

Hey

guys,

can

everyone

see

my

screen:

yeah,

okay,

awesome,

yeah,

thanks

trishanka

and

thanks

dexter

and

sherat.

Those

were

these

are

really

informative

and

great.

Today,

I'm

going

to

talk

a

little

bit

about

a

project.

The

accrual

team

has

been

working

on

in

the

past

few

weeks

that

we're

calling

no

code

metadata.

E

I'm

going

to

go

through

sort

of

the

problem,

we're

looking

to

solve

the

solution.

We

came

up

with

and

a

little

bit

more

deep

technical

details

around

that,

as

well

as

a

demo

fit

in

there

a

little

bit

as

well,

so

with

that,

let's

get

right

into

it.

So

what

are

the

what's?

The

problem

we're

trying

to

solve

with

no

code

metadata?

Well,

the

problem

is

that

adding

an

entity

to

data

hub

today

is

pretty

hard.

So

these

are

three

pr's.

E

They

all

add

two

entities

each

and

you

can

see

that

the

number

of

files

that

needs

to

change

just

to

add

them

to

the

gms.

So

the

backend

layer

which

we're

going

to

focus

on

here

today

is

about

50

files

right.

So

we'll

talk

about

two

things,

one

is.

The

first

is

just

the

sheer

complexity

of

adding

that

entity

today.

It

requires

greater

than

25

files

for

entity

in

the

complex

case,

in

the

average

case,

it's

about

25

files

and

those

files

consist

of

models.

E

So

these

are

snapshots

and

aspects

that

we're

all

familiar

with

probably

search

documents,

relationship

models,

these

wrestling

resource

values,

burns

and

I'm

sure,

I'm

missing

something

here.

Endpoints

we

have

entity

and

optionally

aspect,

endpoint

files,

which

are

all

separate.

We

have

clients

which

are

just

wrappers

to

actually

talk

to

those

wrestling

resources.

You've

created

the

endpoints.

E

We

have

these

things

called

data

access,

object

factories,

so

each

entity

has

its

own

search,

local

browse

and

graph

dial,

which

allow

you

to

talk

to

the

persistence

layer

and

as

such,

we

need

each

entity

to

have

a

configured

factory

which

is

to

create

these.

Like

strongly

typed

factories,

we

have

index

filters

which

are

effectively

just

lambdas

that

take

metadata

change

events

and

turn

them

into

updates

against

the

search

index

and

the

graph

index.

E

E

We

found

that

on

average

it

would

take

one

to

two

weeks

for

a

new

data

hub

contributor

to

actually

get

up

to

speed

with

all

the

abstractions

and

add

entities,

and

that's

not

even

counting

the

back

and

forth

that

occurs

on

the

pr

after

you've

added

50

files,

which

can

span

up

to

a

month,

we've

seen

so

it's

just

too

hard

right.

That's

the

problem

we're

trying

to

solve

with

the

no

code

movement.

That's

where

the

no

code

movement

comes

into

play.

We

started

to

think

about.

E

How

can

we

make

the

process

of

adding

an

entity

much

simpler?

And

specifically,

we

started

to

rally

around

this

goal

that

it

should

take

no

more

than

15

minutes

to

add

or

extend

a

datahub

entity

at

the

backend

gms

layer

and

what

we

wanted

to

be

in

scope

are

the

ability

to

read

and

write

of

the

new