From YouTube: Sneak Peek!! UI-based Metadata Ingestion

Description

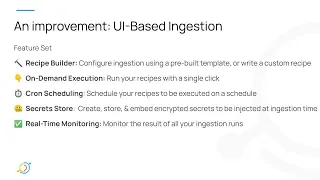

John Joyce (Acryl Data) gives a preview of upcoming functionality to ingest metadata into DataHub via the UI!

Learn more about DataHub: https://datahubproject.io

Join us on Slack: http://slack.datahubproject.io

Follow us on Twitter: https://twitter.com/datahubproject

Today, I'm going to talk a little bit about a feature we've been working on in the past month. It's called UI-based ingestion, and I'm just going to get right into it. First, a recap of the current state of the metadata ingestion framework. To ingest batch metadata into DataHub, as most of you are probably aware, it's kind of a three-step process.

The first step is you install the DataHub CLI from pip. The second step is you define a YAML source recipe, where you say which source you want to pull from and the configurations about that source: how to connect to it, and which tables and which databases to pull out of it. And then finally, you run this `datahub ingest` command in the CLI.
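The three steps above can be sketched roughly as follows. This is only an illustration: the talk does not show the recipe contents, so the `mysql` source type, the connection values, and the `datahub-rest` sink pointing at `http://localhost:8080` are assumptions based on a default local quickstart, and `render_recipe` and `cli_steps` are hypothetical helper names. The recipe is rendered as JSON, which YAML parsers generally accept, to keep the sketch free of third-party dependencies.

```python
import json

# Hypothetical recipe for illustration; the source type and all
# connection values are assumptions, not taken from the talk.
recipe = {
    "source": {
        "type": "mysql",
        "config": {
            "host_port": "localhost:3306",
            "database": "datahub",
            "username": "datahub",
            "password": "datahub",
        },
    },
    "sink": {
        "type": "datahub-rest",
        "config": {"server": "http://localhost:8080"},
    },
}


def render_recipe(recipe: dict) -> str:
    """Serialize the recipe as JSON text, which is also acceptable as YAML."""
    return json.dumps(recipe, indent=2)


def cli_steps(recipe_path: str) -> list:
    """Return the shell commands for steps one and three of the batch workflow."""
    return [
        "pip install acryl-datahub",
        f"datahub ingest -c {recipe_path}",
    ]


if __name__ == "__main__":
    print(render_recipe(recipe))
    for command in cli_steps("recipe.yml"):
        print(command)
```

Saving the rendered text as `recipe.yml` and running the two printed commands corresponds to steps one through three; a given source may also need its own plugin installed.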

So on the right side here, you can see that process in a picture: we have the config, and then we run that `datahub ingest` command. This is all well and great. It actually has a lot of good things about it, and some not-as-good things. So I'm just going to talk about what we view as the pros and cons of the current metadata ingestion framework, batch metadata ingestion in particular. You know, it's simple.

We wanted to simplify ingestion such that users didn't need to code to ingest, and ingestion should take less than five minutes after you've set up DataHub: pretty much quickstart, then ingest some data within five minutes. But we also wanted to keep the good things about the metadata ingestion framework that I called out, and continue to build on top of that as a basic building block, as opposed to reinventing something completely new. If it ain't broken, don't fix it. So what we came up with is a way to do.

A secret store, a way to create, store and embed encrypted secrets that are injected at ingestion time, in DataHub itself. And real-time monitoring, a way to actually monitor your pipelines as they run in real time. So now I'm going to take a step back and do a quick demo of what we came up with in the last month. A disclaimer: this was working this morning, so I'm really hoping it's going to work here. So we have a fresh instance of DataHub.

This is what you'd find if you ran quickstart and went to localhost:9002. In this case, I have a local instance going. You're going to see immediately that we have a new tab up here called Ingestion, so I'm just going to go ahead and click on that. Right now we don't have anything, it's pretty bare, but I'm going to walk through the process of setting up UI-based ingestion through this portal here. I'm going to start by clicking Create new source, and what you'll see is, you know...

So I'm just going to go ahead and start filling it out. I'm going to be connecting to the datahub database, and my username and password is just datahub. And then finally, I'm just going to point this at GMS directly. All right. So the next step, now that I've defined my recipe, is actually scheduling that on a particular cadence. What I'm going to do is schedule it for two minutes from now, so I'm going to say 46.

Done, and now we have a new ingestion source. If you hover over here, it'll show you, you know, "runs at 9:46", but you'll also notice that I have the ability to just go ahead and execute it. So I'm going to go over here and actually execute the source with the new recipe that I've just defined, and what you'll see is the source starts running.

So now, if I go back home, the expectation is that I'll have some data. Yep. All right. Okay, maybe the indices haven't been fully updated yet, but let's go ahead and try to get in here. Yep, so I've got my SQL database loaded in, which is great. Actually, if you come back here, you're going to notice that it's running again, and that's because it's hit that scheduled 9:46 run. So you can see there are two ways to execute a source.

You know, it's not the case that you really want to put your sensitive information into this file directly in many cases, because this file itself isn't necessarily encrypted. So what we've come up with is a system within DataHub to define secrets. What I'm going to do is come to the Secrets tab and click Create new secret. This is a place where I can create a named value that will be encrypted by DataHub and then resolved at ingestion time.
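One way to picture "resolved at ingestion time" is placeholder substitution: the stored recipe carries only a secret's name, and the value is swapped in just before the run. The sketch below assumes a `${NAME}` placeholder syntax and uses a plain dictionary as a stand-in for DataHub's encrypted secret store. `resolve_secrets`, `SECRET_STORE`, and `MYSQL_PASSWORD` are hypothetical names for illustration, not DataHub APIs.

```python
import re

# Stand-in for the encrypted secret store (an assumption for this sketch);
# a real store would keep values encrypted at rest and decrypt on demand.
SECRET_STORE = {"MYSQL_PASSWORD": "datahub"}

# Matches placeholders of the assumed form ${NAME}.
PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def resolve_secrets(value, secrets):
    """Recursively replace ${NAME} placeholders in strings with secret values."""
    if isinstance(value, dict):
        return {key: resolve_secrets(item, secrets) for key, item in value.items()}
    if isinstance(value, list):
        return [resolve_secrets(item, secrets) for item in value]
    if isinstance(value, str):
        def lookup(match):
            name = match.group(1)
            if name not in secrets:
                raise KeyError(f"unknown secret: {name}")
            return secrets[name]
        return PLACEHOLDER.sub(lookup, value)
    return value


# The recipe as it would be stored: the password never appears in plain text.
stored_recipe = {
    "source": {
        "type": "mysql",
        "config": {"username": "datahub", "password": "${MYSQL_PASSWORD}"},
    }
}

# Resolution produces a new recipe and leaves the stored one untouched.
resolved = resolve_secrets(stored_recipe, SECRET_STORE)
```

Because resolution builds a new structure, the stored recipe keeps only the placeholder, which is the property that makes it safer to display or share.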

One other thing I'll call out is that you can, of course, cancel running instances of the job as well. I know some jobs can take a really long time or can get hung sometimes, so you can go ahead and cancel that using the Cancel button there. And you can see that it's failed because we've failed to properly set it up, and if you go into it, you'll actually see some debugging information about why that source failed. I think it'll eventually say that it can't connect to, you know, localhost, no connection.

So this is really useful for us too, as the central team. When you're running these recipes, it's going to be really easy for you to share your screen or send these to us, for us to take a deeper look at why things maybe aren't working for you.

The final thing I'll show is just the cancel, which I talked about. Hopefully you get a run. Okay, so it's running. I'm just going to try to cancel, and note that it won't remove any metadata that's already been ingested. And there you go, I went ahead and canceled that one. So that's pretty much the ingestion framework. You can actually see, in this case with the cancellation, we got through some of the installation steps, but we didn't actually ingest anything. So you'll have a little bit of detail in the case of the cancellation as well. But that's pretty much it, I think, for the demo. So I'll go back to this last.

All right, just a quick overview in pictures of what we built, for the people who weren't able to make this session. And now, as usual, I'll talk a little bit about how this actually works behind the scenes, for the technical audience who is interested. How this works is we have a few new critical components that we introduced along with, of course, the UI screens that you saw.

We have, first of all, an embedded scheduler that we added into the metadata service. It is simply responsible for listening to changes in your ingestion source schedules and then actually scheduling them locally, on a local thread, using a cron scheduler. What this is doing is just running and executing ingestion requests on a schedule. This means that if you take down the metadata service, when it comes back up, if you've missed a scheduled ingestion, it'll simply ignore it and pick up where the schedule left off.
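The missed-run behavior described above can be illustrated with a small sketch. It is not DataHub's scheduler: it supports only a hypothetical "daily at HH:MM" schedule rather than full cron syntax, `next_run` is an invented helper, and the dates are arbitrary. The point is that the next fire time is computed from the current time, so a run that fell inside a downtime window is skipped rather than replayed.

```python
from datetime import datetime, time, timedelta


def next_run(now: datetime, at: time) -> datetime:
    """Return the first occurrence of the daily time `at` strictly after `now`."""
    candidate = datetime.combine(now.date(), at)
    if candidate <= now:
        # Today's slot has already passed (or is exactly now): use tomorrow's.
        candidate += timedelta(days=1)
    return candidate


# Hypothetical schedule: run every day at 09:46.
schedule = time(hour=9, minute=46)

# Service is up at 09:44, so the 09:46 run is still ahead.
before_outage = next_run(datetime(2022, 1, 1, 9, 44), schedule)

# Service was down across 09:46 and comes back at 10:30.
# The schedule is recomputed from "now"; the missed run is not backfilled.
after_restart = next_run(datetime(2022, 1, 1, 10, 30), schedule)

if __name__ == "__main__":
    print("next run before outage:", before_outage)
    print("next run after restart:", after_restart)
```

Replaying missed runs would instead require persisting the last successful fire time and comparing it against the schedule on startup, which the talk says the embedded scheduler does not do.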

So we're really excited about this, and the actions framework is going to be much broader than just ingestion. We're envisioning it to be a place where we can define actions on, you know, tag changes, term changes, schema changes and the like.

Just a components overview again: we have this ingestion scheduler, an embedded cron scheduler inside of the DataHub metadata service. This is an important point, because it means that we don't have to integrate with something like Airflow or Prefect or something else as an add-on to DataHub. This is a native capability.

We have an actions framework which, as I mentioned, responds to changes in the metadata graph. We have an ingestion action, which just actually runs the ingestion, and then we have the executor, which is actually the worker, basically.

Finally, I'll wrap up by talking about the vision for the future on this set of features. In particular, we would love to clean up some of the configs that you saw us having to define in the recipe builder. Specifically, the sink configurations aren't really that useful, because we already know where your DataHub instance lives.

So we want to make that a little bit easier. In addition to that, we'd like to put a form or a more friendly UI on top of the recipe builder experience, with the ability to switch back and forth between the normal YAML-based editor and a more user-friendly form. We'd like to add the ability to test your connection to a data source directly inside of the recipe builder, so that you can get that quick feedback without having to go and click Execute and see that something's wrong.

We'd like to actually have in-flow secret creation, so as you're building that recipe, being able to just create a secret and embed that secret right inside that flow. And then finally, based on the demand from the community, we would love to introduce some sort of controls for rolling back ingestion runs into the UI, with the obvious upsides of that in the case of, you know, bad ingestions.

All right, and I just want to make one last call to action: help the core team prioritize these features. You can help us by reaching out to us on Slack, letting us know what you like and what you don't like about it, and, most useful of all, just trying it out when it's released, trying to build some flows on top of it and really battle-testing the feature.