►

From YouTube: No Code Metadata: May 27 2021 DataHub Community Townhall

Description

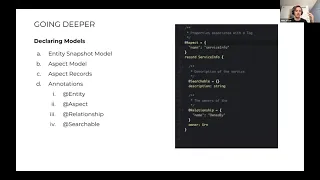

John Joyce (Acryl Data) presents a tech deep dive on the new support for no code metadata modeling in DataHub that is going to be released as part of release 0.8.0

A

Wanted

to

make

sure

that

we

had

time

to

get

into

the

no

no

code

metadata

piece

from

john

as

well.

Sharat

is

on

the

call.

So

if

you

have

any

questions

about

kind

of

his

deployment

on

gcp

and

how

he's

setting

it

up

at

confluent

definitely

ping

him

either

here

or

on

slack,

but

thanks

a

lot

chad

for

sending

this

over

ahead

of

time

and

being

able

to

attend,

even

though

you

were

remote

all

right

with

that,

I

will

move

things

over

to

john.

B

Hey

guys,

can

everyone

see

my

screen:

yep,

okay,

awesome,

yeah,

thanks

trishanka

and

thanks

dexter

and

trout.

Those

were

these

are

really

informative

and

great.

Today,

I'm

going

to

talk

a

little

bit

about

a

project

that

the

akron

team

has

been

working

on

in

the

past

few

weeks

that

we're

calling

no

code

metadata,

I'm

going

to

go

through

sort

of

the

problem,

we're

looking

to

solve

the

solution.

B

We

came

up

with

and

a

little

bit

more

deep

technical

details

around

that,

as

well

as

a

demo

fit

in

there

a

little

bit

as

well,

so

with

that,

let's

get

right

into

it.

So

what

are

the

what's?

The

problem

we're

trying

to

solve

with

no

code

metadata?

Well,

the

problem

is

that

adding

an

entity

to

data

hub

today

is

pretty

hard.

So

these

are

three

pr's.

They

all

add

two

entities

each

and

you

can

see

that

the

number

of

files

that

needs

to

change

just

to

add

them

to

the

gms.

B

So

the

backend

layer

which

we're

going

to

focus

on

here

today

is

about

50

files

right.

So

we'll

talk

about

two

things,

one

is.

The

first

is

just

the

sheer

complexity

of

adding

that

entity

today.

It

requires

greater

than

25

files

for

entity

in

the

complex

case,

in

the

average

case,

it's

about

25

files

and

those

files

consist

of

models.

B

B

We

have

these

things

called

data

access,

object

factories,

so

each

entity

has

its

own

search,

local

browse

and

graph

dial,

which

allow

you

to

talk

to

the

persistence

layer

and

as

such,

we

need

each

entity

to

have

a

configured

factory

which

is

to

create

these.

Like

strongly

typed

factories,

we

have

index

filters

which

are

effectively

just

lambdas

that

take

metadata

change

events

and

turn

them

into

updates

against

the

search

index

and

the

graph

index.

B

B

We

found

that

on

average

it

would

take

one

to

two

weeks

for

a

new

data

hub

contributor

to

actually

get

up

to

speed

with

all

the

abstractions

and

add

entities,

and

that's

not

even

counting

the

back

and

forth

that

occurs

on

the

pr

after

you've

added

50

files,

which

can

span

up

to

a

month,

we've

seen

so

it's

just

too

hard

right.

That's

the

problem

we're

trying

to

solve

with

the

no

code

movement.

That's

where

the

no

code

movement

comes

into

play.

We

started

to

think

about.

B

How

can

we

make

the

process

of

adding

an

entity

much

simpler?

And

specifically,

we

started

to

rally

around

this

goal

that

it

should

take

no

more

than

15

minutes

to

add

or

extend

a

data

hub

entity

at

the

backend

gms

layer

and

what

we

wanted

to

be

in

scope.

Are

the

ability

to

read

and

write

the

new

entity

using

a

rest

api,

the

ability

to

define

searchable

fields

that

are

indexed

in

the

search

index

and

to

define

sort

of

outward

graph

edges

coming

coming

from

that

entity.

B

B

We

wanted

it

to

be

declarative,

which

means

you

know

you

shouldn't

have

to

write

any

a

java

code

or

imperative

code

at

all,

ideally

or

at

least

minimize

that

requirement.

The

second

thing

is

we

wanted

this

to

be

extensible,

because

we

are

going

to

hopefully

move

towards

a

more

declarative

world.

We'll

have

a

dsl.

B

So

I'm

going

to

get

out

of

the

slides

here

and

go

over

to

this

town

hall,

demo

dock.

I

have

here

and

we're

going

to

start

with

just

modeling

an

entity

so

we're

going

to

imagine.

We

want

to

model

a

an

entity

representing

a

service

right.

So

we've

talked

about

this

before

a

service

is

maybe

like

an

online

microservice

that

you

want

to

represent

in

datahub,

and

the

first

thing

we're

going

to

do

in

the

new

world

is

we're

going

to

actually

model

the

aspects

and

aspects

are

just.

B

B

So

we're

saying

this

should

be

a

partially

a

search,

a

field

that

can

be

partially

matched

right.

That's

what

we're

saying

here

and

then

we're

saying

we

also

want

to

support

autocomplete

queries

against

this

field.

So,

if

you're

searching

in

the

search

box

on

the

ui,

you

should

be

able

to

see

auto

complete

results

based

on

the

service

name.

B

The

second

aspect-

we're

defining,

is

just

a

set

of

properties.

In

this

case,

we

just

have

two

simple

properties:

a

description

and

an

owner,

and

you

can

see

again

we

have

a

searchable

annotation

on

description.

In

this

case,

we

don't

provide

any

configurations,

because

we

are

okay

with

the

default

searchable

configurations

which

will

simply

make

this

a

space,

delimited

search

index

field

and

then

the

second.

The

second

field

has

the

next

kind

of

annotation.

B

In

this

case,

we

don't

put

any

bounds

on

what

can

be

on

the

other

side,

but

we

do

support

the

ability

to

add

something

like

this,

where

you

can

say,

entity

types

is

corp

user,

which

will

then

restrict

that

edge

to

only

have

a

user

on

the

other

side.

So

for

this

purpose,

I

think

that

that

makes

sense

here.

B

The

second

thing

we'll

need

to

do

is

just

add

the

aspect

to

union.

This

is

exactly

the

same.

Nothing

has

changed

from

what

happens

today.

We

add

a

service

aspect

which

basically

pulls

together

all

of

these

individual

pieces

of

metadata,

so

it

pulls

together

the

service

key,

the

info

aspect

we

just

defined,

and

then

there's

this

third

thing-

that

I'll

quickly

talk

about

which

is

pretty

fun,

which

is

this

new

browse

paths

aspect,

and

this

is

something

that

the

no

code

initiative

has

allowed

us

to

address.

B

This

ask:

that's

come

up

repeatedly

over

the

past

few

months,

which

is

the

ability

to

customize

browse

paths.

So

browse

paths

are

what

you

see

when

you're

navigating

the

explorer

hierarchy

right,

where

you're

seeing

prod

snowflake

something

else

right

now.

All

of

those

are

generated

based

on

this

hard-coded

logic

that

sits

in

gms.

We've

actually

changed

that

such

that

you

can

provide

custom

browse

paths

as

a

normal

aspect,

as

you

would

any

other

metadata,

and

so

here

we're

actually

adding

that

aspect.

B

So

we

can

provide

browse

paths

and

we'll

demo

exactly

how

you

would

query

for

that

in

just

a

moment

and

the

final

thing,

the

final

big

thing

we

have

to

do

is

define

sort

of

the

entity

model.

This

is

the

snapshot

model

that

everyone's

used

to.

We

have

one

final

new

annotation

that

we

put

on

the

snapshot

model,

which

is

the

entity

annotation

in

the

same

way

that

the

aspect

annotation

allows

us

to

define

a

common

name

for

an

aspect.

B

The

entity

annotation

allows

us

to

define

a

common

name

for

an

entity

which

is

globally

unique.

It

allows

us

to

get

away

from

using

the

fully

qualified

model

name

as

the

de

facto

name

for

the

entity,

which

we

can

talk

about

the

benefits

of

that

in

a

little

while,

but

the

second

piece

of

metadata

we

specify

here

is

that

key

aspect

right

so

here.

B

So

we

have

this

ability

to

sort

of

serialize

the

service

key

and

deserialize

the

service

key

into

a

struct

that

you

can

use

such

that

when

you're

querying

for

an

entity,

you

will

always

get

back

both

in

urn,

which

you

shouldn't

have

to

look

into

and

a

service

key

aspect

which

you

can

then

pull

fields.

Out

of

so

it's

sort

of

this

idea

of

a

virtual

aspect

here

and

then

the

final

thing

is

is

just

adding

that

service

snapshot

to

the

list

of

all

snapshots.

B

This

is

again

nothing

different

from

from

what

we

do

today

and

that's

pretty

much

it

right.

So

four

steps

entity

is

now

added.

We

redeploy

gms

and

we

redeploy

mae

consumer,

a

few

other

containers

and

we

should

be

able

to

now

interact

with

that

entity,

and

so

that's

what

I'm

going

to

show

now.

I've

already

actually

modeled

this

entity

and

redeployed,

my

own

local

versions

to

save

you

guys

some

of

that

awkward

silence

time,

but

we're

going

to

go

ahead

and

try

to

write

an

entity.

B

So

the

first

thing

we're

doing

is

we're

using

a

newly

created

generic

entities

endpoint,

which

allows

you

to

read

and

write

any

entity

in

data

hub

and

we're

going

to

write

into

it.

This

service

snapshot,

so

some

things

to

call

out

are

the

description.

Is

my

demo

service?

There's

an

owner

marked

here.

So

remember:

that's

the

foreign

key

relationship.

B

This

is

going

to

be

indexed

in

search,

and

then

we

have

these

custom

browse

pads

right.

So

my

custom

browse

path.

1,

my

custom

browse

path.

2.

An

interesting

thing

to

note

is:

we've

also

made

it

such

that

you

can

specify

multiple

browse

pads,

so

you

can

actually

access

this

entity

from

multiple

explorer

traversals,

which

I

think

is

a

pretty

cool

feature.

B

So

we're

going

to

go

ahead

into

this

terminal

and

I'm

going

to

go

ahead

and

paste

this

in,

and

hopefully

everything

works.

Okay,

so

nothing

came

back,

which

is

good

means.

There

was

no

exceptions

and

we

can

validate

that

by

reading

that

entity

using

the

exact

same

resource.

So

this

is

the

same

end

set

of

endpoints.

B

All

generic

we'll

go

ahead

and

curl

that,

and

you

can

see

okay

here

we

are

so

we've

got

our

new

our

new

entity

back.

It

has

all

the

data

that

we

we

think

it

should,

and

we

also

can

call

out

that

we

have

that

service

key

coming

back

right.

So

now

you

can

actually

ask

it:

what's

your

name

in

a

much

more

clean,

clean

way

and

we're

going

to

actually

search

right?

So

here

we

have

a

search

endpoint

again,

it's

generic!

B

You

can

search

across

any

entity

in

this

case

we're

going

to

search

across

the

service

entity

in

particular,

and

we're

going

to

pass

in

my

demo.

So

if

you

remember,

our

description

was

my

demo

service

and

we'll

get

back

a

my

demo

service.

So

this

is

saying

yes,

this

matched

your

search.

Query

based

on

this

this

field,

my

demo

service,

then

we're

going

to

go

ahead

and

and

run

the

auto

complete

test.

B

Okay,

we've

got

one

back.

My

demo

service

is

the

browse

entity

there,

so

this

seems

to

be

working

and

then,

finally,

we

have

this.

This

new

endpoint

called

relationships

which

allows

you

to

fetch

arbitrary

relationships

between

data

hub

entities,

and

in

this

case,

what

we're

going

to

fetch

is

an

owned

by

relationship

that

is

actually

incoming

into

a

corp

user

right.

So

basically

we're

trying

to

test

the

inverse

relationship.

B

So

we're

saying

get

me

anything

that

I

own

as

user

one

right,

so

we're

going

to

go

ahead

and

run

that

and

you

can

see

okay,

we

got

the

service

back,

so

the

edge

has

been

indexed

it's

available

via

this

generic

relationship's

endpoint

now,

and

so

that's

basically

the

demo.

That's

the

process

of

adding

a

new

entity

takes,

you

know

less

than

15

minutes.

I

think

I

was

talking

through

it,

so

maybe

15.

B

and

we're

going

to

go

back

to

talking

about

how

we

did

it

so

quickly

I'll

go

over

sort

of

what

the

architecture

used

to

look

like

you

know

in

a

nutshell,

the

theme

is

that

at

every

one

of

these

segments

you

had

to

define

components

on

a

per

entity

basis

right

so

at

the

client

layer

you

had

individual

classes

or

components

for

each

entity.

Data

sets

users

charts

dashboards,

that

propagates

over

to

the

resource.

The

wrestling

endpoint

layer

as

well.

B

You

had

a

different

set

of

endpoints

for

data

sets

users,

charts

dashboards

right

and

inside

of

those

components

you

had

another

layer.

You

had

individual

dowels

for

searching

for

writing

and

reading

to

the

key

value

store

and

to

getting

relationships,

and

these

are

each

specific

to

the

individual

entity.

So

you

can

see

this

just

scales

with

the

number

of

entities.

B

If

you

head

over

to

the

mae

consumer

side,

which

is

responsible

for

updating

the

search

index

and

the

graph

store

as

metadata

changes

come

through

the

system,

you'll

see

that

we

have

the

same

exact

pattern

right.

So

you

have

a

search

builder,

that's

specific!

For

a

data

set.

You

have

a

user

search

builder,

you

have

a

user

graph

builder,

you

have

a

ds

graph

builder,

and

so

it's

just

kind

of

scaling

with

the

number

of

entities.

B

B

So

this

is

the

after

we've

revised

that

such

that

we

have

two

sort

of

generic

sets

of

endpoints

one

is

about

entities,

so

it

provides

the

ability

to

read,

write,

search,

browse

any

entity

in

data

hub.

We

have

the

relationship

endpoints,

which

allows

you

to

effectively

do

the

same,

but

for

edges

right

and

then

we

have

these

service

classes

that

sit

behind

those

and

those

are

the

the

key

they're

kind

of

the

generic

read

and

write

abstractions

over

the

persistence

layer

right.

B

So

we

have

the

entity

service,

we

have

the

search

service,

we

have

the

graph

service

and

then

heading

over

to

the

mae

consumer

side.

We

followed

a

very

similar

pattern

where

we

have

a

generic

entity

search

index

builder.

We

have

a

generic

relationship

graph

builder

and

this

is

all

driven

based

on

those

annotations

in

the

model

right.

So

that's

how

we

compute

what

the

updates

need

to

be,

and

so

I'm

going

to

go

quickly

over

this

because

we

already

talked

through

it.

There's

a

few

slides

just

going

deeper.

B

The

entity

registry

is

really

a

runtime

source

of

truth

for

both

models

and

metadata,

and

it's

what

all

of

the

services

and

the

index

builders

now

depend

on

to

get

information

about

the

model

and

information

about

the

metadata.

So

you

can

think

about

the

storage

configurations,

the

annotations.

How

should

I

build

the

search

index?

How

should

I

build

the

relationship

index

and

a

key

part

about

this

is

that

we

decouple

all

of

the

service

and

index

builder

layer

from

the

metadata

models

and

configuration

itself

such

that

in

the

future.

B

You

know,

maybe

we

aren't

using

pegasus,

maybe

we're

using

protobuf,

maybe

we're

using

something

else,

or

maybe

we're

having

a

completely

dynamic

entity

registry

where

you

can

curl

in

a

new

schema

like

a

database

right

and

from

the

graph

services

perspective

or

from

the

services

perspective.

In

the

indexability

perspective,

nothing

would

change,

which

is

which

is

pretty

exciting,

so

again,

services.

They

all

allow

you

to

do

generic

things

to

each

entity

and

relationship.

B

One

key

point

here

is

they're

decoupled

from

storage

technologies,

so

they're

all

based

on

data

hub

specific

abstractions.

They

are

interfaces

or

abstract

classes.

That

can

be

very

slim,

they're

very

slim

and

can

be

implemented

for

a

multitude

of

different

persistent

stores,

which

provided

the

default

implementations

for

ebean,

which

is

all

the

sql

stores

elastic

on

the

search

side

and

neo4j

on

the

graph

store

side.

So

far,

endpoints

again,

we've

seen

them

generic

endpoints

for

both

fetching

entities

and

relationships

and

then

index

builders.

B

So

one

interesting

thing

here

is

that

these

service

classes,

we've

introduced,

are

actually

used

now,

both

on

the

read

path

so

from

gms,

but

also

on

the

right

path

from

the

index

builders.

So

these

search

service

and

graph

service

are

kind

of

common

across

multiple

parts

of

the

stack

which

makes

kind

of

changing

and

updating

things

much

easier,

and

we

have

one

central

abstraction

that

everything

else

depends

on

so

just

revisiting

the

the

non-functional

requirements

we

talked

about

earlier

talking

about

how

we

may

have

achieved

them

start

with

the

declarative

one.

B

So

we

provide

a

dsl

for

defining

models

as

well

as

storage

configurations

without

any

java

required

right,

no

coding,

we

defined

an

extensible

model

where

it's

easy

to

add

new

indexed

field

types.

So

you

remember

text

partial.

We

saw

earlier

it's

very

easy

to

add

something

new

there.

It's

easy

to

add

new

relationships,

it's

as

easy

as

defining

a

new

relationship,

annotation,

it's

easy

to

plug

in

new

storage,

implementations

and

then

finally,

it's

usable.

B

We

have

build

and

runtime

model

validation,

provided

such

that,

if

you,

when

you

define

your

entity

hierarchy,

you

should

know

at

build

time

if

something

is

wrong

right.

So

if

you

have

a

conflicting

aspect,

name

or

entity

name

or

maybe

you

didn't

define

a

key

for

some

reason.

You'll

know

a

build

time

so

that

we

can

avoid

these

runtime

exceptions

and

then

a

couple

fun

features.

B

We

think,

which

are

configurable

browse

paths

as

well

as

the

moving

away

from

the

requirement

to

have

these

strongly

typed

earn

pdls,

as

well

as

java

classes,

which

is

the

current

requirement,

and

so

just

quickly

going

to

talk

about

the

impact

of

this

initiative.

So

I

think

firstly,

the

biggest

impact

is

just

the

reduction

in

complexity

across

gms.

B

Now

I'm

going

to

talk

a

little

bit

about

where

no

code

goes

from

here,

so

we're

going

to

look

to

actually

move

up

the

stack

right

now.

All

of

the

work

that

you've

seen

is

really

limited

to

the

metadata

platform

layer

so

gms

and

beyond,

but

we

want

to

actually

auto

generate

the

graphql

api

at

the

datahub

frontend

layer,

as

well

as

exploring

the

ability

to

sort

of

gener

dynamically

generate

sort

of

ui

configurations.

B

B

They

do

exactly

what

data

hubs

ui

needs

them

to

do,

but

we

can

certainly

foresee

the

ability

to

add

sort

of

new

capabilities

to

those

apis

as

well,

and

then

I'm

just

going

to

close

by

talking

briefly

about

sort

of

the

vision

of

datahub.

As

we

see

it,

we

really

want

datahub

to

become

this

sort

of

true

metadata

platform,

where

you

can

do

things

like

dynamic

model,

registration

and

storage

configurations.

B

So

we

kind

of

think

of

the

this

having

multiple

kind

of

layers,

where

we

have

the

metadata

storage

engine

responsible

for

access

controls,

index,

building

the

commit

log,

maintaining

that

entity

registry,

and

then

we

have

a

set

of

apis

on

top

both

synchronous

and

asynchronous,

so

kafka

based

and

rest

apis,

and

then

on

top

of

that,

the

client

sdks,

which

can

then

interact

with

all

of

those

and

then

finally

I'll

just

conclude

by

saying

talking

a

little

bit

about

you

know

how

we

get

to

no

code.

So

how

is

this

going

to

be

released?

B

The

code

itself

is

coming

next

week

early

next

week,

and

it

includes

a

few

different

things.

One

is

a

newly

introduced

data

hub

upgrade

container

in

cli

that

allows

you

to

actually

perform

the

upgrade

against

a

running

instance

of

data

hub.

That

would

be

required

to

move

to

no

code

right.

So

there's

two

ways

to

do

it.

You

can

either

sort

of

restart

the

data

hub

instance

from

a

clean

slate

and

that'll

just

work,

or

you

can

run

this

data

hub

upgrade

cli

against

a

running

instance.

B

If

you

have

a

lot

of

data

and

we've

tested

this

against

a

few

hundred

thousand

rows

in

a

sql

store

and

things

look

good,

so

the

second

part

is,

we

have

all

these

guides.

Basically

that

allow

you

to

run

the

snow

code

upgrade

against

docker,

compose

deployments,

helm

deployments

or

manually,

if

you

want

to,

if

you

have

a

different

setup,

but

basically

in

a

nutshell,

it'll

be

deploy.

New

data

hub

containers,

run

this

migration

script

and

then

verify

and

validate.

You

should

be

done

and

with

that

I

think

I

will

hand

it

back

to

shashanka.

B

A

That's

fine,

john.

I

think

we

can

all

deal

with

the

productivity

events.

You've

gotten

us,

so

you've

saved

us

a

lot

of

time

as

well.

A

couple

of

things

I

wanted

to

point

out,

as

we

did

the

design

exercise

for

this.

We

didn't

want

to

boil

the

ocean

too

much

so

a

few

things

we

actually

kept

them

the

way

they

are.

For

example,

the

mce

schema

stays

the

same

for

now

and

even

for

strong

types

we

made

them

stay

as

a

constraint.

A

We

wanted

actually

so

these

models

that

you're

using

to

define

your

entities

and

your

aspects

they're

actually

used

to

create

a

serializable

structs

that

you

can

actually

use

to

send

metadata

over.

So

you

don't

lose

strong

types.

As

a

result

of

this,

we

just

created

a

generic

entity.

Endpoints

so

really

excited

about

this.

I

hope

everyone

is.

A

I

saw

a

lot

of

good

feedback

on

the

chat,

so

we're

looking

forward

to

rolling

this

out

next

week

and

then

helping

you

as

you

upgrade

your

ecosystems,

and

you

know

we'll

be

there

online

to

help

you

out

with

any

of

this.

We've

tested

it

out

internally,

quite

heavily,

and

we've

done

lots

of

backwards

and

forwards

compatibility

testing,

so

we're

comfortable

that

this

works.

But

you

know

you

only

know

when

you

finally

run

it

so

looking

forward

to

helping

you

all

get

over

the

hurdle.