►

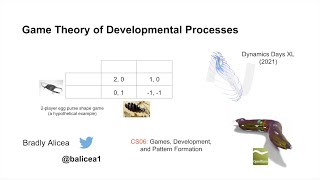

From YouTube: Game Theory of Developmental Processes

Description

Lecture given for Dynamics Days XL 2021. Virtual and Nice, France. Slides on Figshare: https://figshare.com/articles/presentation/Game_Theory_of_Developmental_Processes/16435308

When we talk about developmental game theory, we want to talk about agents, so I'm going to go over some definitions of agents. The general case is something we call developmental agents. These developmental agents take the form of cells, morphogens, or gene products. You can imagine that cells come in many different types, morphogens come in many different states, and, of course, gene products come in many different states as well, and all of these agents emit states.

The interactions could be between the agent and nature, or between two agents, and these interactions determine the game. You can see an example of this here: a developmental agent that starts out as a nondescript cell. That cell forms three germ layers, and those three germ layers then form different parts of an anatomy. The result could be some sort of agent that behaves in its environment and has sensors. It has a central nervous system and different body parts, and all of those have a developmental origin. So there could be, for example, a game against nature or a game between different agents, with competition, cooperation, and all of those things.

A specific case of a developmental agent is an ontogenetic agent, as opposed to a genetic agent. This is an important distinction to make, because ontogenetic agents do not use cognition to execute behaviors. In the example we saw before, all of those processes unfold, but there is no cognition and no intention behind the behaviors. This is different from typical game theory as used in, say, economics, where you have rational strategies and where we assume certain types of optimal or sub-optimal behavior.

In this case, the strategies are simply constrained by energetics or by other factors, and that is all we assume. Instead, the intentional strategies used by ontogenetic agents are related to transformational processes such as movement, differentiation, growth, and regulation. For example, in movement, muscle generates an intentional state, in the sense that the agent is definitely moving left instead of moving right, but it is not cognitive. There's no reflexivity to this.

One example of how ontogenetic agents can use this information to build themselves through some sort of game is coordination between ontogenetic agents. In this case, you have coordination that results from collective behavior over time, or you could have perfect coordination, which you can assume a priori. Here you have a bunch of agents cooperating in a game. They have intentional behaviors, those behaviors have payoffs, and those payoffs involve some sort of energetic constraints or energetic costs. So the agents are either loosely or perfectly coordinated, because the game is such that the payoffs favor coordination.

We also want to talk about developmental trade-offs. We want to model the internal state of an ontogenetic agent to show how developmental trade-offs shape the execution of a developmental game. The major consideration here is that any agent needs to conserve energy maximally. This is a law of physics, and it's a law of biophysics as well, so an agent will naturally invest in one set of strategies over another, but it won't do this intentionally.

This can also change the composition of different agents and lead to heterogeneity: ontogenetic agents with more available energy have a greater degree of complexity, with the capacity for a greater number of strategies. So you can see how this process works. There are trade-offs between heterogeneity and homogeneity, depending on how much energy there is in the environment. Fitness can also serve a purpose under energetic scarcity: there's a fitness imperative to keep the developing organism alive so that it can reach maturity.

These agents look like maybe they're thinking, and they're definitely alive. But what's driving this? It almost looks like goal-directed behavior, and yet maybe it isn't exactly the type of goal-directed behavior that a human or some other animal would pursue. Here you have a cell colony that's moving in different ways: it may be oozing in a certain direction, or it may be moving along some polar coordinate system. So this is biological intentionality.

The first thing we're going to talk about here is zero-player games, an example of which is Conway's Game of Life, shown here. Over on the left and to the right, you can see how you can generate n-dimensional point processes, and this is kind of what we use in the Game of Life. This is the zero-dimensional aspect of it.

This point process is a single point that changes its state over time, so cells operate as point processes that emit a state of dead or alive in this game. In this zero-player game, you have an ontogenetic agent that operates as a point in space and emits the state of dead or alive. There's no real play: it's just generating the state, and it's not really interacting with other players.

There are a lot of lifelike attributes captured in these dimensions, but there's no real competition here. So this is an example of a zero-player game.
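
A minimal sketch of the zero-player idea, assuming NumPy and a wrap-around (toroidal) grid, both choices made here rather than details from the talk. It uses Conway's standard rules (a dead cell with exactly three live neighbours is born; a live cell with two or three live neighbours survives). Each cell is a point that emits dead (0) or alive (1), and once the initial state is set nobody makes a choice.

```python
import numpy as np


def life_step(grid: np.ndarray) -> np.ndarray:
    """Advance Conway's Game of Life by one synchronous step on a wrap-around grid.

    Each cell is a point that emits 1 (alive) or 0 (dead); no cell chooses anything.
    """
    # Count the eight neighbours of every cell by summing shifted copies of the grid.
    neighbours = sum(
        np.roll(np.roll(grid, dy, axis=0), dx, axis=1)
        for dy in (-1, 0, 1)
        for dx in (-1, 0, 1)
        if (dy, dx) != (0, 0)
    )
    born = (grid == 0) & (neighbours == 3)                            # birth rule
    survives = (grid == 1) & ((neighbours == 2) | (neighbours == 3))  # survival rule
    return (born | survives).astype(grid.dtype)


# A "blinker": three live cells in a row, which oscillates with period 2.
grid = np.zeros((5, 5), dtype=int)
grid[2, 1:4] = 1
after_one = life_step(grid)
after_two = life_step(after_one)
print(after_one[1:4, 2])                # the row has turned into a vertical column
print(np.array_equal(after_two, grid))  # back to the starting state
```

The blinker returns to its starting configuration after two steps, one of the simplest lifelike behaviours that emerge without any player.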

In applying this to development, we can look at things like Turing morphogenesis, or reaction-diffusion processes, where particles compose a population of zero-dimensional agents, and substances called morphogens act in an indirect way to transform populations into a spatial pattern.
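
Turing's mechanism can be illustrated with a standard linear stability analysis. This is a minimal sketch, not the talk's model: the two-morphogen kinetics matrix `J` and diffusion constants `D` are made-up illustrative numbers. A uniform state that is stable without diffusion (the wavenumber k = 0) becomes unstable to a band of spatial wavenumbers when the inhibitor diffuses much faster than the activator, which is the seed of a spatial pattern.

```python
import numpy as np

# Hypothetical linearised activator-inhibitor kinetics (illustrative numbers only).
J = np.array([[1.0, -1.0],
              [3.0, -2.0]])   # trace = -1 < 0 and det = 1 > 0: stable without diffusion
D = np.diag([1.0, 20.0])      # the inhibitor diffuses 20x faster than the activator


def growth_rate(k: float) -> float:
    """Largest real part of the eigenvalues of J - k^2 D for spatial wavenumber k."""
    return float(np.max(np.linalg.eigvals(J - k**2 * D).real))


ks = np.linspace(0.0, 2.0, 401)
rates = np.array([growth_rate(k) for k in ks])
unstable = ks[rates > 0]      # wavenumbers whose perturbations grow
print(f"uniform state stable without diffusion: {growth_rate(0.0) < 0}")
print(f"unstable wavenumbers: {unstable.min():.3f} to {unstable.max():.3f}")
```

Perturbations grow only where det(J - k^2 D) < 0, which for these numbers is roughly 0.24 < k < 0.92; that finite band is what selects a characteristic pattern wavelength.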

An equilibrium is reached when a stable morphogenetic pattern is achieved. So under developmental game theory we can reach equilibria just as we can in normal game theory, but it's a little bit different in terms of where this limit is.

This is an example of a very simple payoff matrix for a zero-player game. Within this payoff structure, the cell will tend to stay alive: you can see the payoff is 0.8 for alive and 0.2 for dead. So when the payoff is high for being alive, the cell will stay alive.
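
One way to read the 0.8/0.2 payoff matrix, offered purely as an illustrative assumption (the talk does not specify an update rule), is to treat the payoffs as the weights with which the cell emits each state at every time step. Under that hypothetical reading, the cell spends most of its time alive.

```python
import random
from typing import List

# Payoffs as stated in the talk: 0.8 for "alive", 0.2 for "dead".
PAYOFF = {"alive": 0.8, "dead": 0.2}


def emit_states(steps: int, seed: int = 0) -> List[str]:
    """Emit one state per time step, weighting each state by its payoff.

    Assumption: payoffs are used directly as emission weights.
    """
    rng = random.Random(seed)
    states = list(PAYOFF)
    weights = [PAYOFF[s] for s in states]
    return rng.choices(states, weights=weights, k=steps)


history = emit_states(10_000)
fraction_alive = history.count("alive") / len(history)
print(fraction_alive)  # close to 0.8
```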

In this case, an ontogenetic agent plays an intentional strategy against a quasi-random natural process, something like weather or entropy. The payoff structure here depends solely upon a process-driven objective: for example, an increase in fitness, or the maximization of error correction. The actual application to development that we're going to get into is a generic developmental process that we'll talk about as a game of developmental minesweeper. But first, let's go over this example with the weather.

This is a game against nature: a single rational player playing a pure or mixed strategy against nature, which plays a random, or mixed, strategy. A strategy in this case is carrying an umbrella or not carrying an umbrella when the weather is changing all the time. You have a weather forecast here, and the weather forecast is generated by a discrete random pattern generator. It generates these patterns of weather, and the forecast that we make is imperfect information.

So the question is: does the observer have to carry an umbrella or not, knowing that the weather is always changing and that the forecast is imperfect information? You can see that the observer's strategy here is Boolean: umbrella or no umbrella. The payoff depends on whether you have an umbrella and whether it rains.

If it doesn't rain and you have an umbrella, there's no payoff. There's a positive payoff if you don't have an umbrella and it's sunny, because you don't have to carry the umbrella with you. In the case where you don't have an umbrella and it rains, you get a payoff of -1 because you get soaked; in the case where you do have an umbrella and it rains, you get +1.
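
The umbrella payoffs above can be turned into an expected-payoff calculation against a forecast probability of rain. This is a minimal sketch: the +1 for "no umbrella, sunny" is an assumed value, since the talk only says that payoff is positive, and `best_response` is a hypothetical helper name.

```python
# Payoffs from the talk; the +1 for ("no umbrella", "sun") is an ASSUMED value,
# because the talk only says that this payoff is positive.
PAYOFFS = {
    ("umbrella", "rain"): 1.0,
    ("umbrella", "sun"): 0.0,
    ("no umbrella", "rain"): -1.0,
    ("no umbrella", "sun"): 1.0,
}
ACTIONS = ("umbrella", "no umbrella")


def expected_payoff(action: str, p_rain: float) -> float:
    """Expected payoff of a pure strategy when nature rains with probability p_rain."""
    return p_rain * PAYOFFS[(action, "rain")] + (1 - p_rain) * PAYOFFS[(action, "sun")]


def best_response(p_rain: float) -> str:
    """Pure strategy that maximises expected payoff given the (imperfect) forecast."""
    return max(ACTIONS, key=lambda a: expected_payoff(a, p_rain))


for p in (0.2, 0.5, 0.8):
    choice = best_response(p)
    print(p, choice, round(expected_payoff(choice, p), 2))
```

With these numbers the umbrella is worth p and going without is worth 1 - 2p, so carrying the umbrella is the better pure strategy whenever the forecast probability of rain exceeds 1/3.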

This has a nice connection with something like reinforcement learning, because it rewards the agent for every correct guess of what nature is going to generate. Keep that in mind for now, and think about it in terms of the game against nature. Here we're going to look at a very specific case: the developmental minesweeper game.

This is a picture of the classic Minesweeper game on the right. Minesweeper is a game between nature and a single ontogenetic agent. The ontogenetic agent does the job of player one in this Minesweeper game: it selects different tiles, uncovering a piece of this landscape each time. The idea is that you can't select a place where there's a bomb, or mine, because you'll blow up and your game will be over.

So the idea is to avoid picking a spot where there's a mine and to get as much of the board uncovered as possible. If you can uncover every square except the squares where there are mines, you win the game. You can actually take this game and turn it into a developmental game.

Looking at it this way, you've created a two-dimensional genome, and the agent doesn't know where the mines are. In this case, the mines are lethal mutations, so the agent is picking spots in the genome, and some of these are lethal mutations. The idea is that, in this two-dimensional space, the agent is picking places to express its genome. It doesn't want to pick the lethal mutations.

It wants to pick around them. The lethal mutations are generated by random processes, and the agent has to predict where there is not a lethal mutation, but it has no information as to what is a lethal mutation. These null squares are interesting, because this game has a fairly high number of degrees of freedom, so it's actually a fairly large search space. We can also add other information to be uncovered.
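
A minimal sketch of developmental minesweeper under assumptions made here, not in the talk: the genome is a rows-by-cols grid, nature places `n_lethal` lethal mutations uniformly at random, and the agent, having no information, expresses sites in a random order. The function name and parameters are hypothetical. Classic Minesweeper's neighbour counts are deliberately left out; revealing them would be one way to add other information to be uncovered.

```python
import random
from typing import Tuple


def play_developmental_minesweeper(rows: int, cols: int, n_lethal: int, seed: int) -> Tuple[int, bool]:
    """Blind agent expresses genome sites until it hits a lethal mutation or clears the board.

    Returns (number of safe sites expressed, whether the agent won).
    """
    rng = random.Random(seed)
    sites = [(r, c) for r in range(rows) for c in range(cols)]
    lethal = set(rng.sample(sites, n_lethal))  # nature places lethal mutations at random
    order = sites[:]
    rng.shuffle(order)                         # no information: the agent picks at random
    safe_total = rows * cols - n_lethal
    expressed = 0
    for site in order:
        if site in lethal:
            return expressed, False            # lethal mutation expressed: game over
        expressed += 1
        if expressed == safe_total:
            return expressed, True             # every non-lethal site expressed: win
    return expressed, True                     # only reached for an empty board


results = [play_developmental_minesweeper(8, 8, 10, seed) for seed in range(2000)]
mean_expressed = sum(n for n, _ in results) / len(results)
print(f"mean safe sites expressed: {mean_expressed:.1f}")
```

For a blind agent, each safe site precedes every lethal site with probability 1/(n_lethal + 1), so the expected number of sites expressed here is (64 - 10) / 11, about 4.9, and winning is vanishingly rare. That gap is what makes the search space large.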

Next we're going to look at the game of tic-tac-toe. It seems like a pretty boring game, but it actually has a lot of nice properties for this. In tic-tac-toe there's something called a first-mover advantage: if you start the game and pick, say, the center square, you can always be on the offensive, and your opponent has to be on the defensive if they want to play an optimal game.

Here you have optimal play by the ontogenetic agents, plus this first-mover advantage of one cell lineage over another, which means there's no clear winner. For example, one cell lineage can't take over the embryo. On the other hand, you have this semi-hierarchical structure of the embryo, something you might even call heterarchical, so no one cell lineage or cell type dominates the embryo.
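
The claim that optimal play by both sides leaves no clear winner can be checked by brute force. The sketch below is a plain memoised minimax over tic-tac-toe positions (a standard technique, not the model from the paper); boards are 9-character strings with "." for empty squares, and X always moves first.

```python
from functools import lru_cache
from typing import Optional

LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),   # rows
         (0, 3, 6), (1, 4, 7), (2, 5, 8),   # columns
         (0, 4, 8), (2, 4, 6)]              # diagonals


def winner(board: str) -> Optional[str]:
    """Return "X" or "O" if that player has three in a row, else None."""
    for a, b, c in LINES:
        if board[a] != "." and board[a] == board[b] == board[c]:
            return board[a]
    return None


@lru_cache(maxsize=None)
def value(board: str, to_move: str) -> int:
    """Minimax value from X's point of view: +1 X wins, 0 draw, -1 O wins."""
    w = winner(board)
    if w is not None:
        return 1 if w == "X" else -1
    if "." not in board:
        return 0
    nxt = "O" if to_move == "X" else "X"
    children = [value(board[:i] + to_move + board[i + 1:], nxt)
                for i, cell in enumerate(board) if cell == "."]
    return max(children) if to_move == "X" else min(children)


print(value("." * 9, "X"))       # perfect play from the empty board
print(value("O...X....", "X"))   # X took the center, O answered in a corner
print(value(".O..X....", "X"))   # X took the center, O answered on an edge
```

The first call gives 0: perfect play is a draw. The other two show the defensive burden on the second player: after a center opening, answering with a corner holds the draw (0), while answering with an edge lets the first mover force a win (1).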

Here you have an example from a paper we published in BioSystems in 2018. It shows this first-mover advantage in tic-tac-toe, and then here we have the same thing in the embryo. You have cell one and cell two. Cell one moves first by dividing, and it is able to maintain the spacing. Then cell two divides: as the second player, it plays a defensive move so that cell one doesn't invade its space.

That is the third move. In the fourth move, cell lineage one divides again, so you have three cells versus two cells. In the fifth move, cell lineage one actually divides again, so you have four cells in lineage one versus two in lineage two. In the sixth move, lineage two divides, so at that point it has three cells.

The idea here is that the lineages are mostly playing optimally, although you can see examples of a sub-optimal move, where lineage one divides twice before lineage two moves, and so lineage one is able to structure the embryo in a certain way. So if we model development using this first-mover advantage model, we can see that in some cases there are sub-optimal moves by some of the lineages.

In some cases both lineages play optimally, and we can then extend that result to see what exactly the structure of an embryo, or of a developing organism, looks like, and maybe explain why some of these structures are asymmetrical or become asymmetrical over time. This embryo can be analyzed using a first-mover Stackelberg game: for differentiated tissues, there's an advantage in terms of size.

Sublineages one and two are established, and in this case sublineage one clearly has a size and position advantage. In the second move, sublineage one divides before sublineage two, and that's an advantage to sublineage one. Then in the third move, sublineage two divides, which allows sublineage two to maintain its foothold in its space in the embryo. Then comes the fourth move, and so on and so forth.
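
In a Stackelberg game a leader commits to a move and a follower best-responds to it; the leader chooses knowing how the follower will respond. The sketch below is a generic two-action version. The "divide"/"wait" actions and every payoff number are hypothetical, chosen so the game is symmetric and the order of moves is the only asymmetry; it is not the payoff structure from the BioSystems paper.

```python
from typing import Tuple

ACTIONS = ("divide", "wait")

# Hypothetical payoffs (leader, follower) for two competing cell lineages.
# Symmetric game: swapping the two actions swaps the two payoffs.
PAYOFFS = {
    ("divide", "divide"): (1, 1),  # both divide at once: crowding cost
    ("divide", "wait"): (3, 2),
    ("wait", "divide"): (2, 3),
    ("wait", "wait"): (0, 0),      # neither lineage claims space
}


def follower_best_response(leader_action: str) -> str:
    """The follower sees the leader's move and maximises its own payoff."""
    return max(ACTIONS, key=lambda f: PAYOFFS[(leader_action, f)][1])


def stackelberg_outcome() -> Tuple[str, str]:
    """The leader commits first, anticipating the follower's best response."""
    leader = max(ACTIONS, key=lambda a: PAYOFFS[(a, follower_best_response(a))][0])
    return leader, follower_best_response(leader)


moves = stackelberg_outcome()
print(moves, PAYOFFS[moves])
```

The leader divides, the follower waits, and the payoffs are (3, 2). Because the game is symmetric, the leader's extra payoff comes entirely from moving first.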

You can actually do the same type of analysis, or set up the same type of game, for connectome formation. If you want more information, you can go to the paper, but basically these results explain how these connectivity patterns are established and what we should expect, perhaps, in a more complex connectome. Then we go on to something else.

Among two-player games, there's another type called the prisoner's dilemma, or the iterated prisoner's dilemma. This gives you individual versus group incentives.

In the case where you have two ontogenetic agents, they can either cooperate or defect (not cooperate). When you defect, you seek to maximize your individual payoff. This is often sub-optimal overall, but it maximizes the individual payoff, for whatever reason. So in the case of ontogenetic agents, they might not cooperate and might end up benefiting individually in the short term, but then the entire colony might die out as a consequence.

So that's what I mean by sub-optimal. In the case of the first-mover games, of course, this does not apply; this is specific to this type of game.

If, however, you cooperate instead of defecting, it maximizes the pairwise or group payoff. So in that example of the cooperating agents, they are all basically behaving in the same way: they're cooperating, and it's maximizing their group payoff. Now the colony is the thing that's surviving. Maybe some individuals die off as a consequence, but the colony survives in the iterated prisoner's dilemma.

The example in the upper right is the iterated prisoner's dilemma: it's just that the game is repeated dynamically over time. In this repeated game the payoffs might change over time, but you get a function that shows basically the same trend: the payoffs for defecting and the payoffs for cooperating might change, but you can get clear strategies over time.
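
A minimal iterated prisoner's dilemma sketch. The payoff values (temptation 5, reward 3, punishment 1, sucker 0) are the standard textbook ones, not numbers taken from the slide, and the three strategies are classic examples rather than models of specific ontogenetic agents.

```python
from typing import Callable, List, Tuple

# Standard illustrative payoffs (row player, column player): T=5 > R=3 > P=1 > S=0.
PAYOFF = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}

# A strategy sees the opponent's past moves and returns "C" or "D".
Strategy = Callable[[List[str]], str]


def always_defect(opp_history: List[str]) -> str:
    return "D"


def always_cooperate(opp_history: List[str]) -> str:
    return "C"


def tit_for_tat(opp_history: List[str]) -> str:
    # Cooperate first, then copy the opponent's previous move.
    return opp_history[-1] if opp_history else "C"


def play(a: Strategy, b: Strategy, rounds: int = 10) -> Tuple[int, int]:
    """Play the repeated game and return the two cumulative scores."""
    hist_a: List[str] = []
    hist_b: List[str] = []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a, move_b = a(hist_b), b(hist_a)
        pa, pb = PAYOFF[(move_a, move_b)]
        score_a += pa
        score_b += pb
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b


print(play(tit_for_tat, tit_for_tat))    # mutual cooperation
print(play(always_defect, tit_for_tat))  # defector exploits round 1 only
```

The defector beats tit-for-tat head to head (14 versus 9), but the pair's total of 23 is far below the 60 that two tit-for-tat players earn over the same ten rounds, which is the individual-versus-group tension described above.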

An application of this to development is where you have a pair of ontogenetic agents with complementary mechanisms such as pattern recognition and pattern generation. I meant to put both pattern recognition and pattern generation on the slide, but I think I had the same words in there twice. So this is an example of what I mean.

This is the observer and the emitter, and this is a game that we call a morphogenetic game. The observer reconstructs the pattern through perception, and the emitter produces the pattern: one exhibits morphogenesis, and the other exhibits perception or attention. These two agents compete against one another. This addresses the question: what is the relationship between morphogenesis and perception? If you have, for example, an ontogenetic agent that forms stripes, and it's doing so to avoid predators or for some other reason, then what's the relationship between the predator and the prey? That's one of the possible questions we could ask using this model. We can treat this either as a predator-prey relationship or as a competitive or cooperative game, depending on what relationship the observer and emitter have.

Some of this involves things like pareidolia or camouflage. In pareidolia, this is the evolution of perception and morphogenetic mechanisms; in camouflage, it's the co-evolution of those. You have something called a matching pursuit game, which is a different type of game altogether and is also part of this developmental game paradigm. In this case, the predator hunts the prey, and the prey must conceal itself, or the prey is dangerous to the predator in some way, say it kills with venom. All of those possibilities can be replicated in this game.

So in this case we have a pursuit-evasion game, which is distinct from a prisoner's dilemma game. The observer tries to identify partial phenotypes, while the emitter tries to conceal its phenotype as a coherent pattern. Here you can see that this emitter actually isn't trying to conceal its pattern, but it could definitely have different types of striping patterns over time. In the first iteration you might have this striped pattern, and in the second iteration you might have this checkerboard pattern. Meanwhile, the other agent, which is trying to identify the pattern, goes through a series of possible patterns to figure out which pattern it is. There are a lot of issues here to do with spatial orientation and translation: this pattern might be at an oblique angle in the environment, and the agent still has to be able to recognize it.

If the agent can recognize that pattern, the payoff for that pattern is much lower than it would be if it were a unique pattern the agent has never seen before; such a novel pattern is a very high-payoff pattern for the emitter, and so on. So the game relies on the learning of the observing agent and on the ability of the emitting agent to generate patterns.
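
A toy version of the observer-emitter payoff logic, assuming binary 4-by-4 patterns, an observer whose only learning is memorising what it has seen, and 90-degree rotations as a crude stand-in for patterns viewed at an oblique angle. The names `canonical` and `Observer` and the emitter payoffs (1.0 for a novel pattern, 0.1 for a recognised one) are hypothetical.

```python
from typing import Set, Tuple

import numpy as np

Key = Tuple[Tuple[int, ...], bytes]


def canonical(pattern: np.ndarray) -> Key:
    """Rotation-invariant key: the smallest (shape, bytes) pair over the four 90-degree rotations."""
    return min((r.shape, r.tobytes()) for r in (np.rot90(pattern, k) for k in range(4)))


class Observer:
    """Remembers every pattern it has seen, up to rotation."""

    def __init__(self) -> None:
        self.seen: Set[Key] = set()

    def observe(self, pattern: np.ndarray) -> bool:
        """Return True if the pattern (in any rotation) was seen before, then memorise it."""
        key = canonical(pattern)
        recognised = key in self.seen
        self.seen.add(key)
        return recognised


NOVEL_PAYOFF, RECOGNISED_PAYOFF = 1.0, 0.1    # hypothetical emitter payoffs

stripes = np.tile([[1], [0]], (2, 4))          # horizontal stripes, 4x4
checker = np.indices((4, 4)).sum(axis=0) % 2   # checkerboard, 4x4

observer = Observer()
emissions = [stripes, checker, np.rot90(stripes)]  # third: stripes seen at a new angle
payoffs = [RECOGNISED_PAYOFF if observer.observe(p) else NOVEL_PAYOFF for p in emissions]
print(payoffs)  # [1.0, 1.0, 0.1]
```

The rotated stripes earn the emitter only the low payoff, because the observer's rotation-invariant memory already contains them; an emitter that keeps producing genuinely new patterns keeps earning the high payoff.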

There are interesting connections here with the pursuit-evasion utility function used in a pursuit-evasion game, which is a series of iterative payoffs. Like I said, these games change over time, depending on the pattern being emitted by the emitter and on what the observer has seen before, can learn, or can identify in different contexts.

You have a situation where, given a number of perturbations, evolutionarily stable strategies can be found that return the system to an initial state and resist invasion by mutant strategies. In the developmentally stable state case, it might work in a similar manner, and it might mimic something called the canalization of development, which was proposed by Conrad Waddington. On the right, you can see an example of the canalization of development.
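
The evolutionarily stable strategy (ESS) idea can be made concrete with Maynard Smith's classic Hawk-Dove game. This sketch is that textbook example with hypothetical values of the resource value V and fighting cost C, not a developmental model from the talk. It checks Maynard Smith's two conditions against a finite grid of mutant strategies, which is a numerical approximation rather than a proof.

```python
import numpy as np

V, C = 2.0, 4.0  # hypothetical resource value and cost of fighting (V < C)

# Payoff to the row strategy against the column strategy; order is (Hawk, Dove).
A = np.array([[(V - C) / 2, V],
              [0.0, V / 2]])


def E(p: float, q: float) -> float:
    """Expected payoff to a strategy playing Hawk with prob. p against one playing Hawk with prob. q."""
    x, y = np.array([p, 1 - p]), np.array([q, 1 - q])
    return float(x @ A @ y)


def is_ess(p: float, mutants=np.linspace(0.0, 1.0, 101), tol: float = 1e-12) -> bool:
    """Maynard Smith's ESS conditions, checked against a grid of mutant strategies q."""
    for q in mutants:
        if abs(q - p) < 1e-9:
            continue                      # skip the resident strategy itself
        if E(q, p) > E(p, p) + tol:
            return False                  # mutant does strictly better: it invades
        if abs(E(q, p) - E(p, p)) <= tol and E(q, q) >= E(p, q) - tol:
            return False                  # neutral at first, and not beaten among mutants
    return True


print(is_ess(V / C), is_ess(1.0), is_ess(0.0))
```

Only the mixed strategy that plays Hawk with probability V/C = 0.5 resists invasion: a population of pure Hawks is invaded by Doves and vice versa, so perturbations in either direction are pulled back toward the same mixture.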