From YouTube: Use Case: Tuning Models

Description

Setup: https://www.youtube.com/watch?v=y6bz-9U4hJg

Make sure you're looking at the version of the notebook that corresponds to the version of DFFML you have installed (you can check it with `dffml version`).

The following is the link to the notebook from this video: https://github.com/intel/dffml/blob/master/examples/notebooks/tuning_models.ipynb

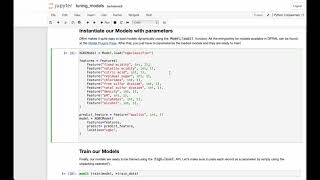

So, once the model is loaded, we instantiate it with the appropriate parameters. These include a list of all the features in the data set, the target feature, and the location (directory) to save the model in. You can also pass model-specific hyperparameters here; check the documentation of that specific model to see which ones it accepts.
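As a rough picture of what gets passed at instantiation, here is a plain-Python sketch. `ModelConfig`, its field names, and the feature names are hypothetical stand-ins for illustration only, not DFFML's actual model API; the real config fields come from the specific model's documentation.

```python
from dataclasses import dataclass, field
from pathlib import Path
from typing import Any, Dict, List


@dataclass
class ModelConfig:
    # Hypothetical config object, NOT the real DFFML API.
    features: List[str]  # every input feature in the data set
    predict: str  # the target feature
    location: Path  # directory the trained model is saved in
    # Model-specific hyperparameters; empty means "use the model's defaults".
    hyperparameters: Dict[str, Any] = field(default_factory=dict)


# Hypothetical feature and target names, for illustration only.
config = ModelConfig(
    features=["f1", "f2", "f3"],
    predict="label",
    location=Path("model_dir"),
)
```

Leaving `hyperparameters` empty corresponds to the situation described next, where the model trains on its default values.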

Since we didn't pass any hyperparameters when we instantiated the model, it was trained on the model's default values. Let's try to tune it with the good old-fashioned hit-and-trial method. We can mutate the hyperparameter values by changing them in the config. However, you might want to refer to the model's documentation first to see which of the hyperparameters are mutable.
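The hit-and-trial loop can be sketched in plain Python: change one or two values in the config, retrain, and keep whichever setting scores best. The `Hyperparameters` fields (`learning_rate`, `max_depth`) and the `train_and_score` function are hypothetical; `train_and_score` is a toy formula standing in for "train the model, then score it on the test data", not a real DFFML call.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Hyperparameters:
    # Hypothetical hyperparameters; real names depend on the model's documentation.
    learning_rate: float = 0.1
    max_depth: int = 3


def train_and_score(hp: Hyperparameters) -> float:
    # Toy stand-in for training plus scoring. It peaks at
    # learning_rate=0.05, max_depth=6 purely for illustration.
    return 1.0 - abs(hp.learning_rate - 0.05) - 0.02 * abs(hp.max_depth - 6)


defaults = Hyperparameters()
best_hp, best_score = defaults, train_and_score(defaults)

# Hit and trial: mutate values by hand, retrain, keep whatever scores better.
trials = [
    replace(defaults, max_depth=5),
    replace(defaults, learning_rate=0.05),
    replace(defaults, learning_rate=0.05, max_depth=6),
]
for trial in trials:
    score = train_and_score(trial)
    if score > best_score:
        best_hp, best_score = trial, score
```

Each trial here is chosen by hand, which is exactly the tedium a tuner removes.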

We can also use tuners to tune the model for us. For the sake of this example, we'll be using the parameter grid tuner. The parameter grid tuner takes a grid of different hyperparameters, with as many values for each as you want to tune from. We pass in all the relevant data: the model, the target feature, the scorer, the training data, and the test data.

The tuner then tunes the model against the hyperparameter grid: it sets the model's hyperparameters to the tuned values, retrains the model, and gives you the tuned accuracy. As you can see, it checks the accuracy of every permutation of the parameter grid we passed into the function and provides you with the best version of the model.
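The parameter grid tuner used in the video comes with DFFML; the sketch below is not that implementation, just a plain-Python illustration of the same idea (exhaustive grid search). It assumes a hypothetical `train_and_score` callback that trains the model with one set of hyperparameters and returns its score on the test data; the toy scorer and the grid values are made up for illustration.

```python
from itertools import product
from typing import Any, Callable, Dict, List, Tuple


def parameter_grid_search(
    grid: Dict[str, List[Any]],
    train_and_score: Callable[[Dict[str, Any]], float],
) -> Tuple[Dict[str, Any], float]:
    """Try every combination of values in `grid`; return the best one and its score."""
    names = list(grid)
    best_params: Dict[str, Any] = {}
    best_score = float("-inf")
    # product() yields one tuple per permutation of the grid's values.
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        score = train_and_score(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_train_and_score(params: Dict[str, Any]) -> float:
    # Hypothetical stand-in for "train with these hyperparameters, score on test data".
    return 1.0 - abs(params["learning_rate"] - 0.05) - 0.02 * abs(params["max_depth"] - 6)


grid = {"learning_rate": [0.01, 0.05, 0.1], "max_depth": [3, 6, 9]}
best_params, best_score = parameter_grid_search(grid, toy_train_and_score)
```

The number of training runs is the product of the list lengths (here 3 × 3 = 9), so grids grow quickly as you add hyperparameters or values. Once the best combination is found, the model is retrained with it, as described above.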

Now here is a small visualization comparing our untuned model and the tuned model, and we can clearly see that the accuracy increased.

I hope everything was clear up till now and that this tutorial was helpful. Feel free to open issues on our GitHub if you have any queries, or you can also reach out to us on the Gitter community. Thank you.