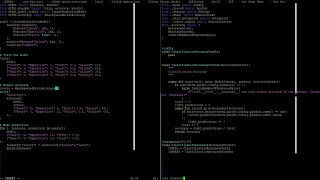

From YouTube: Weekly Sync: 2021-08-03

Description

Hashim points out the need for accuracy scorers!!! This was an important meeting! Thank you Hashim!!!!

A

A

A

A

B

A

A

A

That's right, we needed to finish the... thank you. Oh yeah, okay, so we needed to finish the thing where... okay, this is not gonna get done in this meeting then. I'm gonna have to do this afterwards, because this was the... yeah, okay, I think you tried it, Saksham tried it, so now we're back to me trying it for the second time. Okay, all right, yeah! This thing was the getter and setter thing, right?

A

A

B

A

A

Yeah, let's make sure we get enough input on your guys's stuff, if you have any. So, we've got basically three weeks. Okay, you guys are all on track, so I don't think we'll have any major hiccups here, but, you know, I want to make sure that we get everybody anything that they need. So I'll make sure to get that done today, I assume, and if I don't get it done, ping me in the morning and we'll make sure I get it done within the next two days.

A

I think once I get this demo done, I should be a great deal more freed up here. Things have gotten extremely hectic. So let's see, we're doing something, sure enough, modernization thing. Okay, so, all right, let me put in a reminder on my calendar. So this is config setters and getters.

A

C

So the implementation of multi-output support on the scorers that we implemented ourselves in DFFML isn't really convincing, and I don't think that's the way to go. Like, we are using a mean of the accuracies of the different predictions to, you know, calculate the multi-output accuracy, and if we use the same scorer from the scikit implementations, they give out different scores than ours do. And I can't really follow their multi-output implementation. They're using different scorers inside scorers, and it was getting messy.
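
To make the discrepancy concrete, here is a minimal sketch of two common ways to score multi-output predictions: averaging the accuracy of each output separately (the approach described above), and counting a row as correct only when every output matches. scikit-learn's `accuracy_score` documents the second, "subset accuracy", behavior for multilabel input, which is one possible reason the numbers differ; the meeting does not say which scikit-learn scorer was compared. The function names and sample data are illustrative assumptions.

```python
from typing import List, Sequence


def mean_per_output_accuracy(
    y_true: Sequence[Sequence[int]], y_pred: Sequence[Sequence[int]]
) -> float:
    """Average the accuracy of each output column, scored independently."""
    n_rows = len(y_true)
    n_outputs = len(y_true[0])
    per_output: List[float] = []
    for j in range(n_outputs):
        correct = sum(1 for t, p in zip(y_true, y_pred) if t[j] == p[j])
        per_output.append(correct / n_rows)
    return sum(per_output) / n_outputs


def exact_match_accuracy(
    y_true: Sequence[Sequence[int]], y_pred: Sequence[Sequence[int]]
) -> float:
    """Count a row as correct only if every output in that row matches."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if list(t) == list(p))
    return correct / len(y_true)


# Made-up data: four rows, two outputs per row.
y_true = [[1, 0], [0, 1], [1, 1], [0, 0]]
y_pred = [[1, 0], [0, 0], [1, 1], [1, 0]]

mean_score = mean_per_output_accuracy(y_true, y_pred)
exact_score = exact_match_accuracy(y_true, y_pred)
print(mean_score, exact_score)
```

On this data each output column is right three times out of four, while only two of the four rows are right on both outputs, so the two definitions disagree even though the predictions are identical.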

A

If a model wants to do something else with features, right, like, we should probably separate the connection between the model and the scorer a little more by specifying to the scorer what we want it to score, right? What predicted feature we want it to score. Does that sound reasonable to you guys? I mean, sutachu, you have more experience with this than the rest of us. So do you think that we should leave it with the introspection for any strong reason, or are you...?

A

A

A

I'm just concerned that we're grabbing predict from the parent model, from the model config, for, you know, what essentially amounts to something that I think would change, right? Basically, what happens with your patch, Hashim, is if we... where was that, the multi-output, right. So your changes sort of make

A

it apparent that the scorers and the models are too intertwined right now, right, because you now begin to inspect the model's predict config to see if it's a features or if it's a singular feature, and now all this extra code just explodes. So basically, the code in each scorer is too tied into the models, is what I'm saying, I believe, right? Because right now you... we... this...

A

The change that you made resulted in, not a big change, but it resulted in quite a few lines added in the scorer, right? And it showed that the functionality of the models is now too deeply tied to the functionality of the scorers. Or rather, the implementation of the scorers is too deeply tied to the implementation of the model, since we're introspecting the predict config of the model.

A

So what I'm suggesting is that we do something like this, essentially, right, where we just make this self.parent... and so now the only dependency on the model becomes the prediction. And so we can ask, you know... we have the scorer do... let's see, this is where it's like, okay, maybe we need to say label equals... not all about that, yeah. So I don't know, predict or feature? We should probably just say feature. So I don't know, this is where it's like...

A

That's good. Now, I'm not sure what terminology to use here, but you see how now we end up with... so now it's like the functionality isn't so tied together, right? So now, if you end up with a multi-output model, you could verify, right, like, feature... nothing works like... let's see.

A

A

Then it would kick them out and not let them instantiate that classification accuracy, right? So they would have to use a multi-output specific classification accuracy scorer, right? And that, you know, minimizes the possibilities for issues with this scorer here, right, because it's no longer tied into every single model and having to deal with, oh well, what if this model does this, right? Well, it doesn't matter, because we're just calling predict and then we're looking at the feature data.
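
The decoupling being proposed can be sketched roughly as follows. This is not the project's actual API: `ClassificationAccuracy`, its `feature` field, the `score` signature, and the stand-in `predict_parity` function are all hypothetical names chosen for illustration. The idea is that the scorer is told which feature to score, refuses to be instantiated with more than one, and otherwise only calls predict and compares feature data.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Record = Dict[str, Any]


@dataclass
class ClassificationAccuracy:
    """Hypothetical single-output scorer configured with the feature to score."""

    feature: str  # name of the one predicted feature this scorer compares

    def __post_init__(self) -> None:
        # Reject multi-output use up front instead of introspecting the model.
        if not isinstance(self.feature, str):
            raise TypeError(
                "ClassificationAccuracy scores exactly one feature; "
                "use a multi-output specific scorer for several"
            )

    def score(
        self, predict: Callable[[Record], Record], records: List[Record]
    ) -> float:
        # The only dependency on the model is its prediction function.
        if not records:
            raise ValueError("no records to score")
        correct = sum(
            1
            for record in records
            if predict(record)[self.feature] == record[self.feature]
        )
        return correct / len(records)


def predict_parity(record: Record) -> Record:
    """Stand-in for a model's predict: labels x as odd or even."""
    return {"label": "odd" if record["x"] % 2 else "even"}


records = [
    {"x": 1, "label": "odd"},
    {"x": 2, "label": "even"},
    {"x": 3, "label": "odd"},
    {"x": 4, "label": "odd"},  # deliberately disagrees with the stand-in model
]

accuracy = ClassificationAccuracy(feature="label").score(predict_parity, records)
print(accuracy)
```

Because the scorer never looks at the model's config, a model is free to change how it handles features without the scorer needing extra branches.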

A

B

A

Makes sense, okay, cool, great! So, while we're talking about the scorers, you wanted to talk about renaming the accuracy function to something else, and you'd suggested score. Oh, and then the theme thing, but that's not a big deal. So basically, we'd looked at a few themes and I like that

A

one that you'd posted, so yeah, go check that out, not sure if you got a chance. So yeah, score or evaluate? I'm not in favor of evaluate personally, because I think we already have the record evaluation function, and I'm not sure if I love that name for that either, but score seems fine to me. What are your... is there a better name than score? Should we change it to score? Should we not change it to score?

A

C

A

A

A

A

Anything else? So, reviewing this I can't do right now, not enough time, but I'll try to get to it and I'll put it through the... I think this... yeah, let me know. I think there's some comments and stuff in here. I thought I saw some stuff that looked like it wasn't cleaned up, like how...

C

A

A

A

A

A

C

A

A

A

A

A

B

C

A

C

C

B

So I have actually created this operation, input layer, which will take all the data points and the length of the source, and it will actually turn them into a matrix. So I have created a matrix here, so it will take all the inputs and add them to the list. Okay, and the way I'm doing it here is, in the seed, I'm actually providing all the values to the input layer. So...

A

Okay, so, I would say, if it's showing the current error, it looks like an entry point registration issue. So first, you need to make sure that the operation has a name associated with it, and then you need to make sure that the entry point is registered, and third, that you've installed the package.
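
One way to check the second and third points is to ask Python which entry points are visible in the current environment. This is a minimal sketch using the standard library's `importlib.metadata`; the group name `example.operation` and the operation name `input_layer` are placeholders, not the project's real identifiers. If the package has not been installed (for example with `pip install -e .`), its entry points will not show up here.

```python
from importlib.metadata import entry_points
from typing import List


def registered_names(group: str) -> List[str]:
    """Return the sorted names of all entry points registered under a group."""
    eps = entry_points()
    if hasattr(eps, "select"):
        # Python 3.10+ returns an object with a select() method.
        selected = eps.select(group=group)
    else:
        # Python 3.8 and 3.9 return a plain dict keyed by group name.
        selected = eps.get(group, [])
    return sorted({ep.name for ep in selected})


def is_registered(group: str, name: str) -> bool:
    """True if an entry point called name is visible under group."""
    return name in registered_names(group)


# Placeholder group and operation names, for illustration only.
print(registered_names("example.operation"))
print(is_registered("example.operation", "input_layer"))
```

An empty list for the group usually means either the entry point is missing from the package metadata or the package is not installed in the environment being used.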

A

A

A

A

A

A

A

B

A

A

A

A

It attempts to instantiate it, so I think it's having a problem instantiating that operation. Oh, I think I ran into this recently. So let's see... okay, it's not exchangeable. So what does your file that has the operations in it look like? Oh wait, so yeah, so that's none, but it's gonna try to load it from the entry point. So, or, let's see... non-essential was not found in blank. Okay. So...