►

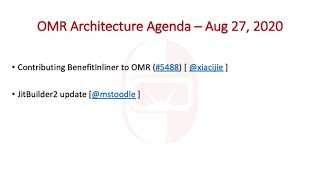

From YouTube: OMR Architecture Meeting 20200827

Description

Agenda:

* Contributing BenefitInliner to OMR (#5488) [@xiacijie]

* JitBuilder2 update [@mstoodle]

C

For example, the two most important pieces of information are the cost and the benefit. The cost is currently equal to the size of the method, and the benefit is calculated as the product of the method's call ratio, which is effectively the call frequency of a particular method, and the benefit of the optimizations from inlining this method. These will be used by the inliner packing algorithm to choose the methods to be inlined.

B

So Jack, just before you continue: do you have any slides that you're presenting? Or actually, I think we can open the issue. Do you want to share your screen showing the issue, so you can scroll through it as you're talking? Perhaps that might be easier if you control it, for sure.

C

OpenJ9 has one such implementation, and it will be contributed to the OpenJ9 project. The main responsibility of abstract interpretation is to find the call sites and generate the inlining method summary, which will be mentioned later. Its most important functionality is to simulate the program state. The inlining summary captures the potential optimization opportunities of inlining one particular method, and it also specifies the constraints, that is, the safe values, needed to make those optimizations happen.

C

The summary itself is not enough to determine which optimizations will be unlocked after inlining, so we need the program states generated by the abstract interpretation. We need to test the program state against the method summary to see whether those constraints are satisfied or not. The inlining summary is extensible and reusable. Currently there are some optimizations, for example branch folding and null check folding, and the compiler engineer...

B

Okay, Jack, if we can just pause before you go into the contribution plan. I know some people on this call will have seen a lot of this inliner work previously and be fairly familiar with it. But there will also be a number of people on this call who have not seen it, or have not seen it recently, and probably need a little bit more of a high-level introduction to what this is and what it's trying to do.

B

So if it's okay with you, I'd just like to take a couple of minutes to provide that overview, so people have a better idea of what all these parts are adding up to. You did a good job describing the individual components, but I think people might need the higher-level view, if that's okay. Yeah, sure. Okay, so traditionally, inlining has been done with a single metric, right.

B

Right now OMR has an inliner. It's called the trivial inliner, and it does not do any very sophisticated kind of inlining. Basically, if you tell it to inline something, it will, and it's set up to handle very small, simple methods like getters and setters, that kind of thing. It doesn't do anything sophisticated to figure out the right things to inline.

B

Now, the main inliner that existed in the J9 project inside IBM before it was broken apart and open sourced as OMR and OpenJ9, the inliner which is still in OpenJ9, is a very traditional kind of inliner, in that it has a single metric, size, and we try to pick things to fit within a given budget. To incentivize the inliner to inline some things rather than others, there are loads of heuristics in that OpenJ9 inliner that increase or decrease the size of a method to make it more or less attractive.

B

And this has a number of drawbacks. If you have a very large method that looks very beneficial, you'll reduce its size, and you can have a very small method that may not look as beneficial, and you'll increase its size. Then you can't tell the difference between the two, because they have both arrived at this medium size.
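To make that drawback concrete, here is a small arithmetic sketch. The sizes and scaling factors are invented for illustration and are not taken from the OpenJ9 inliner; `adjusted_size` is a hypothetical helper.

```python
# Hypothetical numbers: a heuristic inliner folds "benefit" into one size metric.
def adjusted_size(raw_size, scale):
    """Return the size the inliner sees after a heuristic has scaled it."""
    return raw_size * scale


# A large method that looks beneficial is shrunk to make it more attractive.
large_beneficial = adjusted_size(raw_size=400, scale=0.25)
# A small method that looks less beneficial is grown to make it less attractive.
small_ordinary = adjusted_size(raw_size=50, scale=2.0)

# Both arrive at the same "medium" size, so the single metric can no longer
# tell the large, valuable method apart from the small, unremarkable one.
print(large_beneficial, small_ordinary)
```

Once the two values collide, any later decision based only on the adjusted size treats the two methods identically.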

B

So we have an implementation of that, and what it's trying to solve is: for a given budget, what is the maximum benefit? Budget can be easily estimated as nodes or bytecodes or whatever you want. But the question is: what is the benefit? How good is it to inline a particular method? The approach being taken with this work is to say that how good something is to inline is how much opportunity for optimization it exposes.
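The "maximum benefit for a given budget" question can be sketched as a classic 0/1 knapsack. This is only an illustration under simplifying assumptions: the candidate names, costs and benefits are invented, `best_inlining` is a hypothetical function, and the sketch ignores the fact that a nested callee can only be inlined if its caller is inlined too.

```python
def best_inlining(candidates, budget):
    """0/1 knapsack: maximize total benefit with total cost <= budget.

    candidates is a list of (name, cost, benefit) tuples with integer costs.
    Returns (best total benefit, tuple of chosen names).
    """
    # best[b] holds the best (benefit, chosen names) using at most b units.
    best = [(0, ())] * (budget + 1)
    for name, cost, benefit in candidates:
        # Walk budgets downward so each candidate is chosen at most once.
        for b in range(budget, cost - 1, -1):
            with_it = best[b - cost][0] + benefit
            if with_it > best[b][0]:
                best[b] = (with_it, best[b - cost][1] + (name,))
    return best[budget]


candidates = [
    ("getter", 5, 3),
    ("parser", 60, 40),
    ("helper", 40, 35),
    ("logger", 30, 10),
]
print(best_inlining(candidates, budget=100))
```

Unlike the size-adjusting heuristics, cost and benefit stay separate here, so a large but valuable method and a small but ordinary one remain distinguishable.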

B

So if I have a choice of inlining two things, it's better to inline the one that's going to let us optimize more, because it will improve performance more. The way this has been modeled is using abstract interpretation. There's an abstract interpreter with this inliner, which processes the program and maintains value ranges for operands and intermediate program values, and it looks for patterns in the program that suggest an optimization is possible. Jack alluded to branch folding.

B

So if we see a test against null that branches one way or the other, we can look at the abstract constraint that's being produced for the value being tested and ask: do we know that it's not null? If yes, we know that we're going to fold a branch, so we can say we think that's an optimization that's possible. Or you might say, well, actually, that test is against a parameter.

B

So we don't know until we look at the caller whether this branch will fold or not, and it records a summary of these possible transformations and their dependencies on parameters in that method summary that Jack was talking about. The idea here is to use this abstract interpretation to build up the benefit scores and the sizes, and then to pick an optimal inlining based on those numbers. That's a much more deterministic mechanism than many traditional inliners, which are heuristic based.
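A minimal sketch of the idea of testing a caller's abstract state against a callee's summary follows. Everything in it is hypothetical: `Nullness`, `PotentialFold`, `summarize` and `benefit_at_call_site` are illustrative names, not the classes in the actual contribution, and the benefit of one point per folded branch is an arbitrary assumption.

```python
from dataclasses import dataclass
from enum import Enum


class Nullness(Enum):
    """Toy abstract value: what the analysis knows about a reference."""
    UNKNOWN = "unknown"
    NON_NULL = "non-null"
    NULL = "null"


@dataclass(frozen=True)
class PotentialFold:
    """A null test in the callee that folds if the parameter's nullness is known."""
    param_index: int
    benefit: int


def summarize(null_tested_params, benefit_per_fold=1):
    """Build a toy method summary: one PotentialFold per null-tested parameter."""
    return [PotentialFold(i, benefit_per_fold) for i in null_tested_params]


def benefit_at_call_site(summary, arg_states):
    """Test the caller's abstract argument states against the summary."""
    return sum(
        fold.benefit
        for fold in summary
        if arg_states[fold.param_index] is not Nullness.UNKNOWN
    )


# The callee tests parameters 0 and 1 against null.
summary = summarize([0, 1])
# At this call site only the first argument is known to be non-null.
print(benefit_at_call_site(summary, [Nullness.NON_NULL, Nullness.UNKNOWN]))
```

The summary is computed once per callee, while the check against the argument states is repeated for each call site, which is why the same method can score differently in different callers.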

B

The language-specific component at the moment is the abstract interpreter, because a lot of this prototyping was done in the context of OpenJ9, and there, generating trees for all the bytecodes of all the methods you might want to inline is too expensive in terms of memory and time. So you want to do your abstract interpretation over the bytecodes.

B

So Jack does have a Java bytecode abstract interpreter, which he'll be looking to contribute to OpenJ9, I believe. But all of the common infrastructure, including the point where you call the abstract interpreter and a lot of the infrastructure for building the abstract interpreter, is language agnostic and can live in OMR. We also had a pull request open. I don't believe we've merged it, but there was a pull request that started an abstract interpreter for trees.

B

Could you just provide a brief summary? How is the throughput? Is it on par with, better than, or worse than the current inliner in OpenJ9, which has been tweaked and tuned for the better part of about 20 years? How does it compare in terms of the amount of compile time it uses, and how does it compare in terms of the amount of memory that it consumes during compilation?

C

The memory has not been tested. Compared with the current OpenJ9 inliner, the run time increases by around one to five percent; it varies because the run time may be affected by some other factors on the performance server. But the compilation time is reduced by around, I think, 18 to 20 percent, and the code size is also reduced by around 18 to 20 percent, compared with the current OpenJ9 inliner.

D

When you ran these benchmarks, was it mostly hot and scorching methods that were running? I mean, the default inliner that runs at warm is cheaper than what runs at higher opt levels. I just wanted to get a sense of what those numbers actually meant: whether they meant how much better the new inliner was versus hot and scorching, primarily because that's what took all the time, or did they say anything about the warm-level inliner?

B

Okay, so for DaCapo, the throughput performance is dominated by hot and scorching methods. There is a non-trivial amount of warm compilation, but because the time is aggregate, I think the dominant part of the compile time will be the higher-opt-level compiles. There hasn't been a study done recently on something that's warm only. The code size, again, is probably dominated by the higher-opt-level compiles, just because they're the biggest ones. So is it a...

B

...once it's chosen enough things to inline that it's run out of budget. So it sort of conserves time by pruning the search space, whereas this inliner will search the whole search space to try and pick the optimal inlining plan. And for the sake of comparison, we're running them with equal budgets, or roughly what?

B

Yeah, so in the embodiment in OpenJ9, they both use the number of bytecodes as their unit of size and their unit of budget. Now, it's not possible to do a complete apples-to-apples comparison, because the current inliner in OpenJ9 increases and reduces size based on perceived benefit, so it's possible to fit more methods into the budget when you've artificially shrunk them, whereas the new one will keep the budget fixed but look for the best benefit that will fit.

A

Oh, thanks. So I don't think anything's been done with InterpreterBuilder since the work that Robert, Charlie and yourself were involved in. There is actually a method inliner in JitBuilder right now, which InterpreterBuilder is based upon. There is an actual way you can call: if you call with a MethodBuilder as the target, it can...

B

Yeah, whether you're going to include frequency as part of your benefit score for an optimization found within a procedure is left down to the bit of code that recognizes that opportunity. At the moment, I don't believe it's scaling using frequency. The frequencies are used to scale the call sites, to adjust the perceived benefits as you traverse up and down the IDT.
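One way to picture scaling call sites by frequency is to multiply the call ratios along the path from the root and apply the result to each callee's benefit. This is a hedged sketch, not the contributed code: it assumes the IDT is a tree of call sites, and `IDTNode`, `call_ratio`, `static_benefit` and `scaled_benefits` are hypothetical names with invented numbers.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class IDTNode:
    name: str
    call_ratio: float      # assumed: frequency of this call site relative to its caller
    static_benefit: float  # assumed: benefit found for the callee in isolation
    children: List["IDTNode"] = field(default_factory=list)


def scaled_benefits(node, path_ratio=1.0, out=None):
    """Scale each node's benefit by the product of call ratios from the root."""
    out = {} if out is None else out
    ratio = path_ratio * node.call_ratio
    out[node.name] = ratio * node.static_benefit
    for child in node.children:
        scaled_benefits(child, ratio, out)
    return out


root = IDTNode("root", 1.0, 0.0, [
    IDTNode("a", 0.5, 10.0, [IDTNode("b", 0.2, 30.0)]),
    IDTNode("c", 0.9, 4.0),
])
print(scaled_benefits(root))
```

Under this assumption a deeply nested call site with a large benefit can still rank below a shallow, frequently executed one, because every ratio on the path discounts it.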

C

Okay, sure. So the contribution will be divided into three phases. Phase one is contributing the basic classes for doing the abstract interpretation: abstract value, abstract state, abstract local variable array and abstract operand stack. After phase one has been contributed, we will be contributing...

B

So the project that I'd worked on with CANOSP on a tree interpreter was intended to try and provide the abstract interpreter you would need in OMR. The two students that were working on that had quite a bit of success, but only got an interpreter that was working on single basic blocks for a subset of the trees.

B

If that community is interested, I would imagine it would probably not be the default inliner for them to start with, but it would certainly be enablable under an option or something like that. I think that would be the plan. It does make testing it in the context of OMR a little bit more challenging until that abstract interpreter for trees exists.

A

Right, so I think that's an area where we'll want to spend a little bit of time thinking about how we can bring this code in and have it be tested in some way, shape or form, just so that we can ensure, as we go forward, that it doesn't get broken. I would prefer that it not only get tested as part of OpenJ9, for example, which is kind of the obvious way it would get tested.

B

Yeah, so I've marked myself on those just because I'm interested in them, but we do need external review. I'm too close to these to do the review properly myself, and there's too much code here, I think, for one person. I think we need a few people to look at it, and I would especially like input from the likes of Philip and Leo and Ben and other people who have done a lot of work with testing in the context of OMR.

B

I'm certainly not an expert on that, and I think that your expertise and penchant for good software engineering would be very appreciated in trying to come up with a way that we can get the testing going with the lowest overhead possible to Jack, since obviously he's got other things on his plate as well. But I would hate for all of this work to not be able to be contributed because of difficulties getting the right tests and things.

A

Well, from my perspective, this is filling a fairly big gap in the OMR compiler infrastructure, and from the performance results that you've talked about, it sounds like it's filling it with something that's actually already reasonably effective. So I'm definitely in favor of seeing this in the code base, and I just want to say thank you to Jack and Andrew. I know you guys have spent a lot of time working on this, and others in the past on that project.

A

So don't get your hopes up too much, but there are a few things that I've managed to incorporate into the JitBuilder 2 compiler. I guess I call it a compiler; it's about 7000 lines of code right now, so that's a pretty small compiler, but it's built on top of the OMR compiler and JitBuilder itself, so it leverages all of that infrastructure.

A

Unfortunately, I haven't done the code generation for function calls yet, so you can't actually generate a method of a FunctionBuilder that calls anything yet. You can generate the IL for it; you just can't generate code for it. I have also generalized some more of the infrastructure that makes up what an operation has. Anyone who's familiar with...

A

So the thing that I've been spending the most time on is something that allows you to debug JitBuilder IL, so you can debug at the level of the JitBuilder IL calls that you're making. If you do a Load, you can look at the result of the load. If you do an Add, you can look at the operands of the add and at the value that the add creates, and you can basically single step and do all kinds of things.

A

So it would compile all of the JitBuilder calls that you make all the way down into native code, and it would hand you back an entry point that you could then run as a native function. The way the debugger works, you can call a different entry point, create debugger, I think I called it something like that, and you pass it the FunctionBuilder, and then what it does...

A

...is it hands you back a native entry point that is essentially a thunk into a debugger that is tied to the intermediate language, the IL, of the FunctionBuilder. What that means is that the interface to using the debugger is the same as the interface that you use for generating native code. So it allows you to basically just step into a function and debug it at the JitBuilder level, as if it was being called. Well...

A

It actually is being called at run time on the data. You call this function as if it's a function entry point, as if it's the target function, so you pass it its arguments and those get passed in as parameters that are then accessible inside the debugger. So you can see what's going to happen when you run that code.

A

Those in turn make calls down into the JitBuilder layer, which then calls down into the OMR compiler layer, which builds OMR compiler IL, which doesn't look anything like the JitBuilder calls that you made. That then proceeds through the obvious compiler passes that the optimizer performs on all of that code, and eventually it spits out native instructions.

A

But it's a lot of steps between the code API that the developer is using and the actual output that you're getting, so it can be hard to make sure that you're doing all the right things. So the idea was to provide a way for people to test just the IL that they're creating, so it would look like the code that they wrote.

A

So what I'm going to do is, let's see, we'll just look at this thing. This is the standard matrix multiply JitBuilder code; I hope everyone can see that. This is just a refresher for people. There are three for loops, the standard i, j and k loops, and then the standard running sum through a of i, k and b of k, j, collected in a sum variable.
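For readers without the screen share, the loop structure being described is the textbook triple loop. The sketch below is plain Python rather than JitBuilder code, and the function name `matmul` and the list-of-lists layout are illustrative assumptions.

```python
def matmul(a, b, n):
    """Multiply two n-by-n matrices given as lists of rows."""
    c = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            total = 0.0  # the running "sum" variable
            for k in range(n):
                total += a[i][k] * b[k][j]
            c[i][j] = total  # store the collected sum into the output matrix
    return c


print(matmul([[1.0, 2.0], [3.0, 4.0]], [[5.0, 6.0], [7.0, 8.0]], 2))
```

The JitBuilder version expresses the same three loops and the same accumulation through builder calls instead of source-level loops.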

A

So I don't expect you to absorb all this; don't worry about it. I'll show you a different representation of this, but I just wanted to show that this is the kind of code that you write in JitBuilder. You're writing things like ForLoopUp, you're doing Load, you're creating ConstDouble, you're doing Add and Mul, things like that. That's the kind of interface that you're used to. I've actually already compiled this, so I'm not going to bother doing that.

A

Here, the method is just the FunctionBuilder that gets built, the one that builds all of that code that I just showed you. You can ask for a debug entry with a prototype type, which is something I borrowed from how the Tril compiler works, I think, and this will hand you back an entry point that you can then just call somewhere down here.

A

You can just call test of the output matrix, the two input matrices and n. So you just call it like it's a function, but what that actually does is pop you into the debugger. I'll show you what that actually looks like. I'm going to just run it. All it's going to do is go through all of that code, and it's eventually going to call the test function.

A

Oh, I can't see that. There we go. So you can see, once it gets down to invoking the compiled code, the JitBuilder debugger, jbdb, pops up and wishes you a happy debugging session. It tells you which arguments got passed in for all the parameters and what their values are. These are just addresses, and n is four, so this is multiplying two four-by-four matrices to generate another four-by-four matrix, and it automatically stops at the beginning of the program.

A

So if you use gdb you'll be fairly familiar with these things. You can ask for help. You can get a list of the current IL, so you can get it to print out what the current method's IL (that should be FunctionBuilder, the FunctionBuilder's IL) looks like. You can single step through code. You can do next to jump over a full operation.

A

You can continue, to just let it run until the next breakpoint. You can print out values, you can print out types, and you can print out symbols, like names of local variables. You can set breakpoints and list breakpoints. You can break before an operation or before a builder, you can break after an operation, and you can also break at a particular time. Part of the output here is the time that has run; time is just measured in number of operations.
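The stepping model described here, with breakpoints before an operation, breakpoints at a time, and time counted in executed operations, can be sketched with a toy interpreter. This is not jbdb: `ToyDebugger`, its flat operation tuples and its two opcodes are hypothetical, chosen only to show the control flow.

```python
import operator


class ToyDebugger:
    """Interprets a flat list of (op_id, opcode, dest, args) operations."""

    OPS = {"add": operator.add, "mul": operator.mul}

    def __init__(self, operations, params):
        self.operations = operations
        self.values = dict(params)   # named values, seeded with the arguments
        self.pc = 0                  # index of the next operation to run
        self.time = 0                # number of operations executed so far
        self.break_before = set()    # operation ids to stop in front of
        self.break_at_time = set()   # times to stop at

    def step(self):
        """Execute exactly one operation."""
        _, opcode, dest, args = self.operations[self.pc]
        if opcode == "const":
            self.values[dest] = args[0]
        else:
            self.values[dest] = self.OPS[opcode](*(self.values[a] for a in args))
        self.pc += 1
        self.time += 1

    def cont(self):
        """Run until a breakpoint is reached or the operations run out."""
        while self.pc < len(self.operations):
            self.step()
            if self.pc < len(self.operations):
                next_id = self.operations[self.pc][0]
                if next_id in self.break_before or self.time in self.break_at_time:
                    return "break"
        return "done"


ops = [
    (0, "const", "two", (2,)),
    (1, "mul", "prod", ("x", "two")),
    (2, "add", "sum", ("prod", "y")),
]
dbg = ToyDebugger(ops, {"x": 3, "y": 4})
dbg.break_before.add(2)
print(dbg.cont(), dbg.time, dbg.values["prod"])
print(dbg.cont(), dbg.values["sum"])
```

Because `cont` always executes at least one operation before checking breakpoints, resuming from a breakpoint makes progress instead of stopping again at the same place.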

A

So we're sitting here right now before this load of a. Again, it's very similar to what people expect to see. Why don't we just print out the whole JitBuilder IL that we've got here. There's a little bit of a problem here: the debugger itself creates a bunch of types and then adds them into the type dictionary.

A

Oh, actually, it is. Right, and what the parameters are, what their types are, and what local variables have been defined as this function was created. Then it goes and shows you what the operations are. So when you call this thing, it's going to go through a set of operations, and it just prints them out, kind of like this. I think I've...

A

So I've shown in a previous meeting what JitBuilder 2 IL looks like. In this builder, it's basically just loading a bunch of parameters, and then it does the outer for loop and then returns. That's what the outermost builder object does. Inside this for loop, you can see it's collecting the values together: v4 is zero and v3 is n, so this is zero to n by one.

A

Then you can go and look at what the b1 builder does. It basically just sets up the second nested loop and then goes into b2. b2 again initializes the sum to zero (that should be printing out as a double, but didn't) and then creates the inner loop. After the innermost loop runs, it collects together the sum variable and stores it into the output matrix, and then, you know...

A

Finally, b3 is the innermost loop. It's doing all of the loading of the values and collecting the sum: load sum, multiply the two things together, add it to the sum and keep going. So that's what the IL looks like. Then we can single step. We can go and print a bunch of things, so we're basically single stepping through the operations of the function, and then we can do...

A

So there's a bunch of things like that that you can do. If we keep going here, eventually we get to the for loop. Now, if I hit next here, it's just going to do the whole for loop and get to the return, so that's not very interesting. Instead, we can single step inside, and then we can start executing inside the for loops as they go.

A

v13, we can see, is double 1, so double 1 is the value that it loaded out of element zero, zero of the a array, basically. If you keep going, it indexes into the b array and loads that one too. You can see that that one is zero, so we're going to get a very unexciting value out of this particular row.

A

I don't think that worked, but anyway. All right, did I get the wrong... break before, oh, 26. There we go. So then we can do a continue, and then it's executed up until that operation has run. At this point sum has the value six in it. It does a bunch of other stuff and keeps going. So anyway, that's just kind of interesting stuff. Eventually it will...

A

Too many of these things. Okay, I give up; it will eventually complete and run. I don't have anything to delete a breakpoint right now, so it wasn't included in the list of commands. You can see all the breakpoints that you've got, but you can't delete one, unfortunately. So eventually this thing, there we go, will complete, and it basically returns and shows the execution of the thing.

A

So that's my demo, which I created on the fly, so I apologize if it wasn't very polished. As for the direction that I'm going in: right now, the debugger implementation is kind of a one-off that I did, so it basically duplicates all of the code to simulate how to do an Add, how to do a Load, how to do a LoadAt and IndexAt, and so on.

A

When you add a new operation: remember, one of the things that I'm trying to build into JitBuilder 2 is the ability for people to create their own operations and create their own types with relatively little fanfare. If you have to go and implement a code generator for a new operation and then also implement an interpreter for it, that feels like a bit of wasted effort to me. So what I'm trying to do is create a...

A

It is essentially going to compile a handler for each kind of operation and then insert some additional control flow, for which it's going to use actual compiled FunctionBuilders, to essentially create all of the interpretation that's needed in order to be able to single step through that code and get the same result. So it will use the code generator that you implement for any new operations. You only have to implement it once, and then by manipulating what code is in the builders...

A

...automatically, I believe, generate the debugger that provides all of this support for using it. And that's pretty much it. I did also spend a little bit of time looking at creating a data flow engine for this, but I haven't made enough progress there to really report success. It's mostly mechanical things that need to get done.

A

I haven't really figured out the right way to incorporate it quite yet, so if anyone has suggestions: I could create a JitBuilder 2 directory in parallel with JitBuilder, or I could create it as a subdirectory of JitBuilder, which is kind of what I have right now. I have it in a jbil directory, or JitBuilder IL, inside the current JitBuilder directory of my private repo.

A

But I guess my plan is to clean up this debugger and get to the point of being able to contribute this into the project and, you know, hopefully get people's feedback and thoughts on it. I'll stop there, because I've been talking for a long time. Let's see if anybody has any other questions or whatnot.

A

Right now, at the beginning of an operation, it just checks a list to see if there's an active breakpoint that should cause it to break out into the debugger user interface. So it's not done in any kind of smart way, but it's interpreting anyway, so I'm not sure it will get any smarter than that. Performance is not necessarily the topmost concern in my head right now. So yeah, I just did the dumb...