From YouTube: OMR Architecture Meeting 20200924

Description

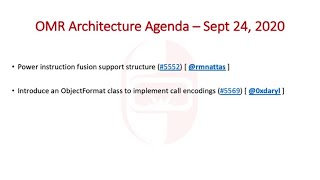

Agenda:

* Power instruction fusion support structure (#5552) [ @rmnattas ]

* Introduce an ObjectFormat class to implement call encodings (#5569) [ @0xdaryl ]

A

Welcome, everyone, to this week's OMR architecture meeting. Today we have two topics; they're actually both compiler topics. The first one is a bit of an architectural discussion around Power instruction fusion support that Abdul Rahman will take us through. So why don't we start with that one?

C

Okay, can everyone hear me? Yep, okay. So for Power, starting with P10, we have this new instruction fusion feature, and this support structure hopefully allows OMR to take advantage of it by knowing which instructions can fuse and which cannot. I can show the PR, maybe just...

C

C

...if the target of the first instruction is one of the sources of the second instruction. But that fusion does have to be issued twice, because there are two target registers that need to be set. If it uses the same target register, it's only issued once. So the ideal goal is to reuse the same register as the target while following the conditions to fuse.

C

C

Maybe it should be in the design: for the immediate, either there's no condition or there is a condition, because some pairs require the immediate to be 0, 1, or -1, and I think even 63 in one of the shift fusion conditions. Also, for formats, there are some conditions where instruction one can be add or subtract, so multiple instruction-one formats can fuse with many instruction-two formats. So you have, say, two instruction-one formats specified that can fuse with any of four instruction-two formats, and that gives eight possibilities.
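To make the counting concrete, here is a minimal sketch of the two ideas just described. It is not the code from the PR: `ImmCondition`, `expandPairs`, and the integer format ids are hypothetical names chosen for illustration. It models an optional immediate condition and shows how two instruction-one formats combined with four instruction-two formats expand to eight explicit pairs.

```cpp
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

// Hypothetical: a pair either places no condition on the immediate,
// or requires one specific value (for example 0, 1, -1, or 63).
struct ImmCondition {
    bool required;
    std::int64_t value;
    bool accepts(std::int64_t imm) const { return !required || imm == value; }
};

// Every instruction-one format may fuse with every instruction-two format,
// so the explicit pair list is the cross product of the two format lists.
inline std::vector<std::pair<int, int>> expandPairs(const std::vector<int>& firstFormats,
                                                    const std::vector<int>& secondFormats) {
    std::vector<std::pair<int, int>> pairs;
    pairs.reserve(firstFormats.size() * secondFormats.size());
    for (int f1 : firstFormats)
        for (int f2 : secondFormats)
            pairs.emplace_back(f1, f2);
    return pairs;
}
```

Storing the expanded pairs costs memory but keeps each entry self-describing, which is the trade-off raised in the discussion.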

C

This is maybe a trade-off between the memory usage of this structure and maintainability. If we split the formats into a separate list and have the structure point to those formats, it's going to be harder to maintain, with an extra pointer to follow. So maybe the memory usage is still worth it.

C

Source registers are interchangeable. For instructions with one target and two source registers, most, if not all, of the pairs I have seen allow fusion whichever source position holds the register. In the example, rx here could be in the A or in the B position, so it allows both in almost all cases.

C

So we don't want to register every fusion pair twice, because that would be a lot of memory. We can have maybe a boolean that tells us it can be here or here, and that both work for the fusion. And then we're assuming two instructions, and fast access to the structure, given that, as Julia mentioned, the gain from instruction fusion is not big.
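A minimal sketch of the boolean idea, assuming hypothetical names (`Instr`, `FusionRule`, `feedsSecond`) and plain integers for register numbers; none of this is taken from the PR. A single table entry carries a flag saying the two source positions are interchangeable, instead of the pair being registered twice.

```cpp
// Hypothetical register-level view of an instruction: one target, two sources.
struct Instr {
    int target;
    int srcA;
    int srcB;
};

// One table entry covers both operand orders when the flag is set,
// instead of registering the same pair twice.
struct FusionRule {
    bool sourcesInterchangeable;
};

// True when the first instruction's target feeds the second instruction
// in a source position the rule allows.
inline bool feedsSecond(const FusionRule& rule, const Instr& first, const Instr& second) {
    if (second.srcA == first.target)
        return true;
    return rule.sourcesInterchangeable && second.srcB == first.target;
}
```

With the flag set, a second instruction that consumes the first target in either source position matches the same entry.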

C

C

D

Well, I'm just trying to get my head around what it is that you're wanting to try and do. Are you proposing some kind of instruction scheduling, with some constraints on the register assigner? Or are you trying to change how you generate the instructions? I'm just trying to get my head around exactly what it is that this is proposing.

C

C

If I go to the code, for example, I have a structure, a fusion pair, that holds instruction one, instruction two, and the instruction-one and instruction-two formats. And if I go to an example... okay, so it's just recording that this instruction and this one fuse. It's just a structure to hold which instructions can fuse. Then whatever else in OMR can use this structure to know which ones fuse, so we can do something with it.

C

A

C

So this structure can also be used in register assignment, to assign the best register for fusion, and then for a peephole. The idea I have is a reorder, because the fusion window is just consecutive instructions. So basically the whole idea was to reorder the instructions that can fuse so that they do fuse, but it can also be used in register assignment to assign the best register for fusion.

C

So mainly it should be an instruction scheduler, but as far as I know, there's no instruction scheduler. The next things in mind were a peephole and register assignment. I'm not sure if there's something else that can take a holistic look over it before that, because when nodes are converted to instructions, a set of nodes goes to a set of instructions. Is there something else that can be done here?

A

E

C

Because for Power, at least in P10, there are maybe 300 pairs, or, if we count the interchangeability as a separate pair, about 600 pairs. So it's a fairly large number of pairs that can fuse. So the idea is having a structure that holds all of these, and then a peephole or whatever else can use the structure to optimize the code.

C

My proposed design is similar to instruction properties and how that is structured. So I have a fusion format, an instruction format that holds the target, the sources, and so on. That's for one format; I'm just working on one format right now. Then I put two of these formats in one pair, and then I have a list of pairs.
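The layering described here (a format per instruction, two formats per pair, a list of pairs) could be sketched as follows. This is an illustration under stated assumptions, not the structure from the PR: the type names, the fields, and the `opA`/`opB` opcodes are all hypothetical.

```cpp
#include <cstdint>
#include <vector>

// Hypothetical opcode ids; a real table would use the code generator's opcodes.
enum class OpCode : std::uint16_t { opA, opB, opC };

// Operand shape of one instruction as far as fusion matching is concerned.
struct FusionFormat {
    OpCode opcode;
    std::uint8_t numTargets;
    std::uint8_t numSources;
    bool hasImmediate;
};

// Two formats, in program order, that the hardware can fuse.
struct FusionPair {
    FusionFormat first;
    FusionFormat second;
};

using FusionPairList = std::vector<FusionPair>;

// Linear scan: fine for a sketch, slow for hundreds of pairs.
inline bool canFuse(const FusionPairList& pairs, OpCode first, OpCode second) {
    for (const FusionPair& p : pairs)
        if (p.first.opcode == first && p.second.opcode == second)
            return true;
    return false;
}
```

The order of the pair matters: an entry for (`opA`, `opB`) says nothing about (`opB`, `opA`).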

C

A

C

Okay, yeah. So I'm hoping to add more formats and whatever else is needed, or get a better idea. The idea in my head is having a hash table, because that's much quicker. The hash key would be the opcodes of instruction one and instruction two, because we want this structure to be quick, given that the gain from fusion itself is not that big.

C

We need the structure to be fast, so with a hash table for access I'm trying to limit the search space for pairs, and then comparing to find whether a pair matches or not. Say a peephole goes through the instructions: it takes an instruction and looks in the hash table. Is there a pair that matches with the one after it, for example? Or it looks ahead over a window of, for example, 20 instructions.
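A minimal sketch of the hash-table idea, assuming 16-bit opcode ids and hypothetical names (`FusionTable`, `FusionInfo`, `makeKey`); the actual key layout and table type in the PR may differ. The two opcodes are packed into one 32-bit key so a lookup is a single hash probe rather than a scan over every pair.

```cpp
#include <cstdint>
#include <unordered_map>

using OpCode = std::uint16_t;  // assumption: opcode ids fit in 16 bits

// Hypothetical per-pair payload.
struct FusionInfo {
    bool sourcesInterchangeable;
};

// Pack (first, second) into one key; the order of the two opcodes matters.
inline std::uint32_t makeKey(OpCode first, OpCode second) {
    return (static_cast<std::uint32_t>(first) << 16) | second;
}

class FusionTable {
public:
    void add(OpCode first, OpCode second, FusionInfo info) {
        table_[makeKey(first, second)] = info;
    }
    // Returns the pair's info, or nullptr when the two opcodes do not fuse.
    const FusionInfo* find(OpCode first, OpCode second) const {
        auto it = table_.find(makeKey(first, second));
        return it == table_.end() ? nullptr : &it->second;
    }
private:
    std::unordered_map<std::uint32_t, FusionInfo> table_;
};
```

A table keyed this way answers "do these two fuse?" directly, but listing every partner of a given opcode would need a second index.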

C

C

Because we can point from instruction one to many instruction twos, but if we want to go the reverse way, that could be an issue. We can have a structure that finds all the instruction twos that can fuse with a given instruction one. But the other way, going from instruction two to instruction one, I'm not sure if it's something that we want. Having it general enough that it does both would be better, but maybe we...

C

F

F

C

C

Yeah, I haven't thought a lot about how I'm going to use it, because I'm thinking of having it as a general structure for peepholes or others to use. But if we talk about a peephole, maybe it goes over the instructions, and for each instruction it looks down a window of, let's say, 20 instructions. It checks whether there's an instruction that could fuse with the first instruction and, if possible, reorders them so they fuse.
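The window scan described above could be sketched like this. It is a deliberately simplified illustration with hypothetical names (`fuseByReordering`, `FusiblePairs`) and integer opcodes: it only looks at opcodes and ignores register and memory dependencies, which a real pass would have to check before moving any instruction.

```cpp
#include <algorithm>
#include <cstddef>
#include <set>
#include <utility>
#include <vector>

using OpCode = int;
using FusiblePairs = std::set<std::pair<OpCode, OpCode>>;

// For each instruction, look ahead up to `window` instructions for a fusion
// partner and move the partner directly behind it. Returns the number of
// pairs that ended up adjacent. Dependency checks are intentionally omitted.
inline std::size_t fuseByReordering(std::vector<OpCode>& code,
                                    const FusiblePairs& pairs,
                                    std::size_t window) {
    std::size_t fused = 0;
    for (std::size_t i = 0; i + 1 < code.size(); ++i) {
        const std::size_t limit = std::min(code.size(), i + 1 + window);
        for (std::size_t j = i + 1; j < limit; ++j) {
            if (pairs.count({code[i], code[j]}) == 0)
                continue;
            // Rotate code[j] into slot i + 1, shifting the skipped ones down.
            std::rotate(code.begin() + i + 1, code.begin() + j, code.begin() + j + 1);
            ++fused;
            ++i;  // the partner is now part of a pair; do not reuse it
            break;
        }
    }
    return fused;
}
```

The cost is roughly the number of instructions times the window size in lookups, which is the compile-time overhead weighed against the expected gain in the discussion that follows.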

D

D

If the benefit is marginal... I mean, if you can show that there's a good benefit, then complexity is warranted, but I think we just need to be a little careful about the trade-off there. If we design something really complicated, and the instructions get reordered and changed after they've been selected, that makes debugging harder, and yet it may not give us anything. That's one concern that comes to my mind.

A

I was going to say much the same thing, because earlier you mentioned that you didn't expect to see much performance from this. So the question I had in my mind as you were going through this was: how many opportunities do we think we're actually going to find with this kind of analysis? Because if the opportunities are going to be fairly few and the analysis is somewhat expensive, then it probably isn't a great trade-off, and you may want to save this for only the most...

C

True, yeah. I don't believe it's going to give a huge gain, but I'm not sure whether it's going to be less than break-even, or whether it's just going to cost more than it gains. So that's something I'll look into more: how much it's going to cost versus how much we're going to gain from it.

C

A

Yeah, I mean, one of the reasons I had mentioned that is that we used to have an instruction scheduler on Power and on Z prior to open sourcing. One of the motivations behind not open sourcing it, and not consuming it in the open products, is that we didn't really measure a whole lot of benefit from it.

A

It was doing a lot of analysis, but in the end we could tolerate losing the very minimal amount of performance that we were getting from it, versus the complexity of doing all that analysis. So I'm hoping that this feature isn't going to fall into that category. I'm hoping we might see more from it, but it certainly is something to keep in mind as you proceed with the design.

C

Yeah, with the design I tried as much as I can to keep it abstract, to allow for future fusion pairs. Maybe the window is going to expand. I don't know if there's going to be fusing of more than two instructions; I don't know what the future is going to bring. So I'm trying as much as possible to keep it general enough while at the same time having it work.

C

Okay, and I'm guessing maintainability is a good thing to have, too. As for memory usage, I think for this simple structure it's going to be around five or six kilobytes. I know OMR wants as little memory usage as possible, but would that be a big effect?

D

A

Okay. The other thing is, if there are certain kinds of code that would tend to produce more fusion opportunities, then perhaps limit it to that kind of thing. So, for example, I don't know if this applies to floating point or not, or if it's only just for GPRs, but if it did apply to floating point, perhaps restricting it to methods that had floating point, or to blocks that had floating point, would be the way to do it.

C

G

A

C

A

Yeah, again, it would come down to how many opportunities there are. You may find more opportunities by widening the field of search and considering all blocks, but from an implementation point of view, it may be a lot easier just to focus on one block, or one extended basic block, at a time.

G

C

D

Yeah, those two things end up looking very different most of the time. If you're going to go across blocks, then you have to worry about things like the global register assignment that might have happened, and various control flow issues and all kinds of other things. So if you're trying to keep this simple, extended basic blocks would be where I would start.

B

D

Or else, I mean, if you're considering designs, there are things other than peepholes that can achieve this kind of thing. I don't know if you've considered any of those, or how well they would fit with what you're trying to do, but I mean things like bottom-up tree rewriting and various other kinds of techniques that can be used to produce optimal instruction sequences, if there's enough benefit to be had from the fusion.

D

C

Yeah, that's one of the things I looked at. I tried just writing some simple Java code to see what the compiler already does, whether the reordering and the trees already move things near each other in a good way. So yeah, that's a good idea; maybe I'll look into that too.

E

F

D

Right, but I mean, the raw number of fusions is not the point. You could implement all this and simulate how many things might fuse, but the number of fusions does not determine the performance. The fusion may give a large performance benefit, or it may provide only an incremental performance benefit, and if it's only incremental, even if you have millions of them, you may not really be able to observe it.

C

D

I guess that comes down to how much development effort you want to invest in something that may or may not pay off. That's outside this discussion. It's just a pointer that, in making an argument for this kind of complexity, a raw number of fusions is probably not going to be sufficient for all of the different people who would be reviewing this and weighing up the pros and cons of the contribution.

D

A

Has this feature been implemented in any static compilers yet? So, for example, does GCC have P10 instruction fusion support? Because presumably, if you could get access to a Power 10 machine (they're probably fairly hard to find), you could possibly do some performance runs with and without that feature enabled on C code or C++ code, just to get a general sense of what kind of performance you'd expect in general-purpose code. I'm not sure if that's possible or not.

C

D

A

...concept. So the code that you end up producing lives in a code cache, and it's expected to be executed dynamically. That works just fine for many dynamic languages. It works fine for Java and for other applications that we've moved this code into. It's been a good solution. But then we start to think about using this in other contexts like static compilation, where you want to be able to use it in a static compiler to, let's say, generate an object file. So you want to produce something that's an ELF object file.

A

What you would really have to do is go around to a lot of the different places where we're generating calls or accessing data, that kind of thing, and generate a different instruction sequence, potentially, depending on the actual target that you're producing. It's certainly possible to go around to every single place that's emitting one of these pieces of code and do something different there. But what I was trying to think of was a cleaner way of representing this.

A

So what I came up with is something that I've been calling an object format, for lack of a better name. Really, the intention here is to look at places where we are generating calls. I'm just starting with calls for now. This certainly would extend to data as well at some point, but I'm just starting with calls, which is the main thing that I'm interested in to begin with.

A

How can we abstract what it is that we're doing at all the different places where we're generating calls, so that we can change the instruction sequence depending upon the target that we're trying to target? So if I'm generating an object file for ELF, it needs to generate stuff that cares about the PLT and perhaps a global offset table. If I'm generating stuff for a JIT compiler, maybe what we have right now works just fine. So I'm trying to hide a lot of that decision.

A

A

One thing I didn't mention before is that we also make assumptions: we impose decisions on consumers of OMR about things that they may not want to make a decision on. For example, the way that the code is written right now assumes you're generating code into a code cache, and it also assumes that you're going to need to care about trampolines when you're calling other methods or calling natives or things like that. That may not be something that is important to you, or even applicable to you.

A

So what I'm thinking about is what we can do for the whole call family of instructions. This doesn't just apply to calls that come from the IL. This could be any kind of call that's being generated from the code that you're generating. It could be to a native. It could be to an entry in another shared library. It could be to a VM method. It could be to a helper, something like that.

A

They're all different kinds of calls, and potentially they would each need a different kind of encoding depending upon the target environment. This isn't about the linkage. The linkage is really about the calling convention that's being used for one function to call another. This is really just about the encoding of the instruction, and the things that the target environment requires us to do in order to encode that call.

A

A

It's going to provide APIs that must be implemented by specialized object formats, and, depending on whatever makes sense for your language environment, you install the right object format for that. Just as some examples I'm giving here: a JIT code object format, again for lack of a better name, that we could use for dynamically generated code that's executed in place. This is pretty much what we have right now, and anything that we do right now would fit into that kind of object format.

A

A

I expect the initial API, if we're just focusing on calls, to be quite light to begin with. At this point I'm thinking of just a couple of different kinds of calls. One I've been calling a global function, again for lack of a better name. It's basically any kind of call to something that may not be in the code that you're generating.

A

So this would be a call to a native, or something in the VM, or something like that. And then there's the notion of calling something within the code that you're generating, or within the code cache of something that you're generating. That would be what I call a code cache function call. It's certainly possible that you would get different encodings for these two different kinds of targets, but they could also be very much the same. They could generate exactly the same kind of code for both.

A

Even though they are very similar in terms of the logic, the way that they actually implement different kinds of function calls is very different, and the requirements each of those sites has for deciding what to do are quite different. There are similarities: they usually start with a symbol and they have a node. But beyond that, there are lots of other pieces of information that each back end uses in order to make the right decision on how to do the call.

A

So the way that I solved that was to introduce another kind of structure that these object format function call functions would take as their parameter. I've just been calling it a function call data class. Every architecture can specialize that data structure the way it wants and populate it with the data it needs in order to emit its function calls at these different sites. So that in itself is going to be another extensible class.

A

I'm going to show you that code in just a sec, and you can see how ugly it starts to get under the covers. Going forward, I don't necessarily think it has to be that way. I think that over time we can certainly clean up and reduce the amount of information that's required at each kind of call site, and perhaps even common up some of that across the different back ends. But just starting from the state of the world right now, there's a bit of...

A

I can see that as this code starts to target other static contexts where we need to deal with data, we're going to need to have some kind of solution in place for encoding the different kinds of memory references and that sort of thing. So what I'm going to do is give you an idea of what some of this code might actually look like. I put together just an example.

A

A

So there are two different kinds of functions being introduced: one is an emit and one is an encode. The real difference between them is that emit will generate TR::Instructions. It'll add TR::Instructions to the instruction stream in order to emit the call, so it is useful prior to register assignment, like during tree evaluation. Then there's a corresponding one called encode, which is useful after register assignment, let's say during binary encoding or if you're generating snippets.

A

So I have two functions for that. I also have one for asking how many bytes are going to be emitted in this sequence, which is useful for code sizing purposes. Then I have a corresponding set of functions for calling functions in the code cache itself, and, like I said before, it's certainly possible that these functions map to the same kind of implementation as the ones up here. It really depends on your language environment.
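The emit / encode / length trio can be sketched as an abstract interface with one concrete format. This is only an illustration under stated assumptions: the class and method names are hypothetical stand-ins rather than the signatures in the PR, instructions are modelled as strings, and the concrete format assumes an x86 direct call (opcode `0xE8` followed by a 32-bit displacement relative to the end of the instruction) with the target in range.

```cpp
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

// Hypothetical stand-in for the call-site information handed to the format.
struct FunctionCallData {
    std::uintptr_t targetAddress;
};

// Hypothetical interface: one emit/encode/length trio per kind of call.
class ObjectFormat {
public:
    virtual ~ObjectFormat() = default;
    // Before register assignment: append instruction objects to the stream.
    virtual void emitGlobalFunctionCall(std::vector<std::string>& stream,
                                        const FunctionCallData& data) = 0;
    // After register assignment: write raw bytes, return the cursor past them.
    virtual std::uint8_t* encodeGlobalFunctionCall(std::uint8_t* cursor,
                                                   const FunctionCallData& data) = 0;
    // For code sizing: how many bytes encodeGlobalFunctionCall writes.
    virtual std::size_t globalFunctionCallBinaryLength() const = 0;
};

// Format for code executed in place, where the buffer address is the run address.
class JitCodeFormat : public ObjectFormat {
public:
    void emitGlobalFunctionCall(std::vector<std::string>& stream,
                                const FunctionCallData& data) override {
        stream.push_back("call " + std::to_string(data.targetAddress));
    }
    std::uint8_t* encodeGlobalFunctionCall(std::uint8_t* cursor,
                                           const FunctionCallData& data) override {
        const std::uintptr_t next =
            reinterpret_cast<std::uintptr_t>(cursor) + globalFunctionCallBinaryLength();
        // Assumes the target is within 32-bit displacement range of the call.
        const std::uint32_t disp = static_cast<std::uint32_t>(data.targetAddress - next);
        *cursor++ = 0xE8;
        for (int i = 0; i < 4; ++i)
            *cursor++ = static_cast<std::uint8_t>(disp >> (8 * i));  // little-endian
        return cursor;
    }
    std::size_t globalFunctionCallBinaryLength() const override { return 5; }
};
```

A format for a static object file would implement the same three functions differently, for example by leaving a relocation instead of a resolved displacement, and the call sites would not need to change. A matching trio for code cache function calls would sit alongside these.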

A

So that's that. Did I add any...? I don't think I actually specialized that. But once the concrete class is produced, so you have a TR::ObjectFormat, the expectation is that you can provide specialized versions of this. So here is a JIT code object format that extends the TR object format.

A

In this particular location, it doesn't actually provide anything other than the anchor point for that. The idea is that the JIT code object format would do exactly what the code we have today does. So whatever the implementation is, it's been repurposed into this format.

A

A

I put these in their own directory, though I guess it wasn't strictly required. I guess some of this could live in the codegen directory, but the number of object formats, if we're going to start to support a lot of these, is going to start to accumulate. Right now I've got ideas for a JIT code object format, I've got an ELF object format, and there's a Mach-O object format.

A

I've got hybrid ones as well, for example if you wanted to have an executable ELF format, where you generate ELF code but you also want to be able to execute that ELF code dynamically while you're generating it. We need a different format for that. So those are starting to accumulate, and I produced those in their own directory just to keep things a bit cleaner. But what I wanted to find was the x86-64 object format. So here is an AMD64 implementation of the JIT code object format.

A

...call. It does take this larger data structure, the function call data, and I'll show you how that gets populated in just a sec. What it really does is follow the logic that's currently there in the code to figure out what it is that I'm trying to call and how I call it. There are lots of pieces of information that it tries to draw from in order to do that, and it can find all of that information in this data structure.

A

So I certainly do think that some of this could be simplified and condensed, but at this point that isn't the purpose of this exercise. What this will end up doing, because this is the emit function, is go and produce TR::Instructions, and then it'll return the final thing that it actually ended up generating. Encode is pretty much the same. In many cases it can actually be much simpler than this.

A

A

It provides a number of different constructors, and a lot of the information is actually set by default; it isn't required at a particular point. As we start to spread this out throughout the code, you'll see that in some cases you have some of this information and in some cases you don't. So there are different constructors for those different kinds of applications, where, if you don't have the information, there will be a sensible default.
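The "several constructors with sensible defaults" idea might look roughly like the sketch below. The field names, the placeholder `Node`, `SymbolReference`, and `RegisterDependencies` types, and the two constructor shapes are assumptions made for illustration; they are not the members of the class in the PR.

```cpp
#include <cstdint>

// Hypothetical placeholder types for information a call site may have.
struct Node {};
struct SymbolReference {};
struct RegisterDependencies {};

struct FunctionCallData {
    // Every field has a default, so a constructor sets only what it knows.
    const SymbolReference* symRef = nullptr;
    const Node* callNode = nullptr;
    const RegisterDependencies* deps = nullptr;
    std::uint8_t* bufferAddress = nullptr;
    int helperIndex = -1;  // -1 means "not a helper call"

    // Call site that has IL context: a symbol reference and a node.
    FunctionCallData(const SymbolReference* sr, const Node* node)
        : symRef(sr), callNode(node) {}

    // Helper call: only an index and, optionally, register dependencies.
    explicit FunctionCallData(int helper, const RegisterDependencies* d = nullptr)
        : deps(d), helperIndex(helper) {}

    bool isHelperCall() const { return helperIndex >= 0; }
};
```

Because the object holds only pointers and small scalars, it can be built as a local on the stack at each call site and passed by reference to the object format.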

A

A

A

...an instance of this function call data, and we pass in the various bits of information that are all being used up here to make this decision. Then a reference to that data is passed into the emit global function call, which basically unpacks what it needs for this particular object format and does what it needs to do. So if you ever wanted to change this, going from JIT code to an ELF object format or to a hybrid approach, whatever it should be...

A

All you would really need to do is change the implementation of that object format in the code generator, and it should just happen transparently to you. So it's really getting rid of all this extra logic and specialized code for knowing: oh, I need to call directly, or I need to call through a register, or I'm calling this directly with a 32-bit displacement.

A

That kind of thing. It's getting rid of all that and hiding it underneath this global function call. That's one example. Here's another example within a tree evaluator; I think it's a helper call here. The initialization to call a helper is much simpler. You just need the index and perhaps some dependencies, and then away you go. So I'm just going to stop there just to see...

A

I do need to study that a little bit more, just to make sure that we're not incurring more of a compile-time performance overhead than we should be. Part of it comes down to reducing the number of things that we actually need to make a decision on, so that you don't actually have to store them into this data.

A

This is actually just a local object. It's stored on the stack here, so it gets cleaned up when it goes out of scope. It's not allocating any sort of scratch memory or anything like that. The data structure itself is pretty small; it's got to be something like 64 to 80 bytes. But the actual writing of all these different things into that data structure could potentially be problematic. Other than reducing the number of those, I haven't...

A

D

So one question for you, Daryl. One of the things that OpenJ9 is interested in at the moment, as part of value types being added to the Java language, is linkage optimization: taking objects that might normally need to be boxed and passed as a reference in the general case, and being able to optimize the linkage by passing values in registers where they'll fit. I was just wondering how you might see that kind of thing playing into this design that you're proposing.

D

It's not necessarily by call site; it might be by target. So if we've compiled a particular target in the JIT, we have a JIT entry point that will accept the value type being passed in registers, and the interpreter entry point will have to accept it boxed. So basically you may want to do different things depending on...

A

Linkages. Okay, so if you speculate on the target, you know what you're going to be calling, and you can therefore speculate on the linkage as well. Yeah. I mean, this deals with the encoding of the actual call, but every call site is actually just a pairing of the linkage and the object format.
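
One way to picture the pairing mentioned here, as a hedged C++ sketch. The interfaces and the `CallSiteConvention` struct below are hypothetical and far simpler than OMR's real `Linkage` and `ObjectFormat` classes; they only illustrate that how arguments are passed and how the call is encoded can vary independently.

```cpp
#include <cassert>
#include <string>

// Hypothetical minimal interfaces, assumed for illustration only.
struct Linkage
   {
   virtual ~Linkage() {}
   virtual std::string argumentPassing() const = 0;
   };

struct ObjectFormat
   {
   virtual ~ObjectFormat() {}
   virtual std::string callEncoding() const = 0;
   };

// Two example linkages: values in registers versus a boxed reference.
struct RegisterLinkage : Linkage
   {
   std::string argumentPassing() const { return "registers"; }
   };

struct BoxedLinkage : Linkage
   {
   std::string argumentPassing() const { return "boxed reference"; }
   };

// One example object format.
struct DirectCallFormat : ObjectFormat
   {
   std::string callEncoding() const { return "direct"; }
   };

// Hypothetical higher-level structure that keeps the two together for a
// particular call site or target.
struct CallSiteConvention
   {
   const Linkage      *linkage;
   const ObjectFormat *format;
   };

inline std::string describe(const CallSiteConvention &c)
   {
   return c.linkage->argumentPassing() + " via " + c.format->callEncoding() + " call";
   }
```

Where such a pair would actually live (for example on a symbol reference) is left open in the discussion; the sketch does not take a position on that.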

A

So we would almost need to have some kind of higher-level structure that actually paired them, or have that information on the symbol reference, perhaps, for a particular target. We'd have to find the right structure for keeping them as a pair, but I think we could do that.

D

Sort of yes and no. I guess I'm just trying to figure out how we would avoid an explosion of combinations that may end up being poorly tested in some situations, right? The value types implementation is going to want to pass values in registers by preference when it's possible, but in general the interpreter treats everything as boxed.

A

I mean, from a testing point of view, one of the things that we could do at every call site is to not just assume the linkage is whatever the code generator tells it; we would have to get a site-specific or target-specific linkage. But you could certainly do some testing based on that; you could provide...

A

Yeah, I'll give that a little bit more thought as well, just to clarify in my mind what you have in mind. But yeah, I think there are some extension opportunities even beyond global function calls; extending this for linkages as well could happen, I think.

H

So most of the information we will need to do those optimized calls with value types will, I believe, barring significant refactoring, be in the IL, not in the instruction stream. So my guess would be that all of that sort of handling would probably stay in the linkage.

A

Okay. I mean, the reason I left it as extensible is because it actually wasn't that much work to leave it as an extensible class. But like I said, I haven't really found a use for it yet. It's just providing a feature that may not be immediately useful, but it's not a huge amount of effort to provide that feature.

A

Okay. The one piece of feedback that I was hoping to get, if there are other ideas, was whether there was a different way of structuring the API. If you look at that FunctionCallData, it is a little bit ugly, and a lot of that is hidden from developers behind the different constructors.

A

But if you actually get into that code, it's kind of ugly, looking at all the ways that you can compose that. So I wanted to ask if there are any other suggestions on how to provide the myriad of information that's needed at these different call sites to determine how and what to encode.

H

So you basically have a variant of different tuples, where each variant corresponds to a different kind of call and only contains the information you need. There's no straightforward way of implementing that in C++, at least not in the versions of C++ we support. So yeah, I can't really think of a better approach right now.
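
To make the "variant of different tuples" idea concrete, here is a hedged sketch of a hand-written tagged union that avoids newer library features such as `std::variant`. The call kinds, field names, and helper functions are hypothetical and not taken from the OMR code.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical call kinds; each one carries only the data it needs.
enum CallKind { DirectCall, HelperCall };

struct DirectCallInfo
   {
   std::uintptr_t targetAddress;
   };

struct HelperCallInfo
   {
   std::uint32_t helperIndex;
   bool          needsSnippet;
   };

// Manual "variant": a tag plus an anonymous union of plain structs.
struct CallInfo
   {
   CallKind kind;
   union
      {
      DirectCallInfo direct;
      HelperCallInfo helper;
      };
   };

inline CallInfo makeDirectCall(std::uintptr_t target)
   {
   CallInfo info = CallInfo();
   info.kind = DirectCall;
   info.direct.targetAddress = target;
   return info;
   }

inline CallInfo makeHelperCall(std::uint32_t index, bool needsSnippet)
   {
   CallInfo info = CallInfo();
   info.kind = HelperCall;
   info.helper.helperIndex = index;
   info.helper.needsSnippet = needsSnippet;
   return info;
   }

// Accessor that checks the tag before touching the union member.
inline std::uintptr_t directTarget(const CallInfo &info)
   {
   assert(info.kind == DirectCall);
   return info.direct.targetAddress;
   }
```

The compiler does not stop code from reading the wrong union member, so the tag checks have to be written and maintained by hand. That is part of why this is not a straightforward replacement for a real variant type.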

E

We can get rid of some of that by partitioning. I saw some of the constructors have like 13 or 14 different parameters, or something crazy like that. We can for sure trim down the number of overloads we have of the FunctionCallData and kind of standardize that across the different codegens. But at the end of the day, all that the object format is doing is just querying the function call data, for example: what is the function address that I'm dispatching to, or something like that? It's not doing heroics.

A

So my next step here, if there aren't any glaring objections to anything that's being done, is to start to roll this out in stages. It obviously needs a bit of documentation; I think I've provided some here, but I could do a little bit more. Then I'll start to make some of the changes to the code to introduce it. It turns out there actually aren't that many places, at least in OMR.

A

Introducing this isn't going to break codegens that don't support it; codegens can adopt it as they need it. But I think that at some point it would be good to have all codegens converge to the same thing, and similarly in downstream projects like OpenJ9, making changes to the various calls that get emitted from the code cache and making them use global functions as well. So.