►

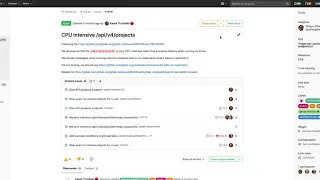

From YouTube: GitLab 12.6 Kickoff - Memory

Description

Memory Kickoff for the 12.6 release

A

Hi, I'm Josh Lambert, a product manager here at GitLab, and I'm going to walk through what we plan to do here in the Memory group for our 12.6 milestone. The Memory group is focused on improving the performance and reducing the resource consumption of our GitLab services. So we have a number of different issues planned to be addressed in 12.6. As you can see, there are quite a few of them.

A

Previously, project import used to consume a significant amount of memory, and in certain cases it would consume so much memory on larger projects that it would actually fail to complete. Most of the time, it would consume enough memory that it would get killed by the Sidekiq memory killer and fail to complete, and so we've been working to improve the performance and also reduce the memory consumption of importing larger projects. One of the key areas we identified is that we wanted to improve importing by using newline-delimited JSON.

A

So previously we had to import the entire JSON block. The whole project consumed a large amount of memory, and if we could use newline-delimited JSON, we can simply parse line by line, significantly reducing memory overhead, and so we're looking to deliver this here in 12.6.
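Editor's note: the line-by-line parsing idea can be illustrated with a minimal Python sketch. This is not GitLab's importer; the function name `iter_ndjson` and the sample records are assumptions made only for illustration. The point is that each line is a complete JSON document, so a reader only needs to hold one record in memory at a time instead of the whole export.

```python
import io
import json
from typing import Any, Iterator, TextIO


def iter_ndjson(stream: TextIO) -> Iterator[Any]:
    """Yield one parsed value per non-empty line of an NDJSON stream."""
    for line in stream:
        line = line.strip()
        if line:  # tolerate blank lines between records
            yield json.loads(line)


# Hypothetical export data: one JSON object per line.
sample = io.StringIO(
    '{"iid": 1, "title": "First issue"}\n'
    '{"iid": 2, "title": "Second issue"}\n'
)

# Each record is parsed and consumed before the next line is read.
titles = [record["title"] for record in iter_ndjson(sample)]
print(titles)
```

By contrast, a single large JSON document generally has to be read and parsed as a whole before any record can be processed, which is where the memory overhead described above comes from.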

We're also, at a broader level, as you can see, addressing a number of other areas here as well. We're looking to deduplicate the data: in some cases, as you can see down here, we actually loaded the JSON twice, and so we're looking to reduce that as well. We're also looking to rework the version mechanism so that it's possible in most cases to import older, still-compatible project exports. This way you don't have to be running the exact version to import your previous export. And here is that duplication as well that I mentioned earlier.
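Editor's note: the idea of accepting older, still-compatible exports rather than requiring an exact version match could look roughly like the Python sketch below. The version numbers, the constant names and the `can_import` helper are all hypothetical; the talk does not describe how the reworked mechanism is actually implemented.

```python
from typing import Tuple

# Hypothetical values, chosen only for illustration.
CURRENT_EXPORT_VERSION = "0.2.4"
OLDEST_COMPATIBLE_VERSION = "0.2.0"


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '0.2.4' into (0, 2, 4) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def can_import(export_version: str) -> bool:
    """Accept any export between the oldest compatible and the current version."""
    version = parse_version(export_version)
    oldest = parse_version(OLDEST_COMPATIBLE_VERSION)
    newest = parse_version(CURRENT_EXPORT_VERSION)
    return oldest <= version <= newest


print(can_import("0.2.2"))  # older but inside the compatible range
print(can_import("0.1.9"))  # older than the oldest compatible version
```

Comparing parsed integer tuples instead of raw strings avoids ordering mistakes such as treating "0.2.10" as lower than "0.2.4".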

A

On the memory side, we're going to be investigating what is going on in this API call that's consuming a significant amount of CPU resources. As we're working to shift over to Puma, these CPU-intensive tasks are causing some increased latency with the new multi-threaded application server, I think largely because of the Ruby GIL. So this would be a key area for us to improve here, as we go through our broader API and improve performance on, again, most of these non-performant API endpoints.
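Editor's note: the effect of a global interpreter lock on CPU-intensive work can be sketched in Python, whose default CPython build has a comparable lock. This is an analogy, not a measurement of Ruby or Puma, and the function names are assumptions. With such a lock, threads doing pure computation take turns rather than running in parallel, so a CPU-heavy request can delay other requests handled by the same process.

```python
import threading
import time
from typing import List, Tuple


def cpu_bound(n: int) -> int:
    """Pure-Python loop; the running thread holds the interpreter lock."""
    total = 0
    for i in range(n):
        total += i * i
    return total


def run_in_threads(n: int, thread_count: int) -> Tuple[List[int], float]:
    """Run cpu_bound(n) in several threads; return results and elapsed seconds."""
    results = [0] * thread_count

    def worker(index: int) -> None:
        results[index] = cpu_bound(n)

    threads = [
        threading.Thread(target=worker, args=(i,)) for i in range(thread_count)
    ]
    start = time.perf_counter()
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return results, time.perf_counter() - start


# Compare one thread against four threads doing the same work each.
_, single = run_in_threads(200_000, 1)
_, multi = run_in_threads(200_000, 4)
print(f"1 thread: {single:.3f}s, 4 threads: {multi:.3f}s")
```

On an interpreter with a global lock, the four-thread run typically takes several times longer than the single-thread run instead of roughly the same time, although exact timings depend on the machine and interpreter build.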

A

Finally, I mentioned Puma just recently here, and we are working to essentially finish the migration from Unicorn over to Puma. As part of that, we're wrapping up a couple of last items. The first one here is: we want to define the best default values for Puma in both Omnibus and our charts, and this way we can define things you see here, like the number of processes.

A

We hope to deploy this across all of GitLab here in the 12.6 milestone, and then once we have that running and running well, we'll again take those learnings and those default values, leverage them as defaults, and enable Puma by default here in 12.7 or a release shortly thereafter, once we're comfortable with the performance on GitLab.com first. So it's a big effort here that we're coming to a close on.

A

We can't wait to get this turned on by default for more of our users. On the theme of architectural changes, again, we're kind of wrapping up things on Puma, and so we're certainly considering new areas of our architecture that we can really level up or make a serious step-function improvement in. One of these is WebSockets.

A

Previously, we haven't had support for any WebSockets, and that's limited some of our ability to introduce new features or design them in a certain, more efficient way. So what we're going to start doing after Puma is looking at potentially offering or building support for WebSockets within our GitLab codebase, and so this would be a pretty major improvement. We've identified a number of potential areas that can benefit from this. You can just look at the WebSockets label there. And again, this is possible now with our Puma multi-threaded application server.

A

So this is a great improvement we're looking to take advantage of, and we'll be starting to do essentially preliminary research here on Action Cable, so we can essentially start laying the groundwork for our next major improvement for GitLab. And so thanks for listening to our Memory update here for what we plan to accomplish in 12.6. Again, there is a whole lot more we're working on here, but these are just some highlights and general themes that we're going to cover here in our next release.