
From YouTube: How to build a Docker image using GitLab Runner AWS Fargate custom executor driver + AWS CodeBuild

Description

Demo of building a Docker image using the GitLab Runner AWS Fargate custom executor driver + AWS CodeBuild.

Resource links:

https://docs.gitlab.com/runner/executors/custom.html

https://docs.gitlab.com/runner/configuration/runner_autoscale_aws_fargate/index.html

https://gitlab.com/gitlab-org/ci-cd/custom-executor-drivers/fargate

https://about.gitlab.com/blog/2020/07/31/aws-fargate-codebuild-build-containers-gitlab-runner/

https://docs.aws.amazon.com/codebuild/latest/userguide/sample-docker.html

https://medium.com/ci-t/serverless-gitlab-ci-cd-on-aws-fargate-da2a106ad39c

A

Hey everyone, Darren Eastman here, product manager for GitLab Runner. For today's demo, I'm going to cover how to build a Docker image using the GitLab Runner AWS Fargate custom executor driver plus AWS CodeBuild. This is actually building on the demo that I created a few months back. Since then we've had some additional questions come up, so I thought it was important, and perhaps interesting, to have a second demo and provide a bit more context and a bit more detail.

A

So today, in terms of what the demo will cover, I'm going to provide a brief overview of the EC2 virtual machine configuration. That's the virtual machine that we're using for hosting the runner, or what we might call, in this particular instance, the runner manager. Then, basically, a high-level review of the ECS cluster powered by Fargate.

A

I'm going to touch on the container image used to create the CI build container on the ECS cluster. This is the container that executes the script in the CI job, and that pattern is covered in our docs on setting up the Fargate driver; there's a link to those docs in the slides as well. I'm going to touch on the setup of the AWS CodeBuild project.

A

Just a few key points there, and on the container registry. I'm going to touch on the setup of the project repository in GitLab, and then touch on a few key points in the setup of the .gitlab-ci.yml file. Then, finally, I'll execute the pipeline, which will demonstrate the building of the Docker image. That is a combination of the pipeline job executing using the GitLab Runner on Fargate, along with CodeBuild.

A

I also won't be covering the setup of an on-demand GitLab Runner manager. By an on-demand GitLab Runner manager, I mean a configuration that does not require a virtual machine on EC2 to be always on, hosting the runner manager. I'll link to some resources on that pattern, but I won't be covering it in today's demo.

A

So the first thing I want to touch on before we get into the demo is an overview of the setup. These are the prerequisite steps one has to go through before using the GitLab Runner on EC2 along with the Fargate driver. On this particular slide, what I'm showing is the container image used for the CI job execution that I'll be demonstrating in the actual demo on the ECS cluster powered by Fargate. This is a very basic Dockerfile; it's going to be creating a Node.js image.

A

The only thing to call out that's different in this example, versus the one that's linked to in the blog post I referenced earlier, is that I've included the AWS CLI here, because we'll actually be executing a few AWS CLI commands as part of our pipeline job. So I just wanted to call that out: we've added those steps in here as well. That's how it's built, and I've built my container image.

A

I've included the AWS CLI in that container image. The other thing to call out as well, and this is covered in the configuration docs for the Fargate driver, is here, where you're seeing that we have included the GitLab Runner. The container used for executing the CI job in the Fargate-powered ECS cluster must include an installation of GitLab Runner. We cover this requirement in detail in the autoscaling GitLab CI on AWS Fargate docs.

A

We have a prerequisites section, and then we go through the various steps that you need to follow if you're setting up this pattern for the first time. Step one is preparing a container image for the AWS Fargate task, and that is what I'm referring to in that example and here in the docs.

A

Another thing I did as I was setting up my project in GitLab for this demo (the project I'll be using is called CodeBuild on Fargate example) is that I included a CodeBuild project variable and an S3 bucket variable. Again, this is just going to make it easier to use these examples in other projects, and so on. So instead of hard-coding these in the scripts, we can set them as environment variables in the actual GitLab project itself.
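As a rough illustration of that idea, a script could read the two project-level variables from the environment rather than embedding them. This is only a sketch: the variable names `CODEBUILD_PROJECT` and `S3_BUCKET` are assumptions for illustration, since the exact names used in the demo project are not stated.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineSettings:
    codebuild_project: str
    s3_bucket: str


def load_settings(env=os.environ) -> PipelineSettings:
    """Read project-level CI/CD variables instead of hard-coding them.

    CODEBUILD_PROJECT and S3_BUCKET are hypothetical names; use whatever
    names are defined in the GitLab project's CI/CD settings.
    """
    names = ("CODEBUILD_PROJECT", "S3_BUCKET")
    missing = [name for name in names if not env.get(name)]
    if missing:
        raise RuntimeError("missing CI/CD variables: " + ", ".join(missing))
    return PipelineSettings(env["CODEBUILD_PROJECT"], env["S3_BUCKET"])
```

Failing fast on a missing variable makes a misconfigured project show up at the start of the job rather than halfway through it.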

A

In terms of the CodeBuild configuration, AWS has done a fantastic job of documenting how to use CodeBuild, and they provide a number of example patterns and getting-started guides. I just want to call out that, for the pattern we're going to demonstrate today, the key thing to make sure of as you're setting up your CodeBuild project is that you have these environment variables set: in this particular case, the AWS default region, the AWS account ID, the image repo name and the image tag. This is because, as you can see here, we are using these variables in the buildspec.yml file that is in the GitLab project repository, and that's how we're stitching all of these things together.
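To make the role of those four variables concrete, here is a small sketch of how they combine into the Amazon ECR image reference that a Docker build would tag and push. It assumes the buildspec follows the AWS CodeBuild Docker sample linked in the description, which uses the names `AWS_DEFAULT_REGION`, `AWS_ACCOUNT_ID`, `IMAGE_REPO_NAME` and `IMAGE_TAG`; the helper function itself is hypothetical and not part of the demo.

```python
import os

# Variable names as used in the AWS CodeBuild Docker sample.
REQUIRED = ("AWS_DEFAULT_REGION", "AWS_ACCOUNT_ID", "IMAGE_REPO_NAME", "IMAGE_TAG")


def ecr_image_uri(env=os.environ) -> str:
    """Build the full ECR image reference from the CodeBuild environment."""
    values = {name: env[name] for name in REQUIRED}  # KeyError if one is unset
    registry = (
        f"{values['AWS_ACCOUNT_ID']}.dkr.ecr."
        f"{values['AWS_DEFAULT_REGION']}.amazonaws.com"
    )
    return f"{registry}/{values['IMAGE_REPO_NAME']}:{values['IMAGE_TAG']}"
```

If any one of the four is missing from the CodeBuild project, the image reference cannot be formed, which is why they need to be set before the pipeline runs.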

A

It's going to be doing a quick echo, just to see that the build container, or the task, is starting on AWS Fargate. In that container I'm able to do a quick echo, and then I've also included a couple of simple AWS commands to exercise the AWS CLI. So it's a very quick and easy way for you to test whether or not the basic setup is working before you get into something a bit more intricate.

A

We set this job to manual. Obviously, in your environment, a production environment, there are much more elegant ways in which you may want the workflow to be kicked off, but for the purpose of the demo it's set to manual. The other thing I want to call out is that we are using a bash script. Let's go back here. It keeps highlighting, so it's kind of hard to see. Let's zoom in.

A

Okay, let me see if we can see that. Okay, so this bash script here, which is going to be in our scripts directory, is called codebuild.sh. That bash script is actually what's going to be responsible for executing the steps in the CodeBuild project. What else it does is implement a timer, which basically allows the execution of the job that's being managed by GitLab Runner to wait for the completion of the corresponding job, if you will, on CodeBuild.

A

So that's what that script and the timer are doing. It's waiting for confirmation that the jobs that have been kicked off on CodeBuild, which is obviously a separate environment, if you will, from your core GitLab Runner implementation, have completed before it wraps up the closure of the pipeline job on GitLab.
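The waiting behaviour described here can be sketched as a simple polling loop. This is not the codebuild.sh script from the demo, which is a bash script whose contents are not shown in the transcript; it is a minimal Python sketch of the same idea. The `get_status` callable is a placeholder for whatever queries the build, and the status strings are the build status values reported by CodeBuild.

```python
import time

# Build statuses after which CodeBuild will not change state again.
TERMINAL = {"SUCCEEDED", "FAILED", "FAULT", "STOPPED", "TIMED_OUT"}


def wait_for_build(get_status, timeout_s=600.0, interval_s=10.0,
                   sleep=time.sleep, clock=time.monotonic):
    """Poll get_status() until the build finishes or the timer expires.

    get_status is a hypothetical callable returning the current build
    status string; sleep and clock are injectable so the loop can be
    exercised without real waiting.
    """
    deadline = clock() + timeout_s
    while True:
        status = get_status()
        if status in TERMINAL:
            return status
        if clock() >= deadline:
            raise TimeoutError(f"build still {status} after {timeout_s} seconds")
        sleep(interval_s)
```

A caller would typically treat anything other than `SUCCEEDED` as a failed pipeline job, so that the GitLab job result mirrors the CodeBuild result.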

And then, really fast, this is a quick overview of that buildspec.yml file. The only thing I want to call out here is that this is a very basic, very simple pattern. It's one that you can get by just going directly to AWS's CodeBuild documentation. Nothing very intricate here; it's par for the course.

A

All right, so just a quick review of the end-to-end solution and the demo steps before we go into the actual demo. This is what everything looks like when stitched together. So you have your user.

A

In this case, it's going to be me. In this particular demo, the project repository that I'm using is hosted on GitLab.com; I'm using the SaaS version of GitLab. So my project repo is on GitLab.com. If you're a self-managed customer, you obviously have your own instance of GitLab, and you would replace the GitLab.com section with your instance of GitLab.

A

My project repository is CodeBuild on Fargate example. What I've done here, ahead of time, is register a specific runner to that project repository. That one is called aws-ec2-fargate-runner-manager, and you can see the IP address. Over here on the right-hand side you can see the configuration as well. I've previously set up an EC2 instance, an EC2 virtual machine. I've installed GitLab Runner. I've configured GitLab Runner to use the custom executor.

A

I have installed the Fargate driver and have it configured. I've created my ECS cluster. I've created my task definition, which is based on the Node.js example that I showed earlier. I've created my S3 bucket, which will be the repository for the zip file that's created over here. I've created my CodeBuild project. So this is the end-to-end workflow that allows you to kick off your pipeline jobs.

A

In step three, the initial commands in the AWS CodeBuild pipeline job will zip up the buildspec.yml file, the Dockerfile, app.js and the package.json file, and that zip file will then be copied to an S3 bucket. Then, in step four, the AWS CodeBuild pipeline job will call the codebuild.sh bash script, and that will take care of executing the job on CodeBuild. That finishes stitching everything together. So that's the end-to-end flow.
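The packaging part of step three can be sketched in a few lines. The demo uses shell commands in the pipeline job for this; the function below is a hypothetical Python equivalent, and the copy to S3 is deliberately left out because it depends on the AWS CLI or an SDK being available in the build container.

```python
import zipfile
from pathlib import Path


def bundle_sources(paths, archive):
    """Zip the given files into one archive for CodeBuild to consume.

    Each file is stored at the archive root (arcname is the bare file
    name) so that buildspec.yml sits at the top level of the archive.
    """
    archive = Path(archive)
    with zipfile.ZipFile(archive, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for path in map(Path, paths):
            zf.write(path, arcname=path.name)
    return archive
```

For the files named in the demo, a call might look like `bundle_sources(["buildspec.yml", "Dockerfile", "app.js", "package.json"], "source.zip")`, where the archive name `source.zip` is an assumption.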

Finally, before we jump into the demo, I've included a few resources here in the presentation.

A

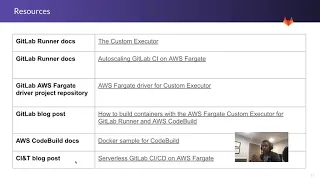

Once I upload this video to YouTube, I'll also enter links to the resources directly into the video description, but there are a number of resource links here. The very last link on the bottom is a blog post that gives folks an example pattern of implementing the runner as a sort of on-demand service, if folks are interested in not having an EC2 instance always active, always running and hosting a runner.

A

Here's a potential pattern that one may implement in terms of the service pattern, and we might cover that in a future video. All right, so let's get out of this and go into the actual demo itself. This is my CodeBuild on Fargate example project. Before I kick off the actual pipeline, just a quick review of the repository files, which are pretty straightforward. Here's the buildspec.yml file that I referred to earlier.

A

The only thing I did differently from the example in the blog post is that I moved a few things around. I created the scripts directory and I moved the codebuild.sh file into the scripts directory. This is just going to allow me, later on, to add other script files in here as well and reference them a little more simply.

A

Currently, at this point, you'll see that there's nothing running. I've got no tasks, nothing active. Let's take a quick look at my task definition that I, again, previously created; it's the one that's going to be picked up as part of this job. So right now, here's my ECS cluster, powered by Fargate. Nothing is currently active; nothing's running.

A

So as part of this pipeline execution, we should see that timestamp refresh, and we'll take a look at that as we go through the demo. Then we'll go into the actual CodeBuild project here, where, as part of the execution, we should also see a new entry in the build history table in CodeBuild. So that's how all three of these things will stitch together. Let's go ahead and kick off the AWS CodeBuild job manually, again, for the purpose of this demo.

A

I have not implemented the AWS CodeDeploy job, so we're not going to be executing that. And again, as I mentioned before, the debug job was just something that I implemented myself as a way to quickly test that my AWS configuration is working as expected before I do something a bit more intricate, like introducing CodeBuild or CodeDeploy, or what have you. So let's initiate that job, and let's have a look and see what's happening.

A

So now things are spinning up here, and you can see it's starting from there. If we pop over right now to ECS and just take a look, and quickly refresh, there we go. A task just got kicked off based on the task definition. Let's see if it's running yet. The last status at this time is pending.

A

It actually kicked off. Okay, so now things are happening over here on ECS. Let me click over here; that should have refreshed. And yes, now the actual container is running on ECS, because what we're seeing here in the logs is that the execution of the job is actually happening, since the container on Fargate is now active. And so we can see that the echo statement got kicked off.

A

The zip command has completed, it's already uploaded the zip file to the S3 bucket, and it's called the codebuild.sh bash script. Over here on this side, that task is still running, and if we go over to CodeBuild and do a refresh, we should see an update in terms of what's happening in CodeBuild. Right, so over here in CodeBuild you can see that the status is in progress, and over here is the job that's being managed by the GitLab Runner.

A

So that has succeeded. Just to pop over here: everything on the GitLab side is complete. On the GitLab side of things, it's telling you that this job is complete. All right, job succeeded. So now we want to go over and have a look at what has actually been done on the AWS side.

A

First things first, I want to click on the job here in CodeBuild and click on the phase details, and you can see all of the steps in the job that succeeded. There's some additional information that you can get from CodeBuild as well, such as the build logs. In my case, I'm pumping my build logs into an S3 bucket, so if things fail, I can quickly download the build logs and take a look at the reasons why it failed.

A

So again, I've kicked off that pipeline job, and the corresponding CodeBuild job has kicked off and has now completed. Now let's look and see what's happening over on the ECR side. We haven't refreshed the screen as yet, so right now the image tag latest was last pushed at approximately 5:52 p.m. If we refresh now, we should see a different timestamp for the latest tag. Yes indeed, the latest time is now 6:21 p.m.

A

Right, it's configured to use a GitLab Runner that's hosted on AWS, and specifically it will use an ECS cluster powered by Fargate to execute those tasks. So that's the easy piece of business: we set up a build container for the type of environment we needed.

A

My example was a Node.js build container, and we then used that for executing the pipeline jobs. The other steps that we demonstrated as part of this demo were showing how we also now connect this whole GitLab-centric configuration to AWS CodeBuild, so that we have the ability to build a Docker image as part of the overall workflow. This is, again, because AWS Fargate does not allow privileged containers; there's no privileged mode for container builds. This pattern solves that problem.

A

So what we've done, again, is we've executed the pipeline job from GitLab, and the GitLab Runner on our EC2 instance, configured to use the Fargate driver, has executed the job using the ECS cluster powered by Fargate and pushed the resulting container image into the ECR repository. And again, the container image was built by AWS CodeBuild.

A

Really fast, before we wrap up, one other thing I'll just quickly show is that in this example, if we go back to ECS, we only kicked off one task. We only kicked off one container, and you can see here that it's now stopped. So a question one may have is: could I have parallel jobs? Could I have parallel jobs configured to use the task definition? And the answer is yes.

A

So really fast, let's do a quick example of that. I'll go to pipelines again, and I actually have an example set up. In this example, I've got three debug jobs set to run in parallel, in what I'm calling this [inaudible] stage. The reason I set it up this way is just to quickly show what happens if one were to have multiple jobs executing in parallel: what happens correspondingly on the ECS cluster powered by Fargate in this implementation pattern?

A

Oh, actually, two containers are already running. Three containers running. So what we've done is that the runner manager right here is initiating the creation of multiple tasks, multiple containers if you will, on AWS Fargate, based on that task definition. So again, we have a lot of flexibility in this initial version of the AWS Fargate driver that we developed. If you have any questions, concerns or issues, please feel free to drop me an email at deastman@gitlab.com.

A

We also have a project repository specific to the driver. It's going to be linked here in the presentation, and I'll add that information in the description of the video as well. So that's it for our demo today. I hope you found this interesting, and I look forward to chatting with you next time. Cheers.