►

From YouTube: CVS advisories ingestion

Description

No description was provided for this meeting.

If this is YOUR meeting, an easy way to fix this is to add a description to your video, wherever mtngs.io found it (probably YouTube).

B

A

My

screen

now

yep,

okay,

good,

so

today,

I

wanted

to

make

a

small

presentation

about

our

work

on

continuous

level.

It

is

coming

and

more

specifically

to

advisory

ingestion

right.

So

it's

more

like

a

progress

report.

What

we

have

been

doing

and

I'm

also

going

to

try

to

give

you

an

advisory

feeder

demo.

This

can

be

more

of

of

an

open

discussion

right

and

we

can

structure

this

around

this

small

presentation,

so

let

me

get

into

it.

A

So

let

me

take

a

step

back

and

explain

what

the

the

bigger

picture

of

what

we

are

trying

to

do

and

please,

if

you

disagree,

just

step

in

and

let

me

know,

because

I'm

also

new

to

the

whole

system

right.

So

the

main

idea

is

that

for

continuous

vulnerabilities

scanning

we

want

to

provide

a

way

to

GitHub

to

have

all

the

knowledge

about

all

the

advisories

that

exist.

So

we

have

multiple

sources

of

advisories,

for

instance,

for

dependency

scanning.

We

have

gymnasium

DB

for

continuous

scanning.

A

We

have

three

VDB

and

the

idea

is

that

we

want

to

ingest

those

advisories

process,

those

advisories

and

in

the

end

we

want

to

store

them

somewhere.

Externally,

like

a

bucket

where

the

gitlab

instance,

which

basically

pull

that

data

store

it

in

the

database

in

the

gitlab

database,

so

that

it

can

basically

do

continuous

vulnerability

scanning

in

this

first

iteration,

we

are

going

to

we,

we

work

mainly

with

gymnast

MDB.

So

we

were

also

thinking

about

what

we

need

to

do

about

3vdb

and

continue

scanning.

A

But

our

main

scope

and

focus

is

basically

on

the

dependency

scanning

and

more

specifically

on

simulationdb.

So

we

basically

start

with

just

one

advisory

source,

and

we

are

basically

working

on

on

on

this

diamond

save

here

on

the

ingestion.

How

are

we

going

to

ingest

that

and

Export

it

into

a

bucket,

so

that

gitlab

can

basically

fetch

that

information?

A

So

this

is

more

or

less

the

overall

design,

so

you

will

see

I

have

something

like

a

block.

Diagram

and

I

have

different

colors,

depending

on

what

we're

talking

about

so

with

orange

I'm

talking

about

a

gitlab

job

right,

so,

basically,

binary

that

can

be

executed

by

gitlab

job

and

by

Blue

is

basically

something

that

runs

on

gcp

or

a

gcp

resource.

A

So

the

main

idea

is

the

following.

So

the

first

component

is

the

advisory

feeder,

The,

Advisory,

feeder,

Works

more

or

less

the

same

way.

The

license

feeder

works

in

the

sense

that

it

gets

triggered

by

schedule.

So

we

can

execute

this

job

once

per

day

or

multiple

times

per

day,

doesn't

really

matter,

and

what

it

will

do

is

that

it's

going

to

clone

the

gymnasium

DB

I'm

talking

about

simulationdb,

because

that's

the

scope

right

now.

A

It's

going

to

fetch

information

from

a

gcp

cloud

storage

bucket

and

the

information

that

we

store

there

is

the

last

commit

that

we

have

processed

the

last

time

so

that

we

don't

need

to

start

from

the

beginning.

We

can

just

start

see

the

differences

between

the

last

process

commit

and

the

latest

process

and

the

latest

commit.

A

A

It

will

work

as

a

server

in

the

sense

that

we

are

going

to

use

most

probably

perhaps

up

push.

That

means

that

every

message

received

on

a

pub

sub

topic

it's

going

to

be

forwarded

as

an

it's

as

an

HTTP,

API

call

on

the

advisory

processor

and

the

advisory

processor

is

going

to

get

the

information

about

this

new

advisory

and

it's

going

to

store

it

in

the

right

format

in

the

license.

Db

and

our

license.

Db

is

basically

the

same

license.

Db

that

we

are

using

for

the

whole

license

ingestion

pipeline.

A

So

that's

running

on

cloud

SQL,

which

is

basically

the

gcp

version

of

unspl

database,

a

monitored

service.

Basically,

and

then

the

final

component

is

the

advisory

exporter.

This

is

also

triggered

based

on

on

a

schedule.

So

it's

up

to

us

and

that

component

will

work

more

or

less

the

same

way

as

the

license

exporter

component.

A

It

will

get

the

information

all

about

the

advisories

from

the

license

DB

and

it's

going

to

extract

them

in

new

delimiter

Json

format

into

a

cloud

storage

bucket,

which

will

be

public

and

that's

public

bucket

can

be

used,

then

by

offline

gitlab

instances

or

in

general.

Any

gitlab

instance

fetch

that

file

and

ingest

all

those

advisories.

A

On

the

next

slide,

it's

more

or

less

the

same

design,

but

in

this

format

at

least

right

I

don't

want

to

get

into

too

many

details.

But

again

you

see

that

we

have

an

advisory

processor

which

runs

on

cloud

run.

We

this

is

fed

by

a

pub

sub

topic

which

is

again

fed

by

the

schedule

advisory

feeder.

So

you

might

be

familiar

with

this

kind

of

drawings

from

the

deployment

projects

documentation,

and

this

is

just

an

update,

but

for

advisories

right

now.

A

Okay,

so

let's

focus

now

on

the

advisory

feeder,

because

I

think

that

some

of

the

efforts

that

we

did

during

this

iteration

was

also

focused

on

the

advisory

feeder,

and

this

is

also

what

I

would

like

to

demo

so

advisory

feeder.

Basically,

it's

a

CLI

command

tool

right

it.

It

runs

exactly

the

same

way

as

we

do

for

license

feeder.

A

So

that

means

that

we

have

some

run

configurations

where

we

can

pass

some

options

like

log

levels

or

which

internal

bucket.

We

want

to

talk

to

or

the

pub

sub

topic

so

very

similar

run

configurations

as

the

license

feeder

and,

of

course

this

is

normal

because,

as

I

mentioned

at

The,

Advisory

feeder

shares

exactly

the

same

code,

repo

as

the

license

feeder,

and

what

we

do,

then,

is

that

we

have

exactly

like

we

do

for

gymnasium,

where

we

have

in

the

command

folder.

A

A

Then

we

compare

and

we

find

The

Advisory

is

a

change

between

the

cursor

commit

has

and

the

latest

master

and

those

advisories.

We

publish

them

directly

on

the

pub

sub

topic

and

then,

as

a

final

step,

we

store

the

latest

process

that

has

commit

back

to

the

cursor,

so

the

next

time

the

advisory

feeder

will

execute.

It

can

continue

from

where,

from

from

where

it

finished

the

previous

time.

A

In

this

first

iteration,

we

just

focus

on

dependency

scanning.

So

we

try

not

to

think

about

the

data

structure

that

we

might

need

for

contain

for

for

container

scanning

and

and

3vdb,

because

there

are

some

differences

but

I'm

going

to

talk

about

this

in

a

bit,

so

the

data

that

we

basically

sent

to

the

advise

that

we

sent

to

The

Advisory

processor.

So

this

should

be

processor

here-

contain

two

main

things.

A

A

In

the

end,

we

are

going

to

store

the

Json,

the

Json

advisory

and

the

video

ID

is

basically

the

identifier

that

we

need

in

order

to

do

upsets

and

yeah,

as

I

mentioned,

and

before

also

advisor

video

can

be

executed

once

per

day,

and

it's

quite

fast,

especially

when

it

doesn't

need

to

start

from

scratch.

So

you

will

see

also

in

the

demo

that

it's

quite

quite

fast

yeah

when

it

comes

to

the

database

schema.

We

discussed

a

lot

about

about

this.

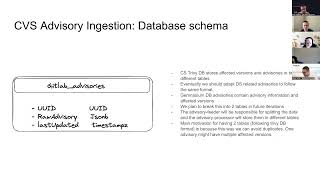

A

This

is

the

the

current

schema

that

we

have,

so

we

have

a

gitlab

advisories

table

and

in

there

we

just

have

the

uuid

the

raw

advisor,

which

is

in

Json

B

format.

So

in

in

case

you

don't

know

this

format.

Postgres

SQL

allows

you

to

store

a

Json

object

and

it

has

the

Json

format

and

Json

B

format.

There

is

a

small

difference

there

in

the

sensation.

A

Basically,

it

stores

the

binary

data,

so

it

does

some

kind

of

encoding

and

the

insert

it's

a

bit

more

costly,

but

in

the

end

of

the

day

you

can

actually

access

the

data

inside

the

Json

object.

So

it's

very

nice

and

of

course,

we

also

have

last

updated

timestamp

now.

The

interesting

thing

is

that

for

con

for

container

scanning

3vdb

towards

the

affected

versions

and

the

advisory

into

different

tables

right

so

in

in

indeed

in

but

intimidation

to

be,

we

just

have

one

object

that

has

the

advisory

details

and

also

the

affected

version.

A

A

They

they

are

both

very,

very

knowledgeable,

especially

for

parts

of

the

system

that

I

I

have

no

clue

like

the

gitlab

instance

world

and

the

database

there

I

haven't

worked

there,

so

it's

really

nice

that

Fabian

and

Digger

they

can

they

they're

there.

They

can

do

some

really

nice

work

on

that

on

that

side

and

give

me

a

lot

of

interesting

information

that

I

didn't

know

about.

A

So

the

schema

DB

work,

it's

actually

complete

or

the

infrastructure

needed

and

required

by

The,

Advisory

feeder

is

actually

complete

when

it

comes

to

the

advisory

feeder

code

is

actually

in

review

right

now,

as

we

speak.

We

expect

this

to

finish

in

the

first

week

of

16.0,

and

that's

my

own

estimation,

so

don't

take

my

word

for

granted

or

in

the

end

of

15.11

depends

how

fast

we

go

there

advisory

processor.

This

is

the

next

big

thing

to

do.

A

We

have

started

refining

it

I'm

currently

trying

to

understand

how

easy

it

is

to

use

to

reuse

the

same

code

base.

Hopefully

we

can

do

that

and

advisory

exporter

nothing

there.

Yet

we

need

to

refine

this,

although

most

of

the

information

it's

already

known.

So

probably

it

should

be

relatively

easy

to

refine

it

and

we

could

actually

start

working

on

advisory

exporter

in

Pala

in

parallel

with

advisory

processor

right

and

that's

it.

C

Yeah

it's

difficult

when

you

don't

get

any

feedback,

you're,

not

sure

if

people

are

just

not

listening

or

if

they

have

no

equation

at

all.

So

usually

you

can

have

if

you

want

the

agenda

doc

on

a

side

screen

or

quickly

accessible,

because

what

people

can

do

is

write

issue

right,

right,

questions.

Sorry

in

the

dark,

as

the

conversation

goes

depending

on

the

presenter

you

might

like

to

just

not

be

interrupted

or

you

might

welcome

interruptions

as

you're

making

the

presentation

I

mean

both

approaches

are

fine

but

yeah.

C

C

This

is

still

the

name

of

the

repository,

but

it

might

be

mentioned

as

gitlab

at

visery

DB

in

other

places,

also

I

think

something

that

we

haven't

yet

discussed,

but

maybe,

as

a

follower

past

MVC,

it

might

be

good

to

have

some

checks

to

verify

from

time

to

time

that

we

are

still

in

sync

with

the

sources

like

this

is

something

that

we've

seen

being

useful

for

continuous

scanning.

For

instance,

when

we

are

building

and

importing

the

the

3db,

we

have

a

check

that

runs

internally.

C

That

check

the

database

is

not

outdated

for

more

than

48

hours.

For

instance,

I

don't

know

if

it's

something

we

could

do

also

in

our

gcp

setup,

so

that

we

can

check

regularly

I'm

gonna

do

a

full

clone

check

how

many

advisories

we

have

and

check

how

many

accessories

we

have

in

the

database

and

make

sure

that

we're

not

having

a

bug

that

makes

us

missing

some

data

I

think

that

would

be

useful.

I

don't

know

if

this

is

something

that

has

already

been

discussed

so

far.

C

C

The

delay

between

the

the

Visa

is

disclosed

get

ingested

into

the

gymnasium

database,

and

then

we

sing

the

genesion

database

with

the

advisory

feeder

store

there

in

the

license,

DB

external

DB,

and

then

we

sync

that

with

the

rest

mode,

so

there

are

a

lot

of

intermediary

steps

that

can

make

this

end-to-end

process

take

much

more

time

than

expected.

So

if

we

can

run

that

more

more

often

and

reduce

this

time,

that

would

be

great.

C

A

D

C

D

Like

I,

did

we

just

go

back

to

slide?

Two

I

can

see

it?

Yes,

exactly

that's

the

one

I

guess

I

have

a

question

about.

I

just

saw

the

postgres

database

at

the

end

and

with

the

license

compliance

feature

that

has

been

rolled

up

like

we're.

You

know

having

to

raise

the

requirements

for

installation

basically,

and

so

I

was

just

curious.

If

this

design

would

also

lead

to

the

same

issue.

A

So,

just

to

make

to

give

a

bit

of

more

information

on

this

Sarah

if

we

first

take

the

3vdb,

which

stores

the

affected

versions

and

the

advisories

in

two

different

tables.

If

we

want

to

make

one

big

table

out

of

it,

then

we

will

create

some

duplication

and

that

will

increase

the

size

right.

That's

why,

ideally

at

some

point,

we

would

like

to

have

two

different

tables

also

for

technician

DB,

but

okay,

given

the

time

because

we're

already

on

time,

let

me

make

a

very

quick

demo.

A

I

hope,

that's

okay,

and

if

you

have

more

questions,

I

will

answer

those

later

async

so

right

now

this

is

the

license

feeder

project

I'm,

just

gonna,

run

it

directly

from

the

source

code.

It's

like

running

it

from

from

from

Maine.

Maybe

one

thing

I

should

actually

show

you

is

I'm

going

to

run

this

into

my

own

personal

GSP

project.

I

have

a

cursor.

The

cursor

is

on

a

commit

not

from

the

beginning,

so

there

is

a

value

there

close

to

the

latest.

A

Commit

hash,

I

think

it's

from

yesterday

or

two

to

three

days

ago.

So

we

should

have

some

new

advisories

one.

More

thing

that

I

wanted

to

show

you

is

that

we

have

a

topic

for

Dev

advisory,

processor

and

I

would

also

like

to

create

a

subscription-

let's

name

this

test,

so

that

you

can

actually

see

the

data

already

exists

test.

One

maybe.

A

So

this

will

take

a

second

or

two

okay.

We

can

go

here.

You

see,

there

are

no

messages,

of

course

yet,

and

what

we

can

do

is

we

can

actually

run

this

I'm,

not

gonna,

run

it

on

dry

run,

I'm

just

directly

going

to

run

it

and

I'm

gonna

send

all

the

messages

on

the

pub

sub

topic.

So

I'm

gonna

do

that

and

yeah

right

now.

It's

cloning,

the

gymnasium

DB

repo,

as

you

can

see

here,.

A

And

then

it

read

the

cursor

it

it

fits

the

latest

commit.

Has

it

compare

those

two?

It

says

that

there

is

actually

a

difference

between

those

two:

it

found

91

new

advisories

that

have

been

added

or

updated,

and

then

it

published

those

data.

So

now,

if

I,

if

I

go

here

and

pull

this

I

should

see

that

the

data-

what

you

basically

see,

is

the

usual

ID

and

the

row

advisory,

which

basically

are

Json

bytes.

So

it's

not

very

interesting

to

see,

but

you

see

that

here

we

have

a

bunch

of

messages

and

there

are.