►

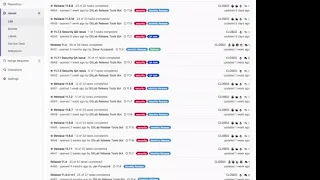

From YouTube: Delivery: run through the release process in 2019-01

Description

J. Skarbek is onboarding as Release Manager, and M. Jankovski explains the different parts of the release process as they stood in 2019-01.

A

The docs linked earlier say how you can start the release issue, but basically we have implemented a ChatOps command. It allows you to start the whole process by running a single command, something like "chatops run prepare" followed by the issue number, and then it opens this automatically generated issue template here. Yeah. You can find that issue template in release-tools. release-tools is a bunch of Ruby scripts.

A

This is hooked into ChatOps, so ChatOps will call release-tools and then run the rake command to do certain things. All of the automatically generated templates that you'll be seeing are going to be in the templates directory, I think. Yeah, all of these templates. So, for example, the monthly issue is...

A

They're going to be moving slower than master. Master is always unlocked in all of our projects, so that people can submit fixes there, but when we are ready to start preparing the release, we branch off master, and that branch then starts receiving specific fixes and so on; no new features get merged into it. We are starting a bit early, but when the code freeze gets announced on the seventh, those branches receive no other updates. Now, this actually only mentions CE and Omnibus.

A

All of these different components have version files inside the repository, so that's the first difference you'll see between the different components of GitLab. Basically, Community Edition and Enterprise Edition are used as this big overarching repository for all of the other items that are necessary. This is mostly historical and has nothing to do with actual architecture. It's more like: oh well, we added another component, what do we do with it?
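To make the version-file arrangement concrete, here is a minimal sketch that reads pinned component versions from plain-text files at the root of a Rails checkout. The file names follow the one-version-per-file convention the GitLab repository used around this time, but treat the exact list as an assumption; `read_component_versions` is a hypothetical helper, not release-tools code.

```python
import tempfile
from pathlib import Path

# Assumed mapping of component -> version file at the repository root.
COMPONENT_FILES = {
    "gitaly": "GITALY_SERVER_VERSION",
    "gitlab-shell": "GITLAB_SHELL_VERSION",
    "gitlab-workhorse": "GITLAB_WORKHORSE_VERSION",
    "gitlab-pages": "GITLAB_PAGES_VERSION",
}


def read_component_versions(repo_root):
    """Return {component: version} for each version file present in repo_root."""
    root = Path(repo_root)
    versions = {}
    for component, filename in COMPONENT_FILES.items():
        path = root / filename
        if path.is_file():
            # Each file holds a single version string; strip the trailing newline.
            versions[component] = path.read_text().strip()
    return versions


if __name__ == "__main__":
    # Demo against a throwaway directory holding one placeholder version file.
    with tempfile.TemporaryDirectory() as checkout:
        Path(checkout, "GITLAB_SHELL_VERSION").write_text("0.0.0\n")
        print(read_component_versions(checkout))  # {'gitlab-shell': '0.0.0'}
```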

A

For example, right now, take Omnibus: it follows the same process, where we have stable branches named the same, but then... okay, let me not go into this right now, because it's going to confuse you from the start. Basically, this is what you need to know: CE, EE, and Omnibus have 11-8-stable branches, and master.
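The "11-8-stable" naming is mechanical enough to derive from a version string. A minimal sketch, assuming the major-minor-stable pattern mentioned above and an "-ee" suffix for the Enterprise Edition branch (the suffix and the `stable_branch` helper are assumptions, not taken from the walkthrough):

```python
import re

# Accepts "11.8", "11.8.0" or "11.8.0-rc1".
_VERSION = re.compile(r"^(\d+)\.(\d+)(?:\.\d+)?(?:-rc\d+)?$")


def stable_branch(version, ee=False):
    """Map a version such as "11.8.0" to its stable branch name, e.g. "11-8-stable".

    The "-ee" suffix for the Enterprise Edition branch is an assumed convention.
    """
    match = _VERSION.match(version)
    if match is None:
        raise ValueError(f"unrecognised version: {version!r}")
    name = f"{match.group(1)}-{match.group(2)}-stable"
    return f"{name}-ee" if ee else name


print(stable_branch("11.8.0"))           # 11-8-stable
print(stable_branch("11.8.0", ee=True))  # 11-8-stable-ee
```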

A

There are multiple other things here. I think what's important to note is that all of our development happens on GitLab.com, which you know, but all of our release work, so building the actual release, happens on dev.gitlab.org. Between those projects, we have a mirror setup that automatically mirrors basically everything: master, protected branches, everything. So in normal circumstances, when we have just a regular release...

A

Our daily deploy, starting from the seventh, is basically an RC, a release candidate. I don't like the name, or rather the expectation people usually attach to something that's called a release candidate. But to me it's actually just that: a release candidate. So if we were to say our RC1 is the package that we want to deliver on the 22nd, that should be possible to do. Yeah.
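If every daily deploy from the seventh onward is an RC, the main bookkeeping is the next RC number. A minimal sketch, assuming tags of the form v11.8.0-rc1; both the tag format and the `next_rc` helper are hypothetical:

```python
import re

# Assumed tag format, e.g. "v11.8.0-rc3"; adjust if the real scheme differs.
_RC_TAG = re.compile(r"^v?(\d+\.\d+\.\d+)-rc(\d+)$")


def next_rc(version, existing_tags):
    """Return the next release-candidate tag for `version`, given tags already created."""
    highest = 0
    for tag in existing_tags:
        match = _RC_TAG.match(tag)
        if match and match.group(1) == version:
            # Compare numerically so that rc10 sorts after rc9.
            highest = max(highest, int(match.group(2)))
    return f"v{version}-rc{highest + 1}"


tags = ["v11.7.0-rc9", "v11.8.0-rc1", "v11.8.0-rc2"]
print(next_rc("11.8.0", tags))  # v11.8.0-rc3
print(next_rc("11.9.0", tags))  # v11.9.0-rc1
```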

A

So, while the security release is being prepared, we don't want to merge stable stuff into it, because we don't want to mix the two. Again, I think that's for historical reasons; I don't think there is a real reason behind it. But this way we can have pipelines run and ensure that we know what we are actually picking, and once everything is ready, it's just clicking a merge button: it goes into the stable branch, and we can then tag a release. Yeah, all right. This is just the structure.

A

For each release, we have a specific label called "Pick into", and when we announce the feature freeze on the seventh, every merge request that people want to target the stable branches with needs to have this label applied. Otherwise we have no way of knowing, right? People apply a milestone; we used to just apply a milestone, but that didn't actually show urgency. It showed that...

A

The feature freeze gets announced, and we now go into this exception request situation. Why is this important? Because previously people would just randomly apply the label and merge things that could potentially cause problems for us, which happened multiple times at the beginning of 2018. So this is to prevent people from just randomly merging code.

A

Creating an exception request: this actually makes me really happy, because you barely see any these days, mostly because people are so tired of creating them. I'm not kidding. What I created with this template is a clear process, right? You need to link the merge request; you can see it here. Why do you want to pick this, right? Like, you had... yeah.

A

So, the explanation of what actually happens and why it is important to pick this in. In some cases, people miss the delivery by one day, or there was something difficult to do, or extra testing needed to be done. Or we promised something to a customer and we need to ship it, right. Yeah.

A

This is where the explanation goes, but the second item, "potential negative impact of picking", is what people spend the most time on. The request asks: if we merge this, what is the worst-case scenario that can happen if we accept this change? For example: I just merged something, and the worst-case scenario is that we no longer have the merge request button. This is so that we, as the release managers, can decide: wait, is this really worth the risk? So we might...
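The template's value is that certain questions must be answered before a release manager looks at the request. A minimal sketch of checking that, assuming a Markdown description with "## " headings; the heading texts and the `missing_sections` helper are hypothetical, not the actual template wording:

```python
# Hypothetical headings modelled on the questions described above.
REQUIRED_SECTIONS = (
    "Merge request",
    "Why should this be picked",
    "Potential negative impact of picking",
)


def missing_sections(description):
    """Return the required sections that are absent or left empty."""
    lines = description.splitlines()
    missing = []
    for section in REQUIRED_SECTIONS:
        heading = f"## {section}"
        if heading not in lines:
            missing.append(section)
            continue
        # Gather the text between this heading and the next "## " heading.
        body = []
        for line in lines[lines.index(heading) + 1:]:
            if line.startswith("## "):
                break
            body.append(line.strip())
        if not any(body):
            missing.append(section)
    return missing


request = """## Merge request
(link to the MR)
## Why should this be picked
Promised to a customer; missed the freeze by one day.
## Potential negative impact of picking
"""
print(missing_sections(request))  # ['Potential negative impact of picking']
```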

A

People explain it, right, like: it can be this, it can be that. But most of the time it's a pretty decent explanation: this is the impact, and I'm aware of the impact. So it also shows us that someone actually thought the item through, and there are a bunch of approvals. At any point, it's the release manager's discretion to just say no, but not just to say no: to explain why I'm saying no.

A

That would mean too much time spent on process. And if we said you have a blanket merge option, we would then depend on process and so on to have everything done by the seventh. What happens on the seventh is that we have very fixed due dates, right, from the 7th till the 22nd. We need to do so many things in so many environments, and so many checks need to be executed.
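The fixed window described above is simple date arithmetic. A minimal sketch, assuming the freeze falls on the 7th and the release on the 22nd of every month as stated in the walkthrough; the helper names are hypothetical:

```python
from datetime import date

FEATURE_FREEZE_DAY = 7  # stable branches are cut and RCs start around the 7th
RELEASE_DAY = 22        # the monthly release ships on the 22nd


def release_phase(day):
    """Classify a date against the monthly 7th-to-22nd release window."""
    if day.day < FEATURE_FREEZE_DAY:
        return "before feature freeze"
    if day.day < RELEASE_DAY:
        return "release candidates"
    if day.day == RELEASE_DAY:
        return "release day"
    return "after release"


def days_until_release(day):
    """Days remaining until the 22nd of the same month (negative once it has passed)."""
    return (date(day.year, day.month, RELEASE_DAY) - day).days


print(release_phase(date(2019, 2, 7)), days_until_release(date(2019, 2, 7)))
# release candidates 15
```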

A

What we want is to not even have a feature freeze, but to just have environments, enough steps in between, and enough tools provided to developers, so they don't have to do any of this. I would love to remove the exception request. It did its job, but I'm annoyed that I even had to create something like this. So...

A

Exactly one year ago we didn't have this. When I introduced it a year ago, we had quite a lot of exception requests created and quite a lot of annoyed people, but with time they started to learn why this is being asked, and now we only have maybe two or three exception requests per release. Which is great: it means people are actually thinking, like, oh, maybe this is not really that urgent. Do I really want to introduce this risk and derail the whole process?

A

The feature flag is a way for us to control the impact on GitLab.com. I don't want to block people from using a feature. I just want us to have a way of deploying, seeing something bad happen, and flipping the switch to say: okay, at least we now have the old behavior while you figure out why this is happening, so that we don't affect people. So that's not an exception to the exception request rule.
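The "flip the switch" idea can be shown in a few lines. This is a minimal sketch with a hypothetical in-memory flag store and a hypothetical flag name; GitLab's real feature-flag implementation is not reproduced here. The point is that the old code path stays available, so turning the flag off restores the previous behavior without a new deploy.

```python
class FeatureFlags:
    """Hypothetical in-memory flag store, for illustration only."""

    def __init__(self):
        self._enabled = set()

    def enable(self, name):
        self._enabled.add(name)

    def disable(self, name):
        self._enabled.discard(name)

    def enabled(self, name):
        return name in self._enabled


def render_merge_request(flags):
    # The new code path is used only while the flag is on; the old path
    # remains in place as the fallback.
    if flags.enabled("new_mr_widget"):
        return "new widget"
    return "old widget"


flags = FeatureFlags()
flags.enable("new_mr_widget")
print(render_merge_request(flags))  # new widget
flags.disable("new_mr_widget")      # something bad happened: flip the switch
print(render_merge_request(flags))  # old widget
```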

A

Previously, someone had to do this manually: every single time, you would have to go through 15 or 20 merge requests and cherry-pick each and every one of them. Thanks to Robert, we now have the cherry-picker. I don't know where this one is... yeah, there we go. It just picks up all the merge requests that are vetted by release managers. So something like: a release manager went through the list of merge requests, labels are correctly set, milestones are correctly set, the assignee is set, it's merged, blah blah.
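The cherry-picker's selection step can be approximated as a filter. A minimal sketch, assuming merge requests have already been fetched (for example from the GitLab API) and that the label reads "Pick into 11.8"; the `MergeRequest` type and helpers are hypothetical, and the actual git cherry-picking is left out.

```python
from dataclasses import dataclass, field


@dataclass
class MergeRequest:
    iid: int
    state: str                                  # e.g. "merged" or "opened"
    labels: list = field(default_factory=list)
    merged_at: str = ""                         # ISO-8601 timestamp; empty if unmerged


def pick_into_label(version):
    """Label for a release, e.g. "Pick into 11.8" (the exact format is assumed)."""
    major, minor = version.split(".")[:2]
    return f"Pick into {major}.{minor}"


def picks_for(version, merge_requests):
    """Merged MRs carrying this release's label, oldest merge first."""
    label = pick_into_label(version)
    picked = [mr for mr in merge_requests if mr.state == "merged" and label in mr.labels]
    # Picking in merge order mirrors the order the changes landed on master.
    return sorted(picked, key=lambda mr: mr.merged_at)


mrs = [
    MergeRequest(1, "merged", ["Pick into 11.8"], "2019-02-10T12:00:00Z"),
    MergeRequest(2, "merged", ["Pick into 11.8", "regression"], "2019-02-09T08:30:00Z"),
    MergeRequest(3, "opened", ["Pick into 11.8"]),
    MergeRequest(4, "merged", ["Pick into 11.7"], "2019-02-08T10:00:00Z"),
]
print([mr.iid for mr in picks_for("11.8.0", mrs)])  # [2, 1]
```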

A

Not going into detail, just into whether this belongs there or not. So this is a visual confirmation that all the labels are correct. If someone applied, you know, "regression", it's not your job to go and understand whether it is a regression or not. We need to trust someone at some point in this. Yeah.

A

In certain cases (not anymore, but at the beginning), people would just randomly apply labels, so we needed to understand: wait, is this a feature? Okay, if this is a feature, and it looks like a feature, it doesn't have any sort of label saying it's a regression, it doesn't have an exception request, and it doesn't look like it has a feature flag, why is this merge request here? So this is the visual confirmation we are doing.

A

Okay, now here is a bit of a twist: you need to do these things for both CE and EE. The cherry-picker does this automatically for both CE and EE, right? You don't need to run it twice. So you have these two preparation branches. The thing is, we need to make sure that the CE preparation branch is merged into EE as well. Because we have this CE-to-EE merge, most of the time that creates some conflicts.

A

It delays the process and so on, and at that point you need to start chasing developers to help out with resolving conflicts. This is one of the reasons why we are pushing hard towards merging these codebases. They are the same codebase, just not completely the same, and that creates all of these additional requirements: all of this additional time necessary for the pipelines to pass, for people to invest time in resolving conflicts, and so on, and so on.

A

Okay, so now let's say all the preparation branches have been merged correctly. Pipelines are green. We are ready. When that happens, we need to ensure that anything in Omnibus GitLab that has the same "Pick into" for the stable branch is merged, right. That gets merged into the stable branches in Omnibus, and when that is ready, we say (I don't know what they call it these days, but we call it) that we have a situation "clear for tagging", which means all the Omnibus stable branches are ready.

A

It's not gonna be... theoretically, it's gonna be exactly that one, right. This is why we are doing it in one pipeline as well: to ensure that there is no de-syncing. So these versions are exactly the same, for the specific version, as the ones in GitLab EE and CE. Right now, if I open one of these, it points to master because we are on the master branch, but if we select any of these ones...

A

Let's use this one. This is the pipeline that gets created when you tag. To run you quickly through the pipeline: we build the actual assets, so JavaScript, CSS, all of those files. We actually build them in the CE and the EE GitLab builds, so it's separate, and in order to not... like, we've been doing asset building in multiple other places, for example in Omnibus, in the GitLab charts, and so on.

A

In this first stage we build the package that is going to be used for public consumption, but we build it first because we want to deploy it to staging, which uses Ubuntu 16.04. That's the package that is currently extracted. At the same time, once the package is built, we build another Docker image for QA. You can see here the build is completely done, the cache is uploaded, and so on.

A

So now this pipeline is going to be triggered. We call this the staging process. Basically, first of all, the package is built and pushed to a staging repository on packagecloud. This repository is not public; it's private, and it's used across our infrastructure.

A

We'll use this one as an example; it doesn't matter at all which. Basically, the same pipeline is going to be triggered, the same one that will do a bunch of preparation tasks, run the migrations on staging, deploy Gitaly, deploy to the rest of the fleet, run the post-deploy migrations, do some cleanup, and then fire off GitLab QA against it. Yeah.

A

Yeah, this was the staging one, so it's the same one you saw previously. This runs the smoke tests. If they fail, we know something is up and we don't want to progress further. If they succeed, like they did now, then we are fine going further. So now that we've deployed, this is no longer needed. I don't know why we have this. That needs to be removed.
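The staging pipeline's ordering and its smoke-test gate amount to "run stages in sequence, stop at the first failure". A minimal sketch with placeholder step functions; the stage names paraphrase the walkthrough, and `run_pipeline` is hypothetical, not the actual deployer.

```python
STAGING_STAGES = [
    "prepare",
    "migrations",
    "gitaly",
    "fleet",
    "post-deploy migrations",
    "cleanup",
    "qa smoke tests",
]


def run_pipeline(stages):
    """Run (name, step) pairs in order, stopping at the first step that returns False.

    Returns (completed_stage_names, failed_stage_name_or_None).
    """
    completed = []
    for name, step in stages:
        if not step():
            return completed, name  # later stages never run
        completed.append(name)
    return completed, None


def always_ok():
    return True


def smoke_tests_fail():
    return False


healthy = [(name, always_ok) for name in STAGING_STAGES]
print(run_pipeline(healthy)[1])  # None

broken = healthy[:-1] + [("qa smoke tests", smoke_tests_fail)]
print(run_pipeline(broken)[1])   # qa smoke tests
```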

A

So now you create a QA issue. This is a manual task; the QA issue is, again, there as a measure to prevent something that we had about a year ago, where we would just go and deploy to staging, no one would care, no one would touch it, and then we would go and deploy it to production and people would start getting annoyed because stuff was broken. Yeah. For that reason, we have a manual QA test.

A

This is nothing; you should see the first RC issue. Nightmare. But shockingly, right, we are going to remove this. I can't wait for it to be removed, but it has helped us out so many times in the past year. We found so many actual regressions on staging that could have been critical in production, just because we're pinging someone: hey, can you check? Yes. Yeah.

A

People leave a comment that manual QA is done, and when someone finds a regression, they ping the release managers. It's kind of difficult to explain to people what a critical regression is and what a regression is. So it's up to the release manager to ask questions and say, like, wait: okay, well, this pixel is misaligned. I don't think this is a critical regression; I'm just gonna deploy. Oh, you can see half of the merge request interface? That's actually a critical regression, right; I don't want to deploy that, so no.

A

This is where some additional decisions can be made. Okay, so say the deadline expired, or everyone checked off their items. Then we go and deploy to canary, right, with a ChatOps command. You saw the pipeline; it's the same thing. It's not this one, it's the other one; this is staging, so the other one. The job just ran on master.
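The promotion rule above ("the deadline expired or everyone checked off their items") fits in one function. A minimal sketch; the checklist shape, the critical-regression count, and `ready_for_canary` itself are hypothetical, and in practice this remains a release manager's judgement call rather than code.

```python
from datetime import datetime


def ready_for_canary(now, qa_deadline, qa_items, critical_regressions=0):
    """Decide whether to promote from staging to canary.

    Promote once the QA deadline has passed or every QA item is checked off,
    unless a release manager has flagged a critical regression.
    """
    if critical_regressions > 0:
        return False
    return now >= qa_deadline or all(qa_items.values())


deadline = datetime(2019, 2, 12, 12, 0)
items = {"merge request widget": True, "pipelines page": False}
print(ready_for_canary(datetime(2019, 2, 12, 9, 0), deadline, items))   # False
print(ready_for_canary(datetime(2019, 2, 12, 13, 0), deadline, items))  # True
```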

A

Exactly: it executes QA again and so on. Now we actually have more eyes on canary, since people have the option to opt out rather than having to opt in. I expect way more valuable feedback from canary, and this has already started happening, which I'm quite happy about. But we also kind of leave canary for a bit, right? Like: okay, you deploy this to canary, everything's up, the new version is up. You go and do some work.

A

All of that is fine. We can then go and decide to deploy it to production. You know how production is done? Basically, it's the same thing; you just use production for the ChatOps deploy. And because we now run all the migrations (not all, but the online migrations) inside of canary, the production deploy is mostly boring, I would say. Sometimes the load goes up like that. That sometimes happens because of the post-deploy migrations that work on a large data set.

A

Up until this moment here, right, package and image: up until this moment, everything is still private. So yeah. This was the one that actually triggered the deploy to staging. This one continued to work in parallel, so it built all the packages for the distributions that we're shipping. No, actually, up until here, sorry. So this one is actually building the packages, and this one is actually uploading the packages to packagecloud. It's separate. Gotcha.

A

The reason why they are separate: they used to all be in one central stage, with the upload in the same job. If the only reason the job failed was that the package failed to upload and you needed to retry it, you would have to wait for the whole package to build again. By separating this, we ensure that you can retry independently: the package built, but the upload failed, let me retry.
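The benefit of splitting the build job from the upload job is that a failed upload can be retried without rebuilding. A minimal sketch of that separation, with hypothetical `Job` and `retry` helpers standing in for CI jobs:

```python
class Job:
    """Stand-in for a CI job that can be retried on its own."""

    def __init__(self, name, action):
        self.name = name
        self.action = action  # callable returning True on success
        self.runs = 0

    def run(self):
        self.runs += 1
        return self.action()


def retry(job, attempts=3):
    """Re-run only this job, up to `attempts` times in total."""
    for _ in range(attempts):
        if job.run():
            return True
    return False


build = Job("build package", lambda: True)
upload_outcomes = iter([False, True])  # first upload attempt fails, second succeeds
upload = Job("upload to packagecloud", lambda: next(upload_outcomes))

build.run()
print(retry(upload), build.runs, upload.runs)  # True 1 2
```

The build ran once; only the upload was repeated.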

A

Yep, it's easy unless the pipelines fail, unless the build failed somewhere, unless, you know, runners are not operating correctly on dev, or there is contention between, I don't know, multiple builds running at the same time. I think the trickiest part at the moment... like, all of this automation was done in the past year or two, actually a lot of it in the past year.

A

So all of the automation is stuff like: oh, this pipeline triggers this pipeline. This was done recently. All of that you had to do manually previously, right; not even with ChatOps, manually. We didn't have a ChatOps command for this. So comparing 11.8 with December 2017 or January 2018 is a night-and-day comparison, but that still doesn't mean we don't have problems.

A

The biggest problem, I would say, is the manual decisions you need to make, right? Oh, which items do I pick? Or: okay, now I need to wait for the pipelines to finish. Oh okay, this one flaky test failed in the whole CE pipeline, let's retry. Oh no, it's not actually flaky, it's actually a valid failure; now go to the developers and figure out what the hell is happening. Can someone help me with this test? Rerun again. Oh okay, it failed again, so it's really a failed test. You need to fix this, so now...
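The retry loop described above can be written down as a tiny rule. A minimal sketch with a hypothetical `classify_failure` helper; treating a single passing retry as "flaky" is a simplifying assumption, since a genuinely broken test can also pass by chance.

```python
def classify_failure(rerun, retries=1):
    """Classify a test that has already failed once.

    `rerun` re-executes the test and returns True on a pass. Passing on any
    retry is treated as flakiness; failing every retry as a real failure.
    """
    for _ in range(retries):
        if rerun():
            return "flaky"
    return "real failure"


outcomes = iter([False])
print(classify_failure(lambda: next(outcomes)))  # real failure
print(classify_failure(lambda: True))            # flaky
```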

A

I think they needed to figure this out, and I don't know whether they picked it up just by getting help from others or whether we have something written down, but a lot of these things are actually written down in the process document or in individual docs in release-tools. But when I run you through the process right now, it's a bit easier to see how this all works. What's important to note here is that we are working on GitLab.com.

A

What can go wrong, right? Any of these can actually fail at any point, for whatever reason, and then figuring that out is actually the biggest, most concerning problem. By removing some of these manual steps of deployment, we made it so much easier for all of us, but now we need to figure out how to have the automated deploy actually react properly to alerting: oh no, something is actually happening while I'm deploying. Okay, what do I do?

A

Let me inform the operator who should be monitoring me, right? Like, when you trigger a ChatOps command, you go do other things and you wait for the notification to come up. And I think the slowest and most painful parts still remain on the development side, less so on the infrastructure side. On the infrastructure side, sadly, we just kind of, you know, glued things together. I wouldn't say it's terrible.

A

When all of this started, we only had GitLab CE to start with. Then we had GitLab EE, and then all of a sudden both of them started getting... oh, we have GitLab Shell. GitLab Shell was the only component that we used outside of GitLab Rails, but then we added Workhorse, then we added Pages, then we added Registry. Then we added all of these, and this is why you have all these version files inside of Rails, because we grew like: oh well, we have a system that works for one.

A

Why don't we use it for five other things? Yeah. And right now everything starts from a manual task, which I want to try and turn around. I want to ensure that we are actually asking for a deploy, not for a version to deploy, and then create an artifact for that deploy. So this is the graph you're seeing here, and then the release tooling will do a bunch of other things, like, you know, prepare everything, prepare for cherry-picking, cherry-pick, and so on.

A

And then you will package the Omnibus package, you'll package the charts, you will package five other things that we are going to introduce at some point, and that is going to come back and say: okay, well, here's the deploy artifact, you can go and deploy; I'm gonna go and do all of my other things, right? I'll prepare all the separate artifacts that are necessary for the public release. So GitLab.com receives its thing as necessary, but then the rest of the process continues for...

B

What we're gonna do is roll through the docs, and maybe use the documentation as a method for me to regurgitate what you stated and see if I can update any documentation, and/or gather the necessary questions, either from a conflict between the documentation and what you stated or from what just needs to be updated, then go from there. Probably. Yeah, I think one question I would have is: what...

A

GitLab.com actually uses the Omnibus installer to install there, right. We have dev.gitlab.org, a separate instance from GitLab.com, and building this separately on dev.gitlab.org allows us to be completely independent from any sort of problems that GitLab.com might have. All the mirrored repositories, all the images, all of that is on dev. So it's basically just a question of building the artifact that GitLab.com can use later. If your follow-up question is: why are we not doing this on ops?

A

Ops is an ops instance, right: operations systems. It was created fairly recently, and I don't think there's a use case at the moment for migrating all of this process to ops, because dev.gitlab.org serves the same purpose right now. So, basically, the only reason this is completely separate is that we can't have GitLab.com build the artifact that it uses for deploying itself.

B

The thought behind my question was more along the lines of: if we're trying to remove development from the dev instance, I was wondering if we could eliminate the other things the dev instance accomplishes, as a way of forcing us to just get rid of it completely. I know that's not the angle, but that would kind of, you know, help. I'm thinking of other ways to help security, you know, get behind the fact that we need to get rid of that process, or that instance in general.

A

So it would be us replacing endpoints there. It would be a bit painful, but it would be doable. I think one other thing dev.gitlab.org does is serve as an OAuth provider, basically, for our license or version apps, for, you know, all those external ones. So whether that's also easily doable or not, that's a different question. Yeah.

A

I think moving development would be the biggest item, because we do need a place where we do security vulnerability fixes, a private one. That can't happen in public, right? So whether that's dev, or private projects and groups on GitLab.com, I don't think it actually matters. I think we would prefer GitLab.com, because you get all these great things like automatic linking of issues as soon as you mention them.

A

A lot of the items in there are just because we had release managers previously, which was a rotating role, so you would add: well, someone forgot to do that, so let's add it to the template so that we don't forget it again. You know, naturally. And that kind of grew out of control, and it was: oh, we added these components, so let's do something with them. Okay, well, it's the release manager's problem; it's someone else's problem, right. With automation, and, you know, questioning what is necessary and what is not necessary anymore...

B

I don't know how to phrase this question, because I'm not really sure what I'm looking for out of this, but: we create a branch off of some previous commit at some point in time, and we label it 11-8-stable, whatever, and then we pluck things from master. We cherry-pick things from master into that branch, all right. What governs when the stable branch starts?

A

The feature freeze actually governs when the stable branch is created. Now, in the past six months or a bit more, I've been pushing for the deadline to get blurred on purpose, because I want to remove the feature freeze. I don't care for the feature freeze; I don't think it's good for the velocity of our development, so we're...

A

Everything goes into master exclusively, and then you port things into the stable branch, which is slower, right? It's a slower-moving branch, so that we're able to deploy it further. And if you hook this into the conversation we had yesterday, when I was explaining how I actually want to deploy from a commit, right, you know...

A

That's where I want to remove the need for having a slower stable branch. I want to have master and short-lived branches where necessary. I want to branch off from this commit, fix whatever happens, merge that into master, but keep the head of that branch so I can deploy it to all of our environments. That way we can continue deploying from master, and every time we have a bug of some sort, branch off.

A

At the moment, we have the release done. At that point we say: okay, now we are going to backport security fixes and any sort of new bugs there. That one is for the users, right, for the self-managed users. Now we have this stable branch that is definitely a slow-running branch for as long as we support that release, and that branch has its own separate life. Right now, the stable branch is not really a stable branch. It's just a slower-moving branch of master, which makes very little sense.

A

RCs. Just to make this clear: our RCs are being built from the 7th to the 22nd. We are going to build as many as necessary; the number of fixes that need to be ported and the number of new changes being brought in are what mandate the number of RCs. So what I've been trying to push for a while now is that every day should have a release candidate.