From YouTube: Postgresql 10 to 11 GitLab Chart

Description

Update Postgres from 10 to 11 using GitLab Helm Chart

A

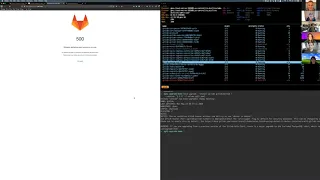

This is the view of the namespace that's running the cluster in k9s, and I'll be typing commands here in the lower right. So the first thing I want to do as an administrator to upgrade Postgres is to run the pre-migration step, and this is actually a script that comes out of the GitLab charts repo.

A

So I'm just waiting for this on the lower right to finish. While it's packing it up, it doesn't say anything, but you can see here this S3 link is where the backup is stored in MinIO. So we've created a database backup. Now we're going to delete the database from the running GitLab, then re-run the Helm chart to update to Postgres 11, and then we'll restore this backup.

A

Okay. So now that we have a backup, we can do something that might be a little scary in other circumstances: we need to delete the Postgres StatefulSet. The StatefulSet for Postgres was introduced in the 3.0 version of the chart. Previous to that, we had been using Deployments for Postgres, which means that if you updated the version of Postgres and redeployed it, it would work fine, because it would just delete the Deployment, create a new Deployment with the tag, and then launch new pods.

A

Hey Dustin, did you have to delete the Postgres StatefulSet, or was it just to make the upgrade process a little cleaner, I mean, as opposed to just deleting the PVCs? Yeah, just curious if you could have just left the Postgres 10 StatefulSet there, and if Helm would have correctly removed it and replaced it with 11.

A

Run that again. So this is creating a ConfigMap to track this process, stopping processes that depend on the database, unpacking the backup, loading that into Postgres, running migrations, and then restarting those services. At the end of this process, we should be able to load the page on the left again and find that everything is working fine and we're now running Postgres 11, with our data restored.
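The restore stages listed above can be sketched as an ordered list of steps with a small progress map. This is only an illustration: the step names and the `run_restore` helper are hypothetical, the `progress` dictionary merely stands in for the ConfigMap, and skipping completed steps on a rerun is an assumption, since the talk only says the ConfigMap tracks the process.

```python
from typing import Callable, Dict, List

# Hypothetical step names mirroring the stages described in the talk.
STEPS = [
    "stop_dependent_services",
    "unpack_backup",
    "load_into_postgres",
    "run_migrations",
    "restart_services",
]


def run_restore(actions: Dict[str, Callable[[], None]],
                progress: Dict[str, str]) -> List[str]:
    """Run each step in order, skipping steps already marked done.

    `progress` stands in for the ConfigMap: a plain string-to-string
    map that outlives a single run.
    """
    executed = []
    for step in STEPS:
        if progress.get(step) == "done":
            continue  # finished on an earlier run (assumed behaviour)
        actions[step]()  # an exception here leaves the step unmarked
        progress[step] = "done"
        executed.append(step)
    return executed
```

Calling `run_restore` twice with the same `progress` map does nothing the second time, which is the property a tracking object would be expected to provide.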

A

I should note that this is using 3.3.3 of the chart. This changes to gitlab-webservice in 4.0, and that script that I mentioned, the one we're curling into bash, updates for that as well. That's why it's important that we don't just serve one version of that script, but that we keep the GitLab tag on it. So if you try to run the 4.0 migration, you're running the 4.0 scripts.

A

So this is a manual process, and I assume that most of us would rather not have our users go through manual processes. But there are only a few steps in this process, and it does involve getting rid of data or moving data around, so there's a little more risk to it. Ordinarily I wouldn't say that is a reason to not automate something, but I think us at least getting it down to, you know, four steps is pretty manageable.

A

One thing I did run into here, and I've opened an issue on it, is that, for some reason, if you are using a self-signed certificate for MinIO, the task-runner pod doesn't know about that certificate, and then, when you try to run backup or restore, it'll just fail with an SSL warning. There's a documented workaround for this, which is to exec into the task-runner pod and edit the `.s3cfg` file to not use HTTPS in the pod, which is not such a great workaround.
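As a rough sketch of what that edit amounts to, the snippet below flips the HTTPS setting in an s3cmd-style config. It assumes the file is INI-formatted with a `[default]` section and a `use_https` key, as s3cmd configs normally are; the `disable_https` helper and the sample host names in the usage note are hypothetical and not taken from the chart.

```python
import configparser
import io


def disable_https(s3cfg_text: str) -> str:
    """Return the s3cmd config text with use_https set to False."""
    # interpolation=None keeps literal values such as %(bucket)s intact.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(s3cfg_text)
    parser["default"]["use_https"] = "False"
    out = io.StringIO()
    parser.write(out)
    return out.getvalue()
```

For example, passing in a config containing `host_base = minio.example.com` and `use_https = True` returns the same settings with `use_https = False`, leaving the other keys untouched.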

B

I don't think we could really get any safer. We need to have that safety step: run the backup, know that you have a good copy of it first, and then delete the PVC. If we do fully automate it, we have a lot of edge cases to watch out for. Admittedly, this only comes into play if you're actually running our deployed version of Postgres, which we don't currently recommend as an actual production database. That might change in the future.

C

I'm not sure I fully... I mean, I hear what you're saying, Jason, about doing two backups and comparing them, but I'm not sure that's necessarily a good way to do it, because if I get a bad backup the first time and I do the exact same thing, I might potentially get the same bad backup.

C

I don't know if there's a good way. The only way I could conceive of it is if we had something that would then afterwards go poke the database and compare it with what's in the backup. I'm not sure I'd want to go check every single value, because that's time-consuming, but something like that.
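One cheap version of "poke the database and compare" is to check per-table row counts instead of every value. The sketch below assumes the counts have already been collected from the live database and from the backup by some other means; row counts as the comparison metric and the `count_mismatches` helper are illustrative choices, not something proposed in the discussion.

```python
from typing import Dict, List


def count_mismatches(live: Dict[str, int], backup: Dict[str, int]) -> List[str]:
    """List tables whose row counts differ between live database and backup."""
    problems = []
    # Walk the union so tables missing on either side are reported too.
    for table in sorted(set(live) | set(backup)):
        if live.get(table) != backup.get(table):
            problems.append(
                f"{table}: live={live.get(table)} backup={backup.get(table)}"
            )
    return problems
```

An empty result means the counts agree. It does not prove the contents match, which is the limitation raised above.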

B

If pg_dump fails spectacularly, then that's one problem. If we ran pg_dump and it failed, and we failed to catch that it failed, that's our problem, right? So we should be sure that pg_dump, when executing, is actually going to throw us an error if it fails, and that we react to that. But if pg_dump itself says all is well and your content is not all well, I literally have no idea what to do there, and maybe we should poke a database team member for that.
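Catching a failed dump mostly comes down to checking the exit status and refusing to continue when it is non-zero. A minimal sketch, assuming the dump is launched as a subprocess; the `run_dump` helper and `BackupFailed` exception are hypothetical names, and this is not how the chart's backup script is actually written.

```python
import subprocess
from typing import List


class BackupFailed(RuntimeError):
    """Raised when the dump command exits with a non-zero status."""


def run_dump(command: List[str], out_path: str) -> None:
    """Run a dump command, writing stdout to out_path; raise if it fails."""
    with open(out_path, "wb") as out:
        result = subprocess.run(command, stdout=out, stderr=subprocess.PIPE)
    if result.returncode != 0:
        message = result.stderr.decode(errors="replace").strip()
        raise BackupFailed(
            f"{command[0]} exited with {result.returncode}: {message}"
        )
```

A call might look like `run_dump(["pg_dump", "--dbname", "gitlabhq_production"], "/tmp/db.sql")`, where the database name and output path are assumptions. This only covers the first case described above; a dump that exits cleanly with bad content would pass unnoticed.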

B

So this points at the external URL. One thing we can do here is, if you're actually using MinIO, just not worry about SSL at all and point it directly at the internal endpoint. Right, that bypasses that problem entirely, which might actually make sense if you're using MinIO inside of your deployment. I say that because for the runner to hit that URL, it actually has to go out, turn around, and come back in through the NGINX ingress.

A

And I mean, even if it is internal, I think you would still have TLS on, but nope, it was using the internal endpoint. It would be nice if we had a way of tying the task runner to that, you know, since the same CA is signing all of the internal certs, and the task runner should be able to trust that the self-signed MinIO cert internally is fine. Yeah.

B

Let's see. Actually, it looks like they have now added the ability to do that. I did a quick search, and they do have the ability to actually serve their own SSL. We're still likely not to do it inside of our chart at this point, because NGINX is expected to be the termination, and we're expecting whatever provides NGINX or the ingress to do that termination. We're not doing mutual TLS across services, and those people that are doing it are making use of Istio. All right.