►

From YouTube: Enablement Group Conversation Overview - Aug 3rd 2020

Description

Overview of Enablement's group conversation for Aug 3rd 2020

A

A

We,

of

course,

look

forward

to

all

of

your

questions,

so

please

do

attend

and

add

into

the

docs

if

you

can

at

a

time,

but

looking

forward

to

talking

with

you,

as

always

now,

I

think

most

folks,

probably

at

this

point

in

time,

know

who

the

section

is

and

what

we

do.

Also

our

categories,

those

have

not

changed.

We

have

had

to

push

back

the

maturity,

improvements

for

both

cloud

native

installation

and

disaster

recovery.

A

It's

taking

up

a

little

bit

more

time

for

disaster

recovery

to

add

support

for

all

the

missing

data

types,

and

so

that's

what

we're

driving

towards

still

a

number

one

focus

of

the

team,

including

it

in

q3

and

on

the

cloudina

side.

We

still

have

some

gaps

compared

to

omnibus

for

things

like

pages

that

we

have

to

walk

through,

hiring

is

complete,

so

we

have

everyone

on

board

and

we

are

continuing

to

see

some

of

the

newer

folks

continue

to

improve

their

velocity

and

overall

team

velocity

improve

as

well,

which

is

fantastic

to

see.

A

We'll

talk

more

about

those

in

the

engineering

metrics

side

of

things.

We

are

focusing

on

the

product

performance

indicators

as

well.

We

have

distribution

done,

we

are

being

able

to

complete

both

memory

and

database

in

13.3

geo

and

global

search

will

take

a

bit

more

time

because

they

are

non-trivial.

A

Now

I

mentioned

that

the

memory

group

is

completing

their

performance

indicator

in

thursday,

not

three.

This

has

been

the

culmination

of

a

lot

of

work

across

13.1,

13.2

and

13.3.

We

had

to

lead

the

foundation

by

being

able

to

pull

these

metrics

from

prometheus,

and

we

have

support

today

for

single

node

instances.

A

This

is

some

of

the

metrics

we're

getting

back

from

13.1

13.2

at

the

time.

This

recording

is

a

little

bit

too

new.

It's

only

been

out

for

about

a

week,

and

so

the

metrics

are

just

starting

to

trickle

back

in

but

13.1.

We

have

some

interesting

data

here

and

you

can

see,

for

example,

the

distribution

of

the

vertical

sizing

of

the

single

node

instances,

as

you

probably

would

expect.

We

have

four

and

e

kicks

in

memory

as

the

significant.

A

Common

deployment

sizes

along

with

two

and

four

course

memory-

that's

probably

pretty

expected-

what's

maybe

not

quite

as

expected,

is

that

we

have

some

very,

very

large,

vertically

scaled

instances.

We

have

four

with

over

a

terabyte

of

ram.

We

have

five

with

96

cores,

and

so

there

are

quite

a

few

customers

who

really

just

vertically

scale

up

and

really

having

some

success

in

doing

so.

A

We

also

change

recommendations

here

as

part

of

the

efficiency

program

to

encourage

users

who

can

still

fit

within

that

single

node

size

that

to

consider

doing

so,

and

so

they

can

get

the

benefits

and

ease

of

management

of

one

node

without

being

on

the

extra

complexity

of

multi-node.

So

some

proof

points

here

on

the

vertical

scalability

of

github

itself.

A

And

you

can

see

just

a

whole

bunch

more

metrics

in

this

dashboard

here.

I

just

pulled

out

these

two

and

we

are

continuing

to

do

some

further

analysis,

as

did

it

comes

back

engineering

metrics

I

mentioned

before

we

are

seeing

improvements

in

the

mr

rate

per

member,

so

great

job.

There

team,

as

we

continue

to

ramp

up

and

on

board

and

just

quick

note

that

we

also

have

the

highest

raw

number

of

contributions

from

the

wider

community,

which

is

fantastic.

A

A

The

properties

for

enabling

haven't

changed

they've

been

the

same

throughout

the

entire

year,

so

I'm

not

going

to

focus

on

them.

We're

just

continuing

to

drive

these

forward

throughout

the

year

and

dogfitting

in

particular,

we

are

looking

to

complete

the

work

for

petroni

and

also

the

releases

feature

in

13.3,

so

that

is

great

improvement

on

the

dog,

putting

side

of

things,

and

we

also

had

some

good

recent

blog

posts

got

some

traction.

A

Craig

wrote

up

a

great

blog,

most

recently

on

migrating

from

puma

gitlab

and

our

experience,

and

it

got

picked

up

as

the

lead

item

in

last

week's

ruby

weekly,

which

is

great,

and

it's

linked

there.

So

if

you're

curious

check

it

out,

there's

some

brief

conversation

on

their

threads

and

thank

you

craig

for

a

great

blog

post.

A

A

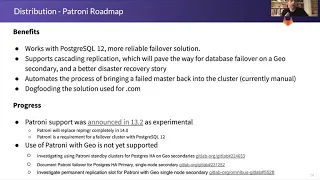

Coming

up

on

distribution,

we

have

some

really

exciting

releases

here,

we're

making

progress

on

an

openshift

operator

that

will

also

work

for

kubernetes

and

we'll

be

continuing

to

invest

there

in

the

future

in

the

coming

months

to

reduce

the

amount

of

maintenance

required

and

hands-on

work

for

running

gitlab

and

kubernetes,

so

really

excited

about

that.

We're

also

should

be

shipping.

The

first

iteration,

which

the

team

has

validated

with

customers,

will

address

their

needs

in

the

first

iteration

for

separating

configuration

and

secrets.

A

A

So

you

know

three

releases

ago

we

shipped

pushers

11,

as

required

and

here

at

fishing

nut

3,

we'll

have

great

support

for

postgres

12

and

giving

folks

a

lot

of

time

to

either

leverage

the

benefits

of

postgres

12

or

prepare

for

the

requirements

changing

to

pg12

in

may

of

next

year.

So

great

work

really

impressed

with

the

velocity

here

on

the

orchestrator

side

of

things.

A

A

So

that's

the

main

focus

of

the

team,

and

once

we

have

that

done,

we

can

then

also

work

on

other

improvements

for

things

like

geo-replication,

for

example

in

q4,

where

we

can

make

the

fact

that

you're

on

a

pr,

the

main

master,

no

the

main

gitlab

node

or

a

secondary

node

sort

of

irrelevant

totally

transparent,

gitlab

chooses

the

right

node

for

you

and

that's

all

you

need

to

know

so

really.

Looking

forward

to

that,

that

will,

I

think,

result

in

a

lot

more

adoption

and

leveraging

of

geo

in

q4

this

year.

A

If

you

could,

of

course

read

through

the

other

challenges,

I

meant

tried

to

mention

these,

as

I

went

through

the

presentation

already.

So

please

do

attend

our

call

on

monday

we'd

love

to

hear

from

you

love

to

answer

your

questions,

and

we

can

go

into

details

on

any

of

these.

That

you'd

like

to

have

more

questions

on.

Thank

you

so

much

and

talk

to

you

monday.