From YouTube: 4. #everyonecancontribute cafe: Jina.ai

Description

Blog: https://everyonecancontribute.com/post/2020-10-14-cafe-4-jina-ai/

- Repository: https://github.com/jina-ai/jina

- Examples: https://github.com/jina-ai/examples

- Documentation: https://docs.jina.ai/index.html

- Contribute to Jina.ai: https://github.com/jina-ai/jina/blob/master/CONTRIBUTING.md

A

B

B

Okay, more than happy to. I'm Alex C-G, I'm the open source evangelist here at Jina AI. We're a neural search company based out of Beijing and Berlin, though we have people all over the world, and we're always looking for more. We'll be talking a lot more about Jina moving forward, so I won't hog my time here, and we can go on to my colleague Rutuja, who will also be speaking.

C

D

E

E

B

F

B

C

B

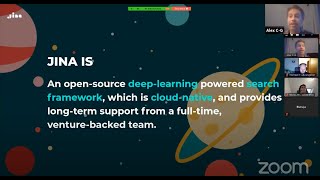

Okay, excellent. So we've given a quick intro to everyone here; here's a chance to look at all our lovely faces once again. So, Jina: Jina is an open source search framework. We're powered by deep learning, we're cloud native, and we've been going since early this year. We're a pretty new startup, but we're backed by a full-time, venture-backed team. I think there are about 20, maybe 30 of us now. So let's dive into these things a little bit more.

B

So by search framework, I don't just mean a search engine that you install and just plug and play, though we are pretty simple to use. We're a framework that you can use to build your own search engine, to search any of your data that you want. Cloud native means we support all the lovely buzzwords like containerization and microservices, all of those. And deep learning.

B

Well, we use all of the top deep learning frameworks, things like TensorFlow, PyTorch, MindSpore, all the ones you know and love and have maybe played with, maybe not. The main part of Jina is Jina Core. It's a state-of-the-art design pattern for search workflows, uses the latest AI, yada yada, works out of the box. The key points are: we're universal, so we run on a lot of platforms, Mac and Linux right now.

B

Windows is in the works. We're not running on microwaves yet, but you can run us on a Raspberry Pi. We're really easy to use. I mean, I knew barely anything about machine learning before I joined, and I've been building my own examples with Jina to search all the lines from Star Trek: The Next Generation, because that's who I am. We're cloud native, as I said, and with that cloud nativity comes scaling. We're extensible: we have a hub, a bit like the Transformers hub.

B

B

So when I talk about a search framework, the common question would be: well, what does it search? Well, hey, what doesn't it search? We do text, we do images, we do audio, we do video, and that's not all. We do cross-modal and multimodal, all for the low, low price of, well, nothing at all, because we're open source. Cross-modal and multimodal are words that not many people really know. I didn't know them before I came on board.

B

B

B

B

B

A few cool features: it's really quick to get going. We use Cookiecutter, so that'll help you create all the boilerplate code you need just by running a command from your terminal. It'll give you interactive choices; you just hit one or two or three or four to choose text or graphics or whatever you want to search. It's cloud native, as I said; universal, for all kinds of data; first-class support for AI models, Faiss, Annoy, ONNX, all the lovely buzzword ones; and plug and play with Jina Hub.

B

B

B

B

B

B

B

B

B

Our most basic example, I would say, is Hello World. This uses the really common Fashion-MNIST data set, which is a huge data set of, I think, 24 by 24 [the images are in fact 28 by 28] monochrome images of shirts, tops, bags, socks, things like that. It'll dig out a random subset and then find the closest things in the whole data set that match. So there's no real user query here, it's just randomly generated. And to save time, where are we, here's one I made earlier. So yeah, on the left...

B

B

So we've shown you what Jina can do, we've shown you some examples, and now, how do you actually do it? Jina has a whole family of different components that all interact together. You can get a high-level view, a mid-level view or a low-level view. At the low level you'd be dealing directly with the models, but at the highest level you're dealing with the Flow. The Flow deals with a specific task you want to achieve, and in search that's generally indexing or querying. Each Flow consists of different Pods.

B

These are the tasks that build up the Flow, and we'll go into those in a bit. The Flow acts as a pipeline, telling each part what to do and what the next part in the chain is. For building your own Flow, we have three ways to do it. I'm a fan of the YAML route myself because I live in Vim, but you can also do it directly in Python or, if you're a more graphical person, we've got Jina Dashboard, so you can do it there.
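The idea of a Flow as a pipeline, where each part does its job and hands the result to the next part in the chain, can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: `ToyFlow`, its `add` and `run` methods and the three lambda steps are hypothetical names made up for this sketch, not Jina's actual Flow API.

```python
from typing import Callable, List, Tuple


class ToyFlow:
    """Hypothetical stand-in for a Flow: an ordered chain of named steps."""

    def __init__(self) -> None:
        self.pods: List[Tuple[str, Callable]] = []

    def add(self, name: str, fn: Callable) -> "ToyFlow":
        # Returning self lets steps be chained in declaration order.
        self.pods.append((name, fn))
        return self

    def run(self, data):
        # Each step receives the previous step's output.
        for _name, fn in self.pods:
            data = fn(data)
        return data


# Illustrative steps only: split into chunks, attach a fake "vector"
# (the chunk length), then store everything in a dict.
index_flow = (
    ToyFlow()
    .add("crafter", lambda text: text.lower().split(". "))
    .add("encoder", lambda chunks: [(c, len(c)) for c in chunks])
    .add("indexer", lambda pairs: dict(pairs))
)

result = index_flow.run("Make it so. Engage")
```

The same chain could just as well be described declaratively, which is roughly what the YAML route mentioned in the talk amounts to: a list of named steps in order.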

B

B

B

What is the second top match, and putting them in order, instead of just going, blah, here are all the red Nike sneakers. Again, it's all defined in YAML, and I like to think of it as the brains of Jina, because this is the bit that uses the AI models. As you can see down here, we've got TransformerTorchEncoder, we've got DistilBERT, which is a really common language model based on BERT, which was created by Google. And yeah.

B

Beyond the Flow and the Pods we've got Peas, we've got Executors, we've got Drivers, and Rutuja will go into some of those in a bit. Then to run it all, it's as simple as python app.py index, or you can Dockerize it, and it's really simple to run it that way too. Once you're up and running, you can monitor it with the dashboard, and also in the dashboard...

B

B

I talked about Cookiecutter before. It uses that, so there's almost no hands-on coding required with it. Dead simple. We've got our examples repo with a whole bunch of stuff up there, and we've got the Jina docs site, docs.jina.ai. So yep, that's all from me, and I am now going to turn it over to Rutuja. Thanks, everyone.

D

F

C

C

Yeah, so any document is initially crafted, in the sense that it's subjected to different sorts of pre-processing and transformations, and broken down into different chunks before it enters the encoding phase. The encoder is where we actually make a vector representation of each chunk. After this, it gets to the indexer phase, where we store this indexed document, and then later on, during query time,

C

This is used for retrieval, and that's where we sort the results. And like Alex mentioned about the Flow API, the entire context management of all these Pods and Executors is handled by the Flow API. So basically, we could imagine all these Pods to be different microservices that are executed through this Flow API. As a user, I don't need to bother about where my Pods are running, and I can orchestrate them very easily using simple YAML files.
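The stages described here (craft a document into chunks, encode each chunk as a vector, index it, then retrieve and sort at query time) can be sketched with the standard library alone. This is a minimal sketch under loud assumptions: the bag-of-words `Counter` stands in for a neural embedding, and the function names `craft`, `encode`, `index` and `query` are illustrative, not Jina's executors.

```python
import math
from collections import Counter


def craft(document: str) -> list:
    # Crafting: break the document into chunks (here, one per sentence).
    return [s.strip() for s in document.split(".") if s.strip()]


def encode(chunk: str) -> Counter:
    # Encoding: a toy bag-of-words vector stands in for a neural embedding.
    return Counter(chunk.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def index(documents: list) -> list:
    # Indexing: store (chunk text, vector) pairs for later retrieval.
    return [(c, encode(c)) for d in documents for c in craft(d)]


def query(store: list, text: str, top_k: int = 2) -> list:
    # Querying: encode the query the same way, then sort by similarity.
    q = encode(text)
    ranked = sorted(store, key=lambda item: cosine(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:top_k]]


store = index(["Tea. Earl Grey. Hot.", "Resistance is futile."])
top = query(store, "earl grey tea", top_k=1)
```

Here `top` holds the single best-matching chunk. In a real neural search system the encoder would be a pre-trained model and the store would be a proper vector index, but the order of the stages is the same.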

C

C

So this is what a typical index Flow looks like, as Alex mentioned in the previous slides. We have different stages, including chunk segmentation, document indexing, encoding and so on. Typically, any Flow in Jina has an entry point through the gateway, and then we have different Pods that are either sequential or parallel. It's possible to implement this Flow very easily using Jina Dashboard or through the YAML file, so the Flow makes context management super simple in general. As we mentioned, Jina supports different modalities of search.

C

C

So we make sure that, irrespective of the mode, the input is always in the form of a byte buffer, and we allow different functionalities like index, search and train. For index, that means feeding in the index data. We have different crafters for the different types of modes that are supported by Jina, and we allow indexing and searching at different levels. If there's a text document that has several lines, we can call index_lines. If there are different files, we can call index_files directly and supply the input function.
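To make the "input is always a byte buffer" point concrete, here is a minimal sketch of what an input function could look like: a generator that yields one byte buffer per line of text. The name `lines_as_bytes` and its signature are assumptions made for this illustration; the talk does not show the real input function interface.

```python
from typing import Iterable, Iterator


def lines_as_bytes(lines: Iterable[str]) -> Iterator[bytes]:
    # Hypothetical input function: one byte buffer per non-empty line,
    # so that downstream steps never need to know the original modality.
    for line in lines:
        line = line.strip()
        if line:
            yield line.encode("utf-8")


buffers = list(lines_as_bytes(["Make it so.\n", "\n", "Engage!\n"]))
```

An image or audio input function would follow the same shape, yielding the raw bytes of each file instead of encoded text.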

C

C

C

C

So to imagine this as a tree structure, this is what a sample tree traversal looks like in Jina. Given granularity 0 and adjacency 0, we move down to the chunk level, then we move down to the match level, and so on. The trick here is that we move from (0, 0) to (1, 0) and then to (1, 1). We cannot move directly from (0, 0) to (1, 1), so that's an important concept here while traversing. So, to illustrate this in a better manner:
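The traversal rule can be written down as a small check. This sketch assumes a position is a `(granularity, adjacency)` pair and that a legal move changes exactly one of the two by one level; the talk only states the downward case, so the symmetric treatment of upward moves is an assumption, and both function names are hypothetical.

```python
def is_single_step(src: tuple, dst: tuple) -> bool:
    # A position is (granularity, adjacency). A legal move changes
    # exactly one coordinate by exactly one level (assumed rule).
    d_granularity = abs(dst[0] - src[0])
    d_adjacency = abs(dst[1] - src[1])
    return d_granularity + d_adjacency == 1


def is_valid_path(path: list) -> bool:
    # A path is valid when every consecutive pair is a single step.
    return all(is_single_step(a, b) for a, b in zip(path, path[1:]))


via_chunks = is_valid_path([(0, 0), (1, 0), (1, 1)])
diagonal = is_valid_path([(0, 0), (1, 1)])
```

The first path goes through the chunk level before reaching the match level and is accepted; the second tries to jump diagonally and is rejected.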

C

This is an example where we have a car. At the chunk level, what we'll be doing is having a zoomed-in look inside the car, looking at smaller parts of this image. So this is where we are going down at the chunk level. This is at level one, and this is further down at level two, where we only see the headlight. Then what we are doing is, at this depth of level...

C

At this depth of chunk level, we are going to find the matching images. So for this zoomed-in car, we get another match here, which is quite similar. Similarly, if we were to go down to the headlight level, then we find a similar headlight of the silver car corresponding to this green car. These are the different levels at which we are trying to match.

C

C

We want to embed documents in a similar space and compute the similarity, and make sure that, while retrieving or while querying and ranking the documents, similar documents were indexed closer to each other in the same embedding space. Further down, it's easy to retrieve the corresponding text for the document if we have a key-value index. So these two indexes, vector and key-value, are often used sequentially and in parallel for different purposes.
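The pairing of a vector index with a key-value index can be sketched as follows: the vector side answers "which ids are closest to this query?", and the key-value side turns those ids back into the stored text. `ToyCompoundIndex` and its methods are hypothetical names for this sketch, and plain Euclidean distance via `math.dist` (Python 3.8+) is an assumed similarity measure.

```python
import math


class ToyCompoundIndex:
    """Hypothetical pairing of a vector index and a key-value index."""

    def __init__(self) -> None:
        self.vectors = {}  # vector index: id -> embedding
        self.texts = {}    # key-value index: id -> original text

    def add(self, doc_id: int, vector: list, text: str) -> None:
        self.vectors[doc_id] = vector
        self.texts[doc_id] = text

    def search(self, query: list, top_k: int = 1) -> list:
        # Step 1: the vector index finds the ids of the nearest embeddings.
        nearest = sorted(
            self.vectors, key=lambda i: math.dist(query, self.vectors[i])
        )
        # Step 2: the key-value index maps those ids back to text.
        return [self.texts[i] for i in nearest[:top_k]]


idx = ToyCompoundIndex()
idx.add(1, [0.0, 1.0], "red sneakers")
idx.add(2, [1.0, 0.0], "blue jacket")
hits = idx.search([0.1, 0.9])
```

Writing to both stores in `add` corresponds to the two indexes working in parallel, while `search` uses them one after the other.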

C

So this is a common design pattern in Jina. Next, I would talk about the text document segmentation concept. In Jina we introduce the concept of chunks, or a recursive document structure, where we say that one document is in turn composed of multiple other documents at different levels of depth, making it a recursive document structure. Each of these components is basically a chunk.
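A recursive document structure is straightforward to model: a document holds a list of chunks, and each chunk is itself a document. The `Doc` dataclass below is a hypothetical illustration of that idea, not Jina's document type, and the book layout is made up for the example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Doc:
    text: str
    chunks: List["Doc"] = field(default_factory=list)

    def depth(self) -> int:
        # A leaf has depth 0; otherwise one more than its deepest chunk.
        return 1 + max(c.depth() for c in self.chunks) if self.chunks else 0

    def leaves(self) -> List[str]:
        # Collect the text of the finest-grained chunks, left to right.
        if not self.chunks:
            return [self.text]
        return [leaf for c in self.chunks for leaf in c.leaves()]


book = Doc("book", [
    Doc("chapter 1", [Doc("paragraph 1"), Doc("paragraph 2")]),
    Doc("chapter 2", [Doc("paragraph 3")]),
])
```

Searching at a given level then means matching against the nodes at that depth and walking back up the tree to find the document they belong to.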

C

Also, we have indexers available at different depth levels. It's important to note that when we are searching, the search is actually performed at the chunk level, where we follow the compound indexer pattern. If we are to find out the actual document that corresponds to a particular chunk, that's where the document indexer comes into the picture as a final step.

C

So another important concept is what happens at index time and what happens at query time. At index time, the two indexers, the document indexer and the chunk indexer, work in parallel. The chunk indexer is responsible for receiving messages from the encoder, and the doc indexer is the one that actually fetches the documents from the gateway. The gateway is like the entry point for the Flow.

C

So at query time, the document and chunk indexers work sequentially, in the sense that the document indexer gets messages from the chunk indexer through a ranker that we have implemented, called the Chunk2DocRanker. The ranker ranks these chunks by relevance, then it gets the parent IDs corresponding to these chunks, and then, to track which document each ID belongs to, we use the doc indexer.
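The query-time sequence (chunk hits, ranked by relevance, mapped to parent IDs, then resolved by the doc indexer) can be sketched like this. The aggregation rule used here, where a document scores as its best chunk, is an assumption for illustration; the talk does not say how the ranker combines chunk scores. `rank_docs` and the sample data are hypothetical.

```python
from collections import defaultdict


def rank_docs(chunk_hits: list, doc_store: dict) -> list:
    # chunk_hits: (parent_id, score) pairs from a chunk-level search,
    # with non-negative scores where higher means more relevant.
    best = defaultdict(float)
    for parent_id, score in chunk_hits:
        # Assumed aggregation rule: a document scores as its best chunk.
        best[parent_id] = max(best[parent_id], score)
    ordered = sorted(best, key=best.get, reverse=True)
    # The document index maps each parent id back to the full document.
    return [doc_store[pid] for pid in ordered]


docs = {"d1": "TNG episode 1 script", "d2": "TNG episode 2 script"}
chunk_hits = [("d1", 0.4), ("d2", 0.9), ("d1", 0.7)]
ranked = rank_docs(chunk_hits, docs)
```

Other aggregation rules (sum, mean, or a count of matching chunks) would slot into the same place in the loop.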

C

So to summarize, Jina supports a lot of different modes of search, and it makes sure that different Peas together are embedded in a Pod, and this Pod acts like a microservice. It could be for different types of Executors, like a ranker, a crafter, an indexer, an encoder and so on. The Flow API gives a very seamless experience in the context management for all these Pods. And for refining our results, to make our search even better,

C

We have recently introduced the concept of a recursive document structure, a tree traversal, while indexing. In future we also have plans for supporting Kubernetes and managing this entire thing. What is now available as a Docker image hosted on Docker Hub will also be ported to Kubernetes. So yeah, that's it from my side.

B

B

A

G

B

G

So I understand the question, but what I mean is: when I have an extractor that can read 3D data and extract some information, say I have a bounding box, for example, and I use the length of the box or something like that, is there an API where I can put that stuff in, so that I can then search it?

E

E

So we currently do not have anything like what you are asking for, for this kind of application, but these are very easy to write as small modules, and they can be added to Jina, and the rest follows automatically. You just need to make sure that the requirements of these classes are satisfied: the inputs and outputs.

B

B

So in a book you have chapters, so each of those would be a sub-document. In a chapter you have paragraphs, again sub-documents of the chapter, so sub-sub-documents. In a paragraph you have sentences, and in a sentence you have words. So you could search from the word level and that would bubble up, or you could search at the paragraph level, or however you want to do it. Is that about right, Rutuja, Pratik?

E

B

A

B

...search engine, you have to write all sorts of rules and a processing pipeline to do stemming, lemmatization, all of that jazz. With Jina we're using pre-trained models that were created by, like, Google, so they already know what they're doing. You just throw the data at it and it returns relevant data. But it needs a lot of time to index that data, so it's the computer that takes the time, not the developer.

A

B

B

So this is where you can find us, where you can talk to us and ask questions, and that's the best way to get started in talking to us. We'd really like people to use the general channel and not private channels for this because, you know, sharing is caring. Let's share that information out there and let the world see it, so everyone can benefit.

B

B

B

B

G

B

So yeah, you can run it from Docker. You can query it straight in your web browser. Earlier I showed you jinabox, which is just here, and this is all running on Jina's server. You just type in the endpoint of your running Docker instance and it'll connect flawlessly, well, seamlessly, I would say, and it just works out of the box. You don't need to install any front end, you don't need to get npm going, you don't need to run a web server locally.

B

B

B

This tutorial is comprehensive. It goes through every little thing, so it is quite long, but that's because it's holding your hand, because this is the first project for a lot of people. Once you've done this project, you've already picked up a lot of info, so you can get up and running for the next ones.

B

So yeah, and then when you want to run it, it's as simple as app.py index, which takes a while, because machine learning always does. With text it's not so bad. It depends: if you use a lower max docs value you'll get worse results, because you'll index a lot less, but you can also get up and running a lot quicker, because it doesn't need time to index so much. So it's a speed-quality trade-off there. And then to run it...

B

...which introduces you to the Jina family. Rutuja and I both spoke a little about this. So we've got documents and chunks; chunks are just essentially sub-documents. Everything's defined in YAML, so it's dead simple to use, no actual code required. The Executors do most of the work: encoding, crafting, ranking and so on.

B

B

A

G

B

B

Yeah, yes. So that's when our engineers all get together over Zoom. It's a public event, a public webinar. Anyone can join in, ask questions, learn more about Jina there and, you know, find out what the engineers are working on right now. And that's where you can hear from our CEO, Han Xiao. He's the brains behind Jina.

B

Well, he's the guy who started Jina. There are many brains behind Jina. And yeah, he's also the man behind the Fashion-MNIST data set that I demoed earlier. He started the bert-as-service GitHub repo. He's done a lot in machine learning. We're always looking for new team members, so if you've got a background in machine learning, artificial intelligence, evangelism and developer relations, or product management, reach out to us, because we're growing fast and we're looking for new talent.

B

B

But yeah, the number one way would be to join us on our Slack. The link should be in the chat, which is there, or somewhere. I don't know where you people put your chat windows, but it'll be either on YouTube or in our Zoom chat. So you can find us there, and that's the best place to reach out. We're very friendly, we're eager to support you. We don't bite much.

B

B

But yeah, I'm really excited personally about seeing Jina used for real-world applications. We had another one built by Arte Tanona. He built a search system for European judicial rulings using Jina. That wasn't even at a hackathon; that was just something he did. I'm also looking forward to integrations with other frameworks and other things. At the hackathon, someone built a hybrid chatbot and neural search system using Jina and Rasa, which is a machine-learning-powered chatbot platform, also based here in Berlin.

B

B

E

...everyone is excited about. This feature is one that a lot of people come and ask us about. Currently we can use the models that are available, but people want to train their own models and then use them. So this is something that we look forward to, and it's a very complicated piece, because we have to do it in a distributed way in the cloud and also support a lot of different models. So this is...

B

F

A

C

C

C

F

B

D

B

B

E

Maybe in one of the next talks you can bring a child, a five-year-old kid, and then maybe he can do the things and prove that it's simple. For certain use cases it's really good. For some well-known cases like semantic search, it really runs out of the box. Otherwise you have to do tons of engineering, like before.

E

E

G

A

Well, I was actually wondering about the roadmap a little, and I did a dive into the projects on GitHub, and then I found out something about CI/CD, and then I was reading the source code, but we can also do that asynchronously. So I would say we can wrap it up here. Thanks for the great introduction and for everything you've shown us. I have learned a lot today.