Description

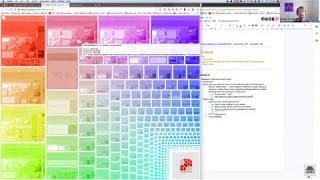

First iteration of our Frontend Foundation office hours.

Handbook: To be added

Agenda: https://docs.google.com/document/d/1rTkn3ZZEYALgLgrtl8rbMJ0PH2Tw6T2n19t9b3Ebur4

C

Hey everyone, sorry for the delay, Jung and Mike. If you don't mind, I'll be driving this a bit, because I have a little idea in my mind. At the end, you can tell us whether it worked out well and whether you liked this one. I will obviously defer to you, Mike, on anything you know better. Feel free to interrupt at any time if I'm talking nonsense, and all the others who are here, also feel free to ask any questions.

C

So you're joined here by one of the elders of the internet, and of GitLab frontend. Together, we've been forming the Frontend Foundation team. For now, as you know how hiring has changed, it's more or less Mike, and I'm the manager. So we thought: hey, let's do office hours, because it gives us the opportunity to talk about long topics, technical topics, in depth, which we haven't really done anymore.

We wanted to start with some work that we've done before, in the webpack working group. So we just want to jump on webpack, and it's pretty funny, because webpack also has to do with our frontend performance: how do we split chunks? Do we do smart things, or not-so-smart things, with our webpack config and the resulting bundles? So we thought it's a good topic for the first one. There is no script. It's all freestyle.

B

No, I think you kind of covered it. I guess the mandate of at least this section of the ecosystem team is to deal with sweeping changes to the build tooling, and I suppose that encompasses testing and many other things as well. Early on, we sort of decided we're going to focus on some long-standing webpack issues, so this is an important topic to discuss. There's a lot of technical debt that we've accumulated over the past couple of years that just hasn't gotten enough attention.

A

Yeah, super happy that this team exists, very excited. I'm not sure whether it's related to the webpack thing, but I'm interested in this global bundle situation. I stumbled over it recently and started to look closer. We have our global bundle, which is quite large right now, and we're struggling to slim it down, because it has code in there that's only used on some of the pages, this kind of thing. I think you call it the main bundle, right? OK, the main bundle, the big one. So I wondered whether it makes sense, approach-wise, to create something like a "main light" as a test: a bundle that really only has the libraries we care about and takes care of the initial work. Then we could probably start from this main-light bundle without loading everything.

C

No, I didn't work on the weekend. Never work on the weekend. But I just wanted to give some perspective for the people who might not know it. The thing Roman is talking about, loading JavaScript on every page: that's our main bundle, or main entry point, and it's loaded on every page. Right now there's a lot of stuff loaded in there. If we search in the JavaScript, it's called main.js, and it loads jQuery, webpack stuff like polyfills, and a lot of things like tooltips and lazy image loading, all the things that you might need on every page, though maybe not everything that's in here. This bundle currently gets concatenated with all of the libraries that we use on every page, or that we use a lot. The threshold that we actually have is that something needs to be used on 90% of the pages, and then it's thrown in here as well.

We just removed Underscore yesterday, so if you search for underscore, it's not there; this report is fairly recent. Otherwise, you can find it by looking, as a frontend maintainer, into a merge request that has been merged. If, say, Natalia's build from one hour ago looks good, then you can go into the compile-assets job with the dots, and it's there as a report, the webpack report. It's the same HTML file that gets published. We had some problems in the past because our pipelines, with all the exceptions and so on, didn't publish to Pages regularly, so the stuff that you find here might be outdated, but it's enough to illustrate the point.

So how does webpack work for us? We have different entry points in webpack that are tied to the Rails routes. Here you can see a chunk shared by "commons", "pages IDE", "pages snippets edit", "pages snippets new". This is code that's shared between the snippets edit page, the IDE, and so on, and you can see that webpack bundles these things together. So if you go to a snippets show page, for example, it will load the snippets show page chunk, it will load the commons chunk, and it will load the main bundle.

C

So let's dive in and have a look at what's actually in the main bundle. Everything in this part is our node_modules, so these are dependencies that we're loading. Everything else is what we're responsible for. One of the things we're responsible for is polyfills. We have a fallback for emojis: a big generated JSON file that has fallbacks for emojis, to load actual pictures if your system doesn't support emojis. This is one that had been moved out, but there was a little bug with it that led to emojis not loading properly, so it has been moved back in. It's a big one: about 300 kilobytes of something that you probably don't need if you have a modern system. You can also see a lot of stuff that makes sense. We have Vue in there.

So if we search for Vue (I just need to zoom out and find the main bundle again; where is it? Main bundle, you're large. All right, the matches get a different color, this is lovely), you can see we have Vue in there. We have BootstrapVue in there, which is now highlighted. We have Vuex there. We have Vue Apollo in there.

One of the things that we just accomplished last night, big props to the people who worked on it, especially Scott and Laura, I think, was getting rid of Underscore in favor of Lodash, because Lodash can be properly tree-shaken, so we only pull in the stuff that we really use. That way we got rid of about 50 kilobytes.

D

To interrupt you, Lucas: maybe someone on the call from the monitoring team can speak to this, but I think we load the monitoring code on each and every page as well. There's a monitoring JS chunk there. If you paste a link to a monitoring dashboard chart anywhere in GitLab, I think in any Markdown area, then that chart gets rendered inline.

C

Exactly, good point. We should probably employ a technique similar to what we're doing with, for example, the Mermaid charts, or with the file viewers. You can see we have a pretty big dependency for when you want to render a Balsamiq file, which no one wants to, or when you want to render PDFs. We have these separate chunks that are only loaded if you actually view such a page. So yeah, great call-out, and it's exactly the kind of thing that we want to talk about and take away. We need issues for that, which the Frontend Foundation team could do, or, with instructions, other people on the team could do as well.
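
The "only load the chunk if the page needs it" technique mentioned for Mermaid and the file viewers can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not GitLab's actual code: `lazyOnce`, `loadChartRenderer`, and `renderChartIfPresent` are hypothetical names, and a stand-in loader replaces the real dynamic `import()` so the sketch runs without any dependency.

```typescript
// Hypothetical helper: wraps a loader so the heavy module is requested at most once.
function lazyOnce<T>(loader: () => Promise<T>): () => Promise<T> {
  let cached: Promise<T> | undefined;
  return () => {
    if (cached === undefined) {
      cached = loader();
    }
    return cached;
  };
}

// In a webpack build the loader would typically be a dynamic import, for example
//   const loadMermaid = lazyOnce(() => import('mermaid'));
// which makes webpack emit the library as a separate async chunk.
// The stand-in below only counts how often the "download" happens.
let loadCount = 0;
const loadChartRenderer = lazyOnce(async () => {
  loadCount += 1;
  return { render: (source: string) => `<svg data-source="${source}"></svg>` };
});

// Only pages that actually contain a chart pay for the extra chunk.
async function renderChartIfPresent(source: string | null): Promise<string | null> {
  if (source === null) return null;
  const renderer = await loadChartRenderer();
  return renderer.render(source);
}
```

The same pattern would apply to the inline monitoring charts: the renderer is requested only when a chart link is actually found on the page.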

C

Another big culprit that we've just introduced recently is in the IDE folder. If we look into our IDE (oops, let's find IDE utils), there's utils.js, which loads all the languages Monaco supports and does some mapping on them. We use that to be smart and say: hey, this file is a text file, because Monaco knows. We just rely on this little company called Microsoft and the open-source community behind it, and we render icons and so on based on Monaco. This actually leads to us loading Monaco on the merge request page, because unfortunately Monaco cannot be tree-shaken. So if you require something, and this is two or three layers of indirection, just by requiring these utils you suddenly load seven megabytes into the merge request page. If you go to any merge request page right now, you load 15 megabytes of JavaScript.

One of the things that I have been working on, because it was a little pet peeve, is this webpack memory metrics project. It's actually meant to track development memory: how much of your computer's RAM webpack takes when running in development. I've written scripts to analyze the bundle sizes and so on. In order to do it continuously, we would run it in a CI job that creates artifacts which save the bundle size for each entry point and for the main chunk. Then we can write a Danger job which goes in and says: hey, your merge request just bloated this entry bundle by two megabytes, 200 kilobytes, whatever, or: your merge request made the situation a lot better. Right now, a lot of these mishaps probably happen because you don't have any visibility into it. Maintainers don't have any visibility into it either. So making these changes visible, both over time and while you work on these things, definitely makes sense.
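
As a rough idea of what "save the bundle size for each entry point" could look like, here is a small sketch. It assumes a heavily simplified, hypothetical stats shape (`SimplifiedStats`); the real webpack stats JSON is much larger and structured differently in detail, and the entry and file names are made up.

```typescript
// Simplified, hypothetical stats shape; not the real webpack stats format.
interface SimplifiedStats {
  assets: { name: string; size: number }[]; // size in bytes
  entrypoints: { [entry: string]: string[] }; // entry name -> emitted file names
}

// Sum the bytes of all JavaScript files that belong to each entry point.
function entrySizes(stats: SimplifiedStats): { [entry: string]: number } {
  const sizeByFile: { [file: string]: number } = {};
  for (const asset of stats.assets) {
    sizeByFile[asset.name] = asset.size;
  }

  const sizes: { [entry: string]: number } = {};
  for (const entry of Object.keys(stats.entrypoints)) {
    let total = 0;
    for (const file of stats.entrypoints[entry]) {
      // Ignore CSS, source maps, and other non-JavaScript assets.
      if (/\.js$/.test(file)) {
        total += sizeByFile[file] || 0;
      }
    }
    sizes[entry] = total;
  }
  return sizes;
}

// Made-up example data for two entry points.
const exampleStats: SimplifiedStats = {
  assets: [
    { name: "main.js", size: 2500000 },
    { name: "pages.example.show.js", size: 120000 },
    { name: "main.css", size: 90000 },
  ],
  entrypoints: {
    main: ["main.js", "main.css"],
    "pages.example.show": ["main.js", "pages.example.show.js"],
  },
};

const exampleSizes = entrySizes(exampleStats);
```

A CI job could write a result like `exampleSizes` to an artifact, so that a later job can compare it with the sizes of the target branch.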

C

Yeah, sorry for taking up so much time talking. I could talk about the challenges here as well, because currently the stats JSON is 530 megabytes. So parsing that and making something more meaningful out of it was a bit challenging and interesting, and it's something we can also improve upon. There's great stuff that's going to come here!

So this is just an entry point for discussion. We can go a bit more in depth into how chunk splitting actually works, if you want to. There are a few things that have changed, which Mike and I wrote down as a keyword earlier: HTTP/2 server push, because we now utilize HTTP/2. So one optimization that we could do, which would be cheap, would be this: even if it's the same JavaScript, if we just split it into more chunks, we could make use of HTTP/2 loading in parallel. We also noted a lot of things that we can load asynchronously to improve this situation. I will stop talking now and give other people room to ask questions and all these kinds of things.

B

This is the thing that webpack purportedly is supposed to be able to notice and account for on its own. It should automatically take that and put it into one common chunk, because this is a multi-megabyte addition to several chunks that could be put into one cacheable chunk. But for whatever reason, because we have so many entry points at GitLab, which I think is not the way they anticipated people using the SplitChunksPlugin, it does not notice these things automatically.

So, in order to fix this, you can look at the changes that I made in this particular MR, but it's similar to what I had to do with the main chunk. You have to massage the configuration to basically tell it to notice the Monaco dependency and create its own chunk for it, because, unfortunately, it's not doing that on its own. I would like to see at some point whether we can contribute a fix upstream to webpack itself that would make the SplitChunksPlugin smarter, so that we don't have to do this manually.
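
For readers who have not seen this kind of configuration, a cache group that forces one dependency into its own chunk could look roughly like the sketch below. The option names (`cacheGroups`, `test`, `name`, `chunks`, `priority`) are regular webpack `splitChunks` options; the chunk name `monaco`, the priority value, and the path pattern are assumptions made for illustration and are not taken from the MR mentioned above.

```typescript
// Assumed pattern: every module that lives in the monaco-editor package.
const monacoPattern = /[\\/]node_modules[\\/]monaco-editor[\\/]/;

// Sketch of the relevant part of a webpack configuration object.
const monacoOptimization = {
  splitChunks: {
    cacheGroups: {
      monaco: {
        test: monacoPattern, // which modules belong to this group
        name: "monaco", // emit them as one shared, separately cacheable chunk
        chunks: "all", // consider both initial and async chunks
        priority: 20, // illustrative value: win over lower-priority groups that also match
      },
    },
  },
};
```

With a group like this, every entry point that pulls in Monaco references the same chunk instead of carrying its own copy.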

C

Yeah, so in this splitChunks section here you can see the main bundle that we mentioned before, with all the stuff that ends up in it. Then there are the priorities, where the higher one wins. Right now, for example, if Monaco actually qualified for the main chunk, it would still end up in the main chunk. So maybe we should talk about the priority, but priority is just: how important is this main chunk?

B

You can see from the configuration there that minChunks is set to the entries count times 0.9, which means something would have to be in 90% of all the entry points before it ends up in the main chunk. So that shouldn't happen with Monaco, but you could make a mistake and import it somewhere.
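
The 90% rule can be written down as a small calculation. The sketch below is an illustration only: the helper names are hypothetical, rounding up with `Math.ceil` is an assumption (the transcript only says "entries count times 0.9"), and the `chunks` value in the example group is likewise assumed.

```typescript
// Number of entry points a module must appear in before it is moved to "main".
// Rounding up is an assumption; the source only states "entries count times 0.9".
function mainChunkThreshold(entryCount: number, ratio: number = 0.9): number {
  return Math.ceil(entryCount * ratio);
}

// True when a module shared by `usedByEntries` entry points qualifies for "main".
function belongsInMain(usedByEntries: number, entryCount: number): boolean {
  return usedByEntries >= mainChunkThreshold(entryCount);
}

// How the threshold could be plugged into a cache group (sketch, not the real config).
const mainGroupSketch = {
  name: "main",
  chunks: "initial", // assumed value
  minChunks: mainChunkThreshold(100), // 90 with 100 entry points
};
```

A library imported by only a handful of entry points stays far below the threshold, which is why Monaco should not end up in the main chunk unless it is imported almost everywhere by mistake.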

C

And similarly, this would be a point where we could go in and start grouping other dependencies and these kinds of things. But probably the question is: how much would this help if we still load it on every page, like the thing that Mark mentioned with the monitoring bundle, right? [unintelligible]

C

Actually, a really good point, and this is what the little script here is doing. This gives you the size of the main bundle, and this is the average size of a page without the main bundle. Maybe we have to look at the statistics, because here you can actually see, probably, the point where we made this Monaco mistake: apparently the average jumped by 50 or 60, how much is this, yeah, 50 kilobytes. So that's probably where we made this mistake and something grew dramatically out of proportion. This is where these tools, once we write them, can definitely help us to do this A/B testing. Let me just open it up here. Oh, I've deleted it. No, I've deleted it.

So you're going to see some live coding in a second. I've actually already written a script which compares two arbitrary analyses of the bundles and groups the results. There's a threshold of one kilobyte, which is really low: if something grows by at least 1% or one kilobyte, it's counted as a significant change, whether growth or shrinkage, and this is what we would visualize in the Danger plugin.
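
The comparison described here can be sketched as follows. This is not the script shown in the call: the names are hypothetical, and treating the two thresholds as alternatives ("at least 1% or at least one kilobyte") is one reading of the spoken description.

```typescript
const MIN_BYTES = 1024; // one kilobyte
const MIN_RATIO = 0.01; // one percent

interface SizeChange {
  entry: string;
  before: number;
  after: number;
  delta: number; // positive = grew, negative = shrank
}

type SizeMap = { [entry: string]: number };

// A change counts as significant if it reaches either threshold (assumed reading).
function isSignificant(before: number, after: number): boolean {
  const delta = Math.abs(after - before);
  if (delta === 0) return false;
  if (delta >= MIN_BYTES) return true;
  // A brand-new entry (before === 0) always counts as significant growth.
  return before === 0 || delta / before >= MIN_RATIO;
}

// Compare two size snapshots and group the significant changes.
function compareSizes(before: SizeMap, after: SizeMap): { grown: SizeChange[]; shrunk: SizeChange[] } {
  const grown: SizeChange[] = [];
  const shrunk: SizeChange[] = [];
  const hasOwn = (map: SizeMap, key: string) => Object.prototype.hasOwnProperty.call(map, key);
  const removed = Object.keys(before).filter((entry) => !hasOwn(after, entry));

  for (const entry of Object.keys(after).concat(removed)) {
    const oldSize = hasOwn(before, entry) ? before[entry] : 0;
    const newSize = hasOwn(after, entry) ? after[entry] : 0;
    if (!isSignificant(oldSize, newSize)) continue;
    const change: SizeChange = { entry, before: oldSize, after: newSize, delta: newSize - oldSize };
    if (newSize > oldSize) {
      grown.push(change);
    } else {
      shrunk.push(change);
    }
  }

  // Largest change first in both groups.
  grown.sort((a, b) => b.delta - a.delta);
  shrunk.sort((a, b) => a.delta - b.delta);
  return { grown, shrunk };
}
```

A Danger job could take the `grown` and `shrunk` lists and turn them into a comment on the merge request.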

C

That's actually a good call-out, a good question. I wonder if we theoretically could compile webpack in two different variants, at least for GitLab.com. For Omnibus, they would scream at us if we included our JavaScript twice and then served it; this A/B testing makes no sense for on-premise. But I think, theoretically... I don't know exactly how it works.

B

Yeah, we could do that as well. I'd have to look at the Rails webpack implementation; I think it caches the manifest in certain ways. I know it's doable. I just wonder if it's as simple as a toggle to tell it which manifest to load, or if it's more complicated than that, but we can look into it.

But the other thing about this is that we'd just be checking different ways of splitting up the chunks. It would still be the same amount of code being parsed and executed on a given page, and I feel like that's where a lot of our performance gains can come from. Splitting up the chunks might improve network performance, but I don't think it'll actually improve the execution performance and the parsing in the browser, and that's where we should really focus, I think: on reducing a lot of the code.

B

... is somewhat lifted when you can count on using HTTP/2, because you can request multiple things at the same time. Webpack by default right now tries to limit the number of chunks per entry point to 4. Actually, I think it's normally 3, but we've increased it to 4 in our config. We could make that much higher if we can count on the ability to load them simultaneously, and perhaps that would improve the network performance. That'd be worth fiddling with if we're doing an A/B test.
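
The limit mentioned here corresponds to the `maxInitialRequests` option of webpack's `splitChunks` configuration. A minimal sketch of how the value could be varied for an A/B test follows; the helper name is hypothetical, and the higher value of 20 is an arbitrary placeholder, not a recommendation from the call.

```typescript
// Hypothetical helper: pick the limit for parallel initial requests per entry point.
// 4 is the value mentioned in the call; 20 is an arbitrary placeholder for the
// "much higher" variant that assumes HTTP/2 is available.
function splitChunksLimits(canAssumeHttp2: boolean): { maxInitialRequests: number } {
  return { maxInitialRequests: canAssumeHttp2 ? 20 : 4 };
}

// Sketch of the relevant part of a webpack configuration object.
const limitsOptimization = {
  splitChunks: {
    chunks: "all",
    ...splitChunksLimits(false),
  },
};
```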

D

Actually, that reminded me: the reason I brought that up in the first place is that I remember reading that the benefits of HTTP/2 are lost as soon as you get a connection that is less reliable. Say, for instance, you're going from China to the US; then the benefits that HTTP/2 gives you are actually reversed and you get a really bad experience. That's what I've read. So if we chunk too much, we might be preferentially...

B

[unintelligible], right? Wouldn't that make the connecting-from-China-to-a-server-in-the-US kind of situation less common? I mean, yeah, but we have to consider the lowest common denominator, which might be somebody using an Android phone in India or somewhere, with a poor connection, like a 3G connection. We want to make it a decent experience for them as well.

C

So even if you cache those 2.59 megabytes, right, that stuff doesn't change that often. But once we move more to a CD model, it might be meaningful even if the connections are worse: if you have users who are using GitLab regularly, it might still be useful to put some stuff in other chunks in order to be able to cache them longer, especially stuff like jQuery, which we never update.

C

Right, sitespeed running from one data center with a good connection to GitLab running in another data center might not give you real-life results. Like you mentioned, Mark, you had the experience in the past that loading stuff via VPN and so on is pretty annoying.

B

To that end, as far as trying to make things cacheable long-term, I don't know if that's really possible by massaging the webpack config itself, because there are so many little things that will change the digest hash of the main JS chunk that aren't even related to the contents of the chunk. It could be references to another chunk that's part of the same runtime, any little...

Yeah, and that could encompass things like the emoji digests. Obviously, I don't think they've changed in probably years. I don't know how many times we've updated jQuery. I don't know how often we update ECharts, though ECharts should be async anyway, and I'm not sure how frequently we update Vue. But maybe we adopt a new policy on the cadence of when we update some of these dependencies, with a mind to maintaining cacheability, too.

C

If you still want to support all the emojis, you still need to update it. And because there are some emojis that are potentially misused, you might even end up in a situation like: hey, could we please remove the middle finger emoji, because it's not nice? So suddenly you're not only the gatekeepers of a great tool, you also become the gatekeeper of which emojis are OK. Sorry for the rant.

I see Roman has a question: would it make sense to have something that screams if the webpack bundle suddenly grew by X? Yes, exactly, that's the intent. Is there a canonical set of pages we can use to test or benchmark chunking strategies? I think the best canonical pages we have are the ones that we use for sitespeed. Is Andre still on the call? Yeah, Andre, do you maybe want to say a few words about the sitespeed setup that we currently have?

E

I can talk about what we're doing, yeah. So basically the sitespeed goal is just to keep the performance periodically checked. The sitespeed tool allows you to check once a feature hits production. This section of the handbook just captures the speed index, I think, to see whether we got worse or better. I don't know what you were expecting me to cover in particular.

C

I think we have created test environments in the past, like a large merge request or something like that, and we could, for example, put them on staging. If we did A/B testing or these kinds of things, we could configure our sitespeed in a way that hits both A and B, for example, or we could enable the feature flag, run sitespeed, and compare the results, right? And is the sitespeed repo actually linked here?

E

So I think the team members who are more familiar with it are either Tim or Jose; I think he worked on it a long time ago. But we can totally tweak it. We can tweak the URLs that are currently checked, but sitespeed is currently just checking production, not any other environments. We do have a review performance job built by the Quality team, I think, that checks the performance of merge requests. That's probably a better place to start.

C

Two months ago, five days ago, OK, so there's a description on how to run it. Maybe this is something where we need to consolidate efforts. This is the repo which we currently use, I'm just linking it here, and for this other one it would be interesting to know the differences and so on. This is the one that we use for the handbook and for continuous monitoring, but I don't know if anyone has a setup that pings people if something gets worse. It's similar to the webpack bundle: if the stuff is there but nobody looks at it, it's nice that it's there, but you need alerts around these kinds of things. But it's good that we already have it, because it makes it easier to test, for example, to do this A/B testing.

And hopefully we're in a good spot, I don't know, a few months down the road, where we can actually start digging into the performance of the code. Everything we're talking about here is just the performance of loading stuff, and not loading unnecessary stuff. But there's probably also the question: how do we write our code?

E

One of the things we noticed with the review performance job is that it's tough to get a consistent way to measure performance when you're running within a review app. But if you're looking at sizes of bundles, that's a more objective way to compare. If you're looking at timings and you're running on two different review apps, the timing might be different even though nothing changed.

C

And here I'm comparing two bundles. This is the code that I've not written on the weekend. You can see it compares two points in time, and you can see the significant shrinkage. This is the page that shrank the most. It's not considering the main bundle, because we know that we need to fix the main bundle. This is just to see: OK, what happens on the merge request index page? Apparently we did something between these two points in time, and it shrank significantly. And if you look at the significant growth, you can actually see what this big bump in the average meant: we had a page that was 400 kilobytes before, and now it's six megabytes. Stuff like that. And this is a high percentage.

So say we scream at you once something grows by more than one percent, or two percent, or four percent, whatever. Obviously that can also have a downside, because you could still grow slowly over time. Just having this thing scream at you in the MR doesn't mean that you shouldn't monitor these kinds of things. But making this data a bit more accessible, and maybe even giving all of the sections and groups the responsibility: hey, look at your pages, do you even realize how much code you've written? Or, even if you just refactor some code or delete some code, seeing as a nice side effect how much of an impact it actually had would be a pretty cool thing, I think. Because once you start deleting code, even if you have a 400-kilobyte page and just remove 20 kilobytes, that's substantial!

C

Cool. Are there any more questions regarding webpack, bundles, chunks? We haven't touched on webpack memory consumption. This is usually a topic that's more interesting to the backend engineers, because they don't want to run the webpack dev server or Yarn. Otherwise, we could maybe do a little retro of this first meeting and see how we could iterate on the format in the future.

B

We do have, in the very near future, two new dev server options. One will compile once and not retain everything in memory: basically it just runs webpack, listens for changes on the frontend assets, and then runs webpack again from scratch, which will reduce the memory footprint a lot for people who don't frequently touch the frontend code. The other is sort of an intermediate option between what we currently do, where we retain everything in memory, and nothing: exporting all of our large dependencies into a DLL and using the DLL plugin in development, which seems to reduce the memory footprint a bit as well, but still allows us to do hot module replacement and other niceties for hacking on code in the frontend. Both should come in very soon.

The MR was pretty much ready to go a couple of weeks ago, but we are doing a minor refactoring right now. Then it should get in soon, and which option you use will just be part of the GDK gdk.yml file. But as far as compiling the code only for the page that you are on, that's fairly complicated, because the way webpack works right now is that it needs to look at more or less the entirety of the codebase to know how to split the chunks in the most efficient way, or not the most efficient, but close to an efficient way, so that it's not retaining a lot of duplicate code in memory and serving up duplicate code in entry points. So it can't really take a single page in isolation. Or it could, but we'd have to do a lot of work to make it work that way.

... as far as the way that the compile process worked, and it allowed people to have a manageable memory footprint when developing. It might be something worth looking into. I bookmarked it, and I can't remember the name of the tool they developed, but I think they open-sourced it. So that's an option that we could explore.

C

I wonder, as a lot of frontend engineers are basically working in their stages, whether theoretically you could create a list of stuff that you want to compile, like: hey, I just care about this code. Then, if you go to another page, we just give you a banner: hey, you haven't configured this code to compile. So then you go: oh yeah, I'm reviewing a merge request, I'm going to add it to that list.
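
The idea floated here could be sketched roughly like this. Everything in the block is hypothetical: the entry names, the `selectEntries` and `bannerFor` helpers, and the rule that `main` is always compiled are illustrations of the proposal, not an existing GDK or webpack feature.

```typescript
// Hypothetical entry map: entry name -> source file.
type EntryMap = { [name: string]: string };

const hasEntry = (map: EntryMap, name: string) =>
  Object.prototype.hasOwnProperty.call(map, name);

// Keep only the entries a developer asked for; "main" is always included (assumption).
function selectEntries(all: EntryMap, wanted: string[]): EntryMap {
  const selected: EntryMap = {};
  for (const name of ["main"].concat(wanted)) {
    if (hasEntry(all, name)) {
      selected[name] = all[name];
    }
  }
  return selected;
}

// Message to show when a page's entry point was left out of the local build.
function bannerFor(page: string, compiled: EntryMap): string | null {
  if (hasEntry(compiled, page)) return null;
  return `Entry "${page}" is not in your compile list; add it to build this page.`;
}

// Made-up entry names for illustration.
const allEntries: EntryMap = {
  main: "./main.js",
  "pages.example.show": "./pages/example/show/index.js",
  "pages.other.index": "./pages/other/index/index.js",
};
const compiledEntries = selectEntries(allEntries, ["pages.example.show"]);
```

As noted in the discussion, a partial build like this changes which modules are shared between entry points, so the resulting chunks would differ from a full build.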

B

That's actually a really elegant way to do it, I think. Perhaps we can basically just have a redundant, statically compiled webpack set of assets with its own manifest file, and then one that's dynamic and only gets triggered on certain pages, or something like that. That would be a way to do it. We'd really have to educate people who are using it.

C

I don't know. Well, maybe, because you mentioned the static analysis: these webpack stats files contain a lot of stuff. I wonder if you could compile once and save out a file that basically has the static mapping of stuff, so you know what you need for a certain page, and then you do a per-route compilation or something. I don't know. It's really tricky stuff.

C

Do you have any feedback on this meeting that you want to give right away? If not, I'm going to paste the link to the issue, because we want to document the thing in the handbook, which we haven't done yet. If you have feedback, feel free to reach out to me or Mike. Let's see how we're going to do it in future formats. We definitely want to have this as regular office hours.

So if you want to bring topics to the table, like: hey, I heard about this cool thing, why not TypeScript? It doesn't have to be Mike or me leading this meeting. So if you have something frontend-related that you want to talk about: the Frontend Foundation office hours give a frame for nice technical discussions and frontend weirdness.