►

From YouTube: Gitaly: partial clone with filter spec

Description

A quick demo of what I learned writing some documentation about Git partial clone

- https://github.com/git/git/blob/master/Documentation/technical/partial-clone.txt

- https://git-scm.com/docs/git-read-tree#_sparse_checkout

Here's the merge request with the docs: https://gitlab.com/gitlab-org/gitlab-ce/merge_requests/30295

A

Hi,

my

name

is

James

Ramsey

I'm

product

manager

at

gitlab

and

I've

been

writing

some

documentation

about

its

partial

clone

feature

today

and

I

thought

it

would

be

interesting

to

record

a

quick

video

and

give

a

brief

demo

of

how

it

works.

So

partial

clone

for

those

of

you

who

aren't

familiar

is

a

performance

optimization

in

get

to

help

work

with

very

large

repositories

and

by

very

large

repositories.

I

mean

repository

is

greater

than

a

hundred

gigabytes

on

disk.

So

this

is

really

quite

large.

A

Quite

annoying

and

slow

to

download

and

partial

clone

tries

to

make

it

better

by

only

downloading

or

only

fetching,

the

objects

that

you

want,

either

filtered

by

size

or

by

file

path

and

I'll

be

demoing

how

to

do

this

by

file

path

today,

using

a

filter,

spec

file

in

the

git

project

here

that

I

have

forked

I,

have

a

branch

that

I

created

a

filter

spec

file

on.

So

let's

take

a

look

at

that.

A

A

This

is

obviously

quite

a

basic

example,

but

in

a

much

larger

project

you

might

find

lots

of

different

teams

working

together

on

different

components.

In

the

same

repository,

or

perhaps

you

have

a

very

different

architecture

in

your

repository

structure,

where

you've

got

20

or

more

different

applications

all

stored

in

one

monolithic

repository

in

that

case,

it's

very

likely

that

different

engineers

will

only

be

working

in

specific

sub

directories.

A

Perhaps

you've

got

some

shared

libraries

that

everyone

needs

to

clone,

but

then

otherwise

developers

are

contained

to

specific

applications

and

really

only

want

to

fetch

the

application

they

are

working

on

and

the

common

dependencies.

In

that

instance,

you

might

have

multiple

get

filter,

spec

files

living

inside

of

the

directory

of

each

application,

so

I

might

have

app

1

slash,

get

filter,

spec

and

app

2

slash,

get

filters

back

and

that

way

each

team

can

manage

their

own

filter

specs

that

they

need

to

get

the

data

and

to

get

the

job

done.

A

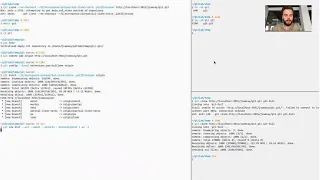

So,

let's

take

a

look

at

how

what

this

looks

like

in

practice.

So,

firstly,

I'm

going

to

show

you

a

command

that

doesn't

work,

which

is

the

git

clone

command

and

I

discovered.

This

doesn't

seem

to

work

in

get

2.2

in

my

testing,

but

hopefully

we'll

fix

it.

So

let's

run

it

and

see

what

happens

so.

This

is

the

bug

that

we

see

and

I'm

still

investigating

it.

But

I

want

to

point

out

one

thing

which

is

in

this

clone

command.

A

I

used

the

no

checkout

flag

and

the

reason

the

no

checkout

flag

is

important

when

running

the

clone

command

is

that

clone

does

two

things:

first,

it

fetches

and

then

it

does

a

checkout

and

if

you

were

to

do

a

checkout

without

first

configuring,

sparse

checkout

get,

would

then

fetch

one

by

one.

All

the

objects

needed

to

actually

complete

the

checkout

command

for

all

the

different

files

and

directories

for

the

branch

and

then

the

the

really

important

key

part

for

doing

a

partial

clone.

A

Hey

then,

we'll

commit

well

how

to

remote,

and

then

we

need

to

config

enable

partial

clone

for

the

remote

we

just

happen,

and

now

we

can

run

git

fetch

so

we're

using

the

same

filter

argument.

You

know,

start

filter

flag

here

with

the

get

filter

spec

on

the

server

and

then

we'll

fetch

and

you'll

notice.

A

And

so

the

the

benefit

would

be

somewhat

diminished

and

doing

a

partial

clone,

but

now

we

can

see

that

we're

receiving

data

back

so

I'll

jump

over

to

this

other

session

and

we'll

compare

the

size

of

the

two

directories.

So

I've

got

the

entire

git

repository

and

I've.

Sorry

I've

got

the

one

that

we

just

checked

out

here.

Just

fetched

sorry-

and

we

haven't

checked

it

out,

yet

that's

64

megabytes

and

if

I

compare

it

to

the

full

git

repository,

it's

133

megabytes.

So

it's

about

half

the

size.

A

A

A

You'll

see

this

is

much

faster.

It

immediately

begins

transferring

the

data,

unlike

the

example

over

here,

where

except

they're

thinking

for

quite

a

while

and

we'll

compare

the

size

of

these.

The

other

thing

we

can

do

to

verify

that

we've

successfully

done

a

partial

clone

versus

a

full

clone

is

by

running

the

rev

list,

command.

A

And

Counting

the

number

of

missing

objects,

while

that

commands

running.

Let's

look

at

the

size

of

get

full

and

you'll,

see

that

it's

actually

larger

than

the

bear

repository,

because

we've

actually

got

all

the

copy

of

everything.

And

then

we've

got

a

duplicate

of

the

data

in

the

working

directory

or

the

index.

Because

all

that

data

is

being

checked

out

and

copied

out

of

the

get

directory

into

where

we

expect.

A

A

There

we

go

great,

so

we've

got

a

partial

close

over

here,

close

this

for

now

great

okay.

So

looking

at

the

contents

of

the

git

repository,

though

we're

not

seeing

anything

and

that's

because

we

haven't

done

a

check

out

yet

we

haven't

checked

out

any

branch

and

there's

nothing

here.

So

let's

configure

sparse

check

out

and

do

a

sparse

check

out

based

on

the

filters

back

so

to

enable

that

we

have

to

first.

A

A

So

we

can

see

the

git

repository

locally

has

a

copy

of

this

blog

and

we

can

use

that

and

just

pipe

it

straight

into.

It's

fast

check

out

file

and

then,

if

we

were

and

we

actually

check

out

the

master

branch,

we

can

now

see,

we've

got

the

documentation

directory

and

if

we

check

out

the

partial

chlorine

branch

where

the

filter

spec

is,

we

can

also

see

the

filter

spec.

A

So

here

we

have

a

fully

working

copy

of

the

git

repository,

but

we've

out

a

whole

bunch

of

data,

and

so

you

can

imagine

for

a

100

gigabyte

repository

being

able

to

exclude

the

vast

majority

of

data

is

very

convenient.

There's

fewer

files

in

the

index,

which

means

faster

status

operations,

there's

fewer

objects

and

files

on

disk.

So

it's

consuming

less

of

your

hard

drive,

which

is

really

useful.

If

you've

got

a

laptop,

that's

only

got

maybe

256

gigabytes

of

storage.