►

Description

Watch the playback for a hands-on GitLab CI workshop and to learn how it can fit in your organization!

We will kick things off by going over the differences between CI/CD in Jenkins and GitLab, syntax requirements, advantages to using GitLab, and how you can achieve the same outcomes in GitLab. Getting started with CI/CD in GitLab will take a lot less time than tends to be required for Jenkins, and your users can stay in a single platform.

We will then dive into how to build simple GitLab pipelines and work up to more advanced pipeline structures and workflows, including security scanning and compliance enforcement.

A

A

A

So

if

you

would

mind

doing

that

through

the

session,

okay

folks,

we

still

have

people

joining

in,

but

we'll

get

started

as

we

have

a

lot

to

cover

today.

So

hello

and

welcome,

and

today's

session

we're

going

to

be

covering

gitlab

CI

for

Jenkins

users.

Today,

you're

going

to

be

provisioned

with

a

gitlab

ultimate

account

on

gitlab.com,

so

you

will

need

a

gitlab.com

username

to

be

registered

for

the

session.

A

A

You'll

also

have

access

to

the

training

environment

that

we

provisioned

today

for

the

next

four

days,

I

believe

and

finally,

I

do

have

my

colleagues

available

in

the

Q

a

section

of

Zoom

which

I

just

mentioned.

It's

not

the

chat

box.

It's

alongside

of

please

pop

your

questions.

In

there

we'd

ask

you

all

to

remain

on

mute

during

the

session,

just

so

that

we

can

keep

the

flow

going.

We've

a

lot

to

cover.

A

A

So

my

name

is

Justin

Conrad

I'm,

a

customer

success

engineer

here

at

gitlab

and

I

work

on

the

scale

team,

which

means

I,

mostly

Handler,

emea,

Enterprise

accounts,

it's

a

great

job,

I

love

working

here,

I

love

working

with

all

of

you

guys

and

your

your

efforts

with

devsecops

and

leveraging

gitlab

after

today's

session.

Please

feel

free

to

connect

with

me

whether

it's

on

LinkedIn

or

taking

a

look

at

some

of

my

contributions

on

gitlab

itself.

A

So

Jenkins

and

gitlab

so

I

guess

to

kick

us

off

today.

What

we're

going

to

do

is

take

a

quick

look

at

the

differences

between

Jenkins

and

gitlab

for

cicd,

and

this

is

mostly

to

give

you

a

sense

of

how

you

can

start

translating

your

pipelines

over

to

gitlab

when

we're

done

with

that

we'll

get

into

our

Hands-On

Workshop

the

material

there.

A

A

So

we're

going

to

start

talking

about

some

of

the

gitlab

platform

advantages

and

one

of

the

main

ones

is

reduced

tool.

Switching

so

people

are

having

to

make

their

commits

in

gitlab

and

then

go

over

to

Jenkins.

Cedar

manually

run

their

pipeline,

or

perhaps

you

have

a

trigger

setup

to

do

that,

for

you

with

gitlab

pipelines

operate

within

the

project,

where

developers

actually

create

their

commits

and

merge

requests,

you

can

access

all

pipelines

on

a

Project's

pipelines,

page

meaning

that

developers

don't

have

to

switch

tools

to

review

pipelines.

A

A

These

security

policies

are

actually

covered

in

some

of

the

optional

steps

of

today's

Workshop,

so

feel

free

to

go

through

them.

After

our

session

today,

as

I

said,

your

training

environment

will

be

provisioned

and

it'll

stay

alive

for

four

days.

I

believe

so

having

an

ultimate

subscription

will

also

allow

for

the

creation

and

enforcement

of

compliance

framework

pipelines

that

can

also

be

used

with

security

audits.

So

there's

a

difference

there

in

what

you

get

with

the

ultimate

subscription.

This

deck

will

be

shared

afterwards

and

you're

going

to

see

a

lot

of

stuff

underlying

throughout.

A

A

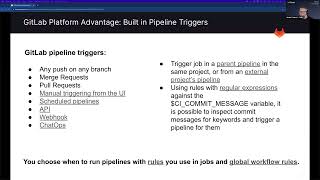

So

if

we

look

at

the

list

of

what

will

the

pipeline

get

triggered

by

you've

got

any

push

in

any

branch,

merge

requests

you've

got

scheduled

pipelines

which

run

GitHub

c,

o

CD

pipelines

at

regular

intervals.

You

can

trigger

them

via

an

API,

so

trigger

a

pipeline

for

a

specific

Branch

or

tag

with

an

API.

You

also

have

manual

triggering

from

the

UI,

which

we

will

actually

cover

today

as

well.

You'll

get

to

see

it

in

the

UI.

You've

got

web

hooks,

chat,

Ops

and,

of

course,

pull

requests.

A

The

main

instance

is

going

to

pull

everything

in

and

as

well

that's

an

option

if

you

need

to

take

that

route,

it's

also

possible

using

rules

with

regular

expression

against

the

CI,

commit

message

variable

and

it's

actually

possible

to

inspect

the

commit

message

and

look

for

you

know

certain

keywords

and

Trigger

pipeline

for

them.

So

that's

a

really

nice

option

there.

You

can

see

that

there's

so

many

ways

that

you

can

trigger

and

pipelines

and

it's

important

to

explore

in

a

while

to

see

what

best

fits

your

development

life

cycle.

A

One

of

the

use

cases

here

might

be

you

know,

maybe

you

don't

want

to

run

pipelines

for

every

single

portion

of

feature

Branch,

but

maybe

a

developer

once

in

a

while

wants

to

run

a

linter.

So

you

could

look

for

a

keyword

in

there

and

then

trigger

the

pipeline

with

them

entering

it.

And

if,

if

you

wanted

to

go

around

that

around

it,

that

way.

A

A

Now

the

really

neat

thing

about

this

is

that

users

who

are

triggering

the

pipelines

have

the

ability

to

pull

these

pipelines

the

pipeline

files

into

their

project

and

there's

a

couple

of

different

ways

that

that

can

happen,

and

but

all

that

they

have

to

have

is

read

access

to

that

Upstream

repository

that

has

the

pipeline

definitions

in

it.

So

essentially,

your

devops

seams

can

maintain

these

projects

that

have

the

template

repositories

in

them,

and

they

don't

have

to

worry

about

everybody

being

able

to

commit

to

it

so

with

the

pipeline

template

repositories.

A

One

thing

to

note

is

that

when

you

pull

these

files

in

and

run

them

as

pipelines

in

a

downstream

project,

they're

going

to

use

that

Downstream

projects

variable

context,

so

that

you

have

the

ability

and

we'll

be

talking

about

this

more

as

we

go

through

the

workshop

today.

But

you

do

have

the

availability

availability

to

set

variables

in

a

whole

host

of

different

ways

and

again

in

order

for

someone

to

be

able

to

run

that

pipeline.

A

They've

got

to

have

read

access

to

that

centralized

repository,

but

that's

all

that

they

have

to

have

this

centralized

repository

down

and

talk

about

can

have

a

ton

of

different

pipelines

in

it

if

it

needs

to,

and

it

can

contain

multiple

pipeline

definitions

for

different

conditions

that

exist

in

the

downstream

pipelines

or

for

different

types

of

projects.

Even

maybe

you've

got

a

python

and

node.js

projects

and

you

can

create

independent

pipelines

for

each

of

them.

A

So

projects

can

include

pipeline

files

from

external

repos

in

their

pipeline

files

so

that

they

can

create

their

own

gitlab

CI

yaml

file,

and

then

they

can

use

an

include

statement

to

include

the

Upstream

projects

if

they

want

to,

but

for

projects

that

don't

want

to

maintain.

And

we

all

know

that

these

exist.

A

Okay,

so

this

is,

this

is

an

important

one:

jobs

run

in

isolated

environments,

so

the

jobs

you

can

think

of

them

as

stages

and

Jenkins

so

stages

and

Jenkins

tend

to

have

the

steps

delineated

below

them

that

are

going

to

run

and

so

pipeline

jobs

run

independently

of

each

other,

and

they

have

a

fresh

environment

for

every

single

one.

If

this

is

unlike

a

Jenkins

agent,

where

the

sages

actually

run

sequentially

on

that

same

agent,

the

possibility

is

in

fact

it's

a

very

real

probability.

A

So

let's

say

your

job

produces

a

variable

and

you

need

to

be

able

to

evaluate

that

variable.

In

the

context

of

a

downstream

job,

you

can

do

that

with

these

dot

EnV

files

and

which

are

an

artifact

that

the

Upstream

job

would

leave

behind,

and

the

downtrend

job

would

then

require

using

either

the

needs

or

the

dependencies

keywords,

and

then

it

can

evaluate

it

in

the

context

of

its

own

runtime

and

I

mean

by

the

way

like

get.

A

That

runs

a

cleanup

after

every

single

job

to

ensure

a

clean

working

environment

for

its

next

job,

and

the

idea

is

that

these

Runners

are,

you

know

highly

disposable

and

highly

portable

and

Runners

can

run

for

any

project.

So

these

are

unlike

agents

where

you

configure

them

fairly

specifically,

and

they

could

just

run

for

any

project

that

you've

got.

A

A

Maybe

you

would

want

to

add

some

approvals

under

that,

so

that

somebody

can

approve

of

being

released

to

production

when

you

just

configure

a

manual

job

in

the

context

of

a

commit

they're

going

to

have

a

play

button

on

them

and

we'll

actually

be

able

to

show

you

that

later

in

the

UI

as

well

and

all

manual

jobs

are

going

to

have

that

play

button

on

them

and

any

developer

can

then

run

these

manual

jobs.

So

if

they

have

the

developer

role,

it'll

appear

for

them.

A

So

the

way

that

you

get

around,

that

is,

that

you

can

use

things

like

protected

branches,

for

example.

So,

in

pipelines

for

protective

branches,

only

users

who

are

allowed

to

actually

push

or

or

merge

to

that

protective

Branch

can

run

the

manual

jobs

and

if

the

job

is

run

in

a

protected

environment

which,

by

the

way,

is

a

setting

in

your

your

projects,

you

can

actually

make,

for

example,

a

production,

the

production

environment,

a

protected

environment.

A

You

can

also

add

deployment

approvals,

and

these

are

independent

of

who

can

click

on

that

that

run

button.

That

appears

in

the

UI.

You

can

add

two

or

three

approvals,

if

you

think

that's

appropriate,

maybe

one

and

if

that's

more

appropriate

and

you

can

actually

delineate

who

those

users

are

that

have

to

be

able

to

approve

it.

A

But

you

can

also

pick

the

people

who

are

allowed

to

run

the

jobs

in

the

protected

environments

too,

and

again,

you've

got

plenty

of

links

on

this

page

and

the

previous

one

that

that

will

link

out

to

what

we're

discussing

here.

I

know

it's

it's

a

lot

of

detail.

We're

trying

to

give

you

the

the

surface

level

to

set

the

scene

for

the

rest

of

the

CIA

Workshop

But,

be

sure

to

to

dive

into

these

links

that

are

shared

in

the

deck

afterwards.

I

think

you'll

you'll

get

some

great

Insight

from

them.

A

Okay,

so

obviously

you

know,

Jenkins

has

its

own

terminology.

Github

has

its

own

terminology.

So

it's

it's

good

to

kind

of

do

what

we

call

a

terminology

crosswalk

here,

where

we

can

reference

both

and

try

to

figure

out

what

we're

talking

about.

So

essentially,

it's

the

differences

in

syntax

that

we're

talking

about

here

and

and

we'll

cover

this

for

a

few

minutes,

so

I

mean

Jenkins

agents,

which

I've

already

touched

on

they're.

What

we

call

runners

in

gitlab,

there

are

very

different

kind

of

concept.

A

Agents

tend

to

be

highly

customized

for

projects,

as

we

said,

whereas

Runners

tend

to

be

highly

disposable,

so

they

can

be

just

reused

over

and

over

and

over

again,

you

do

have

the

ability

in

Jenkins

to

create

a

host

set

of

steps.

If

you

need

it

or

a

post

job,

you

can

support

that

with

actually

additional

stages

in

gitlab.

If

you

need

to

do

it,

and

but

remember

that

you

know

our

gitlab

runners

run

cleanup

jobs

already.

So

if

you're

only

using

that

to

do

a

cleanup,

it's

not

actually

needed.

A

The

runners

will

will

do

that

themselves

and

then

first,

look

at

stages

in

Jenkins,

which

I

tend

to

see

is

something

that

we

would

call

jobs

and

gitlab

and

because

they

tend

to

have

to

you

know

steps

enumerated

underneath

them.

We

actually

have

the

keyword.

Sorry,

we

have

a

keyword,

we

call

stages

but

stages

to

us

is

a

container

for

jobs,

and

so,

when

you

see

a

gitlab

pipeline,

you'll

see

kind

of

vertical

columns

and

JavaScript

listed

in

each

one

of

the

columns

and

those

are

stages

to

us.

So

later

on.

A

As

we're

going

to

the

workshop

I'll

be

able

to

show

you

what

I

mean

by

that

we'll

have

you

know

build

tests

and

Dev

stages.

I

think

are

in

it.

So

you'll

get

to

see

that

in

a

couple

of

minutes,

but

it's

just

good

to

be

aware

of

the

difference

and

when

you

delineate

the

steps

in

a

Jenkins

stage,

that

would

be

a

script

in

a

job

in

gitlabs

pipelines.

A

Now,

when

we

talk

about

the

next

one,

their

environment,

to

us

in

gitlab,

this

is

just

variables

and

variables

can

be

declared

in

a

job.

If

you

want

to

declare

specific

to

a

job

or

they

could

declare

it

globally

for

the

entire

pipeline.

That's

an

option.

If

you

want

to

take

that

road,

or

else

I

mean

either

one

is

sufficient

to

to

get

the

job

done.

It's

just.

However,

you

want

to

do

it.

What

fits

your

best

and

the

last

one

then

options

what

you

call

options

in

Jenkins.

A

I,

don't

see

anything

in

the

the

Q,

a

so

I'm

hoping

you're

all

following

along

nicely

and

please

Leverage

The

Q

a

and

not

to

chat.

If

you

do

have

any

questions

and

they'll

be

queued

up

for

my.

My

team

members

help

you

with

cool

so

now

with

respect

to

parameters,

and

this

is

not

actually

required

in

gitlab

when

you

go

to

run

a

manual

pipeline

you're

able

to

set

any

variable

that

you

want

to.

A

So,

if

you

go

to

the

pipelines

page

and

you

hit

run

pipeline

you're

going

to

have

the

opportunity

to

create

as

many

variables

as

you

want,

you

define

the

keys.

You

decide

to

define

the

the

values

for

it,

but

it's

also

possible

for

you

to

Define

these

variables

in

your

gitlab

CI

yaml

file

and

give

them

a

default

value

so

that

when

people

go

to

run

a

manual

pipeline,

it's

already

pre-populated

with

that

default

value.

A

You

know

it's

pretty

much

supported

in

the

in

the

same

way

that

it

is

in

Jenkins

now,

with

respect

to

triggers

and

cron.

Gitlab

is

tightly

integrated

with

Git

sem

pulling

options

for

triggers

are

not

needed

and

we

support

a

Syntax

for

scheduling

pipelines

and

again

that

link

there

on

that

deck

will

bring

you

out

there.

We

don't

need

to

go

through

it

today

and

but

I

would

recommend

going

off

and

taking

a

look

at

that

not

fall.

A

A

So

this

is

the

last

of

the

kind

of

terminology

crosswalk

slides

to

cover

today

with

respect

to

tools-

and

you

know-

we've

looked

into

tools

and

Jenkins

is

only

a

few

of

them

right

now,

they're,

primarily

supporting

Java.

We

don't

have

any

kind

of

tools

Direct

in

gitlab

and

best

practices

in

git

labor.

Actually,

too,

you

know

create

containers

of

your

own

that

already

have

these

libraries

pre-loaded

in

them,

and

then

you

can

store

those

and

get

them

and

consume

them

in

your

pipelines.

A

If

you

want

to

so,

it

makes

it

very

easy

and

convenient

way

for

you

to

kind

of

sub

out.

These

containers

create

your

own

Docker

files,

or

you

know

whatever

you

need

to

do

with

respect

to

input

it's

similar

to

the

parameters

keyword

again,

it's

not

needed

because

a

manual

job

can

always

be

provided

and

the

runtime

variable

entry

so

you're

covered

there

now

gitlab

does

support

a

when

keyword,

which

is

used

to

indicate

when

a

job

should

run

in

the

case

of

or

even

despite

failure.

A

We're

going

to

move

on

now

to

the

next

portion

of

today's

workshop

and

which

will

look

and

focus

mostly

on

CI

gitlab

we're

going

to

get

Hands-On

we're

going

to

get

to

to

get

a

feel

of

these

features

and

authoring,

some

pipelines

and

I

hope

you

get

great

benefit

from

it.

So

we

can

drive

on

this.

Is

our

agenda

for

the

workshop

portion

of

today.

First

we're

going

to

go

through

lab

setup

and

provisioning

your

training

environment

on

gitlab.com,

then

we're

going

to

go

through

the

setup

of

a

very

simple

pipeline.

A

We'll

then

move

on

to

look

at

execution

order

and

directed

acyclical

graphs

and

some

rules

and

failures

like

I,

discussed

and

also

SAS

and

artifacts.

Finally,

the

last

step

will

be

optional.

It

will

cover

transferring

out

the

project

and

we

won't

actually

go

through

that

step

by

step.

But

I'll

show

you

where

to

go

and

the

instructions

to

follow.

If

you

want

to

take

the

work

that

you

did

in

today's

training

environment

and

put

it

back

out

into

your

own

namespace,.

A

Okay,

so

let's

get

started

so

for

today's

session

we

have

a

fictional

new

startup

that

you're

all

a

member

of

it's,

creating

a

public

leaderboard

for

the

hit

new

racing

game,

Tanuki

racing.

So

let's

pretend

that

your

company

has

recently

swapped

over

to

using

gitlab

for

cicd

and

you've

been

tasked

with

learning

about

all

of

the

different

pipeline

capabilities.

A

A

A

So

you're

going

to

go

to

getlouddemo.com

you're

going

to

see

this

screen

and

you

want

to

click

on

this

blue

button.

That

says

redeem

invitation

code

now,

once

you

click

on

this

you're

going

to

be

brought

to

this

screen,

and

it's

this

invitation

code

here,

the

one

that

I

pasted

into

chat

that

you're

going

to

want

to

paste

in

there

you're

going

to

want

to

put

today's

invitation

code

into

the

box

and

just

click

on

the

blue

provision,

training

environment

button.

A

A

If

you

go

to

gitlab.com

or

if

you

know

it

already

great,

but

if

you

need

to

retrieve

it

on

gitlab.com,

if

you're

using

the

old

UI

and

your

profile

picture

will

be

in

the

top

right

hand

corner

if

you're

using

a

new

UI

it'll

be

in

the

top

left,

wherever

it

is,

click

on

your

profile

picture,

you'll,

get

a

drop

down,

you'll,

see

your

name

and

then

below

it.

You'll

see

your

username.

Now

it's

very

important

exclude

the

ad

symbol.

A

So

in

my

example

here

all

I

want

is

the

J

Conrad

2..

You

do

not

want

to

include

the

atom,

but

if

you

do,

it

won't

work.

So

if

you

can

grab

your

gitlab.com

username

you're

going

to

pop

it

in

box,

alongside

where

it

says,

getlive.com

username

paste

it

in

there

and

click

on

provision,

training,

environment.

A

A

Essentially,

this

is

going

to

be

your

link

to

the

provision,

training

environment.

You

can

get

there

by

clicking

the

URL

or

my

group,

so

I'm

going

to

go

ahead

and

do

it

now

follow

along,

if

you

don't

already

have

it

done

so

again,

this

is

gitlabdemo.com

I'm,

going

to

click

on

redeem

invitation

code,

I'm,

going

to

copy

today's

invitation

code,

paste

it

in

Vision,

training,

environment

and

my

username

great

now,

at

this

point,

I

can

click

here

or

here

it

will

bring

into

the

same

place

yeah.

This

is

what

you

should

end

up

with.

A

This

is

where

you

should

be.

This

is

your

training

environment

for

today,

and

if

you

get

to

this

stage,

which

you

all

should

by

now,

what

I'd

like

you

to

do

is

take

note

of

this

string.

So

you'll

see

it

after

my

test

group.

This

string

here

will

be

very

handy

for

a

future

step

where

we're

forking

a

source

project

for

today's

Workshop.

A

Alrighty

the

hero

I

can

see

you

in

the

chat

there.

If

you

don't

have

gitlab.com

account

you're

going

to

want

to

go

to

gitlab.com

or

users

forward,

slash

sign,

underscore

up

I

believe

it

is

and

set

up

a

sorry,

I

typed

in

gitlam

gitlab.

Obviously,

but

that's

the

link

it's

forward,

slash

users

or

as

a

sign

up

and

Morgan.

Maybe

you

can

paste

in

the

correct

link

there

for

the

hero

and

but

we

all

do

need

that

gitlab.com

username.

You

can

sign

up

for

a

free

one.

There.

A

Boom

Okay,

so

I

don't

see

any

major

chat

about

anyone

having

issues

with

anything

up

to

that

stage,

which

is

awesome,

I

trust

you're.

All

here

we've

copied

this

string,

put

it

somewhere

safe.

If

at

any

stage

you

got

this

or

anything

that

looks

like

that,

any

other

errors

you've

gone

wrong

somewhere,

there's

obviously

been

a

Miss

click

or

something

has

happened.

Reach

out

to

q,

a

either

myself

or

Morgan

will

help

you,

since

we

get

a

chance

and

we'll

send

you

in

the

right

direction.

A

A

So,

first,

what

I

want

you

all

to

do

is

click

on

the

link

that

I'm

going

to

paste

into

the

chat

gone

in

now.

So,

alas,

that

you

all

click

on

that

link,

I'm

going

to

do

it

now

myself

here

and

you

can

just

follow

along

with

me

on

the

screen

for

this

portion.

Essentially,

this

is

the

link

I

just

gave

you

and,

on

the

left

hand

side

you

can

see

that

this

is

the

training

environment.

A

What

I'm

saying

here

is

basically

telling

gitlab

I

want

you

to

Fork

this

source

project

into

my

training

environment,

I'm,

keeping

everything

else

standard

and

in

here

I

just

paste

it

in

that

string

and

I

select

the

only

option

that

appeared

for

me

now:

I'm

going

to

click

on

Fork

project

and

take

maybe

20

30

seconds.

Sometimes.

A

Great

when

it's

done

and

it's

completed

successfully

you're

going

to

see

this

little

notification

of

the

top

saying

the

project

was

successfully

forked

and

actually

over

here,

on

the

left

hand

side,

if

I

refresh

now,

we

should

see

that

it's

in

there

and

it

is

brilliant.

So

you

can

click

into

your

project

and

you

can

pretty

much

close

the

screen

over

here,

because

I'm

going

to

give

you

something

else

in

a

couple

of

minutes,

I'll

give

you

the

instruction

set.

A

Thank

you,

alrighty

I,

don't

see

any

major

questions

or

chatter

related

to

it.

So

hoping

you

all

followed

along

with

those

steps

and

your

Workshop

is

now

forked

just

again

to

cover

it.

Click

on

the

link

I

gave

you

in

chat

click

on

Fork

up

the

top

right

paste

in

your

workspace

or

your

training,

environment,

string

and

click

on

four

project,

and

this

is

the

next

step

that

we

want

to

do,

which

is

actually

removing

that

fork

relationship.

A

So

to

do

that,

you're

going

to

want

to

go

to

settings

in

general,

so

settings

in

the

left-hand

bar

and

then

General

and

you're

going

to

want

to

expand

out

Advanced.

If

you

scroll

right

down

to

the

bottom,

it

should

be

maybe

second

or

third

from

the

bottom.

You

want

to

click

on,

remove

Fork

relationship.

So

let's

go

do

that

now.

A

So

again,

I'm

in

my

project

on

the

left

hand,

side

I'm,

going

settings,

General,

I'm

scrolling

down

to

Advanced,

expand

you're,

going

to

remove

Fork

relationship.

It

asks

you

to

copy

and

paste

the

name

or

that

actually

asks

you

to

type,

but

you

can

copy

and

paste

the

name

of

the

workshop

click

and

confirm

again.

This

will

take

a

couple

of

seconds

and

off

the

top.

The

fork

relationship

has

been

removed

once

you

get

to

that

stage.

Just

click

on

this

bring

you

back

to

the

project

overview.

A

I've

just

fired

it

into

the

webinar

chat

and

I'm

going

to

open

it

up

alongside

here,

and

this

is

pretty

much

all

you

should

be

working

with

for

today.

So

on

the

right

hand,

side

or

the

left

hand

side

whatever

way

you

want

to

organize

it,

but

on

one

side

have

your

instructions

and,

on

the

left

hand,

side

have

your

training

environment.

A

You

can

see

here

one

through

four

the

steps

I'm

going

to

cover

with

you

today.

Five

is

totally

optional.

That's

if

you

want

to

transfer

the

project

out

afterwards

into

your

own

environment

and

then

six

and

seven

are

completely

optional

and

they

should

be

done

after

today,

at

some

stage,

as

I

said,

you're

going

to

main

access

or

keep

access

to

the

training

environment

for

a

couple

of

days.

So

it's

good

to

get

in

and

you

know

try

out

these

extra

features.

A

So,

let's

start

with

a

very

quick

reminder

on

how

gitlab

pipelines

work,

so

pipelines

are

defined

per

project

in

the

gitlab

CI

yaml

file

and

that's

always

stored

in

the

Project's

root

folder.

So,

firstly,

what

you're

seeing

here

up

on

this

screen

in

the

columns

or

the

stages

and

in

particular

we're

seeing

the

build

test

and

deploy

stage.

A

You

can

see

them

here

so

build

test

and

deploy

and

beneath

them

you

can

see

that

they

all

have

jobs

associated

with

each

so

to

build

and

deploy

stages

both

have

one

job

each

and

the

test

job

or

sorry.

The

test

stage

has

two

test

jobs

test

a

and

test

B.

So

you

can

see,

we've

got

three

stages.

These

two

only

have

one

job

and

test

then

has

two

jobs.

A

One

thing

to

know

about

gitlab

pipelines

is

that

by

default,

all

jobs

in

the

stage

must

complete

successfully

before

proceeding

to

the

next

stage.

So

in

our

example,

here

the

build

job

in

the

build

stage

would

have

to

complete

successfully

before

the

jobs

in

the

test.

Stage

can

never

begin,

and

the

other

thing

to

know

is

that

jobs

run

independently

and

sometimes

even

on

different

runners,

meaning

simply

that

you

can

execute

as

many

jobs

at

any

one

time

as

you

have

Runners.

A

Okay,

so

we're

going

to

take

a

quick

look

at

Job

statements,

so

in

this

job

in

particular

this

one

here

that

we're

looking

at

and

named

production

that

runs

in

the

deploy

function

stage.

You

can

see

that

the

before

script

and

script

statements

are

used.

So

there's

your

before

script

and

there's

your

script

statement

and

whenever

you're

writing

a

job,

you'll

always

have

the

script.

Keyword

defined

a

script

is

actually

the

only

required

keyword

that

a

job

needs

and

without

it

the

job

just

wouldn't

have

anything

to

do.

A

In

addition

to

script,

you

can

also

have

before

script

and

after

script,

and

so

before

script

runs

before

the

script

statement,

but

it

actually

runs

in

the

same

shell.

Its

main

purpose

is

to

run

steps

that

are

necessary

in

order

for

this

script

statements

to

actually

execute

properly.

A

good

example

will

be

a

before

script.

Installing

AWS

CLI

with

script

then

executing

some

AWS

CLI

commands

like

the

before

script.

A

There

is

an

after

script

and

that's

optional

after

script

runs

after

the

actual

script

keyword,

and

it's

worth

noting

that

after

script,

statements

are

executed

in

a

separate

shell

again,

a

good

example

of

uses

for

after

script

would

be

cleanup.

So

you

can

also

I

mean

it's

worth

noting

that

you

can

actually

evaluate

the

exit

code

of

script

in

after

script

and,

have

you

know

some

sort

of

additional

job

behaviors,

depending

on

the

result.

A

Cool,

so

let's

look

at

gitlab

Runners,

a

gitlab

runner

is

an

application

that

works

with

gitlab

cicd

to

run

jobs

in

a

pipeline.

When

you

register

a

runner,

you

can

actually

add

tags

to

it

and

when

a

cicd

job

runs,

it

knows

which

Runner

to

use

by

looking

at

these

assigned

tags

tags

are

actually

the

only

way

to

filter

the

list

of

available

Runners

for

a

job.

A

Okay,

so

in

terms

of

importing

the

application,

we

don't

need

to

do

that.

That's

just

the

forking,

the

source

project

we've

already

covered

that

you

want

to

scroll

down

to

step

two

immediately

and

look

at

creating

a

simple

pipeline.

So

first

click

the

project

overview

on

the

top

left

of

the

screen,

which

for

us

here

is

just

click

on

cicd

adoption.

Workshop

bring

you

to

this

page.

That's

your

project

overview

page

and

now

that

we

have

our

project

here.

We

want

to

go

ahead

and

take

a

look

at

the

gitlab

CR

yaml

file.

A

Now,

to

do

that,

we're

not

just

going

to

click

in

here

I'm

actually

going

to

bring

you

somewhere

else.

So

if

you

click

on

build

and

left

hand

side

and

go

to

pipeline

editor

so

again,

on

the

left

hand,

side

build

and

then

pipeline

editor.

This

opens

up

your

gitlab

CI

yaml

file

in

a

place

where

we

can

make

changes

live,

which

is

fantastic

and

it

does

things

like

you

know:

you'll

be

allowed

to

visualize

the

changes

you're

making

validate

the

syntax

that

you're

using

and

stuff

like

that.

A

So

I

like

to

recommend

using

the

pipeline

editor

for

this

stuff

and

cool,

so

you're

going

to

go

to

build

pipeline

editor

and

you'll.

Have

this

open

in

front

of

you

notice

that

we

have

a

simple

pipeline

already

defined?

We

have

two

stages,

build

and

test

which

you

can

see

up

here.

We've

defined

two

stages,

as

well

as

a

build

app

job,

which

is

this

guy

here

and

also

a

unit

test

job,

which

is

this

guy.

A

So

in

the

unit

test,

job,

which

is

this

one

down

the

bottom

here-

we

want

to

use

the

after

script

keyword

to

Echo

out

that

the

build

is

completed

so

first

to

edit

the

pipeline.

We

need

to

go

to

the

pipeline

editor

we're

there

already.

That's

fine,

and

essentially

what

we

want

to

do

is

add

in

this

after

script

keyword

and

it's

going

to

Echo

build

that

job

as

run.

You

have

two

ways

you

can

do

this.

A

You

can

pick

this

guy

off

by

copying

him

directly

here

and

pasting

below

the

the

script

section

make

sure

your

indentation

is

correct,

or

else

it

won't

work.

That's

one

way

of

doing

it.

The

other

way

of

doing

it

is

you

can

just

copy

this

whole

block,

because

it's

saying

your

new

unit

test

job

should

look

like

this.

You

can

see

the

after

script.

A

Keyword

is

included

and

you

can

just

replace

the

existing

block

with

that

block

either

or

will

do

the

job

once

you've

added

the

code,

you

can

go

ahead

and

click

on

Commit

changes

which

I'm

going

to

do

right

now,

and

it

should

immediately

trigger

the

pipeline

to

build

if

no

issues

were

detected

in

yaml

file,

which

they

shouldn't

be

and

for

troubleshooting.

You

can

use

a

validate

tab

to

see

when

your

pipeline

is

broken.

It's

it's

very

handy.

It's

this

guy

here

and

doing,

and

you

can

see

exactly

what's

happening.

A

You

can

see

that

the

simulation

for

our

example

completed

successfully

without

no

issues,

definitely

something

we're

playing

with

later

on

cool.

So

what

we

want

to

do

is

actually

go.

Look

at

that

change,

that

pipeline

running

for

a

change

so

we're

going

to

go

to

build

and

Pipelines

you

see.

Mine

is

still

running.

That's

okay,

I'm

going

to

click

onto

it!.

A

Alrighty

so

we've

gotten

our

simple

pipeline

set

up

and

we've

added

in

that

after

script

for

a

unit

test

job

we're

going

to

go

back

and

look

at

that

a

little

bit

later,

but

right

now

we're

going

to

talk

about

a

slightly

more

advanced

scenario,

with

job

execution

order

and

dags.

When

I

say

dags

I

mean

directed

acyclic

graphs,

as

you

can

imagine,

dags

is

a

lot

easier

to

say.

A

So

let's

talk

a

little

bit

about

execution

order

and

what

happens

by

default.

So

by

default

in

our

simple

pipeline

that

we

created

and

all

of

the

jobs

in

every

stage,

most

complete

successfully

before

the

next

stage

begins

no

jobs

execute.

Unless

all

the

preceding

jobs

are

successful

and

once

a

job

fails,

the

pipeline

execution

stops

completely

and

subsequent

jobs

will

not

be

executed.

So

in

our

example,

let's

imagine

buildup

fails

build

that

job

in

the

build

stage.

A

A

What

about

if

we

want

to

adjust

and

the

execution

order

of

a

pipeline

to

increase

efficiency,

so

the

pipeline

graph

shows

us

pipeline

stages

and

jobs.

That's

this

guy

over

here,

we've

just

seen

it

in

DUI

and

in

terms

of

execution

order.

In

this

scenario,

let's

imagine

that

the

QA

team

added

this

code

quality

job

to

the

test

stage.

A

It

makes

perfect

sense

that

this

job

should

be

added

to

the

pipeline,

but

one

drawback

is

that

it

means

that

by

default

it

can't

start

until

the

build-up

job

in

the

build

stage

completes

in

an

ideal

setup.

What

we'd

actually

like

to

achieve

is

that

these

jobs

run

in

parallel.

You

don't

want

the

core

quality

jobs

sitting

around

waiting

for

build

app

to

complete

before

it

can

start

because

they're

separate

separate

jobs,

separate

purposes,

and

if

we

can

do

that.

Obviously

it'll

boost

the

speed

at

which

the

pipeline

can

complete

and

increase

efficiency.

A

So

how

do

we

do

that

and

kind

of

an

adjustment

on

the

job

execution

order

and

we're

going

to

look

at

how

you

can

do

it

by

using

the

needs?

Keyword,

the

first?

Actually,

while

we

were

talking

about

code

quality,

it's

worth

mentioned

in

gitlab,

those

actually

have

a

call

Quality

template

and

they

can

also

be

used

and

will

cover

towards

the

end

of

today's

session,

how

those

templates

work

and

how

you

can

use

them.

It's

good

to

know

that

we

actually

offer

one

there

that

you

can

all

use

in

your

Pipelines.

A

So

now

you

can

see

at

the

end

of

this

code,

quality

job

snippet

that

we

have

here

you'll

see

the

needs.

Keyword

has

been

added

to

the

end

line,

and

this

keyword

allows

us

to

specify

which

other

jobs

need

to

be

run

before

this

job

can

start.

I

believe

in

this

needs

keyword,

value

empty,

we're

actually

asserting

that

this

code

quality

job

can

be

run

as

soon

as

the

pipeline

begins.

It

doesn't

need

anything

else

to

complete

it,

fires

up

straight

away,

and

it

can.

A

So

that's

the

needs

keyword

in

a

very

basic

setup.

You

can

actually

get

really

complicated

with

the

needs

keyword

if

you

want

to

or

Advanced

I

should

say

any

pipeline

that

has

more

than

tree

needs

in.

It

will

also

generate

what

we

call

a

directed

acyclic

graph.

A

dag

a

die

can

be

used

in

the

context

of

a

CI

CD

pipeline

to

build

relationships

between

jobs

such

that

execution

is

performed

in

the

quickest

possible

manner,

regardless

of

how

many

stages

may

be

set

up.

A

So

in

the

example

that

you

can

see

here

using

dike,

you

can

actually

relate

the

a

jobs

separately

from

the

B

jobs.

So

even

if

servers

a

has

taken

a

very

long

time

to

build

service,

B

doesn't

wafer

and

finishes

as

quickly

as

it

can.

A

good

example

of

this.

A

good

way

to

to

to

describe

it.

I

guess,

would

be

the

development

of

an

application

that

would

be

destined

for

Bose,

Android

and

iOS

platforms.

So

you

know

the

test.

A

It

is

worth

noting

another

use

case

that

actually

creates

a

stateless

pipeline.

That's

called,

and

essentially

you

can

build

a

stages

pipeline

where

you

declare

needs

on

every

single

job

in

general.

We

don't

recommend

it

as

it's

much

clearer

and

easier

to

follow.

If

you

just

do

the

jobs

by

category

settle,

but

it

is

worth

noting

that

it's

there

as

an

option

and

and

if

you're,

in

a

position

where

you

could

express

your

pipeline

100

by

only

using

the

needs

keyword,

it

can

increase

efficiency.

A

But,

as

I

said,

it's

not

really

our

go-to

okay

cool

time

first

stay

step,

two

in

our

Hands-On,

so

execution

order

and

dice

I'm

going

to

jump

back

over

here.

Hopefully

our

pipeline

is

finished,

running

quick,

refresh

yeah.

It

has

cool.

So

you

might

remember

in

our

last

step

we

edited

this

unit

test

job

to

include

an

after

script.

A

A

A

You

should

still

be

on

the

pipelines

page

we've

navigated

away

from

this.

So

that's

all

fine.

Basically,

what

we

want

to

do

here

is

go

back

to

our

pipeline

editor.

So,

on

the

left

hand

side,

if

you

can

click

on

build

and

go

back

to

pipeline

editor.

We're

going

to

go

back

in

here

now

make

a

couple

more

changes.

A

A

Now,

what

we've

done

here

is

we've

said

that

the

unit

test

job

needs

no

other

job

to

complete

to

start

running

and

cold

quality

job

needs,

no

other

job

start

running,

so

to

start

our

pipeline

when

it

kicks

off.

These

should

kick

off

straight

away

and

and

we'll

see

that

now

in

a

moment

again

I

added

lines

in

line

by

line.

If

you

want

to

just

copy

the

block,

you

can

do

it

here.

You

can

say

it.

Look.

The

new

unit

test

job

should

look

like

this.

A

Now

we're

going

to

use

the

left

hand

menu

again

to

go

to

build

and

Pipelines

and

mine

is

pending,

it's

probably

just

pending

for

an

available

Runner,

so

our

Runners

are

kind

of

scaled

for

these

training

environments

that

might

take

a

moment

to

have

something

available

to

actually

run

it.

For

me.

Okay,

it

started

now.

You

can

see

the

jobs

in

my

test

stage,

aren't

waiting

for

my

jobs,

my

bill

stage

to

run

anymore

or

to

complete

anymore,

because

we

had

added

those

empty

needs.

A

A

A

You

can

see

that

the

first

change

we're

making

here

is

we're

adding

in

the

new

stage,

so

we're

actually

adding

a

deploy

stage.

I'm

just

going

to

type

it

in.

You

can

copy

and

paste

this

block.

If

you

want

now

all

of

these

jobs,

we

want

to

add

in

underneath

our

code

quality

job,

and

these

are

all

going

to

generate

the

dike

that

we're

talking

about.

You

can

see

they're

all

super

simple

jobs,

but

what

you

do

see

as

well

is

that

their

needs

keywords

are

defined.

A

A

Okay,

now,

if

you

click

on

the

visualize

tab,

you

can

see

just

how

complex

the

many

stages

are.

So

you

remember

earlier

I

said

that

we

have

this

visualize

and

validate

type

they're,

so

helpful.

If

you

go

into

the

visualize

one

you're

going

to

see

this

relationship

all

show

off

now,

you'll

see

exactly

the

okay.

Deployee

actually

needs

test

e,

which

needs

building

and

so

on

and

so

forth.

So

that's

a

really

nice

way

of

looking

at

it.

Now

you

can

go

back

to

edit

and

click

on

Commit

changes.

A

Foreign

once

you've

committed

those

changes,

you

want

to

go

to

build

Pipelines,

and

you

can

see

here.

My

latest

one

is

running

I'm

just

going

to

click

into

it.

This

way

now

there's

a

couple

of

ways.

I

can

show

you

this.

You

can

see

here

on

this

default

view.

It's

grouped

jobs

by

stage,

so

you've

got

build

test

and

deploy.

A

You

can

also

group

it

by

dependencies,

which

shows,

especially

if

you

click

on

show

dependencies,

we'll

show

you

that

build

B

needs

test,

b

or

sorry

deploy,

B

needs,

test,

B

needs,

build,

B

and

so

on

and

so

forth.

The

other

way

of

looking

at

it

I'm

just

going

to

revert

that

back

to

the

way

it

was

is

by

actually

clicking

on

this

needs.

A

A

Okay,

folks,

we've

an

hour

done

and

I

think

it's

a

good

time

for

us

to

take

a

quick

break

by

my

time.

It's

two

minutes

to

the

hour.

So

let's

meet

back

at

five

past,

so