From YouTube: CMS Research for Continuous Integration

The statement for this goes as follows: "When joining a pipeline for a repository, I want to monitor the performance of jobs or tasks in the pipeline, so I can be aware of delays and respond to failures." All right. So what we did after selecting the jobs to be done (JTBDs) was translate them into scenarios, and then we put each of those scenarios into a GitLab project. These are the two scenarios/projects that we had ready for the research activity.

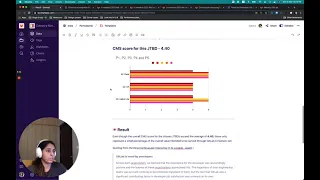

Okay, now talking about the score we achieved: the overall CMS (Category Maturity Scorecard) score for this particular scenario was pretty high. It was 4.5, which means participants found the task provided to them pretty convenient and easy to perform. Next, how the participants reacted to the three different sets of questions we had put forward. The first question was about the ease of performing the task.

Through this question we asked about the more holistic view they had of the scenario we provided: whether or not they thought the scenario had all the functionalities in place that they required while performing their tasks. All the responses stayed in the zone of four to five. Okay, so this was the first JTBD; now moving on to the next one.

Then, talking about the overall user experience (UX): five out of five participants rated it as four, which means there may be a little room for some work to be done there. That's good to know. And finally, for the UX flight: at least two of the five participants felt very strongly about the functionalities we provided in the scenario for them to perform the task. Okay, so this is the overall score that we have here.

These features, the functionalities that come under these JTBDs, represent only a very small fraction of the overall value that we intend to provide our users through the GitLab CI feature set. Now, quoting from the blog post by Jackie that you will find in Unfiltered: I have included some portions of it here just to highlight them. We already know that GitLab is loved by developers, and this research activity kind of proved it. But there are other areas in Verify that we also need to consider, and those are the places where we need to improve to make GitLab CI lovable, to really move towards Lovable. So, considering the listed factors, I've also included two other insights from this article.

The first one says: GitLab has some challenges with the performance of minimal viable changes (MVCs), and they're expected to work at a higher finish. If you want to read further, you can go to this blog. The other insight is: GitLab's visibility into jobs at scale is painful. That means we may not be catering well enough to this persona, Dakota, as documented in the GitLab roles and personas handbook page.

So, considering these things, we have decided that while we really appreciate the results from the activity, and we are very excited about having achieved such high scores here, it should be safe to say that GitLab CI is still at Complete, and we have some work to do before we can move it to the Lovable state. All right.

You can follow this link, "What's next and why". It will take you to a handbook page that gives a glimpse of what's upcoming: the next thing we want to work on, and why we have chosen that area to work on. All right? So these were the findings from our CMS research for CI. Feel free to leave your thoughts, and even ping me on the issue or on Slack if you want to comment on the whole process. Thank you.