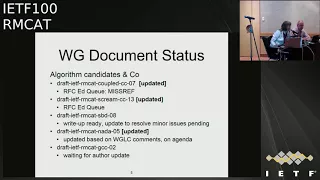

From YouTube: IETF100-RMCAT-20171115-1330

Description

RMCAT meeting session at IETF100

2017/11/15 1330

https://datatracker.ietf.org/meeting/100/proceedings/

A

For the working group document status: we now have two of our candidate algorithms in the RFC Editor's queue. The coupled congestion control draft is there waiting for the NADA draft, because there is a normative reference. So once NADA is completed, the coupled CC draft will proceed; that one is ready. The SCReAM draft is also in the RFC Editor's queue. For shared bottleneck detection, the write-up for that draft is ready. During that review there were a few nits that David will fix.

A

So we expect to ship that next week. The NADA draft got a number of comments during the working group last call, so we had an update of it following that feedback, and Xiaoqing will present the updates and new evaluation results as part of this meeting. As for the Google congestion control, that draft is still pending author updates, so I guess we will have to see what happens with that one.

A

A

Last call: what is holding that up is that we wanted to ship it together with the eval criteria, and there we are waiting for a small update on the TCP model. That draft has been stalled in that status for a bit, so we are trying to push the authors to get that in; if not, we may have to take another approach to move the documents forward. I think Varun was saying he was hoping to put out an update this week, so we will see what happens.

Otherwise, I think we have to put a deadline on that draft and maybe see if we can get another editor in to help complete them. I think it would still be useful to have them go together, because they are so tightly coupled, but it is a small update that is missing in one of them, and the status has been the same for quite some while, so we would like to get those documents moving.

A

Then we have the wireless test draft and the video traffic model, and those documents are also basically ready. I think Xiaoqing will maybe also say something about the video traffic model today. We need some reviews and implementation experience. We discussed all of these drafts last time.

A

A

With that, the draft follows as a supporting document. Then we had the different drafts related to the interfaces. We had those up for discussion at our last meeting, and the conclusion was basically that the working group thought that the codec interaction draft and the framework draft could still be useful. Varun and Zahed had an action point to go through these drafts and come back to the working group with a suggestion on how to proceed.

D

On the framework draft, there was a comment that it needs to be in line with the RTP topologies RFC. I have reviewed the framework draft again, as a co-author, and I didn't really find that there is so much that needs to be updated there. The question here is more about how you align the CC codec interaction draft with the framework draft.

D

My opinion is that it is just a matter of figuring out whether, basically, it needs to be one draft or two drafts. Having said that, Varun and I didn't manage to find the time to actually do that, but I hope we can do it soon. Otherwise the framework draft especially, I think, is in pretty good shape, actually.

E

Thank you. So, for the framework draft, maybe one thing I should mention is that we are open sourcing the ns-3 RMCAT code, and that code kind of provides a reference implementation of, let's say, our understanding of the framework, so it can serve as a reference as well. And as Zahed mentioned, the previous comment on aligning the terminology is something we can address, but it is more about finding the time to do it. Yeah.

A

D

Well, I cannot speak for Varun, but it has been, I mean, lack of time, basically. My approach would be: if the framework document really is ready, and you have a reference implementation for it, then perhaps we should deal with them separately. As for the framework document, one of the things pointed out in previous meetings is that none of the candidate algorithms currently follows the framework document. But, yeah.

D

I think the reasoning here is that when you go on the standards track, then the framework document will be used. So maybe we should deal with it separately, because I'm not actually sure right now how much the CC codec and framework documents need to be aligned. I'm not sure about it, but Varun has a stronger opinion than me, so maybe it is better that I talk with Varun.

D

A

Yeah, sounds good. And as you said, the current drafts do not follow the framework, so that will come in the next step, hopefully. In that sense it is also not extremely critical at this time, because we are not at that point yet. But it would be good to have a plan for how we proceed with them. So yeah, that sounds good. Okay.

A

So, our milestones. We have now completed a few of the ones that we had in the summer. The coupled congestion control draft was shipped just before our previous meeting, so that completes that milestone, or at least partly, and with the SBD draft hopefully being shipped next week, that milestone will be fully completed.

A

The feedback requirements, sent to AVTCORE, have also been completed. The one that we are a little bit behind with now is the requirements and evaluation draft. So maybe we will update that date, but we should try to get that out. We will see how we put deadlines on updates, and then look for other ways to proceed if it cannot be done.

D

A

A

E

E

Yeah, great. So I'll give an update on the NADA draft, and actually the next page gives a brief outline. The updates cover several aspects. We have some updates where we are still, you know, tweaking the algorithm, but mainly isolated to the loss-based behavior and that sort of scenario. Then, accompanying the draft and the algorithm design, there is the open source code, so I'll give a status update on the open source code for ns3-rmcat. That is where the NADA reference implementation is. I'll also briefly mention some updates on the syncodecs open source code, which is more tied to the video traffic model draft. As the chair mentioned, we do have a slide to cover that.

Finally, with these updates, I'll show a selection of updated evaluation results. We have the full set ready to be shared with the working group on the mailing list, but I didn't want to bore you guys by flipping through 50 slides, so I picked a few of the more representative ones where the changes are reflected. And finally, there is a summary of the draft changes from -04 to -05, and also some of the, I would say, planned changes for another revision, because of some more recent review comments we have received over the mailing list.

Next page, please. For the algorithm updates, we were motivated by the issue reported by Julius Flohr on our mailing list. He reported the problems he encountered with his own OMNeT++ implementation of the NADA algorithm: for low link rate scenarios, NADA seems to be pushing away his other loss-based TCP flows. That motivated us to revisit that part of the algorithm and hopefully do a better job of coming up with more robust behavior when NADA is competing against other loss-based flows.

E

So for the changes we made in the algorithm, we actually revisited the entire flow. First off, we tweaked our decision on whether to get in and out of loss-based mode. Basically, we adopted a self-adapting threshold, so that it can sort of self-scale for different link capacities. Secondly, for this transition, and this is more of a technical tweak, we also added a linear interpolation for when our algorithm is getting in and out of loss-based mode, you know, switching between one mode and another. Because the congestion signal is calculated following two different sets of equations, when we are doing the mode selection we added a linear interpolation in between, to ensure a smoother transition of the reported congestion signal.
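The smoothing step described above can be pictured with a small sketch. It is only an illustration under stated assumptions: the function name, the ramp length, and the idea of ramping a blend weight over a fixed number of feedback intervals are hypothetical and are not taken from the NADA draft.

```python
def blended_congestion_signal(x_delay_mode, x_loss_mode,
                              steps_since_switch, ramp_steps=4):
    """Linearly interpolate between two congestion-signal values.

    x_delay_mode: signal computed with the delay-based equations.
    x_loss_mode:  signal computed with the loss-based equations.
    ramp_steps:   hypothetical number of feedback intervals over which
                  the switch into loss-based mode is spread.
    """
    if ramp_steps <= 0:
        raise ValueError("ramp_steps must be positive")
    # The blend weight grows from 0 to 1 as the transition progresses.
    alpha = min(max(steps_since_switch / ramp_steps, 0.0), 1.0)
    return (1.0 - alpha) * x_delay_mode + alpha * x_loss_mode
```

Halfway through the ramp the reported value sits midway between the two inputs, so the sender never sees a step change caused purely by the mode switch.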

E

The interpolation avoids some of the radical changes in the sender's adaptation. Then, finally, our loss penalty function: in previous versions, we followed a linear mapping between the observed packet loss ratio and the corresponding delay penalty term. In this version, we have changed to a quadratic one. After some experimentation, we find that a quadratic mapping between loss and the corresponding delay tends to hold back the NADA flows at lower link rates. That was also basically motivated by making NADA work at lower link rates without starving other flows.
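To make the difference between the two mappings concrete, here is a minimal sketch. The reference values (a 10 ms penalty at a 1% loss ratio) and the function names are illustrative assumptions, not parameters quoted in the talk.

```python
def linear_loss_penalty(p_loss, d_ref_ms=10.0, p_ref=0.01):
    """Delay penalty (ms) growing linearly with the loss ratio.
    d_ref_ms and p_ref are assumed reference values."""
    return d_ref_ms * (p_loss / p_ref)


def quadratic_loss_penalty(p_loss, d_ref_ms=10.0, p_ref=0.01):
    """Delay penalty (ms) growing with the square of the loss ratio.
    Same assumed reference values as the linear version."""
    return d_ref_ms * (p_loss / p_ref) ** 2
```

With these values the two mappings agree at the reference loss ratio. Above it the quadratic term grows faster, so heavier loss translates into a larger penalty and a more conservative sending rate; below it the quadratic penalty is smaller than the linear one.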

So these are the main changes, and on the next slide I'm going to explain a little bit more about this self-adapting threshold. In the previous version of the draft, we simply picked a kind of expiration time. It is a fixed threshold for considering whether the flow has observed losses: if the flow no longer observes losses within a given period of time, it will try to get out of loss-based mode.

E

That obviously needs to be tuned for different link capacity environments, because the TCP sawtooth will have different widths for different bandwidth-delay products. So in draft -05 we adopted the calculation used by TFRC for an average loss event interval, and we use that as the basis for a threshold that now self-adapts with this measured average loss interval. This makes the algorithm a little bit more self-scaling, as we will see in the results. Then, of course, to be a bit more tolerant of missing a loss event or two, we add a constant multiplier. So there is still a parameter to be tuned, but it is much less sensitive: typically, we can stay with the same constant multiplier and it works with a wide range of bottleneck link bandwidths.
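A rough sketch of the self-adapting threshold follows. The weights are those of the TFRC average loss interval (RFC 5348); everything else is an assumption made for illustration: the function names, the multiplier value, the packet-count unit, and the simplification of ignoring TFRC's handling of the still-open interval.

```python
# TFRC weights for the eight most recent loss intervals, newest first.
TFRC_WEIGHTS = (1.0, 1.0, 1.0, 1.0, 0.8, 0.6, 0.4, 0.2)


def average_loss_interval(intervals):
    """Weighted average of recent loss intervals (newest first).

    Returns None when no loss interval has been observed yet.
    """
    recent = list(intervals)[:len(TFRC_WEIGHTS)]
    if not recent:
        return None
    weights = TFRC_WEIGHTS[:len(recent)]
    return sum(w * i for w, i in zip(weights, recent)) / sum(weights)


def stay_in_loss_mode(since_last_loss, intervals, multiplier=3.0):
    """Stay in loss-based mode while the time since the last loss is
    within multiplier * average loss interval.

    multiplier is a hypothetical value; it adds tolerance for missing
    a loss event or two.
    """
    avg = average_loss_interval(intervals)
    if avg is None:
        return False
    return since_last_loss <= multiplier * avg
```

Because the threshold is a multiple of the measured interval, a link where losses arrive every 100 packets and one where they arrive every 1000 packets are handled by the same multiplier, which is the self-scaling property described above.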

To illustrate this, on the next slide I'm showing the experiment from test case 5.6 in the RMCAT eval test draft, but with the bottleneck capacity changed. On the left, you see the result where NADA, the blue flow, is competing against TCP, the purple one, at a shared bottleneck of 1 Mbps, and on the right, at a shared bottleneck of 10 Mbps, where the TCP rate is sort of out of range for this plot.

Now, in the high bandwidth case, ideally the total link bandwidth could have accommodated a NADA flow at, you know, its maximum source rate without backing off at all, with TCP on top of it. But NADA is still reacting to losses as a measure of congestion, ever so slightly, so it backs off very occasionally, as you can see in this graph. So we don't quite get to operate at our max, and that is also because of the sawtooth widths.

E

So that is the main study for improving the robustness of NADA in the loss-based flow competition environment. Next page. Now, the other thing we changed, in terms of our evaluation approach for the NADA congestion controller, is somewhat tied to the traffic source we use for driving it. Previously, all our reported experiments were mainly based on what we call the perfect codec. Basically, it mimics a CBR-like codec. But then, when experimenting with this and TCP-based flows, we realized that with video codec behavior, one main thing is that for a given fixed frames-per-second setting, that is, for a given frame interval, the flow at least has some quiescence on a periodic basis.

So we added a new traffic source type in our syncodecs implementation, which is more tied to the video traffic model draft, where at very low rates, instead of having a very wide packet interval, we still have a fixed frame interval. Also, based on the traffic data analysis we presented previously at IETF meetings, we added some random perturbations to the frame intervals. We believe this new, so-called fixed-FPS codec is a somewhat more realistic model of video traffic behavior.
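As a rough illustration of a fixed-frame-rate source with perturbed frame intervals, consider the sketch below. It is not the syncodecs implementation: the function name, the uniform jitter model, and the 10% jitter bound are assumptions chosen only to show the idea.

```python
import random


def fixed_fps_frames(rate_bps, fps, duration_s, jitter=0.1, seed=0):
    """Generate (timestamp_s, frame_bytes) pairs for a synthetic source.

    Frames are emitted at a nominal interval of 1/fps, each interval
    perturbed uniformly by up to +/- jitter (a hypothetical model).
    The frame size follows from the target rate, so a lower rate means
    smaller frames rather than frames spaced further apart.
    """
    rng = random.Random(seed)
    nominal = 1.0 / fps
    frame_bytes = int(rate_bps / 8 / fps)
    frames = []
    t = 0.0
    while t < duration_s:
        frames.append((t, frame_bytes))
        # Perturb the gap to the next frame around the nominal interval.
        t += nominal * (1.0 + rng.uniform(-jitter, jitter))
    return frames
```

For example, `fixed_fps_frames(1_000_000, 25, 2.0)` yields 5000-byte frames roughly every 40 ms over two seconds.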

For all our test case evaluations, as you'll see later, we use this one as the default, but of course our implementation easily supports switching between different codecs. The other one we added is the hybrid codec, for which most of the explanation is in the video traffic model draft. Basically, it combines traces collected from realistic encoding experiments with some statistical modeling of the transient behavior. So that is another one that we added there.

E

The current video traffic model draft has already described this, and now our syncodecs implementation is catching up. So let me just insert a brief status update for the traffic model draft. To our understanding, there are no more outstanding issues or to-dos. I think we have basically completed all the things we wanted to cover, so that draft should be ready for further review and maybe working group last call. But we are not getting enough reviews. The code is of course available online.

Next page. So now, getting back to the other open source code, the ns3-rmcat code. We presented its structure and how to use it, more in a forecast manner, at our last interim meeting, and now, finally, we are happy to report that we are ready to release the code. I just checked the link before joining the presentation: my colleague Sergio has already released the code, so the link is live now.

A

E

We have provided two examples. One is the dummy controller, where you can configure the sending rate explicitly: the controller just sends at whatever rate you configure, and you can change it on the fly. The other is a controller that more or less follows our draft description, up to version -05, literally. Hopefully, with these two controllers you can get the idea and write your own controller. We have designed the code so that, we think, it should be easy to add and try out your own, and we encourage people to give us feedback on that.
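The idea of a pluggable controller with a trivial fixed-rate example can be sketched as follows. This is not the ns3-rmcat API (which is C++); the class and method names here are hypothetical and only show the shape such an interface might take.

```python
class Controller:
    """Hypothetical minimal congestion-controller interface."""

    def on_feedback(self, delay_ms, loss_ratio):
        """Consume one feedback report from the receiver."""
        raise NotImplementedError

    def target_rate_bps(self):
        """Return the rate the sender should currently use."""
        raise NotImplementedError


class FixedRateController(Controller):
    """Dummy controller: sends at whatever rate was configured."""

    def __init__(self, rate_bps):
        self._rate_bps = float(rate_bps)

    def set_rate(self, rate_bps):
        # The rate can be changed on the fly, e.g. from a test script.
        self._rate_bps = float(rate_bps)

    def on_feedback(self, delay_ms, loss_ratio):
        # Feedback is deliberately ignored by the dummy controller.
        pass

    def target_rate_bps(self):
        return self._rate_bps
```

A new algorithm would subclass `Controller` and update its rate inside `on_feedback`; the dummy version is useful mainly for checking the surrounding plumbing.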

Also, in this reference implementation we have support for both the wired test cases and the Wi-Fi test cases, that is, Section 4 in the wireless test case draft. The missing part is cellular; we need some additional support for that one. There's a question. Should I... yeah.

F

E

E

So, the next page. Now I'll just flip through, bear with me, a few slides of updates on the results. This one shows test case 5.1, you know, the one we always try first, and I just want to show how it works for different traffic sources: the CBR-like one and the fixed FPS one. The name is a little bit misleading: it is not strictly fixed, it is really fixed with perturbation.

Next page. These are the behaviors when we have the hybrid codec, where the frame sizes come from recordings of encoded frame sizes, so sometimes the codec output is lower than what is specified. The one on the right is for content sharing, on which there was some discussion early on, I think, on the mailing list. It attempts to mimic the behavior of slide sharing: occasionally there is this big frame; otherwise, the frame sizes are very small.

Next page. This is the case where I'm showing the before and after for test case 5.2, as we reported in the previous meeting. Previously, our loss-based behavior was still fairly fragile, so in this case the flow actually got lost in loss-based mode. After our tweaking of the loss-based mode, we now get a better result.

E

E

As you can see, in this new version we still converge to the same rate, regardless of the different propagation delays each flow experiences end to end. The flip side is that the sending rates are not as smooth as before, because now we have this fixed FPS traffic source, and it also takes a bit longer to converge. Next one.

E

So those are all the evaluation results I'd like to share during the presentation. I'll be happy to send a PDF document to the mailing list with the full collection of results for people to review. To summarize the draft changes from -04 to -05, which are the changes we have already made: the main changes are really in updating the algorithm description, as I just explained, for the loss-based behavior, and I believe we have also addressed some of the review comments we got from the working group last call reviews.

Going forward, we have more recently collected some additional review comments from Ronan, and Michael has also pointed out some mistakes in our use of normative versus informative references. So we basically intend to collect the additional issues that we are aware of, update the draft to fix those, and also update Section 7 on implementation status.

E

E

E

Okay, if the people in the room can still see the slides: I have a local copy, so I can keep talking, if you don't mind. Basically, for the draft, these are the things we plan to update pretty soon, probably even during the course of IETF 100. We can update the draft because these are things we already have information on.

E

B

E

But I don't see this... okay, great. So the slides are back. It's a live test, and, you know, like all live systems... Sorry for the glitch in between. Basically, I was trying to mention that we can complete all our planned changes very quickly, because these are things we already have sufficient information on. Maybe my question back is: what happens after we submit -06, which I'm expecting by, say, the end of this week? We should be able to update by then. Of course we want people to review the updated changes, but process-wise, do we need to go through working group last call again, or can we rely on the reviewers who have provided feedback before to double-check the changes we have made?

Then, assuming the draft goes well, what we want to do in parallel, as a next step, is to start thinking about real-world experiments, moving beyond simulation. We previously collaborated with Randell a little bit and got some of his help in trying out NADA in Mozilla browsers, but we kind of dropped the thread, you know, due to time constraints. Now that the simulated version of the code is out, we feel we can come back and look at experiments. So that is what we want to focus on: experimenting with embedding NADA in the Mozilla open source browser.

E

A

E

E

F

John Lennox. I guess this is sort of related to my previous question. In your plans for experimenting in the Mozilla browser, are you planning to implement CC feedback there, or is there some other mechanism? I know they have various other preliminary mechanisms. What do you plan to use for the feedback in your Mozilla experiment, or don't you know yet?

E

My understanding is that, basically, for our algorithm to work, we need per-packet information, or at least at the receiver we need per-packet observations of delay and packet loss. We may need to look into whether the current feedback mechanism already supports that, or whether we need to add some receiver-based operations. Yeah.

F

A

E

C

G

Okay, okay, good. This presentation is about a SCReAM experiment with an application that is sort of outside the original intent of the RMCAT working group: we are using it to remote control vehicles. In the picture you can see a robot. Students at Luleå University of Technology sort of just took this SCReAM multi-camera and control software and put it on a robot chassis with the remote control. We had a lot of fun with it, and the intention is that in the long run it will end up in a mining truck, which is a more serious matter, so it is not just fun.

I can take the next slide. I guess everybody knows what this is about: it is about getting away from a crappy video image when you want to remote control a car or something, for whatever reason you want to do it.

G

G

You need to have several cameras in order to get an almost lifelike driving experience, and the video quality is important too. That results in peak bitrates that can exceed 30 megabits per second. Even though LTE and 5G deployments can guarantee a higher uplink bitrate, the reality is that sometimes you end up in congested cells, or in bad coverage, or in a combination of the two. So any sort of remote control operation needs to be rate adaptive; that is quite obvious. And you can do some enhancements to improve performance.

G

You can densify the network, and that is an ongoing process all the time. You can add quality of service: if you have a commercial application, you can prioritize it, using another bearer with higher priority for your remote control application. And you can also add explicit congestion notification, to avoid packet loss due to congestion. But what I'm going to present is without the two latter bonuses. In the better cases it works, but when it doesn't work you do not want to drive the car. Okay, you can take the next slide.

What you do is add the congestion control on the sender side and the feedback on the receiver side. That is it in this case.

G

G

If you want to do retransmissions, you use the normal RTP retransmission mechanism. SCReAM acts on packet loss as well as delay, and also on ECN, if ECN is enabled in the network. The RTP packets can be temporarily queued up in the sender: you don't send more data than what is actually acknowledged by the network, and that means you can have some queuing delay on the sender side as well.
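The self-clocking behavior described above, where packets wait in a sender-side queue until acknowledgements free up room, can be sketched like this. It is a simplified illustration, not the SCReAM implementation: the class name and the fixed congestion window are assumptions, and the real algorithm also adapts the window from delay, loss, and ECN feedback.

```python
from collections import deque


class SelfClockedSender:
    """Releases queued packets only while bytes in flight fit the window."""

    def __init__(self, cwnd_bytes):
        self.cwnd_bytes = cwnd_bytes  # assumed fixed for this sketch
        self.bytes_in_flight = 0
        self.queue = deque()  # sizes of RTP packets waiting in the sender

    def enqueue(self, size_bytes):
        """Media packets from the encoder are queued, not sent at once."""
        self.queue.append(size_bytes)

    def send_ready(self):
        """Send as many queued packets as the window currently allows."""
        sent = []
        while (self.queue and
               self.bytes_in_flight + self.queue[0] <= self.cwnd_bytes):
            size = self.queue.popleft()
            self.bytes_in_flight += size
            sent.append(size)
        return sent

    def on_ack(self, acked_bytes):
        """Acknowledged bytes leave the network and free up the window."""
        self.bytes_in_flight = max(0, self.bytes_in_flight - acked_bytes)
```

Whatever does not fit in the window stays in `queue`, which is where the sender-side queuing delay mentioned above comes from.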

It has been developed since 2014, and it is actually now, lo and behold, in the RFC Editor's queue, after a long wait. It has been a long journey, but it is hopefully soon finished. You can congestion control all sorts of media that is packed in RTP: it can be video, audio, haptics, Motion JPEG, and all the still image stuff. But it is mostly only video that has been tested that much. And it lets us try out multi-stream handling and prioritization.

G

G

Ongoing work: I am currently working on L4S support, which is almost done and will be pushed up to the SCReAM GitHub when it is done. And there will be a student project that will integrate SCReAM in a GStreamer plugin.

Okay, next. The feedback: it is not the proper feedback format. It is sort of a home-grown feedback based on XOR, and it also reports the accumulated size of ECN-marked bytes, and adds an ACK vector and a timestamp. If you have many streams, you send one or more RTCP feedback packets for each stream, and that costs a little bit, or you can say there is some RTCP overhead, even if you bundle the RTCP packets.

And the most challenging work has actually not been the network congestion control. If I were to count the number of hours spent on this, roughly eighty percent has been spent coping with the varying bitrate from video coders: keyframes, large bitrate variations, and also the case that the quantizer is only changed at keyframe intervals. So if, in the middle of a group of pictures, you want to change the bitrate, there is no guarantee that the bitrate will go down or up. And often hardware video coders, video coders in IP cameras, have very limited tuning capabilities. That has had a large impact on the design of the congestion control: there are additional checks and balances in the code.

G

Take the next slide. On the sender side here, I used IP cameras of the Panasonic brand. In order to make them stream RTP, you actually implement an RTSP client for each camera, take the RTP stream, and make it go through the SCReAM sender, where everything is multiplexed.

H

One quick question on the graph on the previous slide. I take it you're running the video encoders with fixed-interval I-frames, and I would presume that is because you're not handling error recovery via other mechanisms like retransmission and so forth. Is that true? Yeah.

G

H

G

G

Okay, yeah. Since we are always talking about congestion control: the complexity is quite modest. Currently it runs on a Raspberry Pi 3, and it consumes something like five to ten percent of the CPU. I don't have the exact figures, because the Raspberry Pi implemented a VPN tunnel as well, so it was below about 20% when running at higher bitrates. I had the students doing that work for me.

G

G

But

you

need

to

also

totally

enforce,

with

priority

rate

adjustments

to

make

it

work,

and

that

is

because

there

are

video

coders

that

you

cope

with

and

some

sometimes

don't

we'll

give

you

a

median

code

or

insider.

Even

though,

if

you're

running

with

the

sort

of

remote

control

perma

boils

and

you

can

have

a

very

low

activity

in

one

camera;

it

could

be

facing

just

a

blank

wall.

G

G

There's

a

lot

delay

based

ranching

and

no,

you

can

see

that

actually

the

prioritization

it

works

fairly

well,

but

it

I

believe

it

is

not

reasonable

to

assume

that

you

can

have

exact

priorities,

and

I

think

for

that

purpose

four

priority

levels

is

good

enough.

You

can't

expect

in

reality,

to

get

much

higher

granularity

than

that;

that

is

my

impression,

at

least.

Next

slide,

how

much

time

I

spent

you

can

add.

The

initial

impressions

of

the

stream

prioritization

and

the

average

behavior

in

general

are

that

it

looks

quite

okay.

A

G

Because

of

the

weighted

scheduling,

so

even

though

you

sort

of

get

a

dramatically

reduced

throughput,

the

front

camera

will

work

quite

well

so,

and

we

get

some

kind

of

tunnel

vision

effect

when

the

throughput

drops.

Basically,

when

you're

driving

in

a

basement,

the

side

cameras

were

just

crap

and

let

cool

burner

and

some

room

for

improvement,

and

is

that

easier

to

switch

off

some

cameras

in

very

problematic

conditions?

Add

some

extra

code.

G

You

have

to

turn

them

off

or

just

disable

these

streams

and

also

verify

this

runtime

priority

switch,

and

there

is

an

external

sort

of

a

mechanism

you

can

open

in

the

runtime.

You

can

switch

if

you,

for

instance,

want

to

reverse

your

vehicle

and

you

might

want

to

increase

the

priority

for

the

back

camera

that

is

implemented,

but

not

tried

out

so

in

knowledge,

real

media,

all

in

simulators.

G

G

Be

really

huge,

still

know

how

to

sort

of

educational,

especially

actually

in

3GPP,

need

to

educate

sort

of

convinced.

That

is

a

good

enough

in

this

set

up

is

as

big

bottleneck

actually

running

and

the

dr.

groves

all

simulations

and

the

one

is

without

AQM

and

on

the

right-hand

side

it's

CoDel

with

ECN

on,

and

you

can

see

that

if

you

implement

ECN,

you

get

more

stable

sort

of

queuing

delay

and

actually

more

stable

bitrate,

and

this

is

totally

the

mimic

sort

of

a

keyframes

also

run.

G

It's

a

draw,

the

complex

video

source,

and

if

you

dare,

you

can

look

at

the

YouTube

clips,

where

we

sort

of

run

the

SCREAM

algorithm

with

an

IP

camera

and

it's

just

me

waving

my

hand,

with

and

without

ECN

enabled,

and

in

that

case

we

chose

the

three

megabit

bottleneck.

I

can

and

erased

yesterday.

If

you

have

complex

video

sources,

ECN

can

improve

performance

greatly,

so.

G

E

Yes,

yeah.

Okay,

can

you

hear

me?

So

actually,

my

question

is

regarding

both

for

the

ECN

based

one

and

for

the

previous

one,

when

you're

saying

that

congestion

window

sometimes

doesn't

back

off

in

time,

what

are

the

corresponding

packet

loss

rates

that

you

observe

because

the

plots

don't

really

report

those.

E

G

I

G

What

you

saw

is

that

you

have

the

occasional

packet

losses.

They

are

not

that

annoying.

Actually,

when

you're

on,

but

they're

still

ugly

they're

still

too

much

for

being

used

in

a

sort

of

a

real

commercial

deployment

not

done

before.

We

want

zero

packet

loss,

especially

with

video,

but

as

it

is

now,

if

I

remember

correctly,

it's

like

0.5%

packet

loss,

which

is

a

bit

too

high

for

video

and

possibly

one

can

use

retransmission

to

cover

it

out.

But

then

again

you

add

delay.

G

End-to-end

latency.

Also,

forward

error

correction

is

not

an

option

because

the

packet

loss

comes

in

bursts;

it

could

be

a

burst

of

10

packets

lost,

and

that

can

be

difficult

to

correct

unless

you

use

the

interleaving

or

something

like

that.

It

gets

more

complicated

as

the

packet

loss

comes

in

bursts,

and

that

is

also

an

indication

that

there

are

tail

drop

queues

on

board

go.

There

is

no

other

traffic

in

the

uplink.

E

Maybe

a

quick

question

so

when

you're

saying

that

the

packet

loss

ratio

is

low,

only

0.5,

that's

kind

of

averaging

over

the

entire

session,

or

is

it

sort

of

locally?

Because

if

you're

saying

that

forward,

error

correction

doesn't

work,

it

seems

to

be

indicating

that

the

instantaneous

packet

loss

ratio

can

be

too

high

for

FEC

to

overcome.

Yeah.

E

G

Yes,

we're

trying

to

get

in

contact

with

the

chipset

vendors,

actually,

to

see

if

they

can

resolve

this

issue,

because

there

shouldn't

be

packet

losses.

It's

not,

though,

it's

outside

the

LTE

specification,

because

the

bearers

that

are

used,

the

QCI

9

bearers,

are

also

the

default

bearers

and

they

use

acknowledged

mode

transport

in

the

RAN.

It

shouldn't

get

any

packet

losses,

but

still

you

get

them,

though.

It's

an

implementation

issue.

F

G

G

D

I

think

the

bottom

line

here

is

that

the

uplink

is

not

optimized

for

this

kind

of

thing.

I

mean

we're

running

like

30

megabits

per

second

and

uplink

video.

This

has

never

even

been

thought

of.

Yeah

when

they're

making

the

product

I

think

it's

a

specification,

perhaps

in

production

I,

don't

think

like

they

have

and

I

think

Ingemar

didn't

mention

like

they

tried

multiple

vendors

and

multiple

modems,

and

they

all

have

the

same

problem.

So

this

is

I

personally

don't

think

any

congestion

control

can

solve

that.

D

So

there

will

be

packet

losses

until

the

implementation

is

done

so

I,

as

you

mentioned,

like

we're

talking

with

some

of

the

chipset

vendors

to

see

if

we

can

solve

the

problem,

and

that

would

be

really

good

to

say,

yeah

the

uplink

for

LTE

or

any

RAT,

any

radio

technology:

there

is

an

asymmetric

system

in

downlink

and

uplink,

and

uplink

is

just

not

optimized

yeah

and

we're

trying

to

get

every

bit

of

it

and

I

must

say

like

this.

D

G

E

Oh

sure,

so,

actually

maybe

I

didn't

clarify

my

question.

So

I

was

more

interested

in

when

you

mentioned

that,

like

there

are

places

where

the

throughput

drops

and

the

congestion

window

doesn't

back

off

in

time.

Presumably

that

would

introduce

self-induced

losses,

right?

So

I

understand

that

the

uplink

sort

of

has

some,

let's

say

non-congestion-induced

losses

mysteriously

throughout,

but

I'm

more

interested

in

maybe

the

understanding

of

whether

there

are

any

places

where

it's

kind

of

a

self-imposed

loss.

G

G

E

E

G

B

G

G

That's

a

kind

of

conclusion

that

you

can

get

a

high

quality

video

with

a

video

feedback

for

a

remote

control

application

by

using

congestion

control.

Otherwise

you're

getting

black

screens

and

long

delays,

and

that's

often-

and

it

follows

the

channel

quite

well,

and

to

be

honest

I

expected

worse

behavior,

especially

when

you're

running

in

the

basement.

But

you

had

really

poor

coverage

and

even

with

the

video

coders

that

you're

using,

which

are

not

really

adapted

for

real-time

applications.

G

A

D

As

far

as,

if

I'm

correct,

the

RMCAT

working

group

decided,

like:

Okay,

let's

analyze

all

the

requirements

from

different

proponents,

we

at

least

had

three

proponents

at

that

time,

and

the

initial

slide

with

three

circles

represents

that

one.

So

we

have

three

candidates:

they

have

different

kind

of

requirements.

The

design

team

was

formed

so

that,

okay,

look

into

the

requirements

and

see

if

we

can

basically

find

a

generic

feedback

message,

then

they

were.

D

Then

we

presented

this

to

AVTCORE,

because

RMCAT

doesn't

have

the

authority

to

finalize

that

kind

of

thing.

It

was

not

chartered

to

do

that.

So

we

went

to

AVTCORE

and

said,

like:

Well,

this

is

what

we

have

now

XR

block

based

and

we

have

artists,

and

then

we

get

some

feedback

and

I

think

the

most

decisive

factor

was

the

deployment

possibility.

So

it

was

noted

that

people

have

more

experience

with

participe

kind

of

feedback

and

they

have

like.

You

have

fear.

D

D

Perhaps

more

deployable,

so

that's

what

happened.

Next

slide.

So

this

is

the

goal;

if

you

don't

remember,

this

is

a

recap

again

here.

As

I

said,

the

design

team

was

formed

to

analyze

the

requirements

of

the

candidate

algorithms

and

then

try

to

arrive

at

a

generic

feedback

process,

and

I

think

one

of

the

main

reasons

to

do

that

is

interoperability.

D

So

basically

one

application

that

implemented

say

GCC

and

they

want

to

talk

with

SCREAM

or

NADA

and

in

a

point-to-point

communication

or

whatever,

they

should

be

able

to

talk

to

each

other.

So

not

everybody

needs

to

implement

all

of

them,

because

we

had

any

situation

like

I,

don't

think

like

RMCAT

in

a

situation

right

now,

that's

really

well

for

experimental

purpose

will

have

three

candidates

up

there.

So

this

was

one

of

the

things

that

okay.

D

If

we

have

a

common

feedback

message,

then

we