►

From YouTube: IETF100-QUIC-20171115-0930

Description

QUIC meeting session at IETF100

2017/11/15 0930

https://datatracker.ietf.org/meeting/100/proceedings/

B

A

B

A

B

B

C

B

Okay,

well

welcome

to

soar

out

of

sync.

Okay,

let's

fix

that.

Welcome

to

quick.

This

is

our

second

session.

This

is

the

no

12

statement,

as

we've

said

many

times

before.

These

are

the

intellectual

property

terms

under

which

you

participate.

So

if

you're

not

familiar

with

this,

please

go

and

have

a

look

at

ia.

Tf

note

well

on

your

most

convenient

internet

search

engine.

It's

important

to

understand

this.

Can

everyone

in

the

back

hear

me?

Is

this

a

good

volume

I

need

positive,

okay,

good!

Thank

you.

D

A

B

Likewise,

just

a

reminder,

as

we've

done

a

few

times

recently,

we

expect

this

to

be

a

professional

environment.

If

you

have

issues

that

you

can't

handle

by

talking

to

the

person

who

you're

concerned

about

or

by

talking

to

ask

your

chairs,

we

also

have

an

Ombuds

team.

I

said

it

right

who

can

handle

issues,

especially

regarding

harassment

and

those

are

the

folks

at

the

bottom

of

the

slide.

So

today,

I

will

send

the

blue

sheets

out

in

a

second,

we

have

a

scribe.

Thank

you.

Brian

Trammell.

B

This

is

actually

a

little

out

of

date.

We're

going

to

take

the

item

that

we

postponed

yesterday,

Martin,

giving

a

summary

the

changes

by

the

editors

and

put

that

at

the

top

of

the

agenda,

then

ian

is

going

to

talk

about

loss,

recovery,

John's,

gonna,

talk

about

connection

migration

and

we're

gonna

talk

about

the

third

implementation

draft

and

what

it

might

contain.

And

finally,

if

we

have

time

we'll

discuss

any

open

issues,

any

other

items

agenda-

bashing,

ok,

great,

let's

start

so

Martin

Europe.

E

E

Ok,

this

should

be

history

for

people.

This

is

what

people

were

concentrating

on

during

the

hackathon,

which

was

draft

7.

We

didn't

do

a

lot

in

that

draft.

We

just

made

a

few

tweaks.

We

use

AES

GCM

to

protect

the

handshake

packets.

We

got

rid

of

the

1

RT

t,

long-form

headers,

our

packet

forms

and

there's

a

bunch

of

changes

that

don't

quite

complete

the

discussion

about

closing

but

got

most

of

the

way

there

and

I've

got

a

little

bit

more

on

that.

E

Here

is

that

there's

three

basic

modes

of

operation,

one

is

there's

an

idle

time

out

that

both

sides

advertise

when

that

timer

runs

it's

actually

in

negotiation,

I,

say

X.

Someone

else

says

y.

The

minimum

of

those

two

is

the

time

that

the

connection

can

remain

idle

before

it

starts

timing

out,

there's

also

the

immediate

close

which

we

decided

would

be

used

for

the

graceful

shutdown

case.

So

the

application

is

done

with

the

connection

or

it

detects

an

error.

It

sends

a

message.

E

We

have

two

of

those

now

look

at

the

draft

for

the

details

and

then

there's

a

stateless

reset.

So

a

lot

of

the

discussions

sort

of

focused

on

this,

the

states.

During

these

transitions

we

have

essentially

two

states

now

and

there's

a

pull

request.

That

needs

a

little

bit

more

work

before

it

goes

in,

but

essentially

we

have

the

one

timer

and

then

you

transition

between

two

states

in

that

time

of

the

first.

E

The

first

state

is

only

really

triggered

in

the

case

where

you

send

one

of

these

clothes

messages

and

that's

the

closing

State,

better

names,

people

that

doesn't

really

matter

in

that

state.

You

don't

send

any

packets

except

for

a

closed

message

in

response

to

receiving

packets

from

the

other

side.

So

in

case

your

closed

message

was

lost,

you

provide

more

of

them

just

so

make

sure

they

get

through.

The

second

state

is

the

draining

period,

which

is

essentially

you

keep

your

state

around

for

a

little

bit

of

time

to

make

of

that.

E

No

incoming

packets

for

that

connection,

reordered,

packets,

those

sorts

of

things

make

sure

they

don't

get

treated

as

if

they

were

new

connections

or

anything

like

that.

You

can

associate

them

correctly

with

the

the

connection

state

and

throw

them

away

properly

without

triggering

any

sort

of

other

machinery.

There's

a

bit

of

discussion

about

that

and

I

think

Christian

raised

a

few

points

of

that.

Well,

what

state

do

you

actually

need

to

maintain

in

each

one

of

these

things,

because

there's

not

a

lot

of

information

you

need

to

maintain

in

order

to

make

these

decisions

correctly.

E

You

both

sides

go

into

this

draining

state

and

the

reason

that

both

sides

going

to

the

straining

state

is

that

they're

in

individual

views

of

when

the

period

starts

is

skewed

based

on

time

that

packets

take

to

deliver.

Maybe

there

were

spurious

retransmissions

or

something

like

that.

The

cause

that

two

sides

to

have

some

slight

disagreement.

Current

draft

says

three

RTO.

That's

also

up

for

negotiation,

some

discussion

than

in

the

doc

about

how

you

might

short

shortcut

that,

in

certain

circumstances,

next,

the

immediate

close

is

more

interesting.

E

I've

shown

one

of

the

more

complicated

scenarios

here,

so

a

detects

some

sort

of

error

and

sends

a

connection

close

that

connection

close,

could

be

lost

and

I've

shown

that

disappearing

into

the

ether.

In

this

case,

B

continues

on

happily

ignorant

of

the

fact

that

has

generated

some

sort

of

error

and

sends

packets

to

a

a

because

it's

in

this

closing

state

detects

that

I've

got

an

error

on

the

slide,

but

it

it

sees

one

of

those

packets

coming

in

and

sends

a

connection

close

out,

so

that

this

is

essentially

lost.

Recovery

for

the

think.

E

The

amount

of

state

that

the

that

a

is

maintaining

in

this

case

is

pretty

minimal.

It

can

actually

just

save

the

packet

that

received

and

there's

some

question

about

whether

it

even

maintains

decryption

keys

for

the

for

the

incoming

packets.

But

that's

something

we're

going

to

sort

out.

B

can

send

a

connection

close

to

sort

of

shortcut

this

whole

process.

We

had

a

bit

of

discussion

with

with

implementers

about

this

one

and

they

were

concerned

that

in

these

shutdown

scenarios

there

wasn't

any

way

to

sort

of

guarantee

termination

in

a

reasonable

period

of

time.

E

So,

though,

I

had

having

test

cases

that

sort

of

in

this

sort

of

trailing

state

and

if

you're

operating

test

cases

in

a

in

a

sort

of

lossless

environment

being

able

to

send

a

closed

in

response

to

a

closed

is

actually

quite

nice,

because

then

both

sides

enter

into

a

state

whether

they're

essentially

done

and

there's

no

more

packets

needing

to

be

exchanged.

So

we

allow

that

to

happen

once,

but

we

don't

allow

that

to

be

repaired.

Otherwise

we

get

into

a

ping

pong

situation.

There's

a

bit

of

debate

about

this

particular

point

as

well.

E

Christiaan

suggests

that

we

could

use

a

particular

error

code

for

this

situation

and

allowed

B

to

continue

sending

but

I,

don't

actually

think

that's

necessary,

but

we

haven't

resolved.

That

particular

part

of

the

conversation.

The

error

on

the

slide

is

that

once

a

has

received

a

positive

indication

that

B

is

closing,

it

doesn't

need

to

send

connection

close

anymore,

and

so

it

enters

the

draining

state

also

and

both

both

of

those

just

quietly

go

away

at

that

point.

E

Next,

that's

actually

in

the

text

there,

but

never

mind.

Now.

Stateless

reset

is

really

quite

simple.

The

server

doesn't

maintain

state,

so

if

it

receives

a

packet

that

it

doesn't

know

about,

it

can

send

a

stateless

reset

and

the

client

then

enters

a

draining

period.

Just

in

case

this

was

a

reordered

packet

or

something

along

those

lines.

E

E

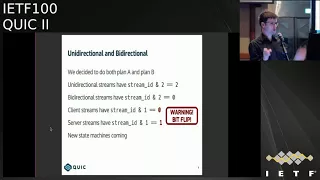

When

we

looked

into

this,

we

discovered

that

clot

stream

0,

which

we

use

for

special

purposes,

was

being

allocated

I,

think

it

was

unidirectional

server

or

something

like

that

in

the

first

iteration

of

this,

and

we

managed

to

get

it

to

bi-directional

server

initiated,

but

then

that

didn't

make

a

whole

lot

of

sense,

because

clients

initiated

this

dream,

so

we

flipped

the

bit

and

now

it

makes

a

little

bit

more

sense.

So

people

who

actually

implement

this

using

a

standard

stream

machinery

will

be

able

to

say

well.

E

This

is

just

a

bi-directional

stream

that

the

client

initiated

and

they

can

use

all

the

standard

stream

machinery

without

special

logic

other

than

the

other

than

the

necessary

special

logic

in

order

to

handle.

This

consequence

of

that

is

now

that

we've

flipped

the

bit

the

lowest

bit.

So

anyone

who's

used

to

saying

that

clients

initiate

requests

on

stream

3,

for

instance,

will

now

find

that

it's

stream

for

instead

and

it's

4

8

12

16,

because

we

now

have

more

bits

consumed.

E

There's

new

state

machines

coming

I

think

we're

gonna

go

through

in

a

little

bit

more

detail

about

how

the

individual

sides

of

a

stream

transition

through

states

so

we'll

have

a

sentence,

ID

state

and

a

receive

state

side

state,

and

it

will

be

up

to

implementations

to

synthesize

a

state

if

they

want

across

a

bi-directional

stream,

if

that's

at

all

interesting

to

them.

But

I,

don't

think

we're.

Gonna

spend

a

whole

lot

of

effort

on

that.

E

Previously

we

had

a

mix

of

different

things

for

all

of

our

different

numbers

in

the

protocol

we

had

8

bits

and

16

bits

and

32,000

64's

and,

and

then

we

had

these

weird

things

where

they

had

flags

over

here

and

it

was

either

8

16

12,

because

there's

many

many

different

ways

in

which

we

could

represent

a

number,

especially

especially

like

the

the

bespoke

16

bit

sort

of

floating-point

format

that

we

had.

That

was

that

was

great.

It

fit

the

purpose,

but

just

another

format

that

we

had

to

deal

with.

E

So

now

we

just

have

one:

it's

a

variable

size

encoding.

We

take

the

first

two

bits

and

that

encodes

a

size,

8

16,

32

64

and

the

remainder

of

her

to

be

beginning.

Your

number

leads

to

some

interesting

maximums

for

these,

but

it's

it

actually

works

out

pretty

well.

We

decided

to

also

move

this

to

the

HTTP

mapping,

conveniently

it

has

the

property

of

allowing

us

to

remove

continuations

for

header

box,

which

was

something

that

people

really

really

hated

in

HTTP

2,

and

that

is

no

more.

E

It

also

means

that

most

cases,

the

the

frame

size

will

actually

be

smaller.

Http

2

frames

have

a

three

bike,

lengths

I

believe

and

now,

for

the

most

part,

we'll

have

ones

and

two

by

plates,

which

is

which

is

quite

nice,

so

I

did

not

check

those

numbers,

particularly

that's

just

examples

of

things

that

that

he

did

yes,

6

14,

30

and

62

than

other

new

numbers.

E

There's

some

address

validation,

stuff

that

in

the

draft

now

so

someone

who

detects

a

connection

migration

can

can

sort

of

probe

that

particular

path

and

see

that

there's

actually

someone

live

on

the

other

end

and

return

route

ability

checks

are

quite

nice,

we've

renamed

the

packet

types

which

doesn't

seem

like

it's

much,

but

we

also

remove

the

seperation

between

client

and

shake

and

server

handshake

packets.

They

no

longer

have

a

different

packet

time.

I

think

there

was

something

that

was

one

other

thing

that

I

added

to

this

slide,

but

I've

forgotten.

E

E

E

And

I

did

in

my

own

copy

and

didn't

upload

the

changes,

my

apologies

so

so

Mike

and

I

have

been

talking

about

moving

to

a

model

where

requests

in

HTTP

ID

have

their

own

identifier

at

the

app

at

the

HTTP

layer.

So

when

we,

when

we

made

some

changes

for

push,

we

created

this

notion

of

a

push

identifier,

and

so

when

you,

when

you

make

a

push

promise,

it

has

an

identifier,

that's

explicit

at

the

edge

HTTP

layer

and

then

when

you

provide

the

response

in

that

unidirectional

stream,

that's

coming

back

now.

E

It

includes

that

identify.

Currently,

we

we

identify

requests

by

their

stream

identifiers,

their

quick

stream

identifiers,

and

the

suggestion

here

was

to

provide

an

HTTP

layer,

identifier,

that's

explicit

at

that

layer,

and

it

allows

people

to

implement

transports

that

don't

expose

the

stream

identifier

to

the

application

layer.

A.

G

I

asked

because,

if

you

wanted

to

make

it

trivial

to

use

the

same

mechanism

for

something

else

like

DNS

DNS

uses

you

name

for

this

exact

purpose

and

having

the

identifier

enable

that

would

be

handy.

It's

certainly

possible

to

map

a

queue

name

into

a

an

identifier

that

is

an

is

a

number

and

to

get

around

this

problem.

But

I

just

wanted

to

raise

the

fact

that

in

other

protocols,

using

a

string

might

be

handy.

So

this.

G

E

Okay,

so

then

DNS

might

use

its

what

@q

name

or

something

else.

It

doesn't

really

matter

that

they

have

that

better

choice.

This

would

be

specific

to

http,

but

we'd

be

establishing

I,

guess

a

best

practice

in

a

sense,

they're

saying

don't

rely

so

much

on

the

underlying

identifies

rely

on

make

your

own

identifies

for

this

and

I

think

several

people

have

expressed.

This

is

patent

they'd

like

to

see

Ronnie.

H

E

The

stream

states

are

different

between

bi-directional

and

unidirectional,

but

when

you

actually

look

at

the

at

the

independent

state

machines,

the

the

primary

difference

between

unidirectional

and

bi-directional

is

that

there's

an

opening

transition

invited

in

for

a

bi-directional

stream

when

the

the

paired

side

opens.

So

if,

if

the,

if

one

side

opens

on

a

bi-directional

string,

the

other

side

is

implicitly

opened

at

to

match

and

that's

the

primary

difference

beyond

that

point,

doesn't

there's

not

a

lot

of

difference

there?

E

The

the

things

that

the

things

that

sort

of

come

up

as

a

result

of

that

is,

if

you

are

blocked

on

a

particular

stream,

you

might

send

a

string

block

thing

that

will

cause

the

stream

to

open

in

the

opposite

side

in

the

opposite

direction,

those

sorts

of

things

where

it

gets

interesting,

but

other

than

that,

once

you're

in

steady

state

or

you've

transmitted.

All

the

data

on

all

the

states

are

exactly

the

same.

They

closed

the

same

way

and

and

everything

that

we

have

independent

read

and

write

closed.

E

I

Was

gonna

add

to

that

better

sorry,

Ian,

sweat,

Google,

Martin's,

PR,

that's

in

flight

and

hopefully

we'll

be

landed

soon.

There's

a

very

nice

job

of

like

clarifying

all

this

so

either.

If

you're

super

interested

in,

like

stream,

state

machines,

look

at

the

PR

and

if

you're

only

kind

of

interested,

then

like

wait,

408

yeah.

B

J

I

So

some

of

the

principles

packets

are

never

retransmitted.

Information

in

them

is

so

yeah

focus

on

what

to

declare

loss,

not

what

to

send

so

another

principle:

is

you

wait

until

you

actually

have

information

in

order

to

make

a

decision?

So

an

example?

Is

we

don't

mark

packets

lost

until

an

acknowledgement

for

a

packet

that

was

sent

after

it

is

received

kind

of

makes

sense,

but

makes

things

a

lot

simpler?

I

We

also

avoid

undue

in

general,

in

particular,

F

RTO,

if

you're

familiar

with

that,

which

is

the

process

where

you

might

reach

and

do

a

retransmission

time

out

and

then

decide

who

is

spurious

afterwards

and

then

try

to

like

unwind

your

state.

We

don't

do

that

because

unwinding

stage

is

complicated

and

bug

prone.

Instead,

we

just

wait

until

we

actually

know

whether

the

RTO

is

various

and

then

adjust

the

congestion

window.

At

that

point,

then

so,

and

in

the

meantime

we

basically

like

clamp

down

the

congestion

window.

I

So

it's

not

actually

it's

like

basically

temporarily

clamped

down,

but

we

don't

actually

change

any

of

the

congestion

control

state

so

turns

out

to

work

out

a

little

bit

simpler.

Next,

one

notable

differences

from

TCP

quick

doesn't

have

retransmission

ambiguity,

because

technically

no

packet

is

ever

really

retransmitted.

It

is,

you

know

the

same

frames.

Maybe

rebound

'old

with

a

new

packet

number

or

slightly

different

frames,

may

be

bundled

with

a

packet

number.

I

So

once

you

hear

an

acknowledgment

for

a

packet,

that

means

the

pure

has

received

it

and

processed

it,

which

is

next

next

item,

and

you

know

from

that

point,

you

don't

have

to

keep

it

in

your

send

buffer

or

any

other

sort

of

things

that

you

might

have

to

do

for

TCP

with

what's

acts

which

are

revocable

and

recovery

ends

when

the

packet

number,

not

a

sequence

number

after

the

largest

outstanding

packet

is

acknowledged.

So

this

is

sort

of

a

subtle

point,

so

I'll

try

to

elaborate

on

it.

In

TCP.

I

Recovery

is

the

period

of

time

you

enter

when

you

first

experience

a

loss

and

it

lasts

for

approximately

an

RTT

in

TCP.

However,

in

order

to

exit

recovery,

you

must

have

packets

that

are

sent

in

sort

of

like,

essentially,

all

the

lost

packets

have

to

be

filled

in

and

your

sequence

number

space

has

to

be

filled

in.

I

K

B

I

B

I

Okay,

so

now

I'm,

actually

gonna

step

through

the

the

two

types

of

loss

detection

in

quick,

one

is

act

based.

So

this

is

when

you

receive

an

acknowledgement

for

a

packet

that

is

larger,

then

you

know

some

previously

sent

packet.

So

you

know

I

received

an

acknowledgement

for

six

and

maybe

not

for

something

below

six,

as

this

example

will

show,

and

then

the

other

type

is

going

to

be

timer

based

so

like

care,

loss,

probes,

retransmission

times

out,

timeouts

and

and

the

such.

I

I

So

in

this

case

it's

basically

a

simple

matter

of

math.

Is

we

compute

like

whether

you

know

I

have

a

packet

number

that

is

three

or

more

above

a

packet

that

has

not

been

acknowledged

so

next

slide,

so

the

receiver

receives

one

and

two

sends

an

acknowledgement

frame

to

the

sender.

Next

slide.

Three

four

and

five

are

dropped.

So

six

is

still

in

flight

receiver.

I

Next

slide

received

six

and

immediately

sends

an

acknowledgment

for

one

and

two

as

well

as

six

next

slide,

and

when

that

acknowledgement

is

received,

the

receiver

declares

three

lost

so

because

it's

you

know

a

packet

distance

of

three.

In

this

example,

nothing

is

immediately

rejected,

just

because

I

don't

want

to

really

kind

of

go

into

that

at

the

moment.

But

yes,

as

if

three

was

retransmitted,

seven,

it

could

be

used

to

to

recover

for

so

I.

Actually,

I'm

gonna

go

into

a

different

example,

which

is

why

I'm

not

gonna

retransmits

reach.

I

Thank

you

next

slide,

currently

retransmitted.

So

early

retransmit,

as

with

TCP

kicks

in

anytime,

like

the

largest

outstanding

packet,

is

going

to

be

acknowledged,

is

acknowledged

and

there

are

packets

underneath

they

have

not

been

acknowledged.

So

this

is

an

effort

to

speed

up

lost

detection

in

cases

when

you

have

losses

near

the

tail.

I

I

Timer

base

lost

detection,

so

timer

based

loss

detection

is

probably

a

little

more

more

different

from

TCP

than

the

regular

fact

style

and

early

retransmit

cases.

I

just

ran

through

time

out.

Packets

do

count

towards

bytes

in

flight,

so

in

quick,

bytes

in-flight

can

exceed

the

congestion

window

for

a

period

of

time.

So

again

it

was

that

kind

of

ensures

that

you

actually

get

a

correct

congestion

control

signal,

even

if

you're

in

the

timeout

sort

of

mode

time

of

events

do

not

immediately

cause

packets

to

be

declared

lost

or

changed

the

congestion

control

state.

I

So

essentially

the

idea

is

I,

don't

know

anything

all

I

know

is

I

even

heard

anything,

so

that

could

mean

I.

Have

you

know

a

temporary

Wi-Fi

outage

or

it

could

mean

just

like

you

know.

Suddenly,

a

bunch

of

packets

are

in

the

queue,

and

you

know

everything's

fine,

so

I'm

gonna

wait

until

I

actually

know

something

to

adjust

the

congestion

window

or

declare

anything

lost.

So

therefore

yeah

the

ce-1

does

not

change

during

these

periods

of

time

and.

M

I

True

for

all

three

types

of

time

out,

I'm

gonna

walk

to

next

slide,

so

the

handshake

timeout

is

set

to

the

RTT,

which

is

either

a

measured,

RTD

or

initial

R

to

D

times

two

to

the

number

of

timeouts

plus

one.

So

essentially,

if

you

have

a

initial

RTT

like

quick,

does

of

a

hundred

milliseconds,

then

the

first

time

out

for

a

handshake

would

be

200,

milliseconds

and

then

400

and

800

and

so

forth,

except

from

the

last

cent

handshake

frame.

I

So

it's

kind

of

like

the

most

recent

handshake

gram

and

you

retransmit

all

not

acknowledge

tangent

packets.

So

this

is

a

little

bit

more

aggressive

than

the

other

time

out

modes

potentially.

But

the

idea

is

that

you

know

the

handshake

should

be

relatively

small

and

additionally,

it's

very

important

that

it

actually

completes,

because

otherwise

you

can't

really

make

any

progress

on

the

connection.

I

Sorry

and

yes,

the

expected

RT

t,

if

you,

if

you

don't

actually

have

an

R

DT

sample,

as

for

the

very

first

packet,

is

based,

possibly

on

previous

connections,

if

you're

doing

RT

0

R

2

T

resumption,

it

could

also

be

based

on

other

environmental

factors.

Like

I

know,

I'm

on

like

a

2g

network,

so

I

can

set

it

to

2

seconds

I

mean.

Certainly,

there

are

tweaks

that

can

be

made

there

next

slide.

I

G

I

Would

say:

that's

yes,

but

you

make

an

interesting

point.

The

the

original

pink

cream

was

retransmitted

well

because

actually

had

no

payload

and

we've

kind

of

tweaked,

the

the

meaning

of

the

the

ping

and

pong

frames

to

be

a

little

bit

different,

but

the

the

short

answer

is

yes,

essentially,

it's

anything,

but

an

acknowledgment

anytime.

You

actually

like

need

to

make

it

through

it.

Make.

I

Next

slide,

retransmission

time,

oh,

so

this

is

the

classic

retransmission

time

out

formula.

You

have

smooth

round-trip

time

plus

4

times

the

RTT

variants.

You

also

have

a

min

retransmission

time

out.

We,

as

as

with

many

of

these

other

constants.

We

are

currently

using

the

Linux

default,

which

I

believe

is

200

milliseconds

and

it

has

exponential

back-off,

so

quick

it

was

currently

is

setting

it.

I

So

when,

if

you're

doing

you

know

tail

off

probe

twice

and

then

retransmission

time,

I

would

say

once

or

twice

at

some

point,

one

of

two

things

is

going

to

happen

either

at

the

connection

is

going

to

timeout,

because

you

know

you

just

get

black

hold

or

eventually

you

are

going

to

receive

an

acknowledgment.

When

you

receive

an

acknowledgment

at

that

point.

In

the

case

of

the

retransmission

timeout,

you

will

go

down

to

the

classic

white

minimum

congestion

window,

which

we

default

to.

I

If

it

turns

out,

the

retransmission

time

out

was

was

valid.

Ie

the

retransmission

time

out,

packets

got

acknowledged,

but

nothing

previous

did.

Similarly,

if

any

of

these

packets

are

acknowledged

like

the

Kaos

probe

or

otherwise

packets,

besides

the

retransmission

time-

oh,

you

know

normal

loss.

Recovery

will

kick

in

so

even

though

we

don't

declare

a

packet

lost

when

we

do

at

a

loss

probe

any

previously

sent

packet.

If

the

tale

us

probe

packet

is

acknowledged-

and

it

hasn't-

you

know

the

previous

packets

haven't

been

delivered,

then

yes,

they

will

be

declared

lost

just

like

normal.

I

D

D

I

N

N

Gen

Iyengar,

yes,

TCP

is

supposed

to

send

one,

but

the

cost

of

free

time

spending

after

a

time

or

is

incredibly

high

and

the

whole

point

of

sending

one

packet

makes

that

one

packet

incredibly

fragile.

If

you

lose

it,

then

you

have

to

wait

for

twice

the

RTO

before

you

do

any

sort

of

recovery

again

so

sending

to

protects

you

from

that

fragility.

I

think

those

value

and

considering

this

for

TCP

as

well.

To

be

honest,.

I

So

so

yeah

I'm

gonna

actually

go

the

constants

in

the

next

slide,

but

I

think

there

definitely

are

things

here

that

are

taken

as

a

model

from

you

know

what

Linux

does

as

default

and

not

necessarily

from

from

the

RFC's,

and

so

like

that

the

constants

are

sort

of

in

formulas

are

sort

of

a

mixed

at

you.

That.

N

Is

okay

but

I

think

like

whenever

we

are

deviating?

We

should

probably

document

it,

so

this

is

perhaps

in

July,

and

this

is

perhaps

an

interesting

question

number

some

of

the

constants

that

you

will

see

here

are

also

aggressive,

because

we

found

them

to

be

quite

useful

and

and

and

quick

is

definitely

driving

towards,

like

the

handshake

time

out,

for

example,

next

slide.

N

And

some

of

them

aren't

even

what

Linux

does

one

of

the

things

that

is

DLP

in

quick.

We

do

two

tailless

probes

before

we

go

to

an

RTO,

whereas

in

Linux

it's

actually

one

TLP

before

we

go

to

an

RTO,

and

some

of

this

is

designed

to

be

try.

It

is

necessarily

trying

to

be

more

more

aggressive

in

terms

of

recovery,

but

still

safe,

because

the

fundamental

mechanisms

are

still

the

same.

I.

N

N

The

the

only

reason

so

so

we

should

discuss

that

I

think

the

constant.

So

a

big

part

of

this

is

basically

discussing

the

constants

I

think

those

are

those

are

in

my

mind.

What

I

expect

that

we

will

end

up

discussing,

but,

as

you

said,

one

of

the

higher-order

points

that

should

be

clear

from

here

is

of

the

safety

properties

are

not

getting

violated.

We

are

pushing

the

constants

around,

but

the

the

fundamental

mechanisms

are

still

the

same.

N

I

N

Because,

because

this

is

the

sender,

picking

the

value

of

energy,

doing

Taylors

probe,

one

of

the

deficiencies

in

TCP

was

we,

for

example,

can't

assume

that

the

other

side

is

doing

50

milliseconds

and

we

have

to

pick

a

worst-case

value

of

200

milliseconds.

So

this

might

be

a

useful

transport

parameter

to

negotiate

so

that

we

can

pick

the

value

I.

Would.

I

Agree,

that's

what

issue

912

is

actually

I

want.

I

get

a

feel

for

the

room,

because

this

is

like

one

of

those

things

where

I

would

very

much

like

to

move

forward

with

this

and

I.

Don't

think,

there's

a

lot

of

objection

but

I

think

for

once,

I

have

everyone

the

right

people

in

the

room

would

would

anyone

object

to

communicating

the

peers?

You

know

maximum

act

delay

in

transport

parameters

in

quick

center

reason.

We.

D

N

N

Informative

references,

but

not

we,

but

we

are

not

going

to

live

with

the

same

masts

and

shirts

that

we

have

in

DC

p.m.

in

DC,

be

in

our

sequin

581

of

physics

and

and

and

that's

part

of

the

reason.

Why

I

think

that

folks,

who

are

familiar

with

the

the

documents

relevant

documents,

should

be

engaged

in

the

sanitization

of

this

document

as

well.

N

That

said,

the

constants

here

are

different,

as

Ian

pointed

out

earlier.

Some

of

the

mechanisms

are

subtly

different

and

that

will

cause

some

constants

to

change.

We

are

also

chasing

perhaps

a

slightly

different

target,

which

is

latency,

and

so

some

of

the

constants

are

a

little

bit

more

aggressive

and,

as

you

pointed

out,

the

Baro

Naja's

from

the

specs,

but

also

from

current

practice.

So

this

may

be

something

that

can

feed

into

the

TCP

process

that

maybe

can

be

used

to

change

constants

that

we

use

for

TCP

as

well

at

the

IETF.

N

A

Lars

I

could

I

agree

that

the

conversation

should

happen

here,

but

we

have

a

lot

of

these

people

in

the

room.

That's

why

we

that's

why

we

specifically

said

we're

going

to

talk

about

this

stuff

here

and

not

another

and

where

that

might

not

be

the

case.

So

when

I

echo

praveen

suggestion

of

actually

documenting

the

differences

to

the

TCP

values

and

maybe

even

put

an

appendix

in

or

core

sort

of

describe

the

motivation

for

the

difference,

that's

right,

because

otherwise

we're

gonna

keep

getting

those

questions

over

and

over

so.

A

A

Doesn't

exist

in

the

RFC

stream

right?

Well,

it

does.

It

has

no

standards.

Well,

it's

an

expired

draft.

Believe

we

never

did

that.

Okay,

yeah!

Well,

so

information

recite

the

expired

draft,

but

so

it

is

what

I

think

sort

of

the

minister

sort

of

to

sever

constants

that

cholesterol

people

sort

of

know

about

right.

One

is

what

the

RFC

says

and

then

there's

what

Linux

does

that's.

N

A

Sure

I

mean

the

the

the

only

sets

of

values

that

are

easy

to

for

people

to

look

at

are

dope

from

the

open

source,

ones

right

and

I.

Don't

know

how

to

if

you're

willing

to

contribute

text

that

about

what

what

windows

might

be

doing

for

constant

and

that's

fine,

do

but

I

think

we're

running

in

the

danger

of

having

like

a

comparison

matrix

of

what

different

stacks

are

doing

in

this

talk

to

religious,

also,

probably

not

going

to

be

super

useful

yeah.

N

I

Seems

fine

to

me,

yeah

I

mean

I'd

be

happy

to

have

your

your

help,

Praveen.

If

you

wanted

to

contribute

some

to

that,

that's,

like

I,

said:

there's

a

small

section

there

that

sort

of

says

basically

like

most.

These

were

yanked,

but

from

Linux,

except

for

these

two

or

three,

which

is

pretty,

is

pretty

tersh,

so

I

mean

we

could

certainly

go

in

Tibetan

like

elaborate

a

little

bit

more.

If

it's

helpful

to

people

generally.

N

I

just

want

one

last

comment

on

the

understate

is

that

I

would

encourage

people

who

are

reviewing

this

interviewing.

The

constants

should

not

simply

look

at

the

constants,

because

the

mechanisms

can

be

subtly

different.

As

I

said

earlier,

the

safety

properties

are

are

not

violated,

but

the

mechanisms

are

definitely

in

in

general.

As

a

rule,

you

can

assume

that.

N

A

I'm

speaking

so,

I

also

got

some

feedback

that

people

are

confused

at

all.

The

other

drafts

are

at

over

seven,

and

this

is

at

Oh

6.

So

my

suggestion

would

be.

We

try

to

keep

the

base

draft

set

boy,

it's

another.

Seven.

It

showed

up

in

my

late

date.

Attract

aid

in

New

York

state

records

at

oh

six,

so

I'm

very

confused

I.

N

Was

a

question

about

the

max

height

delay

in

TCP,

M

yeah?

So

that's

a

deficiency

in

TCP

and

I

think

we

have

an

opportunity

here

to

kind

of

actually

explicitly

communicate

the

value,

so

we

can

do

the

right

thing

with

a

loss

probe.

The

other

question

I

had

was

this

default

RT

the

200

millisecond

handshake

timeout,

so

I

assume

that

things

like

caching,

the

initial

our

deity

in

the

path

and

using

that.

N

I

That

is

a

suggestion,

I

think.

Currently,

it

suggests

you

basically

like

cache

the

value

for

a

server

when

doing

0tt.

That's

not

quite

sufficient

for

some

circumstances,

because,

if

you're

on

a

very

long

r2t

link,

it

may

turn

out

that,

like

some

new

server,

you've

never

contacted

before

it,

like

also

has

a

very

long

RT,

so

I

mean

I,

don't

have

enough

experiment

like

implementation

experiments,

but

with

the

latter

part,

but

certainly

the

former

approach

of

just

saying.

Like

last

time,

I

went

to

WWE

example:

comm,

it

was

X.

P

I

D

So

Corey

first

I

think

the

algorithms

are

going

to

be

different

here,

because

there's

good

reasons

because

you're

mechanisms

are

different,

so

the

time

at

constants

are

going

to

be

different,

but

then

I

worry

about

whether

the

protocol

could

be

more

aggressive,

which

is

kind

of

repeating

I,

guess

what

the

previous

speaker

says,

but

we

need

some

way

to

get

a

handle

on

this.

Just

a

metric

saying

your

hundred

milliseconds,

it's

200

milliseconds

doesn't

help.

D

We

need

somehow

to

understand

how

it

will

compete

in

a

queue

and

in

particularly

in

really

strange

environments

where

you've

got

wireless

mangling

at

one

side

or

something

so

I.

Don't

think

this

is

an

easy

comparison,

but

if

it's

gonna

be

more

than

experimental,

then

somehow

we

have

to

figure

this

out.

So.

A

My

hope

is

that,

like

next

year,

we'll

have

or

early

next

year,

hopefully

we'll

have

implementations

that

you

can

actually

run

some

both

data

through

and

those

of

you

with

students

might

throw

some

at

this

and-

and

you

know,

get

some

papers

out

of

it

and

come

back

and

show

the

results

from

experiments

or

simulations.

Or

what

have

you

so

that

we

can

have

a

discussion?

Maybe

nice

ecog

or

something

on

on

creek

versus

tcp?

And

what

does

it

look

like

I,

like.

K

K

Well,

like

yeah

like

once,

we

decided

to

do

one

other

might

be

communits

well,

but

let's

just

focus

on

this

one

arm,

the

specification

doesn't

say

you

have

to

do

that

they

act

at

all,

and

it

also

doesn't

say

you

have

to

have

this

implemented

by

doing

a

single

timer

that

pops

and

then

you

pass

in

the

acts.

So

one

thing

you

might

first

is

to

do,

is

you

might

like

consider?

K

You

know

you,

by

considering

the

number

of

packets

you've

already

received

you've,

not

yet

act

as

pressure

on

when

you

act

now,

I'm

not

saying

any

particular

thing.

You

should

do

I'm

just

saying

that

that

I'm

worried

about

codifying

the

notion

that

this

opinion

about

that

the

receivers

algorithm

in

the

protocol,

because

the

receiver

might

not

have

a

timer

right,

and

so

what

is

he

gonna

say

that

I

think.

I

I

I'm

like

you

can

set

the

timer

for

this,

and

if

it's

been

this

long,

like

you

probably

waited

long

enough,

that's

that's

all

I'm

really

looking

for

it

right

now

in

TCP,

most

implementations

I

believe

now

either

use

100

or

200,

and

yet,

like

I,

think

the

RFC

says

like

one

second

and

so

we're

basically

like

I

mean

if

you're

conservative

you

choose

one

second,

but

like

that's

crazy,

so

no

one

does,

and

so

they

choose

some

smaller

number

and

hope

it

works.

Sure.

Well,

I!

Guess

they

just

like.

K

Q

Brett

Jordan

I

apologize

for

my

ignorance

in

advance

but

like

to

shift

gears

a

little

bit

so

from

my

understanding

that

all

of

the

acts

for

quick

as

we

currently

have

them

defined,

will

all

be

inside

of

the

encrypted

tunnel.

That's

correct

and

so

I

am

interested

to

know

how

we

plan

on

enabling

the

network

operators

to

actually

help

when

things

go

wrong.

So

when

you

run

a

large

network,

you

may

not

own

either

end

like

the

client

or

the

application.

A

I

N

Jahangir

I

want

to

respond

to

something

Cory

said

earlier,

which

was

around

comparing

this

with

TCP

and

so

on.

I

think,

that's!

That's

it's

fantastic.

We

should

do

it

at

some

point

and

try

to

understand

in

more

detail

how

friendly

these

constants

are

in

tips.

Michael

said

something

about

how

you

know:

quicks

benefits

are

coming

from

these

constants

part

of

my

response

is

absurd.

N

Specifically,

if

you

look

at

loss

recovery

in

pointed

out

earlier

that

the

recovery

period

itself

is

actually

different

in

DCP

as

compared

to

quick-

and

this

happens

because

quick

does

not

reuse,

sequence

numbers

and

as

a

result,

you

get

past

the

you.

Never

you

do

not

have

the

notion

of

a

queue

mac

point

so.

N

N

E

A

E

E

So

if

this

is

truly

valuable,

then

maybe

the

cost

is

not

particularly

high,

because

that

act

delay

is

actually

observe

of

all

on

the

network

anyway,

so

we

could

probably

say

not

a

problem,

but

for

the

general

class

of

things,

as

that

could

pointed

out

that

we

might

want

to

add

other

things,

we

have

to

think

about

think

carefully

about

what

we're

doing

with

it.

These

sorts

of

things

agreed.

I

I

think

we

should

consider

it

on

a

case-by-case

basis.

I

think

in

this

case,

I

think

I

did

25.

Milliseconds

is

actually

a

pretty

reasonable

default

for

the

public,

Internet

and

I

think

the

most

of

the

people

who

would

be

changing.

It

would

actually

be

cranking

it

down

for

data

center

environments,

yeah.

R

Mia

could

have

been

so

far

for

the

egg

delay.

It

might

actually

be

useful

to

have

a

mechanism

where

you

can

actually

change

it

later

on

as

well,

because

you

might

see

that

your

network

conditions

have

changed

somehow

and

you

want

to

adapt.

I

mean

it's

probably

it's

most

useful

to

have

it

right

from

the

beginning,

but

might

be

useful

yeah.

N

E

J

I

All

these

constants

are,

as

I

said,

kind

of

based

on

like

the

Linux

numbers,

but

like

all

the

RTT

measurements

in

quick,

are

designed

to

be

microsecond

accuracy

and

when

we

did

have

timestamps,

they

were

also

microsecond

accuracy,

though

now

they're

gone

so

but

yeah

definitely

I.

Think

microseconds

is

is

the

goal

you

know

and

all

these

circumstances.

N

K

Yeah

I

mean

our

now

we're

really

bike

shedding,

but

the

question

and

the

only

distinguishing

microseconds

in

milliseconds,

if

you're,

encoding

on

the

wire,

so

I

guess.

The

point

is

that

you're

gonna

encode

maximum

acti

on

the

wire

you

might

with

units

microseconds

giving

you

one

send

at

once

anyway,.

S

I

Crap

I

totally

had

one

more

slide:

yeah

implementation

tips,

so

these

are

things

that

Google

quick

has

messed

up

at

some

point.

So,

like

don't

do

these

spurious

retransmissions

happen,

you

should

really

continue

to

track

the

data

the

sent

to

the

lost

packets

for

at

least

a

little

while

after

they're

lost,

you

don't

have

to

track

it

forever,

but

like

at

least

some

small

period

of

time,

because

you

know

weird

twiddles

and

such

do

happen.

I

You

get

one

spurious

act

and

one

packet

that

like

Greece's

ahead

of

others

and

declares

50

lost

and

bad

things

happen.

Never

ever

ever

retransmit

an

acknowledgement

frame

always

send

up-to-date

ones.

If

you

don't

do

that,

like

your

world,

you'll

be

in

a

world

of

pain

and

like

loss,

recovery

will

work

like

absolute

crap,

always

send

packets

in

packet

number

order,

or

you

will

either

end

up

with

spurious

retransmissions

or

your

implementation

will

be

stupidly

complex.

Please

always

send

them

in

packet

number

all

order

it.

I

B

Thank

you

in

so

we're

doing

fine

on

time,

I

think

it's

10:30,

we've

got

one

more

presentation

and

then

we've

got

the

discussion

of

the

third

implementation

draft,

so

I

think

we're

fine

and,

as

I

think

was

mentioned,

we're

hoping

to

see

more

implementation

of

this

we'll

discuss

that

on

the

third

implementation

draft

timeline,

but

then

we'll

have

running

code

to

get

our

data

from

which

is

nice

and

and

draft

seven

is

indeed

now

up,