►

From YouTube: IETF100-NMRG-20171114-0930

Description

NMRG meeting session at IETF100

2017/11/14 0930

https://datatracker.ietf.org/meeting/100/proceedings/

A

Hello

good

morning,

everyone

welcome

to

this

Anna

Margie

meeting.

This

is

the

second

session

that

we

have

this

week.

Yesterday

we

have

the

first

session.

Yesterday

we

were

talking

about

mainly

on

intent-based

networking

in

the

some

kind

of

a

report

about

an

MRG

and

the

future

of

the

group

itself.

Today

we

are

going

to

concentrate

the

presentations

on

the

use

out

of

artificial

intelligence

for

network

management.

A

This

session

has

been

organized

after

call

for

participation

that

we

send

along,

of

course,

the

NMR

gmail

is,

but

also

together

with

out

our

other

medalists

in

other

communities

as

well.

The

approach

behind

this

session

is

that

we

wanted

to

revisit,

let's

say,

artificial

intelligence

techniques

and

solutions

to

observe

whether

they

can

be

used

for

network

management.

A

This

is

not

something

new,

because

network

management

has

been

already

observed

from

the

artificial

intelligence

perspective,

but

the

area

itself

major

along

the

years

and

then

it's

a

good

type

to

check

whether

artificial

intelligence

techniques

and

solutions

could

be

used

for

network

management.

In

this

as

a

new

context.

A

A

So

as

I

mentioned

before,

this

is

the

special

session

on

the

user

intelligence

for

network

management,

and

then

we

have

organized

the

presentation

in

let's

say

to

internal

sessions.

First

we're

going

to

see

a

set

of

presentations.

Then

we

have

some

questions

and

answers,

so

this

is

to

be

and

then

to

see

with

additional

presentations

and

then

in

2d.

We're

gonna

have

some

discussions,

conclusions

and

plans

for

the

future.

A

B

So,

thank

you.

So

I

will

start

by

motivating

a

little

bit

this

work

I.

Don't

think

they

need

to

explain

why

machine

learning

is

relevant

for

networking.

I

think

that

more

or

less

everybody

can

agree

on

that.

But

the

thing

is

that

it

is

working.

Ok,

now

it's

better,

but

I

would

like

to

in

this.

In

this

talk,

I

would

like

to

talk

about.

B

So

the

idea

is

to

use

these

deeper

informal

learning

techniques,

so

deeper

informal

learning

is,

let's

say

somehow

our

very

recent

breakthrough

that

we

can

claim

that

it

was

invented

by

Google

through

this

paper

in

2015,

where

they

managed

to

train

an

agent

to

play

a

video

game.

So

the

idea

in

this

case

was

that

you

have

a

nation

which

is

we

are

trying

to

teach

it

how

to

play

a

video

game.

The

agent

will

see

the

video

game

as

the

state,

ok,

and

it

will

act

upon

this

state

through

several

actions.

B

In

this

case,

it

has

three

different

actions:

right,

go

left

right

and

straight,

and

the

idea

is

that

the

agent

will

at

the

beginning

try

the

actions,

let's

say

randomly

like

a

human.

That

does

not

understand

anything

and

it

will

understand

how

the

actions

impact

environment.

Ok.

So

if

I

move

right,

what

happens

if

I

move

less

what

happens

and

so

on

and

after

a

while,

it

will

understand,

which

is

the

relation

between

the

actions

and

the

state,

and

the

idea

is

that

the

asian

has

a

goal

which

is

optimize

the

reward.

Ok.

B

So

what

Google

showed

in

this

paper

is

that

you

can

actually

train

an

agent

to

understand,

which

is

the

right

set

of

actions

so

that

in

the

long

term,

it

maximizes

the

reward,

which

means

that

learns

how

to

play

this

video

game.

So

we're

trying

to

do

exactly

the

same

to

networking,

which

is

it's

a

little

bit

straightforward.

In

our

case,

the

network

is

the

environment,

ok

and

then

the

act

Shen's

is

change,

something

upon

the

network.

B

We

need

to

change

like

the

routing

configuration

or

you

need

to

change

the

serving

fraction

path

or

you

need

to

change

something

outdoor

network.

Then

out

of

this

system,

when

you

change

something,

you

will

receive

two

signals.

The

first

one

is

the

state.

This

means

that

when

you

change

something

on

the

network,

something

will

change

right.

So

you

can,

you

can

understand

the

status,

and

this

is

a

very

broad

statement.

B

Many

many

different

answers

are

valid,

but

let's

say

that

you

can

think,

as

the

state

of

the

network

ask

the

traffic,

which

is

the

current

traffic

load

and

which

is

the

performance

that

my

network

is

providing

me

out

of

this

network

network

configuration

and

state,

and

then

you

have

the

reward.

The

reward

is

the

target

performance

of

the

network

right.

So

the

reward

is

how

well

more

than

the

target

performance.

B

B

Let

me

explain,

then,

what

kind

which

which

was

our

experiment,

which

I

have

to

say

that

it

is

a

work

in

progress.

We

have

a

paper

and

it

will

be

updated

with

new

results.

So

the

idea

is

that

you

have

one

of

these

deeper

informal

learning

agents

and

we

can

change

the

routing

configuration

and

for

this-

and

in

this

scenario,

we

have

assumed

that

the

routing

configuration

is

as

simple

as

the

weights

of

the

links

pretty

much

like

in

OSPF,

not

a

strictly

speaking

OSPF

as

the

IDF

protocol.

B

But

as

a

routing

protocol

that

you

set

the

weights

and

routing

happens,

then,

which

is

the

state

okay,

when

you,

the

state,

is

the

traffic

matrix?

Okay,

so

in

each

step

we

have

a

different

traffic

matrix.

The

traffic

matrix

can

be

actually

the

same

as

the

on

in

Russia.

Step

by

the

idea

is

that

in

each

step

the

agent

will

see

one

traffic

matrix

and

which

is

the

reward,

the

delay

okay,

depending

for

a

particular

traffic

matrix

and

for

a

set

of

weights.

B

What

you

will

get

is

a

certain

reward

delay

out

of

the

network,

then,

which

is

the

river

the

river

function.

So

we

train.

We

want

to

train

the

Asian

to

minimize

delay,

so

the

goal

of

the

Asian

is

find

the

right

set

of

weights

for

routing

such

that,

for

that

particular

traffic

matrix.

The

the

average

delay

is

minimum,

so

we

want

to

teach

the

agent

how

to

route

autonomously.

B

There

is

a

quite

interesting

discussion,

at

least

I

hope

that

you

find

it

interesting,

which

is

that

the

River

function

is

actually

the

network

policy

is

exactly

the

same.

So

what

we

understand

as

the

network

policy

and

yesterday

we

have

a

very

interesting

session

about

intent.

So

the

river

function

is

the

mathematical

representation

of

the

intent

that

you

need

to

install

on

the

Asian

so

that

the

agent

will

operate

the

network

following

that

policy,

I'm

going

to

jump

a

little

bit

over

the

methodology,

it's

also

in

the

paper,

but

pretty

much.

B

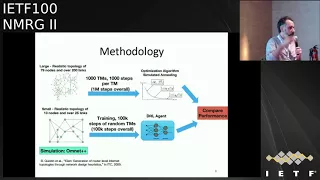

What

we

did

was

we

compared

the

performance

of

this

agent

with

a

very

quite

sophisticated,

optimization

technique,

a

traditional

atomization

technique,

which

is

called

simulated

annealing,

and

the

idea

was

that

so

in

in

RL.

What

we

do

is

we

train

the

system

with

100

thousand

steps,

random

steps

after

training

we

for

each

traffic

matrix.

B

We

asked

the

agent

okay

give

me,

which

is

the

optimal

weight

for

this

particular

traffic

matrix,

and

then

we

compare

that

with

a

similar

tunneling

with

it,

which

is

a

traditional

imaging

technique,

I,

which

is

a

search

base

and

for

in

this

case

for

annealing,

we

allow

it

to

run

for

1,000

steps.

So

we

asked

similar

tunneling

okay

for

this

particular

metric,

which

is

the

optimal

weights,

and

you

have

one

you

can

iterate

1,000

times,

so

you

can

find

you

can

test

1,000

different

weights.

B

So

it's

not

a

very

it's

a

fair

comparison

with

TRL,

but

well

it's

it's

enough

as

a

benchmark,

and

in

this

slide

you

can

see

how

the

Asian

learns.

This

is

the

traffic

intensity,

which

goes

well

beyond

100,

because

we

wanted

to

test

also

what

happens

when

you

have

a

network

which

is

very

separated

that,

if

he's

losing

a

lot

of

packets-

and

here

you

have

that

the

average

delay

and

each

box

plot

represents

how

many

training

steps

we

allowed

the

agent

to

have.

B

So

when

you

have

two

2000,

you

get

worse

performance

and

when

you

have

1000,

so

it

shows

that

the

Asian

is

learning,

and

you

can

see

this

very

common

exponential

decay

that

is

very

common

in

machine

learning.

So

the

more

training

you

get,

the

better

results

you

have

until

you

hit

a

flat

a

flat

curve-

and

this

is

the

performant

result

with

respect

to

simulated

annealing.

So

it's

the

same,

but

here

we

see

for

each

traffic

matrix,

which

is

the

delay

that

each

technique

achieves

and,

as

you

can

see,

both

results

are

quite

comparable.

B

B

So

from

that

point

onwards

on

the

presentation,

what

I

would

like

to

discuss

is

at

least

what

I

have

learned

and

which

are

my

conclusions

regarding

the

use

of

deep

brain,

formal

learning

techniques

in

networking,

so

I

have

tried

to

make

this

as

light

as

general

as

possible,

and

not

strictly

related

to

my

to

my

work

DRL.

They

have

amazing

advantage

in

in

our

area.

That's

at

least

my

opinion.

It's

a

quite

quite

an

amazing

technology.

So

on

the

one

hand

it

does

not

require

any

kind

of

prior

knowledge

of

the

network.

B

You

don't

have

to

explain

it.

I

have

this

amount

of

routers

the

capacity

of

the

links.

Is

this

one?

Nothing

because

it

understands

the

system

as

a

black

box

where

you

have

actions

and

you

get

a

reward?

That's

it!

So

that's

quite

quite

amazing.

It

works

online

and

in

real

time,

so

you

can

actually

run

it

and

expect

real-time

optimization

of

the

system,

something

which

is

extremely

hard

to

do

with

other

kind

of

techniques,

and

it

is

autonomously

not

just

for

optimizing,

but

also

for

learning.

It's

not

like

super

vise

learning.

B

Where

you

have

to

generate

a

dataset,

the

agent

will

generate

its

own

data

set.

You

don't

have

to

do

anything.

It

will

play

around

with

your

infrastructure

for

a

while

until

it

understands

how

it

works,

and

then

it

will

optimize

it.

So,

with

respect

to

traditional

optimization

techniques,

which

is

what

we

have

today

deployed,

it

provides

a

constant

optimization

time,

which

means

that

any

kind

of

optimization

technique

typically

has

to

iterate

I'm

trying

to

find

which

is

the

best

one

in

in

DRL

after

training.

It's

just

one

step.

B

That's

why

you

can

achieve

a

constant

time

optimization,

so

it's

model

free,

typically

for

optimism.

Our

typical

I

know

always

in

an

optimization

technique.

You

need

to

run

it

on

top

of

an

analytical

model

of

a

simulator

right,

and

then

the

analytical

technique

will

search

over

many

configurations

using

these

analytical

model

or

simulator.

To

try

to

find

which

is

the

optimal

one:

that's

not

the

case

for

DRL

you

can

make

it

learn

directly

on

your

infrastructure,

which

means

that

you

don't

need

to

simplify

anything.

B

It

doesn't

matter

how

complex

your

net

worries

and

with

enough

layers.

Although

this

has

to

be

confirmed

with

enough

with

a

deep

network,

you

should

be

able

to

learn

it

and

it's

a

black

box

optimization.

So

any

kind

of

optimization

is

tailored

to

the

problem

that

it

is

trying

to

optimize.

So

that's

not

the

case

for

general,

you

only.

So

if

you

want

to

change

your

optimization

function,

you

just

need

to

change

the

reward

function,

but

not

the

algorithm.

You

have

the

same

algorithm

for

different

reward

functions

and

in

a

duration,

optimization

technique

any

typically.

B

What

you

do

is

that

you

tailor

your

algorithm

to

your

optimization

goals.

Then,

of

course,

it

has

many

challenges

which

I

will

also

like

to

discuss.

The

first

one

is

training,

okay,

so

yeah,

it's

it's

very

cool,

so,

but

it's

very

hard

to

think

that

I

will

allow

an

agent

to

run

my

network

during

the

exploration

phase,

where

it

has

to

learn,

because

it

will

hit

actions

randomly

to

learn

what

is

happening

with

infrastructure

like

a

kid

right,

so

it

has

to

understand

how

things

work.

B

So

at

the

beginning,

it

will

be

a

little

bit

harsh

on

your

infrastructure

and

probably

you

are

not

going

to

allow

training

to

happen

online

and

that's

not

a

come.

That's

a

common

issue

not

for

us,

but

for

anyone

willing

to

apply

these

kind

of

techniques.

So

what

other

people

are

doing

is

well.

You

can

train

it

on

a

simulator,

that's

something

that

you

can

do,

and

people

are

doing

that

in

other

areas.

Then

you

lose

some

advantages

and

then,

once

you

have

the

system

trained,

you

put

it

online

to

work

in

the

real

network.

B

B

Then

there

is

a

second

challenge

and

that's

I

will

say

a

little

bit

more

conceptual,

but

I

will

say

it's

it's

bigger,

which

is

the

lack

of

explained

ability,

so

yeah,

deep,

neural

networks

are

very

cool.

My

students

are

very

happy

working

with

it,

but

at

the

end,

what

you

get

is

a

black

box.

It's

something

that

you

don't

know

how

it

works.

B

B

I

understand

that

from

the

industry

perspective,

that's

a

big

issue,

because

you

cannot,

it

won't

be

able

to

offer

you

anywhere

auntie's

and

it

won't

be

able

to

offer

you

any

kind

of

turret

shooting

if

it

breaks

you

don't

know

why

why

it

is

not

working

and

you

don't

know

how

to

fix

it

beyond

trained

it.

More

and

that's

I

understand,

for

the

industry

perspective

a

big

big

issue

also

in

terms

of

liabilities

and

so

on

and

again

that's

not

an

issue

just

for

us.

It's

an

issue

for

anyone

using

these

kind

of

techniques.

B

B

So

let's

say

that

you

have

an

intent

language,

you

compile

it

and

you

render

it

into

a

reward

function,

which

is

the

mathematical

representation

of

your

intent

language.

Once

you

have

done

that,

you

install

it

on

your

agent

and

then

the

agent

should

be

able

to

operate

following

that

policy.

So

I

believe

that

what?

Hopefully

you

find

it

relevant

for

for

this

working

group

for

this

research

group.

B

But

this

makes

me

ask

myself

some

questions

for

which

I

don't

have

an

answer

that

why

we

call

it

research

working

group,

I,

guess

the

first

one

is:

can

we

actually

represent

any

Network

policy

with

these

functions?

I

don't

know

because

those

functions

have

some

constraints.

For

instance,

they

are

continuous

and

so

on.

I,

don't

have

an

answer

for

that,

but

this

is

an

important

question.

B

If,

if

we

are

willing

to

go

that

path,

we

need

to

understand

if

this

reward

function

can

represent

any

kind

of

network

policy

and

then

okay,

how

we

can

compile

this

intent

language

into

these

River

functions.

So

as

a

summary

and

I'm

very

quick,

so

I

really

believe

that

this

is

an

amazing

technology.

B

It

is

to

me,

at

least,

it

really

represents

the

full

realization

of

an

autonomous,

intelligent

network,

which

is

something

that

we

have

been

discussing

out

in

the

past

and

to

me

this

is

what

and

how

I

understand

this

kind

of

autonomous,

intelligent

system,

but

it-

and

it

has

many

many

advantages.

Real-Time

operation,

plug-and-play,

no

configuration

just

pick

your

reward

function,

but

it

comes

with

important

challenges,

so

the

first

one

is

training

that

online

training

it's

challenging

and

the

second

one

is

that

it

doesn't

offer

any

warranties.

B

D

E

B

B

That

we

were

functioning

is

a

continuous

function

which

you

can

understand

it,

that

the

output

is

a

scalar

value,

so

the

higher

is

the

reward,

so

the

agent

is

trying

to

find

or

is

trying

to

act,

to

find

to

increase

all

always

this

value.

Okay,

so

the

higher

is,

is

the

better.

So

the

reward

function

should

be,

which

is

the

distance

between

in

a

mathematical

terms,

which

I

don't

know

how

to

do

that.

B

F

Presentation

or

I

want

to

ask:

do

you

consider

it

on

virtual

agent?

Because

if

we

simulate

the

top,

there

are

multiple

or

entity

in

multiple

our

motif

or

server.

So

I

guess

they

you

think

about

a

single

agent.

So

it

is

more

proper

to

SVN

cases.

But

if

we

reflect

or

your

network

infrastructure

is

involve

error

to

consider

multiple

age

on

vertical

Asian.

B

B

Will

12

vertical

or

will

12

wait

for

vertical

yeah?

So

we

only

did

one

Asian

on

top

of

an

SDN

controller,

finding

the

optical

optimal

configuration

for

a

single

network,

no

and

no

vertical

asian

anything

else

with

just

one

and

I:

don't

I!

Don't

for

this

scenario,

I,

don't

see

why

we

need

more

than

one.

G

B

Okay,

that's

a

let

me

elaborate,

so

another

limitation

of

these

kind

of

agents

is

that

the

action

is

space

cannot

be

very

large,

so

the

act,

the

output

of

the

agent

cannot

be.

Okay.

Give

me

the

CLL

configuration

formal

all

my

notes.

It

has

to

represent

like

levers

like

in

a

video

game

right,

so

a

few

levers

that

represent

how

you

steal

your

network.

So

in

this

case

the

Asian

was

choosing

the

weights

of

the

links

similar

to

our

or

SPF

I.

Guess.

G

B

A

H

H

Cvs

one

of

the

civil

one

of

the

popular

generative

models,

and

they

have

achieved

a

great

success

in

a

an

area

the

the

character

is,

it

can

extract

a

hidin

figure

from

the

training

site

data

and

then

reconstruct

the

distribution

model

of

the

focused

object,

because

this

this

24

faces

this

face

is

are

not

real.

They

are

generated

by

the

Civic

models.

H

If

we

input

the

label

such

as

we

want

a

female

or

male,

we

want

adult

or

children,

and

then

the

CV

can

generate

the

virtual

phases

for

us

and

why

we-

and

here

we

want

to

introduce

this-

can

conditional

aberration

or

auto

encoder

into

network

management

to

provide

inference

ability

for

the

qsr

performance.

This

is

our

basic

sort.

We

think

that

the

network

is

a

complex

system

and

the

curious

parameters

have

some

Haydn's,

that

is,

statistics

feeder,

which

is

hard

to

express

by

the

simple

distribution

formulas

or

the

combination

of

the

distribution

formulas.

H

Therefore,

we

can

use

the

CVA

II

to

model

the

network

us

and

then

we

can

use

the

Train

model

to

generate

the

new

samples

and

finally,

we

construct

reconstruct

the

curious

distribution

according

to

their

generated

samples.

That

is

always

truth,

be

a

pointer.

That

is,

we

input

a

condition

like

we

want

a

female.

H

If

you

want

a

busy

busy

tank

us

description

to

the

CVA

II

models,

and

then

it

can

generate

a

distribution

of

the

QoS

parameters,

and

then

we

can

do

some

actions

such

as

and

such

as

we

can

implement

the

proactive

operations

to

reserve

the

bandwidth

or

the

priority

sightings

or

migrate.

The

flow

of

we

focused

or

other

other

application

is.

We

can

evaluate

the

actions

that,

if

we

migrate

a

flow

or

VPN

sighting

to

a

new

path,

we

perform

well

enough

for

our

for

our

SLA

okay

and

this.

H

This

is

our

plan,

but

I

prefer

to

close

the

the

the

black

box.

As

this

looks

more

more

simple

here,

we

focus

on

the

icarus

variation

that

caused

by

the

traffic

such

as

we

pay

more

attention

on

the

queuing

delay

than

the

transmission

delay.

We

so

here

we

use

the

traffic

metrics

as

the

label

or

or

say

it,

the

condition

of

the

CVAG

model,

and

then

we

use

the

Q

s

as

the

other

value

like

this.

H

The

end

to

end

delay

may

be

ten

point

two

and

we

we

set

the

label

as

one

and

then

we

can

got

the

training

training

process

and

then

we

go.

We

obtain

the

CV

model

and

after

we

we

got

model,

we

can

infer.

We

can

infer

the

Q

s

distribution

by

into

the

traffic

metrics

as

a

label

or

as

the

condition,

and

then

it

will

generate

the

qsr

metric

samples

for

us,

and

then

we

can

use

these

samples

to

create

the

Q

s

distribution

in

any

conditions.

H

Here

we

have

a

simple,

simple

cases.

Is

a

demo

experiment

that

we

regenerate?

We

set

up

a

tent,

an

eye,

a

traffic

label

from

one

to

nine,

and

we

set

a

Hyden

rules

that

the

Q

s

label

is

the

the

Q

s.

Label

is

a

normal

distribution,

obey

the

normal

normal

distribution,

by

the

mean

the

mean

value

of

label,

multiple

10

and

the

very

earth,

and

the

variance

is

three

like

this.

We

have

this

distribution

of

the

proper

probability,

distribution

of

the

Q

s

parameters.

H

It

is

virtual

regenerated

and

then

we

use

1,000

sample

to

Train

the

CVAG

models

and

we

took

it

will

take

about

200

seconds

for

per

training

time

after

we

got

the

CV

model

and

we

will

test

whether

the

model

can

reboot

the

the

probability,

a

distribution

for

our

training

set.

So

we

input

the

training

label

from

1

to

9

such

as

you

will

input

the

label

to

whether

we

expect

the

CBA

model

can

output

Q

as

distribution

with

the

min

value

of

20

and

then

the

VAR,

the

variance

of

3.

H

That's

a

result

for

the

non

label.

We

can

obtain

the

accurate

distribution.

The

gray

is

not

very

clear.

The

gray

bar

the

great

curve

and

the

gray

bar

here

is

the

cases

or

the

samples

that

we

generated

and

the

red

one

is

the

the

cases

the

samples

the

CV

model

generated

for

us.

We

can

do.

You

can

see

that

this

it

looks

similar,

they,

the

arrow

of

mean

standard

and

the

19

point

like

this.

So

that

is

not

a

very

important

one,

because

many

many

AI

models

can

do

that.

H

The

answer

is

yes

for

the

non

label.

We

can

also

obtain

accurate

distribution.

So

here

this

label

11

to

14,

that's

very

important.

Somebody

may

say:

hyeon's

in

your

distribution

is

too

simple

and

you

have

only

two

parameters

and

one

of

the

1

now

is

a

fixed,

but

for

the

it

is

based

on

the

the

knowledge

of

human,

bring

that,

because

at

the

Machine

can

not

know

what

is

what

is

the

concept

of

the

normal

distribution?

H

Okay,

this

model

required

required

new,

maybe

new

merriment

technologies

to

feed

to

feed

such

as

we

need

more

high

frequency

and

accurate

accuracy

data

and

then

to

see

the

feed

out

this

model,

and

also

we

will

face

to

the

data

expression

and

the

transmission

problems.

Now

we

have

two

moles

for

the

CAE

training.

The

first

one

is

as

part

mode,

which

means

that

we

trained

the

model

severe

model

for

each

pass

as

a

unit

such

like

this

one.

H

H

If

we

can

do

that,

we

can

combine

each

of

the

note

as

a

past

as

the

path

q,

s

distribution,

but

now

we

we

cannot

simply

add

two

or

multiple

note

q

s

distribution

into

one,

because

it's

not

reasonable

in

mass,

so

why

we

use

severe

mode

when

why

not

use

other

mode?

We

have

some

advantages.

The

first

one

is

it

performed

quite

well

for

known

distribution

and

better

than

the

competitor

competitors.

One

of

the

competitors

in

the

area

is

against.

H

H

So

here's

a

conclusion

that

CV

can

can

use

to

model

the

network

Q

s

and

the

facility

has

been

approved

and

sir

and

second

one

is

severe-

has

many

advantage,

especially

it

can

infer

the

unknown

cases

and

third,

one

is

pass

mode

is

easier.

We

have

implements

that

we

will

try

to

explore

the

solution

for

the

node

mode.

It

is

still

a

challenge

and

said,

and

and

finally

we

can-

we

need

new

measurement

technologies

to

support

our

data.

Capturing

and

here

are

some

information

for

you

and

welcome

software.

I.

Do

not

think

it.

H

B

H

D

I

J

J

J

You

may

want

to

use

for

doing

some

Q

s

as

well

and

particularly

as

there

is

one

topic

which

is

traffic

classification,

when

you

aim

to

label

your

traffic

or

traffic

flows

with

some,

of

course,

letters

and

profiles,

which

can

be

different

depending

on

the

context

should

target

it

can

be,

for

example,

can

be

the

type

of

application

which

is

used

may

be.

The

user

may

be

the

type

of

the

attack

if

you

are

in

security

context

and

so

on.

J

If

you

want

to

try

some

service-

and

you

can

use-

maybe

DNS

name

and

so

on,

so

this

kind

of

standout

technique

in

order

to

to

know

what

type

of

traffic

or

what

equal

its

to.

And,

of

course,

if

you

can,

you

can

maybe

do

some

deep

packet

inspection

or

looking

at

the

content

of

the

packet.

In

order

to

add

a

bit

of

your

father

is

inside.

J

Of

course

it

has

a

wallet

change

and

no

a

mini

application

rely

on

same

framework,

so

it's

very

hard

to

distinguish

them.

Many

of

them

are

web-based

meaning

that

the

almost

all

use

the

same

protocol

I

mean

with

some

HTTP

HTTPS

and

so

on.

Of

course,

we

are

all

using

now

cloud,

CDN

and

so

on.

So

IP

addresses

are

not

so

very

valid

and

Iquitos

of,

for

example,

original

traffic,

and

of

course

there

is.

The

problem

is

the

encryption

technique?

J

Okay

with

privacy

and

concern

that

weighs

more

and

more

traffic

is

encrypted,

so

meaning

that

mainly

today,

it

would

be.

Maybe

a

big

carry

tacular.

A

lot

of

traffic

is

web-based

and

encrypted.

So

basically,

HTTPS

is

that

we

have

applied

a

lot

on

HTTPS

all

right.

So

yes,

of

course,

that's

good

to

have

encryption,

I

mean

for

user,

protecting

in

particular

you

your

privacy,

but

on

some

who

it's

also

legitimate.

J

If

you

do

some

network

operation

to

know

what

type

of

try

think

is

going

on

in

order

to

do

some

operation,

so

there

is

this

kind

of

need,

in

particular

for

security.

If

you

want

to

apply

some

security

policy,

you

need

a

bit

to

know

what

happens

and

then

somehow

you

mean

don't

need

to

break

and

you

should

not

break

the

user

privacy.

A

lot

of

solution

today

just

rely

on

an

HTTP

proxy

in

the

middle.

J

In

many

I

mean

many

companies

ensign,

which

I

think

is

not

good,

and

so

the

question

is:

can

we

do

some

directly

in

first

work?

I've

done.

Can

we

do

some

monitoring

and

fht

PPS

without

of

cross

decrypting,

whether

it

inside

so

this

is

a

first

example

and

very

rapidly.

I

will

show

you

because

I

present

you

this

example

in

a

previous

and

MLG

session.

So

that

is

that

ok,

so

different

kind

of

things

you

can

do

you

can

try

to.

J

If

you

look

at

where

we

can

try

to

look

at

what

is

a

web

page,

which

web

page

is

loaded

which

is

called

basically

a

website,

fingerprinting,

a

lot

of

record,

don't

try

to

categorize

traffic.

Is

it

fine

Apple

web?

Is

it

superior?

Is

it

a

voice

over

IP

and

so

on?

And

you

are

being

citizen

either

way?

We

try

basically

to

know

what

is

a

service

which

is

used.

So

basically

we

need

to

know

the

service

provider.

We

have

lay

your

classifier.

We

first

wait

to

know

what

is

a

service

provider

like

Google?

J

Is

it

Dropbox

is

a

book

and

Sun

and

then

the

different

services

that

the

heart

behind

so

we

will

want

a

z4

for

each

service

provider.

We

use

simple,

it's

a

simple

or

Revere.

It's

a

regular

decision,

tree

algorithm

to

do

that

and

one

one

main

I

mean

when

main

contribution

was

looking

so

digital

right

here.

Most

of

the

features

that

we

use

our

pretty

much

the

same

and

we

extended

a

bit

rather

than

look.

He

can,

for

example,

packets

I.

J

We

just

extract

the

encrypted

payload

and

we

apply

some

statistic

on

the

encrypted

pill,

and

actually

this

gives

better

results

here,

yeah

very

rapidly,

some

some

result

and,

as

you

can

show

here,

we

look

for

each

service

provider

in

which

a

range

of

co-education

we

are,

and

mainly

for

50

168

service

Friday.

We

have

a

classification,

so

we

are

really

able

to

know

what

is

the

service

I

use

for

this

provider

without

the

encryption?

What

is

the

traffic

with

an

accuracy

around

95

percent?

Okay

I

mean

not

in

too

much

into

the

detail.

J

J

We

know

that

there

is

probably

thios

there

is

a

scanning

and

so

on,

and

let

me

to

want

we

want

to

achieve

is

to

have

the

right

side

figure

right.

We

basically

have

the

different

kind

of

slices,

which

represent

the

scanning,

a

TCP

scan

we

have

on

the

docket

and

to

do

that.

We

try,

with

some

I'd,

say

no

sure

to

like

share

ricotta,

and

we

were

not

really

satisfied

with

the

result.

You

have

to

still

mainly

our

components

and

we

use

another

technique.

So

it's

traffic

is

not

on

fifty

by

the

primes.

J

We

should

not,

of

course,

in

analyze

individual

packet,

but

we

should

correct

them

in

order

to

find

the

right

pattern.

So,

even

if

it's

not

so

simple,

so

we

use

G

da,

which

is

topological

the

tanneries,

we

only

use

one

part

of

the

TDI

here

we

are

no

bit

further.

What

was

the

mapper,

which

is

R,

is

a

first

step,

which

is

basically

you

can

see

that

as

a

partition,

basically

stirring.

J

So

we

have

a

big

space

of

data

multi-dimensional

data

and

then

we

compose

few-

let's

say

a

per

cube

in

that,

and

we

are

this

intermediate

representation

that

we

built

in

the

middle,

which

is

based

on

kind

of

clustering,

and

then

we

measure

all

the

result

together.

Maybe

you

can

discuss

more

if

you

want

more

detail

about

this

technique,

but

with

this

technique

we

have

been

able,

of

course,

to

be

able

to

to

level

a

bit

more

they'd

help

you

have

in

the

document.

J

J

You

want

to

be

able

to

detect,

for

instance,

if

you,

if

you

think

about

security,

and

this

is

more

compliant.

If

you

are

a

bit

against

mass

surveillance,

because

you

don't

want

to

try,

for

example,

in

individual

user,

but

maybe

you

want

are

any

service

usage,

but

only

ones

have

issues

that

you

want

to

attract.

J

Of

course

you

have

to

think.

If

it's

you

can

do

your

honor

isn't

a

single.

Let's

say

that

instant,

so

multiple

that

instance

like

if

you

look

at

a

single

flow,

if

you

want

to

correlate

different

flow

together,

I

think

the

signal

technique

is

usually

a

more

efficient

but

is

more

difficult

to

apply.

I

will

so

then

we

have

the

methodology,

which

is

very

not

really

going

to

this.

It's

very

classical,

so

we

straining

and

so

on.

J

One

thing

that

I

want

to

point

out

is

already

point

to

it

and

in

the

mailing

list

as

well,

is

that

a

future

engineering

I

think

isn't

the

core

half

as

a

problem.

Choosing

the

right

feature:

I

mean

you:

can

you

can

use

a

different

algorithm?

We

need

people

just

just

try

a

lot

of

figures

on

without

thinking

first

about

maybe

the

second

slide.

Maybe

thinking

about

what

also

algorithm

really

works.

I

think

it's

really

important

to

know.

J

Use

of

course,

algorithm

like

black

box,

but

also

features

so

one

one

thing

which

I

think

is

very

a

representative

is

a

when

you

do

some

a

distance

metric.

Many

people

just

choose

algorithm

on

the

shelf.

They

use

distance

metric

on

the

Shelf,

for

example,

you

can

use

a

given

distance,

but

most

of

the

time

there

is

nonsense,

I'm

just

looking,

for

example,

at

port

number.

If

you

look

what

number

as

numerical

values,

of

course,

you

can

do

it,

you

can

apply.

J

Distance

can

compute

the

distance,

but

there

is

no

R

meaning

to

compute

a

numerical

or

akkadian

distance

between

port

numbers.

For

instance.

We

need

something

more

advanced

just

to

show

you

some

some

work,

we've

done

so

far.

Here

is

we

look

again

the

darknet

and,

of

course

there

is

some

semantics

in

sport

and

the

idea

was

not

to

automatically.

We

have

also

done

that

the

semantics

of

ports

from

knowledge,

but

to

build

our

knowledge

based

on

SciTech,

her

behavior.

J

J

Just

a

one

slide

in

the

rest

on

so

and

just

to

conclude,

I

really

think

that,

regarding

the

encrypted

use

case,

based

on

our

experience

on

what

you

can

win,

literature

I

think

if

you

target

well

precise

problem,

you

can

easily

find

a

solution,

can

find

algorithm

features

that

could

give

you

some

reasonable

and

good

results.

I,

don't

think

this

opera.

Of

course

it

suppose

that

you

are

adult

Amin

in

very

precise

database

for

different

environment.

So

there

is,

of

course,

some

things

that

you

have

to

fit,

and

it's

not

so

evident

like

that.

J

What

are

remaining

issues

that

most

of

the

case,

we

don't

consider

as

adverse

behaviors,

and

what

I

want

to

do

to

mention

is

that

the

fingers,

of

course,

is

always

evolving.

We

have

new

protocols,

have

kind

of

optimized

protocol.

If

you

look

at

HTTP

and

even

if

you

have

a

GPS,

of

course

on

top,

but

you

have

HTTP

to

so

know,

things

are

multiplexed

are

compressed

and

so

on.

So

it's

very

difficult

today

that

we

can

easily

apply

so

technique

because

you

before,

basically

we

have

one

flow

and

we

try

to

label.

J

Let's

go

if

I

can

do

an

analogy.

Today

we

have

the

big

Chanel,

where

we

put

everything

in

a

generic.

It's

all

the

same

application

all

encrypted,

it's

mixing

stationary

use

and

so

on.

It's

very

more

far

more

difficult

that

you

can

read

in

most

of

paper.

Actually,

if

you

look

with

the

new

context

and

I'm

that.

J

Okay,

so

it

depends

on

because

I

represent

a

different

use

cases

in

first-year

HTTP

use

case.

It's

quite

well

balanced

because

we

control-

or

we

collected

that

I

said,

of

course,

was

G

as

if

example,

for

the

darknet.

It's

not

so

well

balanced

because

I,

of

course

it's

real

data

that

we

gather.

So

we

cannot

really

control

the

different

proportion.

J

H

From

Paulie

I

have

a

question

that

you

said

that

you

need

to

collect

a

data

post

from

from

then

from

the

network

and

out

of

the

network,

and

my

question

is

how

you,

how

you

align

this

to

two

types:

two

types

of

data,

because

we

need

to

build

up

the

relationship

between

the

in

internal

internal

and

thank

you.

You.

J

Sure

I

mentioned

that

I

didn't

really

focus

on

that.

But

that's

true

that

in

cases

we

went

to

analyze

traffic,

we

need

also

some

knowledge

which

are

which

is

out

of

the

network

and

then

III

deepen

the

case.

Maybe

to

cite

an

example,

for

instance,

we

have

this

dark

net

space

and

also

we

have

also

only

pots.

So

there

are

two

different

I

mean

both

on

it

well,

but

two

difference:

Network

space,

for

example.

J

We

are

collecting

what

possible

which

I

use

by

the

attackers

when

they

try

to

connect

to

ssh

to

on

me,

but

and

we

can

fight

stance

if

you,

if

you

look

in

our

database,

we

can

see

that

that

some

hoes

are

some

changes

is

a

password

which

I

use

because

I

try

together.

If

I

get

some

other

devices

vio

two

devices

at

that

time,

and

so

you

can

see

also

the

same

change

in

the

port

which

are

targeted

or

in

the

in

the

document.

And

of

course

we

it's

really

depends

on

the

context.

J

L

L

L

F

J

If

I

understand

value,

what

question

was

regarding

the

features,

so

we

have,

we

have

difference

at

a

feature.

I

was

very

rapid

and

future,

so

classical

feature

has

features

that

you

extract

from

state-of-the-art.

That

has

been

used

for

I

should

GPS

traffic,

and

then

we

have

selected

set

of

features

that

then

you

have

full

features

which

is

state

of

just

features,

plus

our

new

features

on

encrypted

data,

and

then

we

have

features

which

is

a

selection

among

them.

If

I

go

back

to

once.

J

Okay,

here

you

can

see

what

other

the

selected

feature,

which

is

the

one

are

pouring

in

Pola,

the

ones

that

we

had.

It

is

user

as

one

from

state-of-the-art,

which

are

the

selected

feature

which

are

basically

based

on

an

information

gain

that

we

selection

and

then

so.

What

means

the

tables

at

for

each

line?

J

We

look

at

the

number,

so,

for

example,

the

first

line

is

the

number

of

service

provider

for

which

we

were

able

to

classify

between

95

and

Android

percent

of

the

service

which

have

been

used

and

so

on,

and

then

we

have

the

different

bins

and

so

for

so.

The

first

thing

is

the

one

most

from

penalty

for

the

best

classification,

because

it

means

that,

for

if

you

use

silicate

feature

51

of

service

provider,

I've

been

steep.

Services

of

59

of

service

provider

have

been.

M

J

J

The

idea,

because

I

think,

if

you

look

at

this

particular

gene

algorithm,

it's

I

would

call

near

we

had

time,

because

our

initial

goal

was

to

build

HTTP

firewall.

So

it's

I

think

in

terms

of

classification,

it's

very

fast

so,

but

the

problem

is

that

we

need

to

have

the

full

HTTP

session.

So

it

means

that

if

you

do

a

firewall,

you

cannot

block

the

connection.

You

need

to

observe

entirely

and

then

block.

M

J

N

N

J

N

O

Curatu

compeller

I

have

a

comment

for

you,

which

is

as

you're

doing

this

stuff

and

saying

how

well

you

can

classify

based

on

not

just

classical

features,

but

all

these

other

features

you're

suggesting

to

people

how

they

can

hide

their

traffic

better,

so

I

can

pad

my

packet

so

that

they

all

look

roughly

the

same

size.

I

can

adjust

the

inter

packet

gap

so

that

that

information

goes

away.

Just

a

comment.

Okay,.

P

So

the

motivation

is

the

following:

so

in

the

future

hyper-connected

society,

we

believe

that

we

will

have

a

major

challenge

in

controlling

and

managing

large

networks,

and

you

can

have

in

mind

the

AI

enabled

cloud

hosted,

applications

connected

through

5g

and

interconnecting

other

thousands

of

IOT

devices

until

end-users.

So

in

such

a

large

network,

traditional

approaches

to

management

will

be

challenged

because

they

require

and

the

link

of

a

model

in

advance

and

then

acting

and

the

reasoning

contact

model

a

while

in

such

a

large

and

complex

systems.

P

It

would

be

hard

to

learn

in

advance

the

model

that

maintain

it

update

it

because

it's

dynamics

of

this

large

system-

and

we

are,

after

a

noble

approach-

the

concept

of

learning

in

interaction

introduced

and

derived

from

reinforcement

learning.

So

the

idea

in

years

has

been

already

presented

by

the

first

presenter.

So

let

me

go

work

quickly

through

the

high-level

concept

here.

P

So

instead

of