►

From YouTube: IETF101-TCPM-20180319-0930

Description

TCPM meeting session at IETF101

2018/03/19 0930

https://datatracker.ietf.org/meeting/101/proceedings/

B

B

C

B

Here

yet

so,

if

he's,

if

he,

if

he

makes

it

in

time,

you

will

see

a

presentation

about

the

back

in

the

TCP

spec,

we

will

have

an

update

on

TCP,

fast,

open

deployment

and,

if

time

permits,

we

will

look

at

our

the

last

draft

on

this

list,

which

already

had

some

discussion

on

the

mailing

list.

So

working

group

documents

finished

between

the

last

ITF

in

this

one

is

the

RFC

described

in

cubic,

and

these

are

the

working

documents

which

are

active.

The

first

one

I'll

turn

off

back

off

is

actually

in

working

group.

B

Last

call

still,

we

just

figured

out

that

we

don't

have

a

milestone

for

it,

so

we

have

to

restart

everything.

Now,

that's

a

joke,

but

we

really

don't

have

a

milestone

for

it.

We

are

trying

to

fix

this.

Then

we

have

TCP

IDEO.

There

was

an

editorial

update,

but

it's

just

updating,

typos

fixing,

typos

and

that

stuff

Joe

said

they

are

trying

to

get

implementation.

Experience.

B

B

B

E

E

Also

market

auctions

essentially

saying

the

same

thing

about

some

of

the

idea:

references

being

RFC's

already.

So

that's

an

easy

fix,

we'll

fix

that,

and

he

asked

if

some

generic

rules

of

thumb

about

the

better

loss

versus

easy

an

adjustment

will

be

in

order.

Our

answer

to

that

was

that

this

really

depends

on

the

congestion

control.

Our

draft

us

ref

does

recommend

0.8

for

Reno

type

congestion

control

and

for

cubic.

E

There

is

a

bit

of

text

somewhere

already

that

says,

the

results

of

our

tests

indicate

that

cubic

benefits

from

0.85,

but

there's

no

actual

actual

specification

in

our

document

about

this

number

next,

then,

we

got

a

pretty

long

list

of

comments

from

Marco.

Some

of

them

are

pretty

easy

to

deal

with.

First

of

all,

there

was

a

wrong

statement

in

section

4-1

related

to

the

timeout

that

isn't

really

about

our

C

system.

E

I

mean

some

argumentation

on

why

I

use

ecn

to

vary

the

degree

of

back

off,

and

we

decided

that

this

paragraph

can

really

just

be

removed.

So

this

isn't

this

isn't

really

specifying

anything

then.

Secondly,

he

wanted

us

to

specify

what

happens

when

Seawind

is

as

a

thresh,

because

I

document

now

says

this

is

only

for

congestion

avoidance,

but

according

to

I,

receive

five

six,

eight

one,

it's

not

clear

whether

you're

in

congestion

avoidance

or

when

Seawind

is

at

Thresh.

E

So

our

suggestion

is

to

be

conservative

and

confirm

with

the

previous

versions

of

this

document,

which

say

that

you

only

apply

this

when

in

congestion

avoidance

now

this

is

only

definitely

the

case

when

cement

is

bigger

than

ssthresh

and

in

line

with

what

Mark

walls

has

suggested.

We

could

explain

that

there

is

a

gray

area.

E

There

is

a

sentence

in

RFC,

five,

six,

eight

one

talking

about

something

being

in

the

gray

area,

which

says

that

this

may

benefit

from

additional

attention:

experimentation

and

specifications

we'd

like

to

say

that

about

the

case

of

cement

being

as

this

rash,

as

well

as

serum

being

smaller

than

SSO

ash,

because

that

is

also

something

that

is

worth

looking

at.

We

looked

at

it,

but

we

don't

spec

it

next

and

then

there

was

a

concern

about

the

lower

bound

of

two

SMSs

being

introduced

in

in

this

RFC.

Now

that

comes

from

it's

an

editorial

thing.

E

E

We

at

this

point

I

just

want

to

say

that

we

never

intended

to

change

anything

about

the

EC

and

behavior,

except

for

this

calculation

factor.

So

we'll

just

fix

the

text

to

make

it

very

clear

that

we're

not

changing

anything

except

for

this

calculation

factor,

if

see

when

can

be

reduced

below

SS

Rashed,

and

so

be

it

we're

not

we're

not

trying

to

change

anything

about

the

RSC

3168

rules,

except

for

this

factor,

and

that's

it.

F

F

B

Any

other

comments,

so

if

that's

not

the

case,

then

I

sent

up

send

out

a

note

today

that

I'm

closing

the

working

of

last

call

and

ask

your

office

to

submit

in

your

document

and

then

we

will

progress

it

and

decide

it

or

your

changes.

So

we

don't

need

a

another.

Working

group.

Last

call

probably

wait

until

we

have

a

milestone.

G

G

Basically,

if

you

see

more

than

one

marking

in

a

round-trip

time,

you

wouldn't

know

at

the

sender

side,

so

accurate

ecn

is

just

changing

the

feedback

from

the

receiver

to

the

sender

and

providing

you

accurate

information

about

how

much

markings

you

have

seen

in

the

last

round

of

time.

Next

slide:

accurate

Sen

provides

capability,

negotiation,

Inspectorate

compatible

compatible

with

classic

Sen,

and

it

has

two

ways

to

send

feedback.

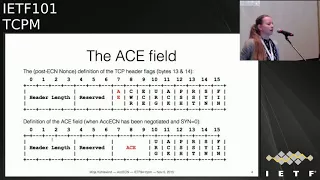

G

One

is

using

three

bits

in

the

TCP

header,

so

basically

reusing

the

East

End

bits

that

are

already

there

from

the

classic

is

in,

and

also

the

previously

the

the

bit

that

was

probably

known

as

the

nonce

easier

nonce

bit.

That

is

now

deprecated

or

not

in

use

anymore,

and

then

it

has

a

second

way

to

provide

you

even

more

feedback

with

the

TCP

option.

G

Next

slide:

that's

how

the

header

looks

like

so

you

have,

and

you

will

still

have

as

accurate

is

the

end.

We

have

the

two

easy

inflex

called

easy

and

cwr,

and

then

the

flag

that

was

currently

known

as

NS,

the

non

spec

will

in

future

probably

be

the

AE

flag.

The

accurate

is

the

N

flick

and

that's

like

what

you

used

during

the

handshake

and

then

later

on,

if

accurate,

easy

and

was

negotiated,

this

field

is

used

for

these

three

bits

are

used

as

a

field

to

provide

you

a

counter

next

slide.

G

This

is

how

the

option

looks

like

also

very

straightforward.

We

have

like

three

fields

for

for

three

different

counters.

The

difference

is

that

the

counter

provided

in

the

TCP

header

is

a

packet

counter,

and

the

counters

provided

in

the

option

field

are

white

counters.

So

basically,

what

this

also

means

is

that

the

packet

counter

does

include

control

packets,

which

don't

have

any

payload,

and

the

white

counters

don't

include

control

packets

because

they

don't

have

any

payload.

G

G

So

there

is

an

implementation.

This

implementation

provides

the

basic

functionality,

but

it

doesn't

cover

all

the

fallback

and

in

special

cases,

so

it's

kind

of

a

proof

of

concept

implementation.

It's

not

a

full

implementation,

but

it

works.

It's

also

a

little

bit

an

outdated

kernel

version,

I

believe

what

the

implementation

does.

It

uses

the

easy

and

sis

ETL

that's

already

there

and

just

sets

it

to

different

value

to

enable

equities.

Yet

so

the

internet

interface

also

stays

the

same,

and

what

we've

done

so

far

is

that

we

have

this

implemented

was

an

experimentation

option.

G

Sorry,

the

ACE,

a

II

flick

in

the

TCP

header

right

away

with

this

publication

of

this

document

to

be

clear

what

this

flag

is

used

for

and

then

the

process

would

be

with

I

used

a

approval

and

that's

what

the

document

currently

says.

So

basically

the

status

is.

We

got

two

more

reviews

since

the

last

meeting

from

Gauri

and

Myka's.

Thank

you

very

much.

I

try

to

address

most

of

their

comments

put

some

little

changes

in

there.

There

are

a

few

more

things

that

need

more

clarification

and

I.

Think

then

the

document

is

ready.

H

Questions

so

this

is

Michael

speaking

from

the

floor,

so

most

of

my

comments

are

purely

editorial

about

the

wording,

but

there's

one

comment

that

I'd

like

to

raise

again-

and

this

is

about

how

to

end

this

experiment.

If

there's

partial

deployment,

because

I

wonder

about

because

we

experimentally

are

signing

the

flag,

if

we

do

a

PS

spec

that

follows

so

how

do

we

do

that?

If

the

PS

spec

is

different

from

the

experiment,

and

this

comes

down

to

the

negotiation

and

as

far

as

I

can

see,

there's

no

way

to

do

that.

H

So

this

the

negotiation

mechanism

is

not

future-proof.

In

that

sense,

maybe

that's

a

downside

or

any

solution

that

I

could

think

of,

would

burn

a

TC

up,

TCP

option

or

whatever.

So

maybe

it

is

the

right

thing

to

do

it

that

way,

but

I'm

concerned

that

this

mechanism

is

not

future

proof

and

I.

Think

this

at

minimum

must

be

documented

and

recent.

Why

to

discuss

it?

A

of

the

the

header

it's

not

possible

to

decide

Robin

ago.

H

She

ation

mechanism

and

I

think

this

must

be

documented,

because

it's

a

downside

of

the

mechanism

and,

as

I

said,

I,

see

a

risk

as

a

road

reduce

your

risk

that

we

have

to

burn

another

head

a

bit

later

and

we

don't

have

many

of

them.

So

it's

different

to

do

options.

We

don't

have

many

header

bits

and

I

think

at

least

this

must

be

documented,

and

this

is

not

only

an

editorial

change

of

some

words.

It's

something

inherently

about

the

mechanism

and

its

downsides.

Yes,.

G

My

take

is

that

the

things

that

we

flick

as

questions

we

have

for

the

experiment

are

not

things

that

change

the

mechanism.

Basically

in

itself,

it's

things

that

if

you

change

them

in

a

in

the

standards,

Trek

spec,

you

can

just

implement

them

differently

and

it's

no

problem.

No

compatibility,

for

example,

the

question

about

how

often

to

send

the

TCP

option.

There's

no

real

I

know

that

the

this,

the

receiving

end

point

doesn't

have

to

rely

on

a

specific

rate.

G

I

G

H

G

H

J

Michael

can

I

can

I.

Just

ask

you

good

to

know

of

you

before

you

go

away

in

case

I'm,

just

trying

to

understand

what

you

mean

by

burning

a

bit,

and

this

is

Bob

Brisco

for

the

scribe,

because

we're

we're

using

a

bit.

That's

already

been

used.

So

in

a

sense,

we're

we're

not

burning

a

bit

and

we're

also

reusing

it

where

we're

using

it

for

negotiation

and

we're

repurposing

it

during

jegichagi.

H

They're

two

different

aspects:

first,

the

split

was

probably

never

used.

It

was

document.

It

was

a

documentation

for

a

proposal

how

to

use

it

probably

was

never

used.

But,

okay,

we

can

decide

in

this

group.

We

will

use

it

in

future.

That's

not

my

problem.

My

problem

is,

if

you

do

a

PS

spec

of

this,

and

we

have

to

change

the

protocol

as

outcome

of

the

experiment.

You

have

to

burn

another

bit

and

I'm

concerned

about

the

second

bit,

not

about

this

one

here.

This

is

the

second

one

that

I'm

concerned

about,

but.

H

Taking

that

there

would

be

a

way

for

I

mean

I've

not

done

the

design,

but

there

would

be

a

way,

for

example,

to

use

the

bit

and

all

this

in

together

with

an

option

and

then

the

peer

spec.

You

remove

the

option

and

then

you

would

be

able

to

distinguish

whether

you

run

the

peer

speck

of

the

protocol

or

the

experimental

one

so

that

there

would

be

ways

to

solve

it,

but

it

ruins

bits

elsewhere

in

the

header.

So

that's

the

downside.

G

I

did

my

not

change

their

actually

because,

like

we

had

in

mind

that

it's

a

receiver

site

decision

to

use

the

option,

because

if

you

know

you're

an

environment

where

options

don't

use,

then

don't

use

it

right.

So

we

reflect

this

out

a

little

more

explicitly.

That

was

like

a

hidden

assumption.

We

had

in

mind

and

I

added

a

sentence

somewhere

that

hopefully

clarifies

that

part.

It.

J

You

read

my

email

yesterday,

not

really:

okay,

Bob,

let's

go

yeah.

There's

a

statement

in

there

that

the

if

you

like

the

data

send

are

the

one

that's

receiving.

The

ACK

must

be

able

to

muster.

Have

the

implementation

to

be

other

as

an

option,

even

though

it's

not,

it's

only

assured

to

send

an

option.

Yeah.

G

J

So

that

if,

if

one

end

has

implemented

it,

the

other

end

can

use

it

I

think

that's!

Okay

with

you!

Isn't

it

because,

because

I

thought,

your

problem

was

that

you

didn't

want

all

the

complexity

of

having

to

deal

with

sort

of

the

failure

of

the

option

and

that

study

was

sending

it.

But

if

you

do

get

it

through

from

from

the

receiving

end,

I

mean.

G

I

think

it's

it's

it's

correct

to

say

and

I'm

not

sure

what

the

exact

wording

I

think

it's

correct

to

say:

you

have

to

have

the

implementation

to

be

able

to

pass

it.

If

you,

if

you

add

another

kind

of

switch

to

say

it's

implemented

but

I

already

know

I,

don't

need

this

information,

though

you

don't

have

to

pass

it.

It's

like

an

implementation

decision

only

it.

G

F

G

I

mean

like,

what's

here:

what's

your

use

case

here

because,

like

I

understand

that

for

someone

in

data

centers,

now

you

don't

want

the

option,

but

you

have

the

code

in

your

in

your

implementation,

because

you

use

a

standard

implementation

that

hopefully

already

has

the

code

and

you

never

use

the

code.

You

never

run

so

that

code

pass.

Is

that

a

problem

for

you.

F

G

J

Bob

Brisco

again

I,

don't

see

the

problem

with

I

mean,

obviously

it's

work

doing

the

implementation.

But

if,

if

you

cause

an

incoming

option,

you

don't

have

to

have

any

of

the

code

that

does

any

of

the

they

fall

back

because

you're

not

sending

an

options

and

all

effect

for

back

as

if

they

were

sending

it.

No

yeah

yeah.

G

H

B

G

B

L

L

So

doing

time-based

instead

of

counting

dewbacks

next,

please

on

another

sort

of

combined

feature

with

rockets

called

the

tailless

probe

and

the

problem

to

deal

with

this.

That

today,

when

you

have

a

tailless,

you

have

to

wait

for

a

time

out

and

tell

loss

is

occurring,

common

and

especially

short

connections.

L

They

say

you

send

two

packets

post

get

lost,

and

here

your

serial

might

be

100,

but

you

only

have

three

packets

to

package

or

three

packet

to

send

and

often

lost,

and

then

you

would,

you

see,

went

to

one

according

to

the

RC

and

which

is

like

a

big

penalty

because

literally

you,

sensory,

packets

and

the

last

to

get

lost.

So

you

take

a

time

out.

The

tail

loss

idea

is

that,

okay,

on

this

kind

of

occasion,

you

can

just

after

a

round-trip

or

two,

we

transmit

up

like

a

pro

packet.

L

What

we

do

here

is

you

simply:

we

transmit

the

last

one

and

if

the

last

one

is

quickly

act

within

an

RTT,

then

you

said

it's

really

just

like

fast

recovery.

There

is

really

not

that

many

packet

loss,

because

you

know

things

are

sort

of

coming

back.

It's

not

like

the

entire

flight

is

lost

for

a

long

time,

so

you

perform

a

fast

recovery

instead

of

like

a

four

time:

I'll

reset

Seawind

style.

L

L

So

that's

the

basic

idea

of

RAC

and

in

this

IDF

we

have

just

uploaded

the

cert

revision,

which

we

add

a

few

things.

One

is

I

talked

about

a

time,

the

reloading

window.

Basically,

when

you

wait

for

how

long

do

we

will

wait

before

you

declare

a

package

lost,

you

can

just

wait

for

an

RTT.

That's

too

aggressive,

so

you

add

some

cushion

and

that

cushion

what

we

call

is

the

reordering

window

is,

they

are

usually

they

might

be

reloading.

L

I'll

talk

about

details

later

and

in

terms

of

deployment

in

the

Google

server

kernel.

We

have

complete

sort

of.

We

place

all

the

doop,

a

special

approach

with

rack.

So

today,

in

our

server

production

colonel

there

is

rock

TLP

and

the

standard

time

out

mechanism

which

is

required,

but

that's

it

you

don't

see

other

like

a

FAQ

or

sweet

you

back

or

yeah

yeah.

There

are

quite

a

few.

This

is

completely

subsumed

next,

please!

L

So

what

is

this

dynamic,

reordering

window?

So

in

the

previous

draft,

the

reordering

window

is

simply

set

to

a

quarter

of

RTT.

Very,

very

simple

deals

with

most

of

the

losses

reordering,

because

a

lot

of

reordering

that

we

are

seeing

is

just

a

very

small

scale.

Let's

say

you

know,

the

packet

that

you

sent

in

the

next

is

delivered

just

a

little

bit

quicker

than

the

packet

that

you

sent

earlier.

L

So

the

reordering

degree

is

small,

but

there

are

cases

in

when

the

reordering

window

can

get

pretty

large,

especially

on

Wi-Fi

links

where

it's,

the

Wi-Fi

retransmission

that

costs

the

reordering

and

Wi-Fi

link

retransmission

is

highly

dependent

on

the

channel

status

and

in

this

case

rack

will

perform

a

spoof,

Schloss

detection.

You

say:

okay,

this

packet

timer

has

fired

and

this

package

should

be

consider

lost.

So

if

you

use

lost

based

congestion

control,

then

it's

gonna

take

a

hit

on

that,

and

in

this

case

initial

idea

we

have

is

alright.

L

This

precisely

measure

the

reordering

degree,

but

it

turns

out

to

be

really

complex,

because

when

you

want

to

do

that,

you

have

to

remember

the

per

packet

timestamps,

even

when

the

packet

has

been

acknowledged

right.

So,

usually

in

TCP

stack

when

a

package

cannot

you

free

the

the

packet

because

it's

been

delivered

in

this

case,

you

have

to

keep

those

extra

state

simply

to

do

this

precisely

and

we

believe

that's

not

really

worth

the

effort.

L

What

we

do

is

we

look

at

this

option

that

have

been

created

a

long

time

ago

called

the

bouquet

sack

and

what

it

does

is

when

you

receive

a

packet

that

covers

a

sequence

that

you

have

already

received.

You

simply

return.

The

sack

option

says:

hey

I

got

this

duplicated

sequence

and

it

has

been

on

implemented

in

all

the

major

stacks,

like

Linux,

iOS

and

Windows

since

all

on

by

default,

and

that's

a

great

indication

because

it

signals

buoys

which

transmission

Jenna.

You

have

a

question

there.

L

F

L

J

L

L

J

L

Oh

great,

so

the

objective

here

is

to

say:

you

want

to

adjust

this

sort

of

reordering

window,

essentially

the

time

now

for

the

packets

to

accommodate

higher

degree

of

reordering.

So

if

two

packet

being

we

order

more

than

a

quarter

of

minimun

RTT,

then

today

the

previous

version

couldn't

catch

that

and

it

will

cause

a

spools

sort

of

loss,

recovery,

yeah.

J

J

J

K

An

answer

to

that,

and-

and

that

is

if

you

take

your

favorite,

very,

very

complicated

recovery

scenario

and

then

insert

in

the

forward

path-

something

that

shuffles

every

four

packets

does

it

still

work,

and

the

answer

is

no,

because

you

can't

do

the

logic

in

sequence:

space.

You

want

to

do

the

logic

in

time-space

and

so

that

the

sort

of

the

limiting

case

is

allow

every

packet

to

have

an

independent

delay.

K

Okay

and

and

but

so

you're

correct

that

there

isn't

specified

a

reordering

threshold

or

an

implicit

reordering

threshold.

But

you

want

to

be

able

to

design

that

independently.

You

don't

want

the

algorithm

to

have

built

into

it

assumptions

about

how

much

reordering

what

is

the

upper

bound

on

the

amount

of

reordering

of

the

reordering

distance

and

so

making

the

algorithm

support.

Arbitrary

levels

of

reordering

such

as

you

can

then

put

in

a

policy

and

optimization

about

how

much

reordering

and

how

much

spurious

retransmissions

you're

willing

to

deal

with.

J

L

So

the

next

I'll

put

more

detail,

but

basically

the

idea

is

to

accommodate

reordering

up

to

an

RTT

further

than

that

Rach

couldn't

do

it

because

in

the

end

of

the

day,

if

you

have

to

pass

one,

send

it

to

them

over

the

moon

and

one

is

a

local

network.

There

is

no

way

we

can

accommodate.

We

ordering

like

that

yeah.

F

F

L

F

L

So

I

think

I

give

that

you

know

forget

what

I

need

to

modify

this

right.

This

is

about

what

happened

is

that

in

the

TSO

processing,

when

you

say

you

have,

you

know

they

say

package.

Seven,

eight

nine

that

gets

sacked

right

in

linux

they'll

be

collapsed

into

one

sort

of

packet

buffer.

If

you

call

that

yeah,

which

you

will

lose

the

timestamps

of

individuals.

M

L

L

Essentially

you

buy

another

quarter

of

monatti,

it's

important

that

it.

You

don't

increase

it

for

every

D

sack,

because

you

could

get

a

lot

of

D

sack

doing

we

ordering

in

a

roundtrip.

So

we

only

do

that

incremental

E

and

again.

This

could

still

miss

that

right.

Let's

say

the

reordering

degree

is

actually

3/4

of

our

TT.

So

for

the

next

round,

Ferb

you

still

going

to

cause

some

spewed,

we

transmission,

and

then

you

just

have

to

learn

again.

L

So

it

takes

some

round

trips

to

attempt

to

a

level.

That's

I

can

accommodate

the

current

reordering

and

then

we

don't

want

to

keep

this

high

reordering

for

forever,

because

the

problem

is

then

your

timeout

gets

too

long,

and

if

there

is

no

reordering,

you

don't

want

to

delay

your

fast

recovery

that

long.

L

So

what

we

do

is

another

heuristics

to

say

if,

after

16

magic,

number

16

last

recoveries-

and

we

see

all

the

recoveries

are

done

without

seeing

further

defects,

then

we

just

reset

it

and

just

repeat

this

process,

and

all

this

design

is

there's

a

lot

of

ways

to

make

it

more

fancier

more

adaptive.

But

we

just

trying

to

make

it

simple

and

good

enough

in

our

test

cases

and

then

doing

fast

recovery,

we

will

temporarily

we

set

the

reordering

window

to

zero

to

be

very

prompt

in

fast

retransmit.

L

So

the

idea

is

to

be

conservative

in

the

beginning,

but

once

we

decided

okay,

we

need

to

go

into

loss

recovery.

Then

we

are

very

aggressive

in

marking

the

packets.

We

could

have

cost

a

lot

of

spirit

retransmission,

but

with

that

decision,

but

it's

sort

of

a

trade-off

that

we

have

to

make

and

again

all

this

reordering

window

will

be

kept

under

the

smooth

RTT.

So

any

we

are

doing

further

than

that.

We

cannot

catch

that

you

will

cost

buoys

with

transmission,

but

I

would

order.

L

I

would

argue

that

for

any

kind

of

case

that

would

be

always

reordering

there.

It's

you

cannot

do

that

perfectly,

like

Bob

next

like

lease,

so

this

is

just

a

showcase

of

the

two

algorithms.

You

know

on

the

right

on

the

left,

its

the

O

one

and

on

the

right,

the

new

one

where

this

is

not

a

this

is

in

emulation

where

we

deliberately

we

order

packets

to

hell

and

the

sacks

are

in

the

purple

color

you

can

see.

We

are

triggering

a

tongue

of

sacs,

including

the

D

sacs

and

in

the

over

chin.

L

It

will

just

keep

trigger

all

this

false

recovery.

So

you

will

see

the

stupid

is

only

60

mega

bits

per

second,

but

in

the

new

one,

you'll

see

initially

we're

still

now.

Ramping

are

very

good,

but

we

are

learning

and

increasing

the

reloading

window.

Once

the

reloading

window

is

pick

enough

to

accommodate

the

reordering,

then

you

don't

cause

further

spoof

retransmission

and

even

under

severe

we

order

you

can

zoom

up

on

your

speed

very

quickly.

L

So

this

is

just

to

show

that

how

this

dynamic

we

ordering

window

works

under

very

severe

reorder

and

if,

on

the

right

hand,

side

the

new

one.

If

I

look

at

a

longer

time

scale,

you

will

see

after

the

while

that

we

essentially

the

reordering

window,

will

rewind

and

you

will

Constance

police

retransmit,

but

you

will

read

nirn

and

then

pick

up

again

thanks

like

lease.

L

So

the

last

thing

is

the

dupe.

A

special

emulation

mode

do

back.

Special

can

still

be

very

useful,

especially

in

ultra-low

our

titties.

Why

is

that?

Because

in

rack

I

talked

about

a

time

out

for

every

packet

right

and

but

in

say

they

are

sinners.

The

oddity

is

less

than

100

microsecond

a

lot

of

time,

but

your

stack,

timer

tick

might

be

much

bigger

than

that

say:

1

millisecond

or

even

10

millisecond.

So

in

this

case

let's

say

you're

reloading

time

is

reality:

300

microsecond,

the

fasted

timer

you

can

fire

is

say

a

millisecond.

L

L

N

So

this

is

a

rich

after

Canada

is

that

statement

actually

true

I

mean

I

was

under

the

impression

that

in

the

example

with

RC

6

to

675,

you

would

enter

loss

recovery

after

the

sack

of

packet

7,

but

once

you're

you're

in

loss

recovery,

the

entire

point

of

66

75

was

to

recover

all

these

four

packets

right

now.

If.

L

O

Alec

this

is

Josi

from

Mike

and

then

about

the

66

75.

You

are

right.

One

two

pocket

has

been

considered

as

a

roast.

That's

know.

If

there

is

any

window

size,

we

can

send

that

packet

six,

because

now

we

in

order

to

in

a

pro,

but

this

might

be

a

rose

but

we're

not

sure.

But

now

we

can

send

it

just

in

case

and

then

we

can

get

feedback.

L

N

L

L

F

L

F

So

this

seems

I

mean

again

a

little

uncertain

about

I'm,

not

pushing

back

I'm

just

trying

to

understand

what

the

intuition

here

is,

because

this

seems

like

early

transmit

is

getting

folded

in

in

a

strange

way,

because

early

they

transmit

tries

to

do

exactly

that

right.

If

seven

was

the

last

packet

that

was

sent,

then

early

retransmit

would

fire

recovery

of

six.

F

F

L

L

Next

slide,

please

so

the

last

one

is

interacting

with

congestion

control.

There

is

the

case

when,

on

a

single

AK,

when

we

receive

a

will

update

the

RTT

and

Rach

can

trigger

a

lot

of

packet.

That's

considered,

it

lost

the

simplest

of

they

say

you

sent

100

packet

right,

only

the

very

last

one

made

it

and

in

this

case

Rack

will

get

an

update

of

the

RTT

and

for

packet

1

to

99

it's

going

to

arm

a

timer

right

once

the

timer

fired.

L

They

say

after

a

quarter

of

RTT

later

it's

going

to

mark

1

to

99

packet

lost,

so

the

in

fly

will

drop

or

come

from

100

down

to

base

essentially

zero

from

99

to

zero.

So

that's

a

big

change

of

the

in

fly

and

if

you

just

implement

the

current

reno

congestion

control,

which

always

sent

an

fast

recovery

first,

it

reduce

the

Seawind

by

half.

Let's

say:

50

right

so

see

you

in

is

50

or

SS

ratio

is

50

and

the

in

fly

is

essentially

zero.

So

what

do

you

do?

Is

you

burst?

L

50,

packets

out

and

without

pacing?

You

is

very

likely

to

induce

another

round

of

loss

which

you

have

to

spend

to

recover.

So

Linux

doesn't

have

this

problem

because

it

uses

this

proportional

reduction,

which

what

it

does

is

that

during

the

fast

recovery

you

either

do

packet

conservation

for

every

packet

sex.

You

say

one

more

packet

out

or

you

do

slow,

sir,

for

every

packet

sack

you

send

two

packets

out,

so

it

does

have

this

situation.

So

we

will

recommend

using

this

fast

recovery

approach,

proportional

reduction.

L

When

you

implement

rack

and

another

helpful

one

is

you

can

do

TCP,

placing

so

that

you

don't

send

a

big

burst?

That

would

be

the

most

convenient

solution

for

a

lot

of

other

situations

too

next

piece,

so

the

development.

How

frog

has

is

near

the

end.

We

don't

plan

any

further

sort

of

algorithm

changes.

Of

course,

little

tunings

is

always

possible

and

Linux

BSD

and

Windows

all

support

that

and

I

think

the

author's

for

authors

see

the

racks.

L

B

E

Mike

Aversa

I

I

may

be

asking

something

very

strange,

because

this

isn't

I'm

a

I,

don't

know

so.

The

thing

is

I've

been

playing

with

a

variant

of

this,

and

that

is

really

not

quite

the

same.

It's

a

bit

more

drastic

in

a

certain

way.

Well,

just

something

that

I

experienced

that

I'm

just

wondering

if

the

same

thing

could

happen

here,

but

I,

probably

not

but

I'm

just

wondering

so

let

me

ask

something:

I

experienced

is

that,

with

the

logic

of

using

time

to

decide

what

has

been

lost

on

what

hasn't

been

lost?

E

Well,

the

were

cases

where

I

ended

up

terminating

recovery

and

I

was

basically

over

and

had

just

reach

and

speeded

everything,

but

I

was

lost.

I

was

left

with

a

large

window

that

I'm

now

able

to

basically

burst

out

immediately

so

I.

What

I

needed

to

create

is

a

phase

of

pacing

that

is

after

recovery.

I,

don't

know.

E

If

that

sort

of

thing

can

happen

to

you,

because

I

think

a

proportional

rate

reduction

would

operate

within

recovery,

so

I

I,

don't

know

if

you

need

to

have

something

at

the

end

of

recovery

where

you,

but

you

can.

You

know,

because

basically

the

a

clocking

always

allows

you

to

send

out

another

packet.

L

So

I

definitely

agree

your

observe

a

shink

as

we

see

the

same

thing,

that's

why

we

recommend

using

FQ

pacing

for

basically

in

general,

don't

just

do

that,

but

for

the

PR

are

this

really

for

just

the

fast

recovery?

But

again

TCP

is

inclined

to

cause

burns

because

of

the

Seawind

in

fly

differences,

and

it's

sort

of

this

is

sort

of

out

of

scope

of

the

loss

recovery.

L

E

E

L

E

E

E

E

L

I

F

I

F

Our

implementation,

we

actually

chose

to

keep

it

for

sequence

number

space,

because,

for

example,

if

you

do

like

the

TS

or

LS

o,

then

it's

a

group

of

packets

that

are

transmitted

at

the

pretty

much

the

same

time.

So

there's

some

implementation.

Efficiency

gained

by

tracking

this

per

sequence

base,

rather

than

per

packet,

which

also

means

that

we

can't

track

the

original

packet

and

the

retransmission

separately

so,

and

the

other

thing

I

found

is

that

there

is

one

case

that

you

talked

about

tail

drop,

which

will

not

be

triggered.

F

L

Think

the

trap

already

that's

exactly

why

we

put

t.o.p

there,

because

in

the

end,

rack

recent

acts

still

requires

some

ACK

right

and

so

for

tail

drops.

Where

you

don't

get

any

ACK.

There

is

just

nothing

you

can

do

in

using

these

time

stamps

you.

You

have

to

send

something

to

trigger

an

ACK

to

sort

of

cost

more

recovery

actions.

So

it

doesn't

matter

how

you

put

the

timestamp

in

in

a

sequence

base

or

in

the

packet

boundaries

yeah.

Okay,

another.

F

F

L

F

But

my

question

is

like

the

safety

property

would

be

just

obtained

by

doing

the

the

correct

inflation

of

the

congestion

window

right.

The

pacing

is

an

optional

part,

because

today,

for

example,

without

pacing,

like

you

know,

the

conventional

loss

recovery

yeah,

similarly

inflates

the

condition

window

so

that,

as

long

as

we

guarantee

that

safety

property,

then

the

other

portions

of

PR

are

not

really

needed

for

track.

L

B

Okay,

so

I

would

say,

give

the

implementers

a

bit

of

time

to

catch

up

with

version

o3,

okay,

so

hoping

that

whoever

has

implemented

version

over

to

has

interest

in

version.

Oh

three

can

report

whether

it

works,

whether

it's

implementable

in

a

good

way

or

if

they

have

any

comments,

so

get

some

feedback

before

starting

a

working

group

last

go

on

that

sure.

J

Bob

Brisco

I'm

wondering

whether

this

draft,

whether

what

you're

trying

to

do

is

standardize

the

algorithm

you

have

thought

of

or

whether

you're

trying

to

standardize

allow

people

to

use

different

algorithms

and

you

you

need

to

describe

what

you

were

trying

to

do

as

a

sort

of

black

box.

Do

you

see

what

I

mean

because

it's

the

former

Saints

right

yeah?

So

it's

it's

documenting

one

algorithm.

That's.

J

Okay,

that

you,

you

might

want

to

say

that

and

and

I

think

the

draft

would

benefit

from

having

what

the

algorithm

is

trying

to

do,

not

just

what

the

algorithm

is.

Okay,

can

you

repeat

that

again

what

the

algorithm

is

trying

to

do,

not

just

what

the

algorithm

is?

In

other

words,

what

are

the

objectives.

J

J

J

F

You

to

be

quick,

pravin,

Microsoft,

sorry,

one

more

question,

so

you

said

the

implementation

is

almost

your

implementation

is

replaced

all

over

the

other

loss

recovery

with

RAC.

Is

it

a

goal

for

the

draft

or

the

RFC

to

say

that

an

implementation

should

do

that,

or

are

you

going

to

leave

that

open

for

implementations

to

do

both

if

they

chose

to

do

so

to

do

both

yeah.

F

L

F

Jenai,

a

very

quick

suggestion

that

this

is

a

working

group

document,

so

I

think

you

should

take

input

from

people

who

have

specific

suggestions

about

motivating

text

and

so

on.

Specifically

speaking

to

Bob

Bob,

you

write

very

well.

I

didn't

be

wonderful

to

actually

have

what

you're

suggesting,

but

I

would

I

would

also

say

you

know,

such

as

text

yeah.

B

C

Okay,

so

so

this

presentation

is

both

the

first

presentation

of

the

contractor

draft

in

front

of

TCP

M,

because

it

was

already

presented

in

mp

TCP

in

Prague

last

year,

but

we

did

not

have

the

opportunity

to

present

in

TCP

I'm

in

Singapore

and

then

an

update

of

the

draft

after

the

working

group.

Acceptance

on

next

slide

please.

So

the

initial

motivation

from

the

convertor

comes

from

the

work

that

has

been

done

in

MP,

TCP

working

group

and

in

MP

DCP.

C

We

see

that

there

are

more

and

peaty

speak

Lions

then

MPT

MPT

CB

servers

and

there

is

a

benefit

of

using

MP

TCP

in

the

access

network

to

be

able

to

combine

different

paths,

even

if

the

server

does

not

support

MP

TCP,

so

that

you

can

go

to

a

controller,

that's

reports

and

P

TCP,

so

that

you

can

benefit

from

the

two

paths

in

the

access

network.

Even

if

the

converter

has

not

yet

been

upgraded

to

support.

Mp

TCP

next

slide,

please.

C

So

what

are

the

objectives

of

the

TCP

converter,

which

has

now

been

accepted

by

the

working

group,

is

to

aid

the

development,

the

deployment

of

new

TCP

extensions?

And

if

you

look

at

the

history

of

the

different

TCP

extensions,

we've

seen

that

extensions

are

first

deployed

on

the

clients

and

then

they

are

deployed

much

later

on

the

server

side

and

in

enterprise

networks

and

service

provider

networks

its.

C

There

is

a

possibility

of

deploying

converters

to

aid

the

deployment

of

some

TCP

extensions,

so

the

TCP

converters

they

act

like

proxies

and

they

will

proxy

connection

initiated

by

clients

and

the

objective

of

the

control

to

draft

is

to

do

this

proxy

without

requiring

additional

entities.

And

one

important

point

of

the

converter

Draft

compared

to

other

solutions,

is

that

the

converter

has

the

ability

to

inform

the

client

of

the

options

that

are

supported

by

the

server

so

that

the

client

can

detect.

C

C

If

you

want

to

do

NP

TCP

in

the

access

network

from

a

smart

phone

to

a

server

that

does

not

support

an

P

TCP,

you

just

use

n

P

TCP

to

the

convertor

the

tax

as

a

TCP

proxy,

and

then

you

have

a

regular

TCP

connection

to

fine

observer

next

slide,

the

next

slide

and

next

again.

So

what

are

the

basic

principles

for

the

converter?

So

it's

an

explicit

TCP

proxy

between

the

client

and

the

final

server.

So

the

client

knows

the

IP

address

of

the

TCP

proxy.

C

C

The

comments

of

the

proxy

in

the

syn

and

during

the

initial

and

shake

and

the

comments

and

response

are

encoded

in

TLB

format,

to

simplify

the

parsing

and

the

processing

of

the

options,

and

there

is

a

way

for

the

converter

to

inform

the

client

of

the

TCP

option

that

are

supported

by

the

server

to

enable

it

to

bypass

the

converter.

If

the

server

supports

the

required

extensions

next

slide

so

now,

I

have

a

set

of

examples

to

show

you

in

principle:

oh,

it

works

and

the

shim

add

in

all

the

figures

you

will

see.

C

There

are

three

colors.

The

green

color

corresponds

to

the

IP

addresses.

The

blue.

Colors

corresponds

to

the

TCP

header,

including

the

TCP

options,

and

the

red

color

is

the

information

which

is

added

by

the

converter

protocol,

and

this

is

part

of

the

byte

stream

and

it

is

encoded

as

a

set

of

tlvs.

So

let's

do

an

example.

C

So

the

client

wants

to

read

your

server

to

be

able

to

read

your

server

where

the

client

will

do

is

that

it

will

send

a

syn

with

the

TF

or

p

on

to

the

converter,

and

we

use

the

TF

option

to

be

able

to

put

data

inside

the

scene

and

the

data

that

we

put

inside

the

scene

is

the

IP

address

and

the

port

number

of

the

final

server.

So

the

convertor

received

the

syn

and

thanks

to

the

TF

o

cookie

that

it

has

provided

to

the

client.

C

It

confirmed

that

the

sin

is

legitimate

and

what

it

will

do

is

that

it

will

initiate

a

connection

next

lightly

to

other

server,

and

it

knows

the

IP

address

of

the

server

from

the

connect

TLD,

which

was

part

of

the

original

scene.

Then

the

final

server

will

reply

to

the

scene

with

a

cynic.

Next

slide.

Please,

and

so

the

connection

from

the

converter

to

the

server

is

now

established

and

the

converter

next

slide.

Please

will

confirm

with

a

cynic

that

the

connection

to

the

client

has

been

established.

C

Possibility

to

add

the

new

TLV,

where

you

put

a

dns

name

instead

of

an

IP

address

in

the

current

version.

It's

only

an

IP

address,

because

we

want

to

be

fast

on

the

conservative

side

and

next

slide.

So,

as

I

said,

one

of

the

benefit

of

the

converter

is

that

you

can

detect

whether

the

final

destination

supports

are

give

an

option.

C

So

let

me

take

an

example

with

MP

TCP,

but

it

would

work

with

other

TCP

option,

so

the

client

creates

a

connection

request

through

the

converter,

with

the,

and

here

we

are

using

an

T

capable

option

which

is

shown

as

NPC,

and

we

are

using

the

RFC

68

when

T

for

bit

so

just

MP

capable

without

the

key

to

the

converter.

Next

slide.

The

converter

will

try

to

establish

an

MP

TCP

connection

to

the

server

the

server

is

MP

TCP

enabled

so

next

slide.

C

Next

slide,

please

to

the

client

so

that

the

client,

by

just

passing

the

TLV,

which

is

part

of

the

byte

stream

of

the

TCP

connection

from

the

converter

to

the

client,

will

know

that

the

server

supports

and

P

TCP

and

knowing

that

the

server

supports

and

P

TCP.

Then

the

client

for

the

next

connection

can

decide

to

bypass

the

control

and

go

directly

to

the

server.

C

So

that's

a

generic

way

of

enabling

the

clients

to

understand

what

are

the

options

that

are

supported

by

a

given

server,

and

it

means

that

you

can

bypass

the

converter

for

this

specific

destination

and

next

slide.

Please

so