From YouTube: IETF101-ROLL-20180322-0930

Description

ROLL meeting session at IETF101

2018/03/22 0930

https://datatracker.ietf.org/meeting/101/proceedings/

A

Okay, we are going to have a presentation on RPL, a little bit with 6TiSCH. Michael is going to present his RPL enrollment priority draft, which is using 6TiSCH. And okay: we sent an email about the IPRs that we have in the current documents, so if you have any comments on that, please send them to the mailing list.

B

I wanted to make a few comments. This is the first ROLL meeting under our new chairmanship, and the session takes quite long, so it's a bit of an experiment and I hope it will turn out well. We have lots of new subjects, and I think they're worth the discussion, especially the discussion about how to use BIER in ROLL and how we're going to move that forward. We all think that needs some time.

E

The problem with using the standard objective functions, as also described in the draft by Qasem, is that networks become unbalanced when you use them: some nodes get overloaded. That typically leads to a shorter network and node lifetime, and as a result you get higher packet loss, probably due to queueing, and higher packet delay. Well, this is very...

E

In this case, a balanced version would look like this, where it's half and half. The objective function proposed by Qasem resolves this problem because it uses the child count to balance the network. However, if the nodes don't all transmit data at the same rate, the result will not be balanced. In this case, this node doesn't send data at the same rate as the other ones, so to balance the load you would actually need to arrange it like this, where the child count per node is not the same.

E

So we propose a new metric called packet transmission rate (PTR), and in our case we use the PTR of each node. It's local information, but someone (Derek, I think; I don't recall exactly) proposed that we could also use a cumulative version of it, where the packet transmission rate is accumulated over the whole subtree.

E

In any case, our objective function basically selects as preferred parent the parent with the lowest PTR. This is an example of a DIO, which contains the DAG Metric Container, and within the DAG Metric Container data you would have something like this: a new routing metric container type, which needs to be assigned, and just a two-octet packet transmission rate. So this is the current state.
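Here is a minimal sketch of the parent-selection rule just described. It assumes each candidate parent advertises its PTR as an unsigned two-octet value in network byte order. The `Candidate` class and the helper names are hypothetical. The metric type code is left out because, as noted, it still needs to be assigned.

```python
from __future__ import annotations

import struct
from dataclasses import dataclass


@dataclass
class Candidate:
    """A neighbor heard via DIO (hypothetical representation)."""
    address: str
    ptr: int  # packet transmission rate advertised by this neighbor


def encode_ptr(ptr: int) -> bytes:
    """Encode a PTR value as a 2-octet field in network byte order."""
    if not 0 <= ptr <= 0xFFFF:
        raise ValueError("PTR must fit in two octets")
    return struct.pack("!H", ptr)


def decode_ptr(body: bytes) -> int:
    """Decode a 2-octet PTR field."""
    (ptr,) = struct.unpack("!H", body[:2])
    return ptr


def select_preferred_parent(candidates: list[Candidate]) -> Candidate:
    """Pick the candidate with the lowest advertised PTR (first one wins on ties)."""
    return min(candidates, key=lambda c: c.ptr)


parents = [Candidate("fe80::2", 40), Candidate("fe80::3", 12), Candidate("fe80::4", 25)]
print(select_preferred_parent(parents).address)  # fe80::3
```

Using a plain minimum keeps the sketch close to the description. A real objective function would probably also add hysteresis, so the preferred parent does not flap when PTR values are close.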

E

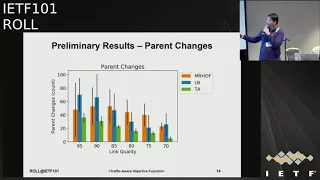

We have some very, very preliminary results. We have a network here, which we simulated with Cooja. You can't really see it in this diagram, but basically the network is heavily lopsided: in this part, some nodes send data much faster than the other ones. So if you try to balance the whole network with either of the standard RPL objective functions, it won't work well, and even with child count, this node will typically end up with more children than it should.

E

The load-balancing objective function already gives better results. Ours does a bit better, and MRHOF tends to do slightly worse because of the load-balancing issue. We also counted parent changes. You will see there's a lot of variability here, which is a side effect of the results being very preliminary, but in this case as well our objective function is a bit more stable and leads to fewer parent changes. We also counted the number of DIOs sent.

E

So far we have done one version, and we already have some issues. After discussing the whole idea, we realized that we need to decide how general we want our solution to be. A lot of things are predefined at the moment, fixed to a constant. One idea would be to either keep it as it is, or reserve a few bits in the metric to also send the data unit, so whether it counts packets, octets, or whatever. Another issue is the time unit; it might also be a good idea to send that.
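One way to carry the unit information just mentioned is to reserve a few bits of the 16-bit field. The layout below is purely hypothetical: 2 bits for the data unit, 2 bits for the time unit, and 12 bits for the value. Neither the bit positions nor the unit codes come from the draft. The layout does illustrate the trade-off: every bit spent on units halves the range of the value.

```python
from __future__ import annotations

from enum import IntEnum


class DataUnit(IntEnum):  # hypothetical codes
    PACKETS = 0
    OCTETS = 1


class TimeUnit(IntEnum):  # hypothetical codes
    PER_SECOND = 0
    PER_MINUTE = 1
    PER_HOUR = 2


VALUE_BITS = 12
VALUE_MASK = (1 << VALUE_BITS) - 1  # 0x0FFF: rate values 0..4095


def pack_ptr(value: int, data_unit: DataUnit, time_unit: TimeUnit) -> int:
    """Pack [2-bit data unit | 2-bit time unit | 12-bit value] into one 16-bit word."""
    if not 0 <= value <= VALUE_MASK:
        raise ValueError("value does not fit in 12 bits")
    return (int(data_unit) << 14) | (int(time_unit) << 12) | value


def unpack_ptr(word: int) -> tuple[int, DataUnit, TimeUnit]:
    """Inverse of pack_ptr; raises ValueError for unit codes that are not defined."""
    data_unit = DataUnit((word >> 14) & 0b11)
    time_unit = TimeUnit((word >> 12) & 0b11)
    return word & VALUE_MASK, data_unit, time_unit
```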

E

We could normalize the PTR to a given capacity, or we could send the maximum capacity as another field as well. Obviously, this adds some network overhead. We also assume that all nodes consume energy at the same rate. There is already the energy metric container, which counts the energy remaining until the battery runs out, but I haven't seen anything that counts relative consumption. To some extent we can deduce that from how much energy remains, but using the consumption rate instead might be more accurate.

E

Finally, at the moment the packet transmission rate is derived from what the node itself is forwarding in terms of traffic. It might be a good idea to extend that, because in the end what you care about is the whole path to the root. So maybe it would be more interesting to have a cumulative version of this metric.
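A cumulative variant could be computed the way additive path metrics usually are. Each node advertises its parent's path PTR plus its own local PTR, and children pick the neighbor with the lowest advertised value. This sketch works under that assumption. The saturation at the two-octet maximum and the function names are hypothetical.

```python
from __future__ import annotations

MAX_PTR = 0xFFFF  # saturate at the two-octet maximum (assumption)


def advertised_path_ptr(parent_path_ptr: int, local_ptr: int) -> int:
    """Path PTR a node would advertise: its parent's path value plus its own rate."""
    return min(parent_path_ptr + local_ptr, MAX_PTR)


def best_parent(path_ptr_by_neighbor: dict[str, int]) -> str:
    """Choose the neighbor whose advertised path PTR toward the root is lowest."""
    return min(path_ptr_by_neighbor, key=path_ptr_by_neighbor.get)


# Toy chain: root (path value 0) -> A (local PTR 10) -> B (local PTR 5)
a = advertised_path_ptr(0, 10)
b = advertised_path_ptr(a, 5)
print(a, b)
```

A child that hears A (path PTR 10) and some other neighbor C advertising 30 would pick A. That happens even if C's own local rate is low, because what counts is the load along the whole path to the root.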

E

At this point, following the discussion we had yesterday, some of these metrics might be interesting to put into the EB information element, so that nodes are better able to do the whole join process when joining the network. Obviously, this could be rolled into one of the priority values we discussed yesterday instead of being a separate thing.

H

What I was actually interested in was node seven. If you also want to balance the children, then it seems you very much want to make sure that seven connects to node 4, so that node 5 has the capacity to accommodate node 8. I'm wondering how you kick seven over that way, because it's not really sending traffic, but it is occupying a child slot. Yeah. Oh, no.

H

But what I understand is this: I can see how node eight needs to make intelligent choices there. What I'm trying to understand is how, in your algorithm, node seven, which is not a high sender, is discouraged from connecting to five, so that node five keeps the capacity for the child. I mean child capacity, not bandwidth.

H

It might be good to do some experiments with enough nodes that you actually overload the child capacity, and then see whether you are still able to migrate or partition your network. Some children, like 12, have no choice; the other one has no choice either, they have to be there. But 7 is critical: it has a choice. Yes, right; these nodes are basically here just to add some...

E

We're talking about the bursty traffic, right? Yeah, yeah. You might have that; that's a problem with all the objective functions. Whenever there are changes, you need to be able to adapt. Depending on how quickly, how often you get the DIOs and they update, adapting might take some time, and you might have some problems. Yeah, for sure.

E

We presented this draft in Singapore. We have some changes, and I'll go over them a bit quickly. We are creating a new TLV in the NSA (Node State and Attribute) object within the metric container. It is used basically for enabling PRE, packet replication and elimination. Since the last version we made some editorial changes: we improved some of the diagrams and some of the wording, we specified how some of the TLV fields should be used, and we also created some Wireshark dissectors for this field.

E

The goal here is that we are trying, as much as possible, to achieve some determinism: reliable communication and low-jitter performance. The idea is to use packet replication and elimination. You replicate packets to multiple parents, and you make sure that whenever the copies arrive at a common node they get eliminated, so you don't get a storm of packets. Nodes also use promiscuous overhearing to increase the number of packets they receive.
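The elimination step can be sketched as a duplicate filter at the node where the replicas converge. The `(source, seqno)` key and the bounded history are assumptions made for illustration. The talk does not specify how duplicates are identified.

```python
from collections import OrderedDict


class Eliminator:
    """Forwards the first copy of each packet and drops later replicas
    (hypothetical PRE elimination point)."""

    def __init__(self, history: int = 64):
        self.history = history
        self.seen = OrderedDict()  # (source, seqno) -> None, oldest first

    def accept(self, source: str, seqno: int) -> bool:
        """Return True the first time (source, seqno) is seen, False for duplicates."""
        key = (source, seqno)
        if key in self.seen:
            return False
        self.seen[key] = None
        if len(self.seen) > self.history:
            self.seen.popitem(last=False)  # forget the oldest entry
        return True


e = Eliminator()
print(e.accept("S", 1), e.accept("S", 1), e.accept("S", 2))  # True False True
```

The history bound matters on constrained nodes. If it is too short, a late replica arriving over a slow path can slip through as a "new" packet.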

E

So we need to extend the DIO messages with some information that allows child nodes to select an alternative parent as well as possible for our purposes. Our draft is what makes this parent selection possible at all. Specifically, the idea is to allow selecting an alternative parent that has a common ancestor with the child's preferred parent. In this diagram, the idea is to be able to select B, which has a common ancestor with A, so B needs to be selected for that purpose.

E

Also, a very quick example. We have a DIO packet with the DAG Metric Container, in it the NSA object, and within the NSA object we request a new, optional TLV which carries the information used for PRE. This information is basically a list of IPv6 addresses of the parents of a node.
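Here is a minimal encoder and decoder for such a TLV. It assumes a simple layout: a 1-octet type, a 1-octet length, and then the 16-byte IPv6 addresses concatenated. The type value `0x01` is a placeholder, not an assigned code point, and the exact field layout in the draft may differ.

```python
from __future__ import annotations

import ipaddress
import struct

PARENT_SET_TLV_TYPE = 0x01  # placeholder, not an IANA-assigned value


def encode_parent_set_tlv(parents: list[str]) -> bytes:
    """Type (1 octet) | Length (1 octet) | N x 16-byte IPv6 addresses."""
    body = b"".join(ipaddress.IPv6Address(p).packed for p in parents)
    if len(body) > 255:
        raise ValueError("too many parents for a 1-octet length field")
    return struct.pack("!BB", PARENT_SET_TLV_TYPE, len(body)) + body


def decode_parent_set_tlv(data: bytes) -> list[str]:
    tlv_type, length = struct.unpack("!BB", data[:2])
    if tlv_type != PARENT_SET_TLV_TYPE:
        raise ValueError("unexpected TLV type")
    body = data[2:2 + length]
    return [str(ipaddress.IPv6Address(body[i:i + 16])) for i in range(0, length, 16)]
```

Two parents already cost 34 bytes with this naive layout. That is the overhead concern raised a little later in the talk.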

E

Suppose you have this network. A is the preferred parent of S, and D is the preferred parent of A. This is the parent set of S, this is the parent set of A, and there is some intersection here. This is the parent set of B. You want to select the B node as the alternative parent for S, since there is an intersection here: D is in the parent set of both A and B, so you select B as an alternative parent.
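The rule in this example can be sketched with set intersection. A candidate is an acceptable alternative parent if its own parent set shares at least one node with the parent set of the child's preferred parent. The topology below loosely mirrors the S/A/B/D example and is illustrative only.

```python
from __future__ import annotations


def alternative_parents(preferred: str, candidates: list[str],
                        parent_sets: dict[str, set[str]]) -> list[str]:
    """Return candidates whose parent set intersects the preferred parent's parent set."""
    target = parent_sets.get(preferred, set())
    return [c for c in candidates
            if c != preferred and parent_sets.get(c, set()) & target]


parent_sets = {
    "A": {"D", "E"},  # A is S's preferred parent; D is A's preferred parent
    "B": {"D", "F"},  # B shares D with A, so B qualifies
    "C": {"G"},       # no common ancestor with A
}
print(alternative_parents("A", ["A", "B", "C"], parent_sets))  # ['B']
```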

E

One issue is that, unfortunately, sending all that data is pretty heavyweight: 16 bytes per IPv6 address. One thing we have done is to remove half of that to somewhat compress it, but again, that's not ideal. We also have some ideas. We are thinking about using the same information not to keep the route of the replicas close to the original path, but to move intentionally away from the original path to get some extra diversity, and not just for the preferred parent.
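One way to "remove half" of each address is to send only the 64-bit interface identifier and let receivers restore the prefix. This assumes all nodes share a known /64 prefix; the talk does not spell out how the halving is done. The helper names are hypothetical.

```python
import ipaddress


def compress_to_iid(addr: str, prefix: str) -> bytes:
    """Return the 8-byte interface identifier if addr lies in the shared /64 prefix."""
    net = ipaddress.IPv6Network(prefix)
    if net.prefixlen != 64:
        raise ValueError("sketch assumes a /64 prefix")
    ip = ipaddress.IPv6Address(addr)
    if ip not in net:
        raise ValueError("address is outside the shared prefix")
    return ip.packed[8:]


def expand_from_iid(iid: bytes, prefix: str) -> str:
    """Rebuild the full address from the shared prefix and an 8-byte IID."""
    net = ipaddress.IPv6Network(prefix)
    return str(ipaddress.IPv6Address(net.network_address.packed[:8] + iid))
```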

H

Michael Richardson. To compress your v6 addresses, code that's probably already present would be in the 6LoRH header, which basically carries a list of addresses and compresses them since they're similar. It does a very good job of compressing them into maybe one or two bytes each. That would be ideal, and the code is probably already present on most systems, so I'll...

E

So that's obviously an issue, depending on what you want to achieve; I don't know if you can necessarily do both at the same time. What we do at the moment is use a normal metric like ETX or whatever, and use our constraint just to constrain the alternative parent. So it works pretty well with other metrics; that's not a problem in itself.

J

The objective functions we usually present at the IETF are very simple, like "use one metric", but we expose a lot more metrics and keep adding more and more, and a real-world objective function may be more intelligent than that. An objective function can be a piece of logic. It's meant to be a piece of logic that ties a number of metrics together, and the way they are tied together may be: first you look at this, because that's my most important concern, and the other things may be tiebreakers.
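The logic of looking at the most important concern first and using the other metrics as tiebreakers can be sketched as a lexicographic comparison over a tuple of metrics. The metric names and their order are assumptions chosen for illustration: a PRE constraint first, then ETX, then PTR.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Neighbor:
    name: str
    satisfies_pre: bool  # e.g. shares an ancestor with the preferred parent (hypothetical)
    etx: float           # link or path ETX
    ptr: int             # packet transmission rate


def rank_key(n: Neighbor) -> tuple:
    # Tuples compare element by element: the first element decides, later ones only
    # break ties. False sorts before True, so the constraint is negated.
    return (not n.satisfies_pre, n.etx, n.ptr)


def choose(neighbors: list[Neighbor]) -> Neighbor:
    return min(neighbors, key=rank_key)


nbrs = [
    Neighbor("P1", True, 2.0, 50),
    Neighbor("P2", True, 2.0, 10),  # same ETX as P1; the lower PTR breaks the tie
    Neighbor("P3", False, 1.0, 5),  # best ETX, but fails the primary constraint
]
print(choose(nbrs).name)  # P2
```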

J

You really want to build two non-congruent paths with the capability to do packet replication and elimination in the middle of your network, because the networks are so lossy and you really want your data to get through in a definite time. So that's your priority. Now, if you can do that, and if you see multiple parents that would fit, then all of a sudden your whole logic may be taking more ideas from some of these component works, ETX-based, or using the load balancing that we just saw. I mean, that's how it's bound together: your objective function will not be one of those simple OFs the IETF has produced, but one which really does what you want. So if you have an industrial consortium which really cares about deterministic properties, or properties closer to deterministic, in this network, you will want this, and you will try to do some traffic balancing if that's possible.

G

Hello, Jadhav from Huawei Technologies. I am going to present RPL observations. These are just observations, no solutions here: some of the issues we found during our solution's analysis, design, implementation, and deployment pilot. We did end up with some sort of solutions to some of these problems, but we definitely believe those solutions are not optimal, definitely not the best in all cases. We wanted to bring these problems to the working group to check whether they make sense and whether we are missing a major point here.

G

So that is what we wanted to check with you. Our deployment, our pilot, was primarily targeted at smart meter networks, with a cardinality of a thousand nodes and sixteen hops, which is quite big, and both storing and non-storing modes of operation were involved. Having said that, most of the problems listed here are related to storing mode, but we definitely believe that some of these problems can be solved in a better way. Okay, so the first problem.

G

One of the major problems we have faced is how to handle DTSN increments. This is non-trivial, especially in storing mode of operation; it is pretty easy to take care of in non-storing mode. How do you increment the DTSN, given that the decisions you make can impact downstream route availability?

G

So this is the first implementer's and deployer's dilemma we had: in which cases should the DTSN be incremented? The DTSN is a sequence number that is part of the DIO message, and it essentially tells the child nodes whether they should send a DAO message or not. There are two problems here. There is currently no way for the target node to know whether the DAO actually reached the border router, that is, whether the end-to-end path has been established. So the current mechanism has to have enough redundancy to make sure that the DAO actually reaches the border router, and only after that should the node ideally start its application traffic. This is very important, because if you start application traffic before the routes are established end to end, you end up clogging the network. You end up queuing.
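The child's side of the DTSN mechanism can be sketched as follows. When the DTSN in a DIO from a parent differs from the last value seen, the child sends a fresh DAO. RFC 6550 treats the DTSN as a lollipop sequence counter with its own comparison rules. The plain inequality test below is a simplification, and the class is hypothetical.

```python
class DaoScheduler:
    """Child-side reaction to DTSN changes (simplified; ignores lollipop arithmetic)."""

    def __init__(self):
        self.last_dtsn = {}  # parent -> last DTSN seen from that parent
        self.dao_sent = 0

    def on_dio(self, parent: str, dtsn: int) -> bool:
        """Return True if a DAO is (re)sent in response to this DIO."""
        previous = self.last_dtsn.get(parent)
        self.last_dtsn[parent] = dtsn
        if previous is None or dtsn != previous:
            self.dao_sent += 1
            return True
        return False
```

Suppose a parent bumps the DTSN on every trickle expiry. Then every DIO triggers a DAO. That buys DAO redundancy at the cost of control traffic, which is the trade-off discussed next.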

G

The ACKs are not going to come back, so ideally you don't want to start your application traffic at that time. So essentially: should the DTSN be incremented on every DIO trickle timer expiry? I know for sure that at least the old Contiki implementation incremented the DTSN on every trickle timer timeout, which is bad. But then I feel there is no other option, because the moment the number of hops increases in storing mode of operation...

G

Now, if you don't increment the DTSN on the trickle timer, your DAO redundancy is too low and you have a high probability of the DAO not reaching the border router. And of course, as the number of hops increases, the probability of DAO success drops sharply. This is something we saw happening in our networks: in terms of network convergence time, 90% of the nodes get joined, but the remaining 10% of the nodes...
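Here is a back-of-the-envelope model of why success drops with hop count. It assumes each hop delivers the DAO independently with probability `p_hop`, and that retries come only from repeated DAOs (for example, DTSN-triggered ones). These are assumptions for illustration, not measured data.

```python
def dao_success(p_hop: float, hops: int, attempts: int = 1) -> float:
    """Probability that at least one of `attempts` independent DAOs reaches the root
    over `hops` hops, each hop succeeding with probability p_hop."""
    single = p_hop ** hops
    return 1 - (1 - single) ** attempts


for hops in (1, 4, 8, 16):
    print(hops, round(dao_success(0.9, hops), 3), round(dao_success(0.9, hops, attempts=3), 3))
```

With a single attempt, success decays geometrically with depth. Repeated DAOs recover much of that loss. This is why implementations are tempted to bump the DTSN often, despite the overhead.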

G

So that is what we have seen. Is there any better way to operate? Storing mode is the mode of operation considered here; in non-storing mode of operation, again, this is not a problem. Another important point is the DAO-ACK, which has some relevance to the previous point, the DTSN increment. If you handle the DAO-ACK properly, it will solve a good number of issues. That is my opinion.

G

There was discussion on the mailing list in 2015 regarding this. With the current RPL spec there are multiple possible interpretations of whether the ACKs should be sent hop by hop or end to end, and implementations differ. For example, RIOT currently implements hop-by-hop acknowledgment. The older version of Contiki implemented hop-by-hop acknowledgment, but more recently the Contiki developers found that they need an end-to-end acknowledgment mechanism. The primary reason is that without it, the target node won't be aware that its DAO has reached the border router. So how should you implement or interpret this particular scheme in the RPL specification? There are pros and cons to each mechanism. Hop-by-hop acknowledgment is actually pretty easy to implement, and there is no state involved. In the case of end-to-end acknowledgment, either some sort of state is involved in the routing table or there is some overhead in network control messaging, so it has its own pros and cons.

G

We eventually ended up implementing an end-to-end acknowledgment, but in a different way; without it we couldn't get our convergence time into any good shape. The other problem here is how to handle aggregated targets in the DAO. The acknowledgment works per DAO message, not per individual target, and a DAO message can contain multiple targets. So how do you acknowledge a particular DAO that has multiple targets when a subset of those targets fails? This is a problem.

G

Another problem is that RPL is not clear on how to handle aggregated targets. It certainly allows them: there is definite wording in the specification that allows aggregated targets, but it does not define failure handling for them. This was discussed on the mailing list in 2015 as well, and I feel it is important to handle.

G

If you look at the two most popular implementations, RIOT and Contiki, each does it in a different way, and today it is impossible to get any sort of interoperability between these two implementations across multiple hops. What we have seen is that RIOT sends aggregated targets, Contiki doesn't handle them, and the network never gets formed. The way I experimented was with a border router and a few nodes, some running Contiki and another running RIOT, but the problem only appears across multiple hops.

G

If you have a smaller network, you are just doing an interop at a very small scale, on a table, with all the nodes speaking to the border router directly, and you are not going to have any problems. The moment you try to scale it, to achieve performance at a bigger scale, that's when all these problems start creeping in. So the DAO-ACK is important. I've already talked about it: it is important to know when the end-to-end path is established.

G

It is important for the application to know when it should start its traffic. With hop-by-hop acknowledgment, the DAO can still fail further up. Hop-by-hop acknowledgment is actually not very useful in my opinion, because you have link-layer acknowledgment as well, so in most cases most implementations disable it by default. I don't know if anyone uses the hop-by-hop acknowledgment mechanism to achieve anything specific right now.

G

There is one more alternate behavior that we have implemented. Basically, the DAO goes up like this, and the root eventually sends an acknowledgment directly to the target using its global address, because the downstream path is already established. This way you have the least overhead in terms of control traffic, and you don't need any additional routing state on the 6LRs. The problem here is that you can't handle aggregated ACKs.
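The target-side gating can be sketched as a small state holder. The node buffers application traffic until an end-to-end DAO-ACK for its latest DAO arrives from the root. The class, the sequence-matching rule, and the buffering policy are hypothetical. The status check follows the RFC 6550 convention that DAO-ACK status values of 128 and above are rejections.

```python
class TargetNode:
    """Holds application traffic until the root acknowledges the node's DAO end to end."""

    def __init__(self):
        self.dao_sequence = 0
        self.route_confirmed = False
        self.pending = []  # payloads waiting for the route
        self.sent = []     # payloads released to the network

    def send_dao(self) -> int:
        """Issue a new DAO; the route counts as unconfirmed until it is acknowledged."""
        self.dao_sequence = (self.dao_sequence + 1) % 256
        self.route_confirmed = False
        return self.dao_sequence

    def on_dao_ack(self, sequence: int, status: int) -> None:
        # Only an ACK for the latest DAO with a non-rejection status (< 128) counts.
        if sequence == self.dao_sequence and status < 128:
            self.route_confirmed = True
            self.sent.extend(self.pending)
            self.pending.clear()

    def send_app(self, payload: bytes) -> None:
        if self.route_confirmed:
            self.sent.append(payload)
        else:
            self.pending.append(payload)
```

Because the root answers per DAO message, a single status cannot say which of several aggregated targets failed. That is the limitation mentioned above.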

G

A lot of discussion has happened on that already. The next point I'm going to discuss, I'm not sure about, and I would really like some feedback here; it is something we have faced. In storing mode of operation, RPL has some state information that must be maintained across node reboots, and for IoT devices flash endurance is a big problem.

G

If your network protocol ends up writing something to persistent storage every now and then, that's not acceptable; at least, that is what our solution requirements team told us. So you have to handle it in some other way. I'm not sure whether anyone has handled this particular problem. Current implementations, including the open-source ones, don't handle it: they expect some persistent storage to exist, but they don't handle it themselves. We feel this is important, and again, it has more impact especially in storing mode of operation.

H

The reason I'm asking is that there are other things, like ASNs in 6TiSCH, other state, and network keys, that you do want to write, but you don't need to write them even daily, whereas there are some other things the network stack needs to keep track of. That's why I wanted to know whether there was some threshold that is a pain for them, and because your other slides were about how often we increment the DTSN as well.

G

Sorry, so you're talking about write amplification and wear leveling, where you change the location in flash so that writes get leveled across multiple locations? I have put some numbers in the draft; please check whether those make sense. I really want those to be reviewed. I've put in some specific information considering write amplification, NAND endurance levels, and MLC and TLC flashes, and provided some data there.

G

Most IoT devices, for example those based on the CC2538 or CC2650, use cheaper flash, and endurance is a big problem; MLC and TLC cells in particular are more affected by wear-out. We mostly don't worry about that one, because we assume it has some other mechanism to handle it. Okay.
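Here is a rough way to reason about such endurance numbers. With ideal wear leveling over `pages` flash pages, lifetime is roughly endurance × pages ÷ (writes per day × write amplification). This sketch assumes one page erase per amplified write. Any numbers you plug in are placeholders, not the figures from the draft.

```python
def flash_lifetime_years(endurance_cycles: int, pages: int,
                         writes_per_day: float, write_amplification: float = 1.0) -> float:
    """Estimated years until wear-out, assuming perfect wear leveling over `pages`
    and one page erase per (amplified) write."""
    if writes_per_day <= 0:
        return float("inf")
    erases_per_day = writes_per_day * write_amplification
    days = endurance_cycles * pages / erases_per_day
    return days / 365


# Placeholder inputs: persisting state once per minute versus once per hour.
print(flash_lifetime_years(10_000, 4, writes_per_day=1440, write_amplification=2.0))
print(flash_lifetime_years(10_000, 4, writes_per_day=24, write_amplification=2.0))
```

The point of the comparison is the scaling: lifetime is inversely proportional to how often the protocol persists state. So a DTSN or sequence-number write triggered on every trickle expiry is far costlier than an occasional checkpoint.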

G

This is again a hot topic. I think it is already being handled in other works in progress, but it definitely impacts the overall solution implementation for us: handling resource unavailability in terms of the neighbor cache table and the routing table. What happens when the neighbor cache goes full? There is currently no signaling, I think. Part of the work is being done in the enrollment draft, but there are certain scenarios which I still feel the draft might not be able to handle. For example, let's say there are four routing table entries to be installed on N1 and N2, but each 6LR here has room for only three routing table entries. How do you handle that? How do you signal it?

G

N3 has only one preferred parent, so all four entries will go through either N1 or N2, and both of them have run out of space. How is this going to be handled? There are some scenarios that are really tricky, especially in the context of handling this together with mobility, and this has been a major problem for us. It directly impacts the network convergence time.
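Here is one possible shape for the missing signaling. It assumes a storing-mode parent reports a full routing table through the DAO-ACK status. RFC 6550 reserves status values 128 to 255 for rejection, but the specific value `STATUS_TABLE_FULL = 128` and the class are hypothetical, not something the spec defines.

```python
STATUS_ACCEPT = 0
STATUS_TABLE_FULL = 128  # hypothetical choice within RFC 6550's rejection range


class StoringParent:
    """A 6LR with a bounded downward routing table (illustrative)."""

    def __init__(self, name: str, capacity: int):
        self.name = name
        self.capacity = capacity
        self.routes = {}  # target -> next hop

    def on_dao(self, target: str, next_hop: str) -> int:
        """Install or refresh a downward route if there is room; report the outcome."""
        if target in self.routes or len(self.routes) < self.capacity:
            self.routes[target] = next_hop
            return STATUS_ACCEPT
        return STATUS_TABLE_FULL


n1 = StoringParent("N1", capacity=3)
statuses = [n1.on_dao(t, "N3") for t in ("T1", "T2", "T3", "T4")]
print(statuses)  # [0, 0, 0, 128]
```

Even with such a status, N3 still needs a policy for what to do next, such as trying N2 or detaching. That is exactly the open question here.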

G

It also directly impacts the packet delivery rate for us. We have an implementation that tried to solve this issue, and we have not gotten anywhere as of now, but we have a lot of observations. If other people have alternate solutions, we would definitely like to understand them, and we would definitely implement them if someone else has a solution to this. All right, that's all. Thank you. Questions, please.

H

Michael Richardson again. This is wonderful work that I would really like to adopt. There's clearly a bunch of updates here. On your points about the DAO-ACK, hop-by-hop versus end-to-end: I implemented end-to-end, because just from thinking about the problem I said, well, it has to be end-to-end or there's no point. It hadn't occurred to me that the spec was ambiguous about that; I think I just assumed that was the case. So clearly there are some bugs in the spec, and I think you've identified those. My suggestion (I haven't looked at your document, I'm sorry) is that you should split it into the ones where clearly there is a problem, regardless of whether you recommend end-to-end or hop-by-hop ACK.

H

In fact, I think we should adopt the document as a working group document with, you know, option A or B, and then make a decision as the working group as to which one we're actually going to pick. The same goes for a bunch of those things. The DTSN increment, I think that's a bug in the spec. I don't know what the answer is, but it's clearly a bug in the spec. So I think those are updates to 6550. That would be a really good document to do, and it should be in some sense non-controversial, right? Then you should have a second document for issues like this one, which honestly we've known about in some sense from the very beginning and don't have an answer for. I think it ultimately comes down to storing mode. Yeah.

H

That may be a very small thing, so I think that's the right answer to me; that's where to go for it. But for the bugs, I would like us to just do the document: let's adopt it and get it published by the end of the summer, because I think it should be non-controversial once we have the two questions in front of us at the next meeting. You know, A or B: let's hum on A or B, whichever one you want.

J

Okay, so I can give you some history on some of those things. I agree that the spec is not that complete on how you use those things. Part of the reason is probably that people didn't necessarily agree on how to use them when we wrote this spec, so we ended up leaving it open so that the next generation would decide, which is what's happening now. So I'm very, very, very happy to see this, and I love your draft as a problem statement.

J

The DAO-ACK was designed for hop-by-hop replies. You're thinking about radios, right? But RPL is a routing protocol; we also run it on wires and very high-speed networks, where you don't have any link-layer ACK. The initial design was not end to end. I mean, if we want to design an end-to-end ACK, let's do it. We don't even have to call it DAO-ACK; we can call it whatever we want, and we don't have to stick to the DAO-ACK format.

J

The DAO-ACK is there for when you can't leverage your lower layer to do it, and RPL is not aware of things like that. Well, you say: okay, if you have a lower-layer mechanism that will do it for you, fine. The idea was that if you have DAO information, you need to pass it to your parent. If that doesn't work, you need a negative acknowledgment and you retry, and if that still doesn't work, then you have an attachment problem and you retry at some point.

J

You need to pass it up the DAG and, yes, it might fail. We have to see the consequences, but we hoped that ultimately retrying through the alternate parents would end up working. We have to talk about what happens when it does not. Just discovering that it did not doesn't help a lot, because you don't know where along the chain things are broken, so we have to figure out a solution.

J

It will take a little bit more than a second to get there, but just understand the end-to-end [unclear]. The initial design is just local, [at the link level?]. It's not end-to-end. Well, if we want something to solve the end-to-end case, let's welcome it. It's not there.

OK, all right, the DTSN. It was initially something that you would trigger if, for instance, you find a discrepancy in what you see, like, you know, the flags in the packets which tell you there is something wrong.

J

I just want to assert that my knowledge of my children is what it is. The idea was that either you ask just your first-hop children or you go, as you said, down through the DODAG. It could just be one hop [unclear]; I think the option is there. Initially, that was the option; I just don't remember what ended up in the spec. We made so many revisions, but in my mind it was either for your first-hop children or for everybody. One design goal behind it was to rebuild the DODAG without reforming it.

J

If you change the version number, you will reform the DODAG. The shape of the topology will change, right, because people will redo parent selection. Now imagine that you do a DTSN increment and trigger yourself. You keep the same DODAG; you just repaint it, right? By painting I mean we put the addresses where they are [unclear]. So that was the difference between the DTSN and the version: the version reforms everything, while the DTSN just retains the existing structure.
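The contrast between a Version increment and a DTSN increment can be shown with a toy model. This sketch assumes a hypothetical `RplNode` class and uses plain integers where RPL uses lollipop counters. It captures only the two reactions described here, reselecting parents versus re-sending DAOs, and does not implement the full RFC 6550 rules.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class RplNode:
    """Toy model of how a node reacts to DIOs (not the full RFC 6550 logic)."""
    name: str
    parent: Optional[str]
    version: int = 0
    parent_dtsn: int = 0
    actions: List[str] = field(default_factory=list)

    def on_dio(self, sender: str, version: int, dtsn: int) -> None:
        # Plain integers stand in for RPL's lollipop sequence counters.
        if version > self.version:
            # New DODAG Version: global repair. The topology may be reformed,
            # so drop the current parent and run parent selection again.
            self.version = version
            self.parent = None
            self.parent_dtsn = dtsn
            self.actions.append("reselect-parent")
        elif sender == self.parent and dtsn != self.parent_dtsn:
            # Preferred parent bumped its DTSN: keep the same parent and only
            # re-send DAOs so that downward routes are "repainted".
            self.parent_dtsn = dtsn
            self.actions.append("send-dao")


node = RplNode("C", parent="B")
node.on_dio("B", version=0, dtsn=1)  # DTSN increment from the parent
print(node.parent, node.actions)     # B ['send-dao']
node.on_dio("A", version=1, dtsn=1)  # new DODAG Version
print(node.parent, node.actions)     # None ['send-dao', 'reselect-parent']
```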

J

One thing was: I find a discrepancy by looking at the data traffic. I'm getting a packet from somebody, and I don't know that somebody. Okay, interesting: do I know all my children, right? That's one reason. Another reason is, you know, I see the packet going up when you think it should be going down, all those things. So there is something wrong, and I may just repaint my graph to see who's behind me. So these are the sorts of reasons.
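Here is a sketch of the data-traffic triggers just described, under some assumptions. The `Router` class and its inputs are hypothetical. `flagged_down` abstracts the direction ("O", Down) flag that RFC 6553 carries in the RPL Option. Either discrepancy makes the node bump its own DTSN so that it relearns who is behind it.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Router:
    """Toy model: spot stale downward-route state from data traffic."""
    known_descendants: Set[str]
    dtsn: int = 0
    reasons: List[str] = field(default_factory=list)

    def on_data(self, src: str, from_below: bool, flagged_down: bool) -> bool:
        """Return True if the packet triggered a DTSN increment.

        from_below: the packet arrived from a node below us (travelling up).
        flagged_down: the packet's direction flag claims it should be going
        down (an abstraction of the Down 'O' flag in the RPL Option).
        """
        found = []
        if from_below and src not in self.known_descendants:
            found.append(f"unknown source {src}")
        if from_below and flagged_down:
            found.append("going up but flagged as going down")
        if found:
            # Bump our own DTSN so the sub-DODAG re-sends DAOs and we relearn
            # who is behind us. A real node would rate-limit this.
            self.dtsn += 1
            self.reasons.extend(found)
        return bool(found)


r = Router(known_descendants={"D", "E"})
r.on_data("D", from_below=True, flagged_down=False)  # consistent, no action
r.on_data("X", from_below=True, flagged_down=False)  # unknown source
print(r.dtsn, r.reasons)  # 1 ['unknown source X']
```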

J

Another one would be: I want to reparent. I lost all my possible parents, and I would like to explore who's behind me, so that I avoid them and try to jump to somebody else. The idea is that I just want to reassert who's behind me, to reassure myself that this guy is not the enemy. Then I jump to it and take the risk. You know, we can stretch.
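The reparenting idea can be sketched as a filter over candidate neighbours. After relearning its sub-DODAG, a node excludes its own descendants, because attaching to one would create a loop, and picks among the rest. `pick_fallback_parent` and its inputs are hypothetical, and the lowest-rank rule is an assumed policy. As the next remarks note, this use was never standardized.

```python
from typing import Dict, Optional, Set


def pick_fallback_parent(
    candidates: Dict[str, int], descendants: Set[str]
) -> Optional[str]:
    """Pick a new parent after every parent in the parent set was lost.

    candidates: neighbour -> advertised rank (hypothetical input).
    descendants: the sub-DODAG just re-learned, e.g. from DAOs sent after
    a DTSN increment. Attaching to any of these would create a loop.
    """
    safe = {n: rank for n, rank in candidates.items() if n not in descendants}
    if not safe:
        return None  # nobody is known to be outside our own sub-DODAG
    # Lowest advertised rank wins; the name breaks ties deterministically.
    return min(safe, key=lambda n: (safe[n], n))


neighbours = {"P": 3, "Q": 5, "D": 2}
print(pick_fallback_parent(neighbours, descendants={"D"}))  # P
```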

J

We never actually standardized that, but that was one way. I wanted it not only to refresh the DAG without changing it, but also to do it smoothly, like a counter-wave, you know, a wave from the bottom to the top. So that's what it's for. Now, can we use it? Is it usable, etc.? I'm just telling you what it's for. Yeah, okay. And then again, I agree with everything about the problem statement, about things being unclear; I'm just telling you what the tools are for. The DAO-ACK was local, one hop. Yeah.

H

Richardson, again. Pascal, that was wonderful; I'm so glad it's on tape. I think that you're in violent agreement with me, actually. The point is that if there's unclarity, and you just clarified about four things, okay, that clearly many of us were unclear about, then clearly 6550 didn't say it clearly enough. So that's what I'm saying: all those things you just clarified.

H

I would like those in a low-hanging-fruit update document, okay? And if it turns out, though, that, as you said, we need an end-to-end DAO-ACK or equivalent, okay, then great, that goes in the other document. So the purpose of the low-hanging-fruit document, which we think we can do quickly, is that nothing in it should be controversial. Yeah, and you just clarified a bunch of things, which now makes a bunch of things potentially completely non-controversial.

H

If that's what the clarification is, then that's all I want: that there's no further, you know, concern or whatever about what this means or what this does. This business with the bottom-up, that sounds great. I never conceived or understood that from the last time I read 6550; I really did not know that. So that's really important. That's what I'm trying to capture: there's a bunch of knowledge that we haven't all come to, and we'd [unclear].

H

Maybe we'll go back to 6550 and go, oh yeah, that's what that paragraph meant. After all, it was never really clear to me when I read it; I needed a bigger example to explain it, now that I've implemented it, and Pascal had already conceived of it ten years ago. So we already [unclear]; it's gone. So that's what I want. That's really what I want: to capture all that stuff quickly. Thanks.

J

That was my question: can you clarify these? Everything that is in there must be captured in the document, blah. I'm just, we just want to understand: what's the future? Is this document a problem-statement document, and we put it like that, or do you want to evolve part of the solution in the same document? How do you want to structure the work?

B

We have this discussion now. If you want to introduce BIER into RPL, there has been an earlier document by Carsten that describes how you could do multicast by using BIER. There will be another presentation later on by Pascal on the different modes of BIER you can use, also for unicast. Having all these proposals, we wanted in the end to start [unclear]: is there agreement within the working group to look at this problem further, namely how to put BIER into RPL? Sorry, yes, Carsten! Please.

I

So basically the observation was that it would be good to have a multicast form of non-storing mode, and we were thinking about good ways to do source routing for multicast. That was a research project that we started some time ago, and we came up with a solution that we call constrained-cast. It was actually the result of a research project, but not necessarily a draft that we wanted to pursue here in the IETF.

I

Then in 2014 BIER happened, and we saw: oh, there are other people who are working on source-routed multicast. It's not necessarily exactly source-routed in BIER; it's maybe routed somewhere, but yeah. So suddenly this research became interesting again, and we submitted it as an Internet-Draft.

I

It spun for a while and became a working group document. But it certainly makes sense to revisit what's actually happening in the BIER working group and see how that is applicable to what we are doing. Maybe it also makes sense to look in the other direction: how what we are doing can actually be useful in some deployments that what BIER currently has doesn't fully address.

I have presented this before, so just to remind people what this was about: source-routed multicast in non-storing mode. The source routing always comes from the root.

I

You need to put information into the packet for every forwarding node that sees the packet, so that it can decide whether it has to forward it or not. Of course, one way of doing that is just listing the forwarding nodes in a source route, but that becomes big very quickly, even with great 6LoWPAN compression and so on. So what we looked at was: what are efficient representations of lists of data? And actually, since 1970 we have known about Bloom filters.
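To make the Bloom-filter idea concrete, here is a minimal sketch of a root encoding its forwarders and a node testing its own address. It is not the constrained-cast encoding. The 64-bit filter, the three SHA-256-derived hash positions and the function names are illustrative assumptions. For scale, the three example addresses listed uncompressed would take 3 × 16 = 48 bytes, compared with 8 bytes for the 64-bit filter.

```python
import hashlib
import ipaddress
from typing import Iterable, List


class BloomFilter:
    """Bit-field Bloom filter; sizes and hashing are illustrative choices."""

    def __init__(self, m_bits: int, k_hashes: int) -> None:
        self.m = m_bits
        self.k = k_hashes
        self.bits = 0  # the m-bit field, held in a Python int

    def _positions(self, item: bytes) -> List[int]:
        # k positions from SHA-256 of (index byte || item); a real scheme
        # would use cheaper hashes suited to a constrained node.
        return [
            int.from_bytes(hashlib.sha256(bytes([i]) + item).digest()[:4], "big") % self.m
            for i in range(self.k)
        ]

    def add(self, item: bytes) -> None:
        for p in self._positions(item):
            self.bits |= 1 << p

    def __contains__(self, item: bytes) -> bool:
        return all((self.bits >> p) & 1 for p in self._positions(item))


def root_build_filter(forwarders: Iterable[str], m_bits: int = 64, k: int = 3) -> BloomFilter:
    """Root side: insert every forwarder's IPv6 address into the filter."""
    bf = BloomFilter(m_bits, k)
    for addr in forwarders:
        bf.add(ipaddress.IPv6Address(addr).packed)
    return bf


def node_should_forward(bf: BloomFilter, my_addr: str) -> bool:
    """Forwarder side: no coordination needed, just test our own address."""
    return ipaddress.IPv6Address(my_addr).packed in bf


fwd = ["2001:db8::1", "2001:db8::2", "2001:db8::7"]
bf = root_build_filter(fwd)
print(all(node_should_forward(bf, a) for a in fwd))  # True (no false negatives)
```

Real forwarders always see themselves in the filter. A node that is not a forwarder can still match by chance, which is the false-positive problem raised next.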

I

So we can directly use the [outgoing?] interface address. We don't need any coordination, numbering, or whatever, so this is a small incremental layer on RPL non-storing mode. Of course, the problem is that Bloom filters are probabilistic, so we have false positives. False positives cause spurious transmissions, and a spurious transmission of course eats some energy somewhere.
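The energy cost of false positives can be estimated with the standard Bloom-filter approximation p = (1 - e^(-kn/m))^k, for m bits, k hashes and n inserted addresses. The sketch below assumes independent hash functions. `expected_spurious_forwards` is a hypothetical first-order estimate that ignores cascades of spurious copies.

```python
import math


def bloom_false_positive_rate(m_bits: int, k_hashes: int, n_items: int) -> float:
    """Textbook approximation p = (1 - e^(-k*n/m))^k, assuming independent hashes."""
    return (1.0 - math.exp(-k_hashes * n_items / m_bits)) ** k_hashes


def expected_spurious_forwards(
    non_forwarding_listeners: int, m_bits: int, k_hashes: int, n_forwarders: int
) -> float:
    """First-order estimate: each non-forwarder that hears the packet
    wrongly forwards it with probability p. Ignores cascades, where a
    spurious copy is heard by further nodes."""
    return non_forwarding_listeners * bloom_false_positive_rate(m_bits, k_hashes, n_forwarders)


# The fuller the 64-bit filter (more forwarders), the worse it gets.
for n in (3, 10, 20):
    p = bloom_false_positive_rate(64, 3, n)
    print(n, round(p, 4), round(expected_spurious_forwards(50, 64, 3, n), 2))
```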

I

So we have to think about how bad that is in a specific situation, and how bad that is, of course, depends on the properties of the network. There are a lot of things that go into that. For instance, how full are your Bloom filters? And that of course depends on how many forwarding nodes