►

From YouTube: IETF101-NWCRG-20180322-1330

Description

NWCRG meeting session at IETF101

2018/03/22 1330

https://datatracker.ietf.org/meeting/101/proceedings/

D

A

So

this

is

the

coding

for

efficient

network

communications

research

group

again,

like

I,

said

we

have

a

very

full

agenda

inside

actually

so

for

people

who

are

new

here,

our

goal

is

essentially

to

foster

research

and

I.

Would

you

know

and

emphasize

the

word

research

here

in

network

and

application

layer

coding

to

improve

performance?

What

does

that

research

mean?

It

means

research

in

codes

and

coding,

libraries

and

you're

going

to

hear

about

some

of

that

in

the

early

presentations

here.

A

We

also

want

to

focus

on

protocols

that

will

facilitate

the

use

of

coding

in

existing

systems

a

lot

of

times.

The

reason

that

coding

is

not

used

is

because

there's

not

an

easy

way

to

access

this

code

from

inside

another

protocol,

and

we

want

to

look

into

real-world

use

cases

and

also

work

work

in

progress

in

other

working

groups

and

in

other

research

groups

and

outside

or

group

also,

okay,.

B

So

now

the

usual

not

well

slide,

in

fact,

not

exactly

usual,

because

it

has.

It

has

changed

recent,

but

the

underlying

documents

remain

the

same,

so

I

don't

want

to

go

through

this

slide

just

to

highlight

one

points.

That

is

very

important

in

our

case.

If

you

are

reasonably

aware

of

IPR

related

to

your

work

or

work

from

somebody

else,

then

please

you

need

to

do

nykeya

disclosure

rapidly.

B

You

can

have

more

details

in

BCP

79

again

of

this

list.

Bcp

29

best

current

practice

is

also

a

short

name

for

another

name

for

IFC

81

79.

So

have

a

look

at

that,

then,

concerning

some

administrative

stuff,

if

ever

you

are

looking

for

any

information,

any

piece

of

information

concerning

our

research

group,

then

you

need

to

go

to

the

data

tracker.

I

got

ITF

dot,

all

websites,

everything

is

there

the

documents,

the

milestones

the

agent,

the

the

Charter

everything

is

there?

B

Okay,

we

also

have

wiki,

but

we

are

not

using

it

so

much

for

the

moments

main

list.

The

slides

are

all

uploaded,

except

maybe

one

of

them,

but

I

hope

that

we'll

get

it.

This

is

the

Yun

presentation,

but

you

will

sign

it

to

us

all.

The

slides

also

wise

are

available

online

and,

of

course,

from

a

pest

station

is

fully

usual

mythical

system.

B

The

agenda,

as

I

said,

is

pretty

full.

We

have

zero

time

balance.

So

if

you

intend

to

present

something

then

make

sure

that

you

presentation

is

stay

within

the

allocated

time,

so

we

have,

except

one

exception,

of

a

quarter

of

our

for

each

your

first

ten

minutes,

presentation

and

five

minute

questions.

If

you

spend

more

time,

presentation

means

less

time

on

prostate

on

discussion

after

you.

A

So

a

little

bit

of

a

a

quick

status

about

what

is

going

on,

we

have

a

document

that

is

at

is

G

review,

which

means

that

it

will

be

LRC

very

soon

and

it's

the

network

coding

taxonomy,

which

describes

the

words

that

we

are

using

and

describes

the

functions

of

network

coding.

There

are

currently

individual

ideas

did

this?

Has

one

there's

RL

and

C?

There's

a

presentation

that's

going

to

be

here

presented

today

that

has

both

information

about

ONC

and

also

about

symbols.

B

D

E

E

E

So

the

objective

of

our

work

is

to

propose

jarick

transport

protocol

framework

plus

some

building

blocks,

and

particularly

the

elastic

encoding

window

next

slide,

and

today

everything

is

inside

the

same

document,

so

we

defined

the

protocol,

so

we

defined

some

packet

formats

and

we

also

defined

a

set

of

building

blocks.

So

everything

in

each

side

is

document,

and

this

is

why

I

want

to

discuss.

E

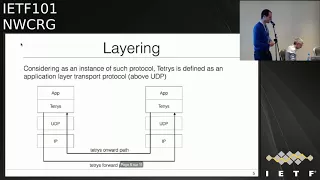

So

you

will

see

at

the

end

next

slide,

so

tetris

is

defined

as

an

application

layer,

Transfer

Protocol,

so

it's

n

to

n

and

above

UDP

next

slide.

So

the

use

case

are

unicast

and

multicast

communications

with

or

without

feedbacks.

So

in

the

protocol

part

we

like

it

simple.

So

we

have

three

packet

formats.

So

the

first

is

the

sauce

packet.

So

it's

just

to

pay

a

lot

with

an

ID.

The

second

is

a

credit

packet.

So

it's

a

runner

combination

of

some

sauce

packets

and

including

an

ID

plus

an

encoding

vector.

E

So

the

information

about

the

liner

combination

plus

decoded

packet

payload,

the

last

one,

is

the

acknowledge

pockets.

So

you

provide

some

feedback

like

packet

loss,

read

the

missing

pockets

or-

and

you

want

to

want

to

go

back

from

the

decoder

to

the

encoder

sonic

slide.

Ok,

so

we

proposed

this

encoding

vector,

so

this

is,

it

could

be

actually

the

first

building

block.

So

this

is

the

most

important

because

it

defines

how

we

code

the

data.

Ok,

so

each

coded

packet

contains

an

encoding

vector,

so

we

wanted

to

create

a

generic

encoding

vectors.

E

E

So,

for

example,

if

you

just

want

to

use

a

sliding

window

so

suppose

you

want

to

generate

an

encoding

vector

by

using

the

fighting

with

of

size

64

by

using

the

finite

field

with

2

to

power

8

elements.

So

you

want

to

code

simple

source

0

to

63,

so

you

actually

need

egg

bytes

to

store

all

the

information

about

the

liner

combination.

E

Yes,

so

another

building

block

could

be

the

generation

of

the

coefficients,

so

interest

will

propose

one

approach,

which

is

a

deterministic

way.

So,

basically,

you

are

inside

a

codec

coded

symbol,

I,

and

you

want

to

generate

the

coefficient

to

integrate

this

example

G.

So

you

have

defined

in

the

draft

a

method

to

just

to

generate

that

directly

so

coefficient

based

on

these

two

ideas,

so

you

don't

have

to

generate

all

the

coefficients

for

your

full

line

of

combination.

You

just

if

you

want

just

generate

1.

5

change

is

possible.

E

So

this

is

an

approach

we

propose.

Okay

does

not

cover

all

the

use

case,

internet

recording,

but

this

is

what

we

propose

all

right.

Okay,

so

now,

as

I

said,

everything

is

inside

the

same

document.

So

now

what

do

we

do?

Do

we

have

to

split

the

document

bit

between

the

protocol

and

a

set

of

building

blocks,

or

do

we

have

to

continue

with

this

Jerek

framework?

Or

do

we

have

to

create

something

addressed

by

defining

more

precisely.

C

F

Different

network

systems,

research

incident,

just

a

clarifying

question:

could

could

you

explain

to

some

of

us

that

don't

follow

all

the

details,

what

the

inner

overlap

if

any

and

field

of

applicability

between

this

and

the

TSV

are

encoding

drift

for

sliding

window,

RL

and

C.

That's

that's

happening

in

with

the

feck

frame.

Yes,

so.

E

F

A

So

I

and

I

think

there's

a

lot

of

overlap,

things

between

the

applicability

of

each

of

these

things,

but

we

would

like

also

to

allow

them

to

be

used

both

independently

or

to

address

the

same

problem.

But

then

you

could

choose

whatever

you

want.

Does

that

answer

your

question,

because

it

was

not

a

question

that

much

for

him

but

I

think

for

it

for

the

generic

group

I.

F

Think

it

answers

a

question.

I'm,

not

sure

I,

like

the

answer

very

much

in

the

sense

that

we

don't

have

a

neither

document

seems

to

provide

a

guy,

any

guidance

as

to

which

one

might

be

more

appropriate

for

any

given

thing-

and

you

know

you

mentioned

api's.

This

has

nothing

to

do

with

api's.

This

is

protocol

encoding

right

and

you

could

provide

a

generic

API

that

could

do

either

of

those

these

things.

F

But

then

who

decides

when

you

call

that

API,

which

one

gets

used

right

so

I

just

think

there

might

be

some

metal

work

to

be

done

to

give

the

community

some

guidance

and

I

don't

have

an

opinion

as

to

whether

technically

one's

better

than

the

other

for

this

or

the

other.

But

one

of

the

things

we

can

do

in

a

research

group

since

we're

doing

research

is

maybe

some

experimentation

that

later

can

provide

some

guidance

to

people

as

to

what

the

right

field

of

applicability

for

the

for

the

different

encoding

might

be.

Yes,.

B

As

I

saw

without

my

charts,

but

also

as

well

main

author

of

the

fact

frame

stuff,

that's

a

very

good

point

and

you

will

see

there

is

some

overlap

between

this

presentation.

The

presentation

that

will

appear

just

after

on,

like

it's

either

formats

and

also

some

overlap

with

what

I've

done

with

colleagues

in

the

context

of

fake

frame

for

freak

frame,

is

a

bit

special

because

it

was

focused

on

an

already

existing

protocol

with

some

specificities.

B

But,

yes,

there

is

some

commonality

and

we

need

to

have

common

understanding

and

guidance

regarding

how

to

format

others

in

an

appropriate

way

to

fulfill

the

requirements

of

that

on

this

and

that

protocol.

So,

yes,

this

is

work

that

we

need

to

be

done

and

I

wanted

to.

We

want,

in

with

Nigel

a

to

put

that

on

the

table

after

the

second

presentation,

yeah.

F

A

E

H

H

Yes

and

thank

you

for

the

other

author

summer,

who

is

with

us

remotely

and

surely

from

Chora

and

myself

and

hear

me

no,

can

hear

me

now:

yes,

okay,

so

and

Vince

from

from

inside.

So

can

you

okay?

So

the

agenda

is

essentially

a

general

motivation

and

the

objective

of

this

work

and

the

design

goals,

and

we

just

that

we

support

and

then

an

example

of

the

simple

representation

that

we

have

proposed

and

some

types

and

the

relationship

with

the

outer

protocol

and

some

limitations

of

this

work.

H

So

it's

a

related

to

the

first

presentation,

not

so

the

sort

of

the

out

the

starting

point

of

this

work

was

that

we

wanted

a

general

purpose,

very

low

overhead

presentation

of

coded

symbols.

So

it's

not

a

protocol,

it's

just

the

symbols

with

some

stuff

on

top

so

that

you

can

interpret

them

at

the

receiver.

H

You,

okay,

so

maybe

I

just

no!

No,

we

need

to

use

it.

Okay,

sorry,

sorry,

okay,

so

maybe

I,

just

okay!

So

try

this

one,

okay,

so

yeah.

So

the

motivation

is

a

representation

of

a

coda

symbol

that

because

we

need

that

in

in

multiple

different

protocols

and

if

we

can

reuse

that,

so

we

can

build

our

protocols

faster.

We

don't

need

to

redefine

this

every

time

like

the

last

and

and

also

the

chest.

H

No

and

then

we

can

give

up

some

interval

Otzi

between

implementations

of

the

underlying

code

of

the

coding,

libraries

and

stuff

like

that,

and

then

this

last

one

should

maybe

be

in

the

next

slide,

but

at

least

was

important

for

this

work.

That

was

that

we

wanted

to

accommodate

a

varying

frame

size

over

the

course

of

the

transposition

over

the

over

the

life,

the

transportation,

because

we're

working

on

a

network

where

that

happens,

to

be

the

case

that

the

underlying

frame

size

changes

during

the

because

of

some

changing

link

conditions.

H

So

so

we

have

the

goals

here.

They

know

we

want

to

know

era,

overhead.

You

know

we

want

to

support,

recording

because

it's

we

think

it's

an

important

feature

of

network

coding

that

we

need

and

and

we

want

to

be

able

to

generate

symbols

from

blocks

that

are

incomplete

in

case

of

a

block

code,

and

we

want

to

support

both

the

block

and

a

sliding

window

type

of

code.

H

So

the

features

that

we

have

included

as

a

consequence

of

this

is

a

variable

number

of

symbols

that

are

can

be

represented

within

each

of

these

representations,

because

we

have

a

usually

we

have

fixed,

simple

size,

and

that

means

that

the

only

thing

that

we

can

vary

is

the

number

of

symbols

we

put

into

each

representation,

unlike

unless

we

want

a

sort

of

a

purpose,

every

representation

for

each

simple.

And

if

we

have

that,

then

we

have

an

overhead

for

each

of

the

symbols

we

put

in.

H

So

that's

seriously

limits

the

sort

of

the

flexibility

we

have.

We

have

three

simple

types,

so

we

have

an

uncoded

and

I

code

it

and

a

recorded

one

so

similar

to

the

first

work

that

was

presented

so

in

order,

so

we

need

different

things

for

these

three

different

cases.

So,

in

order

to

be

efficient,

we

should

define

some

different

ones,

and

then

we

have

a

small,

small

and

a

large

encoding

window

which

essentially

limits

how

big

we

can

do,

how

much

data

we

can

put

in

our

blocks

and

in

our

windows.

H

So

here's

a

sort

of

a

generic

example

which

is

just

that

type

field.

We

have

these

different

types

and

then

we

have

a

field

for

some.

How

many

symbols

we

include

and

then

we

have

the

rank

of

at

the

encoder,

which

is

necessary.

If

you

want

to

encode

symbols

before

you

have

a

full

block,

for

example,

and

then

we

have

something

like

a

seed

or

a

coding

vector

that

we

include

and

the

data

that

is

encoded

next

I,

please

and

then

we

have

the

three

different

types.

H

H

Fixed

overhead

overhead,

the

regardless

of

how

many

symbols

we

will

put

into

the

representation

and

then,

in

the

case

of

a

recorded

symbol

we

put

in

the

coding

coefficients

or

if

we

have

some

system

where

we

don't

have

access

to

the

random

generator

or

something

else,

you

can

essentially

support

anything.

You

can

generate

an

arbitrary

coding

weight

so

any

way

you

want

and

put

that

into

this

representation,

but

it

also

cost

as

a

higher

weight.

H

So

it's

not

what

you

would

usually

do

and

the

reason

why

we

think

that

we

need

all

of

these

three

is

we

need

to

mix

and

that's

the

system

right.

That

could

be

that

most

of

the

traffic

is

uncoded

and

then

there's

a

little

bit

that

has

coded

or

recode

or

something

else.

So

if

we,

if

we

don't

permit

that,

we

can

change

that

they

are

interoperable,

then

we

will

not

be

most

efficient

so

in

relationship

to

with

the

outer

protocol.

Maybe

that's

a.

There

is

some

things

that

we

support

and

we

support

that.

H

You

can

essentially

put

any

number

of

representation

into

a

single

payload

and

then

in

each

of

these

representations

you

can

put

in

up

to

15

symbols,

and

then

we

have

two

different.

The

window

or

block

size

is

that

we

supported

a

small

one

up

to

a

thousand

approximately,

which

is

typically

enough,

a

block

code

and

then

there's

a

really

pick

one

up

to

two

hundred

and

sixty

something

if

you

want

maybe

a

really

big

sliding

window

so

and

then

there's

a

bunch

of

things

that

the

outer

protocol

needs

to

define

for

this

to

work.

H

So

it's

things

like

the

field,

the

symbol

size.

It's

the

type

of

representation

that

we

use

here

either

small,

a

large

and

typically

you

would

use,

would

choose

one

for

your

application

and

then

use

that's

route.

And

then

you

need

to

also

provide

the

block

ID

or

the

window

offset

in

the

outer

protocol,

and

that's

a

some

things

that

you

could

define

if

you

need

them

or

if

they

are

useful,

and

that

would

be

things

like,

for

example,

the

block

size

or

the

density

of

the

code.

H

B

Advanced

questions

I

have

okay,

so

thank

you

for

for

this

initiative,

because

it

is

something

that

was

missing

to

this

group

having

some

document

that

describes,

even

if

you

don't

go

into

the

details

for

the

moment,

but

that

describes

Ireland

C

is

something

that

was

considered

as

important.

We

put

that

in

the

milestones.

So

that's

great.

It's

also

important

to

start

discussions

on

protocol

headers

and

from

this

point

of

view,

we

need

to

work

together.

Well,

I

have

several

technical

commands

on

your

proposal.

B

B

You

is

a

janitor

and

ways

of

the

people

who

also

add

their

own

IDs

in

order

to

find

something

that

maybe

could

be

usable,

reusable

in

some

way,

maybe

not

for

all

the

use

cases

because,

for

instance,

I

was

mentioning

earlier

that,

with

fake

frame

we

have

some

already

existing

mechanism,

so

we

need

to

be

compliant

with

that,

so

that

require

not

specific

to

that,

but

also

otherwise

we

can

extract

some

ideas.

That's

are

more

or

less

aligned

with

what

you

present

it

with

what

Jonathan

presented

so

working

together.

B

B

B

B

End,

you

want

only

one

format:

no

I,

don't

necessarily

want

one

format,

it

depends.

I,

don't

have

the

answer

today.

It

depends

on

protocol

requirements.

In

that

case,

we

maybe

have

to

use

that

format,

because

it

makes

sense.

You

know

the

case,

we'll

have

another

format

but

at

least

having

a

single

document

and

see

what

alpha

we

can

go

into

this

direction.

Having

something

common

as

much

as

possible.

I,

don't

know

if

it

is

I,

don't

have

young.

So

today

we

need

to

think

about

it

together,

but

let's

try

to

work

together.

B

G

G

G

F

I

think

the

goal

is

a

good

one,

but

at

the

end

of

the

day

we

need

at

least

one

form

of

global

optimization,

which

is

that

we

have

n

formats

and

M

codepal

embeddings.

We

have

an

in

order

n

times

n

problem

in

terms

of

actually

putting

a

system

together.

So

the

caution

I

would

give

by

splitting

this

out

is

that

we

don't

run

two

parallel

efforts,

each

of

which

is

operating

in

its

own

degree

of

freedom

right,

so

so

that

we

wind

up

with

a

cross

product.

That's

you

know

unimaginably

large

right,

so

we.

F

To

constrain

the

number

of

protocol

embeddings,

we

have

or

constrain

the

number

of

format

we

have

or

both,

but

if

we

don't

constrain

either

one

we're

gonna,

you

know

I

mean

we've

seen

this

in

the

ITF

in

other

places

before

you

wind

up

with

a

gigantic

mess.

So

some

global

discipline

is

needed

here.

Yeah.

F

B

F

C

H

F

B

F

H

G

Yes,

I

completely

agree

with

Dave.

You

know

that

was

a

motivating

example,

particularly

for

instance,

for

recoding,

but

nothing

here

is

specific

to

Ireland,

see

in

terms

of

the

format

and,

conversely,

I

just

wanted

to

point

out

that

they

are.

They

have

been

proposals

and

they

did.

My

group

has

had

papers

on

doing

RL

and

C

without

conveying

without

conveying

the.

G

Presentation,

so

it's

a

motivating

example,

but

it's

it's

for

a

particular

it's.

It

enables

certain

things

that

are

effectively

coding,

which

are

particularly

nice

with

our

NSC,

but

but

it

is

really

just

a

generic

format.

What

happens

to

enable

certain

of

your

LMC

cases

that

that

we

have

found

to

be

very

effective

so

that

that

will

place

it?

It's

not

really

a

subset

or

or

super

set

it.

G

E

So

the

only

difference

between

a

recording

and

not

recording

is

you

just

need

the

coefficients

to

be

inserted

in

so

inside.

The

the

packets

right

I

think

this

is

the

only

friends

so

I'm

pretty

sure

we

could

imagine

a

format

generic

format

with

an

optional

field.

You

said:

okay,

I

want

the

coefficients

inside.

So

all

other

other

I

don't

want

the

coefficients,

so

it

should

be

pretty

simple

to

convert.

G

Yes,

so

the

recording

doesn't

always

require

the

yeah

that

does

not

always

require

the

the

conveyance

of

the

new

coefficients

upon

which

there

the

recoating

is,

is

affected,

so

you

know,

on

the

other

hand,

of

course,

there's

some

really

great

spaces

for

it.

But

again

it's

not

a

cynic,

one

on

condition.

I

just

wanted

to

make

that

clear,

and

indeed

some

of

the

papers

that,

for

my

group,

signed

fairly

on

on

on

sensor

networks,

which

people

generally

call

more

iot

right

now,

indeed,

did

not.

G

B

You,

okay,

so

I

think

we

are

almost

done

just

one

comment

because

we

already

mentioned:

we

already

had

some

discussion

offline

on

this

topic,

but

if

you

believe

there

is

an

idea,

disclosure

that

should

be

done

on

this

document,

please

do

that

rapidly

as

rapidly

as

possible.

There

is

a

an

exact

wording

in

the

BCP

79

that

says

as

rapidly

as

possible

after

document

submission

after

contribution.

So

please

keep

keep

that

in

mind.

One

more

comment

regarding

IPR

disclosure:

if

the

patent

is

not

yet

granted,

it's

not

a

problem,

there

is

a

checkbox.

B

J

Georgia

market

ask

occurred

on

we're

working

on

the

IPR

disclosure.

We

understand

that

you

know

it

should

be

disclosed

as

reasonably

as

possible,

not

as

rapidly

as

possible.

You

should

appreciate

that

you

know

the

IP

is

owned

by

predominantly

Hamilton

Caltech,

but

there

are

also

other

eight

universities

involved.

So

we

are

discussing

and

agreeing

the

best

licensing

strategy

and

approach,

but

you

know

we're

working

on

it

and

you

know,

as.

J

B

B

Yes,

next

and

we

well

I

updated

this

document

only

very

recently

yesterday.

In

fact,

sorry

for

that

we

try

to

do

better

next

time.

I

was

waiting

for

a

third

contribution.

So

now

this

document,

the

one

you

will

find

on

the

data

tracker-

includes

a

free

example

api's

for

sliding

window

codes,

nine,

the

one

from

jonathan

and

the

one

from

Morton,

so

those

free

api's

correspond

to

running

codes.

B

There

is

something

behind

them.

There

have

been

independently

developed

by

the

free

first

press.

Colleagues,

of

course

we

are

not

working

all

alone,

but

they've

been

indefinitely

developed.

So

that's

something

very

precious

and

there

is

also

a

link

to

a

fourth

implantation

of

sliding

window

codes,

the

one

from

Cedric

which

you

can

find

it.

You

are

yeah.

This

one

does

not

include

codec

API,

it's

developed

in

a

different

way.

There

is

no

standalone

codec,

so

I

mentioned

it.

We

can

use

it

to

get

inspiration.

We

can

also

discuss.

B

We

said

like

with

well

aware

of

foul.

We

can

do

that,

but

was

designed

in

different

ways,

so

there

is

no

fourth,

doesn't

the

only

free

examples

not

for

anyway,

so

we

have

analyzed

all

of

them,

and

we

came

with

a

few

preliminary

conclusions

on

several

questions

that

I

would

like

now

to

introduce

you.

They

are

not

in

the

document,

so

the

first

of

all

I

would

like

to

do

a

reminder

before

going

into

the

details.

B

We

need

to

understand

that

what,

while

looking

here

is

an

API

for

a

low-level

correction,

low-level

quake

will

include

a

certain

number

of

mechanisms,

but

certainly

not

all

of

them.

A

lot

of

stuff

will

remain

in

the

color

in

the

application

or

in

the

protocol.

That

will

use

this

low-level

correct.

So

we

are

the

correct

API

in

between,

but

a

lot

of

stuff

will

be

out

of

scope

for

this

API,

so

you

will

see

in

the

future

slides

some

of

them.

Some

of

the

questions

that

we

try

to

answer

are

really

dedicated

to.

B

B

So

the

first

question

is:

what

type

of

codec

should

we

focus

on

so,

of

course

we

need

to

have

something

which

is

as

generic

as

possible.

That's

the

title.

That's

goal,

but

we

add

we

need

to

take

an

important

decision.

Should

we

consider

poof

block

cuts

and

sliding

window

cuts

or

not.

We

discussed

that

together.

B

If

we

look

at

what

has

been

done

in

those

four

implementations,

you

will

see

that

three

of

them

concern

only

it

sliding

focus

only

on

sliding

window

cuts.

There

is

only

one

of

them

that

encompasses

both

block

cuts

and

sliding

window

cuts,

and

we

discussed,

and

for

the

moment

we

came

to

the

conclusion

that

we

should

focus

on

sliding

window

cuts

only

so

this

API,

which

should

be

an

API

for

sliding,

will

occurs.

B

F

B

Yeah

we

add

that

in

mind

some

parts

of

time.

Of

course,

a

block

code

is

a

sliding

window.

That

does

not

slide

so

there's

some

equivalence

from

this

point

of

view.

But

when

you

go

into

details

of

the

API

for

the

moments,

we

didn't

find

any

satisfying

manner

to

manage

both

in

this

in

a

unified

way

that

differences

technical

differences

and

when

you

try

to

to

address

them,

you

you

will

quickly

enter

into

problems

and

for

the

moment

we

have

no

solution.

Maybe

there

is

one,

but

we

I

didn't

find

them.

G

G

The

other

aspect

is

that

sometimes

you

get

implementations

which

start

out

as

a

block

code,

but

then,

because

of

the

way,

acknowledgments

are

managed

really

start.

Looking

very

much

like

a

sliding

window.

So

I

think

it's

important

to

realize

that

there's

really

quite

a

continuum

with

again

I

think

a

considerable

literature

behind

it

that

wouldn't

want

us

to

dismiss.

B

Yes,

there

is

a

continuum

but,

as

I

said,

when

you

go

into

details

and

want

to

design

an

API,

it's

not

that

easy

to

find

a

solution

that

encompasses

both

block

and

sliding-window

codes.

That

is

attractive

and

simple.

That

remains

simple,

even

for

a

particular

case

of

block

codes.

So

it's

a

very

practical

question

and

we

need

to

go

into

practical

considerations

yeah,

but,

yes

for

sure,

there's

a

continuum.

G

B

B

Second

question

is

now

one

of

those

questions.

Where

should

you

do

that

feature?

Will

it

be

inside

the

code

actually

be

on

top

of

the

collection?

So

should

the

API

consider

this

or

not

so

that's.

The

first

example

should

be

ad

you.

So

the

a

view

is

the

application

that

I

need

the

message

from

the

application.

Let's

say:

should

this

ad

you

to

source

symbols,

mapping

be

done

inside

the

codec

or

on

top

of

the

codec.

B

Should

the

API

consider

only

source

symbols,

Reaper

symbols,

which

is

the

conclusion

if

we

do

that

only

on

top

of

the

codec,

if

you

do

this

mapping

on

top

of

the

collec,

since,

in

that

case

the

API

will

only

see

and

consider

symbols,

or

should

this

be

inside

the

codec,

the

opposite

solution?

We

had

some

discussion,

there

are

impacts

in

terms

of

implementation

complexity.

B

This

mapping

is

not

trivial.

There

is

some

complexity

associated

to

this

mapping,

so

it's

once

again

an

important

question,

so

we

came

for

the

conclusion

for

now

after

discussing

that

should

be

done

by

the

color

outside

of

the

clique

to

keep

this

Killick

as

simple

as

possible.

The

a

consequence

is,

of

course,

comments.

No.

B

So

this

is

the

drawing,

so

on

top

of

the

correct

means

outside

of

the

API

so

yeah

in

this

example.

If

we

do

this

mapping

inside

the

protocol

inside

the

the

application,

let's

say

that

uses

the

codec.

The

codec

will

only

consider

symbols

because

this

mapping

will

be

done

before

entering

so

coming

back

to

the

format

discussion.

Just

for

a

second.

F

F

A

B

B

There

are

consequences.

This

is

once

again

a

very

important

question,

because

when

you

design

effect

scheme,

this

is

not

only

the

correct

part.

The

encoding

and

decoding

part

is

also

the

signaling

part

that

is

required

to

you

this

correct.

So

this

answer

in

this

question

will

also

answer

the

question:

does

the

codec

input

does

the

API

implements

a

fact

scheme

or

just

a

codec?

B

So

we

had

some

discussion

and

for

the

moment

our

position

is

that

once

again

we

keep

the

codec

as

simple

as

possible,

focusing

only

an

encoding,

encoding

and

decoding

sorry

and

leave

this

packet

error,

manipulation,

creation,

processing,

passing

inside

the

application

on

top

of

the

API.

So

that's

our

position

for

the

moment.

B

Fourth,

question

asked:

you

have

five

minutes:

should

the

codec

once

again

same

cat

same

type

of

question?

Should

the

codec

take

into

consideration

timing

aspects?

So

if

you

are

manipulating

real-time

flow,

there

is

a

limited

validity

duration

for

each

of

the

piece

of

information

that

you

will

send

to

the

receiver.

So

should

the

correct

should

be

yes

to

the

correct

and

the

API.

B

Where

are

those

tiny

aspects

or

not?

Once

again,

it's

as

implications

on

the

API

point

of

view,

because

that,

typically

with

slamming

window

codes,

whereas

this

distinction

between

decoding

window,

which

is

which

needs

to

consider

timing

aspects

and

linear

system

size

which

does

not

consider

tiny

aspect,

so

there

are

consequences

once

again.

I

don't

want

to

go

too

much

into

the

details,

but

the

implications.

So

we

add

once

again

our

discussion

and

for

the

moment

our

position

is

that

this

should

be

done

inside

inside

the

application

on

top

of

the

API.

B

Five

fifth

question:

this

is

especially

a

question

for

you

Dave,

and

you

you

mentioned

at

previous

ITF-

that

it

should

be

nice

to

take

into

consideration

other

constraints.

That's

a

very

good

point.

We,

but

unfortunately

none

of

us

has

any

sufficient

experience

in

the

domain

to

see

what

it

means.

What

are

the

implications

and

therefore

to

design

this

in

an

appropriate

way

or

avoid

some

mistakes

that

might

be

done

if

we

don't

keep

that

in

mind.

So

if

anybody

has

an

opinion

on

these

examples

ins,

then

we

would

appreciate

that.

B

F

F

Out

so,

if

you're

doing

the

coding

on

an

FPGA

yet

and

the

application

is

running

on

the

CPU

yeah,

if

the

API

is

passing

individual

symbols

back

and

forth,

uncoded

symbols

decoded

symbols

over

that

API

between

the

hardware

and

the

software

right.

It

won't

work

well,

so

you

have

to

consider

an

API

in

which

there's

inherent

ability

to

do

batching,

I

guess

so

that

so

that

the

interaction

this

is

the

control

interaction

with

the

hardware

can

operate

over

a

reasonably

large

number

of

input,

symbols

and

output,

symbols.

Okay,.

B

Okay,

that's

the

key

points

to

keep

in

mind.

Yeah,

okay,

I

will

probably

get

in

touch

with

you

to

try

fine

more

precisely

this.

But

yes,

yes,

thank

you.

Thank

you

very

much.

So

quick

final

slide.

To

summarize,

we

need

to

make

choices.

Those

choices

as

have

implications.

It's

not

obvious

to

see

what

are

all

the

implications

of

each

of

those

choices.

B

A

L

B

K

K

B

But

you

should

do

that

in

the

application

in

the

protocol,

not

in

the

collection

correct

when

whether

you

see

when

a

new

saw

symbol

has

been

decoded,

then

the

codec

will

give

it

back

to

the

application.

And

so

the

timing

will

be

that

the

wrong

timing.

When

this

happens.

But

if

you

have

a

maybe

you

are

running.

K

G

More

send

it

to

the

list.

Okay,

great

I

just

wanted

to

point

out

that

the

the

this

actually

announcer

I

think

to

the

question

that

was

being

asked

about

whether

there

are

implementations

there

are

FPGA

implementations

of

that

my

lab

did

with

the

lab

of

Anantha

Chandra

Carson,

our

current

Dean

of

engineering

on

FPGA.

We

also

have

done

this

in

with

a

you

know,

sponsored

by

the

semiconductor

Research

Council.

We

there

is

a

chip

implementation

of

network,

a

lot

of

and

by

the

way,

I

asked

answering

him,

and

you

has

a

question.

A

B

I

Basically,

the

problem

is

that

the

thing

is

interactions

between

net

recording

and

congestion

control

could

be

done

in

many

working

groups

at

the

IETF.

So

why

could

we

start

it

here?

First

T

is

that

we

have

already

Network

coding

and

congestion

control

interaction,

solutions

existing

and

also

the

discussion

needs

to

start

somewhere

anyway,

then

see

where

you

go

forward

through

the

objective

of

these

five-minute

discussions

that

may

be

worth

having

on

the

list

afterwards

is

to

see

whether

we

make

a

group

document

here

or

we

just

peek

I,

know,

I.

I

Control

scheme

at

the

consequence,

and

also

depending

on

I,

will

present

after

this

catch

a

use

case,

draft

on

network

ligand

satellites

and

the

problem

that

we

see

that,

depending

on

the

use

case,

because

network

coding

is

a

building

block

that

you

can

deploy

and

depending

on

the

use

case

and

the

traffic

you're,

considering

you

have

lots

of

different

possibilities

and

the

same

happens

here.

Sometimes

there's

no

point

in

actually

having

interactions

between

these

two

catch

country,

control

and

intercutting.

I

So

next

slide,

please

it's

just

example

on

what

is

it

that's

at

the

moment,

for

example,

we

have

things

that

are

at

the

user

space

and

we

already

have

quick

with

some

sort

of

network

coding.

We

can

do

some

middleware

network

coding

as

well

and

in

the

kernel.

These

are

examples

of

the

what

you

can

basically

be

below

a

transport

layer

and

have

no

interactions

at

all

with

TCP

you

can

have

as

well.

I

B

N

Now

that

might

not

actually

see

the

coding,

but

it

might

still

interact

with

the

congestion

controller,

that's

running

and

end

because

you

know,

for

example,

if

you

run

in

cubic

like

you,

don't

see

any

loss,

you're

like

oh

everything's,

going

great

and

you'd

like

get

some

huge

window

and

suddenly

it

explodes

and

goes

very

poorly.

So

it

may

almost

be

an

interaction

with

like

a

QM

as

a

secondary

layer,

I

guess

trying

to

figure

out

what

the

scope

is.

N

B

Always

discussions

but

well

Korean

vessels,

congestion

control

and

if

this

document

called

where

I

explained,

among

other

things,

that

K

we

can

do

things

in

an

intelligent

way.

Where

coding

will

not

negatively

impact

congestion,

control,

break

everything,

then

that

could

be

also

one

of

the

goals.

So.

F

So

if

you

have

a

static,

if

you

have

a

static

coding,

you'll

do

different

things

that

the

congestion

control

is

above

the

coding

then

below

the

coding.

But

if

you

have

a

dynamic

adjustment

of

the

coding

level

based

on

a

perception

of

loss,

things

get

really

complicated

and

I

don't

know

the

answer,

but

there's

not

enough

expertise

either

in

a

classic

congestion

control,

transport

group

or

in

a

coding

group

like

your

to

deal

with

that.

So

I

think

it's

a

really

good

research

problem

and

we

need

expertise

from

both

sides

to

deal

with

it.

F

O

Mr.

Dawkins

says

responsible

area

director

for

the

quick

working

group,

the

those

guys

are

encoding

everything

and

then

well

anyway.

Some

of

them

are

sitting

in

this

room,

so

there

I

know

there

is

communication

back

and

forth,

but

you

perhaps

perhaps

dropping

a

note

to

the

quick

chairs

would

be.

It

would

be

a

useful

thing

to

do

just

to

make

sure

so

that

they

can

make

sure

that

the

right

people

are

involved

from

that

side.

Thank

you.

Thank.

I

I

O

A

G

A

A

N

D

B

D

B

G

B

P

N

B

A

A

N

At

least

yes,

thank

you.

Okay,

sorry,

there's

another

kind

of

conversation.

Now

we're

coding

and

quick.

We've

talked

a

little

bit

back

and

forth

of

both

like

how

this

could

be

done

in

a

v2,

I

v1

was

done

and

I

Google

quick

a

long

time

ago

that

didn't

really

work

out

for

various

reasons

which

I

talked

about

in

previous

sessions.

N

So

I

I

want

to

say

that,

for

the

record

you

know,

I

know

a

fair

amount,

quick

as

a

transport

I'm,

not

a

coding

expert.

So

as

much

as

anything,

this

is

an

effort

to

kind

of

design,

an

architecture

that

would

fit

best

with

the

quick

transport

next

slide.

So

the

top

level

requirements

number

one.

We

don't

want

to

actually

change

quick

p1.

The

current

proposal

is

to

use

the

extension

mechanism

where

rude,

negotiate,

one

or

more.

You

know

forward

error

correction

frames

for

use

inside

quick

as

additional

frames.

N

N

Ideally,

they

would

all

have

the

same

base

frame,

but

the

actual

order,

correction

algorithm

being

used

would

be

different

depending

on

the

extension

tag,

and

you

know

you

potentially,

you

could

even

negotiate

like

two

or

three

if

that

was

suitable,

so

this

design

mostly

focuses

on

coding

taking

place

within

the

stream

or

across

multiple

streams,

so

kind

of

focusing

on

the

data

actually

being

delivered

as

a

few

slides

later

about

kind

of.

Why

we're

we're

shifting

in

that

direction?

N

But

the

key

thing

is

not

all

streams

actually

need

to

be

coded,

so

you

know

doing

it

on

a

packet

layer

is

less

natural

control

frames

usually

are

and

is

latency

sensitive,

and

it

also

may

just

fit

with

the

quick

extension

mechanism

a

little

more

seamlessly

and

it's

going

to

be

and

end

is

the

design.

So

quick

has

ended

and

pretty

much

everything

else.

Quick

is

encrypted

and

end

and

so

doing

coding

engined

is

the

most

natural

thing.

N

One

could

certainly

implement

a

middle

box

that

you

know

shared

the

ephemeral

keys

and

like

did

coding

within

the

network.

That

seems

extraordinarily

challenging

and

I

can

imagine

it

actually

be

worth

the

effort.

But

you

know

this

just

fits

into

visit

on

principle,

so

quick,

much

more

naturally

and

yeah

coding

happens

before

encryption,

because

it's

but

then

the

encrypted.

He

live

next

slide

yeah.

So

some

streams

may

need

to

be

coded.

N

Some

may

not

so

we're

gonna

negotiate

what

kind

of

algorithms

are

available

and

the

quick

handshake

the

PRI

mentioned

is

one

way

to

do

it

and

then

the

application

it's

a

it's

awful

desired

like

this

is

very

high

priority

or

maybe

I

have

extra

bandwidth

or

something

and

try

to

provide

some

signal.

Even

in

quit.

Today

we

actually

have

what's

called

like

a

bandwidth.

N

F

N

It

is

to

define

a

new

quick

base

frame

that

all

of

these

various

coded

approaches

you

could

use.

The

simple

version

would

have

a

type

assumed

ID

and

offset

into

the

stream

of

what

you're

trying

to

protect

and

recover

from,

and

then

add

a

the

length

of

the

number

of

bytes

of

code

and

within

it

you

know.

N

N

However,

we

can't

change

the

packet

numbers

or

we

don't

want

to

in

such

a

scheme,

but

we

do

want

to

allow

things

like

non-consecutive

packet

protection,

because

you

know

maybe

some

packets

are

important

and

others

are

not.

For

previous

reasons,

we

can,

you

know,

have

issues

with

things

like

path:

migration

which

caused

you

like

huge

jumps

and

packet

number

space,

and

so

in

general,

like

the

interaction

between

the

packet

numbering

and

the

code

and

kind

of

could

become

a

little

messy

in

some

edge

cases

that

we

thought

we

didn't

really

want

to

do.

N

One

of

the

worst

issues

is

actually

that

all

of

these

schemes

have

overhead.

You

know

it's

not

necessarily

huge,

but

it

is

so

amount

of

over

in

and

in

order

to

have

kind

of

one

coded

packet

that

protects.

You

know

some

number

of

other

packets.

The

natural

thing

is,

to

just

add

the

extra

overhead

to

the

one

that

has

the

coding

frame.

N

However,

that

would

blow