From YouTube: IETF101-HTTPBIS-20180320-1550

Description

HTTPBIS meeting session at IETF101

2018/03/20 1550

https://datatracker.ietf.org/meeting/101/proceedings/

A

A

A

This is the Note Well. Hopefully you're familiar with this by now, at this point in the week. This is a new one: it covers not only the intellectual property terms of participating here, but also things like working group procedures, the anti-harassment code of conduct, copyright (which is IPR as well), and the privacy policy. So if you're not familiar with this, the best thing to do is to go and look up "IETF Note Well" on your favorite internet search engine.

A

Our agenda for today: we have one session this week. We've done the blue sheets, correct? They're flying around, yep. We have scribes; thank you so much. Right now we're doing the agenda bash. We're going to go over our active drafts as quickly as we can, then we're going to talk about a related piece of work, Signed HTTP Exchanges, and then some work that's been proposed to start soon, which [unclear] we've talked about a few times: the SNI Alt-Svc parameter, Alt-Svc via DNS, and Variants.

A

The draft that Mark is the author of is first. So, I don't have any slides for this draft. BCP 56bis, for those who don't remember, is the revision of the "use of HTTP as a substrate" document, which was put together in the early part of this century based on then-current practice. Current practice has moved on quite a bit, and so we're trying to write that down again. I don't have much to say about this, except that we seem to be making pretty good progress.

A

I think we're not quite done yet; more examples, more details, and certainly more review are required, but there aren't too many open issues on it right now. So if you haven't already, or if you haven't for a while, please have a look at the document. Make sure that it makes sense to you and that you're comfortable with it, and raise any issues or have discussions as necessary.

A

B

I will make the comment that it has been actively used in a couple of other scenarios outside of this working group, which is actually its, you know, designated audience, right? So I think, even though it's still, you know, at the Internet-Draft stage, it's been a useful north star in the work in DoH, and I was able to reference it in TLS, which is really [unclear].

B

But, you know, it's been a very useful sort of thing to point to, to say, you know, how does your design flow against this? So hopefully we can expect some comments from those constituencies on its usefulness, as well as, you know, from this room on its correctness, right? And as with anything we want to publish, we're interested in hearing a diversity of voices that say, you know, they've read this.

A

And we're trying to walk a line with this document where it is not draconian, and it's not saying "thou shalt not" or "thou must do this" or whatever, but more, you know, as the status implies, it's best practice: this is why you should probably think about doing this, this way, and so forth and so on. So if you see a place where you think it is still too draconian, or it's not on the mark, please bring it up and we'll talk about it.

B

B

It's seasonally appropriate. There we go: spring in London. No, next one. All right, here's the recap of where we are. Last time we met, we discussed an individual draft of mine, and we essentially reached consensus, in the meeting and shortly thereafter on the mailing list, on refactoring that draft to include a similar level of header information in the H2 request that was going to bootstrap WebSockets as the old style. [unclear]

B

B

The only changes since then have been editorial in nature (thank you, Julian, for making it better), and we've had a successful browser implementation in Chrome (I'm hoping to get Bence to come up and say a couple of words about that when we get to comment time) and several independent server implementations that it has worked against [unclear]. So that's kind of the state of things. I essentially have two issues I'm aware of that I'm happy to talk about in my 10 minutes today.

B

On the left: the endpoint on the left uses the CONNECT method to build a tunnel, very similar to the way a bidirectional byte stream has traditionally worked, but with a few slight differences, the primary one being that there's a :protocol pseudo-header on here that defines how you route and terminate that tunnel, instead of routing to a new IP address as CONNECT might normally do through a proxy. The other request headers there are typical WebSocket RFC 6455 stuff, the same stuff that was on the GET in HTTP/1.

B

The next flight says, okay, that's great. It includes its own set of WebSocket headers doing subprotocol negotiation, and then you just use the H2 stream back and forth as if it were a TCP session that had been upgraded. Next. Okay.
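The two flights described above can be sketched as plain header lists. This is only an illustration, assuming the header layout that was later published as RFC 8441; the function names, the example authority and path, and the subprotocol names are hypothetical and do not come from the talk.

```python
def build_connect_request(authority, path, subprotocols=()):
    """Header list for the first flight: CONNECT plus a :protocol pseudo-header."""
    headers = [
        (":method", "CONNECT"),
        (":protocol", "websocket"),  # token taken from the Upgrade registry
        (":scheme", "https"),
        (":path", path),
        (":authority", authority),
        ("sec-websocket-version", "13"),  # RFC 6455 header, as on the HTTP/1.1 GET
    ]
    if subprotocols:
        headers.append(("sec-websocket-protocol", ", ".join(subprotocols)))
    return headers


def build_connect_response(chosen_subprotocol=None):
    """Header list for the next flight: a 2xx status plus subprotocol selection."""
    headers = [(":status", "200")]
    if chosen_subprotocol is not None:
        headers.append(("sec-websocket-protocol", chosen_subprotocol))
    return headers


# Hypothetical example values, chosen only for illustration.
request = build_connect_request("server.example.com", "/chat", ["chat", "superchat"])
response = build_connect_response("chat")
for name, value in request + response:
    print(f"{name} = {value}")
```

After these two header blocks, the stream itself carries WebSocket data in both directions, which is why no further HTTP-level messages appear in the sketch.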

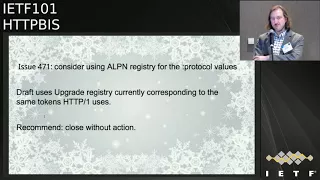

So we have one GitHub issue open here, which says: consider using ALPN registry values for the :protocol pseudo-header. The draft currently uses the Upgrade registry, which corresponds to the same tokens HTTP/1 uses.

B

Fundamentally, this means that you send a :protocol pseudo-header with a "websocket" value, which obviously does not exist in the ALPN registry, which tends to have things like h1 and h2, whole-connection protocols. My rationale there is that we don't want a whole-connection-based protocol value; what we're looking for is really the semantics we had with the Upgrade registry. So my recommendation is to close this without action, but there was no further discussion, so I wanted to raise it. Oh, yes, I am pausing for further discussion.

C

[unclear] So you get given a wss URI, and you faithfully go off and connect to that thing over TCP. Which ALPNs do you put in that to have the greatest chance of success? Now, obviously, this is the best way to proceed, because then you get to use the connection for other things as well, but...

B

Well, I mean, yeah, I'm not sure we need to prescribe that. That could be an implementation choice about how you want to do that. You can happy-eyeballs it; you can do a few different things. You know, my approach would probably start by thinking about whether I wanted to use the connection for different things, right? But they always...

B

C

Loosely, in the sense that if the answer to that question was ALPN (and I think your response, which I'm quite happy with, suggests that it isn't), then, no, it doesn't read on this. But there was a potential here for this to say, well, I need an ALPN, and then now we're down the rabbit hole and creating ourselves other issues.

C

But if your response there is no, and the answer is "work it out for yourselves, people, sorry", and we just document that side of things, I think it's probably okay. I don't know what other browser implementers of WebSockets think; this is largely a browser problem. What do other browser implementers think about that?

D

Erik Nygren, Akamai. I think that is somewhat decoupled from this, because in some ways this issue is about the ALPN for the protocol version. But I think there's that question of whether or not, for h2 with the CONNECT protocol, it's worth having an "h2 with CONNECT protocol" ALPN token that you can put before http/1.1, along with h2, on the list when you're making a WebSocket connection, actually.

B

E

Bence Béky from Google. First, as Patrick asked me to comment very briefly on the state of implementation: Chrome has an implementation. It's rough around the edges, but it seems to work. There are two server implementations I know of, nghttp2 and libwebsockets. The respective developers set up publicly available servers for testing and confirmed that they were able to make it work with Chrome; I have not tried myself, I'm sorry. There's a third implementer who I'm corresponding with privately; it doesn't work yet with them.

E

Second, to contribute to the discussion that Patrick just prompted about this particular GitHub issue: I support Patrick's recommendation. I don't think that the connection-level ALPN registry is right for :protocol values. Third, to respond to Martin's concern: there is no way for the client to know in advance whether the server supports WebSockets over HTTP/2 or not, and this is a performance issue, because if I open up an h2 connection, even if I have previous knowledge about the server having supported extended CONNECT in the past, I need to wait a round trip to receive the SETTINGS from the server before I can send a request. So I'm kind of inclined to just send the WebSocket request according to this specification to the server before getting the SETTINGS, because if the server doesn't support WebSockets over h2, it's going to fail anyway, and then I can retry on h1.
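The trade-off described here can be sketched as a small decision function. This is a minimal illustration under stated assumptions, not Chrome's actual logic: the function names and the returned labels are hypothetical, and `connect_protocol_enabled` stands for whatever value in the server's SETTINGS advertises support for the mechanism.

```python
def choose_websocket_transport(settings_received, connect_protocol_enabled, optimistic):
    """Pick the next step for opening a WebSocket on an existing h2 connection.

    settings_received: whether the server's SETTINGS frame has arrived yet.
    connect_protocol_enabled: whether those SETTINGS advertised support
        (ignored until settings_received is True).
    optimistic: whether to send the request before SETTINGS arrive.
    """
    if settings_received:
        # Support is known either way, so no guessing is needed.
        return "h2-extended-connect" if connect_protocol_enabled else "h1-upgrade"
    # Support is unknown: either spend the round trip waiting, or try now.
    return "h2-extended-connect" if optimistic else "wait-for-settings"


def on_h2_attempt_failed():
    """Fallback after a failed optimistic attempt: retry over HTTP/1.1."""
    return "h1-upgrade"
```

The optimistic branch saves the wait for SETTINGS when the server does support the mechanism, at the cost of a failed attempt followed by the h1 retry when it does not.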

C

Martin Thomson. To the performance point, I think that's an eminently reasonable thing to do. I will also point out that in TLS 1.3 the server can send SETTINGS first, so you won't have to worry about that particularly much in the future. So it's a temporary problem, and it's certainly not a particularly dire one. So, yeah.

B

All right, thank you. One more slide, one more issue. Okay, so the other piece of feedback I've gotten, which doesn't have a GitHub issue open (I received this primarily from Mark, and I know we talked about this briefly in Singapore, as it was the same in my individual draft), is that this mechanism is built around the CONNECT method. And the argument for using the CONNECT method is that, you know, CONNECT is a special snowflake, which, you know, is consistent with the background on the slide,

B

so it must be the right choice. And everything surprising about CONNECT is exactly what we need in this case. So it satisfies the principle of least surprise, in that there's only a small change in the behavior around CONNECT, that being that it terminates to a protocol instead of terminating to another host as it traditionally does. But in the sense that it's a special snowflake: in every code base I've looked at (which is quite a few) that implements

B

HTTP, CONNECT is really handled in a different code path, because it needs to bidirectionally process the byte stream, which is considerably different from the somewhat store-and-forward-oriented, you know, message semantics that go on with other methods, including unknown ones. I would summarize the argument against as: you know, creating a link-level modification, or hop-by-hop-level modification,

B

you know, a modification of a method, is itself quite surprising, and you could use an alternative method in a totally new namespace, like, you know, "GET with upgrade" or something like that, and that could be a bit cleaner. I have concerns around that: if that method is referenced at all outside of this hop-by-hop semantics, its meaning is totally undefined and isn't, like, rooted in any kind of [unclear]. So, comments, I'm sure.

A

To that first, actually. Thank you, if that was the intent there. I would state it a little differently, in that I just want to make sure that we consciously do this, which is have a protocol-version-specific extension, an HTTP/2 setting, override the semantics of a generic HTTP method. And I think your point about it being a special snowflake is very well taken; it's always been weird. And it could be that maybe the outcome is we decide...

A

G

A

B

C

Martin Thomson. I just put two and two together on Bence's comment about sending one of these requests to a server that may or may not support this. I just realized that if you're going to put this new pseudo-header in a request and send it to a server that you haven't received SETTINGS from yet, I predict, based on the general posture that we took in HTTP/2 regarding stuff that we didn't allow, explosions, right? So can...

B

we avoid that? Well, we can, by waiting for it, because TLS 1.3 sends it first. And that's a problem, actually, not just with what's proposed here; it's true of any of the proposals that seem to be able to do this. We don't have the semantics we need for this, so we need an extension of some sort. A new method that has this behavior is also not really, well, isn't...

C

C

B

B

D

A

B

I mean, I think we did, so we can revisit the issue. The resolution of that issue was: this is negotiated, and negotiations take a round trip. And maybe that's settled, because it doesn't require bilateral negotiation, so if the server sends first, it's half a round trip, right? And then this is the scope of the use case. We had this discussion [unclear]; we can revisit it, but there isn't really a solution space. [unclear] Okay, I see.

D

A

H

H

C

A

A

B

A

F

Well, to be fair, [unclear], I got it published last night. So, yeah, I guess I don't really have any slides today. I just wanted to draw attention to the new draft, which just had editorial changes and one clarification on the shift-buffer representations. And since it just went out last night, I can't really expect people to have reviewed it. And, oh, hi, everybody; I apologize for not being there again. Hey, someday maybe I'll make it. So, more just trying to draw attention to the new draft.

F

It's not significantly different from -02; mostly editorial, thanks to Julian and Martin, and also thanks for the feedback on shift buffers. But I'm willing to entertain any questions right now that anyone has. I'm sorry if my face is, like, 10 feet tall because I don't have any slides there now; I didn't think about that. Let's see. Looks good.

B

So when Mark and I were, you know, discussing this in our regular meeting, what we were looking for was getting a sense from the working group of whether they thought this effort was substantive enough, and applicable to enough different people, that it was worth going through the publication process for. And since then (I mean, feel free to weigh in) there have been, you know, a number of comments.

F

Yeah, and thanks for rattling the cages and getting everyone's attention; I think that's what helped us get some feedback. Nothing, really. I mean, the one area I kind of expected there to be a few clarifications on is exactly where we've had them, which is in that last section of our draft regarding the shift buffers. There's one question out there that I'll answer on the mailing list; I think that has a pretty clear answer.

F

And so, no, there's nothing I can think of. I mean, I'd like to get this draft to bed; I don't want to add anything to it. I mean, if somebody found a fatal flaw in the shift buffers, that section is autonomous, really, from the rest of it (I don't know if that's the right word; it's discrete), so we could, you know, not even discuss it. The core...

F

C

Martin. I have a suggestion: I think we should working-group-last-call this document, right? We've had a lot of people look at it, and those people are now largely happy, and people can pay extra attention to the shift buffers section in that working group last call. It's not unusual for us to do that. But otherwise I don't think we need to hold on to this document any longer.

A

I

F

F

F

F

It should always move forward, and if it's ever used for any other content, it should be a different representation with a different URI. That would be my answer to that. I mean, you can't eternally have this model of a circular buffer, in my mind, using HTTP. If you want to be cacheable, it always needs to move forward. That's my answer, I think, to the question.

F

F

B

H

Well, I wasn't sure I needed to walk all this way to make a short statement, but sure. So we adopted it last time around; we published it. We have merged the integration with Exported Authenticators, which is making progress in the TLS group. We still have one real open issue, which is handling cases where the client wants to offer a cert because they expect the server to want it, and negotiating things around that. And the bigger meta-issue it crops up in

H

is how we're using frames on streams with requests that may have already ended. In HTTP/2, yeah, it's all a TCP connection; you can send a frame that references a closed stream and nobody cares. In HTTP over QUIC that doesn't work so well, because the stream is actually gone. So we need to find a different way to deal with that, which is probably sending on the control stream; I just haven't done that yet.

C

Go away, Martin. So, Martin Thomson. The other thing to point out is that there's a lot of activity going on on this work in TLS, and until that resolves, a bunch of this stuff won't really be able to be buttoned down anyway. So we're working in parallel, but we're primarily focusing on the work in TLS. Right, and that was understood when we took it on, so we're happy to let it bubble along for a while.

B

It's good, you know, that relationship is going well. You know, you put your peanut butter in my certificate, and vice versa. I actually think this is going to be a pretty important draft for the future uses of HTTP, and so I wanted to draw people's attention to that, to make sure it gets, you know, fairly wide review.

B

B

A

K

J

I

Ryan Sleevi, Google. So I've looked through the draft, and in particular it seems, and I think as you framed it, it's a rethinking of how we think about the TLS crypto, I mean, quite simply. And from talking to various server operators, it seems like one of the things that is not yet addressed in the draft, and that I'd be curious to hear how the authors plan to address, is this: it addresses client risk, meaning, you know, the client may want to look at DNS in addition to the CERTIFICATE frame.

J

J

That is, you obtain a certificate for, and I'll use google.com as an example, and you have a connection, and you can advertise that "I am authoritative for google.com". In today's model you have things like BGP detection that you can use to detect this, but this would no longer apply. And so I'm trying to understand (and I did not see discussion on the list of these security concerns yet); I'm just curious if there's a way forward with that.

L

So I'm not one of the designers of this (Eric Rescorla), but I've thought a lot about it. I'd just like to make sure we've got our terms straight. So in the existing design, if I compromise the certificate for google.com, and I'm able to tamper with DNS, then I can mount serious attacks. We agree about that, right? Right. Or BGP. Or BGP, yes.

J

M

L

L

J

L

So I think you've obviously accurately summed up the situation. There was a bunch of discussion about this, and I think that the working group kind of felt okay with that. I think that's certainly something people are aware of, but that may have been the wrong decision. And so if you want to relitigate that, I'm not going to... so.

J

[unclear] Right, the relaxation of DNS was done in ORIGIN. My highlight here, though, is that the security considerations list that the client may be concerned with this, right, and steps the client may want to take to provide a sufficient level of assurance, such as optionally checking DNS, as ORIGIN mentioned. What I'm speaking to, though, is the server's ability to detect or mitigate compromise of its own services, right?

J

So, in the case of, say, google.com, you could look at BGP routing table advertisements and see: is someone, say Pakistan, claiming a YouTube IP? In the case of the CERTIFICATE frame, you no longer have these mitigable or detectable things; even if it's after-the-fact detection, the mechanism disappears. And so the security considerations highlight steps the client may want to take, but not steps for a server operator concerned about compromise. Do you...

B

B

L

I'm just, like, tired today, so bear with me. How is this different from the ORIGIN frame, in the sense that, if I can acquire a certificate that is for Google and myself, it seems to me that the ORIGIN frame has the same security properties that this does? What am I missing?

J

Now you at least have some extent of detectability of the [unclear] and that certificate, and this depends, right: prior to TLS 1.3, the unencrypted certificate, things like that. But the whole threat model itself doesn't seem to be explored as to different levels of mitigations, right?

L

O

A

Certificate [unclear], that's right. I mean, the Certificate Transparency work: the goal of the transparency work is to detect certificate mis-issuance. So to say that this removes the ability to detect that there's a problem... I mean, we're not giving up on CT, right? And I believe CT is one of the recommended things for clients to do.

J

H

I think, really, that is the difference, in that with ORIGIN you have to get a mis-issued cert for your own domain with an extra SAN, and this would go a step further and let you expand that attack to also "if I got the cert and key". And there your protection is revoking the key when you discover the compromise, but you have to find the compromise. I think that is independent of this draft, but you're right, it is a real operational security concern.

H

D

Yeah, Erik Nygren. Actually, I think when we were thinking about this for ORIGIN, [unclear] how we looked at it was: you have CT for detecting mis-issuance and OCSP stapling as the way to respond. I think the case of key compromise is one that's not really well covered there. Another related one that this does make worse is the case where you have a side-loaded certificate from an enterprise in the CA list.

D

This also makes it worse, because that means that an enterprise, for example, could capture traffic much more easily without being on path, which is, kind of, not a compromise. And I know that's a case where, kind of, yeah, once you have that CA in there, all bets are off. But it does just mean that each of these, combined together, makes things a little bit worse.

P

Kyle Nekritz. I'm also very concerned with this issue, both in how it makes it a lot easier to exploit a compromised certificate en masse, and in how it makes it a lot easier to target exploitation. But I'm wondering, if we were to expand on the security considerations on the server side, what options do we even have?

H

L

J

J

On mis-issuance: it's actually key compromise that matters, in terms of thinking about what our threat model is for the future of the PKI ecosystem. Because of CT, and because you have these certificates online on active server systems, it seems much more likely that the set of attacks we're going to see over the next five years are going to relate to key compromise. We already see this.

J

L

Right. I think that's only true... I mean, I suppose so. One of the things we could do, you could imagine doing, right? One thing I would say is that subcerts do help with this a little bit, in the sense that you can have the subcerts with a short lifetime, and then you rotate their working keys. That, of course, creates other problems.

L

L

"Don't ever let this mechanism be applied to me", right? And if it's, like, HSTS-like, if you remember that, then that would be a pretty safe thing to emit, like HSTS, but it would also give you a fair amount of protection against this kind of attack. Now, of course, we'd need to, like, standardize that, but, I mean...

L

Can you form a queue? I think it's obviously unfortunate that we've created a situation where we have a new risk for everybody that they can't really mitigate without taking active steps. But that's one possibility. There might be some way to have it be opt-in, but I haven't thought that through [unclear].

J

Yeah, and what you're saying is very similar to how we're thinking about this in the context of web packaging; the similarities between web packaging and the CERTIFICATE frame are very strong, as are the mitigations of opt-in versus opt-out, etc. I do want, at the risk of pivoting to sort of a second conversation point on the draft: one of the things that did seem missing is a...

J

things like that. And so that would be the other piece that I would suggest for consideration in the draft: figuring out what the deconfliction sort of scenario looks like when you have these multiple connections. It's mentioned in the security considerations as a possible confusion, but there's no guidance for implementers as to how to prioritize and select among the available connections when you do have multiple connections.

A

A

A

H

I would welcome some text on that, but my initial inclination is: right now HTTP/2 says you may use a connection for certain domains, and if you wind up with multiple connections that are acceptable for that domain, it already gives no guidance on what to do, and browsers already deal with that. A common case for that is: you don't know whether it supports HTTP/1 or 2, so you open two connections in parallel, because that's what you would do in HTTP/1.1; ALPN kicks in, they're both HTTP/2, and in practice what winds up happening is...

P

Q

I was also going to second the idea that (sorry, Ian Swett) for all the reasons [unclear] just mentioned, opt-in would make me feel more warm and fuzzy. I certainly know of certs at Google where, you know, it wouldn't actually be the end of the world if they got compromised, and then there are others where it would be the end of the world. So, you know, I mean, we do have...

H

Q

So, I mean, I haven't thought this through enough to have a mechanism. I mean, previously when we talked about this, we basically decided, like, this seems scary, but we didn't know what to do about it. That's basically what we ended up with when we talked about this last week. All right, I literally have no idea what to do about it. Yeah, okay. I'm sorry for that, I apologize; if I had a solution, I would suggest it. Okay.

A

Ad

kg

of

the

cuse

closed.

Thank

you.

It

sounds

like

we

still

have

more

to

talk

about,

and

this

isn't

going

to

working

group

blasts

school

anytime

soon.

So

thanks,

but

thank

you

for

the

substantive

discussion.

Indeed.

Next

up

is

Expect-CT.

Emily

can't

be

with

us,

but

she

sent

a

summary

of

where

she's

at

which

I've

put

on

the

screen

so

there

haven't

been

major

changes

to

the

draft

in

a

while

only

some

minor

tweaks

to

reporting,

which

I

think

is

mirroring

some

of

the

other

stuff

happening

in

reporting

in

other

browser

HTTP

extensions.

A

The

open

design

issue

is

whether

the

header

should

have

includeSubDomains

support,

and

she's

not

inclined

to

add

this

at

the

moment.

Unless

another

implementer

comes

along

and

wants

it

we're

unlikely

to

implement

in

chrome,

because

it's

a

moderately

complex

implementation,

the

marginal

value

is

somewhat

small.

A

A

A

What's

him

hello

next

slide,

so

this

is

structured

headers.

So

as

a

reminder

to

folks

the

goals

of

this

effort

are,

we

want

to

make

it

easier

and

more

reliable

for

people

to

specify

and

to

parse

HTTP

headers.

There's

a

long

history

of

people

specifying

HTTP

headers

and

messing

it

up,

sometimes

in

major

ways,

for

example,

cookies,

and

we

have

recently

made

a

somewhat

bad

habit

of

putting

a

tremendous

workload

on

Julian

of

verifying

the

different

ABNF

that

people

are

trying

to

put

in

their

specs.

So

that's

not

fair

to

Julian.

A

So

after

some

discussion

in

Singapore,

we

agreed

to

rebase

the

draft

on

a

draft

that

I

put

together

and

talked

to

Poul-Henning

about,

so

that's

-02,

and

then

in

draft

-03,

we

did

a

lot

of

refining

of

the

algorithms

in

the

spec

which

addressed

various

issues.

We

split

numbers

into

integers

and

floats

and

I

really

hope

that

ends

the

bikeshedding

on

numbers,

but

we'll

see

and

lots

of

other

stuff

like

we

would

throw

an

error

on

trailing

garbage,

et

cetera,

and

so

on.

A

And

then

we

did

-04

more

recently

lots

more

editorial

work,

changed

labels

to

identifiers

and

made

some

other

adjustments

next

slide.

So

one

thing

I

wanted

to

highlight

to

folks

is

that

the

current

design

that

we've

settled

on

you

have

a

number

of

possible

top-level

types

for

use.

When

you

define

a

structured

header,

so

you

say:

I'm

gonna

define

the

Foo

header.

The

Foo

header

is

a

structured

header

and

its

top-level

type

is

one

of

these

types.

A

You

have

to

specify

that

and

more

importantly,

when

someone

goes

to

parse

it,

they

have

to

tell

the

parser

in

some

fashion

that

which

type

it

is

at

the

top

level

and

that

might

be

with

the

method

name

or

any

other

number

of

programming

language

specific

constructs,

but

you

have

to

say:

I

want

to

parse

a

dictionary.

Now

the

bits

below

that

are

hinted

on

the

wire

and

it

will

parse

those

automatically.

You

have

to

validate

that.

That

in

structure

you

get

is

the

data

structure.
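Editor's sketch: the point above, that the caller has to tell the parser which top-level type to expect, might look roughly like the following. The function names (`parse_header`, `parse_item`, `parse_list`, `parse_dictionary`) are hypothetical, and the grammar is deliberately reduced to integers and bare identifiers; it is an illustration under those assumptions, not the draft's parsing algorithm.

```python
import re

# Hypothetical, heavily simplified item grammar: integers and identifiers only.
_INTEGER = re.compile(r"-?\d+$")
_IDENTIFIER = re.compile(r"[a-z][a-z0-9_*/-]*$")


def parse_item(text):
    """Parse one simple item; the item's own type is inferred from its syntax."""
    text = text.strip()
    if _INTEGER.match(text):
        return int(text)
    if _IDENTIFIER.match(text):
        return text
    raise ValueError(f"not a valid item: {text!r}")


def parse_list(text):
    """Parse a comma-separated list of items."""
    return [parse_item(part) for part in text.split(",")]


def parse_dictionary(text):
    """Parse comma-separated key=value pairs into a dict."""
    result = {}
    for pair in text.split(","):
        key, sep, value = pair.partition("=")
        key = key.strip()
        if not sep or not _IDENTIFIER.match(key):
            raise ValueError(f"not a valid dictionary member: {pair!r}")
        result[key] = parse_item(value)
    return result


# The caller, not the wire format, selects the top-level type.
_TOP_LEVEL = {
    "item": parse_item,
    "list": parse_list,
    "dictionary": parse_dictionary,
}


def parse_header(value, top_level):
    """Parse a header value as the top-level type named by the caller."""
    try:
        parser = _TOP_LEVEL[top_level]
    except KeyError:
        raise ValueError(f"unknown top-level type: {top_level!r}") from None
    return parser(value)
```

A header definition would then fix the top-level type once, for example `parse_header(raw_value, "dictionary")`, while the types of the individual items are inferred from what is on the wire.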

A

A

None

of

these

are

top

level

types

wreckers.

We

have

an

item,

a

simple

item,

so

you

know

an

identifier,

a

float,

an

integer,

a

string

or

a

binary

content,

and-

and

that's

all

that's

available

and

in

discussions

with

bunches

of

folks

for

new

headers.

We

think

that

this

has

enough

descriptive

power

for

most

use

cases.

It

may

not

be

a

hundred

percent

of

new

HTTP

headers

on

the

planet,

but

it's

enough

to

add

some

value

and

that's

what

we're

shooting

for.

A

We

think

next

slide

a

couple

of

open

issues

that

would

be

good

to

get

input

on

number

433

length

limits

right

now.

The

spec

specifies

length

limits

on

all

of

the

different

structures.

So

it

says

a

string

must

be

no

more

than

I

forget,

I

think

it's

1024

characters.

You

must

have

no

more

than

so

many

items

in

a

list

so

forth

and

so

on,

and

that's

to

make

sure

that

there

are

sane

limits

so

that

parsers,

don't

overflow

or

whatever

integers

are

64-bit

signed.

A

For

example,

this

helps

assure

Interop

and

assists

optimizations

down

the

road,

and

it

also

means

that

specifications

don't

have

to

specify

limits.

They

can

rely

on

the

built

in

limits,

even

though

they

might

be

very

large.

So

the

question

is:

is

that

a

good

approach?

In

previous

discussion,

some

people

said

no,

these

limits

don't

make

sense.

Everyone

who

specifies

a

structured

header

needs

to

specify

their

own

limits

if

they

want

limits,

and

we

don't

want

to

prematurely

constrain,

how

many

things

people

use,

for

example,

items

in

a

list.
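Editor's sketch: built-in limits of the kind described here could be enforced by a generic parser along these lines. The 1024-character string limit is the figure the speaker recalls from memory, so treat it as an assumption, and the names `parse_integer`, `parse_string` and `LimitExceeded` are hypothetical. Only the 64-bit signed integer range is stated outright in the discussion.

```python
MAX_STRING_LENGTH = 1024   # assumed value, recalled from memory by the speaker
INT_MIN = -(2 ** 63)       # integers are 64-bit signed
INT_MAX = 2 ** 63 - 1


class LimitExceeded(ValueError):
    """Raised when a value is well-formed but outside the built-in limits."""


def parse_integer(text):
    # int() is more lenient than a real header grammar; good enough for a sketch.
    value = int(text)
    if not INT_MIN <= value <= INT_MAX:
        raise LimitExceeded(f"integer out of 64-bit signed range: {text}")
    return value


def parse_string(text):
    # Expects a double-quoted string; escape handling is omitted here.
    if len(text) < 2 or text[0] != '"' or text[-1] != '"':
        raise ValueError("string must be double-quoted")
    body = text[1:-1]
    if len(body) > MAX_STRING_LENGTH:
        raise LimitExceeded(f"string longer than {MAX_STRING_LENGTH} characters")
    return body
```

Because the limits live in the generic parser, a header specification that is happy with them does not need to state any limits of its own.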

A

C

C

That

doesn't

mean

that

someone

can't

define

go

off

the

path

and

do

their

own

thing,

and

that's

that's

why

I

think

that

it

doesn't

actually

matter

too

much

what

the

right,

what

the

limits

are

that

you

choose

in

here

and

they

seem

sensible.

Did

you

have

a

limit

on

the

number

of

items

in

a

list

or

the

number

of

yeses.

A

A

C

I'm

comfortable

I

think

you

had

256

parameters

or

something

crazy

about

512.

So

is

that

yeah,

those

are

big

numbers.

If

anyone

ever

decides

to

use

all

the

space

they

have

available

to

them,

things

will

explode

in

other

ways.

So

I'm

not

concerned

about

the

numbers

that

you

have

and

I'm

also

perfectly

content

with

letting

people

just

go

ahead

and

do

that

so

I

think

this

is.

This

is

about

right.

G

Truly,

unless

you

come,

I

I

think

it's

a

bit

confusing

to

put

a

limit

on

the

number

size

on

the

same

page

as

like

limits

on

the

number

of

identifiers,

because

they

are

very

different

things

than

implementation,

so

I'm

I

think

I

can

be

convinced

that

having

limits

on

the

integer

sizes

are

good,

but

I'm

very

skeptical

about

saying

256

identifiers

are

okay

but

257

are

not

okay,

so

maybe

we

should

discuss

that

as

two

different

kinds

of

limitations,

so.

A

M

You

that's

all

good

I,

consider

this

from

a

extension

point

of

view

and

for

numbers

and

strings.

You

always

have

the

option

to

extend

the

spec.

If

you

want

to

include

a

large

number

so

I'm,

pretty

fine

with

that.

So

I

think

that

you're

naughty

Julian's

comment.

If

we

could

provide

a

way

to

maybe

add

more

than

256.

A

I

want

to

push

back

on

that

a

little

bit,

because

the

way

that

it's

currently

specified

it's,

you

literally

go

through

the

algorithm,

and

if

you

have

let's

say

the

limit

for

sense

for

purpose

of

argument

is

256.

If

you

get

more

than

256,

you

throw

a

hard

error,

and

so

then,

if

a

header

specifies

no

don't

pay

attention

to

that,

that

means

that

you

have

to

modify

you

know.

The

whole

point

here

is

to

have

generic

implementations

of

structured

headers.

A

Now

you

have

to

modify

generic

implementations

and

if

someone

else

is

parsing

the

header

and

they're

using

somebody

else's

implementation,

they

have

to

have

the

ability

to

modify

that.

So

it

seems

like

it's

making

things

worse,

not

better.

Like

I

said,

I'm

I

think

it

would

be

great

if

people

are

uncomfortable

with

the

size

of

the

limits

making

them

bigger.

A

If

that's

the

issue

that

people

are

having,

but

if,

if

we

want

to

have

no

limits

whatsoever,

then

I

get

into

this,

you

know

the

the

other

concern

that

comes

up

is

that

well

implementation

A,

you

know,

decided

that

256

are

too

many

and

implementation

B

decided

that

512

are

too

many,

and

then

you

get

interoperability

problems

in

that

sense,

just

like

we

do

for

for

integers,

you

know

so

having

some

sort

of

sanity

limit

is,

I

think,

a

good

thing.

Yeah.
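Editor's sketch: the interoperability concern raised here, two implementations each picking their own cut-off, can be reproduced in a few lines. The limits 256 and 512 are the figures used in the discussion; `make_list_parser` and `accepts` are hypothetical helpers, and the list syntax is simplified to comma-separated tokens.

```python
def make_list_parser(max_items):
    """Build a list parser that rejects lists longer than max_items."""
    def parse(value):
        items = [part.strip() for part in value.split(",")]
        if len(items) > max_items:
            raise ValueError(f"more than {max_items} items")
        return items
    return parse


def accepts(parser, value):
    """Return True if the parser accepts the value, False if it raises."""
    try:
        parser(value)
    except ValueError:
        return False
    return True


impl_a = make_list_parser(256)  # implementation A decided 256 is the maximum
impl_b = make_list_parser(512)  # implementation B decided 512 is the maximum

# A 300-item list: rejected by A, accepted by B.
header_value = ", ".join(f"id{i}" for i in range(300))
```

The same bytes on the wire are an error for one peer and valid for the other, which is the situation a single specified limit is meant to avoid.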

P

A

R

But

aside

from

that,

if

we

do

this

and

it's

intended

for

extension

header

fields

in

the

in

the

future,

I

think

it's

really

important

that

it

be

self

descriptive

in

the

sense

that

if

you

have

a

specific

type,

if

the

parser

needs

to

know

what

the

type

is

before

it

begins

parsing,

then

that

should

be

visible

in

the

text

of

that.

Are

you

talking

about

the

gravy's

issue

now

talk

about

well,

yeah,

I,

love

that

the

whole

thing

so

I

mean

I

for

this

particular

issue.

R

A

Now

it's

enforced

by

the

parser,

and

the

idea

is,

is

that

if

I'm

saying

the

foo

header

I

can

say

it's

it's

a

list

of

no

more

than

five

items

and

then

the

you

know

a

value

comes

off

on

the

wire

I

gave

it

to

my

generic

parser.

If

the

value

length

is

over

what

the

in-spec

limits

are,

so

1024

for

example,

then

it

will

raise

an

error

and

I

don't

ever

see

it.
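Editor's sketch: the layering described here, a header-specific limit on top of the generic parser's own limit, might be arranged as follows. The five-item limit for the Foo header and the 1024 figure come from the speaker's example; the function names are hypothetical and the list syntax is simplified to comma-separated tokens.

```python
GENERIC_MAX_LIST_ITEMS = 1024  # limit built into the generic parser (example value)
FOO_MAX_ITEMS = 5              # limit from the hypothetical Foo header definition


def generic_parse_list(value):
    """Generic parser: enforces only the built-in limit."""
    items = [part.strip() for part in value.split(",")]
    if len(items) > GENERIC_MAX_LIST_ITEMS:
        # Hard error: the caller never sees the oversized value.
        raise ValueError("list exceeds the generic parser's limit")
    return items


def parse_foo(value):
    """Foo-specific handling: the generic parse, then the header's own rule."""
    items = generic_parse_list(value)
    if len(items) > FOO_MAX_ITEMS:
        raise ValueError("Foo allows at most five items")
    return items
```

What the application should do after either error (ignore the header, fail the request, and so on) is exactly the question asked next in the discussion, and the sketch does not settle it.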

A

R

I,

so

that

that

clarifies

what

it

is,

you

would

expect

for

the

implementation.

What

I

don't

understand

is

why

would

you

ever

want

the

implementation

to

end

at

that

point

and

say:

look,

the

parser

made

a

decision

for

me

and

what

what

is

the

correct

response

after

that?

Do

you

end

the

connection,

terminate

the

stream?

no.

S

A

It

so