
From YouTube: IETF101-TUTORIAL-TRANSPORTAREA-20180318-1345

Description

TRANSPORTAREA TUTORIAL at IETF101

2018/03/18 1345

https://datatracker.ietf.org/meeting/101/proceedings/


F

Yeah, we just talked about that in the newcomers tutorial: people like to tell you to eat the mic, get closer. My name is Mirjam Kühne, not Mirja Kühlewind. This is very confusing for people; sometimes we get each other's emails. I'm not actually giving this presentation. I am a member of the Education Team, which is responsible for organizing these tutorials, like the newcomers tutorial that some of you have just been to. Next door was another tutorial; here in this room we usually give tutorials on Sunday before the IETF meetings.

F

This is one of them, and it's an overview of the transport area. We always give a newcomers tutorial, and we usually also give an overview tutorial of one of the areas. There are currently seven areas, and this time around transport is giving a tutorial and an introduction to what's currently happening in the transport area. Next time there will be another area, and so forth, until in seven meetings or so transport is up again.

F

There is another tutorial in parallel right now, on how to write a good RFC; if that's the one you're interested in, there's always next time. We're always rotating and have different topics each time, and from time to time we also repeat topics that might be interesting for a wider audience.

F

We also have some other activities, like the mentoring activities (maybe some of you have teamed up with a mentor or mentee) and the Quick Connections, a kind of get-together for newcomers that's happening this afternoon. Another activity is training and information sharing with working group chairs: as always, there is a working group chairs lunch training session that the Education Team organizes.

F

It all falls into a bigger education and mentoring directorate that we are organized in, which sits under the General Area. I'm trying to kill some time here, because Mirja is coming out of a meeting that she's held up in; she is also on the IESG, right? Yes, she's a transport area director. So you'll actually have the area director telling you what's happening in the area.

F

A

A

So the TSV area is often referred to as just transport. It covers a range of topics related to data transport in the Internet, primarily protocol design and maintenance at layer 4, and I'll show you in just a minute what we mean by layer 4. Layer 4 is TCP, UDP, SCTP and friends; these are the protocols at that layer. The major technical concerns at this layer of the protocol stack are congestion control and active queue management.

A

When transport started out, a big concern was preventing congestion collapse.

A

The Internet has been there, done that, and is not going back again; that was way, way back in Internet history and hasn't been a concern recently. A more recent concern is queue occupancy, and in particular bufferbloat, about which quite a bit of very useful work has been done recently. Transport also covers quality of service and related signaling protocols. Two QoS examples are the Differentiated Services framework, usually called Diffserv, and RSVP, a signaling protocol called the Resource Reservation Protocol. Now, there are some activities in the transport area that aren't layer 4 specific.

A

For example, storage protocols like iSCSI are really layer 5 and above; they're located in TSV for historical reasons. As we go through the survey, you'll get a sense of what those are. It's often the case that they use a great deal of capacity (they can put quite a bit of bandwidth on the wire), or that there were transport-specific concerns in the protocol design that were best worked on in this area, for example dealing with certain aspects of congestion control.

A

A

There's transport, right in the middle of the stack at layer 4. To give you an idea of what this means in practice, if you're busy looking at the web instead of paying attention to the slides, here's what the stack might look like if you're plugged into a socket in the wall. Hardware: Cat 5 cable, 100-megabit Ethernet, IP, and sure enough, there's TCP at layer 4, below the HTTP and HTML stack that the web browser uses. Now, this is what's in the textbook; this is where transport lives. In practice, two points:

A

Layer 6 doesn't really exist. We get up to about layer 5, and then it's other stuff that happens inside layer 5 protocols. The other thing is that the network stack for the Internet is often referred to as an hourglass stack or architecture, where IP is the thin point of the hourglass. It's a relatively simple protocol by comparison to some other things, and it allows a great deal of flexibility in what runs below it. IP runs over nearly everything; heck, there was even a German pigeon fanciers' club that got it to run over carrier pigeons.

A

That was truly impressive. Above IP, a great deal of innovation is possible because of this interface. Lately, though, a lot of this stability has come to encompass both the network and transport layers, so people tend to work with one of a very small number of transport protocols: TCP and UDP. So, speaking of TCP: in the beginning, there was TCP. Well, that's sort of how our story begins. Transport is one of the oldest IETF areas, at layer 4 of the Internet.

A

Whatever you do on the Internet almost certainly involves some transport protocol: TCP, UDP, and later on SCTP, which was originally developed for very low-latency telephony signaling but has found a number of other uses. The most recent interesting use is as the data channel for WebRTC. DCCP is an intermediate protocol that tries to do congestion control for unreliable transmissions. And the latest work in transport, which you'll hear about a bit later in this presentation, is QUIC, a new transport protocol.

A

QUIC has been designed and adapted for web traffic, and that's the general theme of transport: we adapt technology to the Internet and make things work over our packets at large scale, with congestion control. Storage, pseudowires, and multimedia are examples of things the transport area has worked on to get them to work at large scale with congestion control. I need to say a few things about multimedia and the RAI area.

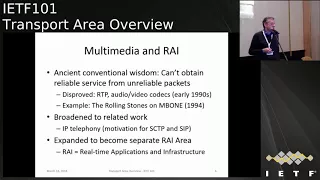

A

Once upon a time, when the Internet was first put together, the conventional wisdom (what everybody understood) was that you couldn't get reliable streaming over packets unless you had some kind of protocol like TCP that was prepared to insert some serious buffering delays and to do retransmission when packets were dropped. This was disproved in the early 1990s. RTP, the Real-time Transport Protocol, runs over UDP.

A

Audio and video codecs had gotten to the point that, in combination with the underlying network hardware, you could in fact do real-time audio and video over the Internet, and it all worked. One example that turned everybody's heads was in 1994: the Rolling Stones were multicast on the MBone over the Internet, and people got a real concert experience, or at least as good as you could get on the computer displays of the time. That whole area of multimedia work broadened to related work, particularly IP telephony.

A

And I believe that was the motivation for work initially on SCTP and then on SIP, the Session Initiation Protocol, which is widely used for IP telephony. All of this broadened to the point that transport was actually split in two: all of the work in transport that related to multimedia and IP telephony was split off to become a separate area called the Real-time Applications and Infrastructure area. So historically, that was transport work, because it interacted very, very strongly with the transport layer.

A

A

All right, let me see if I can chew up a little bit of time here and do a real quick overview; there'll be slides on each of these. Today's transport area scope is summarized in these bullets. You have the core transport protocols, things like TCP, SCTP and UDP, that in many cases have been around for a long time. They're relatively mature protocols that are still being worked on. QUIC, a new transport protocol with built-in security, intended primarily for web traffic. Congestion control and queue management.

A

This is the infrastructure that sits inside the network, in routers; this is where queue management sits. Then there is the corresponding protocol logic at the endpoints that responds to what's going on in the routers, to appropriately manage congestion and try not to overload the network. NAT traversal: NAT stands for network address translation. You probably have one of these in the box that your Internet provider gave you to put in your house. It allows you to connect multiple things to one Internet connection.

A

It's called network address translation because what commonly happens is that a provider will give you one IP address, but every single device you want to attach to the Internet via your local box wants its own IP address, and what's sitting in the middle, in that box, is something called a NAT.

A

It's actually a port-translating version of a network address translator, and the translation is not perfect: when I go from many IP addresses to the one IP address that the Internet service provider uses, certain things don't work transparently. A lot of the work of figuring out how to get things to run through these boxes is transport area work. Quality of service and related quality-of-service signaling work goes on in the transport area. This covers topics like the Differentiated Services QoS framework for the Internet.
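The port-translating NAT described above keeps a table that maps each internal (address, port) pair to a port on the single public address. The following is a minimal Python sketch under simplifying assumptions: one public address, sequential port allocation starting at a hypothetical port 40000, and no mapping timeouts or filtering rules, all of which real NATs handle in implementation-specific ways.

```python
from typing import Dict, Optional, Tuple

Endpoint = Tuple[str, int]  # (IP address, port)


class Napt:
    """Toy network address and port translator (illustrative only)."""

    def __init__(self, public_ip: str, first_port: int = 40000) -> None:
        self.public_ip = public_ip
        self.next_port = first_port               # hypothetical allocation start
        self.out_map: Dict[Endpoint, int] = {}    # internal endpoint -> public port
        self.in_map: Dict[int, Endpoint] = {}     # public port -> internal endpoint

    def outbound(self, src: Endpoint) -> Endpoint:
        """Rewrite the source of an outgoing packet, creating a mapping if needed."""
        if src not in self.out_map:
            port = self.next_port
            self.next_port += 1
            self.out_map[src] = port
            self.in_map[port] = src
        return (self.public_ip, self.out_map[src])

    def inbound(self, dst_port: int) -> Optional[Endpoint]:
        """Find the internal destination of an incoming packet; None means it is dropped."""
        return self.in_map.get(dst_port)
```

Two devices that both use local port 5000 end up on different public ports, and an unsolicited packet to a port with no mapping has nowhere to go, which is exactly what NAT traversal techniques have to work around.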

A

Storage networking: a number of very important protocols used in this area are developed in the transport area; iSCSI and NFS are two examples. There's a whole bunch of other topics, such as delay-tolerant networking and performance metrics and measurement, where the transport area has been a logical home for the work. And with that, here's Mirja, who will introduce herself and pick up from here.

G

So you were quick here. Hi, I'm Mirja, I'm one of the transport area directors, and usually I try to delegate this to somebody else. Anyway, I'm more or less qualified to tell you some more specific things about part of the work we do in transport, so I'll go over the first half of these and then David will pick up the second half again.

G

Yes, so transport protocols: that's our main business. TCP and UDP are well-known transport protocols that were developed in the IETF, and we're still maintaining them. I mean, UDP is not really a transport protocol, but a lot of services run over UDP, so our task is also to give advice to people developing protocols on top of UDP. For TCP we have our own working group, TCPM, for TCP maintenance work, and UDP topics are usually discussed in TSVWG.

G

TSVWG, the Transport Area Working Group: did you say something about this already? No? TSVWG is a working group that catches the topics that don't fit anywhere else and are not big enough for their own working group. There are actually a couple more transport protocols that are less well known and less hyped but are deployed in special use cases.

G

One of them is DCCP, for example; another one is SCTP. And then there's, of course, the big new shiny one, which is QUIC. QUIC is currently under development. QUIC was first brought up by Google, and they already deployed it on a large scale on their communication systems. But what we have had in the IETF for about a year is not the same protocol anymore; Google QUIC and IETF QUIC, as we usually call them, have already diverged a lot, because you bring more people into the process.

G

You have more opinions, and hopefully that also helps to improve the proposed protocol. The difference between QUIC and all of the other protocols you've seen on the previous slide is that QUIC has encryption integrated inherently from the beginning: all QUIC traffic will be encrypted, and the encryption uses the TLS protocol. That's also a big difference from the Google version of QUIC, which is also encrypted but uses its own crypto protocol. And that's one of the big discussions currently happening in transport.

G

Okay, to go a little bit more into detail, because that's one of the big topics: if you look at this picture, this is basically what you have today. I have to say HTTP is the main use case for QUIC, but QUIC is a general-purpose protocol. Currently the working group is really focusing on making it work for HTTP, but it's not the only use case. So today you have HTTP, hopefully HTTP over TLS over TCP, versus QUIC.

G

One of the big benefits for HTTP traffic is that this box here, the multiplexing, is moved into the transport protocol, which actually provides a performance benefit because you don't have any head-of-line blocking anymore, which you still have in the old model. The other big difference, which I already mentioned, is that the green box is not a separate layer anymore. It's really within QUIC, and there's a lot of interworking between QUIC and TLS.
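The head-of-line blocking difference can be illustrated with a small simulation. This Python sketch is a simplified model, not QUIC or TCP code: packets carry a stream ID and a per-stream sequence number, one packet is lost, and we compare what can be handed to the application when all streams share one in-order byte stream (as with HTTP/2 over TCP) versus when ordering is enforced only within each stream (as in QUIC).

```python
from typing import List, Set, Tuple

Packet = Tuple[int, int]  # (stream_id, per-stream sequence number)


def deliverable_single_ordered(packets: List[Packet], lost: Set[int]) -> List[Packet]:
    """All streams share one ordered byte stream: delivery stops at the first gap.

    `lost` holds indices into the connection-wide send order.
    """
    delivered = []
    for i, pkt in enumerate(packets):
        if i in lost:
            break  # everything after the gap waits for the retransmission
        delivered.append(pkt)
    return delivered


def deliverable_per_stream(packets: List[Packet], lost: Set[int]) -> List[Packet]:
    """Ordering per stream: a loss only stalls later packets of the same stream."""
    blocked: Set[int] = set()
    delivered = []
    for i, (stream, seq) in enumerate(packets):
        if i in lost:
            blocked.add(stream)
        elif stream not in blocked:
            delivered.append((stream, seq))
    return delivered


packets = [(1, 0), (2, 0), (1, 1), (2, 1), (3, 0)]
print(deliverable_single_ordered(packets, lost={1}))
print(deliverable_per_stream(packets, lost={1}))
```

With the second packet (stream 2) lost, the shared ordered stream can deliver only the first packet, while per-stream ordering still delivers everything for streams 1 and 3.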

G

Yeah, so besides head-of-line blocking, another big difference for performance is that it actually reduces latency. With TLS you already have a quicker handshake: there's something called session resumption, or 0-RTT, which means that if you have talked to the server previously, you can rejoin your session and send encrypted data from the beginning, without waiting for the completion of the handshake. Because the crypto is integrated into the transport, you even save the transport handshake.

G

The crypto handshake is combined with the transport handshake, and you can start sending data on a resumed session right from the beginning, with the very first packet. So basically, you send out your first hello to the server and immediately send out your HTTP request as well, and then, as soon as the server has calculated the crypto context, it can send you the data you need.
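To make the latency argument concrete, here is back-of-the-envelope arithmetic for the round trips spent before the first HTTP request can go out. The counts are simplifying assumptions: the TCP three-way handshake costs 1 RTT, a full TLS 1.3 handshake 1 RTT (TLS 1.2 needs 2), QUIC combines the transport and crypto handshakes into 1 RTT, and a resumed QUIC session using 0-RTT sends the request in the first flight. Loss, server processing time, DNS, and TCP Fast Open are ignored.

```python
def rtts_before_request(setup: str) -> int:
    """Round trips spent on handshakes before the client can send its HTTP request."""
    costs = {
        "tcp+tls1.2": 1 + 2,  # TCP handshake, then a full TLS 1.2 handshake
        "tcp+tls1.3": 1 + 1,  # TCP handshake, then a full TLS 1.3 handshake
        "quic": 1,            # transport and crypto handshakes combined
        "quic-0rtt": 0,       # resumed session: request rides in the first flight
    }
    return costs[setup]


def time_to_first_byte_ms(setup: str, rtt_ms: float) -> float:
    """Handshake RTTs plus one RTT for the request/response exchange itself."""
    return (rtts_before_request(setup) + 1) * rtt_ms


for setup in ("tcp+tls1.2", "tcp+tls1.3", "quic", "quic-0rtt"):
    print(f"{setup:11s} {time_to_first_byte_ms(setup, 100.0):6.0f} ms at 100 ms RTT")
```

On long-RTT paths such as mobile networks, each saved round trip is directly visible to the user, which is why combining the handshakes matters.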

G

Okay, related to all this work, but not QUIC-specific, is work happening in the TAPS working group. TAPS stands for Transport Services, and the idea, or the question, here is: how can we make more effective use of these different protocols, including QUIC? Because today, if you want to use a different protocol, you basically have to change your operating system, since it gives you two options: either TCP or UDP.

G

You see this case specifically today: when QUIC, which works over UDP, is implemented, you always have a fallback to TCP. Depending on how it's implemented, you either send out two connection requests and select the one that gets back to you first, or you wait for a while, and if QUIC doesn't work, you try to connect with TCP and run everything over TCP.
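The first fallback strategy (send both connection attempts and use whichever answers first) resembles the Happy Eyeballs approach. Below is a minimal asyncio sketch of that racing logic. The `fake_connect` coroutine is a hypothetical stand-in that only sleeps and then succeeds or fails; a real client would perform actual QUIC and TCP handshakes, and would typically give QUIC a short head start rather than starting both at exactly the same time.

```python
import asyncio
from typing import Optional


async def fake_connect(name: str, delay: float, works: bool) -> str:
    """Hypothetical handshake stand-in: waits `delay` seconds, then succeeds or fails."""
    await asyncio.sleep(delay)
    if not works:
        raise ConnectionError(f"{name} failed")
    return name


async def race(quic_delay: float, quic_works: bool,
               tcp_delay: float, tcp_works: bool) -> Optional[str]:
    """Start both attempts, return the first that succeeds, and cancel the other."""
    tasks = {
        asyncio.create_task(fake_connect("quic", quic_delay, quic_works)),
        asyncio.create_task(fake_connect("tcp", tcp_delay, tcp_works)),
    }
    try:
        while tasks:
            done, tasks = await asyncio.wait(tasks, return_when=asyncio.FIRST_COMPLETED)
            for task in done:
                if task.exception() is None:
                    return task.result()
        return None  # both attempts failed
    finally:
        for task in tasks:
            task.cancel()  # stop the losing attempt


print(asyncio.run(race(0.01, False, 0.03, True)))  # QUIC blocked, so TCP wins
```

The second strategy from the talk (try QUIC, wait, then fall back) trades this extra connection attempt for added delay whenever UDP is blocked.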

G

Yeah, okay. Connected to transport protocols is a very important topic: congestion control. Congestion control was invented when there was a congestion collapse in the late 80s or beginning of the 90s. So the goal here really is to let the Internet run safely: don't destroy the Internet, right? Of course, congestion control also tries to maximize your throughput and get the most out of the connection you have.

G

But when we discuss congestion control in the IETF, the safety point is really in focus. We don't want to write something down in our specs that can break the Internet. So part of the transport area's work is also monitoring work in the other areas, trying to figure out whether the work done in those areas is safe to deploy with respect to the load it puts on the Internet. Could we go to the next slide for that one? We have very few congestion control schemes that are documented in RFCs.

G

Basically, there's NewReno, which is the original proposal, and since a few months ago there's also an RFC that describes CUBIC. That is because, as I said already, we only put something down when we know it's safe to deploy, and for congestion control it's really hard to figure out whether it's safe to deploy if you don't have it deployed yet. CUBIC has been in use for, I don't know, more than 10 or 20 years, so that seems pretty safe; for new proposals it's really hard to say anything.

G

However, what we do have is research groups connected to the IETF. Those research groups are part of the IRTF, the Internet Research Task Force, but are co-located with the IETF, so folks see each other, can talk to each other, and go to the same meetings. If you have a new congestion control, that's the right place to go to get feedback and discussion, and IRTF groups can also publish documents, of course. Yeah, but I mean in general.

G

We have this long-lasting question about where to do the work on congestion control, and it really depends, on a case-by-case basis, where the work ends up and in which working group or activity. In the Internet Congestion Control Research Group, the ICCRG, we see a lot of presentations from Google, again, on BBR. That's a new congestion control that they are already using in QUIC, and it's discussed a lot there; concerns about deployment and stability are discussed as well.

G

The other place where we have congestion control work right now is the RMCAT working group, which is focusing on congestion control for real-time media. That's really a special case, because you have the media encoder, which might also adapt the rate, so you have some interactions there. That's a hard case, and it's a little bit like a research group, but it's part of the IETF because we have a real problem that we need to address.

G

The other part of congestion control is what's happening in the network. How do you know that there's congestion in the network today? You know it mainly by seeing drops, and those drops usually happen when a buffer in the network overflows and a packet is lost. You assume it's congestion and you reduce your sending rate. To get a little bit ahead of this buffer overflow, AQM is what you need.

G

Basically, you watch the queues in your router, and when you see that your queue is filling, you drop a few packets early on, not too many. That gives some feedback to the endpoint, so it can reduce its rate, and you avoid the congestion before it actually happens, before it completely overflows the buffers. We used to have a working group on this, because in the last couple of years more and more schemes have been proposed, and we also wanted to standardize them.
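Early dropping as described above is what classic AQM schemes do. As one well-known example, chosen here for illustration since the talk does not name a specific algorithm, Random Early Detection (RED) keeps a moving average of the queue length and drops arriving packets with a probability that rises linearly between two thresholds. The thresholds, maximum probability, and averaging weight below are illustrative assumptions, and the sketch omits refinements of the original RED algorithm such as spacing out drops based on a packet count.

```python
import random


class RedQueue:
    """Simplified Random Early Detection drop decision (illustrative parameters)."""

    def __init__(self, min_th: float = 5, max_th: float = 15,
                 max_p: float = 0.1, weight: float = 0.002, seed: int = 0) -> None:
        self.min_th = min_th    # below this average queue length: never drop
        self.max_th = max_th    # at or above this average: always drop
        self.max_p = max_p      # drop probability just below max_th
        self.weight = weight    # EWMA weight for the average queue length
        self.avg = 0.0
        self.rng = random.Random(seed)

    def drop_probability(self, avg: float) -> float:
        """Linear ramp from 0 at min_th to max_p at max_th, then 1."""
        if avg < self.min_th:
            return 0.0
        if avg >= self.max_th:
            return 1.0
        return self.max_p * (avg - self.min_th) / (self.max_th - self.min_th)

    def should_drop(self, current_queue_len: int) -> bool:
        """Update the moving average on packet arrival and decide whether to drop."""
        self.avg = (1 - self.weight) * self.avg + self.weight * current_queue_len
        return self.rng.random() < self.drop_probability(self.avg)
```

Averaging the queue length lets short bursts pass untouched, while a persistently growing queue triggers a few early drops that tell senders to back off before the buffer overflows.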

G

The idea is that we can support, or advise, people to actually deploy those mechanisms, because it's beneficial to have them and not have this burst packet loss when your buffer overflows. Even better is the situation when you use ECN, because in this case, instead of throwing away the packet, you just mark it; the endpoint knows about it and can reduce its sending rate without losing the packet.

G

However, the deployment story of ECN is a little bit more complicated, because it's not only the router that needs to mark the packet; both endpoints need to understand the marking. The sender, of course, has to understand the marking because it has to reduce its sending rate, and the receiver also has to understand the marking because it has to tell the sender that it saw the mark.
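The ECN field is the two low-order bits of the IPv4 TOS / IPv6 Traffic Class byte (RFC 3168): 00 is Not-ECT, 01 is ECT(1), 10 is ECT(0), and 11 is CE (Congestion Experienced). This Python sketch shows only the router side of the decision described above: once the AQM has decided to signal congestion, an ECN-capable packet is marked CE instead of being dropped, while a Not-ECT packet still has to be dropped. Echoing the mark back to the sender (in TCP via the ECE flag) is not modeled.

```python
from enum import IntEnum
from typing import Optional


class Ecn(IntEnum):
    NOT_ECT = 0b00  # sender does not support ECN
    ECT1 = 0b01     # ECN-capable transport, codepoint 1
    ECT0 = 0b10     # ECN-capable transport, codepoint 0
    CE = 0b11       # congestion experienced (set by a router)


def apply_congestion_signal(tos: int) -> Optional[int]:
    """Return the rewritten TOS byte, or None if the packet must be dropped.

    Only called after the AQM has decided this packet should carry a congestion signal.
    """
    if Ecn(tos & 0b11) == Ecn.NOT_ECT:
        return None                            # endpoint can't understand a mark: drop
    return (tos & ~0b11 & 0xFF) | Ecn.CE       # keep the DSCP bits, set CE
```

Because the upper six bits are preserved, marking does not disturb any Diffserv class the packet carries.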

G

Another thing that supports ECN deployment is that you can actually do better with ECN: you don't have to use the same congestion control as is used today. You can optimize for AQM mechanisms that use ECN, because they can provide a very early signal without any disadvantage, right? You don't drop any packets, so you can provide much more feedback than you could with drops. With drops, you always try to avoid packet loss; with ECN, there's actually no reason to avoid congestion feedback.

G

If you are in congestion, you can send as much feedback as you want, as long as the situation is under control. What you need, however, if you send a different kind of feedback, is also a different reaction. There is the example of Data Center TCP, which does exactly this: you provide a different kind of AQM in your data center, you react differently to it, and both together optimize your network and use it more efficiently. We are trying to achieve the same thing in the Internet.
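Data Center TCP's different reaction is specified in RFC 8257: the sender keeps a running estimate alpha of the fraction of packets that were CE-marked, updated once per window of data as alpha = (1 - g) * alpha + g * F, and on congestion it shrinks its window by alpha/2 instead of halving it. Below is a minimal sketch of just that update, using the gain g = 1/16 suggested in the RFC; window bookkeeping in bytes, slow start, and loss handling are left out, and the one-packet-per-window increase is a simplification.

```python
class DctcpSender:
    """Sketch of DCTCP's alpha estimate and proportional window reduction (simplified)."""

    def __init__(self, cwnd: float, g: float = 1 / 16) -> None:
        self.cwnd = cwnd   # congestion window in packets (simplified unit)
        self.alpha = 1.0   # this sketch starts conservatively at 1
        self.g = g         # estimation gain

    def end_of_window(self, acked: int, marked: int) -> None:
        """Call once per window with counts of acknowledged and CE-marked packets."""
        fraction = marked / acked if acked else 0.0
        self.alpha = (1 - self.g) * self.alpha + self.g * fraction
        if marked:
            # Few marks -> small alpha -> gentle reduction; many marks -> close to halving.
            self.cwnd = max(1.0, self.cwnd * (1 - self.alpha / 2))
        else:
            self.cwnd += 1  # additive increase, as in ordinary congestion avoidance
```

For example, with alpha near 0 and 16 of 100 packets marked, alpha becomes 0.01 and a 100-packet window only drops to 99.5, which is the "small reaction to frequent signals" behavior described above.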

G

But those two different schemes cannot really coexist in the same queue. So what we do is put them into different queues. One queue works the traditional way, maybe drop-based, maybe ECN-based, but in both cases with the same feedback you get today, and the other queue gives you much earlier and much more feedback, so you can react quickly, in a different way.

G

Yeah, and there's a picture for it. The first picture here is the traditional way of doing it. When you get a congestion feedback signal, you halve your sending rate, and to maintain your link utilization you have to have a number of packets in your buffer to cover the period in which you are sending fewer packets.
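The buffer requirement in the first picture can be worked out with simple arithmetic. Assume an idealized single long-lived flow, a bottleneck with bandwidth-delay product BDP (in packets), and a sender that multiplies its window by beta on each congestion signal (beta = 0.5 for classic halving). Just before the signal the window is BDP + B, where B is the buffered amount; to keep the link busy after the cut we need beta * (BDP + B) >= BDP, so B >= BDP * (1 - beta) / beta. This model is an illustration, not a sizing rule for real routers with many flows.

```python
def min_buffer_packets(bdp_packets: float, beta: float) -> float:
    """Smallest buffer that keeps one flow's link fully used after a cut to beta * window."""
    if not 0 < beta <= 1:
        raise ValueError("beta must be in (0, 1]")
    return bdp_packets * (1 - beta) / beta


for beta in (0.5, 0.7, 0.95):
    print(beta, round(min_buffer_packets(100, beta), 1))
```

Halving needs a full BDP of buffering, whereas a reduction of only a few percent needs very little, which is the intuition behind giving early signals and reacting to each one only slightly.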

G

If you now just changed your AQM mechanism to give very early feedback at a low buffer level, you would still halve your sending rate, and at a certain point you wouldn't have enough packets to send anymore, so your utilization would suffer as well. What we really want is to give you a very early signal so that you only reduce your sending rate a little bit and the link is still fully utilized, which is the third picture. Maybe this is a good point to ask for questions. Do you have any questions on this part?

G

Hi, thanks. My name is Saul, and I work for an international agency. It's just that if I don't ask the question now, I will forget it for sure. So, one question about congestion: given the forecasts that we're going to have millions or billions of connected devices, is there work in the transport area to forecast when the bottleneck will happen, so that you're prepared to solve it, or is there no such work going on and you solve the problems as they appear?

G

I'm trying to wrap my head around how that could even work, but it's really hard in a distributed network like the Internet to actually forecast anything, right? There are tons of distributed endpoints connected, and the traffic is routed in ways that you might not see at the transport layer. So I don't even know how that could work. I guess in this case the answer is no.

A

A

A

Okay, so I'm going to pick up the rest of the transport area scope and describe things starting from NAT traversal. NAT used to be a dirty word around here. The IETF originally believed that we ought to be able to have an IP address for every single device and that they should all be able to reach each other. That hasn't worked out so well: 32 bits turned out not to be enough address space for IPv4 addresses, and there are other reasons to do things like NAT.

A

NATs, which do address translation in boxes at the edges of the Internet, are now widely deployed. At first, traversal of network address translation was done on a protocol-by-protocol basis; IKE, the key negotiation protocol for IPsec, was the first one for which this work was done. Now it's done as a protocol-independent framework consisting of the STUN, TURN and ICE protocols. The basic idea behind these protocols is pinhole punching: I have an application running on a system behind a NAT, and it needs to use a port.

A

So what happens is that a pinhole is punched for that port, or actually for that session, so you know what the ports are at both ends, and that pinhole is kept open by keepalive traffic as necessary, which the application has to send. The protocols involved in this are called STUN, TURN and ICE. STUN provides address discovery, so that you can come through a pinhole and figure out what your contact address is.
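STUN's address discovery works by having the client send a Binding request to a server, which replies with the source address and port it observed, encoded in an XOR-MAPPED-ADDRESS attribute (RFC 5389). This Python sketch builds a Binding request header and decodes an IPv4 XOR-MAPPED-ADDRESS value. It leaves out the network exchange, message integrity, fingerprints, and IPv6, and `encode_xor_mapped_ipv4` is a helper added here only so the decoder can be exercised.

```python
import os
import socket
import struct
from typing import Optional, Tuple

MAGIC_COOKIE = 0x2112A442
BINDING_REQUEST = 0x0001
XOR_MAPPED_ADDRESS = 0x0020  # attribute type, shown for reference


def binding_request(transaction_id: Optional[bytes] = None) -> bytes:
    """20-byte STUN header: type, length (0: no attributes), magic cookie, 96-bit ID."""
    tid = os.urandom(12) if transaction_id is None else transaction_id
    if len(tid) != 12:
        raise ValueError("transaction ID must be 12 bytes")
    return struct.pack("!HHI", BINDING_REQUEST, 0, MAGIC_COOKIE) + tid


def decode_xor_mapped_ipv4(value: bytes) -> Tuple[str, int]:
    """Decode an IPv4 XOR-MAPPED-ADDRESS attribute value into (ip, port)."""
    _reserved, family, xport, xaddr = struct.unpack("!BBHI", value[:8])
    if family != 0x01:
        raise ValueError("only IPv4 (family 0x01) is handled in this sketch")
    port = xport ^ (MAGIC_COOKIE >> 16)  # port is XORed with the top 16 bits of the cookie
    addr = xaddr ^ MAGIC_COOKIE          # IPv4 address is XORed with the whole cookie
    return socket.inet_ntoa(struct.pack("!I", addr)), port


def encode_xor_mapped_ipv4(ip: str, port: int) -> bytes:
    """Helper (inverse of the decoder) for building test values."""
    addr = struct.unpack("!I", socket.inet_aton(ip))[0]
    return struct.pack("!BBHI", 0, 0x01, port ^ (MAGIC_COOKIE >> 16), addr ^ MAGIC_COOKIE)
```

The XOR step exists so that middleboxes which rewrite IP addresses they find inside payloads do not corrupt the address the server reports.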

A

TURN is a relay protocol: if I have NATs at both ends of a connection, it's sometimes necessary to relay through the middle. ICE is an overall negotiation framework for using these. These all have RFCs; the RFC numbers are on the slide. There's ongoing work in the TRAM working group on other improvements to TURN: security improvements, some auto-discovery, and better IPv6 support. Quality of service: general quality-of-service frameworks are in the transport area. This used to be an area with a lot of work.

A

Integrated Services was the first type of quality of service, back when I first joined the IETF. It did what it was meant to do, but it was per flow: you needed to have state for every IntServ flow in every router participating in that flow, since every router could potentially be a bottleneck for it, and the result was very poor scaling. So we moved to something called Differentiated Services, which puts a traffic class in the IP header.
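Differentiated Services carries that traffic class as a 6-bit DSCP in the upper bits of the same byte whose low two bits hold ECN (IPv4 TOS / IPv6 Traffic Class). This Python sketch packs and unpacks that byte. The codepoints used are standard ones, EF = 46 (RFC 3246) and CS1 = 8, which has traditionally been used for low-priority traffic; whether a given network honors them is a local policy decision. Setting the byte on a socket via `IP_TOS` is platform-dependent, so it appears only as a comment.

```python
from typing import Tuple

DSCP_DEFAULT = 0  # best effort
DSCP_CS1 = 8      # Class Selector 1, traditionally low-priority / scavenger-like traffic
DSCP_EF = 46      # Expedited Forwarding, e.g. for voice (RFC 3246)


def tos_byte(dscp: int, ecn: int = 0) -> int:
    """Pack a 6-bit DSCP and a 2-bit ECN field into the TOS / Traffic Class byte."""
    if not 0 <= dscp < 64 or not 0 <= ecn < 4:
        raise ValueError("DSCP must fit in 6 bits and ECN in 2 bits")
    return (dscp << 2) | ecn


def split_tos(tos: int) -> Tuple[int, int]:
    """Return (dscp, ecn) from a TOS / Traffic Class byte."""
    return tos >> 2, tos & 0b11


# On many Unix-like systems an application can request a class for its socket:
#   import socket
#   s = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
#   s.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, tos_byte(DSCP_EF))
```

Compared with IntServ, routers only need per-class behavior keyed on these six bits, not per-flow state, which is where the scaling improvement comes from.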

A

Now we have the problem at the other end of the spectrum, which is that there is a limited number of traffic classes that can be used, and there are additional variants and QoS frameworks. One example is Pre-Congestion Notification, which does capacity management for real-time traffic, not just responsive flows. For QoS signaling, the one protocol we have had for quite some time is called RSVP, the Resource Reservation Protocol. Today it's mostly used for traffic engineering; we don't see much RSVP for end-to-end traffic.

A

The theme playing across this slide is that most of what I've described is pretty much completed. There's limited development and maintenance of Diffserv and RSVP in the Transport Area Working Group, TSVWG. One of the things going on right now is improving support for what's called scavenger traffic: the ability to send traffic that you would like to get lower-effort treatment than best-effort traffic. Storage networking: there are a lot of RFCs in the IETF. The iSCSI and Fibre Channel over TCP/IP protocols were developed here.

A

The storage standards bodies involved are shown on the slide. This work was done in the STORM working group, which has now concluded. For NAS storage, there's NFS, the Network File System: NFSv3 and the current versions were developed in the IETF. The current version, NFSv4.2, is close to complete. The other most common file-sharing protocols, the Windows CIFS and SMB protocols, are not developed in the IETF. There's an RDMA protocol suite called iWARP, developed in the RDDP working group, which has also concluded. RDMA stands for Remote Direct Memory Access.

A

This is often used for storage protocols; I'm involved in some new storage protocol work outside the IETF that makes extensive use of RDMA.

Delay-tolerant networking. So how do we communicate over IP with the Voyager probe, which is way the heck out beyond the solar system? This is what the Delay-Tolerant Networking group is trying to solve: dealing with very high-delay, low-connectivity environments, with all kinds of examples shown here. A set of protocols has been defined, the two most important of which are the Bundle Protocol and LTP, the Licklider Transmission Protocol.

A

The DTN working group is working on updating these protocols based on implementation experience, with some support for new use cases, as standards-track work.

Finally, another topic: network performance management. The IPPM (IP Performance Measurement) working group sort of goes by the credo "if you can't measure it, you can't manage it," so it's all about putting in support to measure things on the Internet: standard metrics, and methods to improve metrics.

A

Current work is the measurement version of what security people sometimes call a known-plaintext attack: you deliberately inject measurement traffic into the protocol you want to measure, and then observe from the Internet how the protocol reacts to it. This is being used in part to do in-band OAM, so that you can be absolutely certain that the measurements used for operations, administration, and management (OAM) come from the path the traffic took, because the measurements are actually embedded in the protocol that transmits the traffic.

A

G

Yes, so this is a higher-layer topic that is widely discussed across basically the whole IETF. But it's very closely connected to QUIC, because that's where we encrypt everything, including information that is in the transport header. I'll give you a quick introduction to the discussion there, and then we'll go on to the details of what's happening this week. Right? Yeah.

D

G

So, basically, where we started off, nothing was encrypted. Everything was visible to the network. In this picture you have the payload at the bottom, and then the different headers you will see on a packet. So you could even see the payload. You could see the application-layer headers, for example the HTTP headers, and in this use case you could see all the information in the transport header.

G

So you can see everything that's in the TCP header; the UDP header is a little bit smaller and doesn't give you as much information. And of course you can see the IP header, which you also need for the routing information. So basically, even though only the information in the IP header was initially meant for the network, what happened, because everything was visible, was that some of the information provided in these headers was used by different network functions.

G

So yeah, basically you have a lot of information in the transport header, in the TCP header specifically, that is meant for end-to-end operation. But when you look at the data, you can also do something else with it. For example, if you match up the sequence and ACK numbers, or look at the TCP timestamp option, you can use them for round-trip time measurements of the flow you're looking at. That's really helpful for troubleshooting and for network monitoring purposes.
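
The sequence/ACK matching described here can be sketched as follows, assuming a capture of one TCP flow that has already been parsed into segments. The `Segment` type and `passive_rtt_samples` function are hypothetical, not the API of any real tool. An observer in the middle of the path only measures the part of the round trip from itself to the receiver and back; the timestamp option (echoed TSval in TSecr) could be matched in the same way.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Segment:
    """One captured TCP segment of a single flow (hypothetical format)."""
    time: float      # capture time at the observation point, in seconds
    direction: str   # "fwd" = data direction, "rev" = ACK direction
    seq: int = 0     # first sequence number carried (data segments)
    length: int = 0  # payload length in bytes (data segments)
    ack: int = 0     # cumulative ACK number (ACK segments)


def passive_rtt_samples(segments: list[Segment]) -> list[float]:
    """Estimate RTT samples by matching data segments to the ACKs for them.

    A sample is taken when an ACK number equals the end (seq + length) of a
    data segment seen earlier. Segments whose end is seen more than once are
    treated as retransmissions and skipped, in the spirit of Karn's
    algorithm. Sequence-number wraparound is ignored for brevity.
    """
    pending: dict[int, float] = {}  # expected ACK number -> time data was seen
    seen: set[int] = set()          # segment ends observed so far
    samples: list[float] = []
    for seg in sorted(segments, key=lambda s: s.time):
        if seg.direction == "fwd" and seg.length > 0:
            end = seg.seq + seg.length
            if end in seen:
                pending.pop(end, None)  # ambiguous after a retransmission
            else:
                seen.add(end)
                pending[end] = seg.time
        elif seg.direction == "rev":
            if seg.ack in pending:
                samples.append(seg.time - pending.pop(seg.ack))
            # Anything below the cumulative ACK is acknowledged as well.
            for end in [e for e in pending if e < seg.ack]:
                del pending[end]
    return samples


if __name__ == "__main__":
    trace = [
        Segment(0.000, "fwd", seq=1000, length=100),
        Segment(0.010, "fwd", seq=1100, length=100),
        Segment(0.050, "rev", ack=1100),
        Segment(0.062, "rev", ack=1200),
    ]
    print(passive_rtt_samples(trace))  # two samples, about 0.050 s and 0.052 s
```

Matching only exact ACK numbers keeps the sketch simple; cumulative or delayed ACKs that cover several segments produce no sample rather than an inflated one.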

G

In some cases there are also boxes in the network that change these bits. For example, to stay with this example, the initial sequence number is sometimes overwritten, because that gives you a way to put additional information in. Or if, for some reason, you don't want to support a certain TCP option, you can just strip it from the TCP SYN and it will not be used.

G

There are other use cases where you have a good reason to rewrite things as well. What we've seen more recently is an increasing deployment of HTTPS, or TLS in this case. What this changes is that you cannot see the higher-layer headers anymore; you cannot see any HTTP information, but you can still see the transport-layer headers, which are still not meant for the network but sometimes provide very useful information.

G

If you modify and change these things, that can sometimes also have a negative impact. For example, if you as a network don't want to support a certain TCP option, it might never get deployed, even though we developed it for a purpose. And there are also privacy implications. The timestamp option is a good example: if you look at the timestamp option, you can match up which connections belong to the same host, because they have the same clock drift.
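
To make the clock-drift point concrete, here is a minimal sketch, assuming (capture time, TSval) pairs per connection from a passive capture. Even if each connection's TSval starts at a different offset, the rate at which it advances reflects the sender's clock, so connections with indistinguishable rates may come from the same host. The function names, the plain least-squares fit, the 1 kHz example clock, and the tolerance are all illustrative assumptions.

```python
from __future__ import annotations


def clock_rate(samples: list[tuple[float, int]]) -> float:
    """Least-squares slope of TSval against capture time (ticks per second).

    `samples` are (capture_time_seconds, tsval) pairs from one connection.
    The fitted intercept absorbs any constant per-connection TSval offset,
    so only the rate at which the sender's clock advances remains.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    cov = sum((t - mean_t) * (v - mean_v) for t, v in samples)
    var = sum((t - mean_t) ** 2 for t, _ in samples)
    return cov / var


def group_by_clock(connections: dict[str, list[tuple[float, int]]],
                   tolerance: float) -> list[list[str]]:
    """Group connections whose estimated clock rates are close together.

    Connections are sorted by rate, and a new group starts wherever the gap
    to the previous rate exceeds `tolerance` (simple single-linkage
    clustering). Connections in one group *may* share a host clock.
    """
    rates = sorted((clock_rate(s), name) for name, s in connections.items())
    groups: list[list[str]] = []
    previous = None
    for rate, name in rates:
        if previous is not None and rate - previous <= tolerance:
            groups[-1].append(name)
        else:
            groups.append([name])
        previous = rate
    return groups


if __name__ == "__main__":
    def synthetic(rate_hz: float, offset: int, start: float):
        """Fake TSval samples every 0.5 s for 100 s from a clock at rate_hz."""
        return [(start + k * 0.5, offset + round(rate_hz * k * 0.5))
                for k in range(200)]

    conns = {
        "a1": synthetic(1000.05, 12345, 0.0),   # host A, 50 ppm fast
        "a2": synthetic(1000.05, 987654, 3.0),  # host A, different offset
        "b1": synthetic(999.98, 5555, 1.0),     # host B, 20 ppm slow
    }
    print(group_by_clock(conns, tolerance=0.01))
```

In this synthetic run the two host-A connections end up in one group and b1 in another, despite their very different TSval offsets.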

G

So why is this so horrible, or why do we actually want to encrypt more than this? There are two good reasons, which I've kind of already mentioned. One is really privacy concerns, but the other is ossification. Especially in the transport area, we've had a lot of discussions over the last couple of years where we wanted to change something, and the discussion ended up with: no, you can't change it, because there's something deployed in the network that will not interoperate with this and will just drop packets.

G

So if you change this bit, or use this option, you have to have a fallback in case the option gets dropped or stripped from TCP. It really made our protocol development more complicated, and sometimes it's even impossible to innovate on the protocols anymore. So it also helps us in the process if we at least try to secure information in a way that we can still change its meaning and modify it end to end later on.

G

Some information you expose on purpose, and for other kinds of information you really avoid exposing it. But you also have a lot of the information that was previously in the TCP header now in the encrypted part of the packet: that is kind of the inner transport header, which is not visible from the outside anymore. A term that came up that might be interesting for you is the wire image, meaning what is, and what is not, visible on the path.

G

They are listed at the bottom of the slide. These are currently two individual drafts, but they are being discussed in the IAB, the Internet Architecture Board, to give guidance on this discussion. One is about the wire image, explaining what this term actually means and what is covered by the wire image. The other talks about explicit path signals, taking more of a look, when we develop a protocol, at what should be provided to the path and what is useful for the path to provide.

G

I don't have an answer for you right now, but we are aware of these things, and we need to figure out what we need to support, what information we need to provide, and how we can, on the one hand, improve security and privacy and resolve the ossification problem, but on the other hand also keep the network manageable. That's part of the discussion here.

G

Yeah, so, as I just said, this is not only about QUIC. This is really about encryption in general, and there are different drafts in the IETF that are, again, not always specific to a certain working group. It's really a discussion we have all over the IETF. This slide gives you further background information on these topics and the discussions here. But now I'm done.

A

Okay, so I'm going to round this up by giving you a fairly quick tour of all the meetings that are happening in London this week. So here are all the meetings on one slide. I've put them in categories: area-wide meetings, TCP-related meetings, congestion control meetings, new protocols and deploying new protocols, and then everything else that didn't fit into one of those categories. Sorry for the acronym soup; I'll expand the acronyms on the following slides.

A

So the first meeting is actually one that Mirja chairs. There's an area-wide transport area meeting, which is a venue for discussion of topics of general interest to the entire transport